Andrew T. Campbell

MindScape Study: Integrating LLM and Behavioral Sensing for Personalized AI-Driven Journaling Experiences

Sep 15, 2024

Abstract: Mental health concerns are prevalent among college students, highlighting the need for effective interventions that promote self-awareness and holistic well-being. MindScape pioneers a novel approach to AI-powered journaling by integrating passively collected behavioral patterns such as conversational engagement, sleep, and location with Large Language Models (LLMs). This integration creates a highly personalized and context-aware journaling experience, enhancing self-awareness and well-being by embedding behavioral intelligence into AI. We present an 8-week exploratory study with 20 college students, demonstrating the MindScape app's efficacy in enhancing positive affect (7%), reducing negative affect (11%), loneliness (6%), and anxiety and depression, with a significant week-over-week decrease in PHQ-4 scores (-0.25 coefficient), alongside improvements in mindfulness (7%) and self-reflection (6%). The study highlights the advantages of contextual AI journaling, with participants particularly appreciating the tailored prompts and insights provided by the MindScape app. Our analysis also includes a comparison of responses to AI-driven contextual versus generic prompts, participant feedback insights, and proposed strategies for leveraging contextual AI journaling to improve well-being on college campuses. By showcasing the potential of contextual AI journaling to support mental health, we provide a foundation for further investigation into the effects of contextual AI journaling on mental health and well-being.

Contextual AI Journaling: Integrating LLM and Time Series Behavioral Sensing Technology to Promote Self-Reflection and Well-being using the MindScape App

Mar 30, 2024

Abstract: MindScape aims to study the benefits of integrating time series behavioral patterns (e.g., conversational engagement, sleep, location) with Large Language Models (LLMs) to create a new form of contextual AI journaling, promoting self-reflection and well-being. We argue that integrating behavioral sensing in LLMs will likely lead to a new frontier in AI. In this Late-Breaking Work paper, we discuss the MindScape contextual journal App design that uses LLMs and behavioral sensing to generate contextual and personalized journaling prompts crafted to encourage self-reflection and emotional development. We also discuss the MindScape study of college students based on a preliminary user study and our upcoming study to assess the effectiveness of contextual AI journaling in promoting better well-being on college campuses. MindScape represents a new application class that embeds behavioral intelligence in AI.

MoodCapture: Depression Detection Using In-the-Wild Smartphone Images

Feb 25, 2024

Abstract: MoodCapture presents a novel approach that assesses depression based on images automatically captured from the front-facing camera of smartphones as people go about their daily lives. We collect over 125,000 photos in the wild from N=177 participants diagnosed with major depressive disorder for 90 days. Images are captured naturalistically while participants respond to the PHQ-8 depression survey question: "I have felt down, depressed, or hopeless". Our analysis explores important image attributes, such as angle, dominant colors, location, objects, and lighting. We show that a random forest trained with face landmarks can classify samples as depressed or non-depressed and predict raw PHQ-8 scores effectively. Our post-hoc analysis provides several insights through an ablation study, feature importance analysis, and bias assessment. Importantly, we evaluate user concerns about using MoodCapture to detect depression based on sharing photos, providing critical insights into privacy concerns that inform the future design of in-the-wild image-based mental health assessment tools.

Using Mobile Data and Deep Models to Assess Auditory Verbal Hallucinations

Apr 20, 2023

Abstract: Hallucination is an apparent perception in the absence of real external sensory stimuli. An auditory hallucination is a perception of hearing sounds that are not real. A common form of auditory hallucination is hearing voices in the absence of any speakers, which is known as Auditory Verbal Hallucination (AVH). AVHs are fragments of the mind's creation that mostly occur in people diagnosed with mental illnesses such as bipolar disorder and schizophrenia. Assessing the valence of hallucinated voices (i.e., how negative or positive voices are) can help measure the severity of a mental illness. We study N=435 individuals who experience hearing voices to assess auditory verbal hallucination. Participants report the valence of voices they hear four times a day for a month through ecological momentary assessments with questions that have four answering scales from "not at all" to "extremely". We collect these self-reports as the valence supervision of AVH events via a mobile application. Using the application, participants also record audio diaries to describe the content of hallucinated voices verbally. In addition, we passively collect mobile sensing data as contextual signals. We then experiment with how predictive these linguistic and contextual cues from the audio diary and mobile sensing data are of an auditory verbal hallucination event. Finally, using transfer learning and data fusion techniques, we train a neural net model that predicts the valence of AVH with a performance of 54% top-1 and 72% top-2 F1 score.

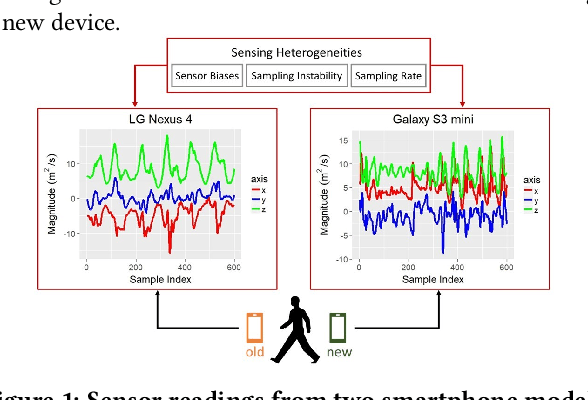

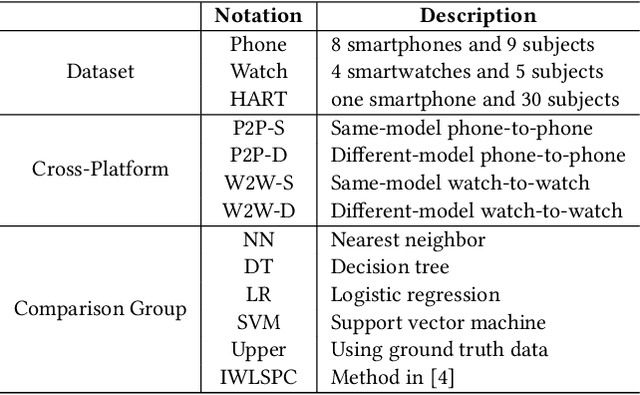

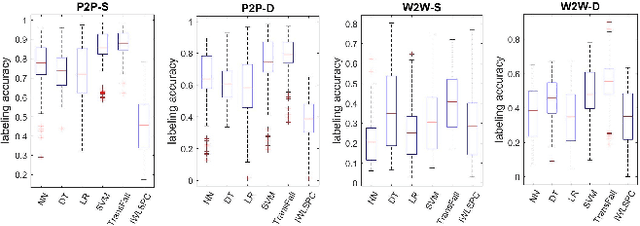

Transfer Learning for Activity Recognition in Mobile Health

Jul 12, 2020

Abstract: While activity recognition from inertial sensors holds potential for mobile health, differences in sensing platforms and user movement patterns cause performance degradation. Aiming to address these challenges, we propose a transfer learning framework, TransFall, for sensor-based activity recognition. TransFall's design contains a two-tier data transformation, a label estimation layer, and a model generation layer to recognize activities for the new scenario. We validate TransFall analytically and empirically.

Jointly Predicting Job Performance, Personality, Cognitive Ability, Affect, and Well-Being

Jun 10, 2020

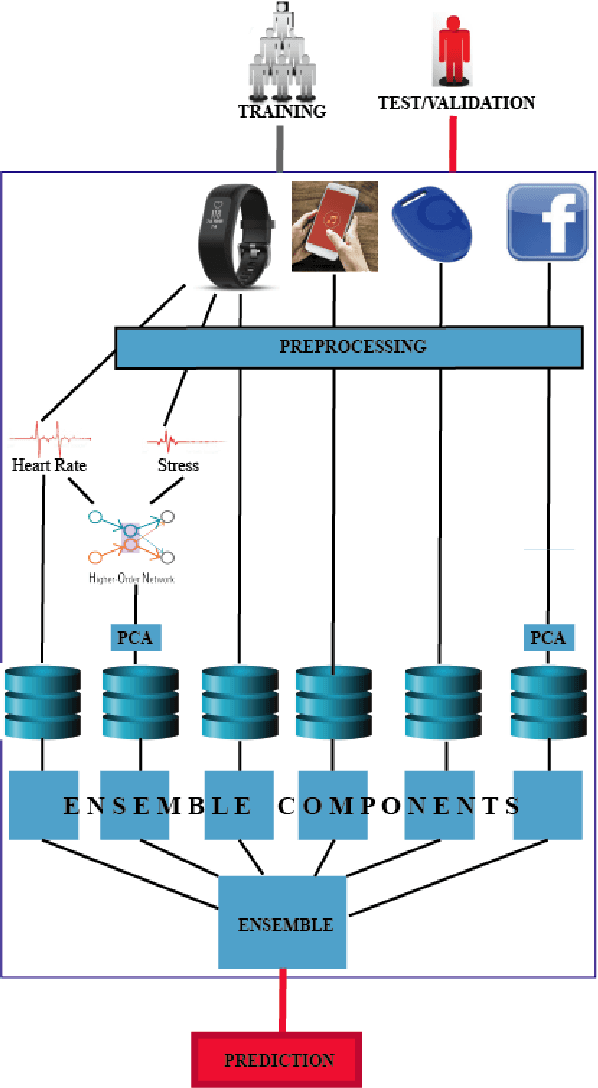

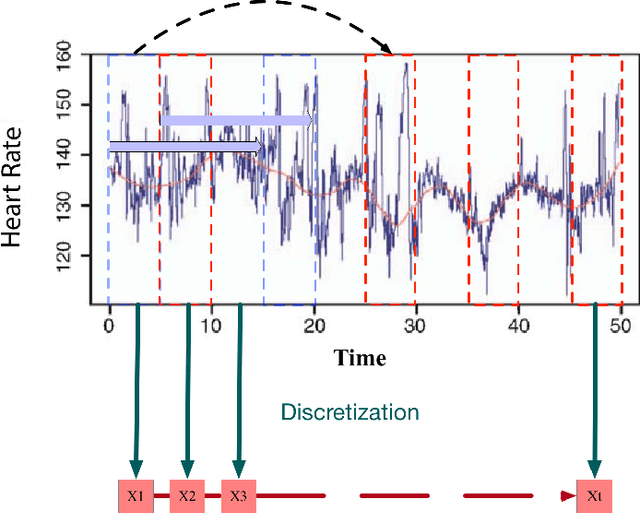

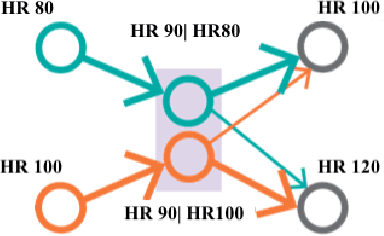

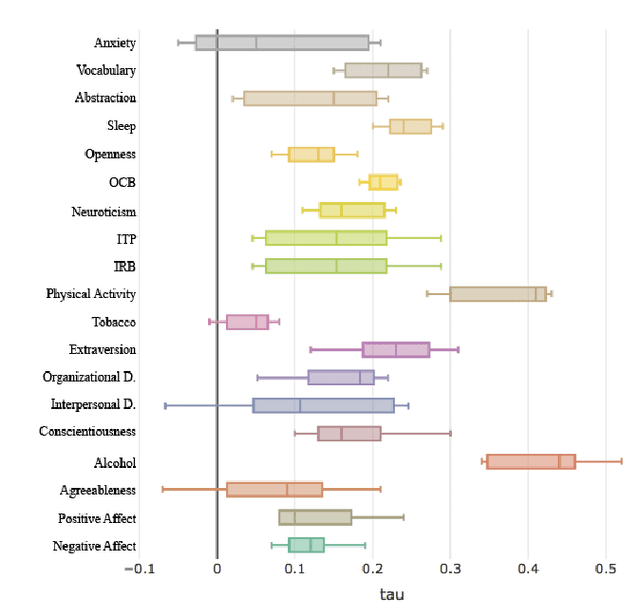

Abstract: Assessment of job performance, personalized health, and psychometric measures are domains where data-driven and ubiquitous computing exhibits the potential of a profound impact in the future. Existing techniques use data extracted from questionnaires, sensors (wearable, computer, etc.), or other traits to assess well-being and cognitive attributes of individuals. However, these techniques can neither predict an individual's well-being and psychological traits in a global manner nor consider the challenges associated with processing the available data, which is incomplete and noisy. In this paper, we create a benchmark for predictive analysis of individuals from a perspective that integrates physical and physiological behavior, psychological states and traits, and job performance. We design data mining techniques as a benchmark and use real noisy and incomplete data derived from wearable sensors to predict 19 constructs based on 12 standardized well-validated tests. The study included 757 participants who were knowledge workers in organizations across the USA with varied work roles. We developed a data mining framework to extract the meaningful predictors for each of the 19 variables under consideration. Our model is the first benchmark that combines these various instrument-derived variables in a single framework to understand people's behavior by leveraging real uncurated data from wearable, mobile, and social media sources. We verify our approach experimentally using the data obtained from our longitudinal study. The results show that our framework is consistently reliable and capable of predicting the variables under study better than the baselines when prediction is restricted to the noisy, incomplete data.
