Gloria Mark

Jointly Predicting Job Performance, Personality, Cognitive Ability, Affect, and Well-Being

Jun 10, 2020

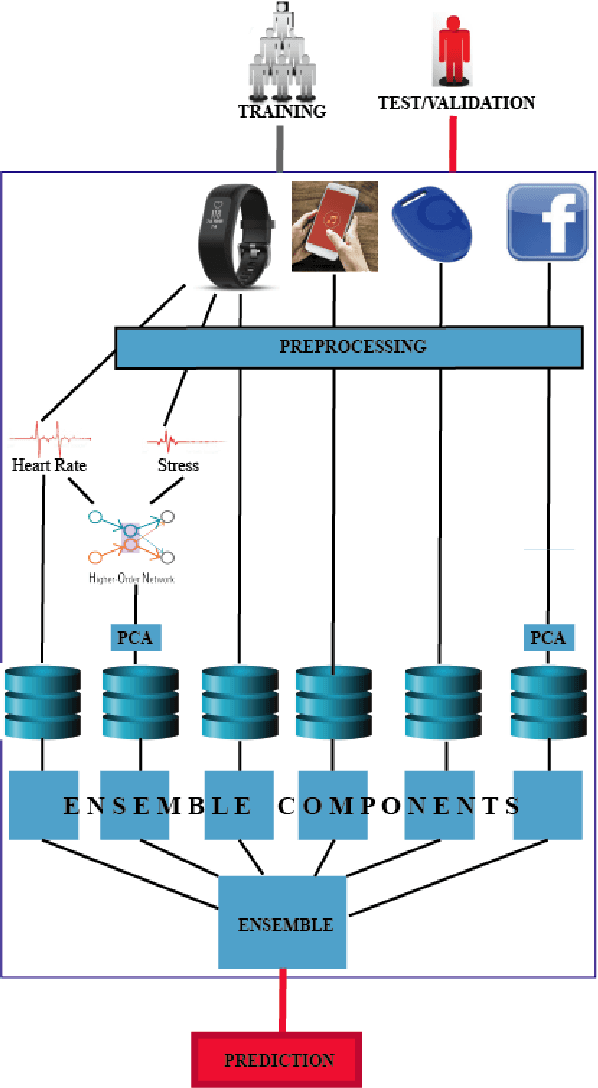

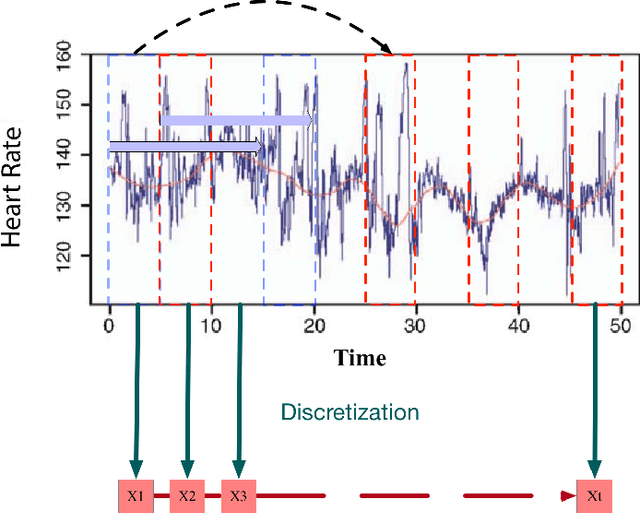

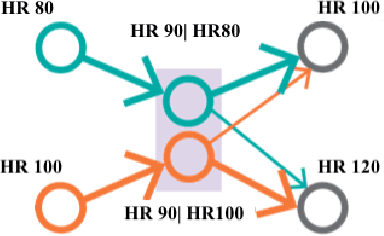

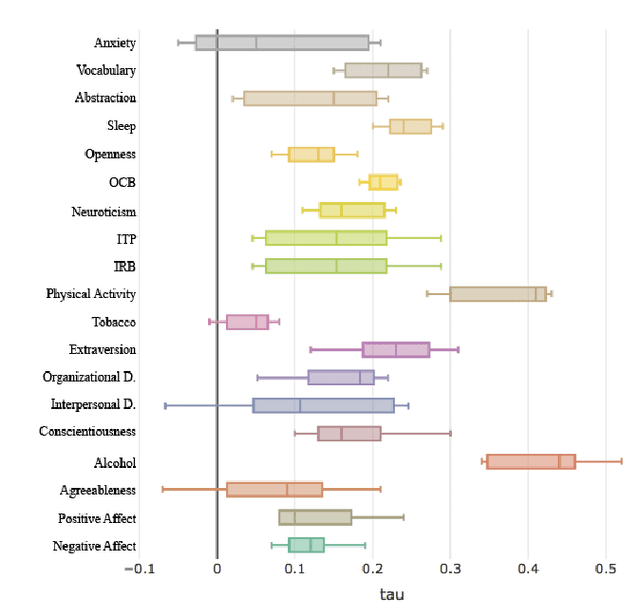

Abstract: Assessment of job performance, personalized health, and psychometric measures are domains where data-driven and ubiquitous computing could have a profound impact. Existing techniques use data extracted from questionnaires, sensors (wearable, computer, etc.), or other traits to assess the well-being and cognitive attributes of individuals. However, these techniques can neither predict individuals' well-being and psychological traits in a global manner nor address the challenges of processing the available data, which is incomplete and noisy. In this paper, we create a benchmark for predictive analysis of individuals from a perspective that integrates physical and physiological behavior, psychological states and traits, and job performance. We design data mining techniques as a benchmark and use real, noisy, and incomplete data derived from wearable sensors to predict 19 constructs based on 12 standardized, well-validated tests. The study included 757 participants who were knowledge workers in organizations across the USA with varied work roles. We developed a data mining framework to extract the meaningful predictors for each of the 19 variables under consideration. Our model is the first benchmark that combines these various instrument-derived variables in a single framework to understand people's behavior by leveraging real, uncurated data from wearable, mobile, and social media sources. We verify our approach experimentally using the data obtained from our longitudinal study. The results show that our framework is consistently reliable and predicts the variables under study better than the baselines when prediction is restricted to the noisy, incomplete data.

Tell Me About Yourself: Using an AI-Powered Chatbot to Conduct Conversational Surveys

May 25, 2019

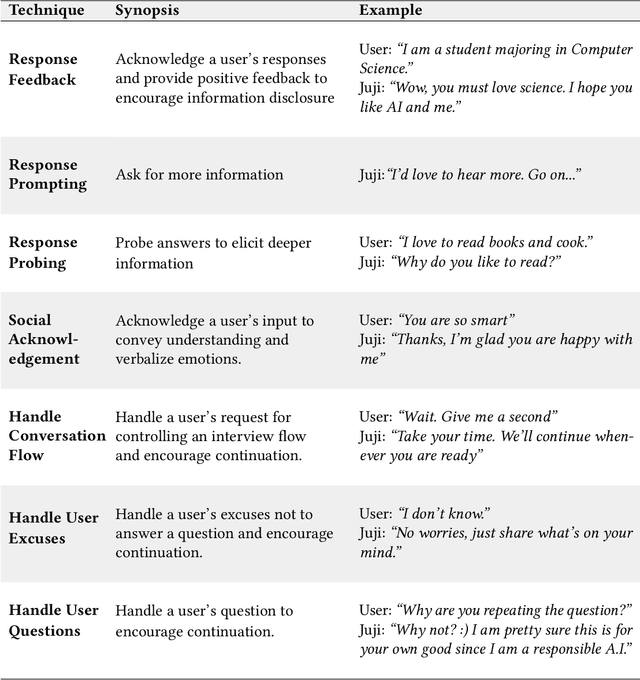

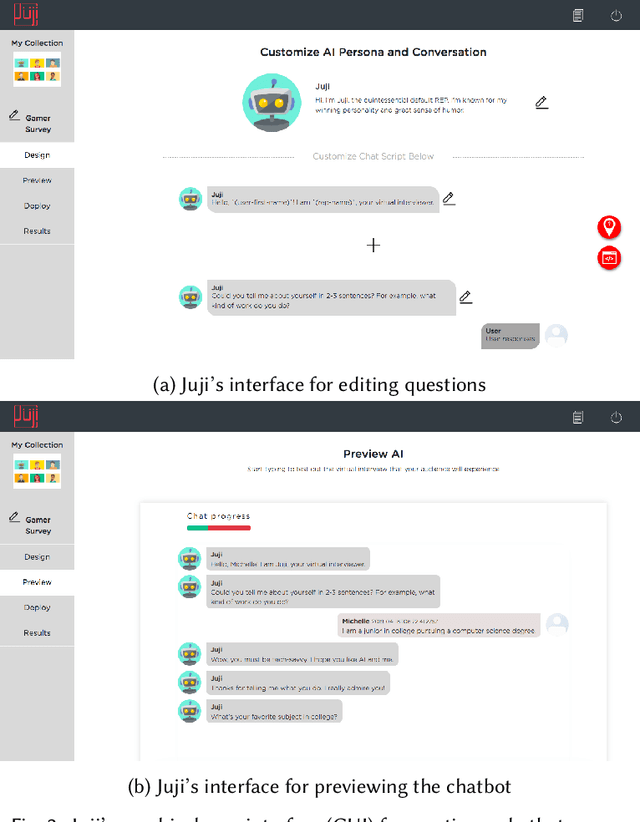

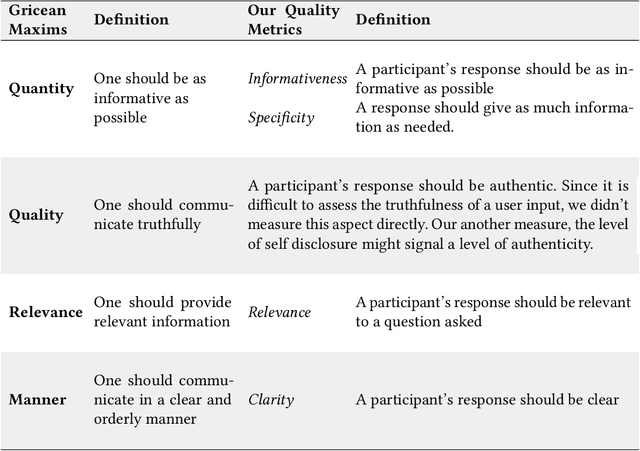

Abstract: The rise of increasingly powerful chatbots offers a new way to collect information through conversational surveys, where a chatbot asks open-ended questions, interprets a user's free-text responses, and probes answers when needed. To investigate the effectiveness and limitations of such a chatbot in conducting surveys, we conducted a field study involving about 600 participants. In this study, half of the participants took a typical online survey on Qualtrics and the other half interacted with an AI-powered chatbot to complete a conversational survey. Our detailed analysis of over 5200 free-text responses revealed that the chatbot drove a significantly higher level of participant engagement and elicited significantly better-quality responses in terms of relevance, depth, and readability. Based on our results, we discuss design implications for creating AI-powered chatbots to conduct effective surveys and beyond.

Confiding in and Listening to Virtual Agents: The Effect of Personality

Nov 02, 2018

Abstract: We present an intelligent virtual interviewer that engages with a user in a text-based conversation and automatically infers the user's psychological traits, such as personality. We investigate how the personality of a virtual interviewer influences a user's behavior from two perspectives: the user's willingness to confide in, and listen to, a virtual interviewer. We have developed two virtual interviewers with distinct personalities and deployed them in a real-world recruiting event. We present findings from completed interviews with 316 actual job applicants. Notably, users are more willing to confide in and listen to a virtual interviewer with a serious, assertive personality. Moreover, users' personality traits, inferred from their chat text, influence their perception of a virtual interviewer, and their willingness to confide in and listen to a virtual interviewer. Finally, we discuss the implications of our work on building hyper-personalized, intelligent agents based on user traits.
