Andrew Beaulieu

A Systematic Study of Data Modalities and Strategies for Co-training Large Behavior Models for Robot Manipulation

Feb 01, 2026

Abstract:Large behavior models have shown strong dexterous manipulation capabilities by extending imitation learning to large-scale training on multi-task robot data, yet their generalization remains limited by the insufficient robot data coverage. To expand this coverage without costly additional data collection, recent work relies on co-training: jointly learning from target robot data and heterogeneous data modalities. However, how different co-training data modalities and strategies affect policy performance remains poorly understood. We present a large-scale empirical study examining five co-training data modalities: standard vision-language data, dense language annotations for robot trajectories, cross-embodiment robot data, human videos, and discrete robot action tokens across single- and multi-phase training strategies. Our study leverages 4,000 hours of robot and human manipulation data and 50M vision-language samples to train vision-language-action policies. We evaluate 89 policies over 58,000 simulation rollouts and 2,835 real-world rollouts. Our results show that co-training with forms of vision-language and cross-embodiment robot data substantially improves generalization to distribution shifts, unseen tasks, and language following, while discrete action token variants yield no significant benefits. Combining effective modalities produces cumulative gains and enables rapid adaptation to unseen long-horizon dexterous tasks via fine-tuning. Training exclusively on robot data degrades the visiolinguistic understanding of the vision-language model backbone, while co-training with effective modalities restores these capabilities. Explicitly conditioning action generation on chain-of-thought traces learned from co-training data does not improve performance in our simulation benchmark. Together, these results provide practical guidance for building scalable generalist robot policies.
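The abstract describes co-training as jointly learning from target robot data and other data modalities. One common way to realize single-phase co-training is to draw each training example from a weighted mixture of sources. The following is a minimal sketch of that idea only; the source names, mixture weights, and the helpers `sample_source` and `build_batch` are hypothetical and are not taken from the paper.

```python
import random


def sample_source(weights, rng):
    """Pick one data source name with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def build_batch(datasets, weights, batch_size, seed=0):
    """Assemble a mixed batch of (source, example) pairs.

    datasets: dict mapping a source name to a non-empty list of examples.
    weights:  dict mapping the same names to non-negative mixture weights
              (hypothetical values; the paper's ratios are not given here).
    """
    rng = random.Random(seed)
    batch = []
    for _ in range(batch_size):
        source = sample_source(weights, rng)
        batch.append((source, rng.choice(datasets[source])))
    return batch


# Toy stand-ins for two of the modalities named in the abstract.
toy_datasets = {
    "target_robot": ["robot_ep_0", "robot_ep_1"],
    "vision_language": ["vl_sample_0"],
}
toy_weights = {"target_robot": 0.7, "vision_language": 0.3}
mixed_batch = build_batch(toy_datasets, toy_weights, batch_size=8)
```

A multi-phase strategy would instead change the weights between training stages, for example by setting the weight of a co-training source to zero in a later phase.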

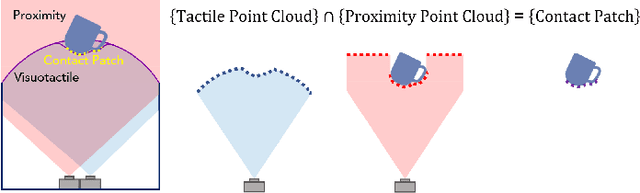

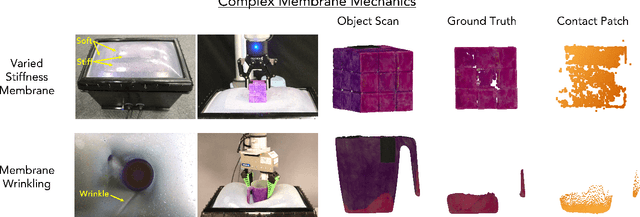

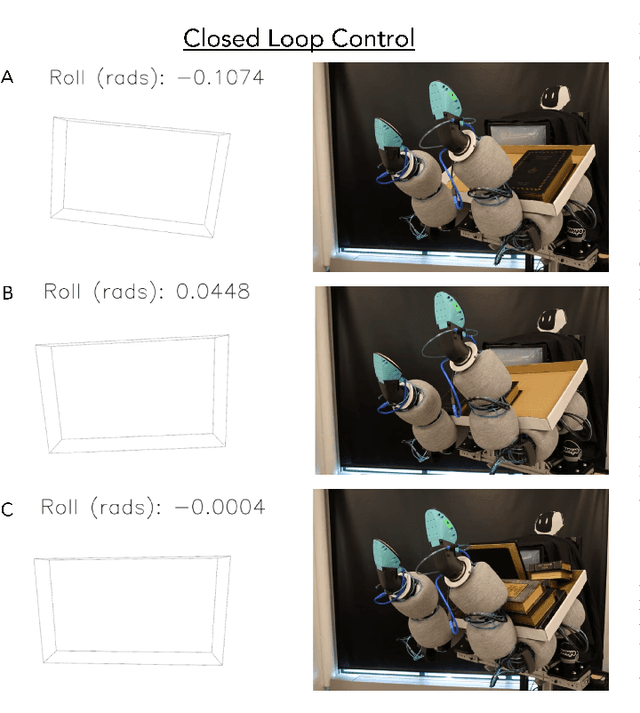

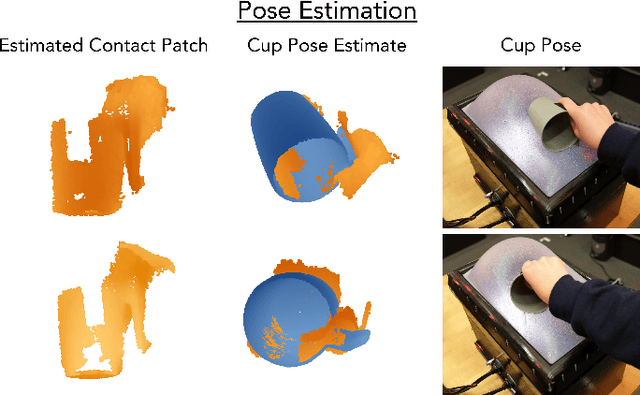

Proximity and Visuotactile Point Cloud Fusion for Contact Patches in Extreme Deformation

Jul 07, 2023

Abstract:Equipping robots with the sense of touch is critical to emulating the capabilities of humans in real world manipulation tasks. Visuotactile sensors are a popular tactile sensing strategy due to data output compatible with computer vision algorithms and accurate, high resolution estimates of local object geometry. However, these sensors struggle to accommodate high deformations of the sensing surface during object interactions, hindering more informative contact with cm-scale objects frequently encountered in the real world. The soft interfaces of visuotactile sensors are often made of hyperelastic elastomers, which are difficult to simulate quickly and accurately when extremely deformed for tactile information. Additionally, many visuotactile sensors that rely on strict internal light conditions or pattern tracking will fail if the surface is highly deformed. In this work, we propose an algorithm that fuses proximity and visuotactile point clouds for contact patch segmentation that is entirely independent from membrane mechanics. This algorithm exploits the synchronous, high-res proximity and visuotactile modalities enabled by an extremely deformable, selectively transmissive soft membrane, which uses visible light for visuotactile sensing and infrared light for proximity depth. We present the hardware design, membrane fabrication, and evaluation of our contact patch algorithm in low (10%), medium (60%), and high (100%+) membrane strain states. We compare our algorithm against three baselines: proximity-only, tactile-only, and a membrane mechanics model. Our proposed algorithm outperforms all baselines with an average RMSE under 2.8mm of the contact patch geometry across all strain ranges. We demonstrate our contact patch algorithm in four applications: varied stiffness membranes, torque and shear-induced wrinkling, closed loop control for whole body manipulation, and pose estimation.
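The abstract reports accuracy as the RMSE of the contact patch geometry in millimetres. As an illustration of the metric only, the sketch below computes RMSE between an estimated and a reference set of depth values, assuming the two arrays are already in point-to-point correspondence. The function name `contact_patch_rmse` and the sample values are hypothetical, and the paper's actual evaluation procedure may differ.

```python
import numpy as np


def contact_patch_rmse(estimated_mm, reference_mm):
    """Root-mean-square error between corresponding depth values, in mm.

    Assumes both inputs have the same shape and that element i of one
    corresponds to element i of the other (an assumption of this sketch).
    """
    est = np.asarray(estimated_mm, dtype=float)
    ref = np.asarray(reference_mm, dtype=float)
    if est.shape != ref.shape:
        raise ValueError("estimated and reference arrays must have the same shape")
    return float(np.sqrt(np.mean((est - ref) ** 2)))


# Made-up depth values for illustration, not data from the paper.
estimated = [10.0, 12.5, 15.0, 11.0]
reference = [10.5, 12.0, 14.0, 11.0]
rmse_mm = contact_patch_rmse(estimated, reference)
```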

Punyo-1: Soft tactile-sensing upper-body robot for large object manipulation and physical human interaction

Nov 17, 2021

Abstract:The manipulation of large objects and the ability to safely operate in the vicinity of humans are key capabilities of a general purpose domestic robotic assistant. We present the design of a soft, tactile-sensing humanoid upper-body robot and demonstrate whole-body rich-contact manipulation strategies for handling large objects. We demonstrate our hardware design philosophy for outfitting off-the-shelf hard robot arms and other upper-body components with soft tactile-sensing modules, including: (i) low-cost, cut-resistant, contact pressure localizing coverings for the arms, (ii) paws based on TRI's Soft-bubble sensors for the end effectors, and (iii) compliant force/geometry sensors for the coarse geometry-sensing surface/chest. We leverage the mechanical intelligence and tactile sensing of these modules to develop and demonstrate motion primitives for whole-body grasping control. We evaluate the hardware's effectiveness in achieving grasps of varying strengths over a variety of large domestic objects. Our results demonstrate the importance of exploiting softness and tactile sensing in contact-rich manipulation strategies, as well as a path forward for whole-body force-controlled interactions with the world.

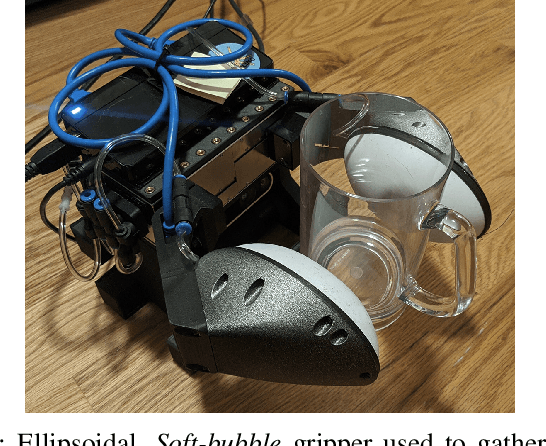

Variable compliance and geometry regulation of Soft-Bubble grippers with active pressure control

Mar 15, 2021

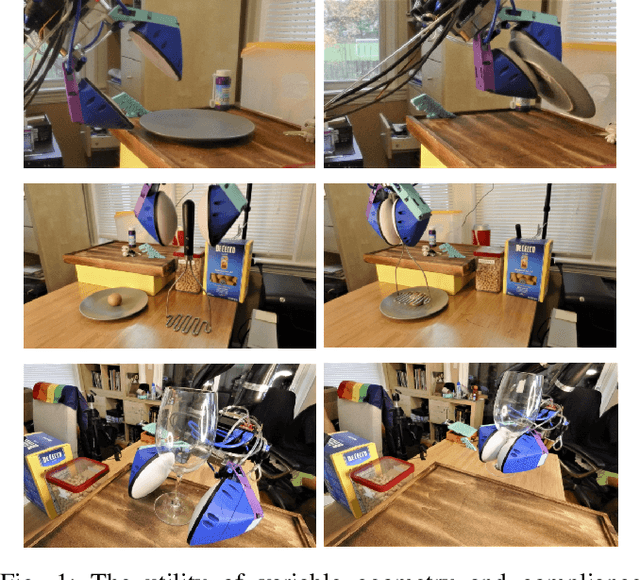

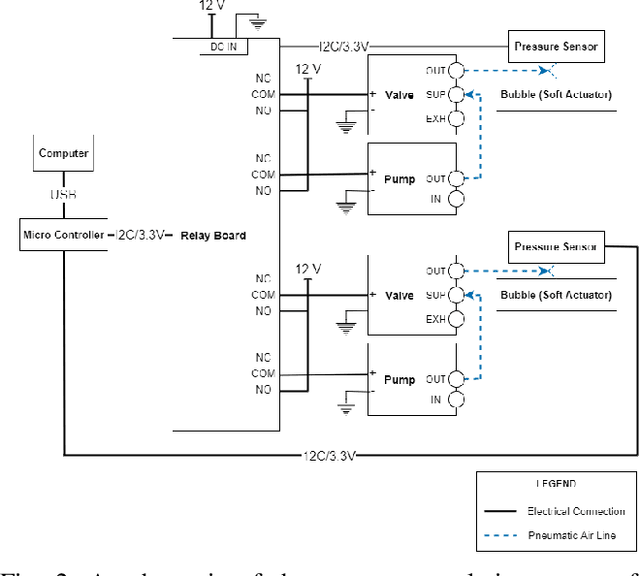

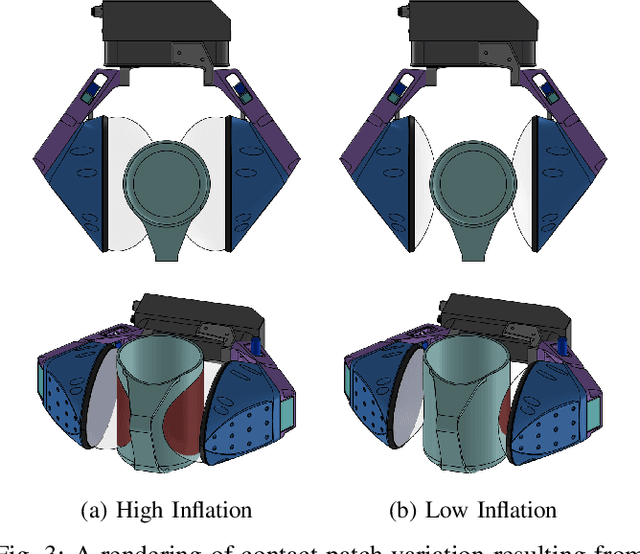

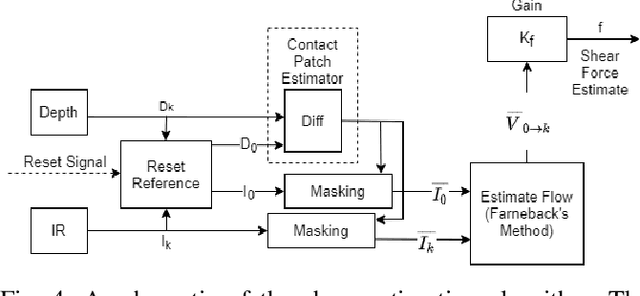

Abstract:While compliant grippers have become increasingly commonplace in robot manipulation, finding the right stiffness and geometry for grasping the widest variety of objects remains a key challenge. Adjusting both stiffness and gripper geometry on the fly may provide the versatility needed to manipulate the large range of objects found in domestic environments. We present a system for actively controlling the geometry (inflation level) and compliance of Soft-bubble grippers - air filled, highly compliant parallel gripper fingers incorporating visuotactile sensing. The proposed system enables large, controlled changes in gripper finger geometry and grasp stiffness, as well as simple in-hand manipulation. We also demonstrate, despite these changes, the continued viability of advanced perception capabilities such as dense geometry and shear force measurement - we present a straightforward extension of our previously presented approach for measuring shear induced displacements using the internal imaging sensor and taking into account pressure and geometry changes. We quantify the controlled variation of grasp-free geometry, grasp stiffness and contact patch geometry resulting from pressure regulation and we demonstrate new capabilities for the gripper in the home by grasping in constrained spaces, manipulating tools requiring lower and higher stiffness grasps, as well as contact patch modulation.

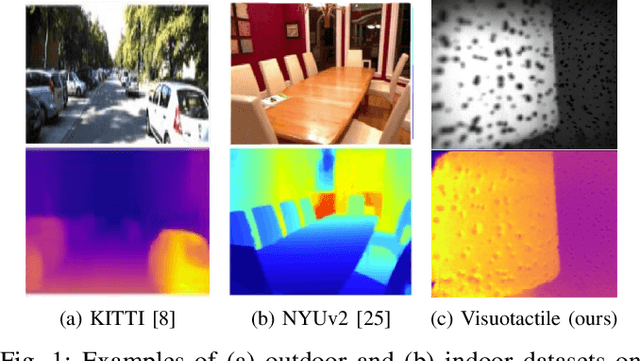

Monocular Depth Estimation for Soft Visuotactile Sensors

Jan 05, 2021

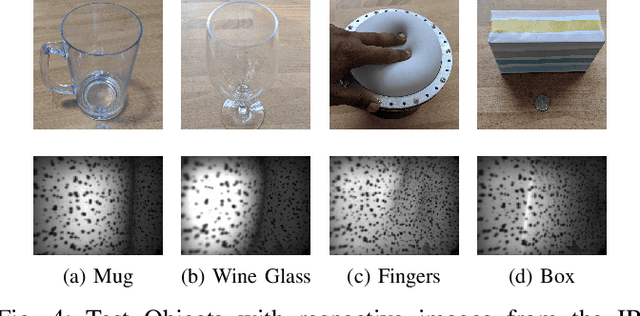

Abstract:Fluid-filled soft visuotactile sensors such as the Soft-bubbles alleviate key challenges for robust manipulation, as they enable reliable grasps along with the ability to obtain high-resolution sensory feedback on contact geometry and forces. Although they are simple in construction, their utility has been limited due to size constraints introduced by enclosed custom IR/depth imaging sensors to directly measure surface deformations. Towards mitigating this limitation, we investigate the application of state-of-the-art monocular depth estimation to infer dense internal (tactile) depth maps directly from the internal single small IR imaging sensor. Through real-world experiments, we show that deep networks typically used for long-range depth estimation (1-100m) can be effectively trained for precise predictions at a much shorter range (1-100mm) inside a mostly textureless deformable fluid-filled sensor. We propose a simple supervised learning process to train an object-agnostic network requiring less than 10 random poses in contact for less than 10 seconds for a small set of diverse objects (mug, wine glass, box, and fingers in our experiments). We show that our approach is sample-efficient, accurate, and generalizes across different objects and sensor configurations unseen at training time. Finally, we discuss the implications of our approach for the design of soft visuotactile sensors and grippers.
