Zhuang Qi

FedPDPO: Federated Personalized Direct Preference Optimization for Large Language Model Alignment

Mar 20, 2026

Abstract: Aligning large language models (LLMs) with human preferences in federated learning (FL) is challenging due to decentralized, privacy-sensitive, and highly non-IID preference data. Direct Preference Optimization (DPO) offers an efficient alternative to reinforcement learning from human feedback (RLHF), but its direct application in FL suffers from severe performance degradation under non-IID data and limited generalization of implicit rewards. To bridge this gap, we propose FedPDPO (Federated Personalized Direct Preference Optimization), a personalized federated framework for preference alignment of LLMs. It adopts a parameter-efficient fine-tuning architecture where each client maintains a frozen pretrained LLM backbone augmented with a Low-Rank Adaptation (LoRA) adapter, enabling communication-efficient aggregation. To address non-IID heterogeneity, we devise (1) a globally shared LoRA adapter paired with a personalized client-specific LLM head. Moreover, we introduce (2) a personalized DPO training strategy with a client-specific explicit reward head to complement implicit rewards and further alleviate non-IID heterogeneity, and (3) a bottleneck adapter to balance global and local features. We provide theoretical analysis establishing the probabilistic foundation and soundness. Extensive experiments on multiple preference datasets demonstrate state-of-the-art performance, achieving up to 4.80% average accuracy improvement in federated intra-domain and cross-domain settings.
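
For readers unfamiliar with DPO, the following is a minimal sketch of the standard per-pair DPO loss and the implicit rewards it induces. It is not FedPDPO's personalized variant: the explicit reward head, LoRA aggregation, and bottleneck adapter are not modeled. The function names, argument names, and the default `beta` are assumptions made for illustration.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one (chosen, rejected) response pair.

    Inputs are summed token log-probabilities of each response under the
    trainable policy and under the frozen reference model.
    beta is a hypothetical default, not a value taken from the paper.
    """
    # Implicit rewards: scaled log-ratio between policy and reference.
    chosen_reward = beta * (policy_chosen_logp - ref_chosen_logp)
    rejected_reward = beta * (policy_rejected_logp - ref_rejected_logp)
    margin = chosen_reward - rejected_reward
    # -log(sigmoid(margin)) equals softplus(-margin).
    loss = softplus(-margin)
    return loss, chosen_reward, rejected_reward
```

When the policy equals the reference model, the margin is zero and the loss is log 2; the loss falls as the policy assigns relatively more probability to the chosen response.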

ProtoConNet: Prototypical Augmentation and Alignment for Open-Set Few-Shot Image Classification

Jul 16, 2025

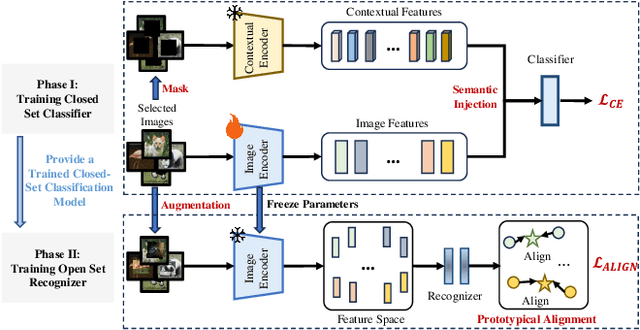

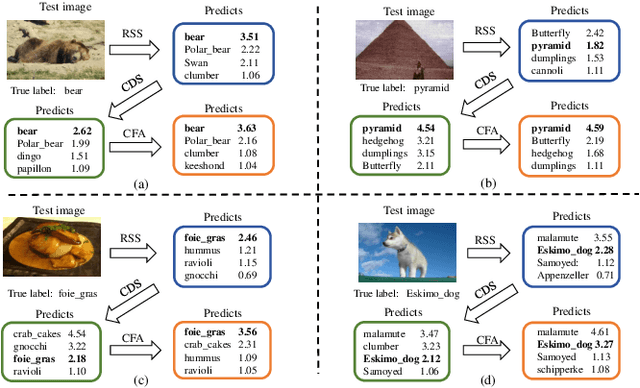

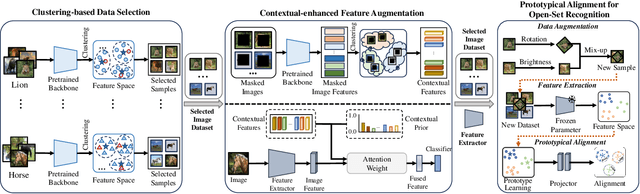

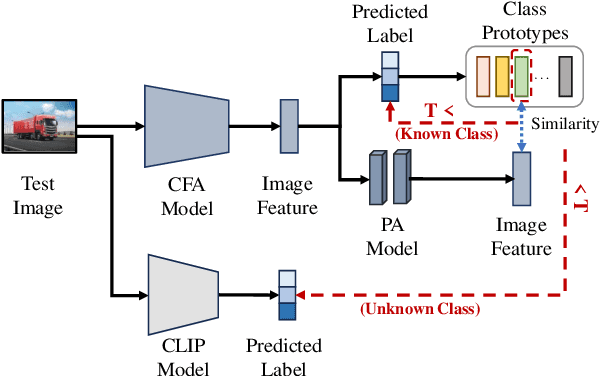

Abstract: Open-set few-shot image classification aims to train models using a small amount of labeled data, enabling them to achieve good generalization when confronted with unknown environments. Existing methods mainly use visual information from a single image to learn class representations to distinguish known from unknown categories. However, these methods often overlook the benefits of integrating rich contextual information. To address this issue, this paper proposes a prototypical augmentation and alignment method, termed ProtoConNet, which incorporates background information from different samples to enhance the diversity of the feature space, breaking the spurious associations between context and image subjects in few-shot scenarios. Specifically, it consists of three main modules: the clustering-based data selection (CDS) module mines diverse data patterns while preserving core features; the contextual-enhanced semantic refinement (CSR) module builds a context dictionary to integrate into image representations, which boosts the model's robustness in various scenarios; and the prototypical alignment (PA) module reduces the gap between image representations and class prototypes, amplifying feature distances for known and unknown classes. Experimental results on two datasets verify that ProtoConNet enhances the effectiveness of representation learning in few-shot scenarios and identifies open-set samples, making it superior to existing methods.

Balancing the Past and Present: A Coordinated Replay Framework for Federated Class-Incremental Learning

Jul 10, 2025

Abstract: Federated Class-Incremental Learning (FCIL) aims to collaboratively process continuously increasing incoming tasks across multiple clients. Among various approaches, data replay has become a promising solution, which can alleviate forgetting by reintroducing representative samples from previous tasks. However, the performance of replay-based methods is typically limited by class imbalance, both within the replay buffer due to limited global awareness and between replayed and newly arrived classes. To address this issue, we propose a class-wise balancing data replay method for FCIL (FedCBDR), which employs a global coordination mechanism for class-level memory construction and reweights the learning objective to alleviate the aforementioned imbalances. Specifically, FedCBDR has two key components: 1) the global-perspective data replay module reconstructs global representations of prior tasks in a privacy-preserving manner, which then guide a class-aware and importance-sensitive sampling strategy to achieve balanced replay; 2) to handle class imbalance across tasks, the task-aware temperature scaling module adaptively adjusts the temperature of logits at both class and instance levels based on task dynamics, which reduces the model's overconfidence in majority classes while enhancing its sensitivity to minority classes. Experimental results verify that FedCBDR achieves balanced class-wise sampling under heterogeneous data distributions and improves generalization under task imbalance between earlier and recent tasks, yielding a 2%-15% Top-1 accuracy improvement over six state-of-the-art methods.
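
As background for the temperature scaling idea mentioned above, here is a minimal sketch of a softmax whose logits are divided by a temperature before normalization. It illustrates only the generic mechanism; how FedCBDR chooses temperatures from task dynamics is not described in the abstract and is not modeled here. The function name and the option of passing one temperature per class are assumptions.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Softmax over logits scaled by a temperature.

    temperature may be a single positive number or a list with one positive
    value per class (a hypothetical stand-in for class-level adjustment).
    Temperatures above 1 flatten the distribution; below 1 sharpen it.
    """
    if isinstance(temperature, (int, float)):
        temps = [float(temperature)] * len(logits)
    else:
        temps = [float(t) for t in temperature]
    if len(temps) != len(logits) or any(t <= 0 for t in temps):
        raise ValueError("need one positive temperature per logit")
    scaled = [z / t for z, t in zip(logits, temps)]
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]
```

Raising the temperature lowers the largest probability, which is the sense in which scaling can reduce overconfidence in a dominant class.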

Empowering Vision Transformers with Multi-Scale Causal Intervention for Long-Tailed Image Classification

May 13, 2025

Abstract: Causal inference has emerged as a promising approach to long-tailed classification, mitigating the biases introduced by class imbalance. However, with the shift of advanced backbone models from Convolutional Neural Networks (CNNs) to Vision Transformers (ViTs), existing causal models may not achieve the expected performance gain. This paper investigates the influence of existing causal models on CNNs and ViT variants, highlighting that ViT's global feature representation makes it hard for causal methods to model associations between fine-grained features and predictions, which leads to difficulties in classifying tail classes with similar visual appearance. To address these issues, this paper proposes TSCNet, a two-stage causal modeling method to discover fine-grained causal associations through multi-scale causal interventions. Specifically, in the hierarchical causal representation learning stage (HCRL), it decouples the background and objects, applying backdoor interventions at both the patch and feature levels to prevent the model from using class-irrelevant areas to infer labels, which enhances fine-grained causal representation. In the counterfactual logits bias calibration stage (CLBC), it refines the optimization of the model's decision boundary by adaptively constructing a counterfactual balanced data distribution to remove the spurious associations in the logits caused by the data distribution. Extensive experiments conducted on various long-tail benchmarks demonstrate that the proposed TSCNet can eliminate multiple biases introduced by data imbalance and outperforms existing methods.

Semantic-Space-Intervened Diffusive Alignment for Visual Classification

May 09, 2025

Abstract: Cross-modal alignment is an effective approach to improving visual classification. Existing studies typically enforce a one-step mapping that uses deep neural networks to project the visual features to mimic the distribution of textual features. However, they typically face difficulties in finding such a projection due to the differences between the two modalities in both the distribution of class-wise samples and the range of their feature values. To address this issue, this paper proposes a novel Semantic-Space-Intervened Diffusive Alignment method, termed SeDA, which models a semantic space as a bridge in the visual-to-textual projection, considering that both types of features share the same class-level information in classification. More importantly, a bi-stage diffusion framework is developed to enable the progressive alignment between the two modalities. Specifically, SeDA first employs a Diffusion-Controlled Semantic Learner to model the semantic feature space of visual features by constraining the interactive features of the diffusion model and the category centers of visual features. In the later stage of SeDA, the Diffusion-Controlled Semantic Translator focuses on learning the distribution of textual features from the semantic space. Meanwhile, the Progressive Feature Interaction Network introduces stepwise feature interactions at each alignment step, progressively integrating textual information into mapped features. Experimental results show that SeDA achieves stronger cross-modal feature alignment, leading to superior performance over existing methods across multiple scenarios.

Federated Deconfounding and Debiasing Learning for Out-of-Distribution Generalization

May 08, 2025

Abstract: Attribute bias in federated learning (FL) typically leads local models to optimize inconsistently due to the learning of non-causal associations, resulting in degraded performance. Existing methods either use data augmentation for increasing sample diversity or knowledge distillation for learning invariant representations to address this problem. However, they lack a comprehensive analysis of the inference paths, and the interference from confounding factors limits their performance. To address these limitations, we propose the Federated Deconfounding and Debiasing Learning (FedDDL) method. It constructs a structured causal graph to analyze the model inference process, and performs backdoor adjustment to eliminate confounding paths. Specifically, we design an intra-client deconfounding learning module for computer vision tasks to decouple background and objects, generating counterfactual samples that establish a connection between the background and any label, which stops the model from using the background to infer the label. Moreover, we design an inter-client debiasing learning module to construct causal prototypes to reduce the proportion of the background in prototype components. Notably, it bridges the gap between heterogeneous representations via causal prototypical regularization. Extensive experiments on two benchmark datasets demonstrate that FedDDL significantly enhances the model's capability to focus on main objects in unseen data, leading to 4.5% higher Top-1 accuracy on average over nine state-of-the-art methods.
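
For reference, the textbook form of the backdoor adjustment that the abstract alludes to can be written as follows. The symbols are assumptions for illustration: $X$ is the input, $Y$ the label, and $Z$ a confounder satisfying the backdoor criterion (for example, the image background); the abstract does not specify how FedDDL instantiates or approximates this sum.

```latex
P\bigl(Y \mid \mathrm{do}(X)\bigr) \;=\; \sum_{z} P\bigl(Y \mid X,\, Z = z\bigr)\, P(Z = z)
```

Intuitively, the prediction is averaged over confounder values drawn from their marginal distribution rather than from the distribution conditioned on $X$, which removes the association that flows through $Z$.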

Towards Initialization-Agnostic Clustering with Iterative Adaptive Resonance Theory

May 07, 2025

Abstract: The clustering performance of Fuzzy Adaptive Resonance Theory (Fuzzy ART) is highly dependent on the preset vigilance parameter, where deviations in its value can lead to significant fluctuations in clustering results, severely limiting its practicality for non-expert users. Existing approaches generally enhance vigilance parameter robustness through adaptive mechanisms such as particle swarm optimization and fuzzy logic rules. However, they often introduce additional hyperparameters or complex frameworks that contradict the original simplicity of the algorithm. To address this, we propose Iterative Refinement Adaptive Resonance Theory (IR-ART), which integrates three key phases into a unified iterative framework: (1) Cluster Stability Detection: a dynamic stability detection module that identifies unstable clusters by analyzing the change in cluster size (the number of samples in the cluster) across iterations. (2) Unstable Cluster Deletion: an evolutionary pruning module that eliminates low-quality clusters. (3) Vigilance Region Expansion: a vigilance region expansion mechanism that adaptively adjusts similarity thresholds. Independent of the specific execution of clustering, these three phases sequentially focus on analyzing the implicit knowledge within the iterative process and adjusting weights and vigilance parameters, thereby laying a foundation for the next iteration. Experimental evaluation on 15 datasets demonstrates that IR-ART improves tolerance to suboptimal vigilance parameter values while preserving the parameter simplicity of Fuzzy ART. Case studies visually confirm the algorithm's self-optimization capability through iterative refinement, making it particularly suitable for non-expert users in resource-constrained scenarios.
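
To make the role of the vigilance parameter concrete, the sketch below implements a single pass of baseline Fuzzy ART (complement coding, choice function, vigilance test, weight update). It is not IR-ART: the stability detection, cluster deletion, and vigilance region expansion phases are not modeled. The function names and default values for `rho`, `alpha`, and `beta` are assumptions for illustration, and inputs are assumed to be scaled to [0, 1].

```python
def complement_code(x):
    """Complement coding: append 1 - x_i for every feature."""
    return list(x) + [1.0 - v for v in x]


def fuzzy_and(a, b):
    """Element-wise minimum (the fuzzy AND operator)."""
    return [min(p, q) for p, q in zip(a, b)]


def fuzzy_art(samples, rho=0.75, alpha=0.001, beta=1.0):
    """One pass of baseline Fuzzy ART; returns (labels, weights).

    rho is the vigilance parameter, alpha the choice parameter, and beta
    the learning rate. Defaults are illustrative, not from the paper.
    """
    weights, labels = [], []
    for x in samples:
        i_vec = complement_code(x)
        norm_i = sum(i_vec)  # equals the feature count under complement coding

        def choice(j):
            return sum(fuzzy_and(i_vec, weights[j])) / (alpha + sum(weights[j]))

        # Visit existing clusters from highest to lowest choice value.
        order = sorted(range(len(weights)), key=choice, reverse=True)
        winner = None
        for j in order:
            match = sum(fuzzy_and(i_vec, weights[j])) / norm_i
            if match >= rho:  # vigilance test
                winner = j
                break
        if winner is None:
            # No cluster passes vigilance: create a new one.
            weights.append(i_vec)
            winner = len(weights) - 1
        else:
            w = weights[winner]
            overlap = fuzzy_and(i_vec, w)
            weights[winner] = [beta * o + (1.0 - beta) * v
                               for o, v in zip(overlap, w)]
        labels.append(winner)
    return labels, weights
```

Running the same data with a high and a low `rho` yields different numbers of clusters, which is the sensitivity to the preset vigilance value that the abstract describes.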

Federated Out-of-Distribution Generalization: A Causal Augmentation View

Apr 28, 2025

Abstract: Federated learning aims to train a model collaboratively by integrating multi-source information, so that the model generalizes across all client data. Existing methods often leverage knowledge distillation or data augmentation to mitigate the negative impact of data bias across clients. However, the limited performance of teacher models on out-of-distribution samples and the inherent quality gap between augmented and original data hinder their effectiveness, and they typically fail to leverage the advantages of incorporating rich contextual information. To address these limitations, this paper proposes a Federated Causal Augmentation method, termed FedCAug, which employs causality-inspired data augmentation to break the spurious correlation between attributes and categories. Specifically, it designs a causal region localization module to accurately identify and decouple the background and objects in the image, providing rich contextual information for causal data augmentation. Additionally, it designs a causality-inspired data augmentation module that integrates causal features and within-client context to generate counterfactual samples. This significantly enhances data diversity, and the entire process does not require any information sharing between clients, thereby contributing to the protection of data privacy. Extensive experiments conducted on three datasets reveal that FedCAug markedly reduces the model's reliance on background to predict sample labels, achieving superior performance compared to state-of-the-art methods.

Global Intervention and Distillation for Federated Out-of-Distribution Generalization

Apr 01, 2025

Abstract: Attribute skew in federated learning leads local models to focus on learning non-causal associations, guiding them towards inconsistent optimization directions, which inevitably results in performance degradation and unstable convergence. Existing methods typically leverage data augmentation to enhance sample diversity or employ knowledge distillation to learn invariant representations. However, the instability in the quality of generated data and the lack of domain information limit their performance on unseen samples. To address these issues, this paper presents a global intervention and distillation method, termed FedGID, which utilizes diverse attribute features for backdoor adjustment to break the spurious association between background and label. It includes two main modules. The global intervention module adaptively decouples objects and backgrounds in images and injects background information into random samples to intervene in the sample distribution, linking backgrounds to all categories so that the model does not treat background-label associations as causal. The global distillation module leverages a unified knowledge base to guide the representation learning of client models, preventing local models from overfitting to client-specific attributes. Experimental results on three datasets demonstrate that FedGID enhances the model's ability to focus on the main subjects in unseen data and outperforms existing methods in collaborative modeling.

Robust Visual Tracking via Iterative Gradient Descent and Threshold Selection

Jun 02, 2024

Abstract: Visual tracking fundamentally involves regressing the state of the target in each frame of a video. Despite significant progress, existing regression-based trackers still tend to experience failures and inaccuracies. To enhance the precision of target estimation, this paper proposes a tracking technique based on robust regression. Firstly, we introduce a novel robust linear regression estimator, which achieves favorable performance when the error vector follows an i.i.d. Gaussian-Laplacian distribution. Secondly, we design an iterative process to quickly solve the problem of outliers; the coefficients are obtained by the Iterative Gradient Descent and Threshold Selection (IGDTS) algorithm. In addition, we extend IGDTS to a generative tracker, and apply the IGDTS-distance to measure the deviation between the sample and the model. Finally, we propose an update scheme to capture the appearance changes of the tracked object and ensure that the model is updated correctly. Experimental results on several challenging image sequences show that the proposed tracker outperforms existing trackers.
