Zhihao Xue

Multi-Level Service Performance Forecasting via Spatiotemporal Graph Neural Networks

Aug 09, 2025

Abstract: This paper proposes a spatiotemporal graph neural network-based performance prediction algorithm to address the challenge of forecasting performance fluctuations in distributed backend systems with multi-level service call structures. The method abstracts system states at different time slices into a sequence of graph structures. It integrates the runtime features of service nodes with the invocation relationships among services to construct a unified spatiotemporal modeling framework. The model first applies a graph convolutional network to extract high-order dependency information from the service topology. It then uses a gated recurrent network to capture the dynamic evolution of performance metrics over time. A time encoding mechanism is also introduced to strengthen the model's ability to represent non-stationary temporal sequences. The architecture is trained end to end, jointly optimizing its nested multi-layer structure to achieve high-precision regression of future service performance metrics. The method is validated on a large-scale public cluster dataset through a series of multi-dimensional experiments, including variations in time window and concurrent load level, which comprehensively evaluate the model's predictive performance and stability. The experimental results show that the proposed model outperforms existing representative methods on key metrics such as MAE, RMSE, and R². It remains robust under varying load intensities and structural complexities. These results demonstrate the model's practical potential for backend service performance management tasks.
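
The abstract describes graph convolution over each service-call snapshot, followed by a gated recurrent update over time, plus a time encoding. The pure-Python sketch below traces that data flow under stated assumptions: the symmetric GCN normalization D^{-1/2}(A + I)D^{-1/2}, a standard GRU cell without biases, a sinusoidal time encoding concatenated to the GCN output, and a linear per-node readout are our choices, not details taken from the paper. Every name (`GcnGruForecaster`, `time_encoding`, and so on) is hypothetical, and the weights are random and untrained, so the outputs only illustrate shapes and flow.

```python
import math
import random
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def matvec(w: Matrix, v: List[float]) -> List[float]:
    return [sum(wi * vi for wi, vi in zip(row, v)) for row in w]


def sigmoid(v: float) -> float:
    return 1.0 / (1.0 + math.exp(-v))


def normalized_adjacency(adj: Matrix) -> Matrix:
    """GCN propagation matrix D^{-1/2}(A + I)D^{-1/2} over the service call graph."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]  # self-loops guarantee deg >= 1
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]


def time_encoding(t: float, dim: int) -> List[float]:
    """Sinusoidal encoding of the time-slice index t (an assumed choice of encoding)."""
    return [math.sin(t / 10000 ** (i / dim)) if i % 2 == 0
            else math.cos(t / 10000 ** ((i - 1) / dim)) for i in range(dim)]


def rand_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


class GcnGruForecaster:
    """Hypothetical, untrained toy: GCN on each snapshot, then a per-node GRU over time."""

    def __init__(self, n_feat: int, n_gcn: int, n_time: int, n_hidden: int, seed: int = 0):
        rng = random.Random(seed)
        self.n_time, self.n_hidden = n_time, n_hidden
        d_in = n_gcn + n_time  # GCN output concatenated with the time encoding
        self.w_gcn = rand_matrix(n_feat, n_gcn, rng)
        # GRU input weights (W) and recurrent weights (U) for update z, reset r, candidate h
        self.w = {g: rand_matrix(n_hidden, d_in, rng) for g in "zrh"}
        self.u = {g: rand_matrix(n_hidden, n_hidden, rng) for g in "zrh"}
        self.w_out = rand_matrix(1, n_hidden, rng)  # one predicted metric per node

    def gru_step(self, x: List[float], h: List[float]) -> List[float]:
        z = [sigmoid(a + b) for a, b in zip(matvec(self.w["z"], x), matvec(self.u["z"], h))]
        r = [sigmoid(a + b) for a, b in zip(matvec(self.w["r"], x), matvec(self.u["r"], h))]
        rh = [ri * hi for ri, hi in zip(r, h)]
        cand = [math.tanh(a + b) for a, b in zip(matvec(self.w["h"], x), matvec(self.u["h"], rh))]
        return [(1 - zi) * hi + zi * ci for zi, hi, ci in zip(z, h, cand)]

    def forward(self, adjs: List[Matrix], feats: List[Matrix]) -> List[float]:
        """adjs[t], feats[t]: call graph and per-node runtime metrics at time slice t."""
        n_nodes = len(feats[0])
        h = [[0.0] * self.n_hidden for _ in range(n_nodes)]
        for t, (adj, x) in enumerate(zip(adjs, feats)):
            spatial = matmul(matmul(normalized_adjacency(adj), x), self.w_gcn)
            spatial = [[max(0.0, v) for v in row] for row in spatial]  # ReLU
            te = time_encoding(t, self.n_time)
            h = [self.gru_step(row + te, h[i]) for i, row in enumerate(spatial)]
        return [matvec(self.w_out, hi)[0] for hi in h]


if __name__ == "__main__":
    chain = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]  # A -> B -> C call chain
    metrics = [[[0.2, 0.1], [0.5, 0.3], [0.9, 0.4]] for _ in range(3)]  # 3 time slices
    model = GcnGruForecaster(n_feat=2, n_gcn=3, n_time=4, n_hidden=5, seed=1)
    print(model.forward([chain] * 3, metrics))
```

Concatenating the time encoding to each node's GCN output is one simple way to expose time-slice position to the recurrent unit; the paper may integrate its time encoding differently.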

Towards Universal Learning-based Model for Cardiac Image Reconstruction: Summary of the CMRxRecon2024 Challenge

Mar 05, 2025

Abstract: Cardiovascular magnetic resonance (CMR) offers diverse imaging contrasts for the assessment of cardiac function and tissue characterization. However, acquiring each individual CMR modality is often time-consuming, and comprehensive clinical protocols require multiple modalities with various sampling patterns, further extending the overall acquisition time and increasing susceptibility to motion artifacts. Existing deep learning-based reconstruction methods are often designed for specific acquisition parameters, which limits their ability to generalize across scan scenarios. As part of the CMRxRecon Series, the CMRxRecon2024 challenge provides diverse datasets encompassing multi-modality, multi-view imaging with various sampling patterns, together with a platform for the international community to develop and benchmark reconstruction solutions on two well-crafted tasks. Task 1 is a modality-universal setting that evaluates the out-of-distribution generalization of the reconstruction model, while Task 2 follows a sampling-universal setting that assesses the one-for-all adaptability of the universal model. The main contributions include: providing the first and largest publicly available multi-modality, multi-view cardiac k-space dataset; developing a benchmarking platform that simulates clinical acceleration protocols, with a shared code library and tutorial for various k-t undersampling patterns and data processing; offering technical insights into enhanced data consistency based on physics-informed networks and adaptive prompt-learning embeddings that remain versatile across clinical settings; and reporting additional findings on evaluation metrics that address the limitations of conventional ground-truth references in universal reconstruction tasks.
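
The benchmarking platform is described as shipping a code library for various k-t undersampling patterns. As a hypothetical illustration (not that library's API), the sketch below builds one common pattern: uniform Cartesian undersampling along the phase-encoding direction, with the sampled offset shifted by one line per frame, plus a fully sampled central block of autocalibration (ACS) lines. The function names and parameters are our own.

```python
from typing import List

Mask = List[List[bool]]  # mask[frame][phase_encode_line]


def kt_uniform_mask(n_lines: int, n_frames: int, accel: int, n_acs: int) -> Mask:
    """Hypothetical k-t mask: every `accel`-th line, offset shifted per frame, plus ACS."""
    if accel < 1 or not 0 <= n_acs <= n_lines:
        raise ValueError("invalid acceleration factor or ACS size")
    center_start = n_lines // 2 - n_acs // 2
    acs = set(range(center_start, center_start + n_acs))  # fully sampled k-space center
    mask = []
    for t in range(n_frames):
        offset = t % accel  # interleave sampled lines across frames
        mask.append([(ky % accel == offset) or (ky in acs) for ky in range(n_lines)])
    return mask


def effective_acceleration(mask: Mask) -> float:
    """Ratio of all k-t locations to the sampled ones."""
    total = sum(len(frame) for frame in mask)
    sampled = sum(sum(frame) for frame in mask)
    return total / sampled


if __name__ == "__main__":
    m = kt_uniform_mask(n_lines=16, n_frames=4, accel=4, n_acs=4)
    for frame in m:
        print("".join("x" if s else "." for s in frame))
    print(effective_acceleration(m))
```

Shifting the offset each frame means the union over `accel` consecutive frames covers every phase-encoding line, which is what lets k-t methods exploit temporal redundancy; the ACS block is why the effective acceleration (64/28, about 2.29, for 16 lines, 4 frames, `accel=4`, and 4 ACS lines) falls below the nominal factor.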

DARCS: Memory-Efficient Deep Compressed Sensing Reconstruction for Acceleration of 3D Whole-Heart Coronary MR Angiography

Feb 02, 2024

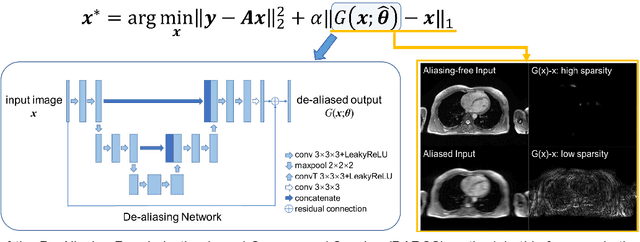

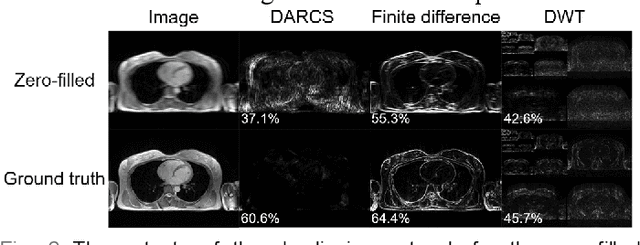

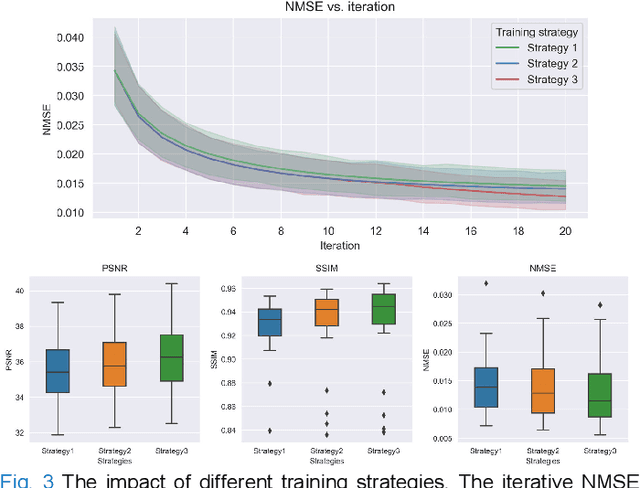

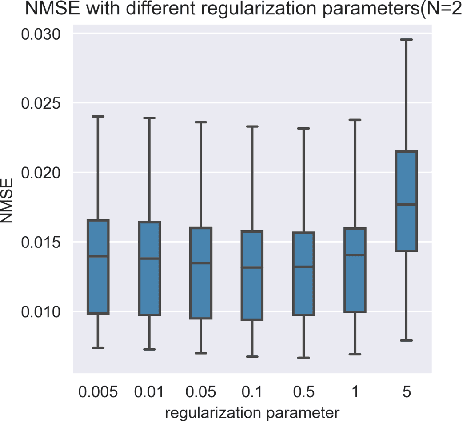

Abstract: Three-dimensional coronary magnetic resonance angiography (CMRA) demands reconstruction algorithms that can substantially suppress the artifacts arising from heavily undersampled acquisitions. While unrolling-based deep reconstruction methods have achieved state-of-the-art performance in 2D image reconstruction, their application to 3D reconstruction is hindered by the large amount of memory needed to train an unrolled network. In this study, we propose a memory-efficient deep compressed sensing method that employs a sparsifying transform based on a pre-trained artifact estimation network. The motivation is that the artifact image estimated by a well-trained network is sparse when the input image is artifact-free and less sparse when the input image is artifact-affected. Thus, the artifact estimation network can serve as an inherent sparsifying transform. The proposed method, named De-Aliasing Regularization based Compressed Sensing (DARCS), was compared with a traditional compressed sensing method, the de-aliasing generative adversarial network (DAGAN), model-based deep learning (MoDL), and plug-and-play for accelerating 3D CMRA. The results demonstrate that the proposed method improved reconstruction quality relative to the compared methods by a large margin. Furthermore, the proposed method generalized well to different undersampling rates and noise levels. The memory usage of the proposed method was only 63% of that needed by MoDL. In conclusion, the proposed method achieves improved reconstruction quality for 3D CMRA with a reduced memory burden.
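
Read as a compressed sensing problem, DARCS replaces a hand-crafted sparsifying transform with the pre-trained artifact estimation network. Assuming the usual CS form min_x ||M F x - y||_2^2 + lambda ||N(x)||_1, with N the artifact estimator (the abstract does not state the exact objective), the 1D toy below evaluates that cost. A naive DFT stands in for the 3D encoding operator, the sampling mask is made up, and `artifact_net` is a hypothetical callable marking where the trained network would go.

```python
import cmath
from typing import Callable, List, Sequence

Signal = List[complex]


def dft(x: Sequence[complex]) -> Signal:
    """Naive O(n^2) DFT; a 1D stand-in for the MR encoding operator F."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(kspace: Sequence[complex]) -> Signal:
    n = len(kspace)
    return [sum(kspace[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def apply_mask(kspace: Sequence[complex], mask: Sequence[bool]) -> Signal:
    """Undersampling operator M: keep acquired samples, zero the rest."""
    return [v if m else 0j for v, m in zip(kspace, mask)]


def cs_objective(x: Sequence[complex], y: Sequence[complex], mask: Sequence[bool],
                 artifact_net: Callable[[Sequence[complex]], Sequence[complex]],
                 lam: float) -> float:
    """Assumed form: ||M F x - y||_2^2 + lam * ||N(x)||_1, N = artifact estimator."""
    residual = [a - b for a, b in zip(apply_mask(dft(x), mask), y)]
    fidelity = sum(abs(r) ** 2 for r in residual)
    sparsity = sum(abs(v) for v in artifact_net(x))  # small for artifact-free x
    return fidelity + lam * sparsity


if __name__ == "__main__":
    truth = [1.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0, 0.0]
    mask = [True, True, False, True, False, False, True, True]  # hypothetical pattern
    y = apply_mask(dft(truth), mask)
    zero_filled = idft(y)  # aliased, yet consistent with the measurements
    no_prior = lambda x: [0.0] * len(x)  # placeholder where the trained network would go
    print(cs_objective(truth, y, mask, no_prior, 0.1))        # close to zero
    print(cs_objective(zero_filled, y, mask, no_prior, 0.1))  # also close to zero
```

Both the ground truth and the zero-filled image reproduce the acquired samples, so the data term alone cannot separate them; in the abstract's reasoning, it is the artifact term, sparse for artifact-free inputs and less sparse for aliased ones, that steers the solution toward the clean image.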
