Yingjiu Li

SelfDefend: LLMs Can Defend Themselves against Jailbreaking in a Practical Manner

Jun 08, 2024

Abstract: Jailbreaking is an emerging adversarial attack that bypasses the safety alignment deployed in off-the-shelf large language models (LLMs) and has evolved into four major categories: optimization-based attacks such as Greedy Coordinate Gradient (GCG), jailbreak template-based attacks such as "Do-Anything-Now", advanced indirect attacks like DrAttack, and multilingual jailbreaks. However, delivering a practical jailbreak defense is challenging because it needs to not only handle all the above jailbreak attacks but also incur negligible delay to user prompts, as well as be compatible with both open-source and closed-source LLMs. Inspired by how the traditional security concept of shadow stacks defends against memory overflow attacks, this paper introduces a generic LLM jailbreak defense framework called SelfDefend, which establishes a shadow LLM defense instance to concurrently protect the target LLM instance in the normal stack and collaborate with it for checkpoint-based access control. The effectiveness of SelfDefend builds upon our observation that existing LLMs (both target and defense LLMs) have the capability to identify harmful prompts or intentions in user queries, which we empirically validate using the commonly used GPT-3.5/4 models across all major jailbreak attacks. Our measurements show that SelfDefend enables GPT-3.5 to suppress the attack success rate (ASR) by 8.97-95.74% (average: 60%) and GPT-4 by even 36.36-100% (average: 83%), while incurring negligible effects on normal queries. To further improve the defense's robustness and minimize costs, we employ a data distillation approach to tune dedicated open-source defense models. These models outperform four SOTA defenses and match the performance of GPT-4-based SelfDefend, with significantly lower extra delays. We also empirically show that the tuned models are robust to targeted GCG and prompt injection attacks.
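The shadow-instance idea in this abstract can be illustrated with a small sketch. It is not the paper's implementation: `target_llm`, `shadow_llm_flags`, `guarded_answer`, and `REFUSAL` are hypothetical names, and a toy keyword check stands in for the defense LLM. The sketch only shows the control flow under those assumptions: both instances receive the prompt concurrently, and the target's answer is released only if the shadow instance raises no flag.

```python
from concurrent.futures import ThreadPoolExecutor

REFUSAL = "Sorry, I cannot help with that request."


def target_llm(prompt: str) -> str:
    """Hypothetical stand-in for the target LLM; a real system would call a model."""
    return f"[answer to] {prompt}"


def shadow_llm_flags(prompt: str) -> bool:
    """Hypothetical stand-in for the shadow defense LLM.

    Assumption: a keyword match replaces the model's judgment of whether
    the prompt is harmful. Returns True when the prompt is flagged.
    """
    suspicious = ("do anything now", "ignore all previous instructions")
    return any(phrase in prompt.lower() for phrase in suspicious)


def guarded_answer(prompt: str) -> str:
    """Run target and shadow instances concurrently, then apply the checkpoint."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        answer = pool.submit(target_llm, prompt)
        verdict = pool.submit(shadow_llm_flags, prompt)
        # Checkpoint: the target's answer is withheld if the shadow flags the prompt.
        if verdict.result():
            return REFUSAL
        return answer.result()
```

Because the two calls overlap, the added delay for a normal query in this sketch is bounded by the slower of the two calls rather than their sum, which is the intuition behind the "negligible delay" requirement stated in the abstract.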

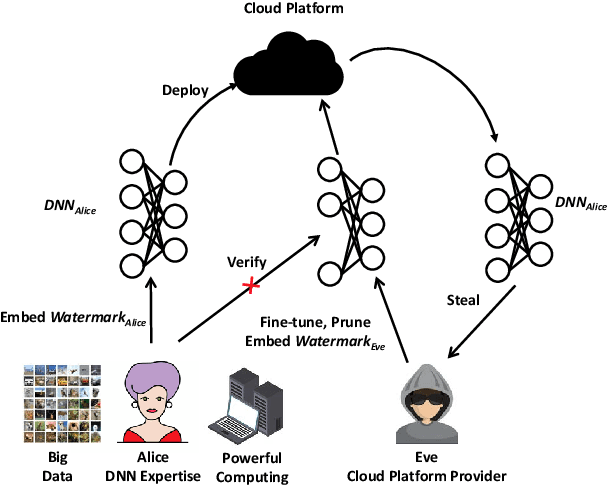

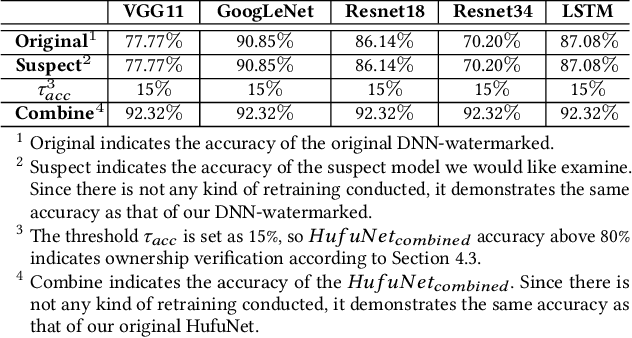

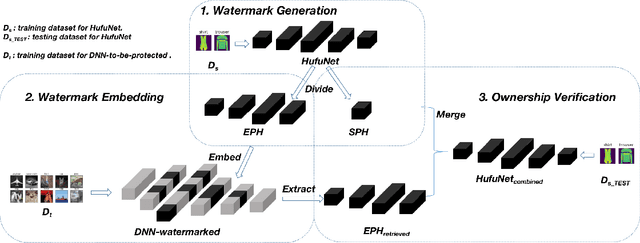

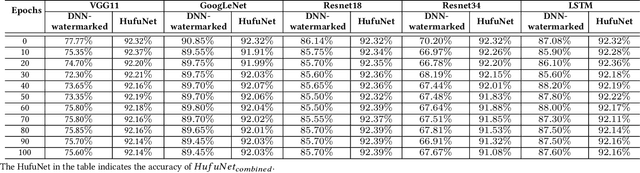

HufuNet: Embedding the Left Piece as Watermark and Keeping the Right Piece for Ownership Verification in Deep Neural Networks

Mar 25, 2021

Abstract: Due to the wide use of highly valuable and large-scale deep neural networks (DNNs), it becomes crucial to protect the intellectual property of DNNs so that the ownership of disputed or stolen DNNs can be verified. Most existing solutions embed backdoors in DNN model training such that DNN ownership can be verified by triggering distinguishable model behaviors with a set of secret inputs. However, such solutions are vulnerable to model fine-tuning and pruning. They also suffer from fraudulent ownership claims, as attackers can discover adversarial samples and use them as secret inputs to trigger distinguishable behaviors from stolen models. To address these problems, we propose a novel DNN watermarking solution, named HufuNet, for protecting the ownership of DNN models. We evaluate HufuNet rigorously on four benchmark datasets with five popular DNN models, including convolutional neural network (CNN) and recurrent neural network (RNN). The experiments demonstrate that HufuNet is highly robust against model fine-tuning/pruning, kernels cutoff/supplement, functionality-equivalent attack, and fraudulent ownership claims, and is thus highly promising for protecting large-scale DNN models in the real world.

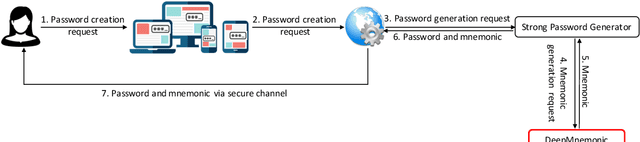

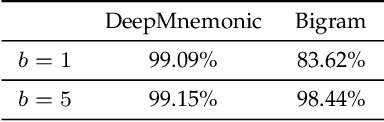

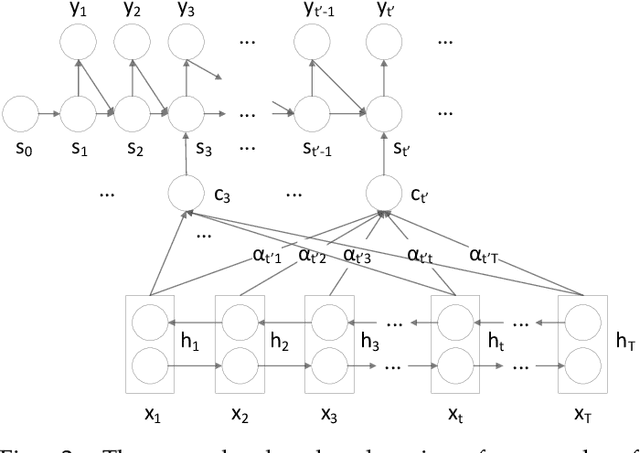

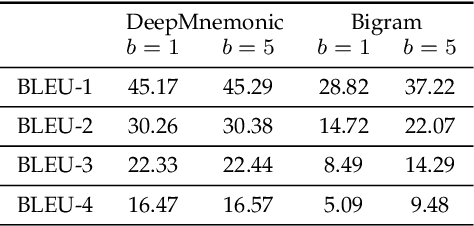

DeepMnemonic: Password Mnemonic Generation via Deep Attentive Encoder-Decoder Model

Jun 24, 2020

Abstract: Strong passwords are fundamental to the security of password-based user authentication systems. In recent years, much effort has been made to evaluate password strength or to generate strong passwords. Unfortunately, the usability or memorability of the strong passwords has been largely neglected. In this paper, we aim to bridge the gap between strong password generation and the usability of strong passwords. We propose to automatically generate textual password mnemonics, i.e., natural language sentences, which are intended to help users better memorize passwords. We introduce DeepMnemonic, a deep attentive encoder-decoder framework which takes a password as input and then automatically generates a mnemonic sentence for the password. We conduct extensive experiments to evaluate DeepMnemonic on real-world data sets. The experimental results demonstrate that DeepMnemonic outperforms a well-known baseline for generating semantically meaningful mnemonic sentences. Moreover, the user study further validates that the mnemonic sentences generated by DeepMnemonic are useful in helping users memorize strong passwords.
