Xinwei Yang

CANDY: Benchmarking LLMs' Limitations and Assistive Potential in Chinese Misinformation Fact-Checking

Sep 04, 2025

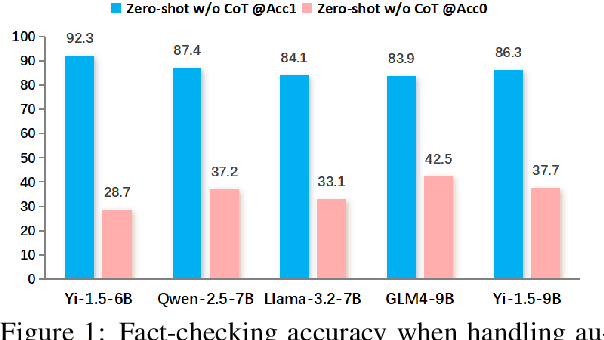

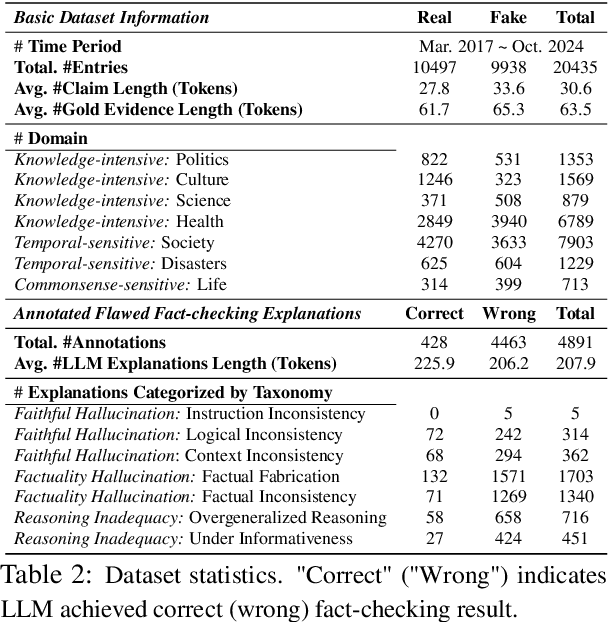

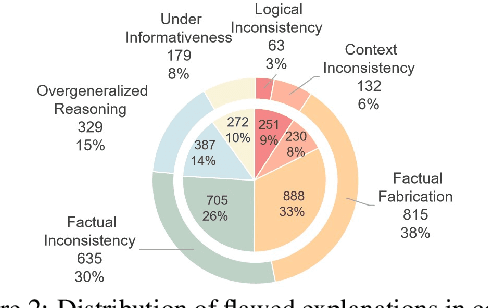

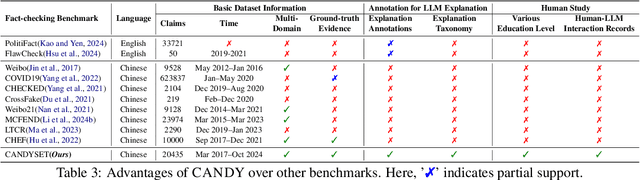

Abstract:The effectiveness of large language models (LLMs) to fact-check misinformation remains uncertain, despite their growing use. To this end, we present CANDY, a benchmark designed to systematically evaluate the capabilities and limitations of LLMs in fact-checking Chinese misinformation. Specifically, we curate a carefully annotated dataset of ~20k instances. Our analysis shows that current LLMs exhibit limitations in generating accurate fact-checking conclusions, even when enhanced with chain-of-thought reasoning and few-shot prompting. To understand these limitations, we develop a taxonomy to categorize flawed LLM-generated explanations for their conclusions and identify factual fabrication as the most common failure mode. Although LLMs alone are unreliable for fact-checking, our findings indicate their considerable potential to augment human performance when deployed as assistive tools in scenarios. Our dataset and code can be accessed at https://github.com/SCUNLP/CANDY

ELABORATION: A Comprehensive Benchmark on Human-LLM Competitive Programming

May 22, 2025

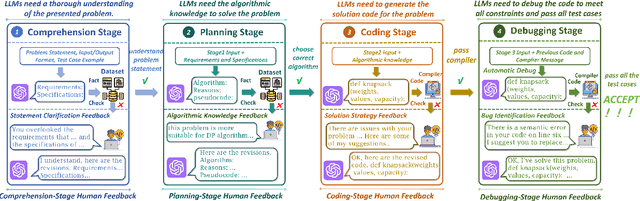

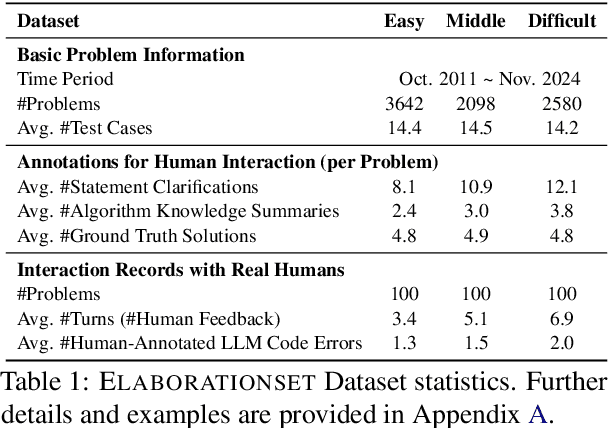

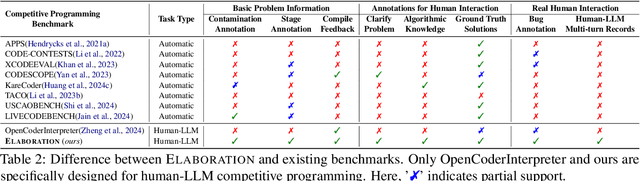

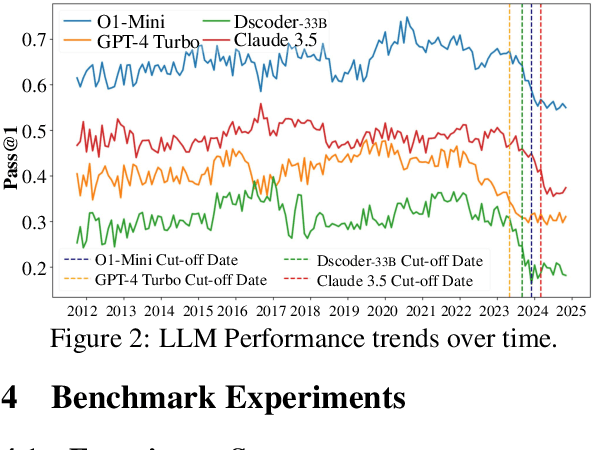

Abstract:While recent research increasingly emphasizes the value of human-LLM collaboration in competitive programming and proposes numerous empirical methods, a comprehensive understanding remains elusive due to the fragmented nature of existing studies and their use of diverse, application-specific human feedback. Thus, our work serves a three-fold purpose: First, we present the first taxonomy of human feedback consolidating the entire programming process, which promotes fine-grained evaluation. Second, we introduce ELABORATIONSET, a novel programming dataset specifically designed for human-LLM collaboration, meticulously annotated to enable large-scale simulated human feedback and facilitate costeffective real human interaction studies. Third, we introduce ELABORATION, a novel benchmark to facilitate a thorough assessment of human-LLM competitive programming. With ELABORATION, we pinpoint strengthes and weaknesses of existing methods, thereby setting the foundation for future improvement. Our code and dataset are available at https://github.com/SCUNLP/ELABORATION

Physics Reasoner: Knowledge-Augmented Reasoning for Solving Physics Problems with Large Language Models

Dec 18, 2024

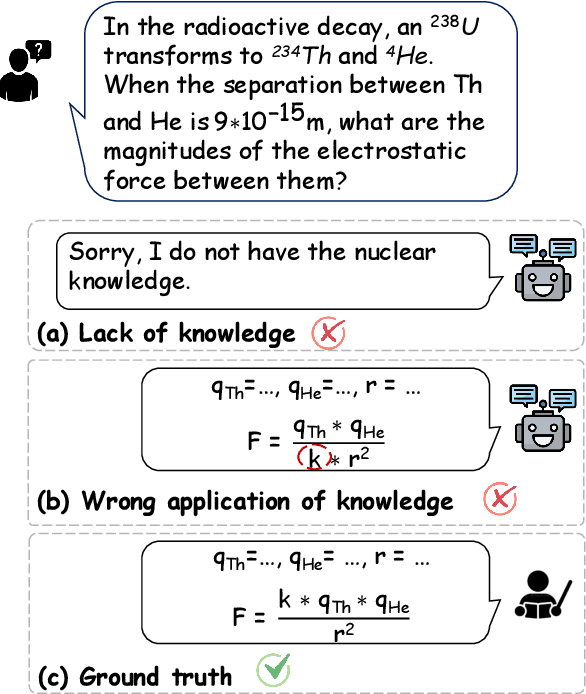

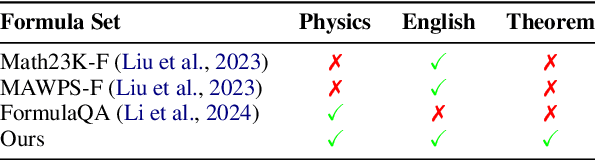

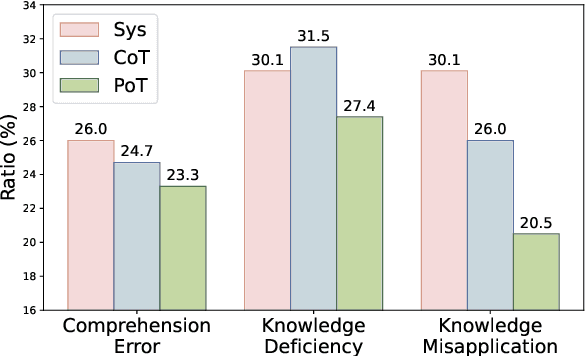

Abstract:Physics problems constitute a significant aspect of reasoning, necessitating complicated reasoning ability and abundant physics knowledge. However, existing large language models (LLMs) frequently fail due to a lack of knowledge or incorrect knowledge application. To mitigate these issues, we propose Physics Reasoner, a knowledge-augmented framework to solve physics problems with LLMs. Specifically, the proposed framework constructs a comprehensive formula set to provide explicit physics knowledge and utilizes checklists containing detailed instructions to guide effective knowledge application. Namely, given a physics problem, Physics Reasoner solves it through three stages: problem analysis, formula retrieval, and guided reasoning. During the process, checklists are employed to enhance LLMs' self-improvement in the analysis and reasoning stages. Empirically, Physics Reasoner mitigates the issues of insufficient knowledge and incorrect application, achieving state-of-the-art performance on SciBench with an average accuracy improvement of 5.8%.

Co-Matching: Towards Human-Machine Collaborative Legal Case Matching

May 16, 2024Abstract:Recent efforts have aimed to improve AI machines in legal case matching by integrating legal domain knowledge. However, successful legal case matching requires the tacit knowledge of legal practitioners, which is difficult to verbalize and encode into machines. This emphasizes the crucial role of involving legal practitioners in high-stakes legal case matching. To address this, we propose a collaborative matching framework called Co-Matching, which encourages both the machine and the legal practitioner to participate in the matching process, integrating tacit knowledge. Unlike existing methods that rely solely on the machine, Co-Matching allows both the legal practitioner and the machine to determine key sentences and then combine them probabilistically. Co-Matching introduces a method called ProtoEM to estimate human decision uncertainty, facilitating the probabilistic combination. Experimental results demonstrate that Co-Matching consistently outperforms existing legal case matching methods, delivering significant performance improvements over human- and machine-based matching in isolation (on average, +5.51% and +8.71%, respectively). Further analysis shows that Co-Matching also ensures better human-machine collaboration effectiveness. Our study represents a pioneering effort in human-machine collaboration for the matching task, marking a milestone for future collaborative matching studies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge