Xingchao Peng

Network Architecture Search for Domain Adaptation

Aug 13, 2020

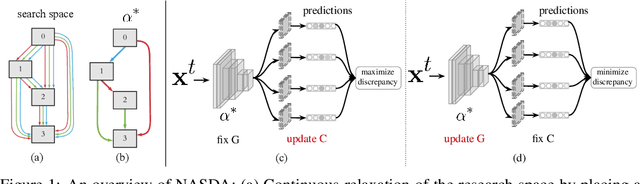

Abstract: Deep networks have been used to learn transferable representations for domain adaptation. Existing deep domain adaptation methods systematically employ popular hand-crafted networks designed specifically for image-classification tasks, leading to sub-optimal domain adaptation performance. In this paper, we present Neural Architecture Search for Domain Adaptation (NASDA), a principled framework that leverages differentiable neural architecture search to derive the optimal network architecture for the domain adaptation task. NASDA is designed with two novel training strategies: neural architecture search with multi-kernel Maximum Mean Discrepancy to derive the optimal architecture, and adversarial training between a feature generator and a batch of classifiers to consolidate the feature generator. We demonstrate experimentally that NASDA leads to state-of-the-art performance on several domain adaptation benchmarks.
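The multi-kernel Maximum Mean Discrepancy mentioned in this abstract can be illustrated with a small sketch. This is not the NASDA implementation: the function names, the equal weighting of Gaussian kernels, the bandwidth values, and the use of the biased estimator are all assumptions made for illustration.

```python
import math

def gaussian_kernel(x, y, bandwidth):
    """Gaussian (RBF) kernel between two feature vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))

def multi_kernel(x, y, bandwidths):
    """Equal-weight average of Gaussian kernels (the weighting is an assumption)."""
    return sum(gaussian_kernel(x, y, s) for s in bandwidths) / len(bandwidths)

def mk_mmd_squared(source, target, bandwidths=(0.5, 1.0, 2.0)):
    """Biased estimate of squared MMD between two sets of feature vectors."""
    def mean_kernel(a, b):
        total = sum(multi_kernel(x, y, bandwidths) for x in a for y in b)
        return total / (len(a) * len(b))
    return (mean_kernel(source, source)
            + mean_kernel(target, target)
            - 2.0 * mean_kernel(source, target))

# Hypothetical toy features: the shifted set should score a larger discrepancy.
source = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
shifted = [[3.0, 3.0], [4.0, 3.0], [3.0, 4.0]]
same_gap = mk_mmd_squared(source, source)
shifted_gap = mk_mmd_squared(source, shifted)
```

A value near zero suggests the two feature sets are distributed similarly under the chosen kernels; larger values indicate a larger domain gap.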

Domain2Vec: Domain Embedding for Unsupervised Domain Adaptation

Jul 17, 2020

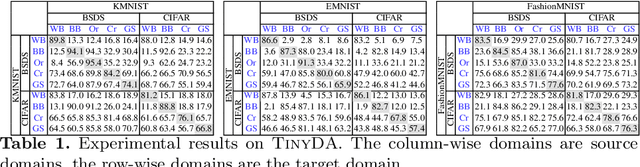

Abstract: Conventional unsupervised domain adaptation (UDA) studies the knowledge transfer between a limited number of domains. This neglects the more practical scenario where data are distributed in numerous different domains in the real world. The domain similarity between those domains is critical for domain adaptation performance. To describe and learn relations between different domains, we propose a novel Domain2Vec model to provide vectorial representations of visual domains based on joint learning of feature disentanglement and Gram matrix. To evaluate the effectiveness of our Domain2Vec model, we create two large-scale cross-domain benchmarks. The first one is TinyDA, which contains 54 domains and about one million MNIST-style images. The second benchmark is DomainBank, which is collected from 56 existing vision datasets. We demonstrate that our embedding is capable of predicting domain similarities that match our intuition about visual relations between different domains. Extensive experiments are conducted to demonstrate the power of our new datasets in benchmarking state-of-the-art multi-source domain adaptation methods, as well as the advantage of our proposed model.
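As a rough illustration of how a Gram matrix can turn a set of features into a fixed-length vector, the sketch below averages feature outer products and flattens the upper triangle. This is only an assumed simplification; the abstract does not specify how Domain2Vec builds its embedding, and the names `gram_matrix` and `domain_embedding` are hypothetical.

```python
def gram_matrix(features):
    """Average outer product of feature vectors: G[i][j] = mean(f[i] * f[j])."""
    n = len(features)
    dim = len(features[0])
    return [
        [sum(row[i] * row[j] for row in features) / n for j in range(dim)]
        for i in range(dim)
    ]

def domain_embedding(features):
    """Flatten the upper triangle of the symmetric Gram matrix into a vector."""
    g = gram_matrix(features)
    return [g[i][j] for i in range(len(g)) for j in range(i, len(g))]

# Hypothetical two-dimensional features from a single domain.
embedding = domain_embedding([[1.0, 2.0], [3.0, 4.0]])
```

Embeddings built this way can be compared with any vector distance to get a crude similarity score between domains.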

Learning Domain Adaptive Features with Unlabeled Domain Bridges

Dec 10, 2019

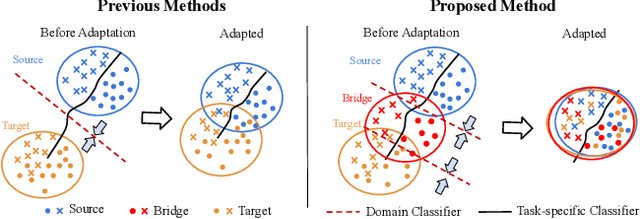

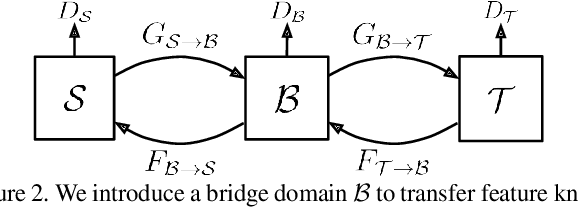

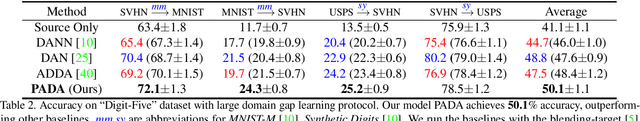

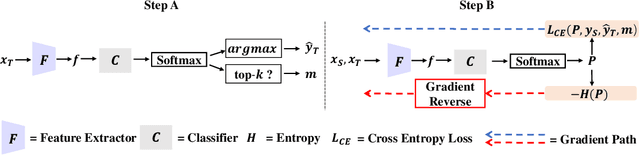

Abstract: Conventional cross-domain image-to-image translation or unsupervised domain adaptation methods assume that the source domain and target domain are closely related. This neglects a practical scenario where the domain discrepancy between the source and target is excessively large. In this paper, we propose a novel approach to learn domain adaptive features between the largely-gapped source and target domains with unlabeled domain bridges. Firstly, we introduce the framework of Cycle-consistency Flow Generative Adversarial Networks (CFGAN) that utilizes domain bridges to perform image-to-image translation between two distantly distributed domains. Secondly, we propose the Prototypical Adversarial Domain Adaptation (PADA) model which utilizes unlabeled bridge domains to align feature distribution between source and target with a large discrepancy. Extensive quantitative and qualitative experiments are conducted to demonstrate the effectiveness of our proposed models.

Federated Adversarial Domain Adaptation

Nov 05, 2019

Abstract: Federated learning improves data privacy and efficiency in machine learning performed over networks of distributed devices, such as mobile phones, IoT, and wearable devices. Yet models trained with federated learning can still fail to generalize to new devices due to the problem of domain shift. Domain shift occurs when the labeled data collected by source nodes statistically differs from the target node's unlabeled data. In this work, we present a principled approach to the problem of federated domain adaptation, which aims to align the representations learned among the different nodes with the data distribution of the target node. Our approach extends adversarial adaptation techniques to the constraints of the federated setting. In addition, we devise a dynamic attention mechanism and leverage feature disentanglement to enhance knowledge transfer. Empirically, we perform extensive experiments on several image and text classification tasks and show promising results under the unsupervised federated domain adaptation setting.

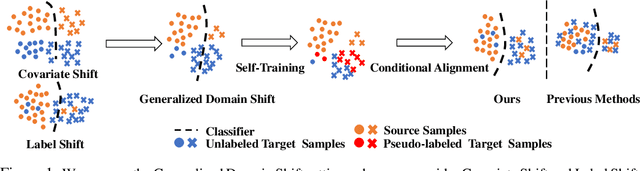

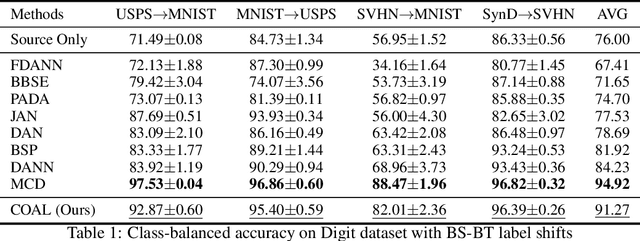

Generalized Domain Adaptation with Covariate and Label Shift CO-ALignment

Oct 23, 2019

Abstract: Unsupervised knowledge transfer has great potential to improve the generalizability of deep models to novel domains. Yet the current literature assumes that the label distribution is domain-invariant and only aligns the covariate or vice versa. In this paper, we explore the task of Generalized Domain Adaptation (GDA): How to transfer knowledge across different domains in the presence of both covariate and label shift? We propose a covariate and label distribution CO-ALignment (COAL) model to tackle this problem. Our model leverages prototype-based conditional alignment and label distribution estimation to diminish the covariate and label shifts, respectively. We demonstrate experimentally that when both types of shift exist in the data, COAL leads to state-of-the-art performance on several cross-domain benchmarks.

Domain Agnostic Learning with Disentangled Representations

Apr 28, 2019

Abstract: Unsupervised model transfer has the potential to greatly improve the generalizability of deep models to novel domains. Yet the current literature assumes that the separation of target data into distinct domains is known a priori. In this paper, we propose the task of Domain-Agnostic Learning (DAL): How to transfer knowledge from a labeled source domain to unlabeled data from arbitrary target domains? To tackle this problem, we devise a novel Deep Adversarial Disentangled Autoencoder (DADA) capable of disentangling domain-specific features from class identity. We demonstrate experimentally that when the target domain labels are unknown, DADA leads to state-of-the-art performance on several image classification datasets.

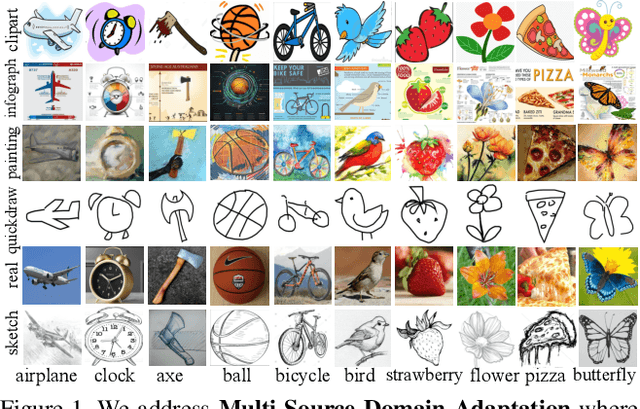

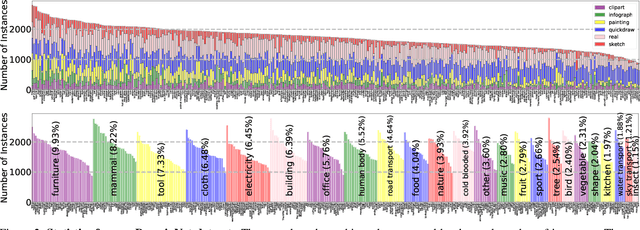

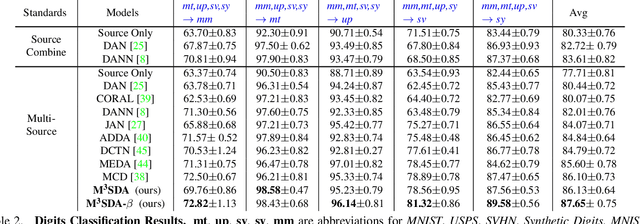

Moment Matching for Multi-Source Domain Adaptation

Dec 04, 2018

Abstract: Conventional unsupervised domain adaptation (UDA) assumes that training data are sampled from a single domain. This neglects the more practical scenario where training data are collected from multiple sources, requiring multi-source domain adaptation. We make three major contributions towards addressing this problem. First, we propose a new deep learning approach, Moment Matching for Multi-Source Domain Adaptation (M3SDA), which aims to transfer knowledge learned from multiple labeled source domains to an unlabeled target domain by dynamically aligning moments of their feature distributions. Second, we provide a sound theoretical analysis of moment-related error bounds for multi-source domain adaptation. Third, we collect and annotate by far the largest UDA dataset with six distinct domains and approximately 0.6 million images distributed among 345 categories, addressing the gap in data availability for multi-source UDA research. Extensive experiments are performed to demonstrate the effectiveness of our proposed model, which outperforms existing state-of-the-art methods by a large margin.
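The idea of aligning moments of feature distributions across several sources and one target can be sketched as follows. This is a minimal illustration under stated assumptions, not the M3SDA training objective: it uses per-dimension raw moments up to order two, Euclidean distances, and equal averaging over source-target and source-source pairs, and all function names are hypothetical.

```python
import math
from itertools import combinations

def raw_moment(features, order):
    """Per-dimension raw moment E[x^order] over a set of feature vectors."""
    n = len(features)
    dim = len(features[0])
    return [sum(row[d] ** order for row in features) / n for d in range(dim)]

def l2(u, v):
    """Euclidean distance between two vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def moment_distance(sources, target, max_order=2):
    """Sum over moment orders of mean source-target and source-source gaps."""
    total = 0.0
    for k in range(1, max_order + 1):
        target_m = raw_moment(target, k)
        source_ms = [raw_moment(s, k) for s in sources]
        # Gap between each source domain and the target domain.
        total += sum(l2(m, target_m) for m in source_ms) / len(source_ms)
        # Gap between every pair of source domains (skipped for one source).
        pairs = list(combinations(source_ms, 2))
        if pairs:
            total += sum(l2(a, b) for a, b in pairs) / len(pairs)
    return total

# Hypothetical one-dimensional features for two sources and one target.
sources = [[[0.0], [2.0]], [[1.0], [3.0]]]
target = [[1.0], [1.0]]
distance = moment_distance(sources, target)
```

In a training loop, a quantity like this would typically be added to the classification loss so that the feature extractor is encouraged to reduce it.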

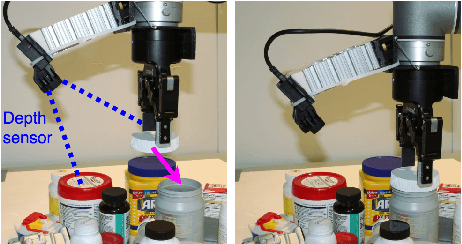

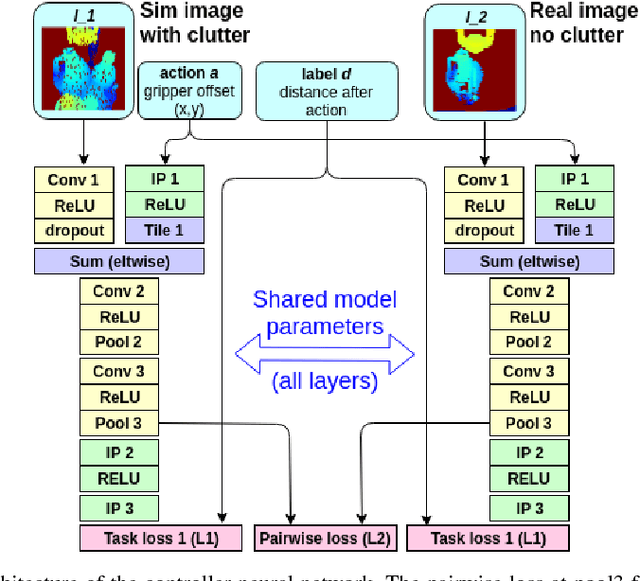

Adapting control policies from simulation to reality using a pairwise loss

Oct 26, 2018

Abstract: This paper proposes an approach to domain transfer based on a pairwise loss function that helps transfer control policies learned in simulation onto a real robot. We explore the idea in the context of a 'category level' manipulation task where a control policy is learned that enables a robot to perform a mating task involving novel objects. We explore the case where depth images are used as the main form of sensor input. Our experimental results demonstrate that the proposed method consistently outperforms baseline methods that train only in simulation or that combine real and simulated data in a naive way.

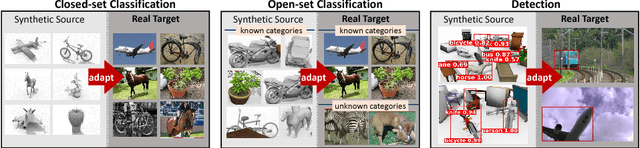

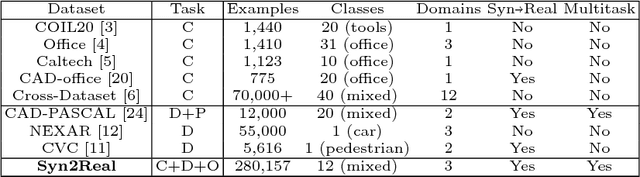

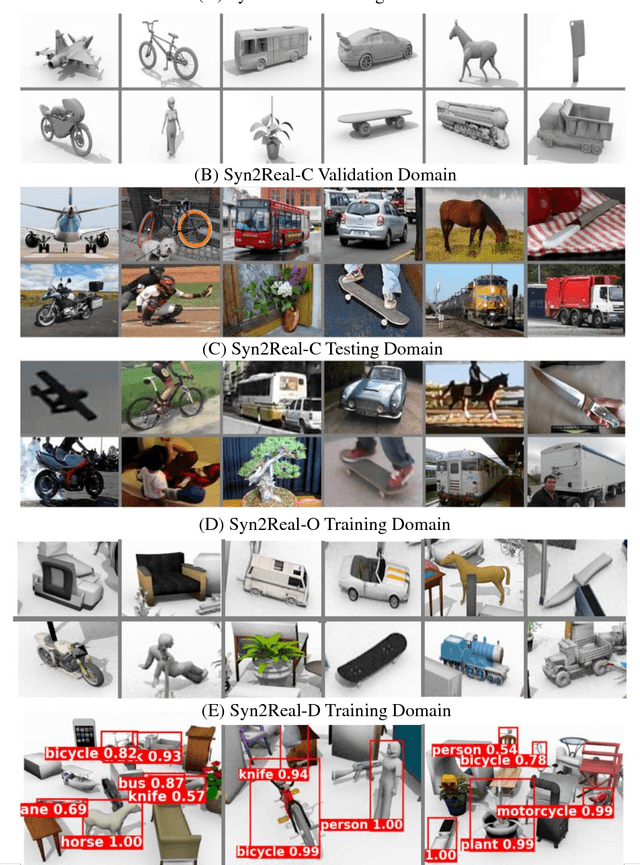

Syn2Real: A New Benchmark for Synthetic-to-Real Visual Domain Adaptation

Jun 26, 2018

Abstract: Unsupervised transfer of object recognition models from synthetic to real data is an important problem with many potential applications. The challenge is how to "adapt" a model trained on simulated images so that it performs well on real-world data without any additional supervision. Unfortunately, current benchmarks for this problem are limited in size and task diversity. In this paper, we present a new large-scale benchmark called Syn2Real, which consists of a synthetic domain rendered from 3D object models and two real-image domains containing the same object categories. We define three related tasks on this benchmark: closed-set object classification, open-set object classification, and object detection. Our evaluation of multiple state-of-the-art methods reveals a large gap in adaptation performance between the easier closed-set classification task and the more difficult open-set and detection tasks. We conclude that developing adaptation methods that work well across all three tasks presents a significant future challenge for syn2real domain transfer.

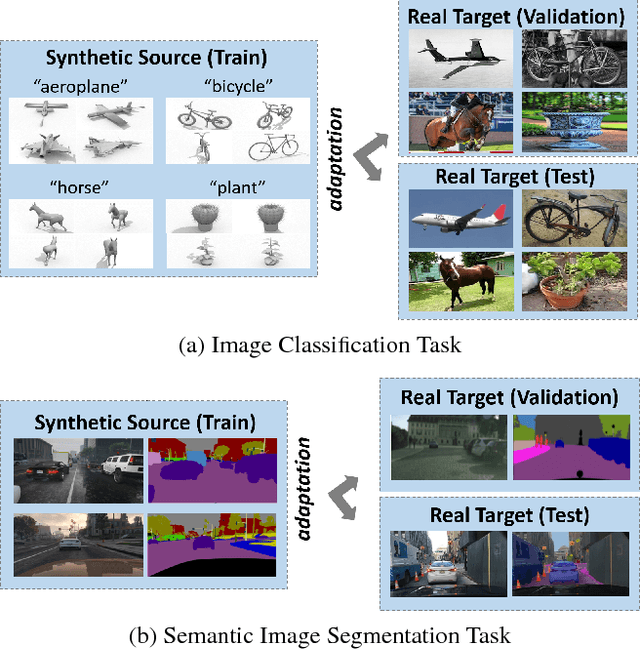

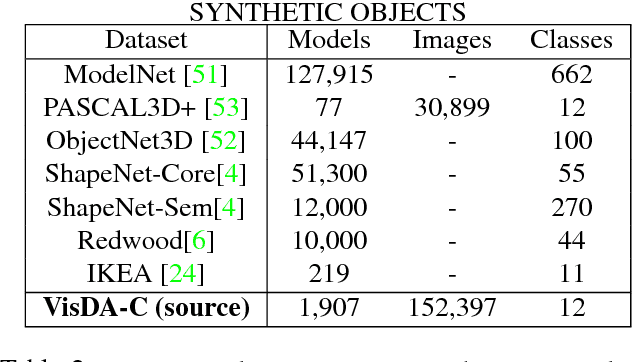

VisDA: The Visual Domain Adaptation Challenge

Nov 29, 2017

Abstract: We present the 2017 Visual Domain Adaptation (VisDA) dataset and challenge, a large-scale testbed for unsupervised domain adaptation across visual domains. Unsupervised domain adaptation aims to solve the real-world problem of domain shift, where machine learning models trained on one domain must be transferred and adapted to a novel visual domain without additional supervision. The VisDA2017 challenge is focused on the simulation-to-reality shift and has two associated tasks: image classification and image segmentation. The goal in both tracks is to first train a model on simulated, synthetic data in the source domain and then adapt it to perform well on real image data in the unlabeled test domain. Our dataset is the largest one to date for cross-domain object classification, with over 280K images across 12 categories in the combined training, validation and testing domains. The image segmentation dataset is also large-scale with over 30K images across 18 categories in the three domains. We compare VisDA to existing cross-domain adaptation datasets and provide a baseline performance analysis using various domain adaptation models that are currently popular in the field.
