Moment Matching for Multi-Source Domain Adaptation

Paper and Code

Dec 04, 2018

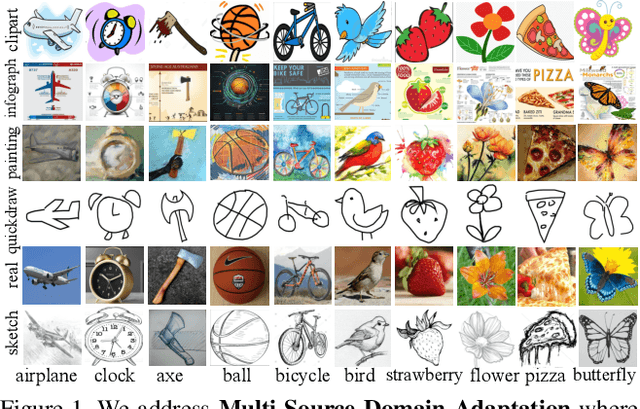

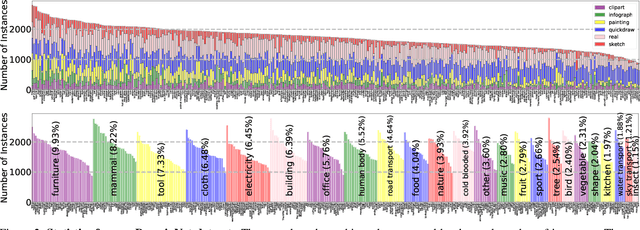

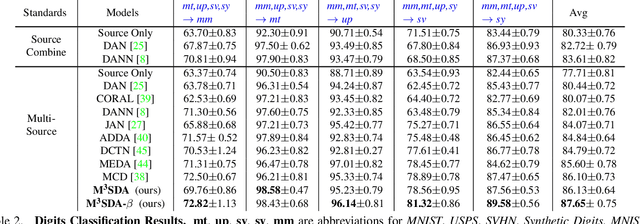

Conventional unsupervised domain adaptation (UDA) assumes that training data are sampled from a single domain. This neglects the more practical scenario where training data are collected from multiple sources, requiring multi-source domain adaptation. We make three major contributions towards addressing this problem. First, we propose a new deep learning approach, Moment Matching for Multi-Source Domain Adaptation (M3SDA), which aims to transfer knowledge learned from multiple labeled source domains to an unlabeled target domain by dynamically aligning moments of their feature distributions. Second, we provide a sound theoretical analysis of moment-related error bounds for multi-source domain adaptation. Third, we collect and annotate by far the largest UDA dataset, with six distinct domains and approximately 0.6 million images distributed among 345 categories, addressing the gap in data availability for multi-source UDA research. Extensive experiments demonstrate the effectiveness of our proposed model, which outperforms existing state-of-the-art methods by a large margin.
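To make "aligning moments of feature distributions" concrete, the following is a minimal sketch and not the authors' implementation. It assumes that features are plain lists of floats, that only the first and second raw moments are matched, and that the loss averages the source-to-target gaps and the pairwise source-to-source gaps. The names `raw_moment`, `l2`, and `moment_distance` are hypothetical and chosen for illustration only.

```python
import math
from itertools import combinations


def raw_moment(features, k):
    """Per-dimension k-th raw moment E[x^k] over a batch of feature vectors."""
    n = len(features)
    dim = len(features[0])
    return [sum(row[d] ** k for row in features) / n for d in range(dim)]


def l2(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def moment_distance(sources, target, orders=(1, 2)):
    """Illustrative moment-matching loss (assumed form, see lead-in).

    For each moment order k, add
      * the mean distance between each source's moment and the target's, and
      * the mean distance between every pair of source moments.
    """
    pairs = list(combinations(range(len(sources)), 2))
    total = 0.0
    for k in orders:
        src = [raw_moment(s, k) for s in sources]
        tgt = raw_moment(target, k)
        total += sum(l2(m, tgt) for m in src) / len(src)
        if pairs:  # a single source has no source-to-source term
            total += sum(l2(src[i], src[j]) for i, j in pairs) / len(pairs)
    return total


# Two toy source domains and one target domain, each a batch of 2-D features.
sources = [
    [[0.0, 0.0], [2.0, 2.0]],  # mean 1, second moment 2
    [[1.0, 1.0], [1.0, 1.0]],  # mean 1, second moment 1
]
target = [[1.0, 1.0], [1.0, 1.0]]
loss = moment_distance(sources, target)
```

In a training loop, a term of this kind would typically be added to the supervised classification loss on the labeled source domains, so that the feature extractor is pushed to produce features whose statistics agree across all domains. In this toy example the first moments already agree, so the loss comes entirely from the second-moment gap of the first source.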
