Xiatao Sun

RuleSmith: Multi-Agent LLMs for Automated Game Balancing

Feb 05, 2026Abstract:Game balancing is a longstanding challenge requiring repeated playtesting, expert intuition, and extensive manual tuning. We introduce RuleSmith, the first framework that achieves automated game balancing by leveraging the reasoning capabilities of multi-agent LLMs. It couples a game engine, multi-agent LLMs self-play, and Bayesian optimization operating over a multi-dimensional rule space. As a proof of concept, we instantiate RuleSmith on CivMini, a simplified civilization-style game containing heterogeneous factions, economy systems, production rules, and combat mechanics, all governed by tunable parameters. LLM agents interpret textual rulebooks and game states to generate actions, to conduct fast evaluation of balance metrics such as win-rate disparities. To search the parameter landscape efficiently, we integrate Bayesian optimization with acquisition-based adaptive sampling and discrete projection: promising candidates receive more evaluation games for accurate assessment, while exploratory candidates receive fewer games for efficient exploration. Experiments show that RuleSmith converges to highly balanced configurations and provides interpretable rule adjustments that can be directly applied to downstream game systems. Our results illustrate that LLM simulation can serve as a powerful surrogate for automating design and balancing in complex multi-agent environments.

Subsecond 3D Mesh Generation for Robot Manipulation

Dec 30, 2025Abstract:3D meshes are a fundamental representation widely used in computer science and engineering. In robotics, they are particularly valuable because they capture objects in a form that aligns directly with how robots interact with the physical world, enabling core capabilities such as predicting stable grasps, detecting collisions, and simulating dynamics. Although automatic 3D mesh generation methods have shown promising progress in recent years, potentially offering a path toward real-time robot perception, two critical challenges remain. First, generating high-fidelity meshes is prohibitively slow for real-time use, often requiring tens of seconds per object. Second, mesh generation by itself is insufficient. In robotics, a mesh must be contextually grounded, i.e., correctly segmented from the scene and registered with the proper scale and pose. Additionally, unless these contextual grounding steps remain efficient, they simply introduce new bottlenecks. In this work, we introduce an end-to-end system that addresses these challenges, producing a high-quality, contextually grounded 3D mesh from a single RGB-D image in under one second. Our pipeline integrates open-vocabulary object segmentation, accelerated diffusion-based mesh generation, and robust point cloud registration, each optimized for both speed and accuracy. We demonstrate its effectiveness in a real-world manipulation task, showing that it enables meshes to be used as a practical, on-demand representation for robotics perception and planning.

PRISM-DP: Spatial Pose-based Observations for Diffusion-Policies via Segmentation, Mesh Generation, and Pose Tracking

May 01, 2025Abstract:Diffusion-based visuomotor policies generate robot motions by learning to denoise action-space trajectories conditioned on observations. These observations are commonly streams of RGB images, whose high dimensionality includes substantial task-irrelevant information, requiring large models to extract relevant patterns. In contrast, using more structured observations, such as the spatial poses (positions and orientations) of key objects over time, enables training more compact policies that can recognize relevant patterns with fewer parameters. However, obtaining accurate object poses in open-set, real-world environments remains challenging. For instance, it is impractical to assume that all relevant objects are equipped with markers, and recent learning-based 6D pose estimation and tracking methods often depend on pre-scanned object meshes, requiring manual reconstruction. In this work, we propose PRISM-DP, an approach that leverages segmentation, mesh generation, pose estimation, and pose tracking models to enable compact diffusion policy learning directly from the spatial poses of task-relevant objects. Crucially, because PRISM-DP uses a mesh generation model, it eliminates the need for manual mesh processing or creation, improving scalability and usability in open-set, real-world environments. Experiments across a range of tasks in both simulation and real-world settings show that PRISM-DP outperforms high-dimensional image-based diffusion policies and achieves performance comparable to policies trained with ground-truth state information. We conclude with a discussion of the broader implications and limitations of our approach.

Dynamic Rank Adjustment in Diffusion Policies for Efficient and Flexible Training

Feb 07, 2025

Abstract:Diffusion policies trained via offline behavioral cloning have recently gained traction in robotic motion generation. While effective, these policies typically require a large number of trainable parameters. This model size affords powerful representations but also incurs high computational cost during training. Ideally, it would be beneficial to dynamically adjust the trainable portion as needed, balancing representational power with computational efficiency. For example, while overparameterization enables diffusion policies to capture complex robotic behaviors via offline behavioral cloning, the increased computational demand makes online interactive imitation learning impractical due to longer training time. To address this challenge, we present a framework, called DRIFT, that uses the Singular Value Decomposition to enable dynamic rank adjustment during diffusion policy training. We implement and demonstrate the benefits of this framework in DRIFT-DAgger, an imitation learning algorithm that can seamlessly slide between an offline bootstrapping phase and an online interactive phase. We perform extensive experiments to better understand the proposed framework, and demonstrate that DRIFT-DAgger achieves improved sample efficiency and faster training with minimal impact on model performance.

A Comparative Study on State-Action Spaces for Learning Viewpoint Selection and Manipulation with Diffusion Policy

Sep 22, 2024Abstract:Robotic manipulation tasks often rely on static cameras for perception, which can limit flexibility, particularly in scenarios like robotic surgery and cluttered environments where mounting static cameras is impractical. Ideally, robots could jointly learn a policy for dynamic viewpoint and manipulation. However, it remains unclear which state-action space is most suitable for this complex learning process. To enable manipulation with dynamic viewpoints and to better understand impacts from different state-action spaces on this policy learning process, we conduct a comparative study on the state-action spaces for policy learning and their impacts on the performance of visuomotor policies that integrate viewpoint selection with manipulation. Specifically, we examine the configuration space of the robotic system, the end-effector space with a dual-arm Inverse Kinematics (IK) solver, and the reduced end-effector space with a look-at IK solver to optimize rotation for viewpoint selection. We also assess variants with different rotation representations. Our results demonstrate that state-action spaces utilizing Euler angles with the look-at IK achieve superior task success rates compared to other spaces. Further analysis suggests that these performance differences are driven by inherent variations in the high-frequency components across different state-action spaces and rotation representations.

Developing Trajectory Planning with Behavioral Cloning and Proximal Policy Optimization for Path-Tracking and Static Obstacle Nudging

Sep 09, 2024

Abstract:End-to-end approaches with Reinforcement Learning (RL) and Imitation Learning (IL) have gained increasing popularity in autonomous driving. However, they do not involve explicit reasoning like classic robotics workflow, nor planning with horizons, leading strategies implicit and myopic. In this paper, we introduce our trajectory planning method that uses Behavioral Cloning (BC) for path-tracking and Proximal Policy Optimization (PPO) bootstrapped by BC for static obstacle nudging. It outputs lateral offset values to adjust the given reference trajectory, and performs modified path for different controllers. Our experimental results show that the algorithm can do path-tracking that mimics the expert performance, and avoiding collision to fixed obstacles by trial and errors. This method makes a good attempt at planning with learning-based methods in trajectory planning problems of autonomous driving.

Learning Optimal Trajectories for Quadrotors

Sep 26, 2023

Abstract:This paper presents a novel learning-based trajectory planning framework for quadrotors that combines model-based optimization techniques with deep learning. Specifically, we formulate the trajectory optimization problem as a quadratic programming (QP) problem with dynamic and collision-free constraints using piecewise trajectory segments through safe flight corridors [1]. We train neural networks to directly learn the time allocation for each segment to generate optimal smooth and fast trajectories. Furthermore, the constrained optimization problem is applied as a separate implicit layer for back-propagating in the network, for which the differential loss function can be obtained. We introduce an additional penalty function to penalize time allocations which result in solutions that violate the constraints to accelerate the training process and increase the success rate of the original optimization problem. To this end, we enable a flexible number of sequences of piece-wise trajectories by adding an extra end-of-sentence token during training. We illustrate the performance of the proposed method via extensive simulation and experimentation and show that it works in real time in diverse, cluttered environments.

MEGA-DAgger: Imitation Learning with Multiple Imperfect Experts

Mar 05, 2023

Abstract:Imitation learning has been widely applied to various autonomous systems thanks to recent development in interactive algorithms that address covariate shift and compounding errors induced by traditional approaches like behavior cloning. However, existing interactive imitation learning methods assume access to one perfect expert. Whereas in reality, it is more likely to have multiple imperfect experts instead. In this paper, we propose MEGA-DAgger, a new DAgger variant that is suitable for interactive learning with multiple imperfect experts. First, unsafe demonstrations are filtered while aggregating the training data, so the imperfect demonstrations have little influence when training the novice policy. Next, experts are evaluated and compared on scenarios-specific metrics to resolve the conflicted labels among experts. Through experiments in autonomous racing scenarios, we demonstrate that policy learned using MEGA-DAgger can outperform both experts and policies learned using the state-of-the-art interactive imitation learning algorithm. The supplementary video can be found at https://youtu.be/pYQiPSHk6dU.

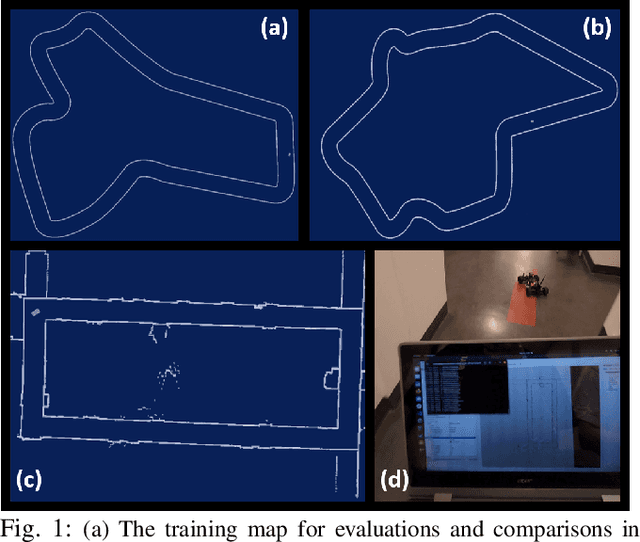

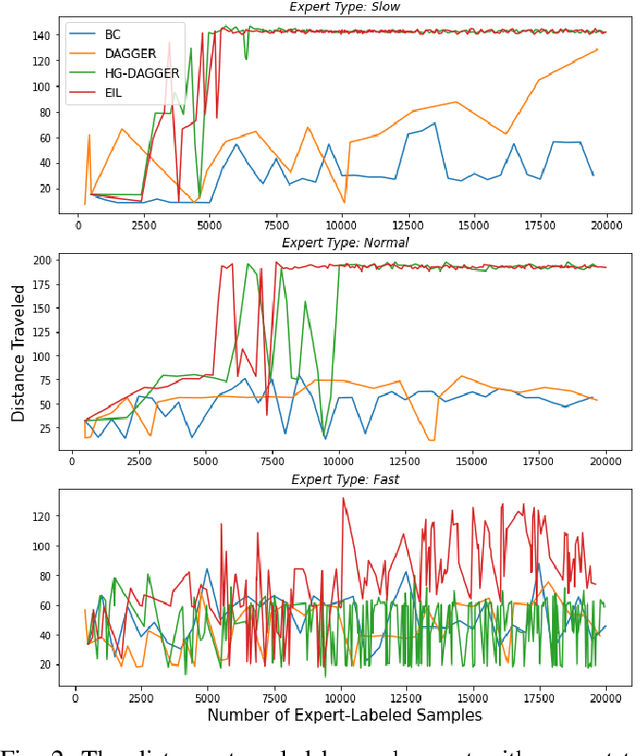

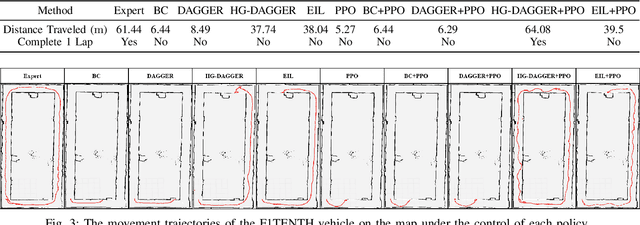

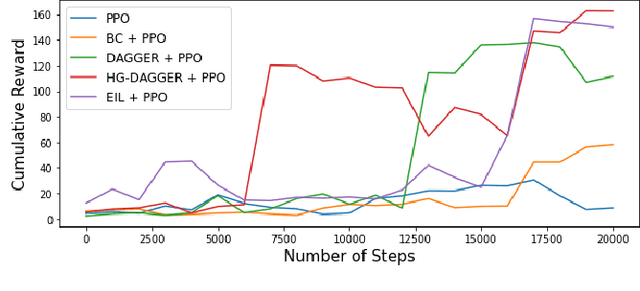

A Benchmark Comparison of Imitation Learning-based Control Policies for Autonomous Racing

Sep 29, 2022

Abstract:Autonomous racing with scaled race cars has gained increasing attention as an effective approach for developing perception, planning and control algorithms for safe autonomous driving at the limits of the vehicle's handling. To train agile control policies for autonomous racing, learning-based approaches largely utilize reinforcement learning, albeit with mixed results. In this study, we benchmark a variety of imitation learning policies for racing vehicles that are applied directly or for bootstrapping reinforcement learning both in simulation and on scaled real-world environments. We show that interactive imitation learning techniques outperform traditional imitation learning methods and can greatly improve the performance of reinforcement learning policies by bootstrapping thanks to its better sample efficiency. Our benchmarks provide a foundation for future research on autonomous racing using Imitation Learning and Reinforcement Learning.

Multi-Agent Exploration of an Unknown Sparse Landmark Complex via Deep Reinforcement Learning

Sep 23, 2022

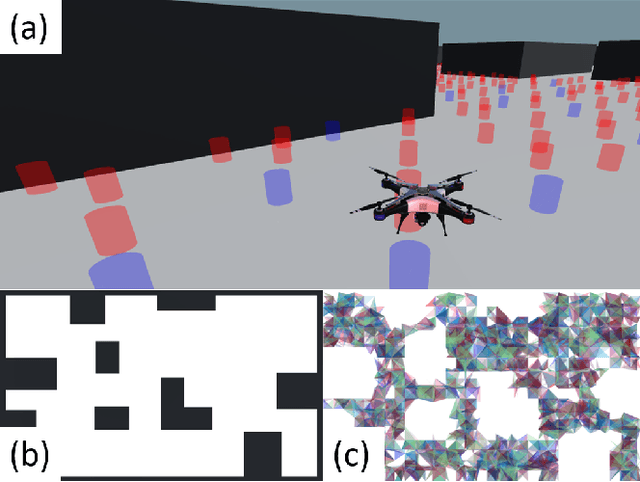

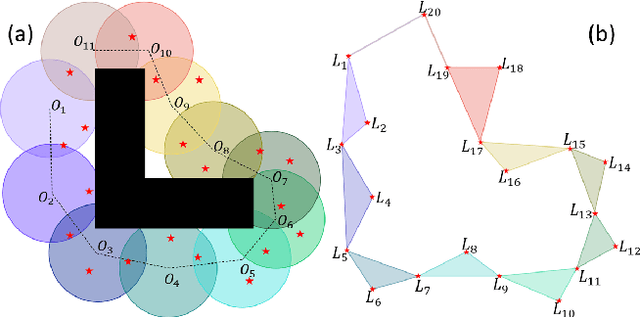

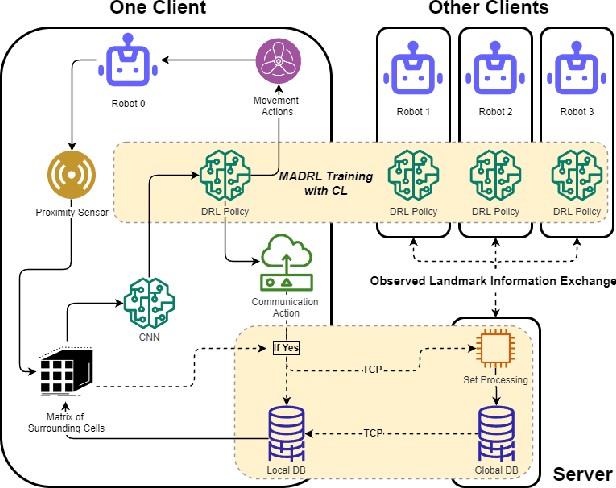

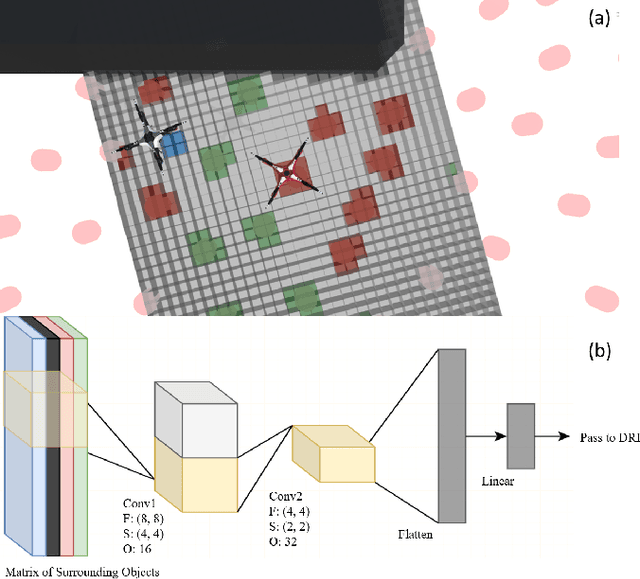

Abstract:In recent years Landmark Complexes have been successfully employed for localization-free and metric-free autonomous exploration using a group of sensing-limited and communication-limited robots in a GPS-denied environment. To ensure rapid and complete exploration, existing works make assumptions on the density and distribution of landmarks in the environment. These assumptions may be overly restrictive, especially in hazardous environments where landmarks may be destroyed or completely missing. In this paper, we first propose a deep reinforcement learning framework for multi-agent cooperative exploration in environments with sparse landmarks while reducing client-server communication. By leveraging recent development on partial observability and credit assignment, our framework can train the exploration policy efficiently for multi-robot systems. The policy receives individual rewards from actions based on a proximity sensor with limited range and resolution, which is combined with group rewards to encourage collaborative exploration and construction of the Landmark Complex through observation of 0-, 1- and 2-dimensional simplices. In addition, we employ a three-stage curriculum learning strategy to mitigate the reward sparsity by gradually adding random obstacles and destroying random landmarks. Experiments in simulation demonstrate that our method outperforms the state-of-the-art landmark complex exploration method in efficiency among different environments with sparse landmarks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge