Wenpeng Xiao

DreamLight: Towards Harmonious and Consistent Image Relighting

Jun 17, 2025Abstract:We introduce a model named DreamLight for universal image relighting in this work, which can seamlessly composite subjects into a new background while maintaining aesthetic uniformity in terms of lighting and color tone. The background can be specified by natural images (image-based relighting) or generated from unlimited text prompts (text-based relighting). Existing studies primarily focus on image-based relighting, while with scant exploration into text-based scenarios. Some works employ intricate disentanglement pipeline designs relying on environment maps to provide relevant information, which grapples with the expensive data cost required for intrinsic decomposition and light source. Other methods take this task as an image translation problem and perform pixel-level transformation with autoencoder architecture. While these methods have achieved decent harmonization effects, they struggle to generate realistic and natural light interaction effects between the foreground and background. To alleviate these challenges, we reorganize the input data into a unified format and leverage the semantic prior provided by the pretrained diffusion model to facilitate the generation of natural results. Moreover, we propose a Position-Guided Light Adapter (PGLA) that condenses light information from different directions in the background into designed light query embeddings, and modulates the foreground with direction-biased masked attention. In addition, we present a post-processing module named Spectral Foreground Fixer (SFF) to adaptively reorganize different frequency components of subject and relighted background, which helps enhance the consistency of foreground appearance. Extensive comparisons and user study demonstrate that our DreamLight achieves remarkable relighting performance.

Automatic Animation of Hair Blowing in Still Portrait Photos

Sep 25, 2023

Abstract:We propose a novel approach to animate human hair in a still portrait photo. Existing work has largely studied the animation of fluid elements such as water and fire. However, hair animation for a real image remains underexplored, which is a challenging problem, due to the high complexity of hair structure and dynamics. Considering the complexity of hair structure, we innovatively treat hair wisp extraction as an instance segmentation problem, where a hair wisp is referred to as an instance. With advanced instance segmentation networks, our method extracts meaningful and natural hair wisps. Furthermore, we propose a wisp-aware animation module that animates hair wisps with pleasing motions without noticeable artifacts. The extensive experiments show the superiority of our method. Our method provides the most pleasing and compelling viewing experience in the qualitative experiments and outperforms state-of-the-art still-image animation methods by a large margin in the quantitative evaluation. Project url: \url{https://nevergiveu.github.io/AutomaticHairBlowing/}

From Continuity to Editability: Inverting GANs with Consecutive Images

Aug 13, 2021

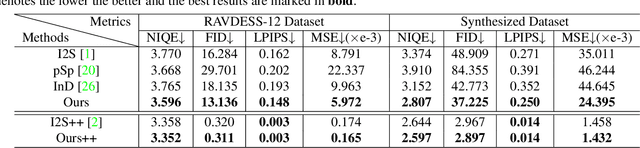

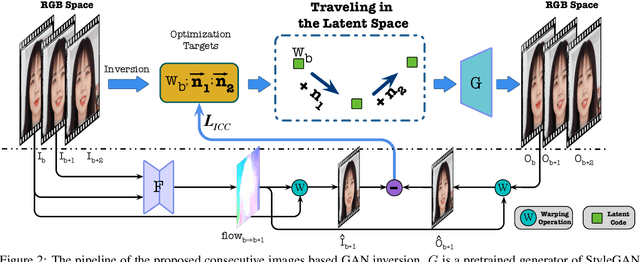

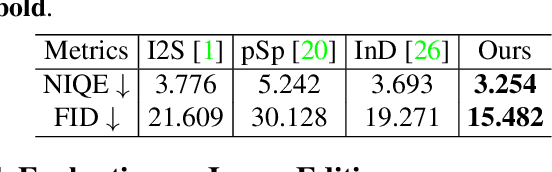

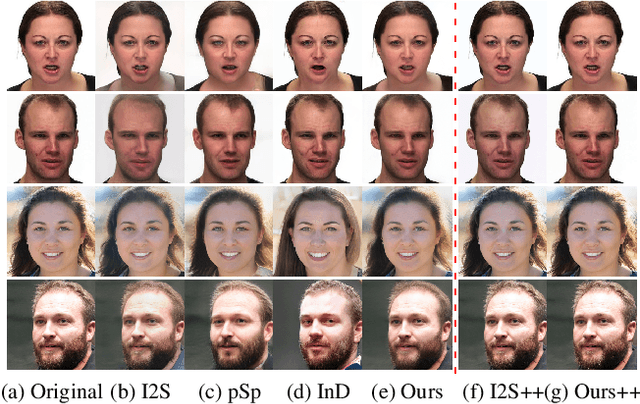

Abstract:Existing GAN inversion methods are stuck in a paradox that the inverted codes can either achieve high-fidelity reconstruction, or retain the editing capability. Having only one of them clearly cannot realize real image editing. In this paper, we resolve this paradox by introducing consecutive images (\eg, video frames or the same person with different poses) into the inversion process. The rationale behind our solution is that the continuity of consecutive images leads to inherent editable directions. This inborn property is used for two unique purposes: 1) regularizing the joint inversion process, such that each of the inverted code is semantically accessible from one of the other and fastened in a editable domain; 2) enforcing inter-image coherence, such that the fidelity of each inverted code can be maximized with the complement of other images. Extensive experiments demonstrate that our alternative significantly outperforms state-of-the-art methods in terms of reconstruction fidelity and editability on both the real image dataset and synthesis dataset. Furthermore, our method provides the first support of video-based GAN inversion, and an interesting application of unsupervised semantic transfer from consecutive images. Source code can be found at: \url{https://github.com/cnnlstm/InvertingGANs_with_ConsecutiveImgs}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge