Wei Guan

GLOW: Graph-Language Co-Reasoning for Agentic Workflow Performance Prediction

Dec 11, 2025

Abstract:Agentic Workflows (AWs) have emerged as a promising paradigm for solving complex tasks. However, the scalability of automating their generation is severely constrained by the high cost and latency of execution-based evaluation. Existing AW performance prediction methods act as surrogates but fail to simultaneously capture the intricate topological dependencies and the deep semantic logic embedded in AWs. To address this limitation, we propose GLOW, a unified framework for AW performance prediction that combines the graph-structure modeling capabilities of GNNs with the reasoning power of LLMs. Specifically, we introduce a graph-oriented LLM, instruction-tuned on graph tasks, to extract topologically aware semantic features, which are fused with GNN-encoded structural representations. A contrastive alignment strategy further refines the latent space to distinguish high-quality AWs. Extensive experiments on FLORA-Bench show that GLOW outperforms state-of-the-art baselines in prediction accuracy and ranking utility.

LogLLM: Log-based Anomaly Detection Using Large Language Models

Nov 13, 2024

Abstract:Software systems often record important runtime information in logs to help with troubleshooting. Log-based anomaly detection has become a key research area that aims to identify system issues through log data, ultimately enhancing the reliability of software systems. Traditional deep learning methods often struggle to capture the semantic information embedded in log data, which is typically organized in natural language. In this paper, we propose LogLLM, a log-based anomaly detection framework that leverages large language models (LLMs). LogLLM employs BERT for extracting semantic vectors from log messages, while utilizing Llama, a transformer decoder-based model, for classifying log sequences. Additionally, we introduce a projector to align the vector representation spaces of BERT and Llama, ensuring a cohesive understanding of log semantics. Unlike conventional methods that require log parsers to extract templates, LogLLM preprocesses log messages with regular expressions, streamlining the entire process. Our framework is trained through a novel three-stage procedure designed to enhance performance and adaptability. Experimental results across four public datasets demonstrate that LogLLM outperforms state-of-the-art methods. Even when handling unstable logs, it effectively captures the semantic meaning of log messages and detects anomalies accurately.

DABL: Detecting Semantic Anomalies in Business Processes Using Large Language Models

Jun 22, 2024Abstract:Detecting anomalies in business processes is crucial for ensuring operational success. While many existing methods rely on statistical frequency to detect anomalies, it's important to note that infrequent behavior doesn't necessarily imply undesirability. To address this challenge, detecting anomalies from a semantic viewpoint proves to be a more effective approach. However, current semantic anomaly detection methods treat a trace (i.e., process instance) as multiple event pairs, disrupting long-distance dependencies. In this paper, we introduce DABL, a novel approach for detecting semantic anomalies in business processes using large language models (LLMs). We collect 143,137 real-world process models from various domains. By generating normal traces through the playout of these process models and simulating both ordering and exclusion anomalies, we fine-tune Llama 2 using the resulting log. Through extensive experiments, we demonstrate that DABL surpasses existing state-of-the-art semantic anomaly detection methods in terms of both generalization ability and learning of given processes. Users can directly apply DABL to detect semantic anomalies in their own datasets without the need for additional training. Furthermore, DABL offers the capability to interpret the causes of anomalies in natural language, providing valuable insights into the detected anomalies.

AIM 2020 Challenge on Real Image Super-Resolution: Methods and Results

Sep 25, 2020

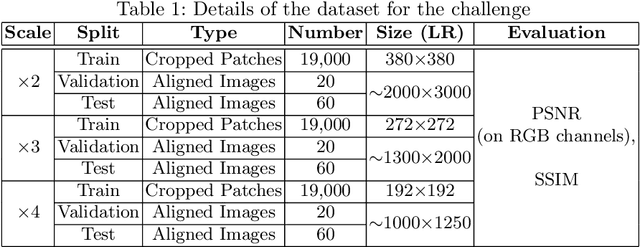

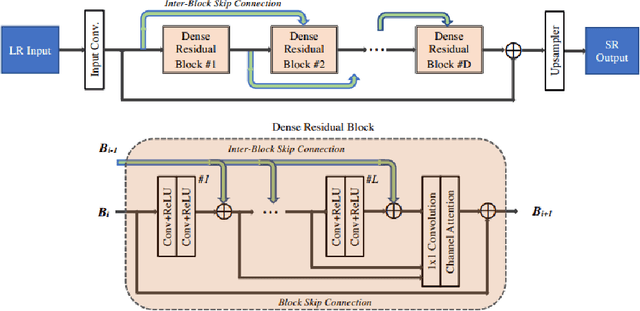

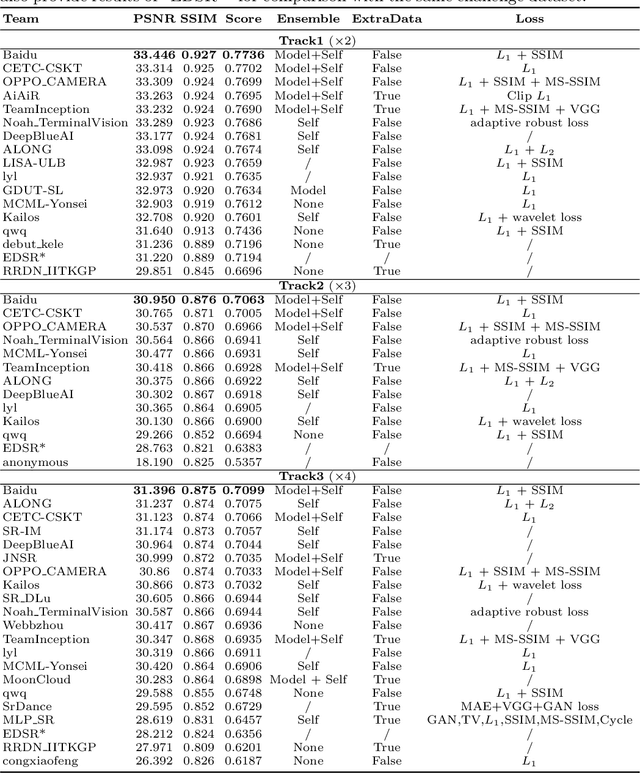

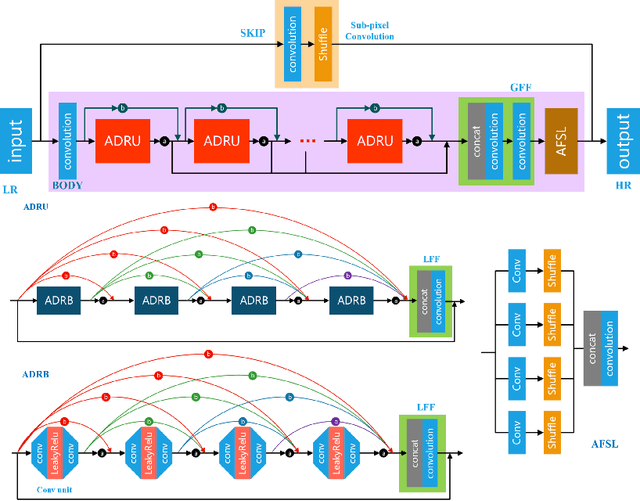

Abstract:This paper introduces the real image Super-Resolution (SR) challenge that was part of the Advances in Image Manipulation (AIM) workshop, held in conjunction with ECCV 2020. This challenge involves three tracks to super-resolve an input image for $\times$2, $\times$3 and $\times$4 scaling factors, respectively. The goal is to attract more attention to realistic image degradation for the SR task, which is much more complicated and challenging, and contributes to real-world image super-resolution applications. 452 participants were registered for three tracks in total, and 24 teams submitted their results. They gauge the state-of-the-art approaches for real image SR in terms of PSNR and SSIM.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge