Tommaso Carraro

LTNtorch: PyTorch Implementation of Logic Tensor Networks

Sep 24, 2024

Abstract: Logic Tensor Networks (LTN) is a Neuro-Symbolic framework that effectively incorporates deep learning and logical reasoning. In particular, LTN allows defining a logical knowledge base and using it as the objective of a neural model. This makes learning by logical reasoning possible as the parameters of the model are optimized by minimizing a loss function composed of a set of logical formulas expressing facts about the learning task. The framework learns via gradient-descent optimization. Fuzzy logic, a relaxation of classical logic permitting continuous truth values in the interval [0,1], makes this learning possible. Specifically, the training of an LTN consists of three steps. Firstly, (1) the training data is used to ground the formulas. Then, (2) the formulas are evaluated, and the loss function is computed. Lastly, (3) the gradients are back-propagated through the logical computational graph, and the weights of the neural model are changed so the knowledge base is maximally satisfied. LTNtorch is the fully documented and tested PyTorch implementation of Logic Tensor Networks. This paper presents the formalization of LTN and how LTNtorch implements it. Moreover, it provides a basic binary classification example.
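
The three training steps in the abstract can be illustrated with a minimal sketch in plain Python. This is not the LTNtorch API: every function name below is hypothetical, the predicate is a one-dimensional logistic unit, the universal quantifier uses a p-mean-error aggregator with p = 2, the two formulas are combined by a simple mean, and central finite differences stand in for back-propagation so the example runs without PyTorch.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predicate(params, x):
    # Hypothetical learnable predicate A(x) with truth value in [0, 1].
    w, b = params
    return sigmoid(w * x + b)

def fuzzy_not(a):
    # Standard fuzzy negation.
    return 1.0 - a

def forall(truths, p=2):
    # p-mean-error aggregator: a smooth relaxation of "for all".
    mean_error = sum((1.0 - t) ** p for t in truths) / len(truths)
    return 1.0 - mean_error ** (1.0 / p)

def satisfaction(params, positives, negatives):
    # Steps 1 and 2: ground the formulas on data, then evaluate them.
    # Knowledge base: forall x_pos A(x_pos), forall x_neg not A(x_neg).
    phi_pos = forall([predicate(params, x) for x in positives])
    phi_neg = forall([fuzzy_not(predicate(params, x)) for x in negatives])
    return (phi_pos + phi_neg) / 2.0  # assumption: plain mean over formulas

def loss(params, positives, negatives):
    return 1.0 - satisfaction(params, positives, negatives)

def numeric_grad(f, params, eps=1e-6):
    # Central finite differences, used here in place of autograd.
    grads = []
    for i in range(len(params)):
        up = list(params)
        down = list(params)
        up[i] += eps
        down[i] -= eps
        grads.append((f(up) - f(down)) / (2 * eps))
    return grads

def train(positives, negatives, steps=500, lr=1.0):
    # Step 3: update the parameters so the knowledge base is better satisfied.
    params = [0.0, 0.0]
    for _ in range(steps):
        grads = numeric_grad(lambda p: loss(p, positives, negatives), params)
        params = [p - lr * g for p, g in zip(params, grads)]
    return params

positives = [1.0, 2.0, 3.0]
negatives = [-1.0, -2.0, -3.0]
initial_sat = satisfaction([0.0, 0.0], positives, negatives)
params = train(positives, negatives)
final_sat = satisfaction(params, positives, negatives)
```

With untrained parameters every truth value is 0.5, so the knowledge base starts at a satisfaction of 0.5; gradient descent on `1 - satisfaction` then pushes it toward 1. In a PyTorch setting the finite-difference step would be replaced by automatic differentiation.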

A Benchmark Suite for Systematically Evaluating Reasoning Shortcuts

Jun 14, 2024

Abstract: The advent of powerful neural classifiers has increased interest in problems that require both learning and reasoning. These problems are critical for understanding important properties of models, such as trustworthiness, generalization, interpretability, and compliance with safety and structural constraints. However, recent research observed that tasks requiring both learning and reasoning on background knowledge often suffer from reasoning shortcuts (RSs): predictors can solve the downstream reasoning task without associating the correct concepts to the high-dimensional data. To address this issue, we introduce rsbench, a comprehensive benchmark suite designed to systematically evaluate the impact of RSs on models by providing easy access to highly customizable tasks affected by RSs. Furthermore, rsbench implements common metrics for evaluating concept quality and introduces novel formal verification procedures for assessing the presence of RSs in learning tasks. Using rsbench, we highlight that obtaining high-quality concepts in both purely neural and neuro-symbolic models is a far-from-solved problem. rsbench is available at: https://unitn-sml.github.io/rsbench.

Novel Applications for VAE-based Anomaly Detection Systems

Apr 26, 2022

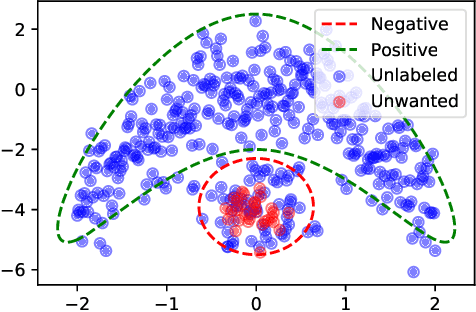

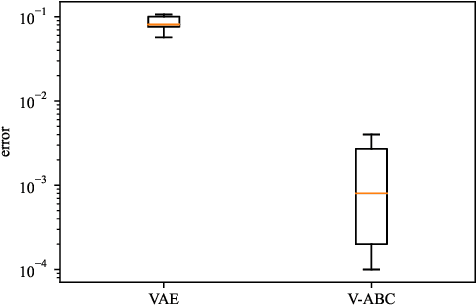

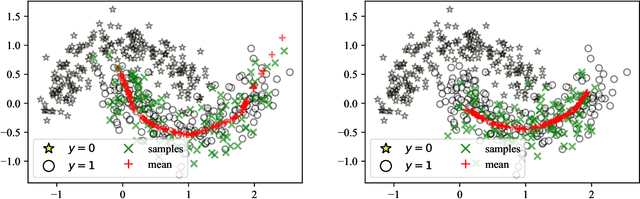

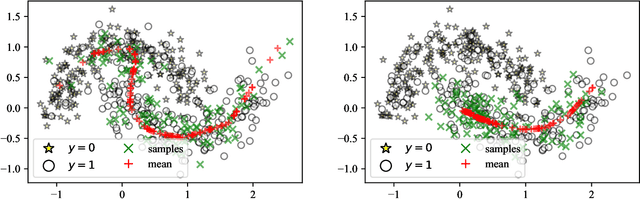

Abstract: The recent rise in deep learning technologies fueled innovation and boosted scientific research. Their achievements enabled new research directions for deep generative modeling (DGM), an increasingly popular approach that can create novel and unseen data, starting from a given data set. As the technology shows promising applications, many ethical issues also arise. For example, its misuse can enable disinformation campaigns and powerful phishing attempts. Research also indicates that different biases affect deep learning models, leading to social issues such as misrepresentation. In this work, we formulate a novel setting to deal with similar problems, showing that a repurposed anomaly detection system effectively generates novel data while avoiding specified unwanted data. We propose Variational Auto-encoding Binary Classifiers (V-ABC): a novel model that repurposes and extends the Auto-encoding Binary Classifier (ABC) anomaly detector, using the Variational Auto-encoder (VAE). We survey the limitations of existing approaches and explore many tools to show the model's inner workings in an interpretable way. This proposal has excellent potential for generative applications: models that rely on user-generated data could automatically filter out unwanted content, such as offensive language, obscene images, and misleading information.

Bayes Point Rule Set Learning

Apr 11, 2022

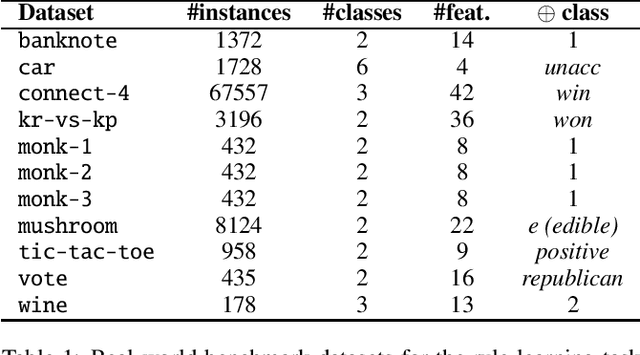

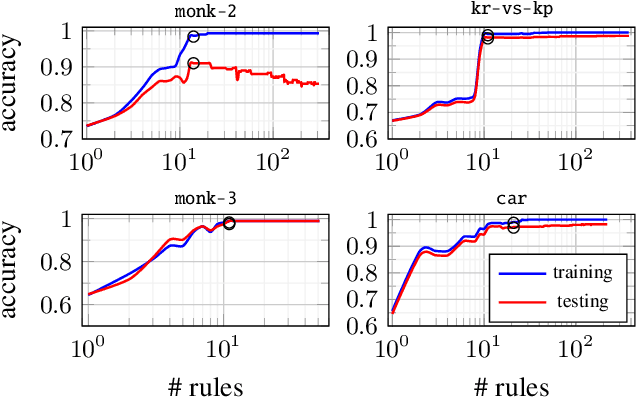

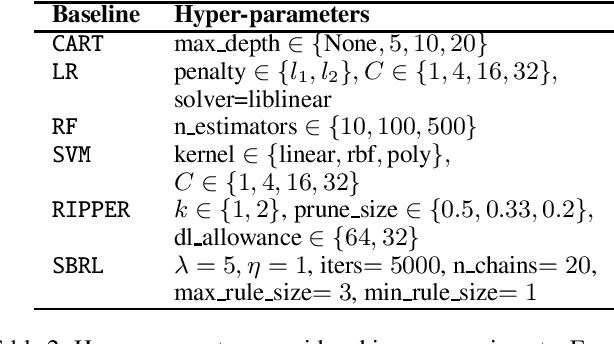

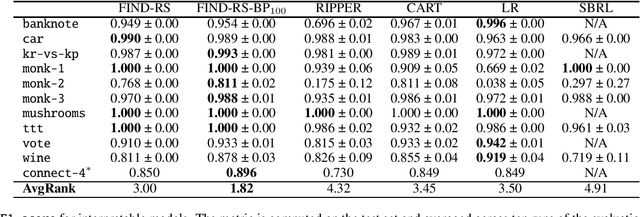

Abstract: Interpretability plays an increasingly important role in the design of machine learning algorithms. However, interpretable methods tend to be less accurate than their black-box counterparts. Among others, DNFs (Disjunctive Normal Forms) are arguably the most interpretable way to express a set of rules. In this paper, we propose an effective bottom-up extension of the popular FIND-S algorithm to learn DNF-type rulesets. The algorithm greedily finds a partition of the positive examples. The produced DNF is a set of conjunctive rules, each corresponding to the most specific rule consistent with a part of the positive examples and all negative examples. We also propose two principled extensions of this method, approximating the Bayes Optimal Classifier by aggregating DNF decision rules. Finally, we provide a methodology to significantly improve the explainability of the learned rules while retaining their generalization capabilities. An extensive comparison with state-of-the-art symbolic and statistical methods on several benchmark data sets shows that our proposal provides an excellent balance between explainability and accuracy.
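
To make the idea of a bottom-up, FIND-S-style DNF learner concrete, here is a small sketch under stated assumptions. It is not the paper's algorithm: the function names, the dictionary encoding of examples, and the first-fit greedy assignment of positives to rules are all illustrative choices. Each rule starts as the most specific conjunction (a single positive example) and is generalized only while it still excludes every negative example.

```python
def generalize(rule, example):
    # FIND-S step: keep only the attribute constraints the example also satisfies.
    return {attr: val for attr, val in rule.items() if example.get(attr) == val}

def covers(rule, example):
    # A conjunctive rule covers an example when every constraint matches.
    return all(example.get(attr) == val for attr, val in rule.items())

def learn_dnf(positives, negatives):
    # Greedy first-fit partition of the positives (an assumption of this sketch):
    # merge each positive into the first rule whose generalization
    # still rejects all negatives, otherwise open a new rule.
    rules = []
    for pos in positives:
        for i, rule in enumerate(rules):
            candidate = generalize(rule, pos)
            if not any(covers(candidate, neg) for neg in negatives):
                rules[i] = candidate
                break
        else:
            rules.append(dict(pos))
    return rules

def predict(rules, example):
    # A DNF is true when at least one of its conjunctions is true.
    return any(covers(rule, example) for rule in rules)

# Toy data, invented for illustration only.
positives = [
    {"sky": "sunny", "wind": "strong", "temp": "warm"},
    {"sky": "sunny", "wind": "weak", "temp": "warm"},
    {"sky": "rainy", "wind": "strong", "temp": "cold"},
]
negatives = [
    {"sky": "rainy", "wind": "weak", "temp": "warm"},
    {"sky": "sunny", "wind": "weak", "temp": "cold"},
]
rules = learn_dnf(positives, negatives)
```

On this toy data the first two positives merge into the rule `sky = sunny AND temp = warm`, while the third cannot be merged without covering a negative and therefore becomes its own conjunction.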

Conditioned Variational Autoencoder for top-N item recommendation

May 04, 2020

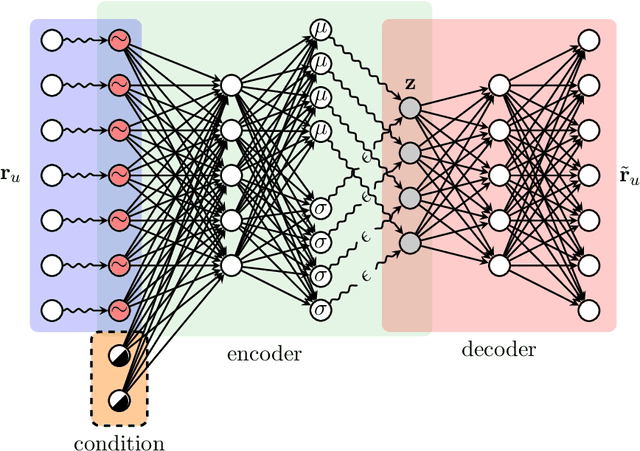

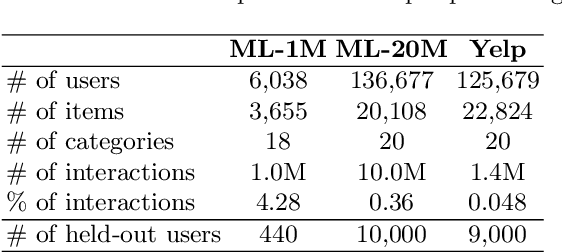

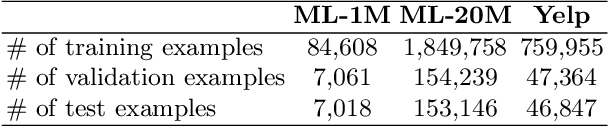

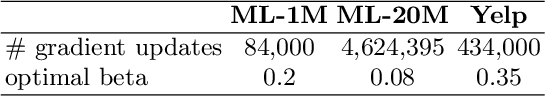

Abstract: In this paper, we propose a Conditioned Variational Autoencoder (C-VAE) for constrained top-N item recommendation where the recommended items must satisfy a given condition. The proposed model architecture is similar to a standard VAE in which the condition vector is fed into the encoder. The constrained ranking is learned during training thanks to a new reconstruction loss that takes the input condition into account. We show that our model generalizes the state-of-the-art Mult-VAE collaborative filtering model. Moreover, we provide insights on what C-VAE learns in the latent space, providing a human-friendly interpretation. Experimental results underline the potential of C-VAE in providing accurate recommendations under constraints. Finally, the performed analyses suggest that C-VAE can be used in other recommendation scenarios, such as context-aware recommendation.
