Théo Ryffel

Differential Privacy Guarantees for Stochastic Gradient Langevin Dynamics

Feb 05, 2022

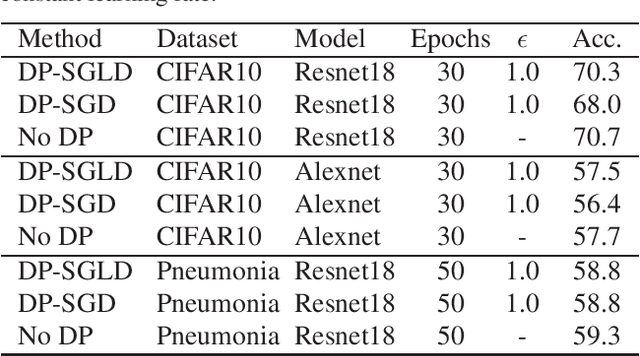

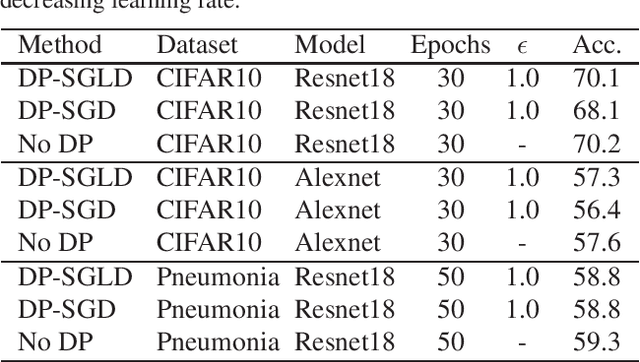

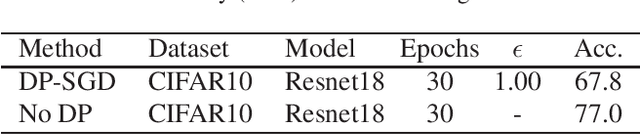

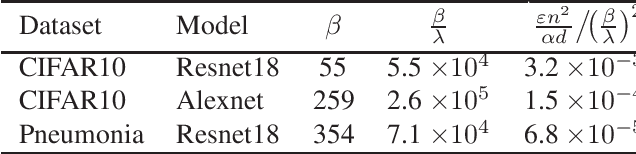

Abstract: We analyse the privacy leakage of noisy stochastic gradient descent by modeling Rényi divergence dynamics with Langevin diffusions. Inspired by recent work on non-stochastic algorithms, we derive similar desirable properties in the stochastic setting. In particular, we prove that the privacy loss converges exponentially fast for smooth and strongly convex objectives under constant step size, which is a significant improvement over previous DP-SGD analyses. We also extend our analysis to arbitrary sequences of varying step sizes and derive new utility bounds. Finally, we propose an implementation, and our experiments show the practical utility of our approach compared to classical DP-SGD libraries.
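To make the setting concrete, here is a minimal sketch of noisy mini-batch SGD with a constant step size on a smooth, strongly convex objective (1-D least squares with an L2 penalty). It is not the paper's implementation: the function names, the clipping threshold `clip`, the noise scale `sigma`, and the toy data are all illustrative assumptions, and the sketch does no privacy accounting.

```python
import random


def make_data(n=200, seed=1):
    """Toy 1-D regression data: y = 2x + small Gaussian noise (illustrative only)."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        data.append((x, 2.0 * x + rng.gauss(0.0, 0.1)))
    return data


def noisy_sgd(data, steps=200, step_size=0.1, clip=1.0, sigma=0.5,
              l2=0.1, batch_size=8, seed=0):
    """Noisy mini-batch SGD with a constant step size.

    Objective: average of 0.5 * (w*x - y)^2 plus 0.5 * l2 * w^2,
    which is smooth and strongly convex in w.
    """
    rng = random.Random(seed)
    w = 0.0
    for _ in range(steps):
        batch = rng.sample(data, batch_size)
        grad_sum = 0.0
        for x, y in batch:
            g = (w * x - y) * x               # per-example gradient
            g = max(-clip, min(clip, g))      # clip to bound each example's influence
            grad_sum += g
        noise = rng.gauss(0.0, sigma * clip)  # Gaussian noise scaled to the clip bound
        grad = (grad_sum + noise) / batch_size + l2 * w
        w -= step_size * grad                 # constant step size
    return w


if __name__ == "__main__":
    print(noisy_sgd(make_data()))
```

With a fixed seed the run is reproducible. Despite the injected noise, the iterate settles near the regularised least-squares solution, slightly below the true slope of 2 because of the L2 penalty.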

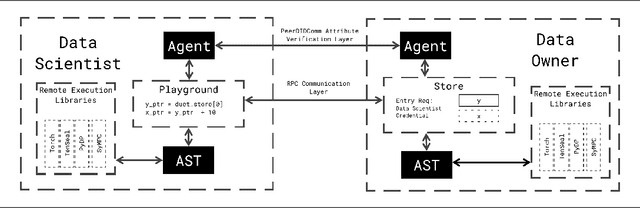

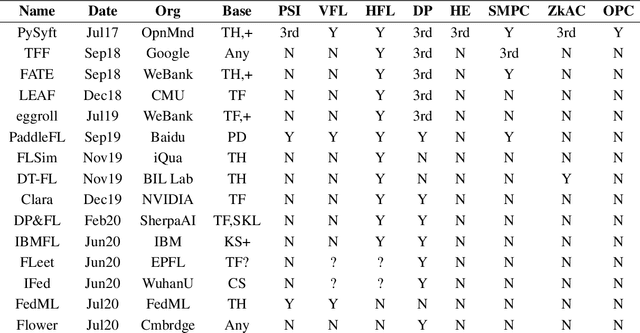

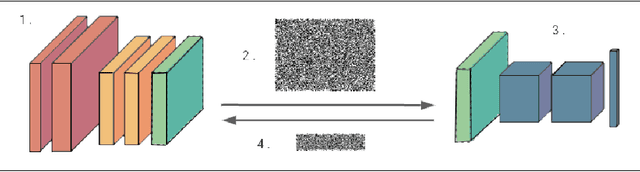

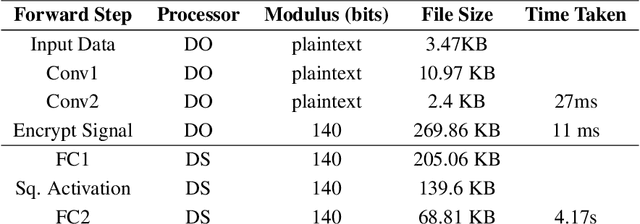

Syft 0.5: A Platform for Universally Deployable Structured Transparency

Apr 27, 2021

Abstract: We present Syft 0.5, a general-purpose framework that combines a core group of privacy-enhancing technologies that facilitate a universal set of structured transparency systems. This framework is demonstrated through the design and implementation of a novel privacy-preserving inference information flow where we pass homomorphically encrypted activation signals through a split neural network for inference. We show that splitting the model further up the computation chain significantly reduces the computation time of inference and the payload size of activation signals at the cost of model secrecy. We evaluate our proposed flow with respect to its provision of the core structural transparency principles.

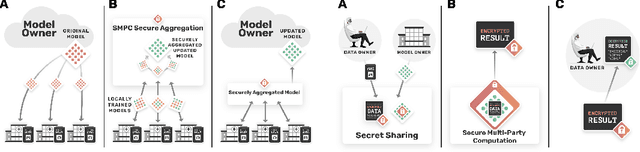

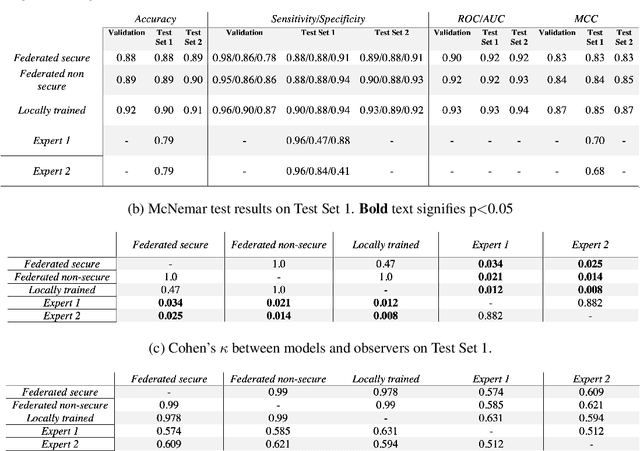

Privacy-preserving medical image analysis

Dec 10, 2020

Abstract: The utilisation of artificial intelligence in medicine and healthcare has led to successful clinical applications in several domains. The conflict between data usage and privacy protection requirements in such systems must be resolved for optimal results as well as ethical and legal compliance. This calls for innovative solutions such as privacy-preserving machine learning (PPML). We present PriMIA (Privacy-preserving Medical Image Analysis), a software framework designed for PPML in medical imaging. In a real-life case study we demonstrate significantly better classification performance of a securely aggregated federated learning model compared to human experts on unseen datasets. Furthermore, we show an inference-as-a-service scenario for end-to-end encrypted diagnosis, where neither the data nor the model is revealed. Lastly, we empirically evaluate the framework's security against a gradient-based model inversion attack and demonstrate that no usable information can be recovered from the model.

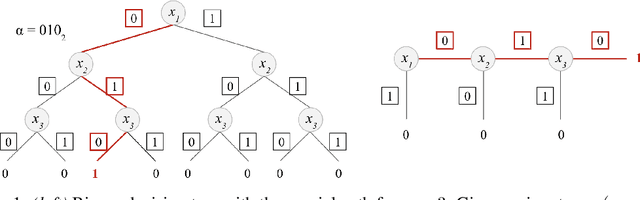

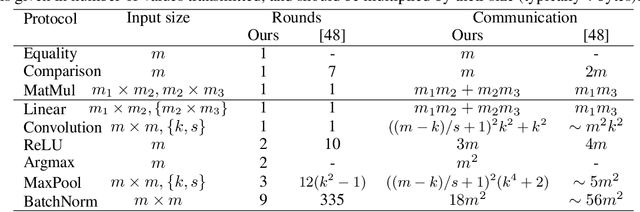

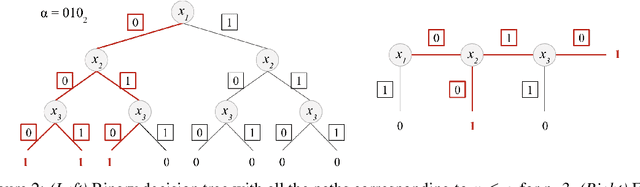

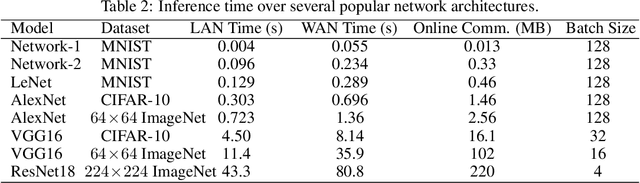

ARIANN: Low-Interaction Privacy-Preserving Deep Learning via Function Secret Sharing

Jun 08, 2020

Abstract: We propose ARIANN, a low-interaction framework to perform private training and inference of standard deep neural networks on sensitive data. This framework implements semi-honest 2-party computation and leverages function secret sharing, a recent cryptographic protocol that uses only lightweight primitives to achieve an efficient online phase with a single message of the size of the inputs, for operations like comparison and multiplication, which are building blocks of neural networks. Built on top of PyTorch, it offers a wide range of functions including ReLU, MaxPool and BatchNorm, and supports models such as AlexNet and ResNet18. We report experimental results for inference and training over distant servers. Lastly, we propose an extension to support n-party private federated learning.
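As background for the 2-party setting, here is a minimal sketch of plain additive secret sharing over integers modulo 2^32. This is a simpler and more common building block, not the function secret sharing protocol the abstract describes, and nothing here is taken from ARIANN's code: the modulus and all function names are illustrative assumptions.

```python
import secrets

MODULUS = 2 ** 32  # illustrative ring size, an assumption for this sketch


def share(value):
    """Split an integer into two additive shares; each share alone looks uniformly random."""
    s0 = secrets.randbelow(MODULUS)
    s1 = (value - s0) % MODULUS
    return s0, s1


def reconstruct(s0, s1):
    """Recombine the two shares into the original value modulo MODULUS."""
    return (s0 + s1) % MODULUS


def add_shares(a, b):
    """Each party adds its own shares locally; no communication is needed."""
    return (a[0] + b[0]) % MODULUS, (a[1] + b[1]) % MODULUS


def scale_shares(a, k):
    """Multiply a shared value by a public constant k, again locally."""
    return (a[0] * k) % MODULUS, (a[1] * k) % MODULUS


if __name__ == "__main__":
    x, y = share(12), share(30)
    print(reconstruct(*add_shares(x, y)))   # 42
    print(reconstruct(*scale_shares(x, 3)))  # 36
```

Linear operations such as these need no interaction between the parties. Non-linear operations like comparison (used in ReLU and MaxPool) require an additional protocol, and that is the part the abstract addresses with function secret sharing.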
