Sumudu Samarakoon

6G Resilience -- White Paper

Sep 10, 2025

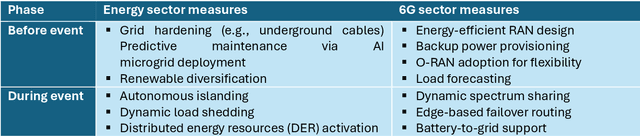

Abstract: 6G must be designed to withstand, adapt to, and evolve amid prolonged, complex disruptions. Mobile networks' shift from efficiency-first to sustainability-aware has motivated this white paper to assert that resilience is a primary design goal, alongside sustainability and efficiency, encompassing technology, architecture, and economics. We promote resilience by analysing dependencies between mobile networks and other critical systems, such as energy, transport, and emergency services, and illustrate how cascading failures spread through infrastructures. We formalise resilience using the 3R framework: reliability, robustness, resilience. Subsequently, we translate this into measurable capabilities: graceful degradation, situational awareness, rapid reconfiguration, and learning-driven improvement and recovery. Architecturally, we promote edge-native and locality-aware designs, open interfaces, and programmability to enable islanded operations, fallback modes, and multi-layer diversity (radio, compute, energy, timing). Key enablers include AI-native control loops with verifiable behaviour, zero-trust security rooted in hardware and supply-chain integrity, and networking techniques that prioritise critical traffic, time-sensitive flows, and inter-domain coordination. Resilience also has a techno-economic aspect: open platforms and high-quality complementors generate ecosystem externalities that enhance resilience while opening new markets. We identify nine business-model groups and several patterns aligned with the 3R objectives, and we outline governance and standardisation. This white paper serves as an initial step and catalyst for 6G resilience. It aims to inspire researchers, professionals, government officials, and the public, providing them with the essential components to understand and shape the development of 6G resilience.

Cross-Domain Lifelong Reinforcement Learning for Wireless Sensor Networks

Aug 25, 2025

Abstract: Wireless sensor networks (WSNs) with energy harvesting (EH) are expected to play a vital role in intelligent 6G systems, especially in industrial sensing and control, where continuous operation and sustainable energy use are critical. Given limited energy resources, WSNs must operate efficiently to ensure long-term performance. Their deployment, however, is challenged by dynamic environments where EH conditions, network scale, and traffic rates change over time. In this work, we address system dynamics that yield different learning tasks, where decision variables remain fixed but strategies vary, as well as learning domains, where both decision space and strategies evolve. To handle such scenarios, we propose a cross-domain lifelong reinforcement learning (CD-L2RL) framework for energy-efficient WSN design. Our CD-L2RL algorithm leverages prior experience to accelerate adaptation across tasks and domains. Unlike conventional approaches based on Markov decision processes or Lyapunov optimization, which assume relatively stable environments, our solution achieves rapid policy adaptation by reusing knowledge from past tasks and domains to ensure continuous operations. We validate the approach through extensive simulations under diverse conditions. Results show that our method improves adaptation speed by up to 35% over standard reinforcement learning and up to 70% over Lyapunov-based optimization, while also increasing total harvested energy. These findings highlight the strong potential of CD-L2RL for deployment in dynamic 6G WSNs.

Zero-Shot Generalization for Blockage Localization in mmWave Communication

Dec 18, 2024

Abstract: This paper introduces a novel method for predicting blockages in millimeter-wave (mmWave) communication systems, with the aim of enabling reliable connectivity. It employs a self-supervised learning approach to label radio frequency (RF) data with the locations of blockage-causing objects extracted from light detection and ranging (LiDAR) data. The labeled data is then used to train a deep learning model that predicts an object's location using only RF data. The predicted location is in turn used to predict blockages, enabling adaptability without retraining when transmitter-receiver positions change. Evaluations demonstrate up to 74% accuracy in predicting blockage locations in dynamic environments, showcasing the robustness of the proposed solution.

Real-Time Remote Control via VR over Limited Wireless Connectivity

Jun 25, 2024

Abstract: This work introduces a solution to enhance human-robot interaction over limited wireless connectivity. The goal is to enable remote control of a robot through a virtual reality (VR) interface, ensuring a smooth transition to autonomous mode in the event of connectivity loss. The VR interface provides access to a dynamic 3D virtual map that undergoes continuous updates using real-time sensor data collected and transmitted by the robot. Furthermore, the robot monitors wireless connectivity and automatically switches to an autonomous mode in scenarios with limited connectivity. By integrating four key functionalities (real-time mapping, remote control through VR glasses, continuous monitoring of wireless connectivity, and autonomous navigation during limited connectivity), we achieve seamless end-to-end operation.

Maze Discovery using Multiple Robots via Federated Learning

Jun 25, 2024

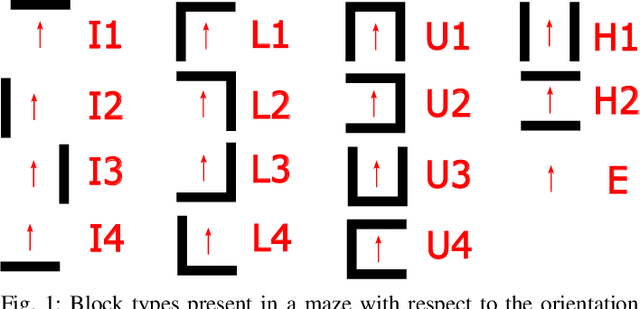

Abstract: This work presents a use case of federated learning (FL) applied to discovering a maze with robots equipped with LiDAR sensors. The goal is to train classification models that accurately identify the shapes of grid areas within two different square mazes made up of irregularly shaped walls. Because the walls have different shapes, a classification model trained in one maze captures that maze's structure but does not generalize to the other. This issue is resolved by adopting an FL framework among robots that each explore only one maze, so that the collective knowledge allows them to operate accurately in the unseen maze. This illustrates the effectiveness of FL in real-world applications in terms of enhancing classification accuracy and robustness in maze discovery tasks.
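The abstract does not say which FL aggregation rule the robots use. As a rough illustration only, the sketch below assumes a FedAvg-style weighted average of model parameters, where each robot's contribution is weighted by its number of local samples. The function name `federated_average`, the flat parameter lists, and the sample counts are all hypothetical.

```python
def federated_average(client_params, client_sizes):
    """Weighted average of per-client parameter vectors (FedAvg-style).

    client_params: list of equally long lists of floats, one per client.
    client_sizes:  number of local training samples held by each client.
    """
    total = float(sum(client_sizes))
    n_params = len(client_params[0])
    return [
        sum(params[i] * size for params, size in zip(client_params, client_sizes)) / total
        for i in range(n_params)
    ]


# Hypothetical example: two robots, each trained in a different maze.
robot_a = [1.0, 1.0, 0.0]   # parameters learned in maze A (made-up values)
robot_b = [3.0, 3.0, 2.0]   # parameters learned in maze B (made-up values)
global_params = federated_average([robot_a, robot_b], client_sizes=[100, 300])
print(global_params)
```

In a full FL round, the averaged parameters would be sent back to every robot as the starting point for the next round of local training.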

Decentralized RL-Based Data Transmission Scheme for Energy Efficient Harvesting

Jun 24, 2024

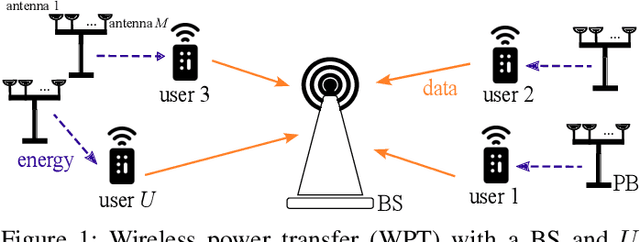

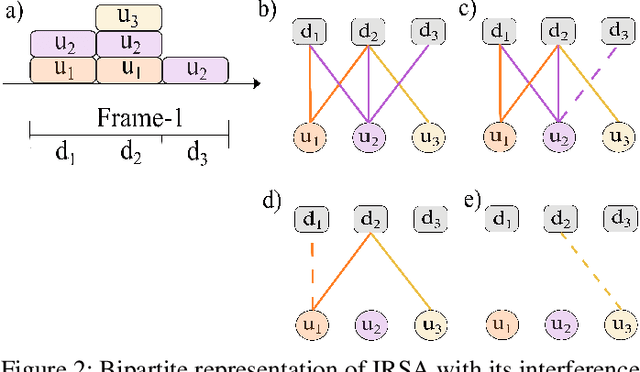

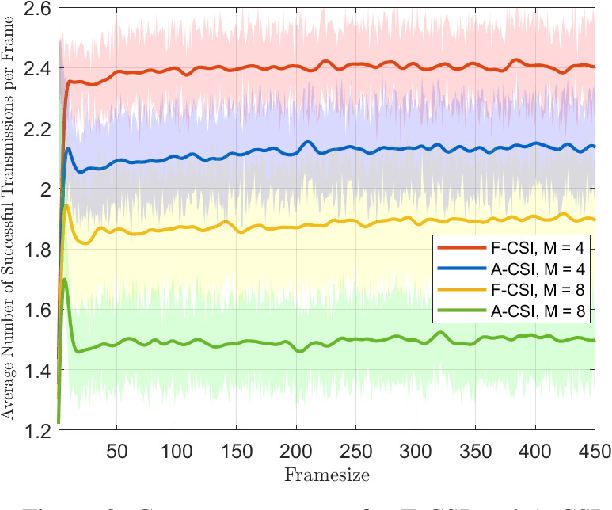

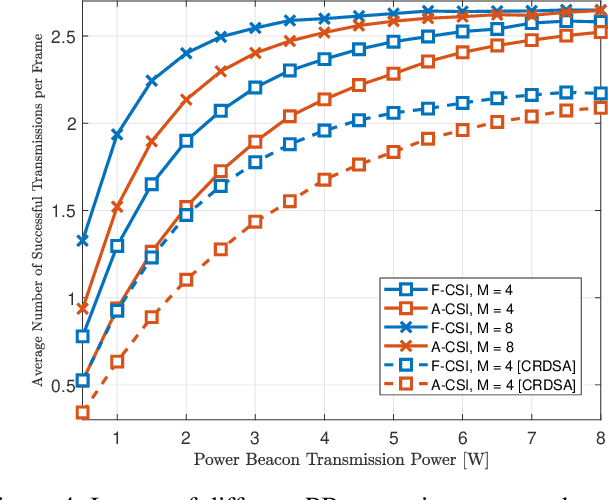

Abstract: The evolving landscape of the Internet of Things (IoT) has given rise to a pressing need for an efficient communication scheme. As the IoT user ecosystem continues to expand, traditional communication protocols struggle to meet its growing demands, including energy consumption, scalability, data management, and interference. In response, the integration of wireless power transfer and data transmission has emerged as a promising solution. This paper considers an energy harvesting (EH)-oriented data transmission scheme, where a set of users are charged by their own multi-antenna power beacon (PB) and subsequently transmit data to a base station (BS) using an irregular slotted ALOHA (IRSA) channel access protocol. We propose a closed-form expression to model energy consumption for the present scheme, employing average channel state information (A-CSI) beamforming in the wireless power channel. Subsequently, we employ reinforcement learning (RL), wherein every user acts as an agent that seeks its most effective strategy for replicating transmissions. This strategy is devised while factoring in the user's energy constraints and the maximum number of packets it needs to transmit. Our results underscore the viability of this solution, particularly when the PB can be strategically positioned to ensure a strong line-of-sight connection with the user, highlighting the potential benefits of optimal deployment.
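To make the role of replicated transmissions in IRSA concrete, here is a minimal sketch of one frame under textbook assumptions that the abstract does not state: a collision channel, and a receiver that performs ideal successive interference cancellation (SIC). The helper names `choose_slots` and `irsa_decode` are hypothetical. In the paper's setting the number of replicas per user would be chosen by the RL agent under its energy budget; here it is simply an input.

```python
import random


def choose_slots(n_replicas, n_slots, rng):
    """Pick distinct slots in the frame for a user's packet replicas."""
    return set(rng.sample(range(n_slots), n_replicas))


def irsa_decode(slot_choices, n_slots):
    """Idealized SIC decoder for one IRSA frame.

    slot_choices maps each user to the set of slots carrying its replicas.
    A slot holding exactly one undecoded packet is decodable; once a user is
    decoded, all of its replicas are cancelled from the other slots.
    """
    slots = [set() for _ in range(n_slots)]
    for user, chosen in slot_choices.items():
        for s in chosen:
            slots[s].add(user)

    decoded = set()
    progress = True
    while progress:
        progress = False
        for s in range(n_slots):
            if len(slots[s]) == 1:
                user = next(iter(slots[s]))
                decoded.add(user)
                for t in slot_choices[user]:
                    slots[t].discard(user)  # cancel every replica of this user
                progress = True
    return decoded


# Hand-built frame: user 0 is alone in slot 0, which unlocks users 1 and 2.
frame = {0: {0, 1}, 1: {1, 2}, 2: {2}}
print(irsa_decode(frame, n_slots=3))

# Random frame with a hypothetical replica count of 2 per user.
rng = random.Random(0)
random_frame = {u: choose_slots(2, 6, rng) for u in range(4)}
print(irsa_decode(random_frame, n_slots=6))
```

More replicas raise the chance that some copy lands in a collision-free slot but cost more transmit energy, which is the trade-off each agent has to learn.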

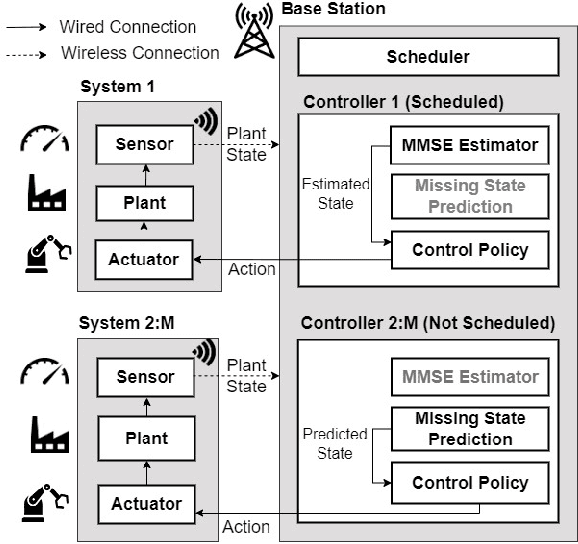

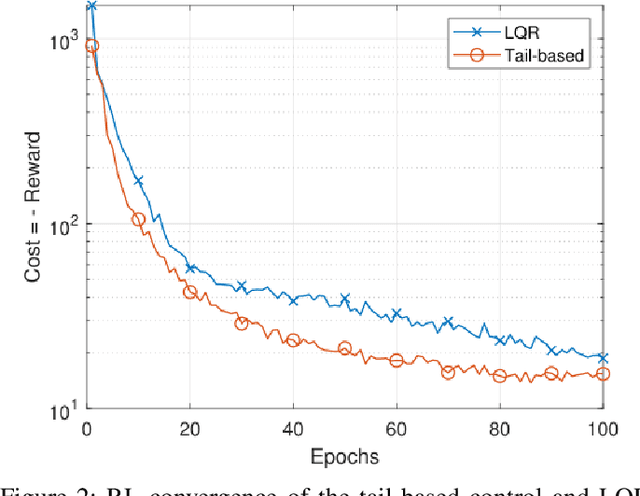

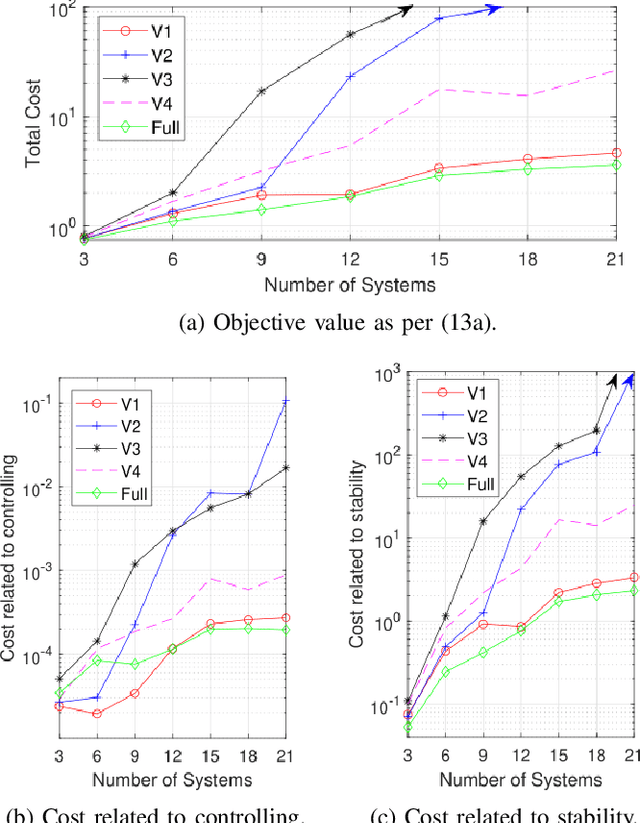

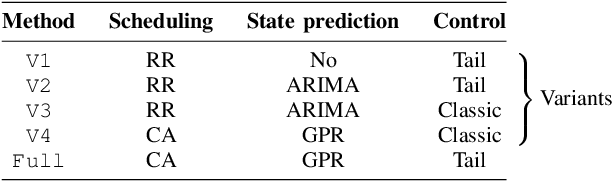

Resource Optimization for Tail-Based Control in Wireless Networked Control Systems

Jun 20, 2024

Abstract: Achieving control stability is one of the key design challenges of scalable Wireless Networked Control Systems (WNCS) under limited communication and computing resources. This paper explores the use of an alternative control concept defined as tail-based control, which extends the classical Linear Quadratic Regulator (LQR) cost function for multiple dynamic control systems over a shared wireless network. We cast the control of multiple control systems as a network-wide optimization problem and decouple it in terms of sensor scheduling, plant state prediction, and control policies. Toward this, we propose a solution consisting of a scheduling algorithm based on Lyapunov optimization for sensing, a mechanism based on Gaussian Process Regression (GPR) for state prediction and uncertainty estimation, and a control policy based on Reinforcement Learning (RL) to ensure tail-based control stability. A set of discrete time-invariant mountain car control systems is used to evaluate the proposed solution, which is compared against four variants that use state-of-the-art scheduling, prediction, and control methods. The experimental results indicate that the proposed method yields a 22% reduction in overall cost in terms of communication and control resource utilization compared to state-of-the-art methods.
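For reference, the classical discrete-time LQR cost that the abstract says is being extended is usually written as below. The symbols are standard textbook notation rather than the paper's own: $x_t$ is the plant state, $u_t$ the control input, $Q \succeq 0$ the state-cost matrix, and $R \succ 0$ the input-cost matrix. The abstract does not give the tail-based extension itself, so it is not reproduced here.

$$
J = \mathbb{E}\left[\sum_{t=0}^{\infty} \left( x_t^{\top} Q\, x_t + u_t^{\top} R\, u_t \right)\right]
$$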

Cooperative Multi-Agent Learning for Navigation via Structured State Abstraction

Jun 20, 2023

Abstract: Cooperative multi-agent reinforcement learning (MARL) for navigation enables agents to cooperate to achieve their navigation goals. Using emergent communication, agents learn a communication protocol to coordinate and share the information needed to achieve their navigation tasks. In emergent communication, symbols with no pre-specified usage rules are exchanged, and their meaning and syntax emerge through training. Learning a navigation policy along with a communication protocol in a MARL environment is highly complex due to the huge state space to be explored. To cope with this complexity, this work proposes a novel neural network architecture for jointly learning an adaptive state space abstraction and a communication protocol among agents participating in navigation tasks. The goal is to come up with an adaptive abstractor that significantly reduces the size of the state space to be explored, without degradation in the policy performance. Simulation results show that the proposed method reaches a better policy, in terms of achievable rewards, in fewer training iterations than when raw states or a fixed state abstraction are used. Moreover, it is shown that a communication protocol emerges during training which enables the agents to learn better policies within fewer training iterations.

Federated Learning Games for Reconfigurable Intelligent Surfaces via Causal Representations

Jun 02, 2023

Abstract: In this paper, we investigate the problem of robust Reconfigurable Intelligent Surface (RIS) phase-shift configuration over heterogeneous communication environments. The problem is formulated as a distributed learning problem over different environments in a Federated Learning (FL) setting. Equivalently, this corresponds to a game played between multiple RISs, as learning agents, in heterogeneous environments. Using Invariant Risk Minimization (IRM) and its FL equivalent, dubbed FL Games, we solve the RIS configuration problem by learning invariant causal representations across multiple environments and then predicting the phases. The solution corresponds to playing according to Best Response Dynamics (BRD), which yields the Nash Equilibrium of the FL game. The representation learner and the phase predictor are modeled by two neural networks, and their performance is validated via simulations against other benchmarks from the literature. Our results show that causality-based learning yields a predictor that is 15% more accurate in unseen Out-of-Distribution (OoD) environments.

How Can Optical Communications Shape the Future of Deep Space Communications? A Survey

Dec 07, 2022

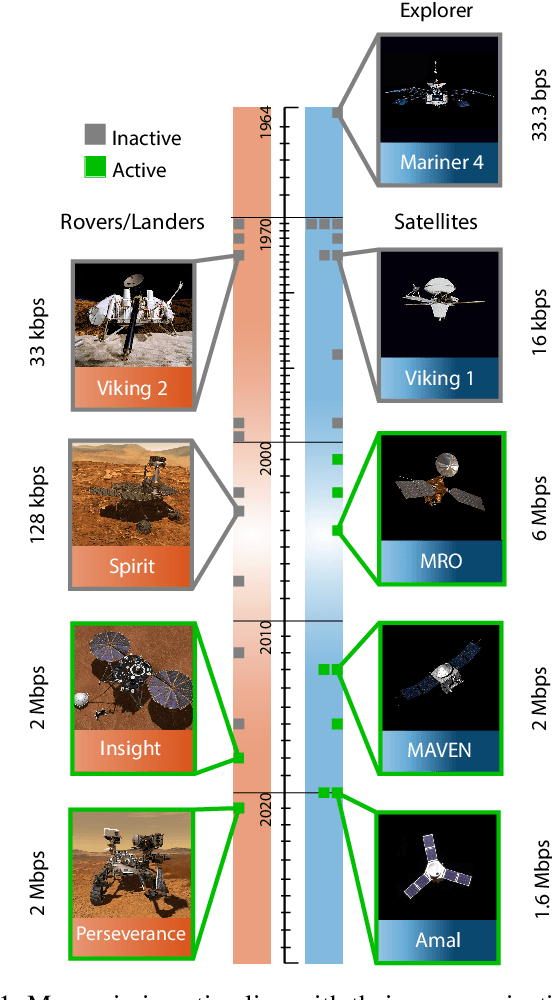

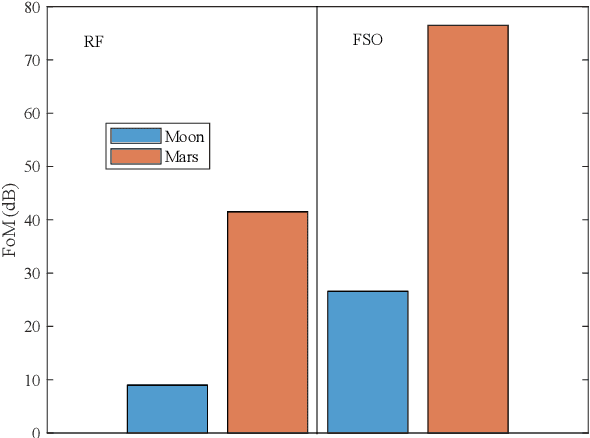

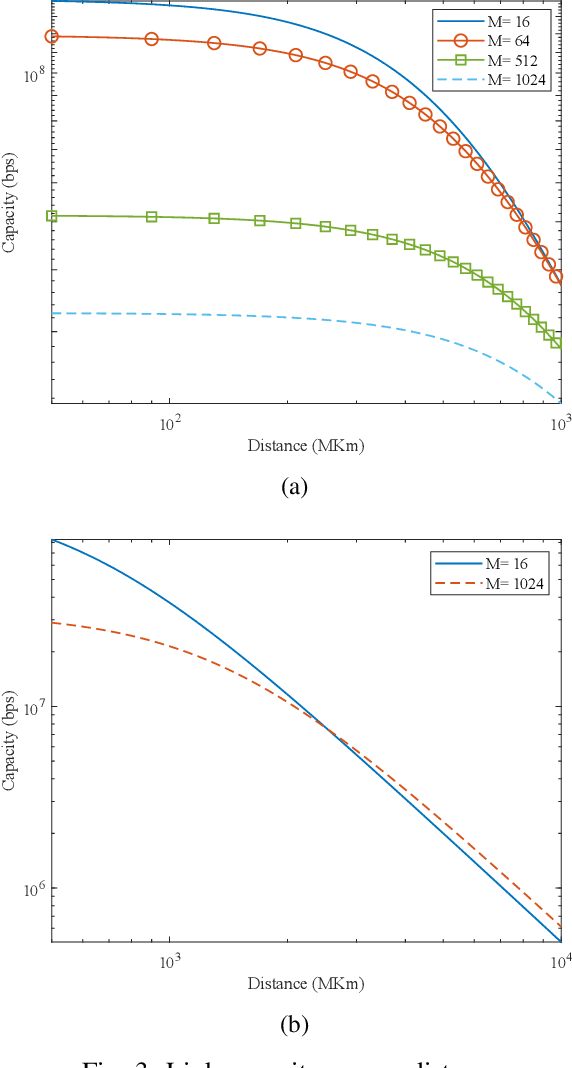

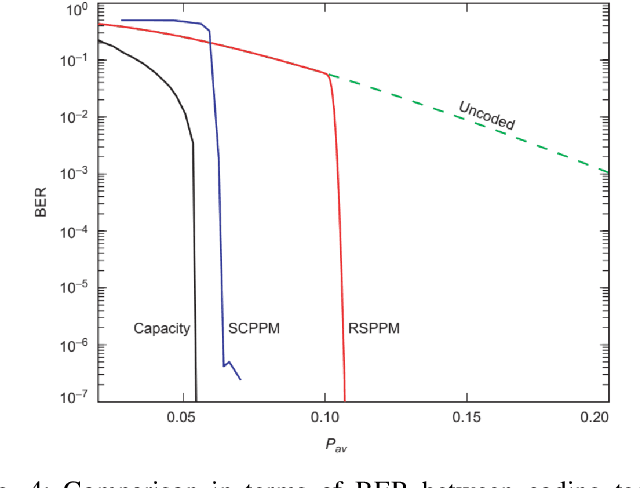

Abstract: With a large number of deep space (DS) missions anticipated by the end of this decade, reliable and high-capacity DS communications systems are needed more than ever. Nevertheless, existing DS communications technologies are far from meeting such a goal. Improving current DS communications systems requires not only system engineering leadership but also, very crucially, an investigation of potential emerging technologies that overcome the unique challenges of ultra-long DS communications links. To the best of our knowledge, there have not been any comprehensive surveys of DS communications technologies over the last decade. Free-space optical (FSO) technology is an emerging DS technology, proven to achieve lower communications systems size, weight and power (SWaP) and a very high capacity compared to its counterpart, radio frequency (RF) technology, the currently used DS technology. In this survey, we discuss the pros and cons of deep space optical communications (DSOC). Furthermore, we review the modulation, coding, detection, receiver, and protocol schemes and technologies for DSOC. We provide, for the very first time, thoughtful discussions about implementing orbital angular momentum (OAM) and quantum communications (QC) for DS. We elaborate on how these technologies, among other field advances including interplanetary networks and RF/FSO systems, improve reliability, capacity, and security, and we address related implementation challenges and potential solutions. This paper provides a holistic survey of DSOC technologies, gathering 200+ fragmented works from the literature and including novel perspectives, aiming to set the stage for more developments in the field.
