Stephane Lathuiliere

No Images, No Problem: Retaining Knowledge in Continual VQA with Questions-Only Memory

Feb 06, 2025Abstract:Continual Learning in Visual Question Answering (VQACL) requires models to learn new visual-linguistic tasks (plasticity) while retaining knowledge from previous tasks (stability). The multimodal nature of VQACL presents unique challenges, requiring models to balance stability across visual and textual domains while maintaining plasticity to adapt to novel objects and reasoning tasks. Existing methods, predominantly designed for unimodal tasks, often struggle to balance these demands effectively. In this work, we introduce QUestion-only replay with Attention Distillation (QUAD), a novel approach for VQACL that leverages only past task questions for regularisation, eliminating the need to store visual data and addressing both memory and privacy concerns. QUAD achieves stability by introducing a question-only replay mechanism that selectively uses questions from previous tasks to prevent overfitting to the current task's answer space, thereby mitigating the out-of-answer-set problem. Complementing this, we propose attention consistency distillation, which uniquely enforces both intra-modal and inter-modal attention consistency across tasks, preserving essential visual-linguistic associations. Extensive experiments on VQAv2 and NExT-QA demonstrate that QUAD significantly outperforms state-of-the-art methods, achieving robust performance in continual VQA.

TACO: Training-free Sound Prompted Segmentation via Deep Audio-visual CO-factorization

Dec 02, 2024

Abstract:Large-scale pre-trained audio and image models demonstrate an unprecedented degree of generalization, making them suitable for a wide range of applications. Here, we tackle the specific task of sound-prompted segmentation, aiming to segment image regions corresponding to objects heard in an audio signal. Most existing approaches tackle this problem by fine-tuning pre-trained models or by training additional modules specifically for the task. We adopt a different strategy: we introduce a training-free approach that leverages Non-negative Matrix Factorization (NMF) to co-factorize audio and visual features from pre-trained models to reveal shared interpretable concepts. These concepts are passed to an open-vocabulary segmentation model for precise segmentation maps. By using frozen pre-trained models, our method achieves high generalization and establishes state-of-the-art performance in unsupervised sound-prompted segmentation, significantly surpassing previous unsupervised methods.

Contrast, Stylize and Adapt: Unsupervised Contrastive Learning Framework for Domain Adaptive Semantic Segmentation

Jun 15, 2023

Abstract:To overcome the domain gap between synthetic and real-world datasets, unsupervised domain adaptation methods have been proposed for semantic segmentation. Majority of the previous approaches have attempted to reduce the gap either at the pixel or feature level, disregarding the fact that the two components interact positively. To address this, we present CONtrastive FEaTure and pIxel alignment (CONFETI) for bridging the domain gap at both the pixel and feature levels using a unique contrastive formulation. We introduce well-estimated prototypes by including category-wise cross-domain information to link the two alignments: the pixel-level alignment is achieved using the jointly trained style transfer module with the prototypical semantic consistency, while the feature-level alignment is enforced to cross-domain features with the \textbf{pixel-to-prototype contrast}. Our extensive experiments demonstrate that our method outperforms existing state-of-the-art methods using DeepLabV2. Our code is available at https://github.com/cxa9264/CONFETI

Deformable GANs for Pose-based Human Image Generation

Apr 06, 2018

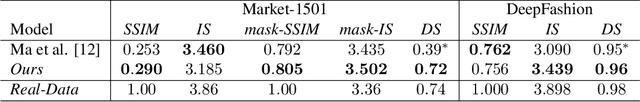

Abstract:In this paper we address the problem of generating person images conditioned on a given pose. Specifically, given an image of a person and a target pose, we synthesize a new image of that person in the novel pose. In order to deal with pixel-to-pixel misalignments caused by the pose differences, we introduce deformable skip connections in the generator of our Generative Adversarial Network. Moreover, a nearest-neighbour loss is proposed instead of the common L1 and L2 losses in order to match the details of the generated image with the target image. We test our approach using photos of persons in different poses and we compare our method with previous work in this area showing state-of-the-art results in two benchmarks. Our method can be applied to the wider field of deformable object generation, provided that the pose of the articulated object can be extracted using a keypoint detector.

Depression Severity Estimation from Multiple Modalities

Nov 10, 2017

Abstract:Depression is a major debilitating disorder which can affect people from all ages. With a continuous increase in the number of annual cases of depression, there is a need to develop automatic techniques for the detection of the presence and extent of depression. In this AVEC challenge we explore different modalities (speech, language and visual features extracted from face) to design and develop automatic methods for the detection of depression. In psychology literature, the PHQ-8 questionnaire is well established as a tool for measuring the severity of depression. In this paper we aim to automatically predict the PHQ-8 scores from features extracted from the different modalities. We show that visual features extracted from facial landmarks obtain the best performance in terms of estimating the PHQ-8 results with a mean absolute error (MAE) of 4.66 on the development set. Behavioral characteristics from speech provide an MAE of 4.73. Language features yield a slightly higher MAE of 5.17. When switching to the test set, our Turn Features derived from audio transcriptions achieve the best performance, scoring an MAE of 4.11 (corresponding to an RMSE of 4.94), which makes our system the winner of the AVEC 2017 depression sub-challenge.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge