Shuyi Wang

Kimi K2.5: Visual Agentic Intelligence

Feb 02, 2026

Abstract: We introduce Kimi K2.5, an open-source multimodal agentic model designed to advance general agentic intelligence. K2.5 emphasizes the joint optimization of text and vision so that the two modalities enhance each other, through techniques such as joint text-vision pre-training, zero-vision SFT, and joint text-vision reinforcement learning. Building on this multimodal foundation, K2.5 introduces Agent Swarm, a self-directed parallel agent orchestration framework that dynamically decomposes complex tasks into heterogeneous sub-problems and executes them concurrently. Extensive evaluations show that Kimi K2.5 achieves state-of-the-art results across domains including coding, vision, reasoning, and agentic tasks. Agent Swarm also reduces latency by up to 4.5× relative to single-agent baselines. We release the post-trained Kimi K2.5 model checkpoint to facilitate future research and real-world applications of agentic intelligence.

Kimi K2: Open Agentic Intelligence

Jul 28, 2025

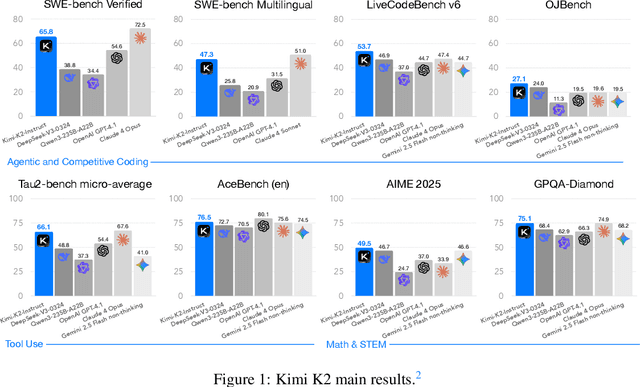

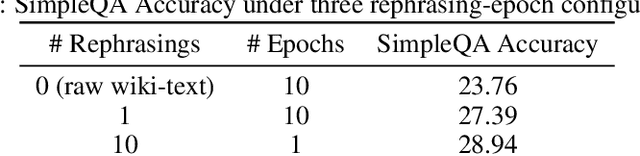

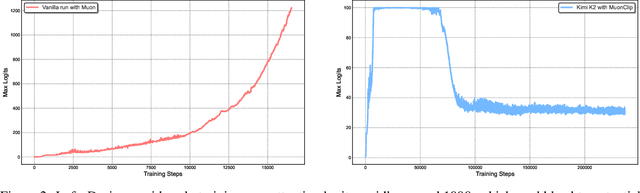

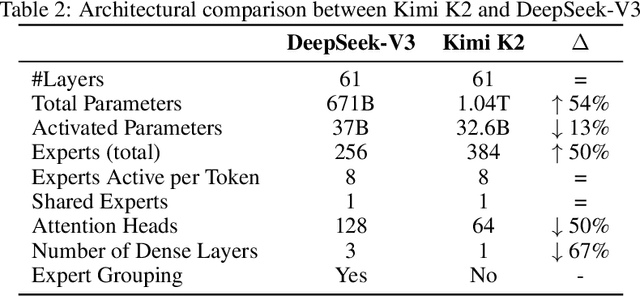

Abstract: We introduce Kimi K2, a Mixture-of-Experts (MoE) large language model with 32 billion activated parameters and 1 trillion total parameters. We propose the MuonClip optimizer, which improves upon Muon with a novel QK-clip technique that addresses training instability while retaining the strong token efficiency of Muon. Based on MuonClip, K2 was pre-trained on 15.5 trillion tokens with zero loss spikes. K2 then undergoes a multi-stage post-training process, highlighted by a large-scale agentic data synthesis pipeline and a joint reinforcement learning (RL) stage in which the model improves its capabilities through interactions with real and synthetic environments. Kimi K2 achieves state-of-the-art performance among open-source non-thinking models, with particular strengths in agentic capabilities. Notably, K2 obtains 66.1 on Tau2-Bench, 76.5 on ACEBench (En), 65.8 on SWE-Bench Verified, and 47.3 on SWE-Bench Multilingual, surpassing most open- and closed-source baselines in non-thinking settings. It also exhibits strong capabilities in coding, mathematics, and reasoning tasks, scoring 53.7 on LiveCodeBench v6, 49.5 on AIME 2025, 75.1 on GPQA-Diamond, and 27.1 on OJBench, all without extended thinking. These results position Kimi K2 as one of the most capable open-source large language models to date, particularly in software engineering and agentic tasks. We release our base and post-trained model checkpoints to facilitate future research and applications of agentic intelligence.
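The abstract does not spell out how QK-clip works. As a rough, hypothetical illustration of the general idea of capping attention logits, the sketch below assumes that after an optimizer step the largest pre-softmax attention logit of one head is measured on a batch of tokens, and that if it exceeds a positive threshold tau, the query and key projection matrices are each shrunk by sqrt(tau / S_max). The names qk_clip, max_attention_logit and tau, and the even split of the rescaling between W_q and W_k, are our assumptions rather than details given in the abstract; the Muon optimizer itself is not modeled.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def matvec(w: Sequence[Sequence[float]], x: Sequence[float]) -> List[float]:
    # Dense matrix-vector product: each row of w dotted with x.
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def max_attention_logit(w_q: Matrix, w_k: Matrix, xs: Sequence[Sequence[float]]) -> float:
    # Largest scaled dot-product logit q_i . k_j / sqrt(d) over all token pairs (i, j).
    qs = [matvec(w_q, x) for x in xs]
    ks = [matvec(w_k, x) for x in xs]
    d = len(qs[0])
    return max(sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for q in qs for k in ks)


def qk_clip(
    w_q: Matrix, w_k: Matrix, xs: Sequence[Sequence[float]], tau: float
) -> Tuple[Matrix, Matrix]:
    # Hypothetical post-update step for one attention head (illustrative only).
    if tau <= 0:
        raise ValueError("tau must be positive")
    s_max = max_attention_logit(w_q, w_k, xs)
    if s_max <= tau:
        return w_q, w_k  # already within the threshold: leave weights untouched
    # Every logit is bilinear in (W_q, W_k), so scaling both by gamma scales
    # every logit by gamma**2 = tau / s_max and the maximum lands exactly at tau.
    gamma = math.sqrt(tau / s_max)
    clipped_q = [[gamma * v for v in row] for row in w_q]
    clipped_k = [[gamma * v for v in row] for row in w_k]
    return clipped_q, clipped_k
```

For example, with W_q = 2I, W_k = 3I and tokens (1, 0), (0, 1), (1, 1), the largest logit is 12/√2 ≈ 8.49; calling qk_clip with tau = 5 rescales both matrices so that the new maximum is 5. Because the rescaling factor is positive, the token pair with the largest logit stays the same.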

Calibrating LLMs for Text-to-SQL Parsing by Leveraging Sub-clause Frequencies

May 27, 2025

Abstract: While large language models (LLMs) achieve strong performance on text-to-SQL parsing, they sometimes exhibit unexpected failures in which they are confidently incorrect. Building trustworthy text-to-SQL systems thus requires eliciting reliable uncertainty measures from the LLM. In this paper, we study the problem of providing a calibrated confidence score that conveys the likelihood of an output query being correct. Our work is the first to establish a benchmark for post-hoc calibration of LLM-based text-to-SQL parsing. In particular, we show that Platt scaling, a canonical calibration method, provides substantial improvements over directly using raw model output probabilities as confidence scores. Furthermore, we propose a text-to-SQL calibration method, named "sub-clause frequency" (SCF) scores, that leverages the structured nature of SQL queries to provide more granular signals of correctness. Using multivariate Platt scaling (MPS), our extension of the canonical Platt scaling technique, we combine individual SCF scores into an overall accurate and calibrated score. Empirical evaluation on two popular text-to-SQL datasets shows that combining MPS and SCF yields further improvements over traditional Platt scaling in calibration and in the related task of error detection.
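Platt scaling fits a logistic function p = sigmoid(a·s + b) that maps a raw model score s to the probability that the output is correct, using held-out examples labeled correct or incorrect. Below is a minimal sketch of a multivariate variant, assuming MPS amounts to logistic regression over a feature vector (for example, a raw output probability plus one SCF score per SQL sub-clause). The feature layout, the plain batch gradient-descent fit, and the function names are illustrative assumptions, not the paper's implementation; Platt's original target smoothing is also omitted.

```python
import math
from typing import List, Sequence


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def _logit(params: Sequence[float], x: Sequence[float]) -> float:
    # params holds one weight per feature followed by a bias term.
    return sum(params[j] * x[j] for j in range(len(x))) + params[-1]


def fit_platt(
    features: Sequence[Sequence[float]],
    labels: Sequence[int],
    lr: float = 0.5,
    epochs: int = 2000,
) -> List[float]:
    """Fit P(correct | x) = sigmoid(w . x + b) by batch gradient descent on log loss.

    With one feature per example this is classic Platt scaling; with several
    (hypothetically, a raw probability plus per-sub-clause scores) it is a
    multivariate variant. labels[i] is 1 if the i-th output query was correct, else 0.
    """
    n, n_feat = len(features), len(features[0])
    params = [0.0] * (n_feat + 1)
    for _ in range(epochs):
        grad = [0.0] * (n_feat + 1)
        for x, y in zip(features, labels):
            err = sigmoid(_logit(params, x)) - y  # d(log loss) / d(logit)
            for j in range(n_feat):
                grad[j] += err * x[j]
            grad[-1] += err
        params = [p - lr * g / n for p, g in zip(params, grad)]
    return params


def calibrated_confidence(params: Sequence[float], x: Sequence[float]) -> float:
    # Map a feature vector to a calibrated probability that the query is correct.
    return sigmoid(_logit(params, x))
```

With a single feature, fit_platt reduces to classic Platt scaling. If queries scored 0.9 are correct two times out of three and queries scored 0.1 one time out of three, a well-fitted calibrator returns roughly 0.67 and 0.33 for those scores, whatever the raw scores suggested.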

Unlearning for Federated Online Learning to Rank: A Reproducibility Study

May 19, 2025

Abstract: This paper reports on findings from a comparative study on the effectiveness and efficiency of federated unlearning strategies within Federated Online Learning to Rank (FOLTR), with specific attention to systematically analysing the unlearning capabilities of methods in a verifiable manner. Federated approaches to ranking search results have recently garnered attention as a way to address users' privacy concerns. In FOLTR, privacy is safeguarded by collaboratively training ranking models across decentralized data sources, preserving individual user data while optimizing search results based on implicit feedback, such as clicks. Recent legislation in numerous countries establishes the so-called "right to be forgotten", according to which services based on machine learning models like those in FOLTR should allow users to remove their own data from the data used to train models. This has sparked the development of unlearning methods, along with evaluation practices to measure whether a user's data has been successfully unlearned. Current evaluation practices are, however, often controversial, necessitating multiple metrics for a more comprehensive assessment, yet previous proposals of unlearning methods used only single evaluation metrics. This paper addresses this limitation: our study rigorously assesses the effectiveness of unlearning strategies in managing both under-unlearning and over-unlearning scenarios using adapted and newly proposed evaluation metrics. Through this detailed analysis, we uncover the strengths and limitations of five unlearning strategies, offering insights into optimizing federated unlearning to balance data privacy and system performance within FOLTR. We publicly release our code and complete results at https://github.com/Iris1026/Unlearning-for-FOLTR.git.

Empirical Performance Evaluation of Lane Keeping Assist on Modern Production Vehicles

May 14, 2025

Abstract: Leveraging a newly released open dataset of Lane Keeping Assist (LKA) systems from production vehicles, this paper presents the first comprehensive empirical analysis of real-world LKA performance. Our study yields three key findings: (i) LKA failures can be systematically categorized into perception, planning, and control errors. We present representative examples of each failure mode through in-depth analysis of LKA-related CAN signals, which both justifies the failure mechanisms and diagnoses when and where each module begins to degrade; (ii) LKA systems tend to follow a fixed lane-centering strategy, often resulting in outward drift that increases linearly with road curvature, whereas human drivers proactively steer slightly inward on similar curved segments; (iii) we provide the first statistical summary and distribution analysis of environmental and road conditions under LKA failures, identifying with statistical significance that faded lane markings, low contrast between lane lines and pavement, and sharp curvature are the most dominant individual factors, along with critical combinations that substantially increase failure likelihood. Building on these insights, we propose a theoretical model that integrates road geometry, speed limits, and LKA steering capability to inform infrastructure design. Additionally, we develop a machine learning-based model to assess roadway readiness for LKA deployment, offering practical tools for safer infrastructure planning, especially in rural areas. This work highlights key limitations of current LKA systems and supports the advancement of safer and more reliable autonomous driving technologies.

OpenLKA: an open dataset of lane keeping assist from market autonomous vehicles

Jan 06, 2025

Abstract: The Lane Keeping Assist (LKA) system has become a standard feature in recent car models. While marketed as providing auto-steering capabilities, the system's operational characteristics and safety performance remain underexplored, primarily due to a lack of real-world testing and comprehensive data. To fill this gap, we extensively tested mainstream LKA systems from leading U.S. automakers in Tampa, Florida. Using an innovative method, we collected a comprehensive dataset that includes full Controller Area Network (CAN) messages with LKA attributes, as well as video, perception, and lateral trajectory data from a high-quality front-facing camera equipped with advanced vision detection and trajectory planning algorithms. Our tests spanned diverse, challenging conditions, including complex road geometry, adverse weather, degraded lane markings, and their combinations. A vision language model (VLM) further annotated the videos to capture weather, lighting, and traffic features. Based on this dataset, we present an empirical overview of LKA's operational features and safety performance. Key findings indicate: (i) LKA is vulnerable to faint markings and low pavement contrast; (ii) it struggles in lane transitions (merges, diverges, intersections), often causing unintended departures or disengagements; (iii) steering torque limitations lead to frequent deviations on sharp turns, posing safety risks; and (iv) LKA systems consistently maintain rigid lane-centering, lacking adaptability on tight curves or near large vehicles such as trucks. We conclude by demonstrating how this dataset can guide both infrastructure planning and self-driving technology. In view of LKA's limitations, we recommend improvements in road geometry and pavement maintenance. Additionally, we illustrate how the dataset supports the development of human-like LKA systems via VLM fine-tuning and chain-of-thought reasoning.

Effective and secure federated online learning to rank

Dec 26, 2024

Abstract: Online Learning to Rank (OLTR) optimises ranking models using implicit user feedback, such as clicks. Unlike traditional Learning to Rank (LTR) methods, which learn a ranking model from a static set of training data with relevance judgements, OLTR methods update the model continually as new data arrives. OLTR thus addresses several drawbacks of LTR, such as the high cost of human annotations, potential misalignment between user preferences and human judgements, and rapid changes in user query intents. However, OLTR methods typically require collecting searchable data, user queries, and clicks, which raises privacy concerns for users. Federated Online Learning to Rank (FOLTR) integrates OLTR within a Federated Learning (FL) framework to enhance privacy by not sharing raw data. While promising, FOLTR methods currently lag behind traditional centralised OLTR due to challenges in ranking effectiveness, robustness to how data is distributed across clients, susceptibility to attacks, and the ability to unlearn client interactions and data. This thesis presents a comprehensive study of Federated Online Learning to Rank, addressing its effectiveness, robustness, security, and unlearning capabilities, thereby expanding the landscape of FOLTR.

Coordinated Power Smoothing Control for Wind Storage Integrated System with Physics-informed Deep Reinforcement Learning

Dec 17, 2024

Abstract: The Wind Storage Integrated System with Power Smoothing Control (PSC) has emerged as a promising solution for ensuring both efficient and reliable wind energy generation. However, existing PSC strategies overlook the intricate interplay and distinct control frequencies of batteries and wind turbines, and do not account for the wake effect or battery degradation cost. In this paper, a novel hierarchical coordinated control framework that integrates a wake model and a battery degradation model is devised to address these challenges. After reformulating the problem as a Markov decision process, a multi-agent reinforcement learning method is introduced to handle the bi-level characteristic of the problem. Moreover, a Physics-informed Neural Network-assisted Multi-agent Deep Deterministic Policy Gradient (PAMA-DDPG) algorithm is proposed to incorporate the power fluctuation differential equation and expedite the learning process. The effectiveness of the proposed methodology is evaluated through simulations in four distinct scenarios using WindFarmSimulator (WFSim). The results show that the proposed algorithm yields an approximately 11% increase in total profit and a 19% decrease in power fluctuation compared to traditional methods, thereby addressing the dual objectives of economic efficiency and grid-connected energy reliability.

How to Forget Clients in Federated Online Learning to Rank?

Jan 24, 2024

Abstract: Data protection legislation such as the European Union's General Data Protection Regulation (GDPR) establishes the "right to be forgotten": a user (client) can request that contributions made using their data be removed from learned models. In this paper, we study how to remove the contributions made by a client participating in a Federated Online Learning to Rank (FOLTR) system. In a FOLTR system, a ranker is learned by aggregating local updates into the global ranking model. Local updates are learned online at the client level, using the queries and implicit interactions that have occurred within that specific client. In this way, each client's local data is not shared with other clients or with a centralised search service, while clients still benefit from an effective global ranking model learned from the contributions of every client in the federation. We study an effective and efficient unlearning method that can remove a client's contribution without compromising overall ranker effectiveness and without retraining the global ranker from scratch. A key challenge is measuring whether the model has unlearned the contributions of the client c* that requested removal. To do so, we instruct c* to perform a poisoning attack (adding noise to this client's updates) and then measure whether the impact of the attack is lessened once the unlearning process has taken place. Through experiments on four datasets, we demonstrate the effectiveness and efficiency of the unlearning strategy under different combinations of parameter settings.
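The abstract describes the global ranker as an aggregate of local updates. As an illustration only, here is a minimal sketch of one common aggregation rule, federated averaging, assuming each client's ranker is a linear model stored as a flat weight vector and that each client is weighted by its number of local interactions. The function name and the weighting scheme are hypothetical, and the paper's own aggregation and unlearning procedures are not reproduced here.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_interactions: Sequence[int],
) -> List[float]:
    """Combine local ranker weights into global weights (FedAvg-style sketch).

    Each client's weight vector counts in proportion to its number of local
    interactions (assumed weighting), so busier clients have more influence.
    Only weight vectors are exchanged; raw queries and clicks stay on the client.
    """
    if len(client_weights) != len(client_interactions):
        raise ValueError("need one interaction count per client")
    total = sum(client_interactions)
    if total <= 0:
        raise ValueError("total interaction count must be positive")
    dim = len(client_weights[0])
    global_weights = [0.0] * dim
    for weights, n in zip(client_weights, client_interactions):
        if len(weights) != dim:
            raise ValueError("all clients must share the same model dimension")
        for j, w in enumerate(weights):
            global_weights[j] += (n / total) * w
    return global_weights
```

For instance, aggregating client vectors [1.0, 2.0] and [3.0, 4.0] with 1 and 3 local interactions gives [2.5, 3.5]. Because a client's influence enters the global model only through such weighted terms, a client that adds noise to its updates (as c* does in the poisoning-based check) shifts the aggregate in a way that can later be measured.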

An Analysis of Untargeted Poisoning Attack and Defense Methods for Federated Online Learning to Rank Systems

Jul 04, 2023

Abstract: Federated online learning to rank (FOLTR) aims to preserve user privacy by not sharing users' searchable data and search interactions, while guaranteeing high search effectiveness, especially in contexts where individual users have scarce training data and interactions. To this end, FOLTR trains learning to rank models online, i.e., by exploiting users' interactions with the search system (queries, clicks) rather than labels, and federatively, i.e., not by aggregating interaction data on a central server for training, but by training an instance of the model on each user's device on their own private data and then sharing the model updates, not the data, across the set of users that form the federation. Existing FOLTR methods build upon advances in federated learning. While federated learning methods have been shown to be effective at training machine learning models in a distributed way without the need for data sharing, they can be susceptible to attacks that target either the system's security or its overall effectiveness. In this paper, we consider attacks on FOLTR systems that aim to compromise their search effectiveness. Within this scope, we experiment with and analyse data and model poisoning attack methods to showcase their impact on FOLTR search effectiveness. We also explore the effectiveness of defense methods designed to counteract attacks on FOLTR systems. We contribute an understanding of the effect of attack and defense methods on FOLTR systems and identify the key factors influencing their effectiveness.
