Shizhe Chen

INRIA

MAGICIAN: Efficient Long-Term Planning with Imagined Gaussians for Active Mapping

Mar 23, 2026Abstract:Active mapping aims to determine how an agent should move to efficiently reconstruct an unknown environment. Most existing approaches rely on greedy next-best-view prediction, resulting in inefficient exploration and incomplete scene reconstruction. To address this limitation, we introduce MAGICIAN, a novel long-term planning framework that maximizes accumulated surface coverage gain through Imagined Gaussians, a scene representation derived from a pre-trained occupancy network with strong structural priors. This representation enables efficient computation of coverage gain for any novel viewpoint via fast volumetric rendering, allowing its integration into a tree-search algorithm for long-horizon planning. We update Imagined Gaussians and refine the planned trajectory in a closed-loop manner. Our method achieves state-of-the-art performance across indoor and outdoor benchmarks with varying action spaces, demonstrating the critical advantage of long-term planning in active mapping.

Gondola: Grounded Vision Language Planning for Generalizable Robotic Manipulation

Jun 12, 2025

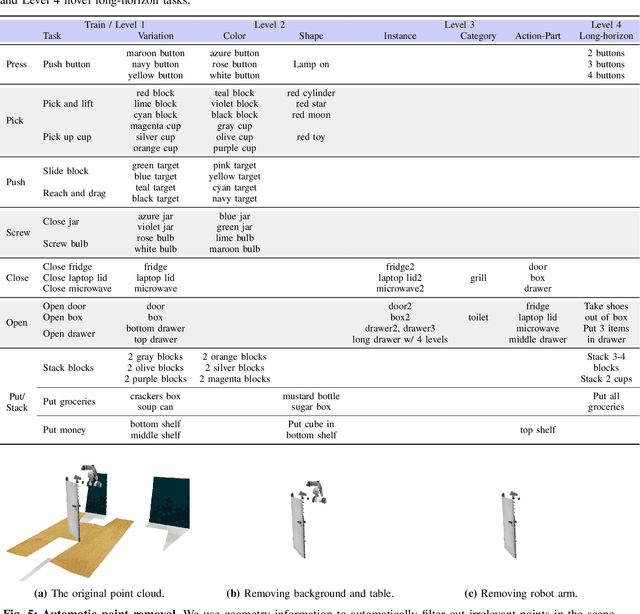

Abstract:Robotic manipulation faces a significant challenge in generalizing across unseen objects, environments and tasks specified by diverse language instructions. To improve generalization capabilities, recent research has incorporated large language models (LLMs) for planning and action execution. While promising, these methods often fall short in generating grounded plans in visual environments. Although efforts have been made to perform visual instructional tuning on LLMs for robotic manipulation, existing methods are typically constrained by single-view image input and struggle with precise object grounding. In this work, we introduce Gondola, a novel grounded vision-language planning model based on LLMs for generalizable robotic manipulation. Gondola takes multi-view images and history plans to produce the next action plan with interleaved texts and segmentation masks of target objects and locations. To support the training of Gondola, we construct three types of datasets using the RLBench simulator, namely robot grounded planning, multi-view referring expression and pseudo long-horizon task datasets. Gondola outperforms the state-of-the-art LLM-based method across all four generalization levels of the GemBench dataset, including novel placements, rigid objects, articulated objects and long-horizon tasks.

ComposeAnything: Composite Object Priors for Text-to-Image Generation

May 30, 2025

Abstract:Generating images from text involving complex and novel object arrangements remains a significant challenge for current text-to-image (T2I) models. Although prior layout-based methods improve object arrangements using spatial constraints with 2D layouts, they often struggle to capture 3D positioning and sacrifice quality and coherence. In this work, we introduce ComposeAnything, a novel framework for improving compositional image generation without retraining existing T2I models. Our approach first leverages the chain-of-thought reasoning abilities of LLMs to produce 2.5D semantic layouts from text, consisting of 2D object bounding boxes enriched with depth information and detailed captions. Based on this layout, we generate a spatial and depth aware coarse composite of objects that captures the intended composition, serving as a strong and interpretable prior that replaces stochastic noise initialization in diffusion-based T2I models. This prior guides the denoising process through object prior reinforcement and spatial-controlled denoising, enabling seamless generation of compositional objects and coherent backgrounds, while allowing refinement of inaccurate priors. ComposeAnything outperforms state-of-the-art methods on the T2I-CompBench and NSR-1K benchmarks for prompts with 2D/3D spatial arrangements, high object counts, and surreal compositions. Human evaluations further demonstrate that our model generates high-quality images with compositions that faithfully reflect the text.

HORT: Monocular Hand-held Objects Reconstruction with Transformers

Mar 27, 2025

Abstract:Reconstructing hand-held objects in 3D from monocular images remains a significant challenge in computer vision. Most existing approaches rely on implicit 3D representations, which produce overly smooth reconstructions and are time-consuming to generate explicit 3D shapes. While more recent methods directly reconstruct point clouds with diffusion models, the multi-step denoising makes high-resolution reconstruction inefficient. To address these limitations, we propose a transformer-based model to efficiently reconstruct dense 3D point clouds of hand-held objects. Our method follows a coarse-to-fine strategy, first generating a sparse point cloud from the image and progressively refining it into a dense representation using pixel-aligned image features. To enhance reconstruction accuracy, we integrate image features with 3D hand geometry to jointly predict the object point cloud and its pose relative to the hand. Our model is trained end-to-end for optimal performance. Experimental results on both synthetic and real datasets demonstrate that our method achieves state-of-the-art accuracy with much faster inference speed, while generalizing well to in-the-wild images.

Online 3D Scene Reconstruction Using Neural Object Priors

Mar 24, 2025Abstract:This paper addresses the problem of reconstructing a scene online at the level of objects given an RGB-D video sequence. While current object-aware neural implicit representations hold promise, they are limited in online reconstruction efficiency and shape completion. Our main contributions to alleviate the above limitations are twofold. First, we propose a feature grid interpolation mechanism to continuously update grid-based object-centric neural implicit representations as new object parts are revealed. Second, we construct an object library with previously mapped objects in advance and leverage the corresponding shape priors to initialize geometric object models in new videos, subsequently completing them with novel views as well as synthesized past views to avoid losing original object details. Extensive experiments on synthetic environments from the Replica dataset, real-world ScanNet sequences and videos captured in our laboratory demonstrate that our approach outperforms state-of-the-art neural implicit models for this task in terms of reconstruction accuracy and completeness.

NextBestPath: Efficient 3D Mapping of Unseen Environments

Feb 07, 2025

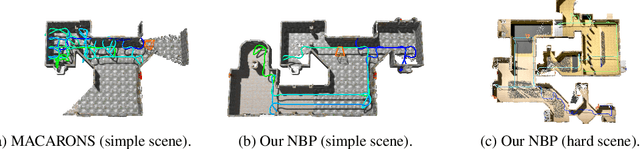

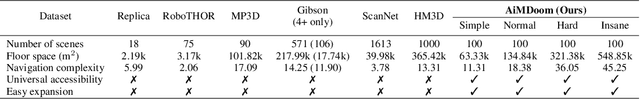

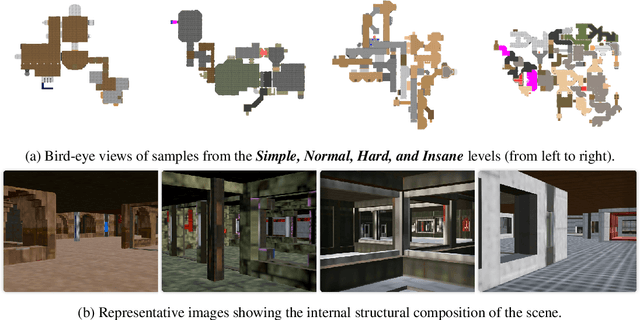

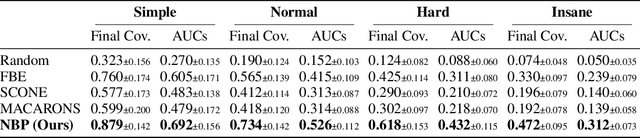

Abstract:This work addresses the problem of active 3D mapping, where an agent must find an efficient trajectory to exhaustively reconstruct a new scene. Previous approaches mainly predict the next best view near the agent's location, which is prone to getting stuck in local areas. Additionally, existing indoor datasets are insufficient due to limited geometric complexity and inaccurate ground truth meshes. To overcome these limitations, we introduce a novel dataset AiMDoom with a map generator for the Doom video game, enabling to better benchmark active 3D mapping in diverse indoor environments. Moreover, we propose a new method we call next-best-path (NBP), which predicts long-term goals rather than focusing solely on short-sighted views. The model jointly predicts accumulated surface coverage gains for long-term goals and obstacle maps, allowing it to efficiently plan optimal paths with a unified model. By leveraging online data collection, data augmentation and curriculum learning, NBP significantly outperforms state-of-the-art methods on both the existing MP3D dataset and our AiMDoom dataset, achieving more efficient mapping in indoor environments of varying complexity.

Towards Generalizable Vision-Language Robotic Manipulation: A Benchmark and LLM-guided 3D Policy

Oct 02, 2024

Abstract:Generalizing language-conditioned robotic policies to new tasks remains a significant challenge, hampered by the lack of suitable simulation benchmarks. In this paper, we address this gap by introducing GemBench, a novel benchmark to assess generalization capabilities of vision-language robotic manipulation policies. GemBench incorporates seven general action primitives and four levels of generalization, spanning novel placements, rigid and articulated objects, and complex long-horizon tasks. We evaluate state-of-the-art approaches on GemBench and also introduce a new method. Our approach 3D-LOTUS leverages rich 3D information for action prediction conditioned on language. While 3D-LOTUS excels in both efficiency and performance on seen tasks, it struggles with novel tasks. To address this, we present 3D-LOTUS++, a framework that integrates 3D-LOTUS's motion planning capabilities with the task planning capabilities of LLMs and the object grounding accuracy of VLMs. 3D-LOTUS++ achieves state-of-the-art performance on novel tasks of GemBench, setting a new standard for generalization in robotic manipulation. The benchmark, codes and trained models are available at \url{https://www.di.ens.fr/willow/research/gembench/}.

Conan-embedding: General Text Embedding with More and Better Negative Samples

Aug 29, 2024

Abstract:With the growing popularity of RAG, the capabilities of embedding models are gaining increasing attention. Embedding models are primarily trained through contrastive loss learning, with negative examples being a key component. Previous work has proposed various hard negative mining strategies, but these strategies are typically employed as preprocessing steps. In this paper, we propose the conan-embedding model, which maximizes the utilization of more and higher-quality negative examples. Specifically, since the model's ability to handle preprocessed negative examples evolves during training, we propose dynamic hard negative mining method to expose the model to more challenging negative examples throughout the training process. Secondly, contrastive learning requires as many negative examples as possible but is limited by GPU memory constraints. Therefore, we use a Cross-GPU balancing Loss to provide more negative examples for embedding training and balance the batch size across multiple tasks. Moreover, we also discovered that the prompt-response pairs from LLMs can be used for embedding training. Our approach effectively enhances the capabilities of embedding models, currently ranking first on the Chinese leaderboard of Massive text embedding benchmark

ViViDex: Learning Vision-based Dexterous Manipulation from Human Videos

Apr 24, 2024

Abstract:In this work, we aim to learn a unified vision-based policy for a multi-fingered robot hand to manipulate different objects in diverse poses. Though prior work has demonstrated that human videos can benefit policy learning, performance improvement has been limited by physically implausible trajectories extracted from videos. Moreover, reliance on privileged object information such as ground-truth object states further limits the applicability in realistic scenarios. To address these limitations, we propose a new framework ViViDex to improve vision-based policy learning from human videos. It first uses reinforcement learning with trajectory guided rewards to train state-based policies for each video, obtaining both visually natural and physically plausible trajectories from the video. We then rollout successful episodes from state-based policies and train a unified visual policy without using any privileged information. A coordinate transformation method is proposed to significantly boost the performance. We evaluate our method on three dexterous manipulation tasks and demonstrate a large improvement over state-of-the-art algorithms.

Think-Program-reCtify: 3D Situated Reasoning with Large Language Models

Apr 23, 2024

Abstract:This work addresses the 3D situated reasoning task which aims to answer questions given egocentric observations in a 3D environment. The task remains challenging as it requires comprehensive 3D perception and complex reasoning skills. End-to-end models trained on supervised data for 3D situated reasoning suffer from data scarcity and generalization ability. Inspired by the recent success of leveraging large language models (LLMs) for visual reasoning, we propose LLM-TPC, a novel framework that leverages the planning, tool usage, and reflection capabilities of LLMs through a ThinkProgram-reCtify loop. The Think phase first decomposes the compositional question into a sequence of steps, and then the Program phase grounds each step to a piece of code and calls carefully designed 3D visual perception modules. Finally, the Rectify phase adjusts the plan and code if the program fails to execute. Experiments and analysis on the SQA3D benchmark demonstrate the effectiveness, interpretability and robustness of our method. Our code is publicly available at https://qingrongh.github.io/LLM-TPC/.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge