Sari Saba-Sadiya

Different Algorithms (Might) Uncover Different Patterns: A Brain-Age Prediction Case Study

Feb 08, 2024

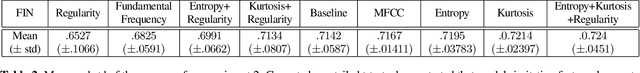

Abstract: Machine learning is a rapidly evolving field with a wide range of applications, including biological signal analysis, where novel algorithms often improve the state of the art. However, robustness to algorithmic variability (that is, whether different algorithms consistently uncover similar findings) is seldom explored. In this paper we investigate whether established hypotheses in EEG-based brain-age prediction hold across algorithms. First, we surveyed the literature and identified various features known to be informative for brain-age prediction. We then employed diverse feature extraction techniques, processing steps, and models, and used the interpretive power of SHapley Additive exPlanations (SHAP) values to relate our findings to existing research in the field. Few of our models achieved state-of-the-art performance on the specific dataset we used. Moreover, our analysis showed that while most models uncover similar patterns in the EEG signals, some variability can still be observed. Finally, a few prominent findings could only be validated using specific models. We conclude by suggesting remedies for the potential implications of this lack of robustness to model variability.
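The abstract relies on SHAP values to attribute each brain-age prediction to its input features. As a minimal sketch of the quantity SHAP estimates, the function below computes exact Shapley values by enumerating every feature coalition, assuming that "removing" a feature means replacing it with a fixed baseline value. This is not the SHAP library's API or the authors' pipeline; the model, its weights, and the feature values are hypothetical.

```python
from itertools import combinations
from math import factorial
from typing import Callable, List, Sequence, Set


def shapley_values(f: Callable[[List[float]], float],
                   x: Sequence[float],
                   baseline: Sequence[float]) -> List[float]:
    """Exact Shapley values of f at x; 'absent' features are set to their baseline value."""
    n = len(x)

    def value(coalition: Set[int]) -> float:
        # Features in the coalition keep their observed value, the rest fall back to the baseline.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return f(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):  # coalition sizes 0 .. n-1 drawn from the other features
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical linear "brain-age" model over three made-up EEG features.
weights = [2.0, -1.0, 0.5]

def predict_age(z: List[float]) -> float:
    return 30.0 + sum(w * v for w, v in zip(weights, z))

phi = shapley_values(predict_age, x=[1.0, 2.0, 4.0], baseline=[0.0, 0.0, 0.0])
print(phi)  # approximately [2.0, -2.0, 2.0], i.e. w_i * (x_i - baseline_i)
```

Exact enumeration needs O(2^n) model evaluations, which is why practical tools approximate it or exploit model structure. For a linear model the result reduces to w_i(x_i - b_i), and the attributions always sum to f(x) - f(baseline), which makes two handy sanity checks.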

MambaNet: A Hybrid Neural Network for Predicting the NBA Playoffs

Oct 31, 2022

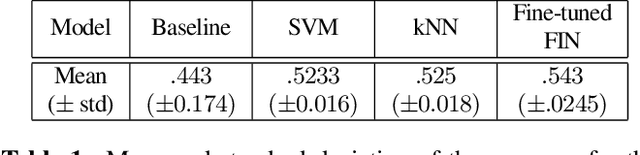

Abstract: In this paper, we present MambaNet: a hybrid neural network for predicting the outcomes of basketball games. Unlike other studies, which focus primarily on regular-season games, this study investigates playoff games. MambaNet is a hybrid neural network architecture that processes a time series of teams' and players' game statistics and outputs the probability of a team winning or losing an NBA playoff match. In our approach, we use Feature Imitating Networks to provide latent signal-processing feature representations of game statistics, which are then processed by convolutional, recurrent, and dense neural layers. Three experiments using six different datasets are conducted to evaluate the performance and generalizability of our architecture against a wide range of previous studies. Our final method achieved AUCs ranging from 0.72 to 0.82, beating the best-performing baseline models by a considerable margin.
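MambaNet's results are reported as AUC. A small sketch of how that number can be computed from predicted win probabilities uses the pairwise (Mann-Whitney) definition: the fraction of (win, loss) pairs in which the winning team received the higher score, with ties counted as one half. The scores below are hypothetical, and this is not the evaluation code used in the paper.

```python
from typing import Sequence


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUC as the probability that a random positive outscores a random negative (ties count 1/2)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical predicted win probabilities for four playoff games (1 = the team won).
print(roc_auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))  # 0.75
```

The double loop is O(P x N) and is meant for readability; a rank-based formulation gives the same value in O(n log n).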

Feature Imitating Networks

Oct 23, 2021

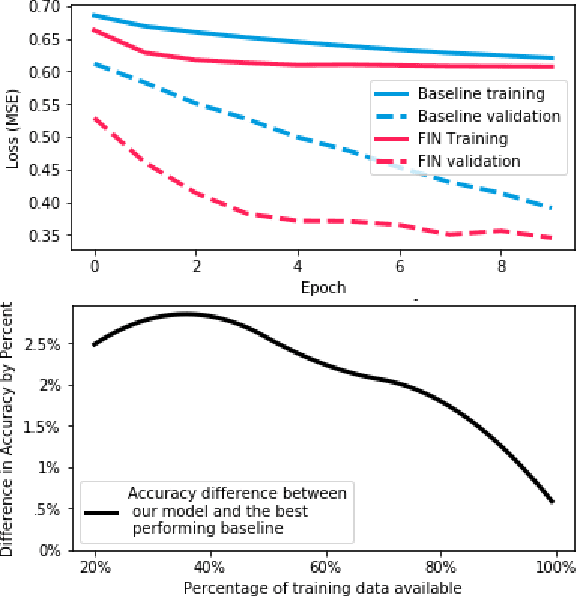

Abstract: In this paper, we introduce a novel approach to neural learning: the Feature-Imitating Network (FIN). A FIN is a neural network whose weights are initialized to reliably approximate one or more closed-form statistical features, such as Shannon's entropy. We demonstrate that FINs (and FIN ensembles) provide best-in-class performance for a variety of downstream signal processing and inference tasks, while using less data and requiring less fine-tuning than other networks of similar (or even greater) representational power. We conclude that FINs can help bridge the gap between domain experts and machine learning practitioners by enabling researchers to harness insights from feature engineering to enhance the performance of contemporary representation learning approaches.
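To make the FIN idea concrete, the sketch below builds a one-hidden-layer network whose weights approximate the Shannon entropy of a 4-element probability vector. To keep it short, it swaps in a random-feature fit (random tanh hidden weights, output layer solved by least squares) instead of gradient training; the hidden size, weight scale, and Dirichlet sampling are arbitrary assumptions, not the paper's settings.

```python
import numpy as np

rng = np.random.default_rng(0)


def shannon_entropy(p: np.ndarray) -> np.ndarray:
    """Closed-form target feature: H(p) = -sum p log p (natural log), computed row-wise."""
    return -np.sum(p * np.log(np.clip(p, 1e-12, None)), axis=1)


# Training data: random probability vectors of length 4 (assumed input format).
k, hidden = 4, 256
p_train = rng.dirichlet(np.ones(k), size=5000)
h_train = shannon_entropy(p_train)

# One hidden tanh layer with random weights; only the output layer is fitted, by least squares.
W1 = rng.normal(scale=2.0, size=(k, hidden))
b1 = rng.normal(size=hidden)


def hidden_layer(p: np.ndarray) -> np.ndarray:
    return np.tanh(p @ W1 + b1)


Phi = np.column_stack([hidden_layer(p_train), np.ones(len(p_train))])
w2, *_ = np.linalg.lstsq(Phi, h_train, rcond=None)


def fin_entropy(p: np.ndarray) -> np.ndarray:
    """Network output; these weights could initialize a layer that is later fine-tuned."""
    return hidden_layer(p) @ w2[:-1] + w2[-1]


# Compare against a constant predictor on held-out probability vectors.
p_test = rng.dirichlet(np.ones(k), size=1000)
mae = np.mean(np.abs(fin_entropy(p_test) - shannon_entropy(p_test)))
baseline_mae = np.mean(np.abs(shannon_entropy(p_test) - h_train.mean()))
print(f"FIN MAE: {mae:.4f}  constant-predictor MAE: {baseline_mae:.4f}")
```

In a FIN, weights obtained this way would serve as the starting point of a layer inside a larger network, which is then fine-tuned on the downstream task rather than kept frozen.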

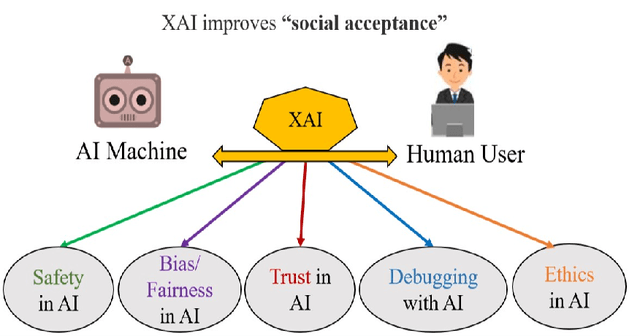

CX-ToM: Counterfactual Explanations with Theory-of-Mind for Enhancing Human Trust in Image Recognition Models

Sep 06, 2021

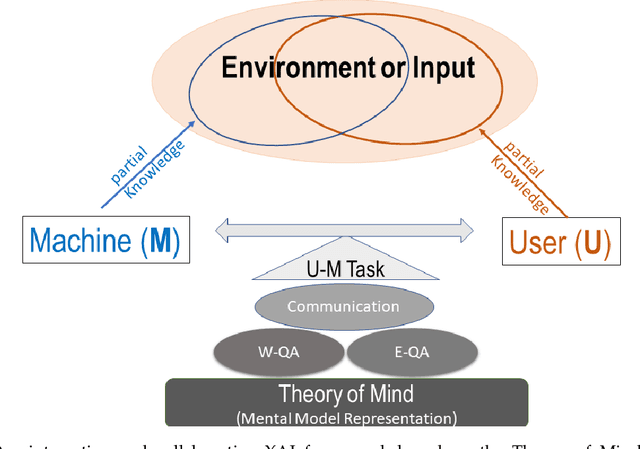

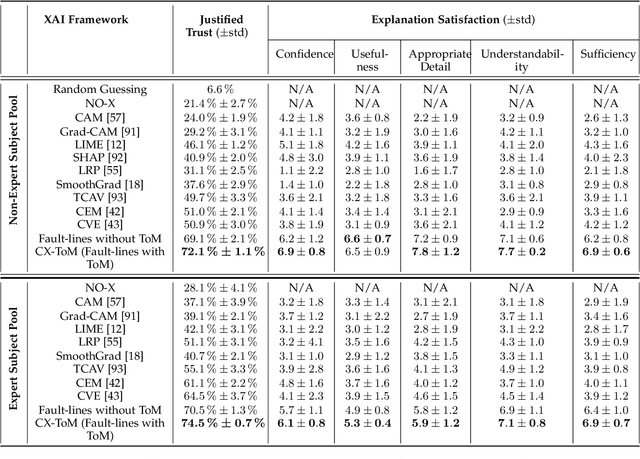

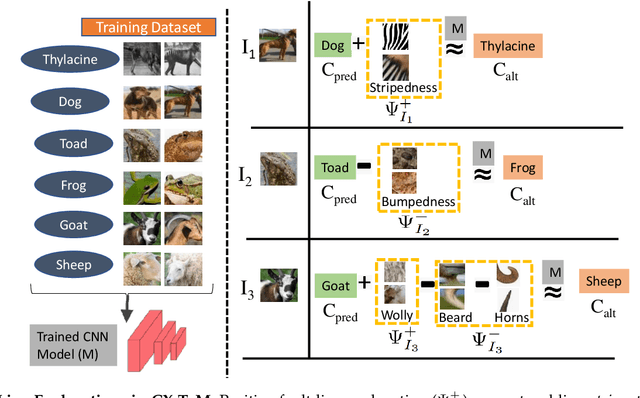

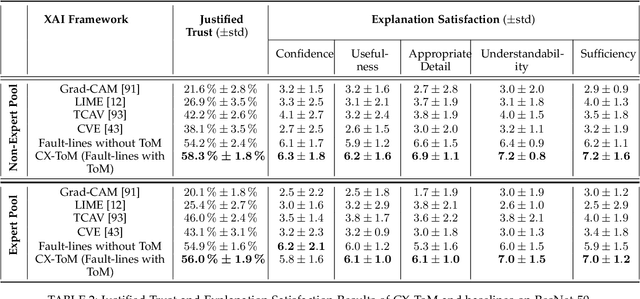

Abstract: We propose CX-ToM, short for counterfactual explanations with theory-of-mind, a new explainable AI (XAI) framework for explaining decisions made by a deep convolutional neural network (CNN). In contrast to current XAI methods, which generate explanations as a single-shot response, we pose explanation as an iterative communication process, i.e., a dialog, between the machine and the human user. More concretely, our CX-ToM framework generates a sequence of explanations in a dialog by mediating the differences between the minds of the machine and the human user. To do this, we use Theory of Mind (ToM), which helps us explicitly model the human's intention, the machine's mind as inferred by the human, and the human's mind as inferred by the machine. Moreover, most state-of-the-art XAI frameworks provide attention-based (or heat-map-based) explanations. In our work, we show that these attention-based explanations are not sufficient for increasing human trust in the underlying CNN model. In CX-ToM, we instead use counterfactual explanations called fault-lines, which we define as follows: given an input image I for which a CNN classification model M predicts class c_pred, a fault-line identifies the minimal set of semantic-level features (e.g., stripes on a zebra, pointed ears of a dog), referred to as explainable concepts, that need to be added to or deleted from I in order to change M's classification of I to another specified class c_alt. We argue that, due to the iterative, conceptual, and counterfactual nature of CX-ToM explanations, our framework is practical and more natural for both expert and non-expert users seeking to understand the internal workings of complex deep learning models. Extensive quantitative and qualitative experiments verify our hypotheses, demonstrating that CX-ToM significantly outperforms state-of-the-art explainable AI models.
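The fault-line definition can be illustrated with a toy brute-force search: enumerate concept additions and deletions in order of increasing size and return the first edit set that makes the classifier predict c_alt. The concept-scoring classifier and its weights are hypothetical stand-ins for a CNN's concept-level representation, and exhaustive search is exponential in the number of concepts; this is a sketch of the definition, not CX-ToM's algorithm.

```python
from itertools import combinations
from typing import FrozenSet, List, Optional, Tuple

# Hypothetical concept weights per class for a toy concept-based classifier (stand-in for M).
CLASS_WEIGHTS = {
    "zebra": {"stripes": 3.0, "hooves": 1.0, "mane": 1.0},
    "horse": {"hooves": 1.5, "mane": 1.5, "saddle": 1.0},
    "dog": {"pointed_ears": 2.0, "fur": 1.5},
}
ALL_CONCEPTS = sorted({c for w in CLASS_WEIGHTS.values() for c in w})

Edit = Tuple[str, str]  # ("+", concept) adds a concept, ("-", concept) deletes one


def classify(concepts: FrozenSet[str]) -> str:
    """Predict the class whose summed concept weights are highest."""
    scores = {cls: sum(w.get(c, 0.0) for c in concepts) for cls, w in CLASS_WEIGHTS.items()}
    return max(scores, key=scores.get)


def fault_line(concepts: FrozenSet[str], c_alt: str) -> Optional[List[Edit]]:
    """Return a smallest set of concept edits that changes the prediction to c_alt, if one exists."""
    edits: List[Edit] = [("-", c) for c in sorted(concepts)]
    edits += [("+", c) for c in ALL_CONCEPTS if c not in concepts]
    for size in range(len(edits) + 1):  # smallest edit sets first, so the first hit is minimal
        for combo in combinations(edits, size):
            edited = set(concepts)
            for op, c in combo:
                if op == "-":
                    edited.discard(c)
                else:
                    edited.add(c)
            if classify(frozenset(edited)) == c_alt:
                return list(combo)
    return None


image_concepts = frozenset({"stripes", "hooves", "mane"})
print(classify(image_concepts))             # zebra
print(fault_line(image_concepts, "horse"))  # [('-', 'stripes')]
```

Here deleting the "stripes" concept alone is enough to flip the toy model from zebra to horse, which is the kind of minimal, concept-level explanation a fault-line is meant to convey.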

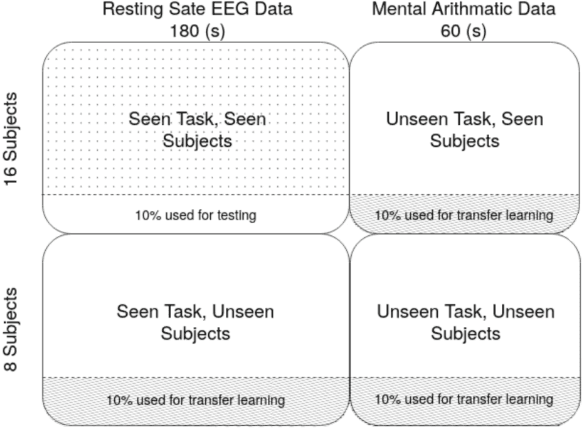

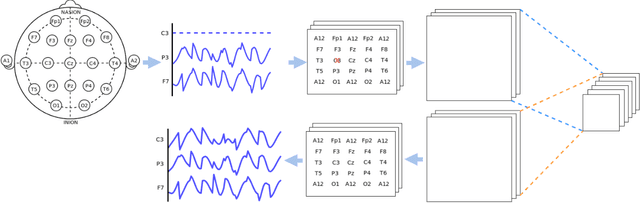

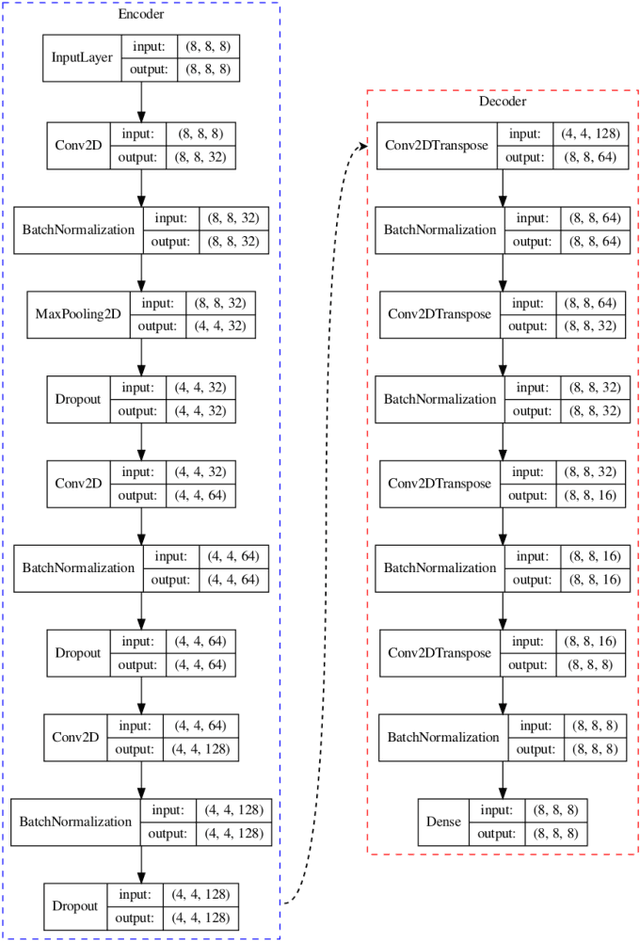

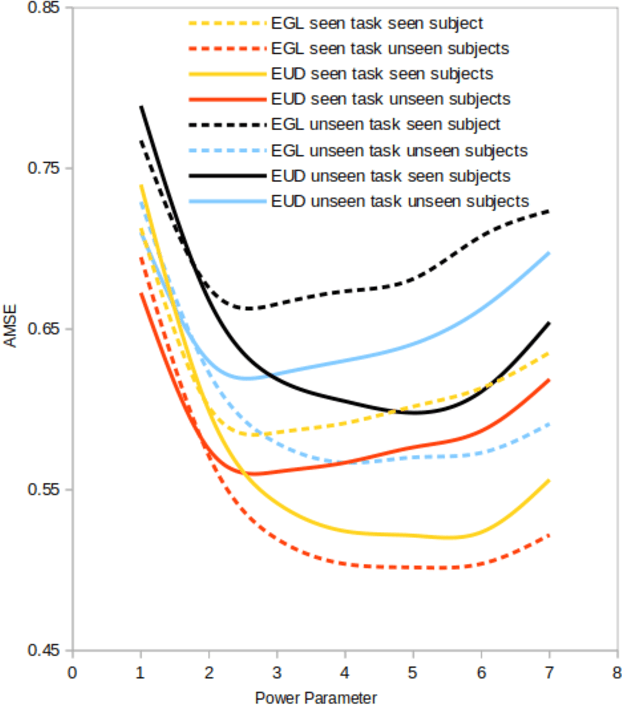

EEG Channel Interpolation Using Deep Encoder-Decoder Networks

Sep 21, 2020

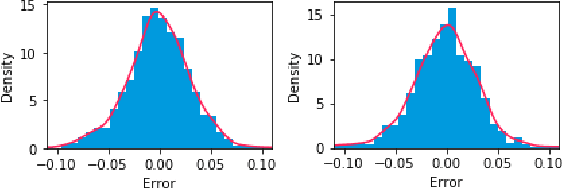

Abstract: Electrode "pop" artifacts originate from the spontaneous loss of connectivity between a surface and an electrode. Because electroencephalography (EEG) uses a dense array of electrodes, "popped" segments are among the most pervasive types of artifact seen during EEG data collection. In many cases, downstream applications (e.g., brain-machine interfaces) depend on the continuity of EEG data, which requires that popped segments be accurately interpolated. In this paper we frame the interpolation problem as a self-learning task using a deep encoder-decoder network. We compare our approach against contemporary interpolation methods on a publicly available EEG dataset. Our approach exhibited at least a ~15% improvement over contemporary approaches when tested on subjects and tasks not used during model training. We demonstrate how our model's performance can be enhanced further on novel subjects and tasks using transfer learning. All code and data associated with this study are open source to facilitate extension and practical use. To our knowledge, this work is the first solution to the EEG interpolation problem that uses deep learning.
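The self-learning framing can be sketched without a deep network: on clean data, hide a channel whose true values are known and fit a model to reconstruct it from the remaining channels, then apply that model when the channel "pops". The sketch below swaps the paper's deep encoder-decoder for ordinary least-squares regression and uses synthetic signals (three sinusoidal sources mixed into eight channels, with a hypothetical 250 Hz sampling rate); it illustrates the training setup only, not the reported model or its results.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic "EEG": 8 channels mixing 3 latent sources, plus a little sensor noise.
n_channels, n_sources, n_samples = 8, 3, 2000
A = rng.normal(size=(n_channels, n_sources))
t = np.arange(n_samples) / 250.0  # hypothetical 250 Hz sampling rate
sources = np.vstack([np.sin(2 * np.pi * f * t) for f in (6.0, 10.0, 20.0)])
X = A @ sources + 0.01 * rng.normal(size=(n_channels, n_samples))


def fit_interpolator(X_clean: np.ndarray, target: int) -> np.ndarray:
    """Self-supervised fit: hide `target` on clean data and learn to predict it from the others."""
    others = np.delete(X_clean, target, axis=0).T  # shape (samples, channels - 1)
    design = np.column_stack([others, np.ones(len(others))])
    coef, *_ = np.linalg.lstsq(design, X_clean[target], rcond=None)
    return coef


def interpolate(X_obs: np.ndarray, target: int, coef: np.ndarray) -> np.ndarray:
    """Reconstruct the popped `target` channel from the channels that are still intact."""
    others = np.delete(X_obs, target, axis=0).T
    return np.column_stack([others, np.ones(len(others))]) @ coef


# Train on the first half, then treat channel 3 as popped in the second half and reconstruct it.
train, test = X[:, :1000], X[:, 1000:]
coef = fit_interpolator(train, target=3)
recon = interpolate(test, target=3, coef=coef)
rel_mse = np.mean((recon - test[3]) ** 2) / np.var(test[3])
print(f"relative reconstruction error: {rel_mse:.2e}")
```

Because the supervision signal comes from the data itself (a channel is artificially hidden), no hand-labelled artifacts are needed; a deep encoder-decoder replaces the linear map when inter-channel relationships are nonlinear or vary across subjects and tasks.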

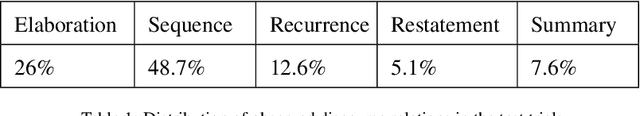

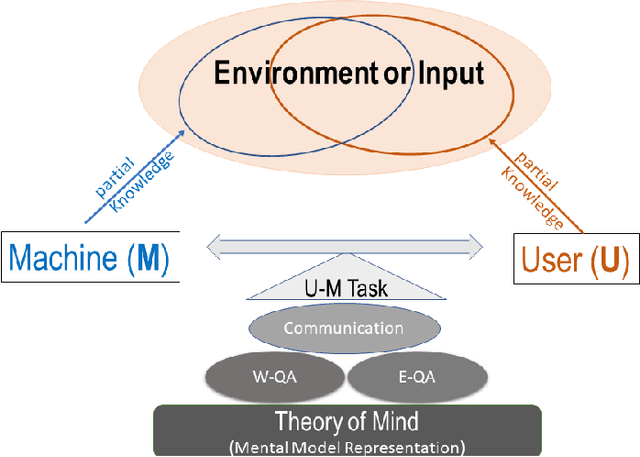

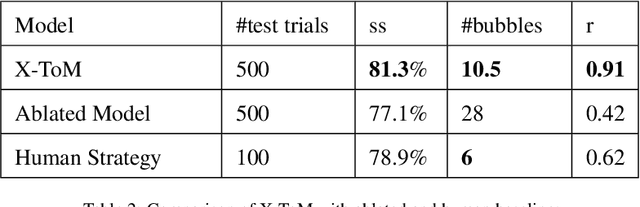

X-ToM: Explaining with Theory-of-Mind for Gaining Justified Human Trust

Sep 15, 2019

Abstract: We present a new explainable AI (XAI) framework aimed at increasing justified human trust in, and reliance on, the AI machine through explanations. We pose explanation as an iterative communication process, i.e., a dialog, between the machine and the human user. More concretely, the machine generates a sequence of explanations in a dialog that takes into account three important aspects at each dialog turn: (a) the human's intention (or curiosity); (b) the human's understanding of the machine; and (c) the machine's understanding of the human user. To do this, we use Theory of Mind (ToM), which helps us explicitly model the human's intention, the machine's mind as inferred by the human, and the human's mind as inferred by the machine. In other words, these explicit mental representations in ToM are incorporated to learn an optimal explanation policy that takes into account the human's perception and beliefs. Furthermore, we show that ToM makes it possible to quantitatively measure justified human trust in the machine by comparing all three mental representations. We applied our framework to three visual recognition tasks, namely image classification, action recognition, and human body pose estimation. We argue that our ToM-based explanations are practical and more natural for both expert and non-expert users seeking to understand the internal workings of complex machine learning models. To the best of our knowledge, this is the first work to derive explanations using ToM. Extensive human-study experiments verify our hypotheses, showing that the proposed explanations significantly outperform state-of-the-art XAI methods on all standard quantitative and qualitative XAI evaluation metrics, including human trust, reliance, and explanation satisfaction.