Peiyu Li

TeachingCoach: A Fine-Tuned Scaffolding Chatbot for Instructional Guidance to Instructors

Mar 18, 2026Abstract:Higher education instructors often lack timely and pedagogically grounded support, as scalable instructional guidance remains limited and existing tools rely on generic chatbot advice or non-scalable teaching center human-human consultations. We present TeachingCoach, a pedagogically grounded chatbot designed to support instructor professional development through real-time, conversational guidance. TeachingCoach is built on a data-centric pipeline that extracts pedagogical rules from educational resources and uses synthetic dialogue generation to fine-tune a specialized language model that guides instructors through problem identification, diagnosis, and strategy development. Expert evaluations show TeachingCoach produces clearer, more reflective, and more responsive guidance than a GPT-4o mini baseline, while a user study with higher education instructors highlights trade-offs between conversational depth and interaction efficiency. Together, these results demonstrate that pedagogically grounded, synthetic data driven chatbots can improve instructional support and offer a scalable design approach for future instructional chatbot systems.

CrochetBench: Can Vision-Language Models Move from Describing to Doing in Crochet Domain?

Nov 12, 2025

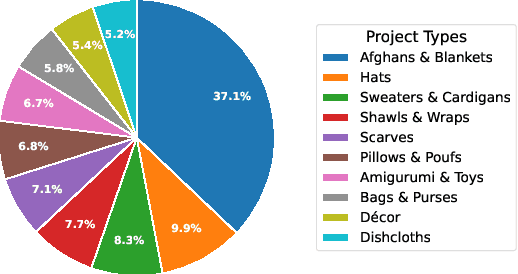

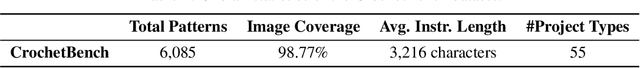

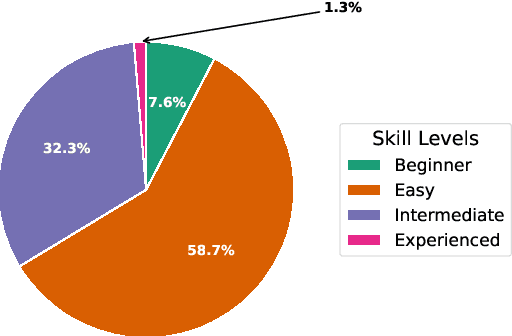

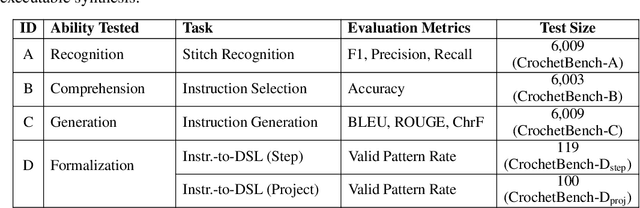

Abstract:We present CrochetBench, a benchmark for evaluating the ability of multimodal large language models to perform fine-grained, low-level procedural reasoning in the domain of crochet. Unlike prior benchmarks that focus on high-level description or visual question answering, CrochetBench shifts the emphasis from describing to doing: models are required to recognize stitches, select structurally appropriate instructions, and generate compilable crochet procedures. We adopt the CrochetPARADE DSL as our intermediate representation, enabling structural validation and functional evaluation via execution. The benchmark covers tasks including stitch classification, instruction grounding, and both natural language and image-to-DSL translation. Across all tasks, performance sharply declines as the evaluation shifts from surface-level similarity to executable correctness, exposing limitations in long-range symbolic reasoning and 3D-aware procedural synthesis. CrochetBench offers a new lens for assessing procedural competence in multimodal models and highlights the gap between surface-level understanding and executable precision in real-world creative domains. Code is available at https://github.com/Peiyu-Georgia-Li/crochetBench.

Enabling Inclusive Systematic Reviews: Incorporating Preprint Articles with Large Language Model-Driven Evaluations

Mar 19, 2025Abstract:Background. Systematic reviews in comparative effectiveness research require timely evidence synthesis. Preprints accelerate knowledge dissemination but vary in quality, posing challenges for systematic reviews. Methods. We propose AutoConfidence (automated confidence assessment), an advanced framework for predicting preprint publication, which reduces reliance on manual curation and expands the range of predictors, including three key advancements: (1) automated data extraction using natural language processing techniques, (2) semantic embeddings of titles and abstracts, and (3) large language model (LLM)-driven evaluation scores. Additionally, we employed two prediction models: a random forest classifier for binary outcome and a survival cure model that predicts both binary outcome and publication risk over time. Results. The random forest classifier achieved AUROC 0.692 with LLM-driven scores, improving to 0.733 with semantic embeddings and 0.747 with article usage metrics. The survival cure model reached AUROC 0.716 with LLM-driven scores, improving to 0.731 with semantic embeddings. For publication risk prediction, it achieved a concordance index of 0.658, increasing to 0.667 with semantic embeddings. Conclusion. Our study advances the framework for preprint publication prediction through automated data extraction and multiple feature integration. By combining semantic embeddings with LLM-driven evaluations, AutoConfidence enhances predictive performance while reducing manual annotation burden. The framework has the potential to facilitate systematic incorporation of preprint articles in evidence-based medicine, supporting researchers in more effective evaluation and utilization of preprint resources.

M-CELS: Counterfactual Explanation for Multivariate Time Series Data Guided by Learned Saliency Maps

Nov 04, 2024Abstract:Over the past decade, multivariate time series classification has received great attention. Machine learning (ML) models for multivariate time series classification have made significant strides and achieved impressive success in a wide range of applications and tasks. The challenge of many state-of-the-art ML models is a lack of transparency and interpretability. In this work, we introduce M-CELS, a counterfactual explanation model designed to enhance interpretability in multidimensional time series classification tasks. Our experimental validation involves comparing M-CELS with leading state-of-the-art baselines, utilizing seven real-world time-series datasets from the UEA repository. The results demonstrate the superior performance of M-CELS in terms of validity, proximity, and sparsity, reinforcing its effectiveness in providing transparent insights into the decisions of machine learning models applied to multivariate time series data.

Info-CELS: Informative Saliency Map Guided Counterfactual Explanation

Oct 27, 2024

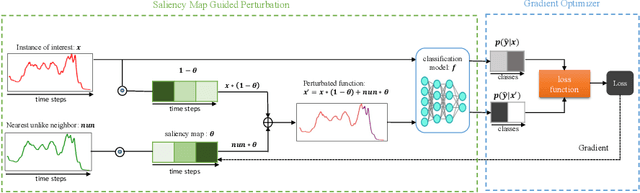

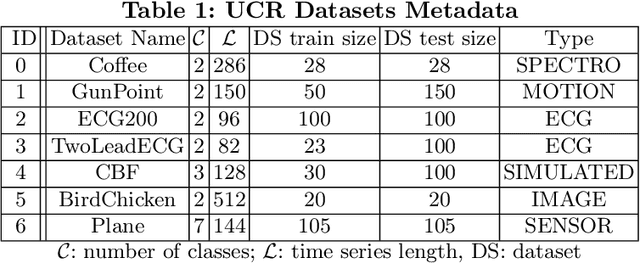

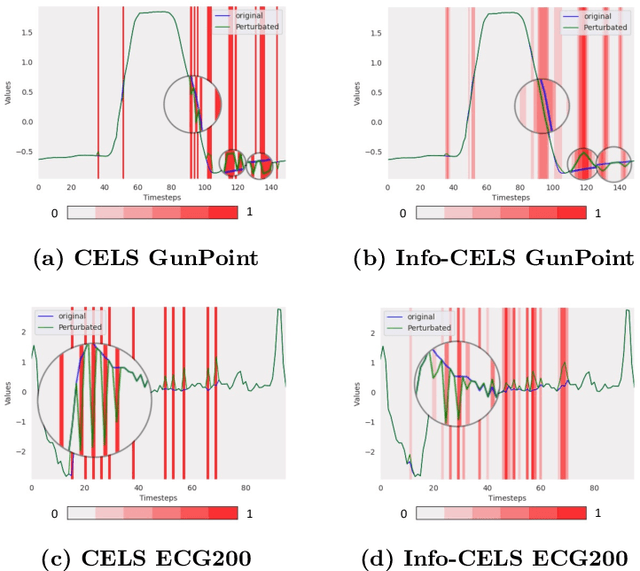

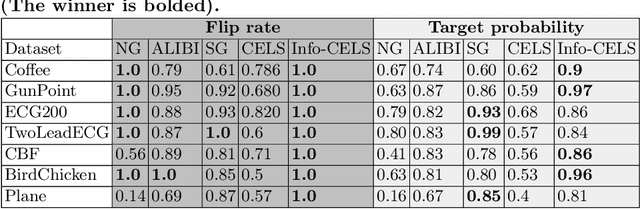

Abstract:As the demand for interpretable machine learning approaches continues to grow, there is an increasing necessity for human involvement in providing informative explanations for model decisions. This is necessary for building trust and transparency in AI-based systems, leading to the emergence of the Explainable Artificial Intelligence (XAI) field. Recently, a novel counterfactual explanation model, CELS, has been introduced. CELS learns a saliency map for the interest of an instance and generates a counterfactual explanation guided by the learned saliency map. While CELS represents the first attempt to exploit learned saliency maps not only to provide intuitive explanations for the reason behind the decision made by the time series classifier but also to explore post hoc counterfactual explanations, it exhibits limitations in terms of high validity for the sake of ensuring high proximity and sparsity. In this paper, we present an enhanced approach that builds upon CELS. While the original model achieved promising results in terms of sparsity and proximity, it faced limitations in validity. Our proposed method addresses this limitation by removing mask normalization to provide more informative and valid counterfactual explanations. Through extensive experimentation on datasets from various domains, we demonstrate that our approach outperforms the CELS model, achieving higher validity and producing more informative explanations.

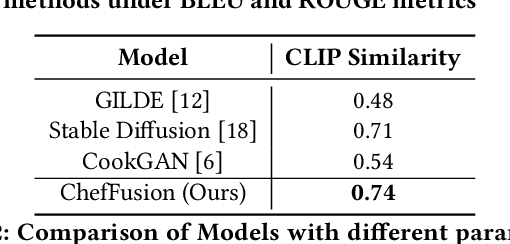

ChefFusion: Multimodal Foundation Model Integrating Recipe and Food Image Generation

Sep 18, 2024

Abstract:Significant work has been conducted in the domain of food computing, yet these studies typically focus on single tasks such as t2t (instruction generation from food titles and ingredients), i2t (recipe generation from food images), or t2i (food image generation from recipes). None of these approaches integrate all modalities simultaneously. To address this gap, we introduce a novel food computing foundation model that achieves true multimodality, encompassing tasks such as t2t, t2i, i2t, it2t, and t2ti. By leveraging large language models (LLMs) and pre-trained image encoder and decoder models, our model can perform a diverse array of food computing-related tasks, including food understanding, food recognition, recipe generation, and food image generation. Compared to previous models, our foundation model demonstrates a significantly broader range of capabilities and exhibits superior performance, particularly in food image generation and recipe generation tasks. We open-sourced ChefFusion at GitHub.

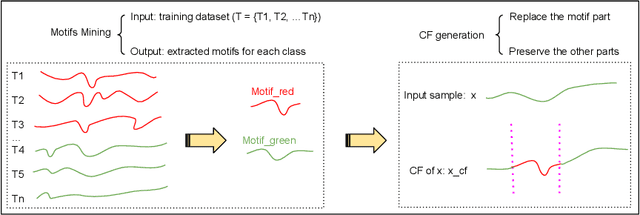

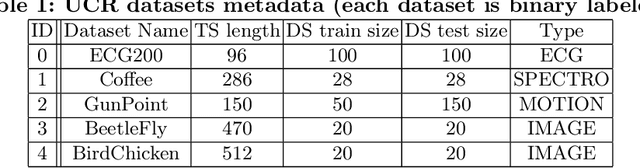

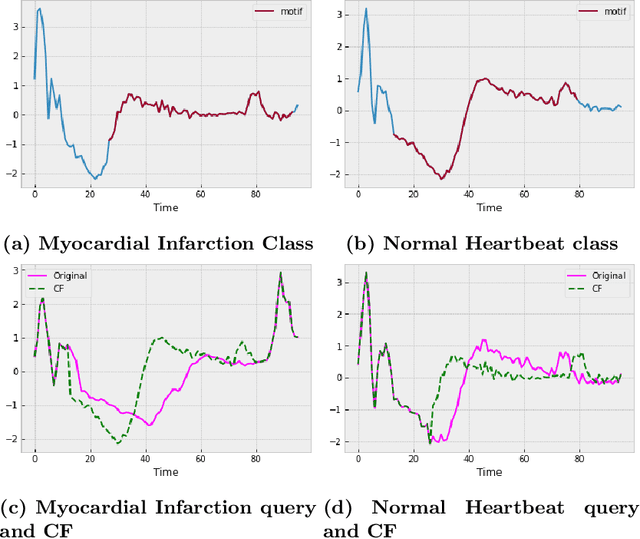

Motif-guided Time Series Counterfactual Explanations

Nov 11, 2022

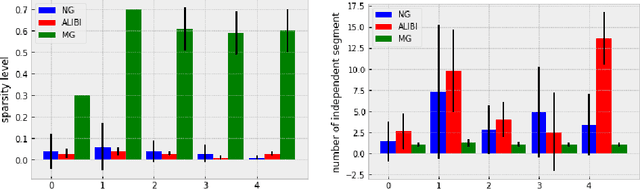

Abstract:With the rising need of interpretable machine learning methods, there is a necessity for a rise in human effort to provide diverse explanations of the influencing factors of the model decisions. To improve the trust and transparency of AI-based systems, the EXplainable Artificial Intelligence (XAI) field has emerged. The XAI paradigm is bifurcated into two main categories: feature attribution and counterfactual explanation methods. While feature attribution methods are based on explaining the reason behind a model decision, counterfactual explanation methods discover the smallest input changes that will result in a different decision. In this paper, we aim at building trust and transparency in time series models by using motifs to generate counterfactual explanations. We propose Motif-Guided Counterfactual Explanation (MG-CF), a novel model that generates intuitive post-hoc counterfactual explanations that make full use of important motifs to provide interpretive information in decision-making processes. To the best of our knowledge, this is the first effort that leverages motifs to guide the counterfactual explanation generation. We validated our model using five real-world time-series datasets from the UCR repository. Our experimental results show the superiority of MG-CF in balancing all the desirable counterfactual explanations properties in comparison with other competing state-of-the-art baselines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge