P. Bilha Githinji

Mapping the maturation of TCM as an adjuvant to radiotherapy

Jan 17, 2026

Abstract: The integration of complementary medicine into oncology represents a paradigm shift that has led to increasing adoption of Traditional Chinese Medicine (TCM) as an adjuvant to radiotherapy. About twenty-five years after the formal institutionalization of integrated oncology, it is opportune to synthesize the trajectory of evidence for TCM as an adjuvant to radiotherapy. Here we conduct a large-scale analysis of 69,745 publications (2000-2025), revealing a cyclical evolution defined by coordinated expansion and contraction in publication output, international collaboration, and funding commitments that mirrors a define-ideate-test pattern. Using a theme modeling workflow designed to determine a stable thematic structure of the field, we identify five dominant thematic axes (cancer types, supportive care, clinical endpoints, mechanisms, and methodology) that signal a focus on patient well-being, scientific rigor, and mechanistic exploration. Cross-theme integration of TCM is patient-centered and systems-oriented. Together with the emergent cycles of evolution, the thematic structure demonstrates progressive specialization and potential defragmentation of the field or saturation of the existing research agenda. The analysis points to a field that has matured its current research agenda and is likely at the cusp of something new. Additionally, the field exhibits positive reporting of findings that is homogeneous across publication types, thematic areas, and the cycles of evolution, suggesting a system-wide positive reporting bias agnostic to structural drivers.

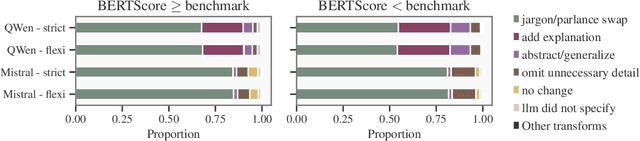

On Text Simplification Metrics and General-Purpose LLMs for Accessible Health Information, and A Potential Architectural Advantage of The Instruction-Tuned LLM class

Nov 07, 2025

Abstract: The increasing health-seeking behavior and digital consumption of biomedical information by the general public necessitate scalable solutions for automatically adapting complex scientific and technical documents into plain language. Automatic text simplification solutions, including advanced large language models, however, continue to face challenges in reliably arbitrating the tension between optimizing readability and preserving discourse fidelity. This report empirically assesses the performance of two major classes of general-purpose LLMs, demonstrating their linguistic capabilities and foundational readiness for the task compared to a human benchmark. Using a comparative analysis of the instruction-tuned Mistral 24B and the reasoning-augmented QWen2.5 32B, we identify a potential architectural advantage in the instruction-tuned LLM. Mistral exhibits a tempered lexical simplification strategy that enhances readability across a suite of metrics and the simplification-specific formula SARI (mean 42.46), while preserving human-level discourse with a BERTScore of 0.91. QWen also attains enhanced readability performance, but its operational strategy shows a disconnect in balancing readability and accuracy, reaching a statistically significantly lower BERTScore of 0.89. Additionally, a comprehensive correlation analysis of 21 metrics spanning readability, discourse fidelity, content safety, and underlying distributional measures for mechanistic insights confirms strong functional redundancies among five readability indices. This empirical evidence tracks baseline performance of the evolving LLMs for the task of text simplification, identifies the instruction-tuned Mistral 24B as the stronger candidate for simplification, provides necessary heuristics for metric selection, and points to lexical support as a primary domain-adaptation issue for simplification.
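To illustrate why readability indices can be functionally redundant, the sketch below computes two standard formulas, Flesch Reading Ease and Flesch-Kincaid Grade Level, and their Pearson correlation over a few sentences. The abstract does not name its five indices, so this pair is an assumption chosen for illustration; the syllable counter is a crude vowel-run heuristic and the sample texts are invented.

```python
import re
from math import sqrt

def count_syllables(word):
    # Crude heuristic (assumption): one syllable per run of vowels, minimum one.
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))

def text_stats(text):
    # Returns (words per sentence, syllables per word).
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = sum(count_syllables(w) for w in words)
    return len(words) / len(sentences), syllables / len(words)

def flesch_reading_ease(text):
    wps, spw = text_stats(text)
    return 206.835 - 1.015 * wps - 84.6 * spw

def flesch_kincaid_grade(text):
    wps, spw = text_stats(text)
    return 0.39 * wps + 11.8 * spw - 15.59

def pearson(xs, ys):
    # Plain Pearson correlation coefficient.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Invented sample texts, ordered roughly from simple to complex.
samples = [
    "The cat sat. The dog ran.",
    "Take one pill each day with water.",
    "Patients should consult their physician before changing medication.",
    "Adjuvant therapy requires comprehensive multidisciplinary evaluation.",
]

fre = [flesch_reading_ease(t) for t in samples]
fkgl = [flesch_kincaid_grade(t) for t in samples]
r = pearson(fre, fkgl)
print(f"Pearson r between the two indices: {r:.3f}")
```

Both formulas are linear in the same two quantities (words per sentence and syllables per word), so a strong negative correlation is expected; this is the kind of redundancy a metric-selection heuristic would try to avoid.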

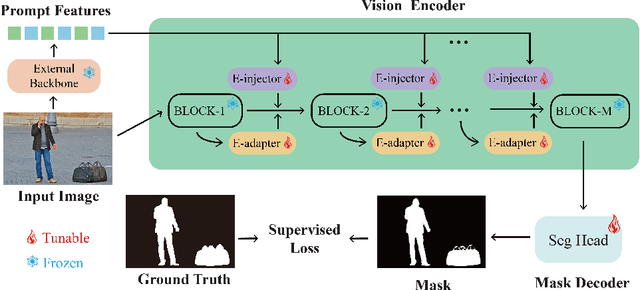

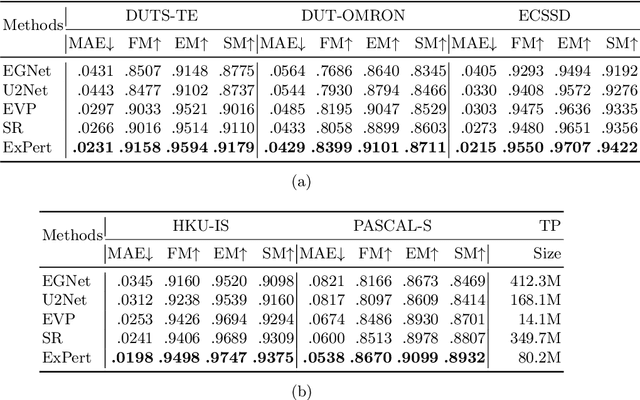

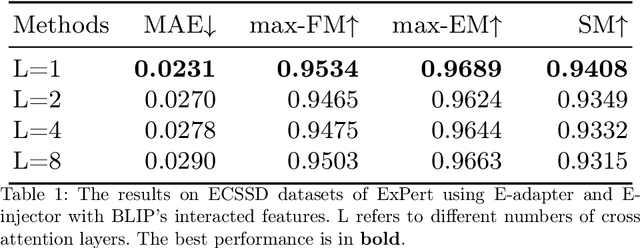

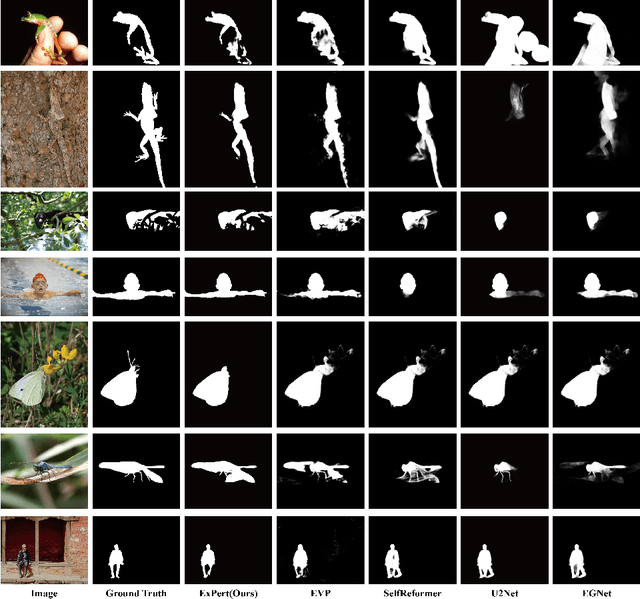

External Prompt Features Enhanced Parameter-efficient Fine-tuning for Salient Object Detection

Apr 23, 2024

Abstract: Salient object detection (SOD) aims to find the most salient objects in images and output pixel-level binary masks. Transformer-based methods achieve promising performance due to their global semantic understanding, which is crucial for identifying salient objects. However, these models tend to be large and require numerous trainable parameters. To better harness the potential of transformers for SOD, we propose a novel parameter-efficient fine-tuning method aimed at reducing the number of trainable parameters while enhancing the salient object detection capability. Our model, termed EXternal Prompt features Enhanced adapteR Tuning (ExPert), features an encoder-decoder structure with adapters and injectors interspersed between the layers of a frozen transformer encoder. The adapter modules adapt the pre-trained backbone to SOD, while the injector modules incorporate external prompt features to enhance the awareness of salient objects. Comprehensive experiments demonstrate the superiority of our method. Surpassing former state-of-the-art (SOTA) models across five SOD datasets, ExPert achieves 0.215 mean absolute error (MAE) on the ECSSD dataset with 80.2M trained parameters, 21% better than the transformer-based SOTA model and 47% better than the CNN-based SOTA model.
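For readers unfamiliar with the MAE figure quoted above, here is a minimal sketch of how mean absolute error is commonly computed for saliency maps: the per-pixel absolute difference between a predicted map and a binary ground-truth mask, averaged over all pixels. The function name and the toy 2x2 inputs are illustrative assumptions, not the authors' evaluation code.

```python
def mean_absolute_error(pred, gt):
    """MAE between a predicted saliency map and a ground-truth mask.

    Both arguments are 2-D lists of equal shape with values in [0, 1].
    """
    if len(pred) != len(gt) or any(len(p) != len(g) for p, g in zip(pred, gt)):
        raise ValueError("prediction and ground truth must have the same shape")
    total = sum(
        abs(p - g)
        for pred_row, gt_row in zip(pred, gt)
        for p, g in zip(pred_row, gt_row)
    )
    n_pixels = sum(len(row) for row in gt)
    return total / n_pixels

# Toy example (invented values): a 2x2 prediction against a binary mask.
pred = [[0.9, 0.1],
        [0.8, 0.2]]
gt = [[1, 0],
      [1, 0]]

mae = mean_absolute_error(pred, gt)
print(f"MAE = {mae:.2f}")
```

Lower is better, so a percentage improvement in MAE is usually read as a relative reduction in this value.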

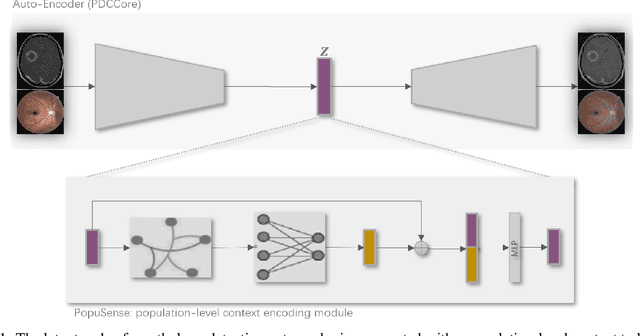

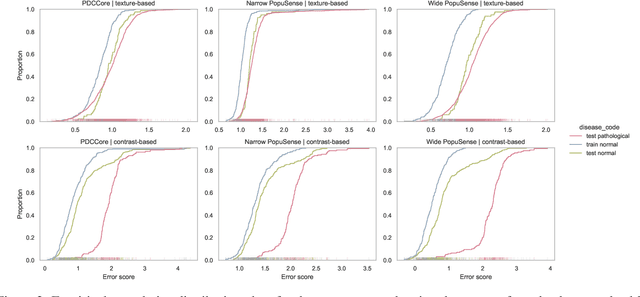

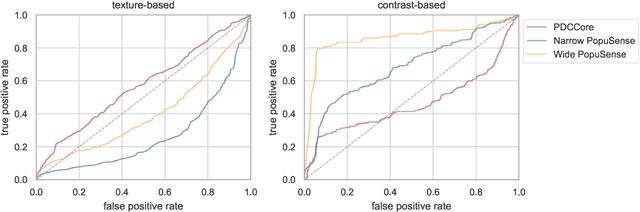

Harnessing Intra-group Variations Via a Population-Level Context for Pathology Detection

Mar 04, 2024

Abstract: Realizing sufficient separability between the distributions of healthy and pathological samples is a critical obstacle for convolutional models used in pathology detection. Moreover, these models exhibit a bias for contrast-based images, with diminished performance on texture-based medical images. This study introduces the notion of a population-level context for pathology detection and employs a graph-theoretic approach to model and incorporate it into the latent code of an autoencoder via a refinement module we term PopuSense. PopuSense seeks to capture additional intra-group variations inherent in biomedical data that a local or global context of the convolutional model might miss or smooth out. Experiments on contrast-based and texture-based images, with minimal adaptation, encounter the existing preference for intensity-based input. Nevertheless, PopuSense demonstrates improved separability in contrast-based images, presenting an additional avenue for refining representations learned by a model.

IRFundusSet: An Integrated Retinal Fundus Dataset with a Harmonized Healthy Label

Feb 27, 2024

Abstract: Ocular conditions are a global concern, and computational tools utilizing retinal fundus color photographs can aid in routine screening and management. Obtaining comprehensive and sufficiently sized datasets, however, is non-trivial for the intricate retinal fundus, which exhibits heterogeneities within pathologies, in addition to variations from demographics and acquisition. Moreover, retinal fundus datasets in the public space suffer from fragmentation in the organization of data and the definition of a healthy observation. We present Integrated Retinal Fundus Set (IRFundusSet), a dataset that consolidates, harmonizes, and curates several public datasets, facilitating their consumption as a unified whole and with a consistent is_normal label. IRFundusSet comprises a Python package that automates harmonization and provides a dataset object in line with the PyTorch approach. Moreover, images are physically reviewed and a new is_normal label is annotated for a consistent definition of a healthy observation. Ten public datasets are initially considered with a total of 46,064 images, of which 25,406 are curated for a new is_normal label and 3,515 are deemed healthy across the sources.
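As a rough picture of what a harmonized dataset object "in line with the PyTorch approach" might look like, the sketch below implements the `__len__`/`__getitem__` protocol that PyTorch map-style datasets follow, exposing a single `is_normal` label across sources. Everything here is hypothetical: the class name, source names, file paths, and per-source rules are invented, and the rule table is only a stand-in, since the abstract states that the real labels come from manual review. This is not the IRFundusSet package API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Record:
    path: str        # image location within the consolidated dataset
    source: str      # originating public dataset
    is_normal: bool  # harmonized healthy label

# Hypothetical per-source conventions for marking a healthy image.
NORMAL_RULES = {
    "source_a": lambda raw: raw == "normal",  # text labels
    "source_b": lambda raw: raw == 0,         # integer grades, 0 = healthy
}

class HarmonizedFundusDataset:
    """Map-style dataset sketch: supports len(ds) and ds[i]."""

    def __init__(self, raw_rows):
        self.records = [
            Record(path, source, NORMAL_RULES[source](raw_label))
            for path, source, raw_label in raw_rows
        ]

    def __len__(self):
        return len(self.records)

    def __getitem__(self, idx):
        rec = self.records[idx]
        # A real implementation would load and return the image tensor here.
        return rec.path, int(rec.is_normal)

# Invented rows standing in for two public sources with different label schemes.
rows = [
    ("a/001.png", "source_a", "normal"),
    ("a/002.png", "source_a", "glaucoma"),
    ("b/001.jpg", "source_b", 0),
    ("b/002.jpg", "source_b", 3),
]

ds = HarmonizedFundusDataset(rows)
healthy = sum(ds[i][1] for i in range(len(ds)))
print(f"{healthy} of {len(ds)} images labelled healthy")
```

Keeping the source name on each record lets downstream code stratify or filter by originating dataset while still training against one consistent label.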
