Nick Kolkin

Group Diffusion: Enhancing Image Generation by Unlocking Cross-Sample Collaboration

Dec 11, 2025

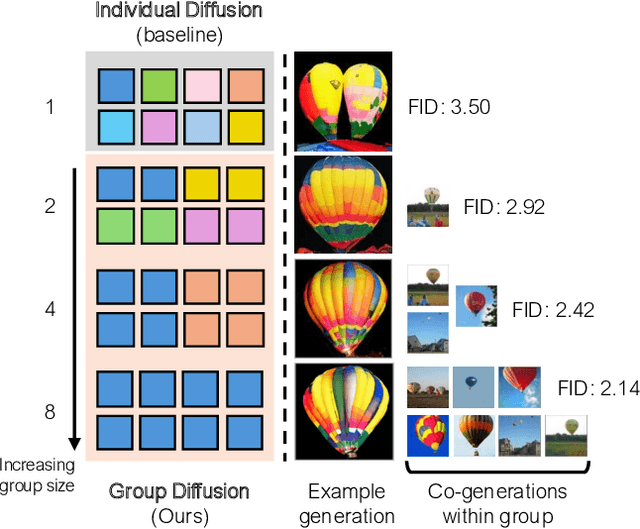

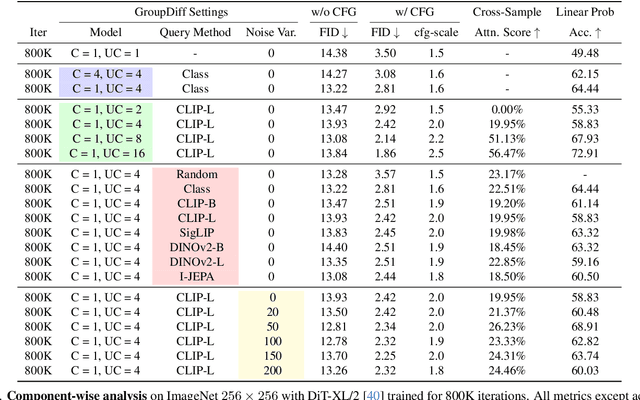

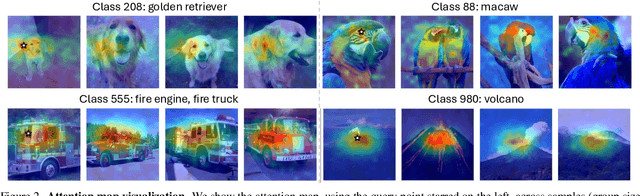

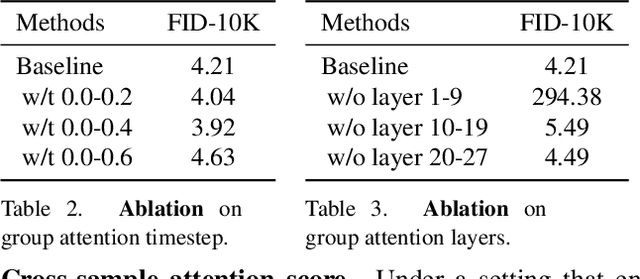

Abstract:In this work, we explore an untapped signal in diffusion model inference. While all previous methods generate images independently at inference, we instead ask if samples can be generated collaboratively. We propose Group Diffusion, unlocking the attention mechanism to be shared across images, rather than limited to just the patches within an image. This enables images to be jointly denoised at inference time, learning both intra and inter-image correspondence. We observe a clear scaling effect - larger group sizes yield stronger cross-sample attention and better generation quality. Furthermore, we introduce a qualitative measure to capture this behavior and show that its strength closely correlates with FID. Built on standard diffusion transformers, our GroupDiff achieves up to 32.2% FID improvement on ImageNet-256x256. Our work reveals cross-sample inference as an effective, previously unexplored mechanism for generative modeling.

SliderSpace: Decomposing the Visual Capabilities of Diffusion Models

Feb 03, 2025Abstract:We present SliderSpace, a framework for automatically decomposing the visual capabilities of diffusion models into controllable and human-understandable directions. Unlike existing control methods that require a user to specify attributes for each edit direction individually, SliderSpace discovers multiple interpretable and diverse directions simultaneously from a single text prompt. Each direction is trained as a low-rank adaptor, enabling compositional control and the discovery of surprising possibilities in the model's latent space. Through extensive experiments on state-of-the-art diffusion models, we demonstrate SliderSpace's effectiveness across three applications: concept decomposition, artistic style exploration, and diversity enhancement. Our quantitative evaluation shows that SliderSpace-discovered directions decompose the visual structure of model's knowledge effectively, offering insights into the latent capabilities encoded within diffusion models. User studies further validate that our method produces more diverse and useful variations compared to baselines. Our code, data and trained weights are available at https://sliderspace.baulab.info

ARF: Artistic Radiance Fields

Jun 13, 2022Abstract:We present a method for transferring the artistic features of an arbitrary style image to a 3D scene. Previous methods that perform 3D stylization on point clouds or meshes are sensitive to geometric reconstruction errors for complex real-world scenes. Instead, we propose to stylize the more robust radiance field representation. We find that the commonly used Gram matrix-based loss tends to produce blurry results without faithful brushstrokes, and introduce a nearest neighbor-based loss that is highly effective at capturing style details while maintaining multi-view consistency. We also propose a novel deferred back-propagation method to enable optimization of memory-intensive radiance fields using style losses defined on full-resolution rendered images. Our extensive evaluation demonstrates that our method outperforms baselines by generating artistic appearance that more closely resembles the style image. Please check our project page for video results and open-source implementations: https://www.cs.cornell.edu/projects/arf/ .

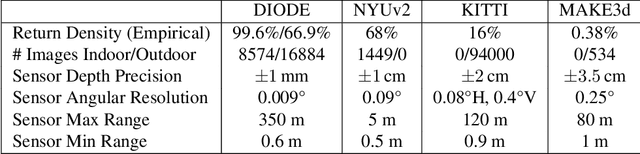

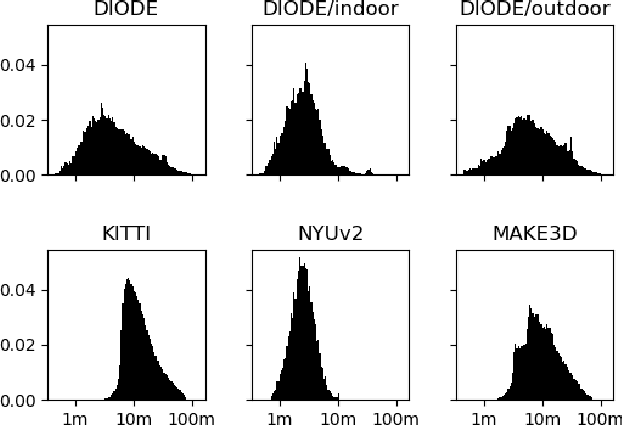

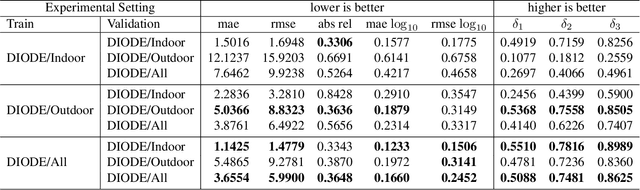

DIODE: A Dense Indoor and Outdoor DEpth Dataset

Aug 29, 2019

Abstract:We introduce DIODE, a dataset that contains thousands of diverse high resolution color images with accurate, dense, long-range depth measurements. DIODE (Dense Indoor/Outdoor DEpth) is the first public dataset to include RGBD images of indoor and outdoor scenes obtained with one sensor suite. This is in contrast to existing datasets that focus on just one domain/scene type and employ different sensors, making generalization across domains difficult. The dataset is available for download at http://diode-dataset.org

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge