Mohammed Brahimi

Combining GCN Structural Learning with LLM Chemical Knowledge for Enhanced Virtual Screening

Apr 24, 2025

Abstract:Virtual screening plays a critical role in modern drug discovery by enabling the identification of promising candidate molecules for experimental validation. Traditional machine learning methods such as support vector machines (SVM) and XGBoost rely on predefined molecular representations, often leading to information loss and potential bias. In contrast, deep learning approaches, particularly Graph Convolutional Networks (GCNs), offer a more expressive and unbiased alternative by operating directly on molecular graphs. Meanwhile, Large Language Models (LLMs) have recently demonstrated state-of-the-art performance in drug design, thanks to their capacity to capture complex chemical patterns from large-scale data via attention mechanisms. In this paper, we propose a hybrid architecture that integrates GCNs with LLM-derived embeddings to combine localized structural learning with global chemical knowledge. The LLM embeddings can be precomputed and stored in a molecular feature library, removing the need to rerun the LLM during training or inference and thus maintaining computational efficiency. We found that concatenating the LLM embeddings after each GCN layer, rather than only at the final layer, significantly improves performance, enabling deeper integration of global context throughout the network. The resulting model achieves an F1-score of 88.8%, outperforming standalone GCN (87.9%), XGBoost (85.5%), and SVM (85.4%) baselines.
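The per-layer concatenation described in this abstract can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the abstract does not say how the embedding is attached, so broadcasting one molecule-level LLM embedding to every atom is an assumption, as are the ReLU activation, the mean pooling, the random weights, and the names `normalize_adjacency` and `gcn_with_llm_context`. The embedding is passed in as a precomputed vector, mirroring the idea of looking it up in a feature library rather than rerunning the LLM.

```python
import numpy as np


def normalize_adjacency(adj: np.ndarray) -> np.ndarray:
    """Return D^-1/2 (A + I) D^-1/2, the usual GCN propagation matrix."""
    a_hat = adj + np.eye(adj.shape[0])  # add self-loops, so no degree is zero
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def gcn_with_llm_context(adj, atom_feats, llm_emb, weights):
    """Run GCN layers, appending the LLM embedding to every node after each layer.

    Assumption: the molecule-level embedding is broadcast to all atoms.
    """
    a_norm = normalize_adjacency(adj)
    context = np.tile(llm_emb, (adj.shape[0], 1))  # one copy per atom
    h = atom_feats
    for w in weights:
        h = np.maximum(a_norm @ h @ w, 0.0)       # graph convolution + ReLU
        h = np.concatenate([h, context], axis=1)  # inject global context
    return h.mean(axis=0)                         # mean-pool atoms to one vector


# Toy molecule: 3 atoms in a chain, 4 atom features, 5-dim precomputed embedding.
rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
atom_feats = rng.normal(size=(3, 4))
llm_emb = rng.normal(size=5)  # stands in for a lookup in a feature library
hidden = 8
weights = [
    rng.normal(size=(4, hidden)),           # layer 1: raw atom features in
    rng.normal(size=(hidden + 5, hidden)),  # layer 2: hidden + LLM dims in
]
pooled = gcn_with_llm_context(adj, atom_feats, llm_emb, weights)
print(pooled.shape)
```

Because the context is re-appended after every layer, each layer after the first takes `hidden + llm_dim` input columns, which is the main shape consequence of this design compared with concatenating only at the final layer.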

Better Together: Unified Motion Capture and 3D Avatar Reconstruction

Mar 12, 2025

Abstract:We present Better Together, a method that simultaneously solves the human pose estimation problem while reconstructing a photorealistic 3D human avatar from multi-view videos. While prior art usually solves these problems separately, we argue that joint optimization of skeletal motion with a 3D renderable body model brings synergistic effects, i.e. yields more precise motion capture and improved visual quality of real-time rendering of avatars. To achieve this, we introduce a novel animatable avatar with 3D Gaussians rigged on a personalized mesh and propose to optimize the motion sequence with time-dependent MLPs that provide accurate and temporally consistent pose estimates. We first evaluate our method on highly challenging yoga poses and demonstrate state-of-the-art accuracy on multi-view human pose estimation, reducing error by 35% on body joints and 45% on hand joints compared to keypoint-based methods. At the same time, our method significantly boosts the visual quality of animatable avatars (+2dB PSNR on novel view synthesis) on diverse challenging subjects.

Sparse Views, Near Light: A Practical Paradigm for Uncalibrated Point-light Photometric Stereo

Mar 29, 2024

Abstract:Neural approaches have shown significant progress on camera-based reconstruction. But they require either a fairly dense sampling of the viewing sphere, or pre-training on an existing dataset, thereby limiting their generalizability. In contrast, photometric stereo (PS) approaches have shown great potential for achieving high-quality reconstruction under sparse viewpoints. Yet, they are impractical because they typically require tedious laboratory conditions, are restricted to dark rooms, and are often multi-staged, making them subject to accumulated errors. To address these shortcomings, we propose an end-to-end uncalibrated multi-view PS framework for reconstructing high-resolution shapes acquired from sparse viewpoints in a real-world environment. We relax the dark room assumption, and allow a combination of static ambient lighting and dynamic near LED lighting, thereby enabling easy data capture outside the lab. Experimental validation confirms that it outperforms existing baseline approaches in the regime of sparse viewpoints by a large margin. This brings high-accuracy 3D reconstruction from the dark room to the real world, while maintaining a reasonable data capture complexity.

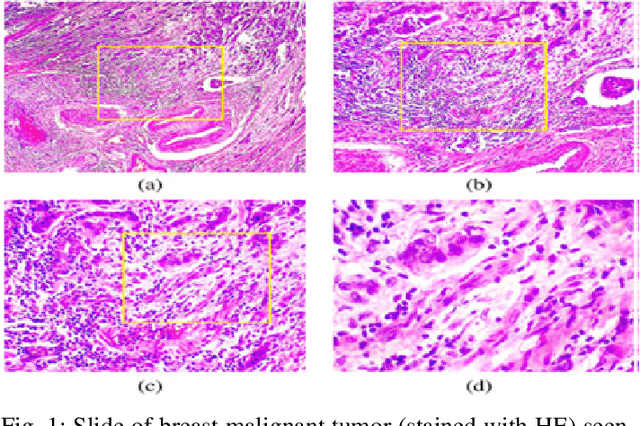

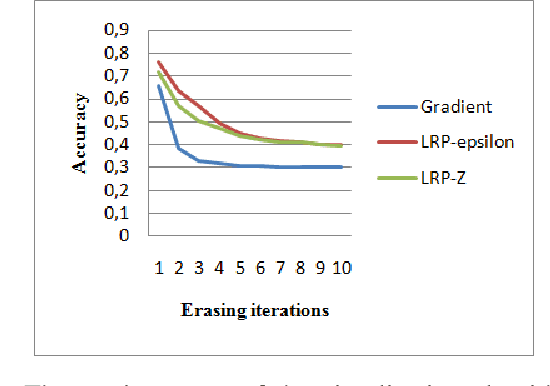

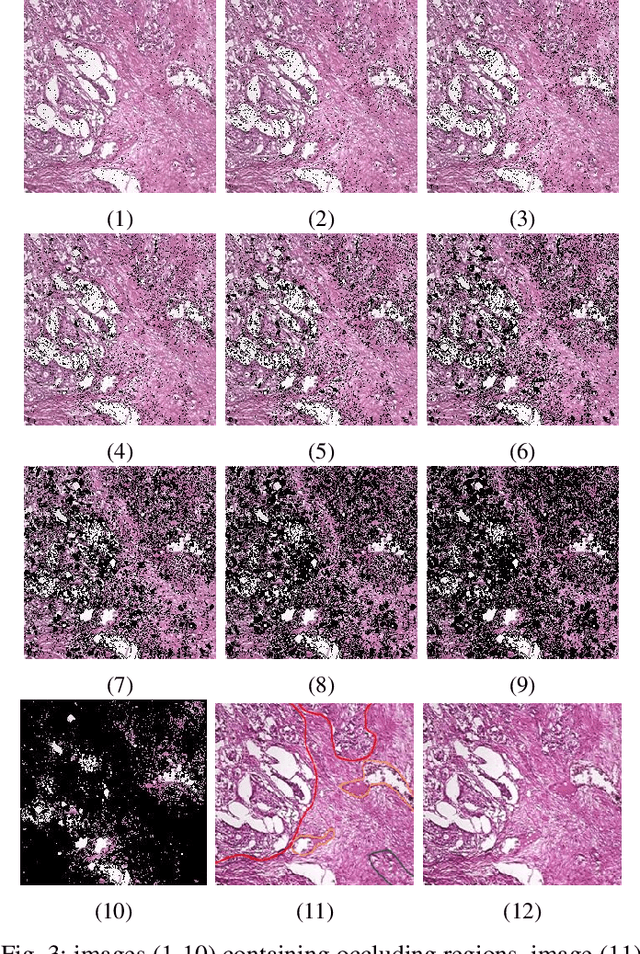

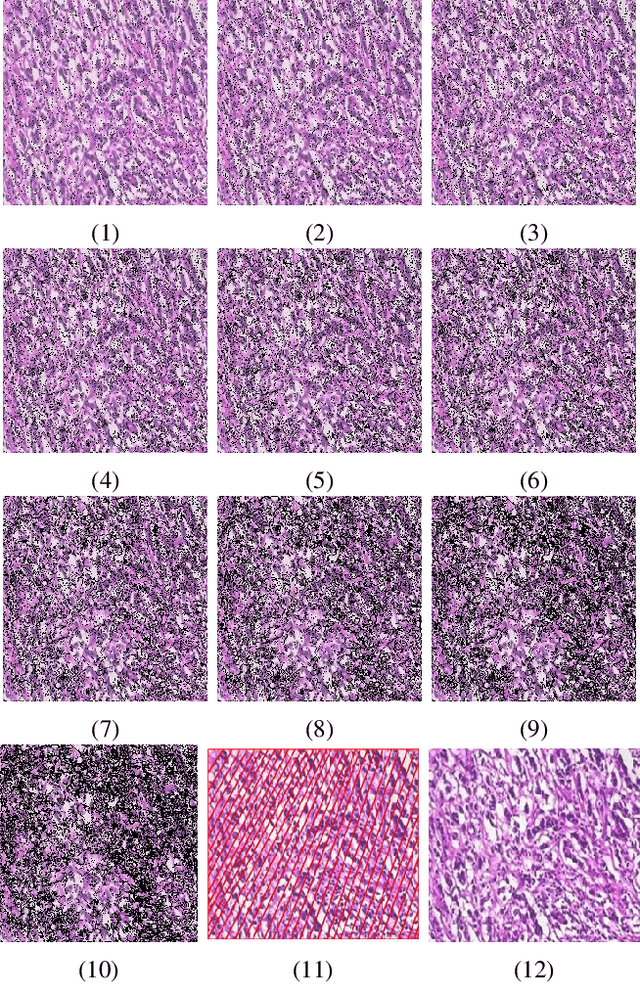

Exploring Regions of Interest: Visualizing Histological Image Classification for Breast Cancer using Deep Learning

May 31, 2023

Abstract:Computer-aided detection and diagnosis systems based on deep learning have shown promising performance in breast cancer detection. However, there are cases where the obtained results lack justification. In this study, our objective is to highlight the regions of interest used by a convolutional neural network (CNN) for classifying histological images as benign or malignant. We compare these regions with the regions identified by pathologists. To achieve this, we employed the VGG19 architecture and tested three visualization methods: Gradient, LRP Z, and LRP Epsilon. Additionally, we experimented with three pixel selection methods: Bins, K-means, and MeanShift. Based on the results obtained, the Gradient visualization method and the MeanShift selection method yielded satisfactory outcomes for visualizing the images.

SupeRVol: Super-Resolution Shape and Reflectance Estimation in Inverse Volume Rendering

Dec 09, 2022

Abstract:We propose an end-to-end inverse rendering pipeline called SupeRVol that allows us to recover 3D shape and material parameters from a set of color images in a super-resolution manner. To this end, we represent both the bidirectional reflectance distribution function (BRDF) and the signed distance function (SDF) by multi-layer perceptrons. In order to obtain both the surface shape and its reflectance properties, we resort to a differentiable volume renderer with a physically based illumination model that allows us to decouple reflectance and lighting. This physical model takes into account the effect of the camera's point spread function, thereby enabling a reconstruction of shape and material in super-resolution quality. Experimental validation confirms that SupeRVol achieves state-of-the-art performance in terms of inverse rendering quality. It generates reconstructions that are sharper than the individual input images, making this method ideally suited for 3D modeling from low-resolution imagery.

On the well-posedness of uncalibrated photometric stereo under general lighting

Nov 17, 2019

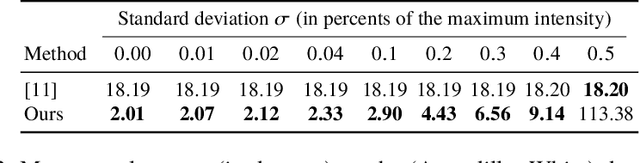

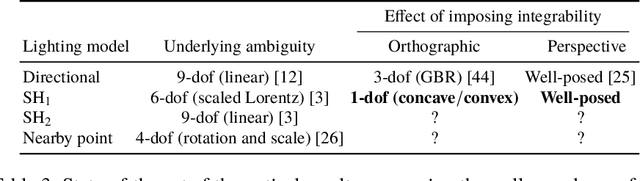

Abstract:Uncalibrated photometric stereo aims at estimating the 3D shape of a surface, given a set of images captured from the same viewing angle, but under unknown, varying illumination. While the theoretical foundations of this inverse problem under directional lighting are well-established, there is a lack of mathematical evidence for the uniqueness of a solution under general lighting. On the other hand, stable and accurate heuristic solutions of uncalibrated photometric stereo under such general lighting have recently been proposed. The quality of the results demonstrated therein tends to indicate that the problem may actually be well-posed, but this still has to be established. The present paper addresses this theoretical issue, considering a first-order spherical harmonics approximation of general lighting. Two important theoretical results are established. First, the orthographic integrability constraint ensures uniqueness of a solution up to a global concave-convex ambiguity, which had already been conjectured, yet not proven. Second, the perspective integrability constraint makes the problem well-posed, which generalizes a previous result limited to directional lighting. Finally, a closed-form expression for the unique least-squares solution of the problem under perspective projection is provided, allowing numerical simulations on synthetic data to empirically validate our findings.

Deep interpretable architecture for plant diseases classification

Jun 13, 2019

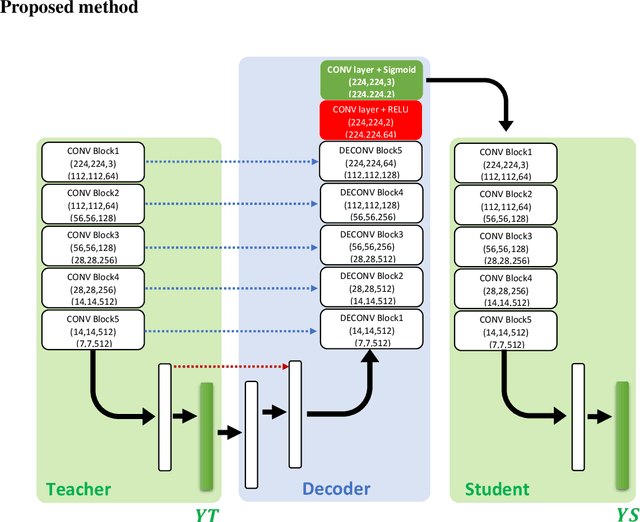

Abstract:Recently, many works have been inspired by the success of deep learning in computer vision for plant disease classification. Unfortunately, these end-to-end deep classifiers lack transparency, which can limit their adoption in practice. In this paper, we propose a new trainable visualization method for plant disease classification based on a Convolutional Neural Network (CNN) architecture composed of two deep classifiers. The first one is named Teacher and the second one Student. This architecture leverages multitask learning to train the Teacher and the Student jointly. Then, the representation communicated between the Teacher and the Student is used as a proxy to visualize the most important image regions for classification. This new architecture produces sharper visualizations than the existing methods in the plant disease context. All experiments are conducted on the PlantVillage dataset, which contains 54,306 plant images.
