Minjie Hua

Application-Driven AI Paradigm for Person Counting in Various Scenarios

Mar 24, 2023

Abstract: Person counting is a fundamental task in video surveillance. However, the diversity of scenarios in practical applications makes it difficult to rely on a single person counting model for general use. Consequently, engineers must preview the video stream and manually select an appropriate person counting model based on the scenario the camera captures, which is time-consuming, especially for large-scale deployments. In this paper, we propose a person counting paradigm that uses a scenario classifier to automatically select a suitable person counting model for each captured frame. First, the input image is passed through the scenario classifier to obtain a scenario label, which is then used to route the frame to one of five fine-tuned person counting models. Additionally, we present five augmentation datasets collected from different scenarios (side-view, long-shot, top-view, customized, and crowd), which are also integrated to form a scenario classification dataset containing 26323 samples. In our comparative experiments, the proposed paradigm achieves a better balance than any single model on the integrated dataset, which demonstrates its generalization across scenarios.
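The classify-then-route step described in this abstract can be sketched as below. This is a minimal illustration, not the authors' implementation: only the five scenario names come from the abstract, while `count_with_routing`, `toy_classifier`, `toy_counters`, and the `Frame` type are hypothetical stand-ins for the real classifier and fine-tuned models.

```python
from typing import Callable, Dict, List

# Scenario labels taken from the abstract; everything else is hypothetical.
SCENARIOS = ("side-view", "long-shot", "top-view", "customized", "crowd")

Frame = List[List[int]]  # placeholder image type used only for illustration


def count_with_routing(
    frame: Frame,
    classify: Callable[[Frame], str],
    counters: Dict[str, Callable[[Frame], int]],
) -> int:
    """Classify the frame's scenario, then run the matching counting model."""
    label = classify(frame)
    if label not in counters:
        raise KeyError(f"no counting model registered for scenario {label!r}")
    return counters[label](frame)


def toy_classifier(frame: Frame) -> str:
    """Stand-in classifier: frames with more than two rows count as 'crowd'."""
    return "crowd" if len(frame) > 2 else "side-view"


# Stand-in counters: each one simply returns its scenario's index in SCENARIOS.
toy_counters = {name: (lambda frame, n=i: n) for i, name in enumerate(SCENARIOS)}
```

Keeping the counters in a dictionary keyed by scenario label means a new scenario can be supported by registering one more model, without touching the routing logic.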

Small Obstacle Avoidance Based on RGB-D Semantic Segmentation

Aug 30, 2019

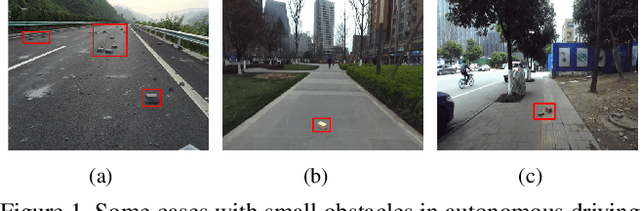

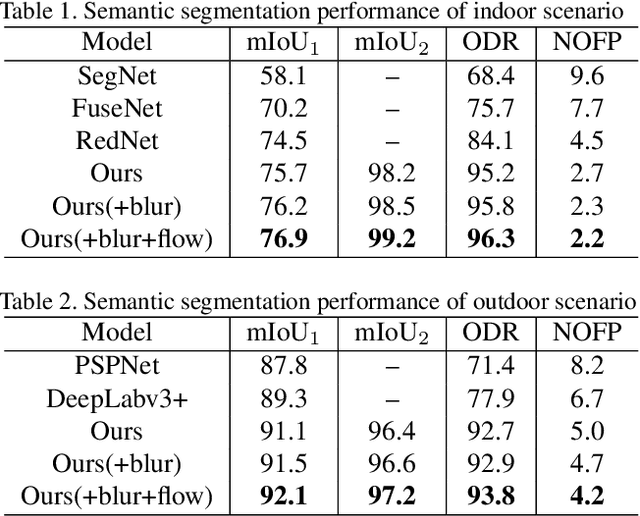

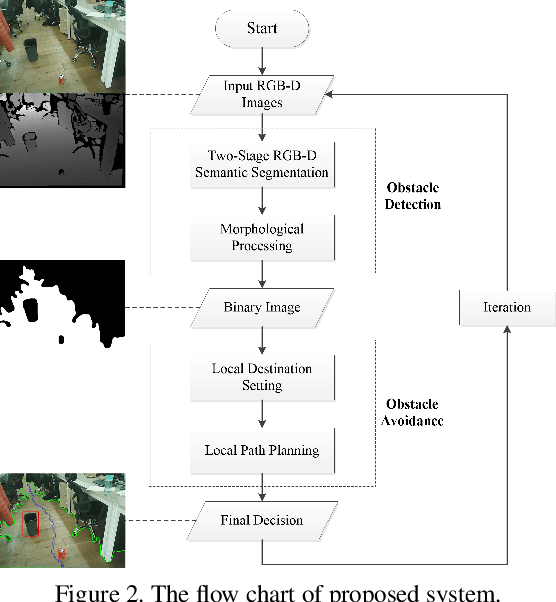

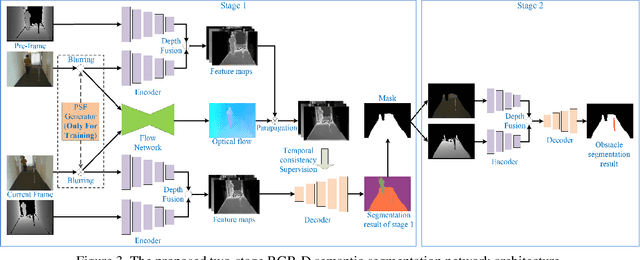

Abstract: This paper presents a novel obstacle avoidance system for road robots equipped with an RGB-D sensor that captures the scene ahead. The purpose of the system is to let road robots move autonomously and continuously without collision, even with small obstacles, which existing solutions often miss. For each input RGB-D image, the system uses a new two-stage semantic segmentation network followed by morphological processing to generate an accurate semantic map containing road and obstacles. Based on the map, local path planning is applied to avoid possible collisions. Additionally, a training scheme with optical flow supervision and motion-blur augmentation is applied to improve temporal consistency between adjacent frames and to overcome the disturbance caused by camera shake. Experiments show that the proposed architecture achieves high performance in both indoor and outdoor scenarios.
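The abstract does not say which morphological operation is applied to the segmentation output. As one plausible illustration only, the sketch below implements a binary closing (dilation followed by erosion) with a 3x3 square element in plain Python. Closing fills pinholes in a mask while leaving isolated single pixels in place. The function names and the choice of closing are assumptions, not the paper's method.

```python
from typing import List

Mask = List[List[int]]  # binary mask: 1 = class present, 0 = absent


def _morph(mask: Mask, use_all: bool, outside: int) -> Mask:
    """Erode (use_all=True) or dilate (use_all=False) with a 3x3 square element.

    `outside` is the value assumed for pixels beyond the image border.
    """
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [
                mask[ny][nx] if 0 <= ny < h and 0 <= nx < w else outside
                for ny in range(y - 1, y + 2)
                for nx in range(x - 1, x + 2)
            ]
            out[y][x] = int(all(window)) if use_all else int(any(window))
    return out


def closing(mask: Mask) -> Mask:
    """Dilation then erosion: fills holes smaller than the 3x3 element."""
    dilated = _morph(mask, use_all=False, outside=0)
    # Padding the erosion with 1 keeps pixels on the image border from eroding.
    return _morph(dilated, use_all=True, outside=1)
```

In practice a library routine such as OpenCV's `cv2.morphologyEx` would normally replace this hand-written loop.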

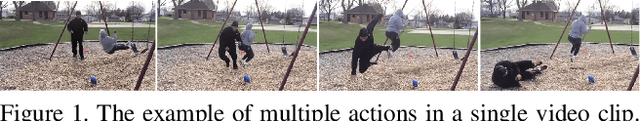

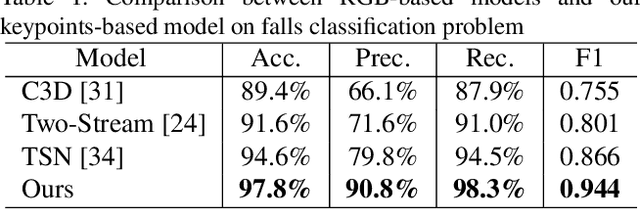

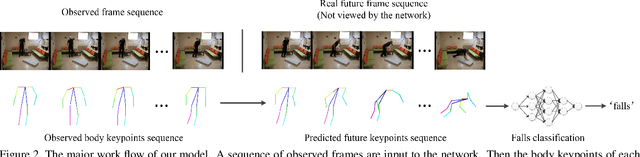

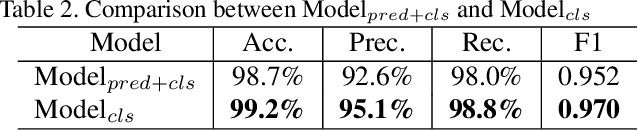

Falls Prediction Based on Body Keypoints and Seq2Seq Architecture

Aug 30, 2019

Abstract: This paper presents a novel approach for predicting falls in advance from monocular video. First, all persons in the observed frames are detected and tracked, and the coordinates of their body keypoints are extracted. A keypoint vectorization method is used to eliminate irrelevant information in the initial coordinate representation. Then, the observed keypoint sequence of each person is fed to a pose prediction module adapted from the sequence-to-sequence (seq2seq) architecture to predict the future keypoint sequence. Finally, the predicted pose is analyzed by the falls classifier to judge whether the person will fall. The pose prediction module and falls classifier are trained separately and tuned jointly on the Le2i dataset, which contains 191 videos of various normal daily activities as well as falls performed by several actors. Comparative experiments with mainstream raw RGB-based models show that using body keypoints improves accuracy in falls classification. Moreover, comparisons between models with and without the pose prediction module show that predicting falls in advance is effective.
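The abstract does not spell out the keypoint vectorization method. One common way to remove irrelevant information such as absolute image position and body scale is to replace raw coordinates with unit vectors along limbs. The sketch below shows that idea under stated assumptions: the `LIMBS` skeleton and the `vectorize` function are hypothetical and are not taken from the paper.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]

# Hypothetical skeleton: each pair names the two keypoints joined by a limb.
LIMBS = [("neck", "hip"), ("hip", "knee"), ("knee", "ankle")]


def vectorize(keypoints: Dict[str, Point]) -> List[Point]:
    """Convert absolute keypoint coordinates into unit limb direction vectors."""
    vectors: List[Point] = []
    for start, end in LIMBS:
        dx = keypoints[end][0] - keypoints[start][0]
        dy = keypoints[end][1] - keypoints[start][1]
        length = math.hypot(dx, dy)
        # A zero-length limb (coincident keypoints) has no direction.
        vectors.append((dx / length, dy / length) if length > 0 else (0.0, 0.0))
    return vectors
```

Because each vector is a normalized difference of coordinates, shifting the person in the frame or scaling their size leaves the output unchanged, so a downstream model sees only the pose.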

Towards More Realistic Human-Robot Conversation: A Seq2Seq-based Body Gesture Interaction System

May 05, 2019

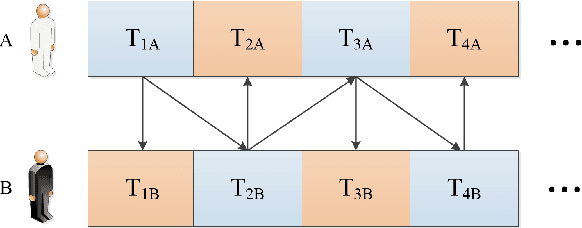

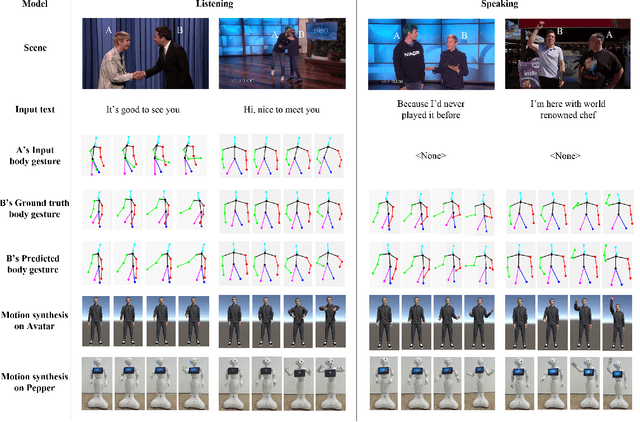

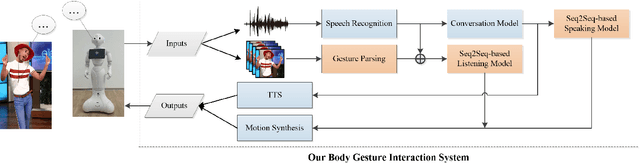

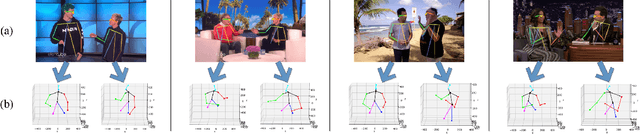

Abstract: This paper presents a novel method for improving the conversational interaction abilities of intelligent robots by enabling more realistic body gestures. The sequence-to-sequence (seq2seq) model is adapted to synthesize the robots' body gestures, represented by the movements of twelve upper-body keypoints, not only in the speaking phase but also in the listening phase, which previous methods can hardly handle. We collected and preprocessed a substantial number of human conversation videos from YouTube to train our seq2seq-based models and evaluated them by mean squared error (MSE) and cosine similarity on the test set. The tuned models were deployed to drive a virtual avatar as well as a physical humanoid robot, to demonstrate in practice the improvement in interaction abilities that our method brings. With body gestures synthesized by our models, the avatar and the Pepper robot behaved more intelligently while communicating with humans.
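The two evaluation metrics named in this abstract have standard definitions, sketched below for flat sequences of numbers. How the paper aggregates them over frames and keypoints is not stated in the abstract, so treating a gesture as a single flattened vector is an assumption made here for illustration.

```python
import math
from typing import Sequence


def mean_squared_error(pred: Sequence[float], target: Sequence[float]) -> float:
    """Average of squared element-wise differences between two sequences."""
    if len(pred) != len(target) or len(pred) == 0:
        raise ValueError("sequences must be non-empty and of equal length")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors, in the range [-1, 1]."""
    if len(a) != len(b):
        raise ValueError("vectors must have equal length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)
```

The two metrics are complementary: MSE penalizes differences in magnitude, while cosine similarity compares direction only and ignores overall scale.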

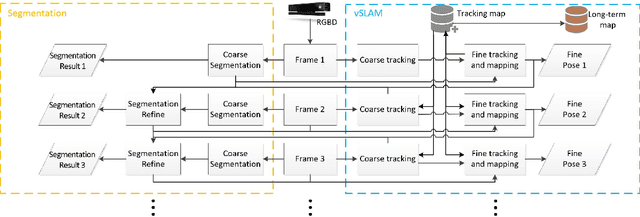

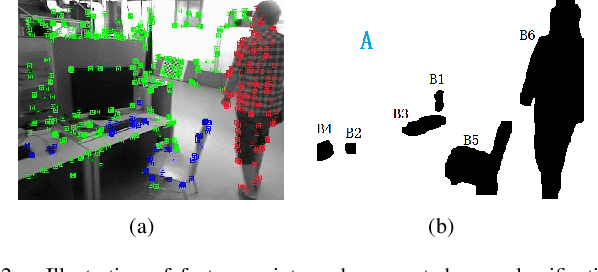

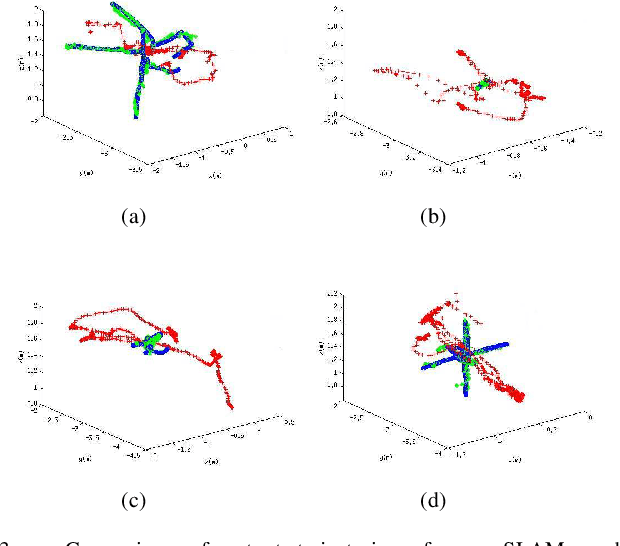

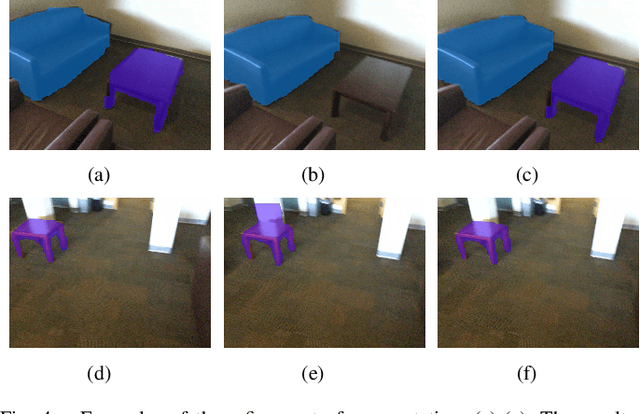

A Unified Framework for Mutual Improvement of SLAM and Semantic Segmentation

Dec 25, 2018

Abstract: This paper presents a novel framework for performing localization and segmentation simultaneously, two of the most important vision-based tasks in robotics. Although their goals and techniques were previously considered different, we show that by using the intermediate results of the two modules, the performance of both can be improved at the same time. Our framework handles both the instantaneous motion and the long-term changes of instances in localization with the help of the segmentation result, and segmentation in turn benefits from the refined 3D pose information. We conduct experiments on various datasets and show that our framework improves the precision and robustness of both tasks and outperforms existing localization and segmentation algorithms.
