Mingliang Chen

The Future of Combating Rumors? Retrieval, Discrimination, and Generation

Mar 29, 2024

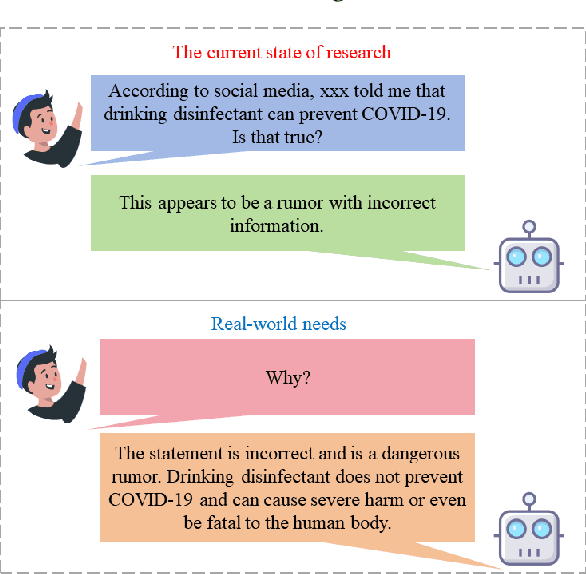

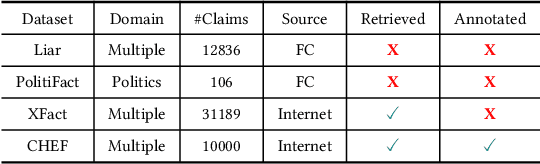

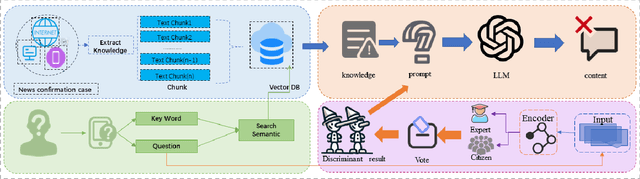

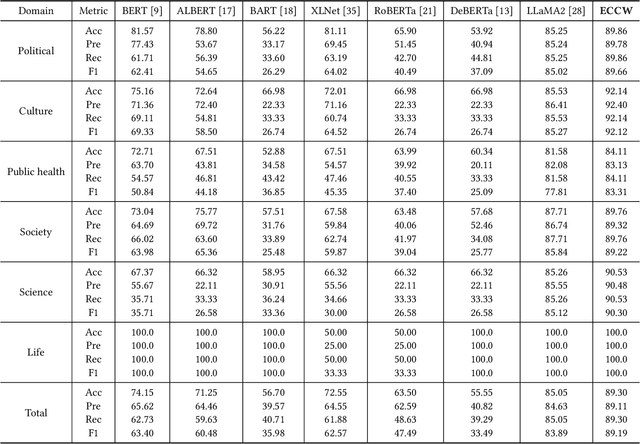

Abstract:Artificial Intelligence Generated Content (AIGC) technology development has facilitated the creation of rumors with misinformation, impacting societal, economic, and political ecosystems, challenging democracy. Current rumor detection efforts fall short by merely labeling potentially misinformation (classification task), inadequately addressing the issue, and it is unrealistic to have authoritative institutions debunk every piece of information on social media. Our proposed comprehensive debunking process not only detects rumors but also provides explanatory generated content to refute the authenticity of the information. The Expert-Citizen Collective Wisdom (ECCW) module we designed aensures high-precision assessment of the credibility of information and the retrieval module is responsible for retrieving relevant knowledge from a Real-time updated debunking database based on information keywords. By using prompt engineering techniques, we feed results and knowledge into a LLM (Large Language Model), achieving satisfactory discrimination and explanatory effects while eliminating the need for fine-tuning, saving computational costs, and contributing to debunking efforts.

Towards Fairness in Personalized Ads Using Impression Variance Aware Reinforcement Learning

Jun 08, 2023

Abstract:Variances in ad impression outcomes across demographic groups are increasingly considered to be potentially indicative of algorithmic bias in personalized ads systems. While there are many definitions of fairness that could be applicable in the context of personalized systems, we present a framework which we call the Variance Reduction System (VRS) for achieving more equitable outcomes in Meta's ads systems. VRS seeks to achieve a distribution of impressions with respect to selected protected class (PC) attributes that more closely aligns the demographics of an ad's eligible audience (a function of advertiser targeting criteria) with the audience who sees that ad, in a privacy-preserving manner. We first define metrics to quantify fairness gaps in terms of ad impression variances with respect to PC attributes including gender and estimated race. We then present the VRS for re-ranking ads in an impression variance-aware manner. We evaluate VRS via extensive simulations over different parameter choices and study the effect of the VRS on the chosen fairness metric. We finally present online A/B testing results from applying VRS to Meta's ads systems, concluding with a discussion of future work. We have deployed the VRS to all users in the US for housing ads, resulting in significant improvement in our fairness metric. VRS is the first large-scale deployed framework for pursuing fairness for multiple PC attributes in online advertising.

Transparency Tools for Fairness in AI (Luskin)

Jul 09, 2020

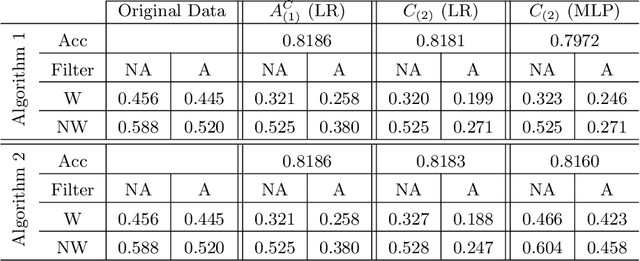

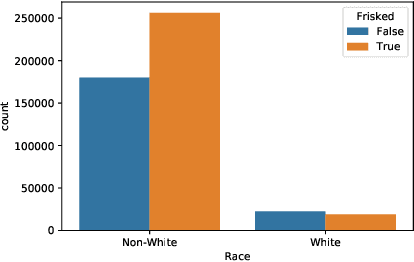

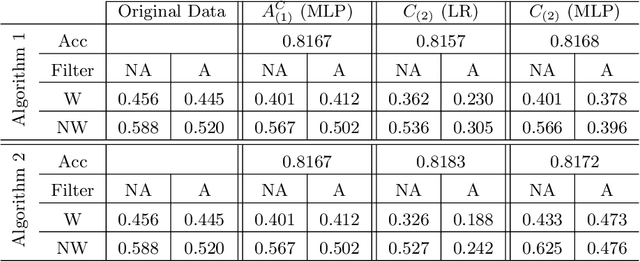

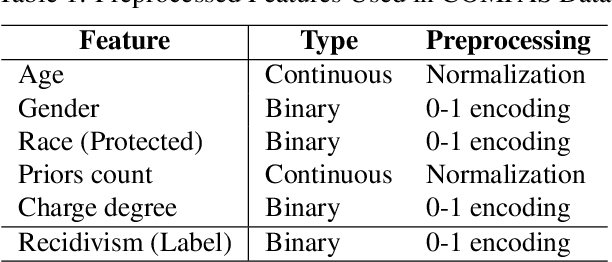

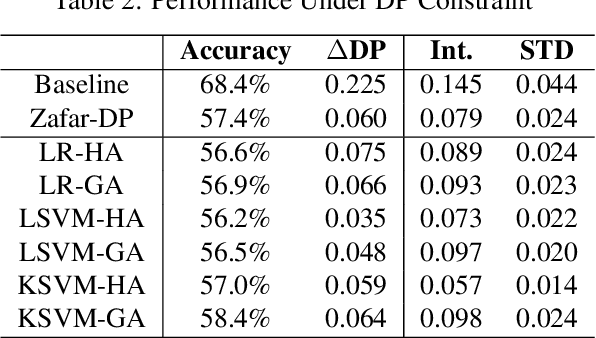

Abstract:We propose new tools for policy-makers to use when assessing and correcting fairness and bias in AI algorithms. The three tools are: - A new definition of fairness called "controlled fairness" with respect to choices of protected features and filters. The definition provides a simple test of fairness of an algorithm with respect to a dataset. This notion of fairness is suitable in cases where fairness is prioritized over accuracy, such as in cases where there is no "ground truth" data, only data labeled with past decisions (which may have been biased). - Algorithms for retraining a given classifier to achieve "controlled fairness" with respect to a choice of features and filters. Two algorithms are presented, implemented and tested. These algorithms require training two different models in two stages. We experiment with combinations of various types of models for the first and second stage and report on which combinations perform best in terms of fairness and accuracy. - Algorithms for adjusting model parameters to achieve a notion of fairness called "classification parity". This notion of fairness is suitable in cases where accuracy is prioritized. Two algorithms are presented, one which assumes that protected features are accessible to the model during testing, and one which assumes protected features are not accessible during testing. We evaluate our tools on three different publicly available datasets. We find that the tools are useful for understanding various dimensions of bias, and that in practice the algorithms are effective in starkly reducing a given observed bias when tested on new data.

Towards Threshold Invariant Fair Classification

Jun 18, 2020

Abstract:Effective machine learning models can automatically learn useful information from a large quantity of data and provide decisions in a high accuracy. These models may, however, lead to unfair predictions in certain sense among the population groups of interest, where the grouping is based on such sensitive attributes as race and gender. Various fairness definitions, such as demographic parity and equalized odds, were proposed in prior art to ensure that decisions guided by the machine learning models are equitable. Unfortunately, the "fair" model trained with these fairness definitions is threshold sensitive, i.e., the condition of fairness may no longer hold true when tuning the decision threshold. This paper introduces the notion of threshold invariant fairness, which enforces equitable performances across different groups independent of the decision threshold. To achieve this goal, this paper proposes to equalize the risk distributions among the groups via two approximation methods. Experimental results demonstrate that the proposed methodology is effective to alleviate the threshold sensitivity in machine learning models designed to achieve fairness.

3D Hand Pose Tracking and Estimation Using Stereo Matching

Oct 23, 2016

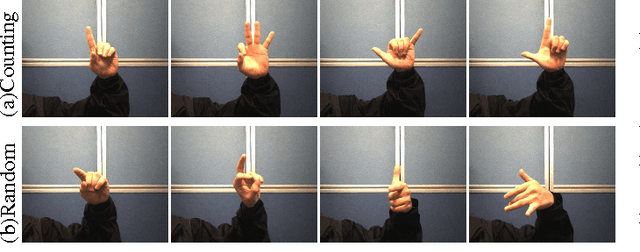

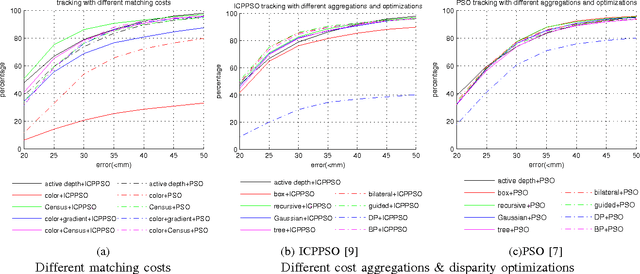

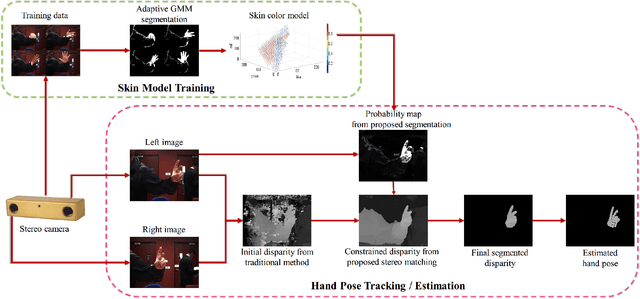

Abstract:3D hand pose tracking/estimation will be very important in the next generation of human-computer interaction. Most of the currently available algorithms rely on low-cost active depth sensors. However, these sensors can be easily interfered by other active sources and require relatively high power consumption. As a result, they are currently not suitable for outdoor environments and mobile devices. This paper aims at tracking/estimating hand poses using passive stereo which avoids these limitations. A benchmark with 18,000 stereo image pairs and 18,000 depth images captured from different scenarios and the ground-truth 3D positions of palm and finger joints (obtained from the manual label) is thus proposed. This paper demonstrates that the performance of the state-of-the art tracking/estimation algorithms can be maintained with most stereo matching algorithms on the proposed benchmark, as long as the hand segmentation is correct. As a result, a novel stereo-based hand segmentation algorithm specially designed for hand tracking/estimation is proposed. The quantitative evaluation demonstrates that the proposed algorithm is suitable for the state-of-the-art hand pose tracking/estimation algorithms and the tracking quality is comparable to the use of active depth sensors under different challenging scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge