Massimiliano Tamborski

HAD-Gen: Human-like and Diverse Driving Behavior Modeling for Controllable Scenario Generation

Mar 19, 2025

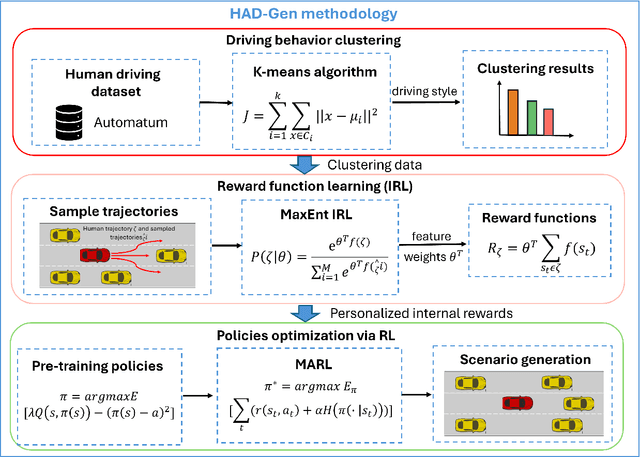

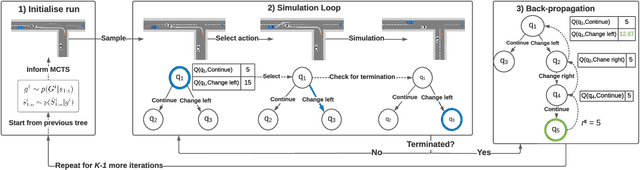

Abstract: Simulation-based testing has emerged as an essential tool for verifying and validating autonomous vehicles (AVs). However, contemporary methodologies, such as deterministic and imitation learning-based driver models, struggle to capture the variability of human-like driving behavior. Given these challenges, we propose HAD-Gen, a general framework for realistic traffic scenario generation that simulates diverse human-like driving behaviors. The framework first clusters the vehicle trajectory data into different driving styles according to safety features. It then employs maximum entropy inverse reinforcement learning on each of the clusters to learn the reward function corresponding to each driving style. Using these reward functions, the method integrates offline reinforcement learning pre-training and multi-agent reinforcement learning algorithms to obtain general and robust driving policies. Multi-perspective simulation results show that our proposed scenario generation framework can simulate diverse, human-like driving behaviors with strong generalization capability. The proposed framework achieves a 90.96% goal-reaching rate, an off-road rate of 2.08%, and a collision rate of 6.91% in the generalization test, outperforming prior approaches by over 20% in goal-reaching performance. The source code is released at https://github.com/RoboSafe-Lab/Sim4AD.
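The per-cluster reward learning step described in this abstract can be illustrated with a minimal sketch of maximum entropy inverse reinforcement learning. It assumes a linear reward over hand-picked trajectory features and a fixed, finite set of candidate trajectories. The function names (`maxent_irl_weights`, `fit_style_rewards`), the feature layout, and the hyperparameters are hypothetical and are not taken from the HAD-Gen code, which may differ substantially.

```python
import numpy as np


def maxent_irl_weights(expert_features, candidate_features, lr=0.1, iters=200):
    """Fit linear reward weights with maximum entropy IRL (illustrative only).

    expert_features:    (n_expert, d) features of demonstrated trajectories
    candidate_features: (n_candidates, d) features of sampled alternatives
    Assumption: reward(trajectory) = w . features(trajectory).
    """
    expert_mean = expert_features.mean(axis=0)
    w = np.zeros(candidate_features.shape[1])
    for _ in range(iters):
        scores = candidate_features @ w
        scores -= scores.max()              # subtract max for numerical stability
        p = np.exp(scores)
        p /= p.sum()                        # softmax over candidate trajectories
        expected = p @ candidate_features   # model's expected feature vector
        # Log-likelihood gradient: expert features minus expected features.
        w += lr * (expert_mean - expected)
    return w


def fit_style_rewards(features, style_labels, candidate_features):
    """Learn one weight vector per driving-style cluster (labels assumed given)."""
    return {
        int(s): maxent_irl_weights(features[style_labels == s], candidate_features)
        for s in np.unique(style_labels)
    }
```

In this sketch the cluster labels are taken as given; in the framework they would come from the clustering of trajectories by safety features. Each cluster ends up with its own weight vector, so a style whose demonstrations score high on a feature receives a larger weight on it.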

Deep Reinforcement Learning for Multi-Agent Interaction

Aug 02, 2022

Abstract: The development of autonomous agents which can interact with other agents to accomplish a given task is a core area of research in artificial intelligence and machine learning. Towards this goal, the Autonomous Agents Research Group develops novel machine learning algorithms for autonomous systems control, with a specific focus on deep reinforcement learning and multi-agent reinforcement learning. Research problems include scalable learning of coordinated agent policies and inter-agent communication; reasoning about the behaviours, goals, and composition of other agents from limited observations; and sample-efficient learning based on intrinsic motivation, curriculum learning, causal inference, and representation learning. This article provides a broad overview of the ongoing research portfolio of the group and discusses open problems for future directions.

Verifiable Goal Recognition for Autonomous Driving with Occlusions

Jun 28, 2022

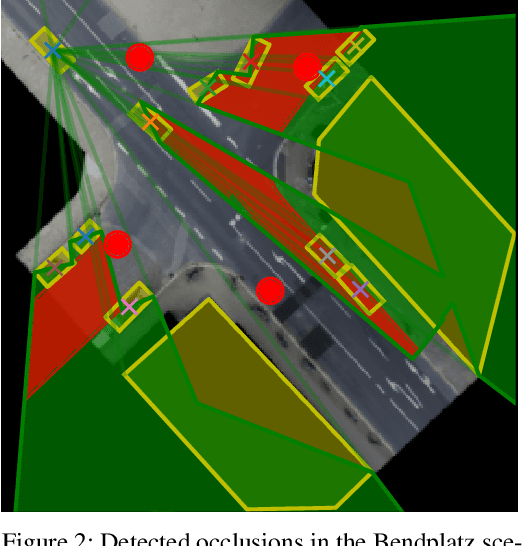

Abstract: When used in autonomous driving, goal recognition allows the future behaviour of other vehicles to be more accurately predicted. A recent goal recognition method for autonomous vehicles, GRIT, has been shown to be fast, accurate, interpretable and verifiable. In autonomous driving, vehicles can encounter novel scenarios that were unseen during training, and the environment is partially observable due to occlusions. However, GRIT can only operate in fixed frame scenarios, with full observability. We present a novel goal recognition method named Goal Recognition with Interpretable Trees under Occlusion (OGRIT), which solves these shortcomings of GRIT. We demonstrate that OGRIT can generalise between different scenarios and handle missing data due to occlusions, while still being fast, accurate, interpretable and verifiable.

A Human-Centric Method for Generating Causal Explanations in Natural Language for Autonomous Vehicle Motion Planning

Jun 27, 2022

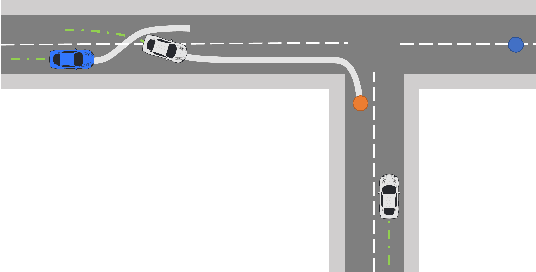

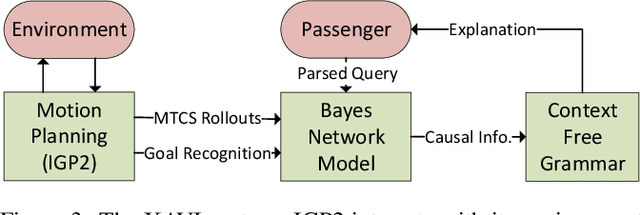

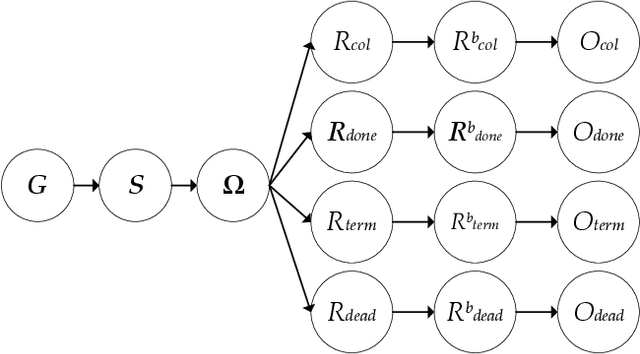

Abstract: Inscrutable AI systems are difficult to trust, especially if they operate in safety-critical settings like autonomous driving. Therefore, there is a need to build transparent and queryable systems to increase trust levels. We propose a transparent, human-centric explanation generation method for autonomous vehicle motion planning and prediction based on an existing white-box system called IGP2. Our method integrates Bayesian networks with context-free generative rules and can give causal natural language explanations for the high-level driving behaviour of autonomous vehicles. Preliminary testing on simulated scenarios shows that our method captures the causes behind the actions of autonomous vehicles and generates intelligible explanations with varying complexity.
