Linfeng Tang

TemCoCo: Temporally Consistent Multi-modal Video Fusion with Visual-Semantic Collaboration

Aug 25, 2025

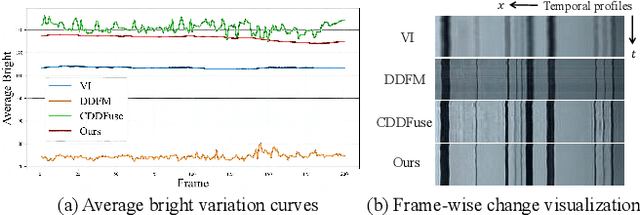

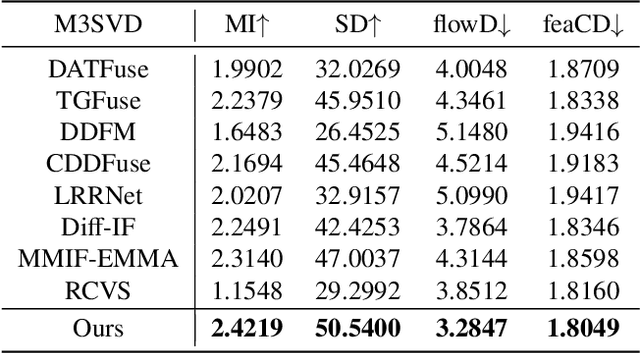

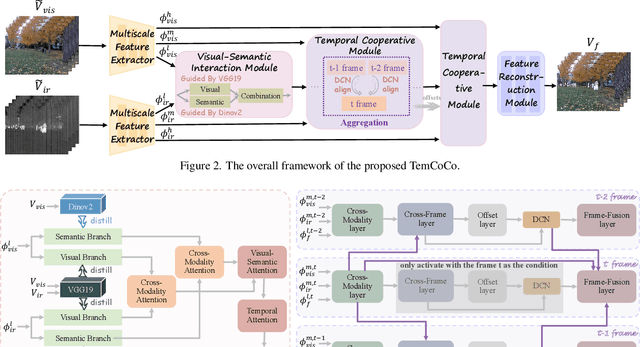

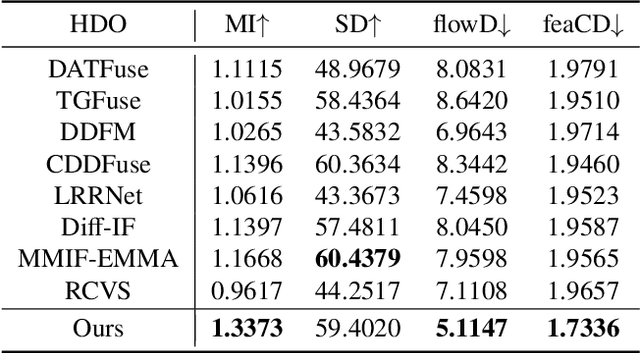

Abstract:Existing multi-modal fusion methods typically apply static frame-based image fusion techniques directly to video fusion tasks, neglecting inherent temporal dependencies and leading to inconsistent results across frames. To address this limitation, we propose the first video fusion framework that explicitly incorporates temporal modeling with visual-semantic collaboration to simultaneously ensure visual fidelity, semantic accuracy, and temporal consistency. First, we introduce a visual-semantic interaction module consisting of a semantic branch and a visual branch, with Dinov2 and VGG19 employed for targeted distillation, allowing simultaneous enhancement of both the visual and semantic representations. Second, we pioneer integrate the video degradation enhancement task into the video fusion pipeline by constructing a temporal cooperative module, which leverages temporal dependencies to facilitate weak information recovery. Third, to ensure temporal consistency, we embed a temporal-enhanced mechanism into the network and devise a temporal loss to guide the optimization process. Finally, we introduce two innovative evaluation metrics tailored for video fusion, aimed at assessing the temporal consistency of the generated fused videos. Extensive experimental results on public video datasets demonstrate the superiority of our method. Our code is released at https://github.com/Meiqi-Gong/TemCoCo.

Deep Learning Reforms Image Matching: A Survey and Outlook

Jun 05, 2025Abstract:Image matching, which establishes correspondences between two-view images to recover 3D structure and camera geometry, serves as a cornerstone in computer vision and underpins a wide range of applications, including visual localization, 3D reconstruction, and simultaneous localization and mapping (SLAM). Traditional pipelines composed of ``detector-descriptor, feature matcher, outlier filter, and geometric estimator'' falter in challenging scenarios. Recent deep-learning advances have significantly boosted both robustness and accuracy. This survey adopts a unique perspective by comprehensively reviewing how deep learning has incrementally transformed the classical image matching pipeline. Our taxonomy highly aligns with the traditional pipeline in two key aspects: i) the replacement of individual steps in the traditional pipeline with learnable alternatives, including learnable detector-descriptor, outlier filter, and geometric estimator; and ii) the merging of multiple steps into end-to-end learnable modules, encompassing middle-end sparse matcher, end-to-end semi-dense/dense matcher, and pose regressor. We first examine the design principles, advantages, and limitations of both aspects, and then benchmark representative methods on relative pose recovery, homography estimation, and visual localization tasks. Finally, we discuss open challenges and outline promising directions for future research. By systematically categorizing and evaluating deep learning-driven strategies, this survey offers a clear overview of the evolving image matching landscape and highlights key avenues for further innovation.

CoMatch: Dynamic Covisibility-Aware Transformer for Bilateral Subpixel-Level Semi-Dense Image Matching

Mar 31, 2025Abstract:This prospective study proposes CoMatch, a novel semi-dense image matcher with dynamic covisibility awareness and bilateral subpixel accuracy. Firstly, observing that modeling context interaction over the entire coarse feature map elicits highly redundant computation due to the neighboring representation similarity of tokens, a covisibility-guided token condenser is introduced to adaptively aggregate tokens in light of their covisibility scores that are dynamically estimated, thereby ensuring computational efficiency while improving the representational capacity of aggregated tokens simultaneously. Secondly, considering that feature interaction with massive non-covisible areas is distracting, which may degrade feature distinctiveness, a covisibility-assisted attention mechanism is deployed to selectively suppress irrelevant message broadcast from non-covisible reduced tokens, resulting in robust and compact attention to relevant rather than all ones. Thirdly, we find that at the fine-level stage, current methods adjust only the target view's keypoints to subpixel level, while those in the source view remain restricted at the coarse level and thus not informative enough, detrimental to keypoint location-sensitive usages. A simple yet potent fine correlation module is developed to refine the matching candidates in both source and target views to subpixel level, attaining attractive performance improvement. Thorough experimentation across an array of public benchmarks affirms CoMatch's promising accuracy, efficiency, and generalizability.

DSPFusion: Image Fusion via Degradation and Semantic Dual-Prior Guidance

Mar 30, 2025Abstract:Existing fusion methods are tailored for high-quality images but struggle with degraded images captured under harsh circumstances, thus limiting the practical potential of image fusion. This work presents a \textbf{D}egradation and \textbf{S}emantic \textbf{P}rior dual-guided framework for degraded image \textbf{Fusion} (\textbf{DSPFusion}), utilizing degradation priors and high-quality scene semantic priors restored via diffusion models to guide both information recovery and fusion in a unified model. In specific, it first individually extracts modality-specific degradation priors, while jointly capturing comprehensive low-quality semantic priors. Subsequently, a diffusion model is developed to iteratively restore high-quality semantic priors in a compact latent space, enabling our method to be over $20 \times$ faster than mainstream diffusion model-based image fusion schemes. Finally, the degradation priors and high-quality semantic priors are employed to guide information enhancement and aggregation via the dual-prior guidance and prior-guided fusion modules. Extensive experiments demonstrate that DSPFusion mitigates most typical degradations while integrating complementary context with minimal computational cost, greatly broadening the application scope of image fusion.

ControlFusion: A Controllable Image Fusion Framework with Language-Vision Degradation Prompts

Mar 30, 2025

Abstract:Current image fusion methods struggle to address the composite degradations encountered in real-world imaging scenarios and lack the flexibility to accommodate user-specific requirements. In response to these challenges, we propose a controllable image fusion framework with language-vision prompts, termed ControlFusion, which adaptively neutralizes composite degradations. On the one hand, we develop a degraded imaging model that integrates physical imaging mechanisms, including the Retinex theory and atmospheric scattering principle, to simulate composite degradations, thereby providing potential for addressing real-world complex degradations from the data level. On the other hand, we devise a prompt-modulated restoration and fusion network that dynamically enhances features with degradation prompts, enabling our method to accommodate composite degradation of varying levels. Specifically, considering individual variations in quality perception of users, we incorporate a text encoder to embed user-specified degradation types and severity levels as degradation prompts. We also design a spatial-frequency collaborative visual adapter that autonomously perceives degradations in source images, thus eliminating the complete dependence on user instructions. Extensive experiments demonstrate that ControlFusion outperforms SOTA fusion methods in fusion quality and degradation handling, particularly in countering real-world and compound degradations with various levels.

VideoFusion: A Spatio-Temporal Collaborative Network for Mutli-modal Video Fusion and Restoration

Mar 30, 2025Abstract:Compared to images, videos better align with real-world acquisition scenarios and possess valuable temporal cues. However, existing multi-sensor fusion research predominantly integrates complementary context from multiple images rather than videos. This primarily stems from two factors: 1) the scarcity of large-scale multi-sensor video datasets, limiting research in video fusion, and 2) the inherent difficulty of jointly modeling spatial and temporal dependencies in a unified framework. This paper proactively compensates for the dilemmas. First, we construct M3SVD, a benchmark dataset with $220$ temporally synchronized and spatially registered infrared-visible video pairs comprising 153,797 frames, filling the data gap for the video fusion community. Secondly, we propose VideoFusion, a multi-modal video fusion model that fully exploits cross-modal complementarity and temporal dynamics to generate spatio-temporally coherent videos from (potentially degraded) multi-modal inputs. Specifically, 1) a differential reinforcement module is developed for cross-modal information interaction and enhancement, 2) a complete modality-guided fusion strategy is employed to adaptively integrate multi-modal features, and 3) a bi-temporal co-attention mechanism is devised to dynamically aggregate forward-backward temporal contexts to reinforce cross-frame feature representations. Extensive experiments reveal that VideoFusion outperforms existing image-oriented fusion paradigms in sequential scenarios, effectively mitigating temporal inconsistency and interference.

Text-IF: Leveraging Semantic Text Guidance for Degradation-Aware and Interactive Image Fusion

Mar 25, 2024

Abstract:Image fusion aims to combine information from different source images to create a comprehensively representative image. Existing fusion methods are typically helpless in dealing with degradations in low-quality source images and non-interactive to multiple subjective and objective needs. To solve them, we introduce a novel approach that leverages semantic text guidance image fusion model for degradation-aware and interactive image fusion task, termed as Text-IF. It innovatively extends the classical image fusion to the text guided image fusion along with the ability to harmoniously address the degradation and interaction issues during fusion. Through the text semantic encoder and semantic interaction fusion decoder, Text-IF is accessible to the all-in-one infrared and visible image degradation-aware processing and the interactive flexible fusion outcomes. In this way, Text-IF achieves not only multi-modal image fusion, but also multi-modal information fusion. Extensive experiments prove that our proposed text guided image fusion strategy has obvious advantages over SOTA methods in the image fusion performance and degradation treatment. The code is available at https://github.com/XunpengYi/Text-IF.

Diff-Retinex: Rethinking Low-light Image Enhancement with A Generative Diffusion Model

Aug 25, 2023Abstract:In this paper, we rethink the low-light image enhancement task and propose a physically explainable and generative diffusion model for low-light image enhancement, termed as Diff-Retinex. We aim to integrate the advantages of the physical model and the generative network. Furthermore, we hope to supplement and even deduce the information missing in the low-light image through the generative network. Therefore, Diff-Retinex formulates the low-light image enhancement problem into Retinex decomposition and conditional image generation. In the Retinex decomposition, we integrate the superiority of attention in Transformer and meticulously design a Retinex Transformer decomposition network (TDN) to decompose the image into illumination and reflectance maps. Then, we design multi-path generative diffusion networks to reconstruct the normal-light Retinex probability distribution and solve the various degradations in these components respectively, including dark illumination, noise, color deviation, loss of scene contents, etc. Owing to generative diffusion model, Diff-Retinex puts the restoration of low-light subtle detail into practice. Extensive experiments conducted on real-world low-light datasets qualitatively and quantitatively demonstrate the effectiveness, superiority, and generalization of the proposed method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge