Kuk Jin Jang

Pattern-Guided Diffusion Models

Dec 15, 2025

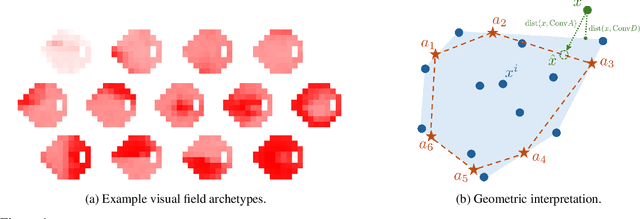

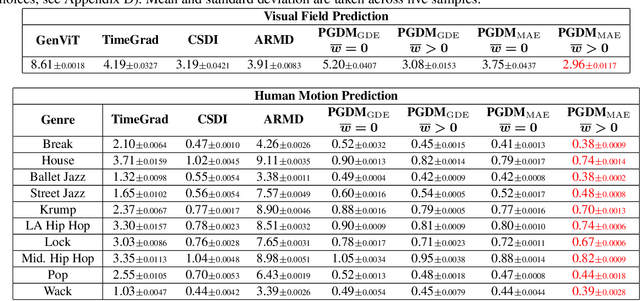

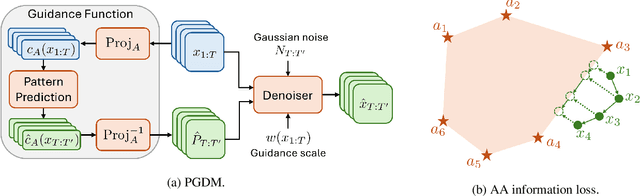

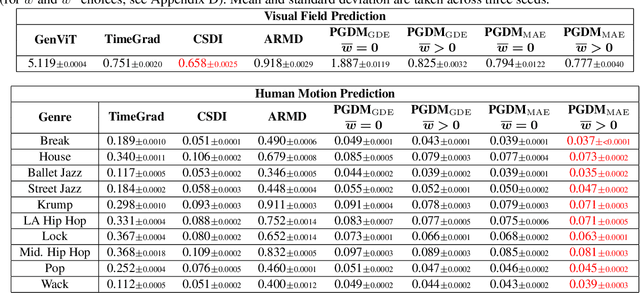

Abstract: Diffusion models have shown promise in forecasting future data from multivariate time series. However, few existing methods account for recurring structures, or patterns, that appear within the data. We present Pattern-Guided Diffusion Models (PGDM), which leverage inherent patterns within temporal data to forecast future time steps. PGDM first extracts patterns using archetypal analysis and then estimates the most likely next pattern in the sequence. By guiding predictions with this pattern estimate, PGDM makes more realistic predictions that fit within the set of known patterns. We additionally introduce a novel uncertainty quantification technique based on archetypal analysis, and we dynamically scale the guidance level based on the uncertainty of the pattern estimate. We apply our method to two well-motivated forecasting applications: predicting visual field measurements and predicting motion capture frames. On these two applications, pattern guidance improves PGDM's performance (MAE / CRPS) by up to 40.67% / 56.26% and 14.12% / 14.10%, respectively. PGDM also outperforms baselines by up to 65.58% / 84.83% and 93.64% / 92.55%, respectively.
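The pattern-fitting idea can be illustrated with a small sketch. Archetypal analysis represents a sample as a convex combination of archetypes, so estimating the weights amounts to projecting the sample onto the convex hull of the archetypes. The sketch below assumes the archetypes are already known and fits the weights by projected gradient descent onto the probability simplex. The `guide` function is a hypothetical linear blend standing in for diffusion guidance, with a scale that shrinks as an externally supplied uncertainty in [0, 1] grows. None of these function names come from PGDM, and the paper's actual guidance term and uncertainty measure are not reproduced here.

```python
from typing import List, Sequence

Vector = List[float]


def project_to_simplex(v: Sequence[float]) -> Vector:
    """Euclidean projection onto {w : w_i >= 0, sum(w) = 1} (sort-based method)."""
    u = sorted(v, reverse=True)
    cumulative, theta = 0.0, 0.0
    for j, u_j in enumerate(u, start=1):
        cumulative += u_j
        candidate = (cumulative - 1.0) / j
        if u_j - candidate > 0:  # holds for a prefix of j; keep the last one
            theta = candidate
    return [max(x - theta, 0.0) for x in v]


def archetype_weights(x: Sequence[float], archetypes: Sequence[Sequence[float]],
                      steps: int = 2000) -> Vector:
    """Fit simplex weights w minimizing ||sum_i w_i * a_i - x||^2."""
    k, d = len(archetypes), len(x)
    w = [1.0 / k] * k
    # ||A||_F^2 upper-bounds the largest eigenvalue of A A^T,
    # so 1 / (2 * ||A||_F^2) is a safe gradient step size.
    frob_sq = sum(a_t * a_t for a in archetypes for a_t in a)
    step = 1.0 / max(2.0 * frob_sq, 1e-12)
    for _ in range(steps):
        recon = [sum(w[i] * archetypes[i][t] for i in range(k)) for t in range(d)]
        resid = [recon[t] - x[t] for t in range(d)]
        grad = [2.0 * sum(archetypes[i][t] * resid[t] for t in range(d)) for i in range(k)]
        w = project_to_simplex([w[i] - step * grad[i] for i in range(k)])
    return w


def reconstruct(w: Sequence[float], archetypes: Sequence[Sequence[float]]) -> Vector:
    """Pattern estimate: the convex combination of archetypes given by w."""
    d = len(archetypes[0])
    return [sum(w_i * a[t] for w_i, a in zip(w, archetypes)) for t in range(d)]


def guide(prediction: Sequence[float], pattern: Sequence[float],
          uncertainty: float, max_scale: float = 1.0) -> Vector:
    """Hypothetical guidance: pull toward the pattern, less when uncertainty is high."""
    scale = max_scale * (1.0 - min(max(uncertainty, 0.0), 1.0))
    return [p + scale * (q - p) for p, q in zip(prediction, pattern)]
```

For example, with archetypes `[[0, 0], [1, 0], [0, 1]]`, a point outside the triangle such as `[1, 1]` is mapped to (approximately) the nearest point on the hull, `[0.5, 0.5]`, which is one geometric reading of "fitting within the set of known patterns".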

Fundus Image-based Visual Acuity Assessment with PAC-Guarantees

Dec 09, 2024

Abstract: Timely detection and treatment are essential for maintaining eye health. Visual acuity (VA), which measures the clarity of vision at a distance, is a crucial metric for managing eye health. Machine learning (ML) techniques have been introduced to assist in VA measurement, potentially alleviating clinicians' workloads. However, the inherent uncertainties in ML models make relying solely on them for VA prediction less than ideal. The VA prediction task involves multiple sources of uncertainty, requiring more robust approaches. A promising method is to build prediction sets or intervals rather than point estimates, offering coverage guarantees through techniques like conformal prediction and Probably Approximately Correct (PAC) prediction sets. Despite the potential, to date, these approaches have not been applied to the VA prediction task. To address this, we propose a method for deriving prediction intervals for estimating visual acuity from fundus images with a PAC guarantee. Our experimental results demonstrate that the PAC guarantees are upheld, with performance comparable to or better than that of two prior works that do not provide such guarantees.
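A common way to obtain a PAC guarantee for prediction intervals is to calibrate an interval radius on held-out data using a binomial tail bound. The sketch below follows that generic recipe under stated assumptions: the score is the absolute error `|y - y_hat|` of some already-trained VA regressor (not shown), `epsilon` is the tolerated miscoverage rate, and `delta` is the allowed probability that the guarantee fails. The function names are hypothetical, and the paper's exact construction may differ.

```python
import math
from typing import Sequence


def binomial_cdf(k: int, n: int, p: float) -> float:
    """P[Binomial(n, p) <= k]."""
    return sum(math.comb(n, i) * p**i * (1.0 - p) ** (n - i) for i in range(k + 1))


def max_allowed_errors(n: int, epsilon: float, delta: float) -> int:
    """Largest k with binomial_cdf(k, n, epsilon) <= delta, or -1 if no such k exists."""
    k_star = -1
    for k in range(n + 1):
        if binomial_cdf(k, n, epsilon) <= delta:
            k_star = k
        else:
            break  # the CDF is nondecreasing in k, so no larger k can qualify
    return k_star


def pac_interval_radius(scores: Sequence[float], epsilon: float, delta: float) -> float:
    """Radius r such that [y_hat - r, y_hat + r] is an (epsilon, delta)-PAC interval.

    `scores` are absolute errors |y - y_hat| on a held-out calibration set.
    """
    n = len(scores)
    k_star = max_allowed_errors(n, epsilon, delta)
    if k_star < 0:
        return math.inf  # too few calibration points for this (epsilon, delta)
    ordered = sorted(scores)
    # The (n - k_star)-th smallest score: at most k_star calibration scores exceed it.
    return ordered[max(n - k_star - 1, 0)]
```

Given a new fundus image with predicted VA `y_hat`, the interval would be `[y_hat - r, y_hat + r]`. Assuming calibration and test cases are i.i.d., with probability at least `1 - delta` over the calibration draw, such intervals miss the true value on at most an `epsilon` fraction of future cases.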

Assessing Modality Bias in Video Question Answering Benchmarks with Multimodal Large Language Models

Aug 22, 2024

Abstract: Multimodal large language models (MLLMs) can simultaneously process visual, textual, and auditory data, capturing insights that complement human analysis. However, existing video question-answering (VidQA) benchmarks and datasets often exhibit a bias toward a single modality, despite their goal of requiring advanced reasoning skills that integrate diverse modalities to answer the queries. In this work, we introduce the modality importance score (MIS) to identify such bias; it is designed to assess which modality embeds the information needed to answer a question. Additionally, we propose an innovative method that uses state-of-the-art MLLMs to estimate modality importance, which can serve as a proxy for human judgments of modality perception. Using MIS, we demonstrate the presence of unimodal bias and the scarcity of genuinely multimodal questions in existing datasets. We further validate MIS with multiple ablation studies that evaluate the performance of MLLMs on permuted feature sets. Our results indicate that current models do not effectively integrate information because of modality imbalance in existing datasets. Our proposed MLLM-derived MIS can guide the curation of modality-balanced datasets that advance multimodal learning and enhance MLLMs' ability to understand and utilize synergistic relations across modalities.
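The permuted-feature ablation can be sketched as a permutation-importance check: shuffle one modality's features across questions and measure how much a model's accuracy drops. This is an illustrative proxy written under assumptions, not the paper's definition of MIS; the `model` callable, the modality names, and the function names are all hypothetical.

```python
import random
from typing import Callable, Dict, List, Sequence

Sample = Dict[str, object]  # modality name -> that modality's features for one question
Model = Callable[[Sample], object]


def accuracy(model: Model, samples: Sequence[Sample], labels: Sequence[object]) -> float:
    """Fraction of questions answered correctly."""
    return sum(model(s) == y for s, y in zip(samples, labels)) / len(labels)


def permutation_importance(model: Model, samples: Sequence[Sample],
                           labels: Sequence[object], modality: str, seed: int = 0) -> float:
    """Accuracy drop when `modality` is shuffled across questions (others untouched)."""
    rng = random.Random(seed)
    shuffled = [s[modality] for s in samples]
    rng.shuffle(shuffled)
    permuted: List[Sample] = [{**s, modality: f} for s, f in zip(samples, shuffled)]
    return accuracy(model, samples, labels) - accuracy(model, permuted, labels)
```

For a toy model that answers only from `"video"` features, shuffling `"text"` leaves accuracy unchanged (importance 0), while shuffling `"video"` causes a large drop. A dataset on which every modality but one scores near zero would be an instance of the unimodal bias the abstract describes.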

Distributionally Robust Statistical Verification with Imprecise Neural Networks

Aug 30, 2023

Abstract: A particularly challenging problem in AI safety is providing guarantees on the behavior of high-dimensional autonomous systems. Verification approaches centered on reachability analysis fail to scale, and purely statistical approaches are constrained by their distributional assumptions about the sampling process. Instead, we pose a distributionally robust version of the statistical verification problem for black-box systems, in which our performance guarantees hold over a large family of distributions. This paper proposes a novel approach based on a combination of active learning, uncertainty quantification, and neural network verification. A central piece of our approach is an ensemble technique called Imprecise Neural Networks, which provides the uncertainty that guides active learning. The active learning uses the exhaustive neural-network verification tool Sherlock to collect samples. An evaluation on multiple physical simulators in the OpenAI Gym MuJoCo environments with reinforcement-learned controllers demonstrates that our approach can provide useful and scalable guarantees for high-dimensional systems.
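The role of the ensemble can be illustrated with a small stand-in: several models trained on bootstrap resamples give, at any input, a lower and an upper prediction, and the width of that interval serves as the uncertainty signal that picks the next sample to label. In this sketch the ensemble members are one-dimensional least-squares lines rather than neural networks, the candidate pool is assumed to be given, and the Sherlock verification step is not modeled; all function names are hypothetical.

```python
import random
from typing import List, Sequence, Tuple

Line = Tuple[float, float]  # (slope, intercept)


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Line:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0.0:  # degenerate resample: all x identical
        return 0.0, my
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return slope, my - slope * mx


def bootstrap_ensemble(xs: Sequence[float], ys: Sequence[float],
                       members: int = 10, seed: int = 0) -> List[Line]:
    """Train each member on a bootstrap resample of the labeled data."""
    rng = random.Random(seed)
    n = len(xs)
    ensemble: List[Line] = []
    for _ in range(members):
        idx = [rng.randrange(n) for _ in range(n)]
        ensemble.append(fit_line([xs[i] for i in idx], [ys[i] for i in idx]))
    return ensemble


def prediction_interval(ensemble: Sequence[Line], x: float) -> Tuple[float, float]:
    """Lower and upper envelope of the members' predictions at x."""
    preds = [slope * x + intercept for slope, intercept in ensemble]
    return min(preds), max(preds)


def select_query(ensemble: Sequence[Line], candidates: Sequence[float]) -> Tuple[float, float]:
    """Return the candidate input with the widest interval, and that width."""
    widths = [hi - lo for lo, hi in (prediction_interval(ensemble, c) for c in candidates)]
    best = max(range(len(candidates)), key=lambda i: widths[i])
    return candidates[best], widths[best]
```

Inputs far from the labeled data, where the members disagree most, are queried first; in the approach described above, such queries would instead be answered by simulation and checked with the verification tool.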

Take Me Home: Reversing Distribution Shifts using Reinforcement Learning

Feb 24, 2023

Abstract: Deep neural networks have repeatedly been shown to be non-robust to the uncertainties of the real world. Even subtle adversarial attacks and naturally occurring distribution shifts wreak havoc on systems relying on deep neural networks. In response, current state-of-the-art techniques use data augmentation to enrich the model's training distribution and consequently improve robustness to natural distribution shifts. We propose an alternative approach that allows the system to recover from distribution shifts online. Specifically, our method applies a sequence of semantic-preserving transformations to bring the shifted data closer in distribution to the training set, as measured by the Wasserstein distance. We formulate the problem of selecting this sequence as a Markov decision process (MDP), which we solve using reinforcement learning. To aid our estimates of the Wasserstein distance, we reduce dimensionality through orthonormal projection. We provide both theoretical and empirical evidence that orthonormal projection preserves characteristics of the data at the distributional level. Finally, we apply our distribution shift recovery approach to the ImageNet-C benchmark for distribution shifts, targeting shifts due to additive noise and image histogram modifications. We demonstrate an improvement in average accuracy of up to 14.21% across a variety of state-of-the-art ImageNet classifiers.
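The dimensionality-reduction step can be sketched by projecting both samples onto a few random orthonormal directions and comparing the projected distributions. As a simplification assumed here, the sketch averages exact one-dimensional Wasserstein-1 distances along each direction (a sliced-Wasserstein-style estimate) instead of computing the full multi-dimensional distance in the projected space. The number of directions `k` and all function names are hypothetical, and the two samples must have the same number of points.

```python
import math
import random
from typing import List, Sequence

Vector = List[float]


def random_orthonormal(dim: int, k: int, seed: int = 0) -> List[Vector]:
    """k random orthonormal directions in R^dim via Gram-Schmidt on Gaussian vectors."""
    if not 0 < k <= dim:
        raise ValueError("need 0 < k <= dim")
    rng = random.Random(seed)
    basis: List[Vector] = []
    while len(basis) < k:
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for b in basis:  # remove components along directions already chosen
            dot = sum(vi * bi for vi, bi in zip(v, b))
            v = [vi - dot * bi for vi, bi in zip(v, b)]
        norm = math.sqrt(sum(vi * vi for vi in v))
        if norm > 1e-12:
            basis.append([vi / norm for vi in v])
    return basis


def wasserstein_1d(a: Sequence[float], b: Sequence[float]) -> float:
    """Exact W1 between two equal-size empirical samples on the real line."""
    if len(a) != len(b):
        raise ValueError("samples must have equal size")
    return sum(abs(x - y) for x, y in zip(sorted(a), sorted(b))) / len(a)


def projected_wasserstein(X: Sequence[Sequence[float]], Y: Sequence[Sequence[float]],
                          k: int, seed: int = 0) -> float:
    """Average 1-D W1 distance after projecting both samples onto k orthonormal directions."""
    basis = random_orthonormal(len(X[0]), k, seed)
    total = 0.0
    for q in basis:
        px = [sum(qi * xi for qi, xi in zip(q, x)) for x in X]
        py = [sum(qi * yi for qi, yi in zip(q, y)) for y in Y]
        total += wasserstein_1d(px, py)
    return total / k
```

In a recovery loop, a candidate transformation sequence would be scored by how much it lowers this estimate between the shifted batch and a training batch. As a quick sanity check, shifting every feature by a constant `c` raises the estimate linearly in `c`.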

Imprecise Bayesian Neural Networks

Feb 19, 2023

Abstract: Uncertainty quantification and robustness to distribution shifts are important goals in machine learning and artificial intelligence. Although Bayesian neural networks (BNNs) allow the uncertainty in their predictions to be assessed, different sources of uncertainty remain indistinguishable. We present imprecise Bayesian neural networks (IBNNs), which generalize standard BNNs and overcome some of their drawbacks. Standard BNNs are trained using a single prior and a single likelihood distribution, whereas IBNNs are trained using credal prior and likelihood sets. IBNNs make it possible to distinguish between aleatoric and epistemic uncertainties and to quantify them. In addition, IBNNs are robust in the sense of Bayesian sensitivity analysis, and are more robust than BNNs to distribution shift. They can also be used to compute sets of outcomes that enjoy PAC-like properties. We apply IBNNs to two case studies: modeling blood glucose and insulin dynamics for artificial pancreas control, and motion prediction in autonomous driving scenarios. We show that IBNNs perform better than an ensemble-of-BNNs benchmark.
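One common way to split uncertainty over a credal set (assumed here; the paper's exact measure may differ) uses upper and lower entropy: the largest entropy across the set measures total uncertainty, the smallest measures aleatoric uncertainty, and their gap measures epistemic uncertainty. The sketch below evaluates these only at a finite set of extreme predictive distributions, for example one per prior/likelihood pair. Because entropy is concave, this gives the exact lower entropy of their convex hull but only a lower bound on its upper entropy. The function names are hypothetical.

```python
import math
from typing import Dict, Sequence


def entropy(p: Sequence[float]) -> float:
    """Shannon entropy in nats."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0.0)


def credal_uncertainty(extreme_points: Sequence[Sequence[float]]) -> Dict[str, float]:
    """Upper/lower-entropy decomposition over a finite set of predictive distributions."""
    for p in extreme_points:
        if any(pi < 0.0 for pi in p) or abs(sum(p) - 1.0) > 1e-9:
            raise ValueError("each extreme point must be a probability vector")
    entropies = [entropy(p) for p in extreme_points]
    upper, lower = max(entropies), min(entropies)
    return {"total": upper, "aleatoric": lower, "epistemic": upper - lower}
```

If every member of the set agrees, the epistemic term is zero; disagreement between members, such as `[0.5, 0.5]` versus `[0.9, 0.1]`, shows up as a positive epistemic term, which a single-prior BNN cannot separate out.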

Electroanatomic Mapping to determine Scar Regions in patients with Atrial Fibrillation

Oct 23, 2022

Abstract: Left atrial voltage maps are routinely acquired during electroanatomic mapping in patients undergoing catheter ablation for atrial fibrillation (AF). For patients with prior catheter ablation whose maps are acquired in sinus rhythm, the voltage map can be used to identify low voltage areas using a threshold of 0.2 to 0.45 mV. However, such a voltage threshold for maps acquired during atrial fibrillation has not been well established. A prerequisite for defining this threshold is to maximize the topological match between the low voltage areas in electroanatomic maps acquired during atrial fibrillation and those acquired during sinus rhythm. This paper demonstrates a new technique to improve the sensitivity and specificity of the matched low voltage areas. This is achieved by computing omni-directional bipolar voltages and applying Gaussian process regression based interpolation to derive the AF map. The proposed method is evaluated on a test cohort of 7 male patients, with a total of 46,589 data points included in the analysis. The low voltage areas in the posterior left atrium and pulmonary vein junction are determined using both the standard method and the proposed method. Overall, the proposed method showed patient-specific sensitivity and specificity in matching low voltage areas of 75.70% and 65.55%, respectively, for a geometric mean of 70.69%. On average, compared with the standard method, it improved the geometric mean by 3.00%, sensitivity by 7.88%, and specificity by 0.30%. These results show that the proposed method improves the matching of low voltage areas, which may help develop a voltage threshold that better identifies low voltage areas in the left atrium of patients in atrial fibrillation.
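The interpolation-and-matching pipeline can be sketched under assumptions that are not from the paper: a squared-exponential (RBF) kernel with a fixed length scale in millimeters, a small fixed noise variance, the sinus-rhythm map as the reference, and voltage thresholds supplied by the caller. The sketch interpolates sparse voltages onto query points with the Gaussian process posterior mean, thresholds both maps into low-voltage masks, and scores their agreement with sensitivity, specificity, and their geometric mean. Omni-directional bipolar voltage computation is not modeled, and all names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]  # (x, y, z) position on the atrial surface, in mm


def rbf(a: Point, b: Point, length_scale: float) -> float:
    """Squared-exponential kernel."""
    sq = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-sq / (2.0 * length_scale**2))


def solve(A: List[List[float]], b: List[float]) -> List[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented copy; A is not modified
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def gp_posterior_mean(train_pts: Sequence[Point], train_vals: Sequence[float],
                      query_pts: Sequence[Point], length_scale: float = 5.0,
                      noise_var: float = 1e-3) -> List[float]:
    """GP regression mean: k(X*, X) (K + noise_var * I)^{-1} y."""
    K = [[rbf(a, b, length_scale) + (noise_var if i == j else 0.0)
          for j, b in enumerate(train_pts)] for i, a in enumerate(train_pts)]
    alpha = solve(K, list(train_vals))
    return [sum(rbf(q, p, length_scale) * a_i for p, a_i in zip(train_pts, alpha))
            for q in query_pts]


def low_voltage_mask(voltages: Sequence[float], threshold_mv: float) -> List[bool]:
    """True where the (interpolated) voltage falls below the threshold."""
    return [v < threshold_mv for v in voltages]


def match_metrics(af_low: Sequence[bool], sr_low: Sequence[bool]) -> Tuple[float, float, float]:
    """Sensitivity, specificity, and their geometric mean, with the SR mask as reference."""
    pairs = list(zip(af_low, sr_low))
    tp = sum(a and s for a, s in pairs)
    fn = sum((not a) and s for a, s in pairs)
    tn = sum((not a) and (not s) for a, s in pairs)
    fp = sum(a and (not s) for a, s in pairs)
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return sensitivity, specificity, math.sqrt(sensitivity * specificity)
```

Sweeping `threshold_mv` for the AF mask while holding the sinus-rhythm threshold fixed, and keeping the value with the highest geometric mean, is one way such matching could inform the choice of an AF voltage threshold.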
