Konstantinos Votis

Centre for Research and Technology Hellas, Information Technologies Institute

Physics-Aware RIS Codebook Compilation for Near-Field Beam Focusing under Mutual Coupling and Specular Reflections

Jan 19, 2026

Abstract: Next-generation wireless networks are envisioned to achieve reliable, low-latency connectivity within environments characterized by strong multipath and severe channel variability. Programmable wireless environments (PWEs) address this challenge by enabling deterministic control of electromagnetic (EM) propagation through software-defined reconfigurable intelligent surfaces (RISs). However, effectively configuring RISs in real time remains a major bottleneck, particularly under near-field conditions where mutual coupling and specular reflections alter the intended response. To overcome this limitation, this paper introduces MATCH, a physics-based codebook compilation algorithm that explicitly accounts for the EM coupling among RIS unit cells and the reflective interactions with surrounding structures, ensuring that the resulting codebooks remain consistent with the physical characteristics of the environment. Finally, MATCH is evaluated under a full-wave simulation framework incorporating mutual coupling and secondary reflections, demonstrating its ability to concentrate scattered energy within the focal region and confirming that physics-consistent, codebook-based optimization constitutes an effective approach for practical and efficient RIS configuration.

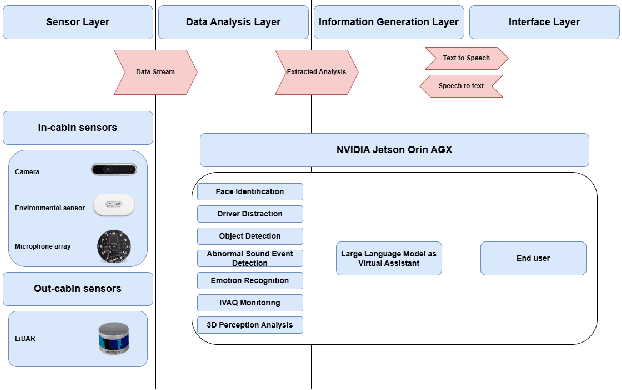

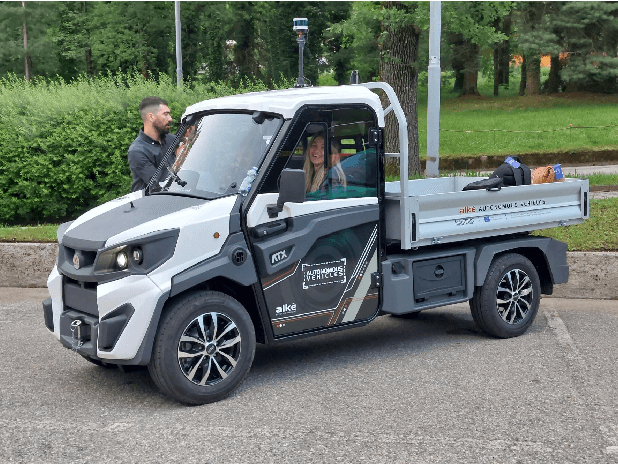

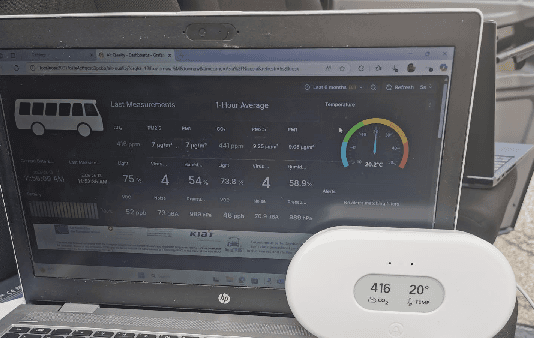

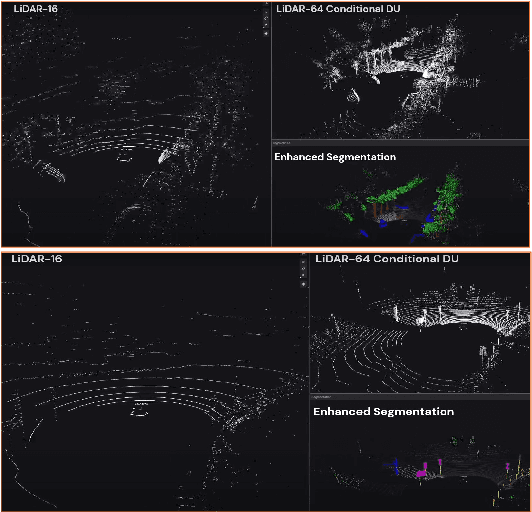

A holistic perception system of internal and external monitoring for ground autonomous vehicles: AutoTRUST paradigm

Aug 25, 2025

Abstract: This paper introduces a holistic perception system for internal and external monitoring of autonomous vehicles, with the aim of demonstrating a novel AI-leveraged self-adaptive framework of advanced vehicle technologies and solutions that optimize perception and experience on board. The internal monitoring system relies on a multi-camera setup designed for predicting and identifying driver and occupant behavior through facial recognition, and additionally exploits a large language model as a virtual assistant. Moreover, the in-cabin monitoring system includes AI-empowered smart sensors that measure air quality and perform thermal comfort analysis for efficient on- and off-boarding. The external monitoring system, in turn, perceives the vehicle's surrounding environment through a cost-efficient LiDAR-based semantic segmentation approach that performs highly accurate and efficient super-resolution on low-quality raw 3D point clouds. The holistic perception framework is developed in the context of the EU's Horizon Europe programme AutoTRUST, and has been integrated and deployed on a real electric vehicle provided by ALKE. Experimental validation and evaluation at the integration site of the Joint Research Centre at Ispra, Italy, highlight increased performance and efficiency of the modular blocks of the proposed perception architecture.

Multi-Sensor Fusion for UAV Classification Based on Feature Maps of Image and Radar Data

Oct 21, 2024

Abstract: The unique cost, flexibility, speed, and efficiency of modern UAVs make them an attractive choice in many applications in contemporary society. This, however, has led to an ever-increasing number of reported malicious or accidental incidents, making the development of UAV detection and classification mechanisms essential. We propose a methodology for developing a system that fuses already processed multi-sensor data into a new Deep Neural Network (DNN) to increase its classification accuracy for UAV detection. The DNN model fuses high-level features extracted from individual object detection and classification models associated with thermal, optronic, and radar data. Additionally, emphasis is given to the model's Convolutional Neural Network (CNN) based architecture, which combines the features of the three sensor modalities by stacking the extracted image features of the thermal and optronic sensors, achieving higher classification accuracy than each sensor alone.

CEASEFIRE: An AI-powered system for combatting illicit firearms trafficking

Jun 21, 2024

Abstract: Modern technologies have led illicit firearms trafficking to partially merge with cybercrime, while simultaneously permitting its off-line aspects to become more sophisticated. Law enforcement officers face difficult challenges that require hi-tech solutions. This article presents a real-world system, powered by advanced Artificial Intelligence, for assisting them in their everyday work.

From Detection to Action Recognition: An Edge-Based Pipeline for Robot Human Perception

Dec 06, 2023

Abstract: Mobile service robots are proving to be increasingly effective in a range of applications, such as healthcare, monitoring Activities of Daily Living (ADL), and facilitating Ambient Assisted Living (AAL). These robots rely heavily on Human Action Recognition (HAR) to interpret human actions and intentions. However, for HAR to function effectively on service robots, it requires prior knowledge of human presence (human detection) and identification of the individuals to monitor (human tracking). In this work, we propose an end-to-end pipeline that encompasses the entire process, starting from human detection and tracking and leading to action recognition. The pipeline is designed to operate in near real-time while ensuring all stages of processing are performed on the edge, reducing the need for centralised computation. To identify the most suitable models for our mobile robot, we conducted a series of experiments comparing state-of-the-art solutions based on both their detection performance and efficiency. To evaluate the effectiveness of our proposed pipeline, we introduce a dataset comprising daily household activities. By presenting our findings and analysing the results, we demonstrate the efficacy of our approach in enabling mobile robots to understand and respond to human behaviour in real-world scenarios, relying mainly on the data from their RGB cameras.

An Open Platform for Simulating the Physical Layer of 6G Communication Systems with Multiple Intelligent Surfaces

Nov 03, 2022

Abstract: Reconfigurable Intelligent Surfaces (RIS) constitute a promising technology that could fulfill the extreme performance and capacity needs of the upcoming 6G wireless networks, by offering software-defined control over wireless propagation phenomena. Despite the existence of many theoretical models describing various aspects of RIS from the signal processing perspective (e.g., channel fading models), there is no open platform to simulate and study their actual physical-layer behavior, especially in the multi-RIS case. In this paper, we develop an open simulation platform, aimed at modeling the physical-layer electromagnetic coupling and propagation between RIS pairs. We present the platform by initially designing a basic unit cell, and then proceeding to progressively model and simulate multiple and larger RISs. The platform can be used for producing verifiable stochastic models for wireless communication in multi-RIS deployments, such as vehicle-to-everything (V2X) communications in autonomous vehicles and cybersecurity schemes, while its code is freely available to the public.

Open Challenges in Synthetic Speech Detection

Sep 15, 2022

Abstract: In this paper, the current status and open challenges of synthetic speech detection are addressed. The work comprises an initial analysis of available open datasets and of existing detection methods, a description of the requirements for new research datasets that comply with regulations and better represent real-case scenarios, and a discussion of the desired characteristics of future trustworthy detection methods in terms of both functional and non-functional requirements. Compared to other works, which are based on specific detection solutions or present a single dataset of synthetic speech, our paper is meant to orient future state-of-the-art research in the domain, to quickly lessen the current gap between synthesis and detection approaches.

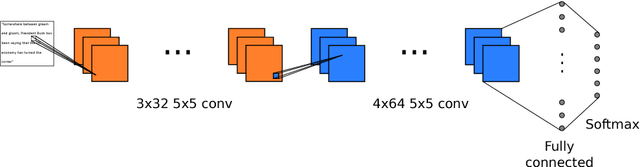

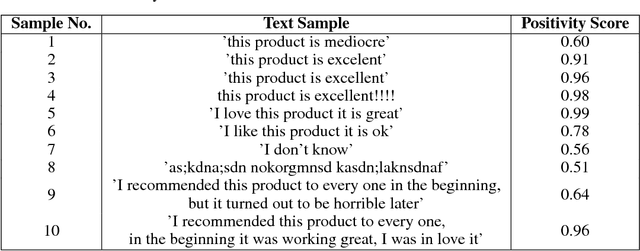

Image-based Natural Language Understanding Using 2D Convolutional Neural Networks

Nov 06, 2018

Abstract: We propose a new approach to natural language understanding in which we consider the input text as an image and apply 2D Convolutional Neural Networks to learn the local and global semantics of the sentences from the variations of the visual patterns of words. Our approach demonstrates that it is possible to get semantically meaningful features from images with text without using optical character recognition and sequential processing pipelines, techniques that traditional Natural Language Understanding algorithms require. To validate our approach, we present results for two applications: text classification and dialog modeling. Using a 2D Convolutional Neural Network, we were able to outperform the state-of-the-art accuracy results of non-Latin alphabet-based text classification and achieved promising results for eight text classification datasets. Furthermore, our approach outperformed the memory networks when using out-of-vocabulary entities from task 4 of the bAbI dialog dataset.
