Kiyoshi Izumi

Are Generative AI Agents Effective Personalized Financial Advisors?

Apr 08, 2025

Abstract: Large language model-based agents are becoming increasingly popular as a low-cost mechanism to provide personalized, conversational advice, and have demonstrated impressive capabilities in relatively simple scenarios, such as movie recommendations. But how do these agents perform in complex, high-stakes domains, where domain expertise is essential and mistakes carry substantial risk? This paper investigates the effectiveness of LLM-advisors in the finance domain, focusing on three distinct challenges: (1) eliciting user preferences when users themselves may be unsure of their needs, (2) providing personalized guidance for diverse investment preferences, and (3) leveraging advisor personality to build relationships and foster trust. Via a lab-based user study with 64 participants, we show that LLM-advisors often match human advisor performance when eliciting preferences, although they can struggle to resolve conflicting user needs. When providing personalized advice, the LLM was able to positively influence user behavior, but demonstrated clear failure modes. Our results show that accurate preference elicitation is key; otherwise, the LLM-advisor has little impact or can even direct the investor toward unsuitable assets. More worryingly, users appear insensitive to the quality of the advice they receive; worse, their preferences and the actual quality of the advice can be inversely related. Indeed, users reported a preference for, increased satisfaction with, and greater emotional trust in LLMs adopting an extroverted persona, even though those agents provided worse advice.

The Impact and Feasibility of Self-Confidence Shaping for AI-Assisted Decision-Making

Feb 20, 2025

Abstract: In AI-assisted decision-making, it is crucial but challenging for humans to appropriately rely on AI, especially in high-stakes domains such as finance and healthcare. This paper addresses this problem from a human-centered perspective by presenting an intervention for self-confidence shaping, designed to calibrate self-confidence at a targeted level. We first demonstrate the impact of self-confidence shaping by quantifying the upper-bound improvement in human-AI team performance. Our behavioral experiments with 121 participants show that self-confidence shaping can improve human-AI team performance by nearly 50% by mitigating both over- and under-reliance on AI. We then introduce a self-confidence prediction task to identify when our intervention is needed. Our results show that simple machine-learning models achieve 67% accuracy in predicting self-confidence. We further illustrate the feasibility of such interventions. The observed relationship between sentiment and self-confidence suggests that modifying sentiment could be a viable strategy for shaping self-confidence. Finally, we outline future research directions to support the deployment of self-confidence shaping in a real-world scenario for effective human-AI collaboration.

Beyond Turing Test: Can GPT-4 Sway Experts' Decisions?

Sep 25, 2024

Abstract: In the post-Turing era, evaluating large language models (LLMs) involves assessing generated text based on readers' reactions rather than merely its indistinguishability from human-produced content. This paper explores how LLM-generated text impacts readers' decisions, focusing on both amateur and expert audiences. Our findings indicate that GPT-4 can generate persuasive analyses affecting the decisions of both amateurs and professionals. Furthermore, we evaluate the generated text from the aspects of grammar, convincingness, logical coherence, and usefulness. The results highlight a high correlation between real-world evaluation through audience reactions and the current multi-dimensional evaluators commonly used for generative models. Overall, this paper shows the potential and risk of using generated text to sway human decisions and also points out a new direction for evaluating generated text, i.e., leveraging the reactions and decisions of readers. We release our dataset to assist future research.

Interactive DualChecker for Mitigating Hallucinations in Distilling Large Language Models

Aug 22, 2024

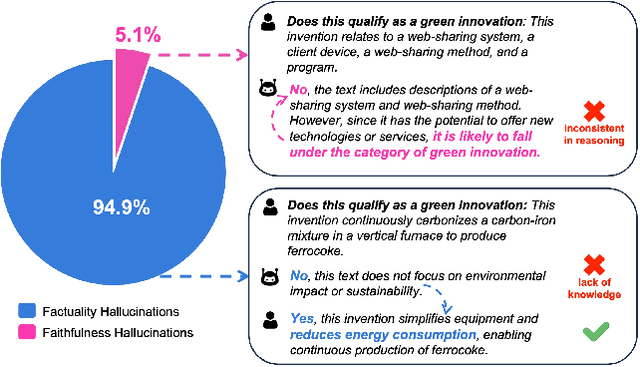

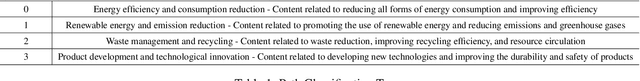

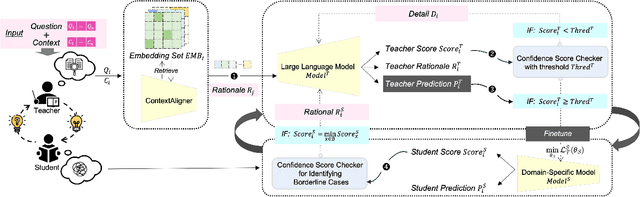

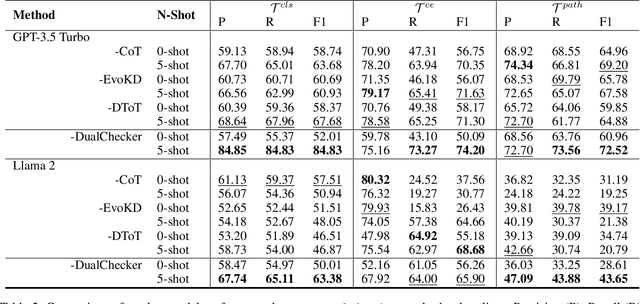

Abstract: Large Language Models (LLMs) have demonstrated exceptional capabilities across various machine learning (ML) tasks. Given the high costs of creating annotated datasets for supervised learning, LLMs offer a valuable alternative by enabling effective few-shot in-context learning. However, these models can produce hallucinations, particularly in domains with incomplete knowledge. Additionally, current methods for knowledge distillation using LLMs often struggle to enhance the effectiveness of both teacher and student models. To address these challenges, we introduce DualChecker, an innovative framework designed to mitigate hallucinations and improve the performance of both teacher and student models during knowledge distillation. DualChecker employs ContextAligner to ensure that the context provided by teacher models aligns with human labeling standards. It also features a dynamic checker system that enhances model interaction: one component re-prompts teacher models with more detailed content when they show low confidence, and another identifies borderline cases from student models to refine the teaching templates. This interactive process promotes continuous improvement and effective knowledge transfer between the models. We evaluate DualChecker using a green innovation textual dataset that includes binary, multiclass, and token classification tasks. The experimental results show that DualChecker significantly outperforms existing state-of-the-art methods, achieving up to a 17% improvement in F1 score for teacher models and 10% for student models. Notably, student models fine-tuned with LLM predictions perform comparably to those fine-tuned with actual data, even in a challenging domain. We make all datasets, models, and code from this research publicly available.
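The abstract names two checks: re-prompting the teacher when its confidence is low, and flagging borderline student predictions. The sketch below illustrates only that control flow, under loud assumptions: the teacher is a hypothetical callable returning a `(label, confidence)` pair, its boolean `detailed` argument stands in for "more detailed content" in the prompt, and the threshold and margin values are illustrative, not DualChecker's actual settings.

```python
from typing import Callable, List, Tuple

# Illustrative values only; the abstract does not state DualChecker's thresholds.
LOW_CONFIDENCE = 0.6
BORDERLINE_MARGIN = 0.1

# Hypothetical teacher interface: (text, detailed_prompt) -> (label, confidence).
Teacher = Callable[[str, bool], Tuple[str, float]]


def teacher_with_recheck(text: str, teacher: Teacher,
                         threshold: float = LOW_CONFIDENCE) -> Tuple[str, float]:
    """Query the teacher; if its confidence is below threshold, re-prompt in detailed mode."""
    label, conf = teacher(text, False)
    if conf < threshold:
        label, conf = teacher(text, True)
    return label, conf


def find_borderline(probs: List[float], margin: float = BORDERLINE_MARGIN) -> List[int]:
    """Indices of binary student predictions whose positive-class probability is within margin of 0.5."""
    return [i for i, p in enumerate(probs) if abs(p - 0.5) <= margin]


if __name__ == "__main__":
    # Toy teacher: unsure on the short prompt, confident on the detailed one.
    def toy_teacher(text: str, detailed: bool) -> Tuple[str, float]:
        return ("green", 0.9) if detailed else ("not_green", 0.4)

    print(teacher_with_recheck("Company X installed solar panels.", toy_teacher))
    print(find_borderline([0.55, 0.9, 0.45, 0.2]))
```

How the flagged borderline cases are then used to refine the teaching templates is not specified in the abstract, so that step is left out.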

SETN: Stock Embedding Enhanced with Textual and Network Information

Aug 06, 2024

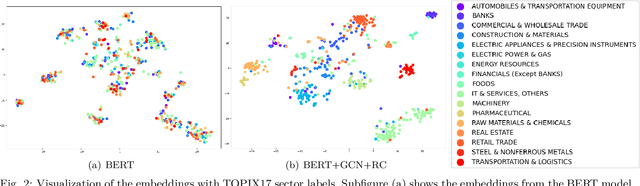

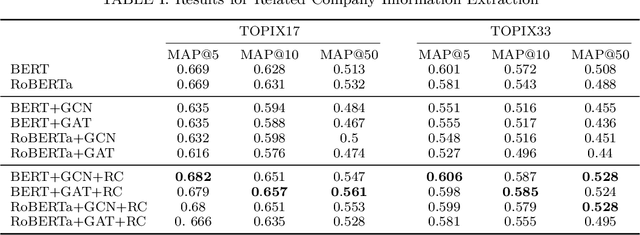

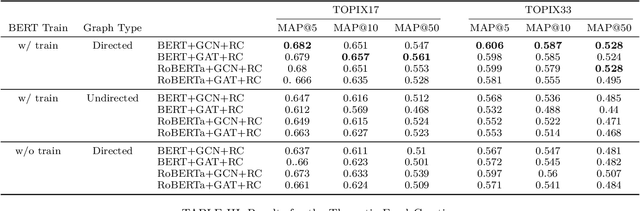

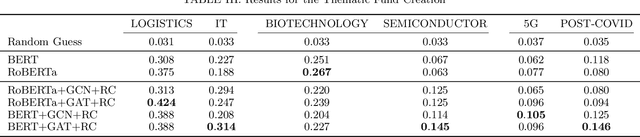

Abstract: Stock embedding is a method for representing stocks as vectors. Demand for such stock embeddings is growing in the wealth management sector, and the method has been applied to various tasks such as stock price prediction, portfolio optimization, and similar-fund identification. Stock embeddings have the advantage of enabling the quantification of relative relationships between stocks, and they can extract useful information from unstructured data such as text and network data. In this study, we propose stock embedding enhanced with textual and network information (SETN), using a domain-adaptive pre-trained transformer-based model to embed textual information and a graph neural network model to capture network information. We evaluate the performance of our proposed model on related-company information extraction tasks. We also demonstrate that stock embeddings obtained from the proposed model perform better in creating thematic funds than those obtained from baseline methods, providing a promising pathway for various applications in the wealth management industry.
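Once stocks are embedded as vectors, their relative relationships can be quantified with a similarity measure. As a minimal sketch, assuming cosine similarity and a single "theme" vector (both illustrative choices, not necessarily what SETN uses), a thematic fund can be drafted by ranking stocks by similarity to the theme. The tickers and two-dimensional vectors are made-up toy data written by hand, not outputs of a transformer or graph neural network.

```python
import math
from typing import Dict, List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors; returns 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def thematic_fund(embeddings: Dict[str, List[float]], theme: List[float], k: int) -> List[str]:
    """Return the k tickers whose embeddings are most similar to the theme vector."""
    ranked = sorted(embeddings, key=lambda t: cosine(embeddings[t], theme), reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    # Toy 2-D embeddings for hypothetical tickers.
    toy = {"AAA": [1.0, 0.0], "BBB": [0.7, 0.7], "CCC": [0.0, 1.0]}
    print(thematic_fund(toy, theme=[1.0, 0.1], k=2))
```

Cosine similarity ignores vector length, so only the direction of each embedding matters for the ranking.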

Summarization of Investment Reports Using Pre-trained Model

Aug 03, 2024

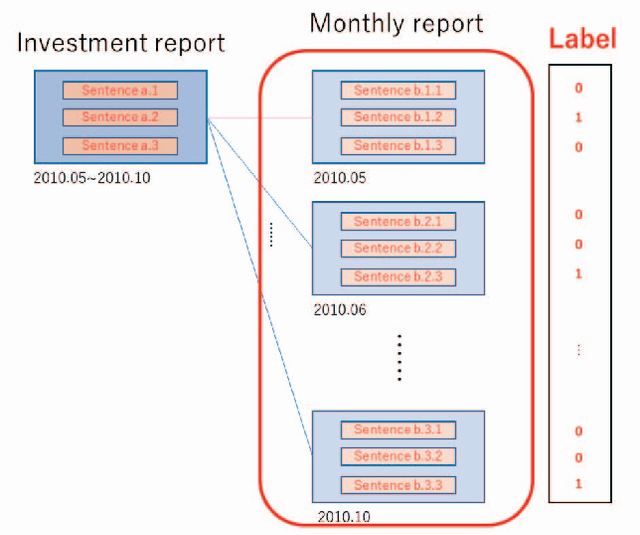

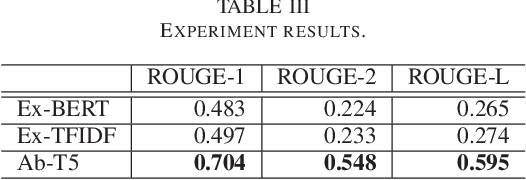

Abstract: In this paper, we attempt to produce investment reports by summarizing monthly reports. Fund managers have a wide range of tasks, one of which is the preparation of investment reports. In addition to preparing monthly reports on fund management, fund managers prepare management reports that summarize these monthly reports every six months or once a year. The preparation of fund reports is a labor-intensive and time-consuming task. Therefore, in this paper, we tackle the summarization of monthly reports into investment reports using transformer-based models. There are two main types of summarization: extractive and abstractive. This study builds models of both types and examines which is more useful for summarizing investment reports.
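To make the distinction concrete: an extractive summarizer selects sentences verbatim from the source, whereas an abstractive one generates new text. The sketch below is a simple word-frequency extractive baseline, not the transformer-based models used in the paper; the naive sentence splitter and the English-only tokenizer are simplifying assumptions.

```python
import re
from collections import Counter
from typing import List


def _words(sentence: str) -> List[str]:
    """Lowercased alphabetic tokens (English-only, a simplifying assumption)."""
    return re.findall(r"[a-z]+", sentence.lower())


def extractive_summary(text: str, n: int = 2) -> List[str]:
    """Pick the n sentences with the highest average word frequency, kept in source order."""
    # Naive split after ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(w for s in sentences for w in _words(s))

    def score(s: str) -> float:
        toks = _words(s)
        return sum(freq[w] for w in toks) / len(toks) if toks else 0.0

    best = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)[:n]
    return [sentences[i] for i in sorted(best)]


if __name__ == "__main__":
    report = "The fund rose. Equities drove the fund. Weather was mild."
    print(extractive_summary(report, n=2))
```

Because every output sentence is copied from the input, an extractive summary cannot introduce unsupported wording, but it also cannot merge or rephrase content the way an abstractive model can.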

Discovery of Rare Causal Knowledge from Financial Statement Summaries

Aug 03, 2024

Abstract: What would happen if temperatures were subdued, resulting in a cool summer? One can easily imagine that air conditioner, ice cream, or beer sales would be suppressed as a result. Less obvious is that agricultural shipments might be delayed, or that soundproofing material sales might decrease. The ability to extract such causal knowledge is important, but it is also important to distinguish between cause-effect pairs that are known and those that are likely to be unknown, or rare. Therefore, in this paper, we propose a method for extracting rare causal knowledge from Japanese financial statement summaries produced by companies. Our method consists of three steps. First, it extracts sentences that include causal knowledge from the summaries using a machine learning method based on an extended language ontology. Second, it obtains causal knowledge from the extracted sentences using syntactic patterns. Finally, it extracts the rarest causal knowledge from the knowledge it has obtained.
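The second and third steps can be sketched in miniature. This toy version assumes English cue phrases (`due to`, `because of`, `led to`) in place of the paper's Japanese syntactic patterns, and it treats a cause-effect pair as rarer the less often it appears in the extracted set; the abstract does not specify the paper's actual rarity criterion, and the first step (machine-learning sentence filtering) is omitted.

```python
import re
from collections import Counter
from typing import List, Tuple

Pair = Tuple[str, str]  # (cause, effect)

# Hypothetical English cue patterns standing in for the paper's Japanese syntactic patterns.
PATTERNS = [
    re.compile(r"^(?P<effect>.+?) (?:due to|because of) (?P<cause>.+?)\.?$", re.IGNORECASE),
    re.compile(r"^(?P<cause>.+?) led to (?P<effect>.+?)\.?$", re.IGNORECASE),
]


def extract_pairs(sentences: List[str]) -> List[Pair]:
    """Apply the first matching pattern to each sentence and collect (cause, effect) pairs."""
    pairs: List[Pair] = []
    for sentence in sentences:
        for pattern in PATTERNS:
            m = pattern.match(sentence.strip())
            if m:
                pairs.append((m.group("cause").lower(), m.group("effect").lower()))
                break
    return pairs


def rarest(pairs: List[Pair], k: int = 1) -> List[Pair]:
    """Return the k least frequent distinct pairs (ties keep first-seen order)."""
    counts = Counter(pairs)
    return sorted(counts, key=lambda p: counts[p])[:k]


if __name__ == "__main__":
    summaries = [
        "Beer sales fell due to a cool summer.",
        "Beer sales fell due to a cool summer.",
        "A cool summer led to lower soundproofing sales.",
        "Revenue rose.",
    ]
    print(rarest(extract_pairs(summaries)))
```

Frequency within a single corpus is only a crude proxy: a pair can be rare in one company's filings yet widely known in general.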

LLMFactor: Extracting Profitable Factors through Prompts for Explainable Stock Movement Prediction

Jun 16, 2024

Abstract: Recently, Large Language Models (LLMs) have attracted significant attention for their exceptional performance across a broad range of tasks, particularly in text analysis. However, the finance sector presents a distinct challenge due to its dependence on time-series data for complex forecasting tasks. In this study, we introduce a novel framework called LLMFactor, which employs Sequential Knowledge-Guided Prompting (SKGP) to identify factors that influence stock movements using LLMs. Unlike previous methods that relied on keyphrases or sentiment analysis, this approach focuses on extracting factors more directly related to stock market dynamics, providing clear explanations for complex temporal changes. Our framework directs the LLMs to create background knowledge through a fill-in-the-blank strategy and then discerns potential factors affecting stock prices from related news. Guided by background knowledge and identified factors, we leverage historical stock prices in textual format to predict stock movement. An extensive evaluation of the LLMFactor framework across four benchmark datasets from both the U.S. and Chinese stock markets demonstrates its superiority over existing state-of-the-art methods and its effectiveness in financial time-series forecasting.
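The final step, feeding historical prices to the LLM in textual format alongside background knowledge and factors, might look like the following sketch. The prompt wording, the helpers `prices_to_text` and `build_prompt`, and the ticker are all hypothetical; SKGP's actual templates are not given in the abstract, and no LLM is called here.

```python
from typing import List, Tuple


def prices_to_text(ticker: str, history: List[Tuple[str, float]]) -> str:
    """Render (date, close) pairs as one sentence per day, relative to the previous close."""
    lines = []
    for (_, prev), (date, close) in zip(history, history[1:]):
        if close > prev:
            move = "up"
        elif close < prev:
            move = "down"
        else:
            move = "unchanged"
        lines.append(f"On {date}, {ticker} closed at {close:.2f} ({move} from {prev:.2f}).")
    return "\n".join(lines)


def build_prompt(ticker: str, background: str, factors: List[str],
                 history: List[Tuple[str, float]]) -> str:
    """Assemble a hypothetical prediction prompt from background, factors, and price text."""
    factor_lines = "\n".join(f"- {f}" for f in factors)
    return (
        f"Background: {background}\n"
        f"Factors from recent news:\n{factor_lines}\n"
        f"Price history:\n{prices_to_text(ticker, history)}\n"
        f"Will {ticker} rise or fall on the next trading day? Answer 'rise' or 'fall'."
    )


if __name__ == "__main__":
    history = [("2024-01-02", 100.0), ("2024-01-03", 101.5), ("2024-01-04", 101.5)]
    print(build_prompt("XYZ", "XYZ is a hypothetical chipmaker.",
                       ["Earnings beat expectations"], history))
```

Stating each move explicitly ("up", "down") spares the model from doing the arithmetic itself, which is one plausible reason to verbalize prices rather than pass raw numbers.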

Is ChatGPT the Future of Causal Text Mining? A Comprehensive Evaluation and Analysis

Feb 23, 2024

Abstract: Causality is fundamental in human cognition and has drawn attention in diverse research fields. With growing volumes of textual data, discerning causalities within text data is crucial, and causal text mining plays a pivotal role in extracting meaningful patterns. This study conducts comprehensive evaluations of ChatGPT's causal text mining capabilities. Firstly, we introduce a benchmark that extends beyond general English datasets, including domain-specific and non-English datasets. We also provide an evaluation framework to ensure fair comparisons between ChatGPT and previous approaches. Finally, our analysis outlines the limitations and future challenges in employing ChatGPT for causal text mining. Specifically, our analysis reveals that ChatGPT serves as a good starting point for various datasets. However, when equipped with a sufficient amount of training data, previous models still surpass ChatGPT's performance. Additionally, ChatGPT suffers from the tendency to falsely recognize non-causal sequences as causal sequences. These issues become even more pronounced with advanced versions of the model, such as GPT-4. In addition, we highlight the constraints of ChatGPT in handling complex causality types, including both intra/inter-sentential and implicit causality. The model also faces challenges with effectively leveraging in-context learning and domain adaptation. We release our code to support further research and development in this field.
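The tendency to label non-causal sequences as causal shows up as false positives, which lower precision while leaving recall untouched. A minimal sketch of the standard binary metrics, using made-up labels (1 = causal, 0 = non-causal) rather than data from the study:

```python
from typing import Dict, List


def binary_metrics(gold: List[int], pred: List[int]) -> Dict[str, float]:
    """Precision, recall and F1 for the positive (causal = 1) class."""
    tp = sum(1 for g, p in zip(gold, pred) if g == 1 and p == 1)
    fp = sum(1 for g, p in zip(gold, pred) if g == 0 and p == 1)  # non-causal labelled causal
    fn = sum(1 for g, p in zip(gold, pred) if g == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    gold = [1, 1, 0, 0]
    # A model that calls everything causal keeps full recall but halves precision.
    print(binary_metrics(gold, [1, 1, 1, 1]))
```

Reporting precision and recall separately, rather than F1 alone, makes this over-prediction pattern visible.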

PAMS: Platform for Artificial Market Simulations

Sep 19, 2023

Abstract: This paper presents a new artificial market simulation platform, PAMS: Platform for Artificial Market Simulations. PAMS is a Python-based simulator that integrates easily with deep learning and supports a variety of simulations that users can readily modify. In this paper, we demonstrate the effectiveness of PAMS through a study using agents that predict future prices by deep learning.
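For readers unfamiliar with artificial market simulation, the following self-contained toy shows the basic loop: each agent contributes demand every step, and the price moves in proportion to net demand. It does not use the PAMS API, and its rule-based fundamentalist and chartist agents are simple stand-ins for the deep-learning agents mentioned in the abstract; the linear price-impact rule and all parameters are illustrative assumptions.

```python
import random
from typing import List


def simulate(steps: int = 100, n_agents: int = 20, fundamental: float = 100.0,
             impact: float = 0.01, noise: float = 0.01, seed: int = 0) -> List[float]:
    """Toy agent-based market: price changes in proportion to aggregate agent demand."""
    rng = random.Random(seed)
    prices = [fundamental]
    for _ in range(steps):
        p = prices[-1]
        trend = p - prices[-2] if len(prices) > 1 else 0.0
        demand = 0.0
        for i in range(n_agents):
            if i % 2 == 0:
                # Fundamentalist: buys below the fundamental value, sells above it.
                demand += (fundamental - p) / fundamental
            else:
                # Chartist: extrapolates the most recent price change.
                demand += trend / p
            demand += rng.gauss(0.0, noise)  # idiosyncratic noise per agent
        prices.append(p * (1.0 + impact * demand))
    return prices


if __name__ == "__main__":
    path = simulate()
    print(f"start={path[0]:.2f} end={path[-1]:.2f} min={min(path):.2f} max={max(path):.2f}")
```

With `noise=0.0` the price stays at the fundamental value, which is a quick sanity check; replacing an agent's demand rule with a learned price predictor is where a deep-learning agent would plug in.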
