José Cano

Accelerating Transposed Convolutions on FPGA-based Edge Devices

Jul 10, 2025Abstract:Transposed Convolutions (TCONV) enable the up-scaling mechanism within generative Artificial Intelligence (AI) models. However, the predominant Input-Oriented Mapping (IOM) method for implementing TCONV has complex output mapping, overlapping sums, and ineffectual computations. These inefficiencies further exacerbate the performance bottleneck of TCONV and generative models on resource-constrained edge devices. To address this problem, in this paper we propose MM2IM, a hardware-software co-designed accelerator that combines Matrix Multiplication (MatMul) with col2IM to process TCONV layers on resource-constrained edge devices efficiently. Using the SECDA-TFLite design toolkit, we implement MM2IM and evaluate its performance across 261 TCONV problem configurations, achieving an average speedup of 1.9x against a dual-thread ARM Neon optimized CPU baseline. We then evaluate the performance of MM2IM on a range of TCONV layers from well-known generative models achieving up to 4.2x speedup, and compare it against similar resource-constrained TCONV accelerators, outperforming them by at least 2x GOPs/DSP. Finally, we evaluate MM2IM on the DCGAN and pix2pix GAN models, achieving up to 3x speedup and 2.4x energy reduction against the CPU baseline.

ICE-Pruning: An Iterative Cost-Efficient Pruning Pipeline for Deep Neural Networks

May 12, 2025Abstract:Pruning is a widely used method for compressing Deep Neural Networks (DNNs), where less relevant parameters are removed from a DNN model to reduce its size. However, removing parameters reduces model accuracy, so pruning is typically combined with fine-tuning, and sometimes other operations such as rewinding weights, to recover accuracy. A common approach is to repeatedly prune and then fine-tune, with increasing amounts of model parameters being removed in each step. While straightforward to implement, pruning pipelines that follow this approach are computationally expensive due to the need for repeated fine-tuning. In this paper we propose ICE-Pruning, an iterative pruning pipeline for DNNs that significantly decreases the time required for pruning by reducing the overall cost of fine-tuning, while maintaining a similar accuracy to existing pruning pipelines. ICE-Pruning is based on three main components: i) an automatic mechanism to determine after which pruning steps fine-tuning should be performed; ii) a freezing strategy for faster fine-tuning in each pruning step; and iii) a custom pruning-aware learning rate scheduler to further improve the accuracy of each pruning step and reduce the overall time consumption. We also propose an efficient auto-tuning stage for the hyperparameters (e.g., freezing percentage) introduced by the three components. We evaluate ICE-Pruning on several DNN models and datasets, showing that it can accelerate pruning by up to 9.61x. Code is available at https://github.com/gicLAB/ICE-Pruning

Quantitative Analysis of Deeply Quantized Tiny Neural Networks Robust to Adversarial Attacks

Mar 12, 2025Abstract:Reducing the memory footprint of Machine Learning (ML) models, especially Deep Neural Networks (DNNs), is imperative to facilitate their deployment on resource-constrained edge devices. However, a notable drawback of DNN models lies in their susceptibility to adversarial attacks, wherein minor input perturbations can deceive them. A primary challenge revolves around the development of accurate, resilient, and compact DNN models suitable for deployment on resource-constrained edge devices. This paper presents the outcomes of a compact DNN model that exhibits resilience against both black-box and white-box adversarial attacks. This work has achieved this resilience through training with the QKeras quantization-aware training framework. The study explores the potential of QKeras and an adversarial robustness technique, Jacobian Regularization (JR), to co-optimize the DNN architecture through per-layer JR methodology. As a result, this paper has devised a DNN model employing this co-optimization strategy based on Stochastic Ternary Quantization (STQ). Its performance was compared against existing DNN models in the face of various white-box and black-box attacks. The experimental findings revealed that, the proposed DNN model had small footprint and on average, it exhibited better performance than Quanos and DS-CNN MLCommons/TinyML (MLC/T) benchmarks when challenged with white-box and black-box attacks, respectively, on the CIFAR-10 image and Google Speech Commands audio datasets.

Exploiting Unstructured Sparsity in Fully Homomorphic Encrypted DNNs

Mar 12, 2025Abstract:The deployment of deep neural networks (DNNs) in privacy-sensitive environments is constrained by computational overheads in fully homomorphic encryption (FHE). This paper explores unstructured sparsity in FHE matrix multiplication schemes as a means of reducing this burden while maintaining model accuracy requirements. We demonstrate that sparsity can be exploited in arbitrary matrix multiplication, providing runtime benefits compared to a baseline naive algorithm at all sparsity levels. This is a notable departure from the plaintext domain, where there is a trade-off between sparsity and the overhead of the sparse multiplication algorithm. In addition, we propose three sparse multiplication schemes in FHE based on common plaintext sparse encodings. We demonstrate the performance gain is scheme-invariant; however, some sparse schemes vastly reduce the memory storage requirements of the encrypted matrix at high sparsity values. Our proposed sparse schemes yield an average performance gain of 2.5x at 50% unstructured sparsity, with our multi-threading scheme providing a 32.5x performance increase over the equivalent single-threaded sparse computation when utilizing 64 cores.

Biases in Edge Language Models: Detection, Analysis, and Mitigation

Feb 17, 2025Abstract:The integration of large language models (LLMs) on low-power edge devices such as Raspberry Pi, known as edge language models (ELMs), has introduced opportunities for more personalized, secure, and low-latency language intelligence that is accessible to all. However, the resource constraints inherent in edge devices and the lack of robust ethical safeguards in language models raise significant concerns about fairness, accountability, and transparency in model output generation. This paper conducts a comparative analysis of text-based bias across language model deployments on edge, cloud, and desktop environments, aiming to evaluate how deployment settings influence model fairness. Specifically, we examined an optimized Llama-2 model running on a Raspberry Pi 4; GPT 4o-mini, Gemini-1.5-flash, and Grok-beta models running on cloud servers; and Gemma2 and Mistral models running on a MacOS desktop machine. Our results demonstrate that Llama-2 running on Raspberry Pi 4 is 43.23% and 21.89% more prone to showing bias over time compared to models running on the desktop and cloud-based environments. We also propose the implementation of a feedback loop, a mechanism that iteratively adjusts model behavior based on previous outputs, where predefined constraint weights are applied layer-by-layer during inference, allowing the model to correct bias patterns, resulting in 79.28% reduction in model bias.

DQA: An Efficient Method for Deep Quantization of Deep Neural Network Activations

Dec 12, 2024Abstract:Quantization of Deep Neural Network (DNN) activations is a commonly used technique to reduce compute and memory demands during DNN inference, which can be particularly beneficial on resource-constrained devices. To achieve high accuracy, existing methods for quantizing activations rely on complex mathematical computations or perform extensive searches for the best hyper-parameters. However, these expensive operations are impractical on devices with limited computation capabilities, memory capacities, and energy budgets. Furthermore, many existing methods do not focus on sub-6-bit (or deep) quantization. To fill these gaps, in this paper we propose DQA (Deep Quantization of DNN Activations), a new method that focuses on sub-6-bit quantization of activations and leverages simple shifting-based operations and Huffman coding to be efficient and achieve high accuracy. We evaluate DQA with 3, 4, and 5-bit quantization levels and three different DNN models for two different tasks, image classification and image segmentation, on two different datasets. DQA shows significantly better accuracy (up to 29.28%) compared to the direct quantization method and the state-of-the-art NoisyQuant for sub-6-bit quantization.

Accelerating PoT Quantization on Edge Devices

Sep 30, 2024

Abstract:Non-uniform quantization, such as power-of-two (PoT) quantization, matches data distributions better than uniform quantization, which reduces the quantization error of Deep Neural Networks (DNNs). PoT quantization also allows bit-shift operations to replace multiplications, but there are limited studies on the efficiency of shift-based accelerators for PoT quantization. Furthermore, existing pipelines for accelerating PoT-quantized DNNs on edge devices are not open-source. In this paper, we first design shift-based processing elements (shift-PE) for different PoT quantization methods and evaluate their efficiency using synthetic benchmarks. Then we design a shift-based accelerator using our most efficient shift-PE and propose PoTAcc, an open-source pipeline for end-to-end acceleration of PoT-quantized DNNs on resource-constrained edge devices. Using PoTAcc, we evaluate the performance of our shift-based accelerator across three DNNs. On average, it achieves a 1.23x speedup and 1.24x energy reduction compared to a multiplier-based accelerator, and a 2.46x speedup and 1.83x energy reduction compared to CPU-only execution. Our code is available at https://github.com/gicLAB/PoTAcc

Designing Efficient LLM Accelerators for Edge Devices

Aug 01, 2024

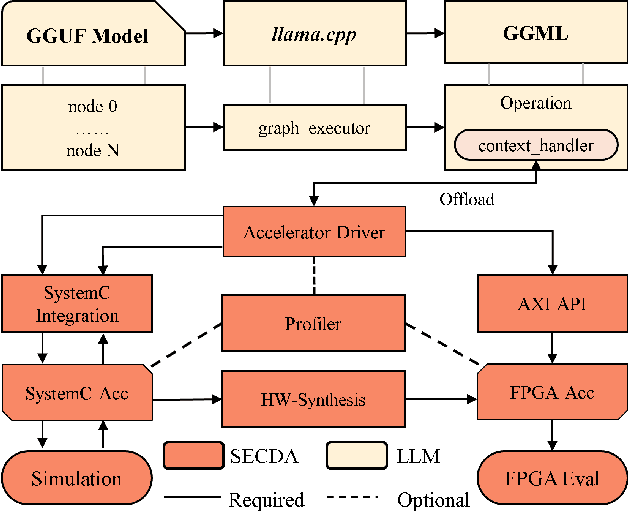

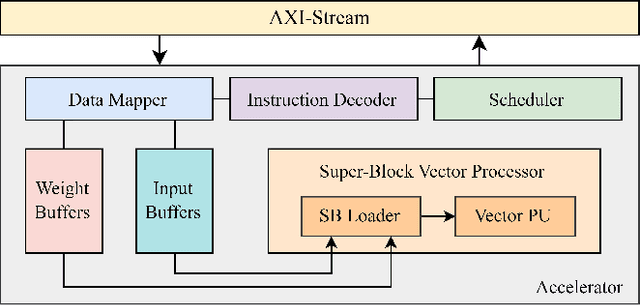

Abstract:The increase in open-source availability of Large Language Models (LLMs) has enabled users to deploy them on more and more resource-constrained edge devices to reduce reliance on network connections and provide more privacy. However, the high computation and memory demands of LLMs make their execution on resource-constrained edge devices challenging and inefficient. To address this issue, designing new and efficient edge accelerators for LLM inference is crucial. FPGA-based accelerators are ideal for LLM acceleration due to their reconfigurability, as they enable model-specific optimizations and higher performance per watt. However, creating and integrating FPGA-based accelerators for LLMs (particularly on edge devices) has proven challenging, mainly due to the limited hardware design flows for LLMs in existing FPGA platforms. To tackle this issue, in this paper we first propose a new design platform, named SECDA-LLM, that utilizes the SECDA methodology to streamline the process of designing, integrating, and deploying efficient FPGA-based LLM accelerators for the llama.cpp inference framework. We then demonstrate, through a case study, the potential benefits of SECDA-LLM by creating a new MatMul accelerator that supports block floating point quantized operations for LLMs. Our initial accelerator design, deployed on the PYNQ-Z1 board, reduces latency 1.7 seconds per token or ~2 seconds per word) by 11x over the dual-core Arm NEON-based CPU execution for the TinyLlama model.

Fix-Con: Automatic Fault Localization and Repair of Deep Learning Model Conversions

Dec 22, 2023Abstract:Converting deep learning models between frameworks is a common step to maximize model compatibility across devices and leverage optimization features that may be exclusively provided in one deep learning framework. However, this conversion process may be riddled with bugs, making the converted models either undeployable or problematic, considerably degrading their prediction correctness. We propose an automated approach for fault localization and repair, Fix-Con, during model conversion between deep learning frameworks. Fix-Con is capable of detecting and fixing faults introduced in model input, parameters, hyperparameters, and the model graph during conversion. Fix-Con uses a set of fault types mined from surveying conversion issues raised to localize potential conversion faults in the converted target model, and then repairs them appropriately, e.g. replacing the parameters of the target model with those from the source model. This is done iteratively for every image in the dataset with output label differences between the source model and the converted target model until all differences are resolved. We evaluate the effectiveness of Fix-Con in fixing model conversion bugs of three widely used image recognition models converted across four different deep learning frameworks. Overall, Fix-Con was able to either completely repair, or significantly improve the performance of 14 out of the 15 erroneous conversion cases.

DLAS: An Exploration and Assessment of the Deep Learning Acceleration Stack

Nov 15, 2023Abstract:Deep Neural Networks (DNNs) are extremely computationally demanding, which presents a large barrier to their deployment on resource-constrained devices. Since such devices are where many emerging deep learning applications lie (e.g., drones, vision-based medical technology), significant bodies of work from both the machine learning and systems communities have attempted to provide optimizations to accelerate DNNs. To help unify these two perspectives, in this paper we combine machine learning and systems techniques within the Deep Learning Acceleration Stack (DLAS), and demonstrate how these layers can be tightly dependent on each other with an across-stack perturbation study. We evaluate the impact on accuracy and inference time when varying different parameters of DLAS across two datasets, seven popular DNN architectures, four DNN compression techniques, three algorithmic primitives with sparse and dense variants, untuned and auto-scheduled code generation, and four hardware platforms. Our evaluation highlights how perturbations across DLAS parameters can cause significant variation and across-stack interactions. The highest level observation from our evaluation is that the model size, accuracy, and inference time are not guaranteed to be correlated. Overall we make 13 key observations, including that speedups provided by compression techniques are very hardware dependent, and that compiler auto-tuning can significantly alter what the best algorithm to use for a given configuration is. With DLAS, we aim to provide a reference framework to aid machine learning and systems practitioners in reasoning about the context in which their respective DNN acceleration solutions exist in. With our evaluation strongly motivating the need for co-design, we believe that DLAS can be a valuable concept for exploring the next generation of co-designed accelerated deep learning solutions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge