Danilo Pietro Pau

Biases in Edge Language Models: Detection, Analysis, and Mitigation

Feb 17, 2025Abstract:The integration of large language models (LLMs) on low-power edge devices such as Raspberry Pi, known as edge language models (ELMs), has introduced opportunities for more personalized, secure, and low-latency language intelligence that is accessible to all. However, the resource constraints inherent in edge devices and the lack of robust ethical safeguards in language models raise significant concerns about fairness, accountability, and transparency in model output generation. This paper conducts a comparative analysis of text-based bias across language model deployments on edge, cloud, and desktop environments, aiming to evaluate how deployment settings influence model fairness. Specifically, we examined an optimized Llama-2 model running on a Raspberry Pi 4; GPT 4o-mini, Gemini-1.5-flash, and Grok-beta models running on cloud servers; and Gemma2 and Mistral models running on a MacOS desktop machine. Our results demonstrate that Llama-2 running on Raspberry Pi 4 is 43.23% and 21.89% more prone to showing bias over time compared to models running on the desktop and cloud-based environments. We also propose the implementation of a feedback loop, a mechanism that iteratively adjusts model behavior based on previous outputs, where predefined constraint weights are applied layer-by-layer during inference, allowing the model to correct bias patterns, resulting in 79.28% reduction in model bias.

Pre-trainable Reservoir Computing with Recursive Neural Gas

Jul 25, 2018

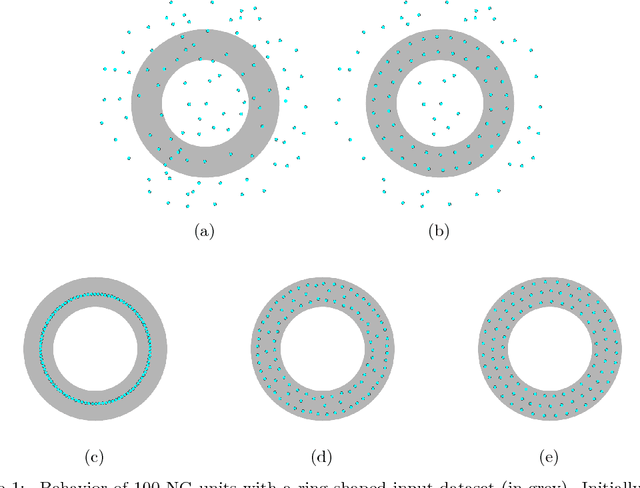

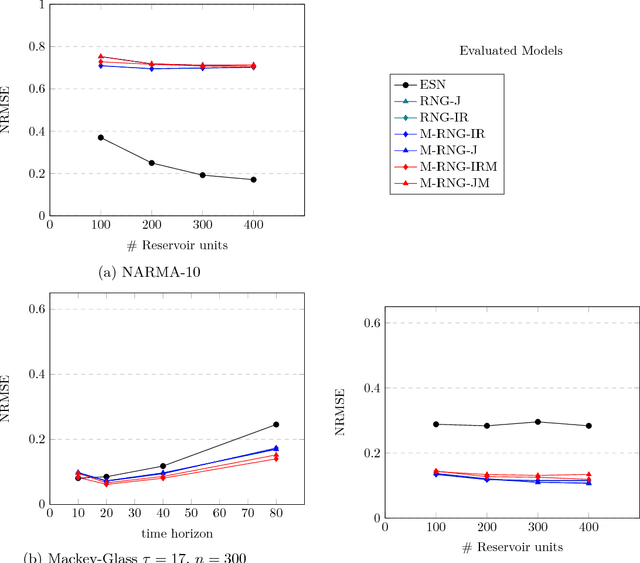

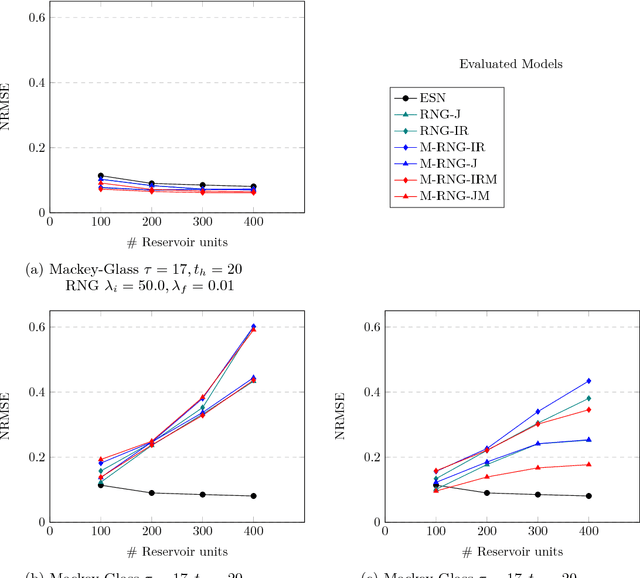

Abstract:Echo State Networks (ESN) are a class of Recurrent Neural Networks (RNN) that has gained substantial popularity due to their effectiveness, ease of use and potential for compact hardware implementation. An ESN contains the three network layers input, reservoir and readout where the reservoir is the truly recurrent network. The input and reservoir layers of an ESN are initialized at random and never trained afterwards and the training of the ESN is applied to the readout layer only. The alternative of Recursive Neural Gas (RNG) is one of the many proposals of fully-trainable reservoirs that can be found in the literature. Although some improvements in performance have been reported with RNG, to the best of authors' knowledge, no experimental comparative results are known with benchmarks for which ESN is known to yield excellent results. This work describes an accurate model of RNG together with some extensions to the models presented in the literature and shows comparative results on three well-known and accepted datasets. The experimental results obtained show that, under specific circumstances, RNG-based reservoirs can achieve better performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge