Joost-Pieter Katoen

Adversarial Robustness of Time-Series Classification for Crystal Collimator Alignment

Apr 07, 2026

Abstract:In this paper, we analyze and improve the adversarial robustness of a convolutional neural network (CNN) that assists crystal-collimator alignment at CERN's Large Hadron Collider (LHC) by classifying a beam-loss monitor (BLM) time series during crystal rotation. We formalize a local robustness property for this classifier under an adversarial threat model based on real-world plausibility. Building on established parameterized input-transformation patterns used for transformation- and semantic-perturbation robustness, we instantiate a preprocessing-aware wrapper for our deployed time-series pipeline: we encode time-series normalization, padding constraints, and structured perturbations as a lightweight differentiable wrapper in front of the CNN, so that existing gradient-based robustness frameworks can operate on the deployed pipeline. For formal verification, data-dependent preprocessing such as per-window z-normalization introduces nonlinear operators that require verifier-specific abstractions. We therefore focus on attack-based robustness estimates and pipeline-checked validity by benchmarking robustness with the frameworks Foolbox and ART. Adversarial fine-tuning of the resulting CNN improves robust accuracy by up to 18.6 % without degrading clean accuracy. Finally, we extend robustness on time-series data beyond single windows to sequence-level robustness for sliding-window classification, introduce adversarial sequences as counterexamples to a temporal robustness requirement over full scans, and observe attack-induced misclassifications that persist across adjacent windows.
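To make the role of per-window z-normalization concrete, here is a minimal sketch in plain Python. It assumes an L-infinity perturbation budget on the raw window, which the abstract does not state; the function names `z_normalize` and `perturb_then_normalize` are hypothetical and are not taken from the paper, Foolbox, or ART. The point is only that the perturbation is applied to the raw signal and the normalization is recomputed afterwards, so the statistics depend on the perturbed data.

```python
import math


def z_normalize(window, eps=1e-8):
    """Per-window z-normalization: subtract the window mean, divide by its std."""
    n = len(window)
    mean = sum(window) / n
    var = sum((x - mean) ** 2 for x in window) / n
    std = math.sqrt(var)
    # eps guards against division by zero for a constant window (assumption).
    return [(x - mean) / (std + eps) for x in window]


def perturb_then_normalize(window, delta, budget):
    """Clip delta to an assumed L-infinity budget, add it to the raw window,
    and only then normalize, so mean and std are data-dependent."""
    clipped = [max(-budget, min(budget, d)) for d in delta]
    perturbed = [x + d for x, d in zip(window, clipped)]
    return z_normalize(perturbed)


if __name__ == "__main__":
    raw = [1.0, 2.0, 3.0, 4.0]
    print(perturb_then_normalize(raw, [0.5, -0.5, 0.0, 2.0], budget=0.1))
```

Because the mean and standard deviation are recomputed from the perturbed window, the map from raw input to network input is nonlinear, which is the difficulty for formal verifiers that the abstract mentions.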

Natural Strategic Ability in Stochastic Multi-Agent Systems

Jan 22, 2024

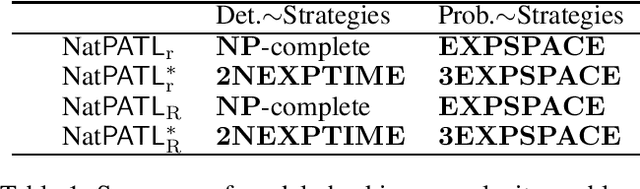

Abstract:Strategies synthesized using formal methods can be complex and often require infinite memory, which does not correspond to the expected behavior when trying to model Multi-Agent Systems (MAS). Natural strategies are a recently proposed framework that captures such behaviors by striking a balance between the ability of agents to strategize with memory and the model-checking complexity, but until now it has been restricted to fully deterministic settings. For the first time, we consider the probabilistic temporal logics PATL and PATL* under natural strategies (NatPATL and NatPATL*, resp.). As our main result, we show that, in stochastic MAS, NatPATL model-checking is NP-complete when the active coalition is restricted to deterministic strategies. We also give a 2NEXPTIME complexity result for NatPATL* with the same restriction. In the unrestricted case, we give an EXPSPACE complexity result for NatPATL and a 3EXPSPACE complexity result for NatPATL*.

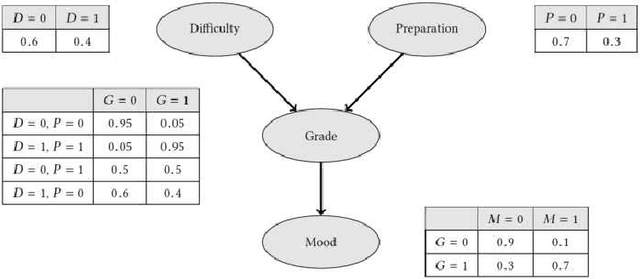

Finding an $ε$-close Variation of Parameters in Bayesian Networks

May 17, 2023

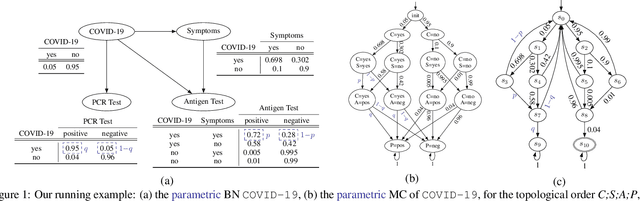

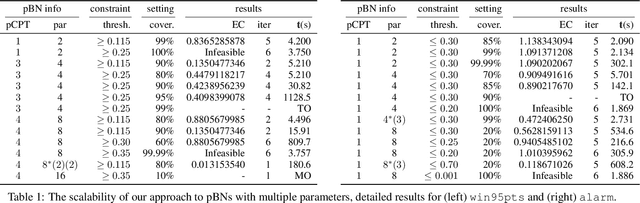

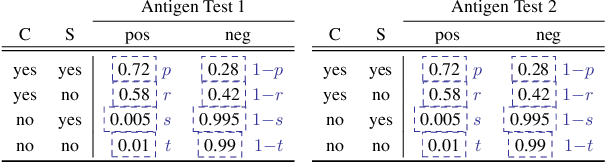

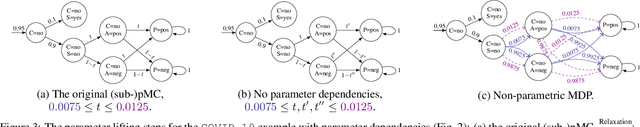

Abstract:This paper addresses the $\epsilon$-close parameter tuning problem for Bayesian Networks (BNs): find a minimal $\epsilon$-close amendment of probability entries in a given set of (rows in) conditional probability tables that makes a given quantitative constraint on the BN valid. Based on the state-of-the-art "region verification" techniques for parametric Markov chains, we propose an algorithm whose capabilities go beyond any existing techniques. Our experiments show that $\epsilon$-close tuning of large BN benchmarks with up to 8 parameters is feasible. In particular, by allowing (i) varied parameters in multiple CPTs and (ii) inter-CPT parameter dependencies, we treat subclasses of parametric BNs that have received scant attention so far.

Weighted Programming

Feb 15, 2022

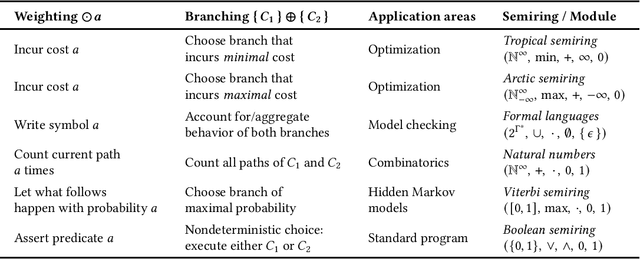

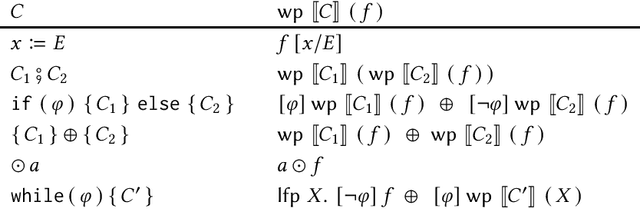

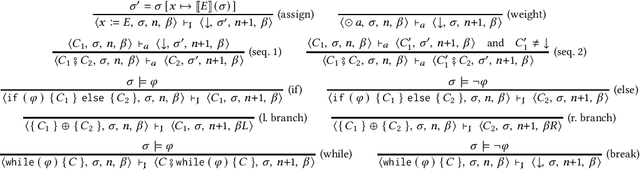

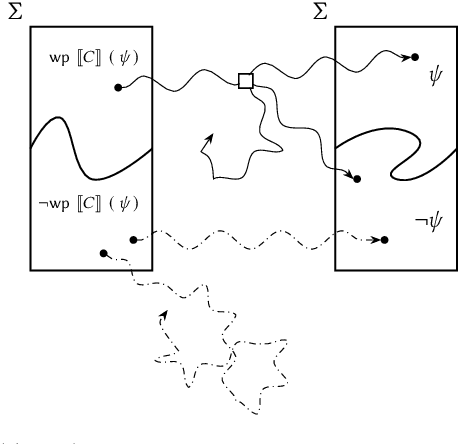

Abstract:We study weighted programming, a programming paradigm for specifying mathematical models. More specifically, the weighted programs we investigate are like usual imperative programs with two additional features: (1) nondeterministic branching and (2) weighting execution traces. Weights can be numbers but also other objects like words from an alphabet, polynomials, formal power series, or cardinal numbers. We argue that weighted programming as a paradigm can be used to specify mathematical models beyond probability distributions (as is done in probabilistic programming). We develop weakest-precondition- and weakest-liberal-precondition-style calculi à la Dijkstra for reasoning about mathematical models specified by weighted programs. We present several case studies. For instance, we use weighted programming to model the ski rental problem - an optimization problem. We model not only the optimization problem itself, but also the best deterministic online algorithm for solving this problem as weighted programs. By means of weakest-precondition-style reasoning, we can determine the competitive ratio of the online algorithm on source code level.
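As a point of reference for the ski rental case study, the sketch below computes the competitive ratio of the classic break-even strategy by brute-force enumeration. It is not the weakest-precondition calculus from the paper. The cost model (renting costs 1 per day, buying costs `buy_price`) and all function names are assumptions chosen for illustration.

```python
from fractions import Fraction


def online_cost(days, buy_price):
    """Break-even strategy: rent for buy_price - 1 days, buy on day buy_price."""
    if days < buy_price:
        return days
    return (buy_price - 1) + buy_price


def offline_cost(days, buy_price):
    """Optimal cost when the number of ski days is known in advance."""
    return min(days, buy_price)


def competitive_ratio(buy_price, horizon):
    """Worst-case ratio of online to offline cost over 1..horizon ski days."""
    return max(
        Fraction(online_cost(d, buy_price), offline_cost(d, buy_price))
        for d in range(1, horizon + 1)
    )


if __name__ == "__main__":
    print(competitive_ratio(10, 50))  # 19/10, i.e. 2 - 1/buy_price
```

Under these assumptions the worst case is reached as soon as the season lasts at least `buy_price` days, which gives the well-known ratio $2 - 1/B$ for a purchase price $B$.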

Under-Approximating Expected Total Rewards in POMDPs

Jan 21, 2022

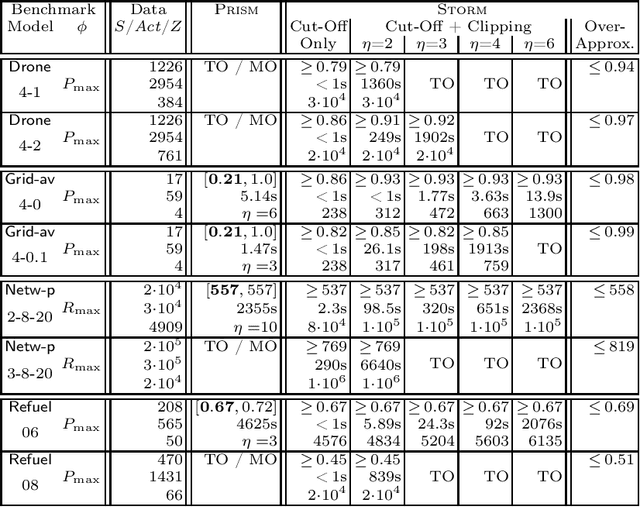

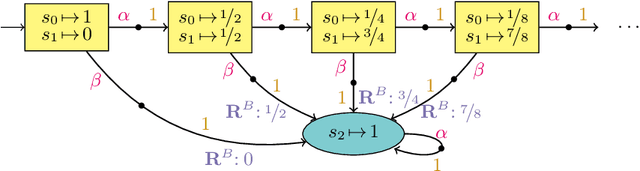

Abstract:We consider the problem: is the optimal expected total reward to reach a goal state in a partially observable Markov decision process (POMDP) below a given threshold? We tackle this -- generally undecidable -- problem by computing under-approximations on these total expected rewards. This is done by abstracting finite unfoldings of the infinite belief MDP of the POMDP. The key issue is to find a suitable under-approximation of the value function. We provide two techniques: a simple (cut-off) technique that uses a good policy on the POMDP, and a more advanced technique (belief clipping) that uses minimal shifts of probabilities between beliefs. We use mixed-integer linear programming (MILP) to find such minimal probability shifts and experimentally show that our techniques scale quite well while providing tight lower bounds on the expected total reward.
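The belief MDP mentioned above has beliefs (distributions over POMDP states) as its states. A minimal sketch of the standard Bayesian belief update that produces a successor belief is given below. It does not implement the cut-off or belief-clipping techniques from the paper, and the dictionary-based encoding and the name `belief_update` are assumptions for illustration.

```python
from fractions import Fraction


def belief_update(belief, action, observation, trans, obs):
    """Successor belief after taking `action` and seeing `observation`.

    belief: {state: probability}
    trans:  {state: {action: {next_state: probability}}}
    obs:    {state: {observation: probability}}
    """
    unnormalized = {}
    for state, p in belief.items():
        for nxt, q in trans[state][action].items():
            weight = p * q * obs[nxt].get(observation, 0)
            if weight > 0:
                unnormalized[nxt] = unnormalized.get(nxt, 0) + weight
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("observation has probability zero under this belief")
    return {s: w / total for s, w in unnormalized.items()}


if __name__ == "__main__":
    half, one = Fraction(1, 2), Fraction(1)
    trans = {"s0": {"a": {"s0": half, "s1": half}}, "s1": {"a": {"s1": one}}}
    obs = {"s0": {"low": one},
           "s1": {"low": Fraction(1, 4), "high": Fraction(3, 4)}}
    print(belief_update({"s0": one}, "a", "low", trans, obs))
```

Repeated updates can keep producing new beliefs, which is why the belief MDP is infinite in general and only finite unfoldings of it are abstracted.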

Convex Optimization for Parameter Synthesis in MDPs

Jun 30, 2021

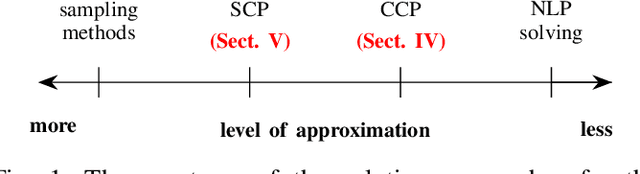

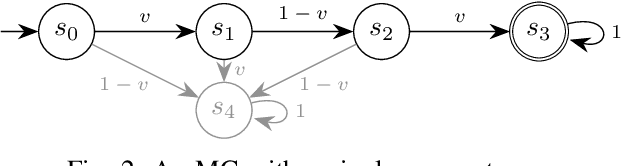

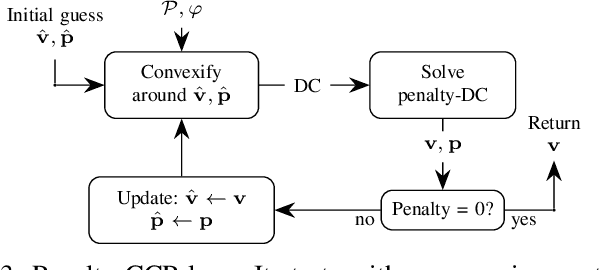

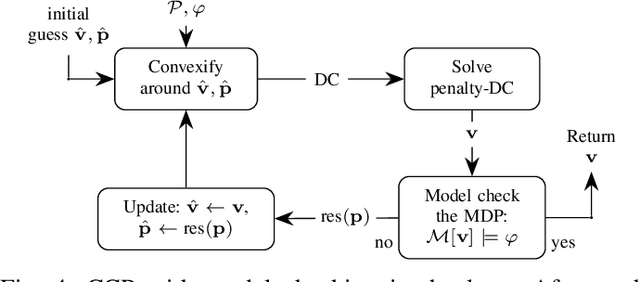

Abstract:Probabilistic model checking aims to prove whether a Markov decision process (MDP) satisfies a temporal logic specification. The underlying methods rely on an often unrealistic assumption that the MDP is precisely known. Consequently, parametric MDPs (pMDPs) extend MDPs with transition probabilities that are functions over unspecified parameters. The parameter synthesis problem is to compute an instantiation of these unspecified parameters such that the resulting MDP satisfies the temporal logic specification. We formulate the parameter synthesis problem as a quadratically constrained quadratic program (QCQP), which is nonconvex and is NP-hard to solve in general. We develop two approaches that iteratively obtain locally optimal solutions. The first approach exploits the so-called convex-concave procedure (CCP), and the second approach utilizes a sequential convex programming (SCP) method. The techniques improve the runtime and scalability by multiple orders of magnitude compared to black-box CCP and SCP by merging ideas from convex optimization and probabilistic model checking. We demonstrate the approaches on a satellite collision avoidance problem with hundreds of thousands of states and tens of thousands of parameters and their scalability on a wide range of commonly used benchmarks.

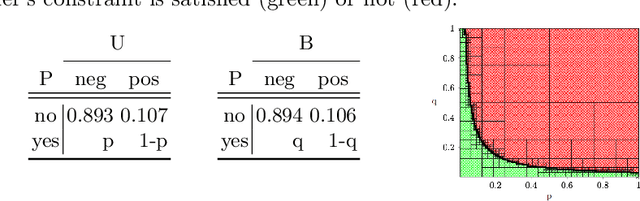

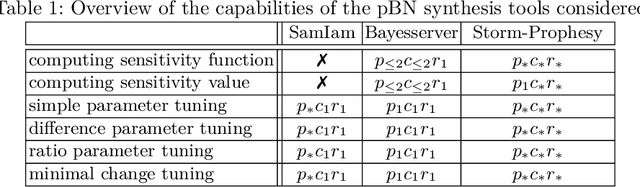

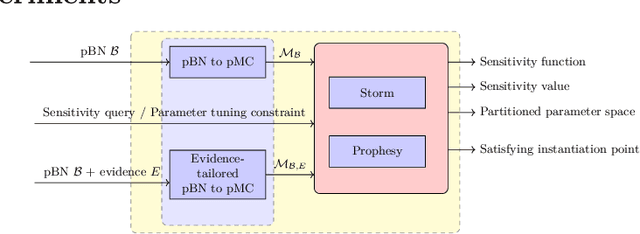

Fine-Tuning the Odds in Bayesian Networks

May 29, 2021

Abstract:This paper proposes various new analysis techniques for Bayes networks in which conditional probability tables (CPTs) may contain symbolic variables. The key idea is to exploit scalable and powerful techniques for synthesis problems in parametric Markov chains. Our techniques are applicable to arbitrarily many, possibly dependent parameters that may occur in various CPTs. This lifts the severe restrictions on parameters, e.g., by restricting the number of parametrized CPTs to one or two, or by avoiding parameter dependencies between several CPTs, in existing works for parametric Bayes networks (pBNs). We describe how our techniques can be used for various pBN synthesis problems studied in the literature such as computing sensitivity functions (and values), simple and difference parameter tuning, ratio parameter tuning, and minimal change tuning. Experiments on several benchmarks show that our prototypical tool built on top of the probabilistic model checker Storm can handle several hundreds of parameters.

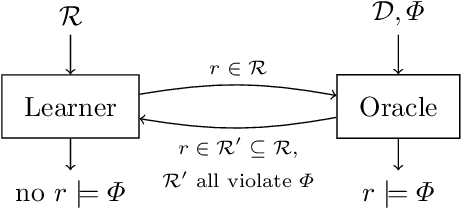

Inductive Synthesis for Probabilistic Programs Reaches New Horizons

Jan 29, 2021

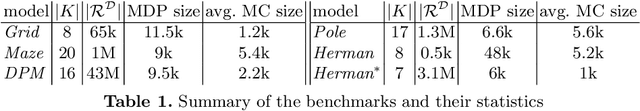

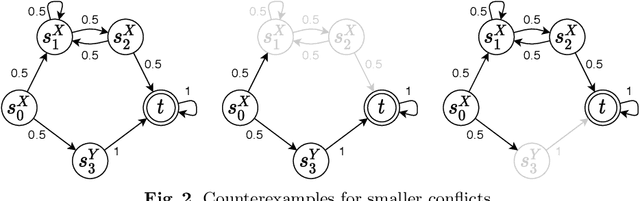

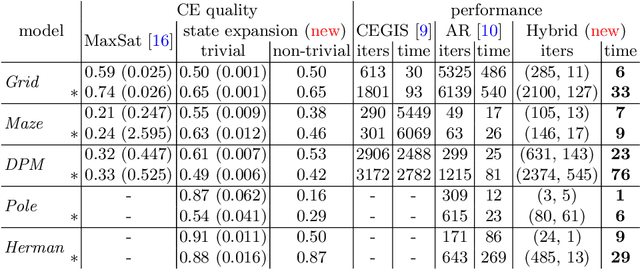

Abstract:This paper presents a novel method for the automated synthesis of probabilistic programs. The starting point is a program sketch representing a finite family of finite-state Markov chains with related but distinct topologies, and a PCTL specification. The method builds on a novel inductive oracle that greedily generates counter-examples (CEs) for violating programs and uses them to prune the family. These CEs leverage the semantics of the family in the form of bounds on its best- and worst-case behaviour provided by a deductive oracle using an MDP abstraction. The method further monitors the performance of the synthesis and adaptively switches between the inductive and deductive reasoning. Our experiments demonstrate that the novel CE construction provides a significantly faster and more effective pruning strategy leading to acceleration of the synthesis process on a wide range of benchmarks. For challenging problems, such as the synthesis of decentralized partially-observable controllers, we reduce the run-time from a day to minutes.

Bayesian Inference by Symbolic Model Checking

Jul 29, 2020

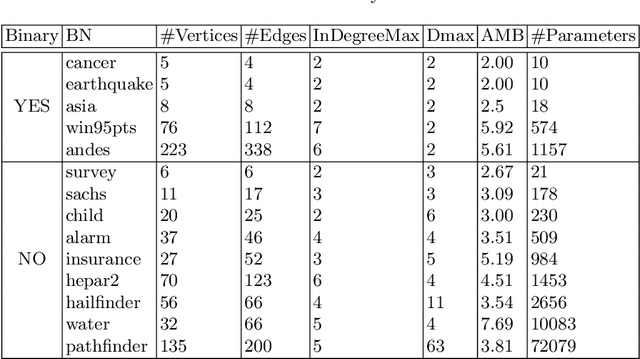

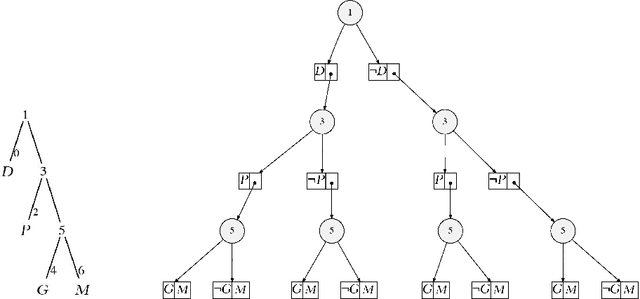

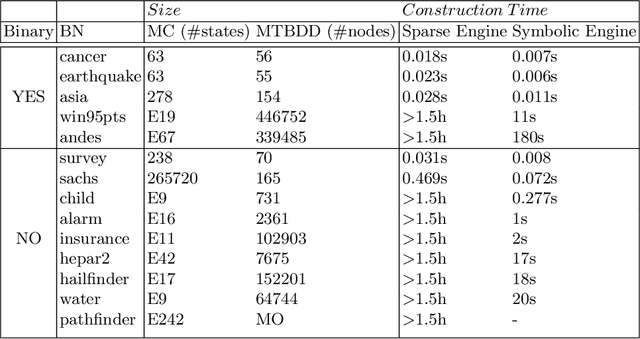

Abstract:This paper applies probabilistic model checking techniques for discrete Markov chains to inference in Bayesian networks. We present a simple translation from Bayesian networks into tree-like Markov chains such that inference can be reduced to computing reachability probabilities. Using a prototypical implementation on top of the Storm model checker, we show that symbolic data structures such as multi-terminal BDDs (MTBDDs) are very effective to perform inference on large Bayesian network benchmarks. We compare our result to inference using probabilistic sentential decision diagrams and vtrees, a scalable symbolic technique in AI inference tools.
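The reduction from inference to reachability can be illustrated on a toy network. The sketch below unfolds a Bayesian network variable by variable into a tree of partial assignments and sums the probability of all paths consistent with a target assignment. It is a hand-rolled recursion and uses neither Storm nor MTBDDs; the two-variable network, the data layout, and the name `reach_probability` are assumptions for illustration.

```python
from fractions import Fraction


def reach_probability(order, parents, cpts, target, assignment=None, index=0):
    """Probability of reaching a leaf of the unfolded tree that agrees with
    `target`, visiting variables in the topological order `order`."""
    assignment = assignment or {}
    if index == len(order):
        return Fraction(1)
    var = order[index]
    row = cpts[var][tuple(assignment[p] for p in parents[var])]
    total = Fraction(0)
    for value, prob in row.items():
        if var in target and target[var] != value:
            continue  # this branch contradicts the target assignment
        extended = {**assignment, var: value}
        total += prob * reach_probability(
            order, parents, cpts, target, extended, index + 1)
    return total


# Toy network (assumed): Rain -> Wet.
ORDER = ["Rain", "Wet"]
PARENTS = {"Rain": [], "Wet": ["Rain"]}
CPTS = {
    "Rain": {(): {True: Fraction(1, 5), False: Fraction(4, 5)}},
    "Wet": {
        (True,): {True: Fraction(9, 10), False: Fraction(1, 10)},
        (False,): {True: Fraction(1, 10), False: Fraction(9, 10)},
    },
}

if __name__ == "__main__":
    evidence = reach_probability(ORDER, PARENTS, CPTS, {"Wet": True})
    joint = reach_probability(ORDER, PARENTS, CPTS, {"Rain": True, "Wet": True})
    print(evidence, joint / evidence)  # P(Wet) and P(Rain | Wet)
```

A conditional query is then a quotient of two reachability probabilities, here the joint target divided by the evidence target.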

Verification of indefinite-horizon POMDPs

Jun 30, 2020

Abstract:The verification problem in MDPs asks whether, for any policy resolving the nondeterminism, the probability that something bad happens is bounded by some given threshold. This verification problem is often overly pessimistic, as the policies it considers may depend on the complete system state. This paper considers the verification problem for partially observable MDPs, in which the policies make their decisions based on (the history of) the observations emitted by the system. We present an abstraction-refinement framework extending previous instantiations of the Lovejoy-approach. Our experiments show that this framework significantly improves the scalability of the approach.
