Jim Mainprice

Autonomous Motion Department at the MPI for Intelligent Systems, Tübingen, Germany, Univ. of Stuttgart, Germany

Planning Coordinated Human-Robot Motions with Neural Network Full-Body Prediction Models

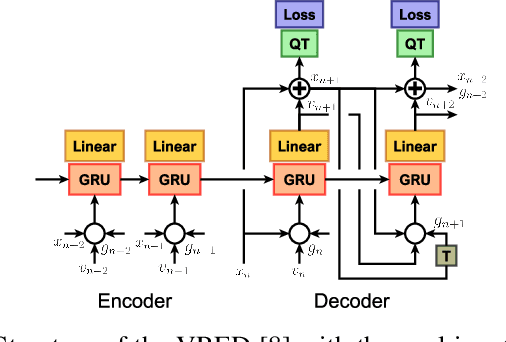

Oct 24, 2022

Abstract:Numerical optimization has become a popular approach to plan smooth motion trajectories for robots. However, when sharing space with humans, properly balancing safety, comfort and efficiency remains challenging. This is notably the case because humans adapt their behavior to that of the robot, raising the need for intricate planning and prediction. In this paper, we propose a novel optimization-based motion planning algorithm, which generates robot motions while simultaneously maximizing the human trajectory likelihood under a data-driven predictive model. Considering planning and prediction together allows us to formulate objective and constraint functions in the joint human-robot state space. Key to the approach are latent space modifiers added to a differentiable human predictive model based on a dedicated recurrent neural network. These modifiers make it possible to change the human prediction within motion optimization. We empirically evaluate our method using the publicly available MoGaze dataset. Our results indicate that the proposed framework outperforms current baselines for planning handover trajectories and avoiding collisions between a robot and a human. Our experiments demonstrate collaborative motion trajectories, where both the human prediction and the robot plan adapt to each other.

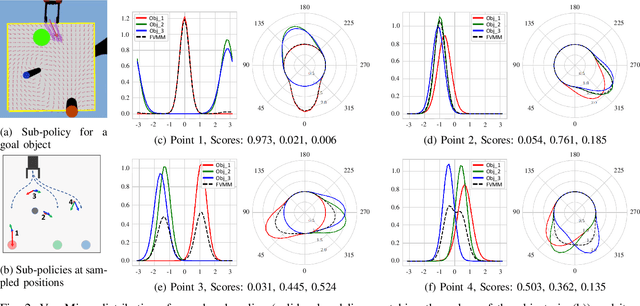

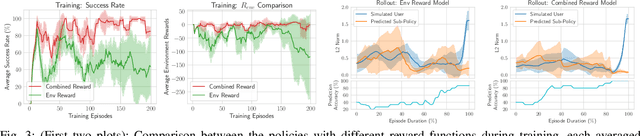

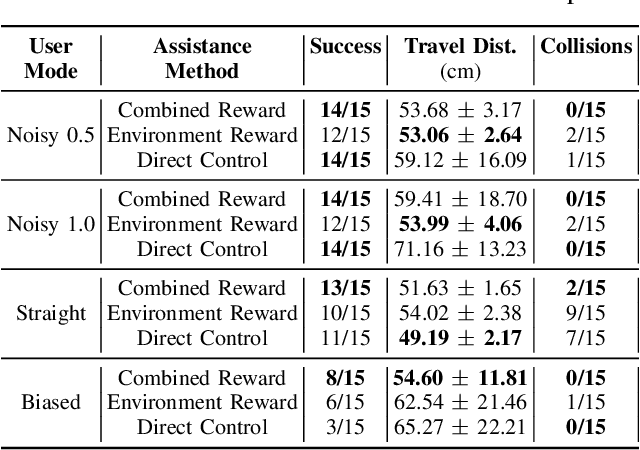

Learning to Arbitrate Human and Robot Control using Disagreement between Sub-Policies

Aug 24, 2021

Abstract:In the context of teleoperation, arbitration refers to deciding how to blend between human and autonomous robot commands. We present a reinforcement learning solution that learns an optimal arbitration strategy that allocates more control authority to the human when the robot comes across a decision point in the task. A decision point is where the robot encounters multiple options (sub-policies), such as having multiple paths to get around an obstacle or deciding between two candidate goals. By expressing each directional sub-policy as a von Mises distribution, we identify the decision points by observing the modality of the mixture distribution. Our reward function reasons on this modality and prioritizes matching the learned policy to either the user or the robot accordingly. We report teleoperation experiments on reaching and grasping objects using a robot manipulator arm with different simulated human controllers. Results indicate that our shared control agent outperforms direct control and improves the teleoperation performance among different users. Using our reward term enables flexible blending between human and robot commands while maintaining safe and accurate teleoperation.
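The idea of reading decision points off the modality of a von Mises mixture can be illustrated with a minimal sketch. This is not the authors' implementation: the function names `von_mises_pdf` and `count_modes`, the grid resolution, and the choice to count modes by brute-force local-maximum search on a circular grid are all assumptions made for illustration.

```python
import numpy as np

def von_mises_pdf(theta, mu, kappa):
    """Density of a von Mises distribution with mean direction mu and concentration kappa."""
    return np.exp(kappa * np.cos(theta - mu)) / (2.0 * np.pi * np.i0(kappa))

def count_modes(mus, kappas, weights, n_grid=720):
    """Count local maxima of a von Mises mixture on a circular grid (hypothetical helper)."""
    theta = np.linspace(-np.pi, np.pi, n_grid, endpoint=False)
    density = sum(w * von_mises_pdf(theta, m, k)
                  for m, k, w in zip(mus, kappas, weights))
    # Compare each grid value with its circular neighbours.
    left = np.roll(density, 1)
    right = np.roll(density, -1)
    return int(np.sum((density > left) & (density > right)))

# Two sub-policies pointing in opposite directions: a candidate decision point.
modes_opposed = count_modes([0.0, np.pi], [8.0, 8.0], [0.5, 0.5])
# Two sub-policies that agree on the direction: no decision point.
modes_agree = count_modes([0.0, 0.0], [8.0, 8.0], [0.5, 0.5])
```

Under these assumptions, a multimodal mixture (more than one mode) would flag a state where the sub-policies disagree and more authority could be handed to the human.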

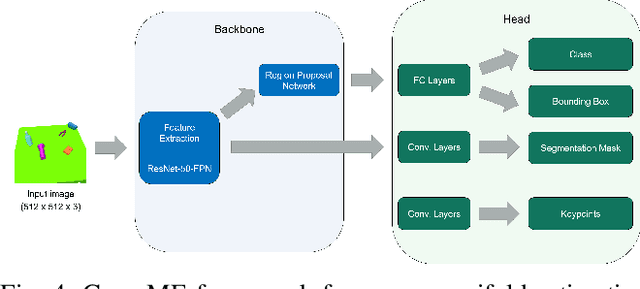

GraspME -- Grasp Manifold Estimator

Jul 05, 2021

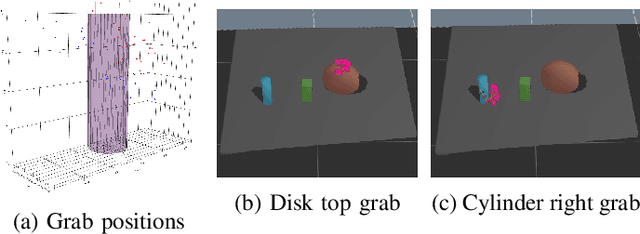

Abstract:In this paper, we introduce a Grasp Manifold Estimator (GraspME) to detect grasp affordances for objects directly in 2D camera images. To perform manipulation tasks autonomously, it is crucial for robots to have such graspability models of the surrounding objects. Grasp manifolds have the advantage of providing a continuum of infinitely many grasps, which is not the case when using other grasp representations such as predefined grasp points. For instance, this property can be leveraged in motion optimization to define goal sets as implicit surface constraints in the robot configuration space. In this work, we restrict ourselves to the case of estimating possible end-effector positions directly from 2D camera images. To this end, we define grasp manifolds via a set of keypoints and locate them in images using a Mask R-CNN backbone. Using learned features allows generalizing to different view angles, potentially noisy images, and objects that were not part of the training set. We rely on simulation data only and perform experiments on simple and complex objects, including unseen ones. Our framework achieves an inference speed of 11.5 fps on a GPU, an average precision for keypoint estimation of 94.5% and a mean pixel distance of only 1.29. This shows that we can estimate the objects very well via bounding boxes and segmentation masks as well as approximate the correct grasp manifold's keypoint coordinates.
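The abstract does not spell out how the mean pixel distance is computed. One common reading, assumed here, is the average Euclidean distance between predicted and ground-truth keypoints in image coordinates. The function name `mean_pixel_distance` and the example coordinates are hypothetical.

```python
import numpy as np

def mean_pixel_distance(predicted, ground_truth):
    """Average Euclidean distance (in pixels) between matched keypoints.

    Both inputs are arrays of shape (n_keypoints, 2) holding (x, y) pixel
    coordinates; row i of each array is assumed to describe the same keypoint.
    """
    predicted = np.asarray(predicted, dtype=float)
    ground_truth = np.asarray(ground_truth, dtype=float)
    return float(np.mean(np.linalg.norm(predicted - ground_truth, axis=1)))

# Illustrative values only, not data from the paper.
example_distance = mean_pixel_distance([[0, 0], [3, 4]], [[0, 0], [0, 0]])
```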

A System for Traded Control Teleoperation of Manipulation Tasks using Intent Prediction from Hand Gestures

Jul 05, 2021

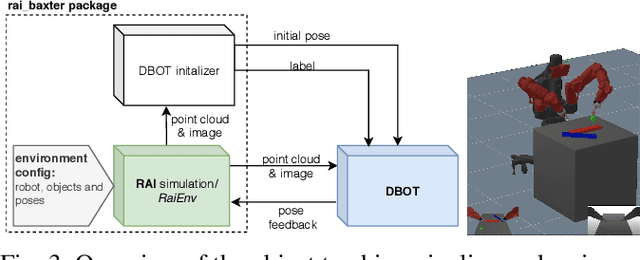

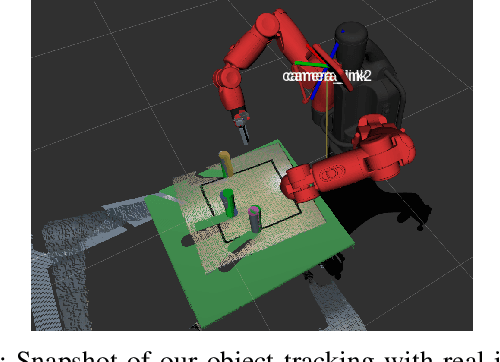

Abstract:This paper presents a teleoperation system that includes robot perception and intent prediction from hand gestures. The perception module identifies the objects present in the robot workspace, and the intent prediction module infers which object the user likely wants to grasp. This architecture allows the approach to rely on traded control instead of direct control: we use hand gestures to specify the goal objects for a sequential manipulation task, and the robot then autonomously generates a grasping or a retrieving motion using trajectory optimization. The perception module relies on a model-based tracker to precisely track the 6D pose of the objects and uses a state-of-the-art learning-based object detection and segmentation method to initialize the tracker by automatically detecting objects in the scene. Goal objects are identified from user hand gestures using a trained multi-layer perceptron classifier. After presenting all the components of the system and their empirical evaluation, we present experimental results comparing our pipeline to a direct traded control approach (i.e., one that does not use prediction), which show that using intent prediction reduces the overall task execution time.

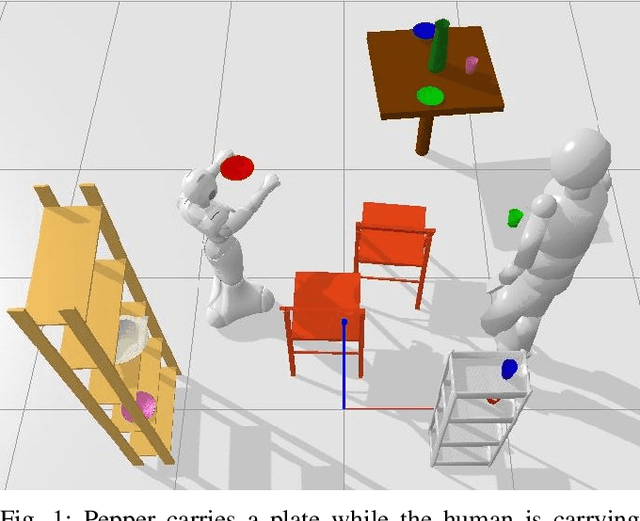

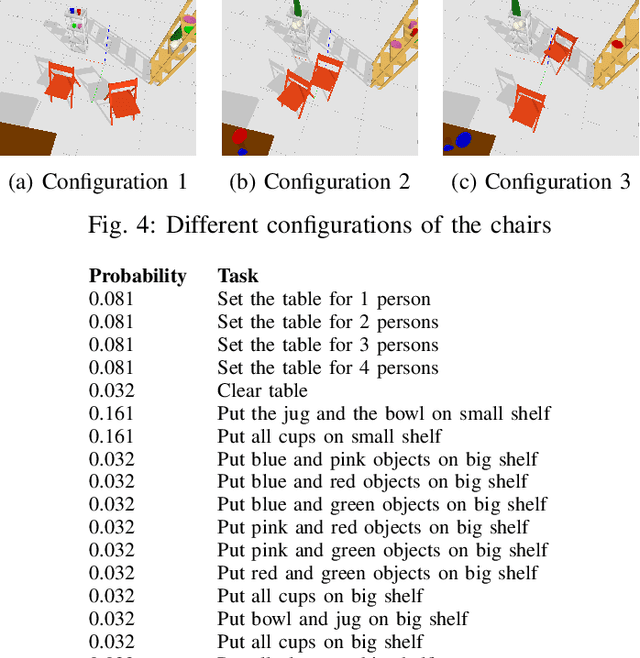

Hierarchical Human-Motion Prediction and Logic-Geometric Programming for Minimal Interference Human-Robot Tasks

Apr 16, 2021

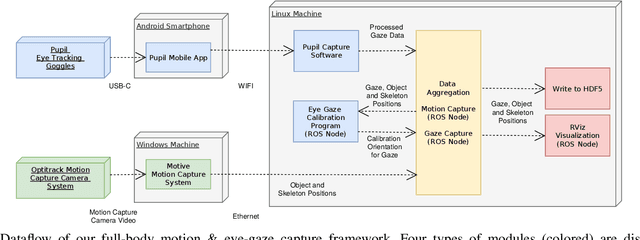

Abstract:In this paper, we tackle the problem of human-robot coordination in sequences of manipulation tasks. Our approach integrates hierarchical human motion prediction with Task and Motion Planning (TAMP). We first devise a hierarchical motion prediction approach by combining Inverse Reinforcement Learning and short-term motion prediction using a Recurrent Neural Network. In a second step, we propose a dynamic version of the TAMP algorithm Logic-Geometric Programming (LGP). Our version of Dynamic LGP replans periodically to handle the mismatch between the human motion prediction and the actual human behavior. We assess the efficacy of the approach by training the prediction algorithms and testing the framework on the publicly available MoGaze dataset.

MoGaze: A Dataset of Full-Body Motions that Includes Workspace Geometry and Eye-Gaze

Nov 23, 2020

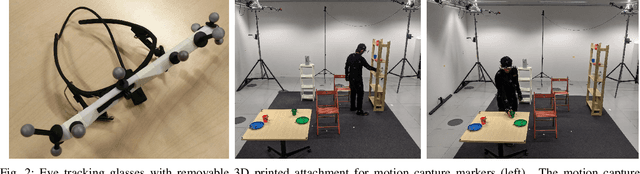

Abstract:As robots become more present in open human environments, it will become crucial for robotic systems to understand and predict human motion. Such capabilities depend heavily on the quality and availability of motion capture data. However, existing datasets of full-body motion rarely include 1) long sequences of manipulation tasks, 2) the 3D model of the workspace geometry, and 3) eye-gaze, which are all important when a robot needs to predict the movements of humans in close proximity. Hence, in this paper, we present a novel dataset of full-body motion for everyday manipulation tasks, which includes the above. The motion data was captured using a traditional motion capture system based on reflective markers. We additionally captured eye-gaze using a wearable pupil-tracking device. As we show in experiments, the dataset can be used for the design and evaluation of full-body motion prediction algorithms. Furthermore, our experiments show eye-gaze as a powerful predictor of human intent. The dataset includes 180 min of motion capture data with 1627 pick and place actions being performed. It is available at https://humans-to-robots-motion.github.io/mogaze and is planned to be extended to collaborative tasks with two humans in the near future.

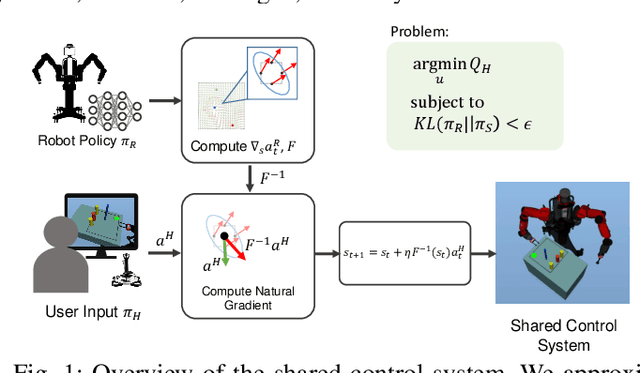

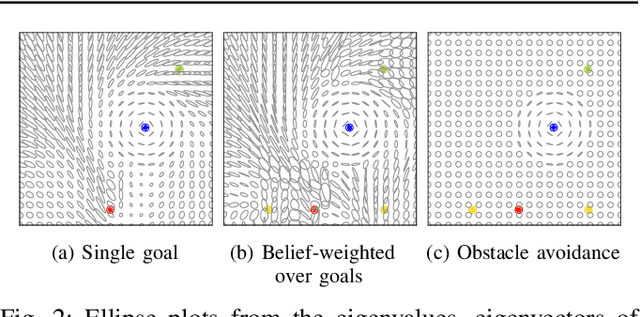

Natural Gradient Shared Control

Jul 30, 2020

Abstract:We propose a formalism for shared control, which is the problem of defining a policy that blends user control and autonomous control. The challenge posed by the shared autonomy system is to maintain user control authority while allowing the robot to support the user. This can be done by enforcing constraints or acting optimally when the intent is clear. Our proposed solution relies on natural gradients emerging from the divergence constraint between the robot and the shared policy. We approximate the Fisher information by sampling a learned robot policy and computing the local gradient to augment the user control when necessary. A user study performed on a manipulation task demonstrates that, compared with a number of baseline methods, our approach allows for more efficient task completion while preserving user control authority.
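To make the notion of a sampled Fisher information and a natural gradient step concrete, here is a generic sketch, not the method from the paper. It assumes a fixed isotropic Gaussian policy as a stand-in for the learned robot policy, and the names `gaussian_score`, `estimate_fisher`, `natural_gradient`, as well as the damping term, are illustrative assumptions.

```python
import numpy as np

def gaussian_score(actions, mean, sigma):
    """Gradient of log N(action | mean, sigma^2 I) with respect to the mean."""
    return (actions - mean) / sigma**2

def estimate_fisher(mean, sigma, n_samples=20000, seed=0):
    """Monte Carlo estimate of the Fisher information from sampled actions."""
    rng = np.random.default_rng(seed)
    actions = mean + sigma * rng.standard_normal((n_samples, mean.size))
    scores = gaussian_score(actions, mean, sigma)
    # Fisher information is the expected outer product of the score.
    return scores.T @ scores / n_samples

def natural_gradient(gradient, fisher, damping=1e-6):
    """Precondition a gradient with the (damped) inverse Fisher matrix."""
    return np.linalg.solve(fisher + damping * np.eye(fisher.shape[0]), gradient)

mean = np.zeros(2)
fisher = estimate_fisher(mean, sigma=0.5)   # analytically equal to I / sigma^2
step = natural_gradient(np.array([1.0, 0.0]), fisher)
```

For this stand-in policy the Fisher matrix is known in closed form, which makes the sampled estimate easy to sanity-check. With a learned policy, the score would instead come from differentiating the policy's log-probability.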

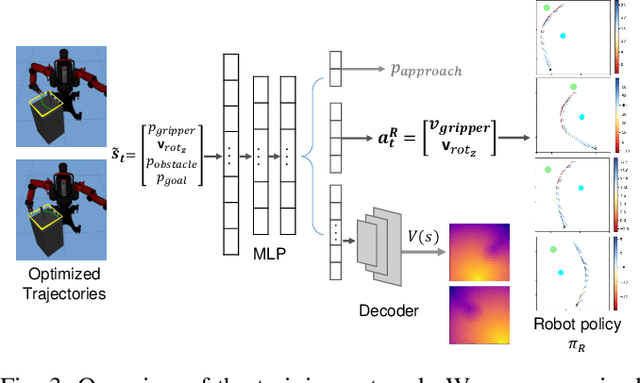

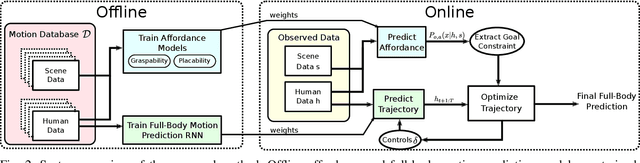

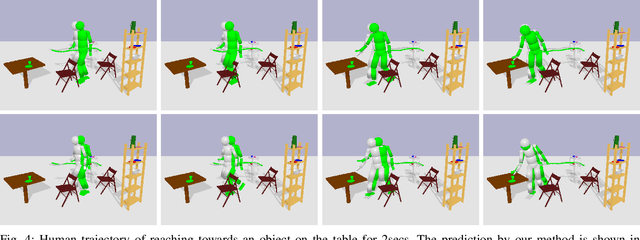

Anticipating Human Intention for Full-Body Motion Prediction in Object Grasping and Placing Tasks

Jul 20, 2020

Abstract:Motion prediction in unstructured environments is a difficult problem and is essential for safe and efficient human-robot space sharing and collaboration. In this work, we focus on manipulation movements in environments such as homes, workplaces or restaurants, where the overall task and environment can be leveraged to produce accurate motion prediction. For these cases, we propose an algorithmic framework that accounts explicitly for the environment geometry based on a model of affordances and a model of short-term human dynamics, both trained on motion capture data. We propose dedicated function networks for graspability and placeability affordances, and we make use of a dedicated RNN for short-term motion prediction. The predicted grasp and placement probability densities are used by a constraint-based trajectory optimizer to produce a full-body motion prediction over the entire horizon. We show by comparing to ground truth data that we achieve performance for full-body motion predictions similar to that obtained with oracle grasp and place locations.

An Interior Point Method Solving Motion Planning Problems with Narrow Passages

Jul 09, 2020

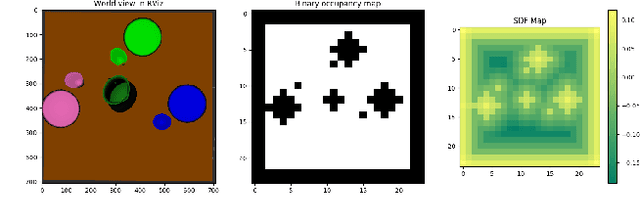

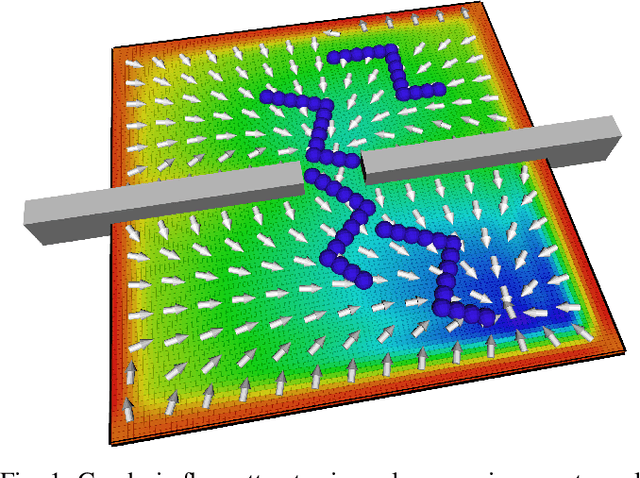

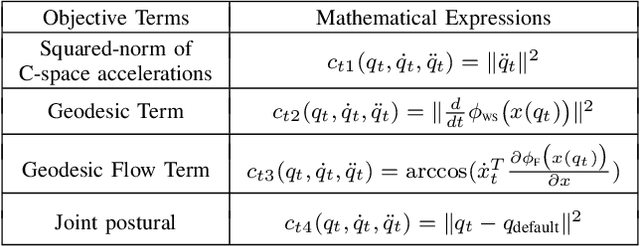

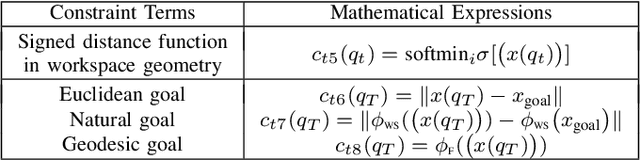

Abstract:Algorithmic solutions for the motion planning problem have been investigated for five decades. Since the development of A* in 1969 many approaches have been investigated, traditionally classified as either grid decomposition, potential fields or sampling-based. In this work, we focus on using numerical optimization, which is understudied for solving motion planning problems. This lack of interest in favor of sampling-based methods is largely due to the non-convexity introduced by narrow passages. We address this shortcoming by grounding the motion planning problem in differential geometry. We demonstrate through a series of experiments on 3-DoF and 6-DoF narrow passage problems how explicitly modeling the underlying Riemannian manifold leads to an efficient interior-point non-linear programming solution.

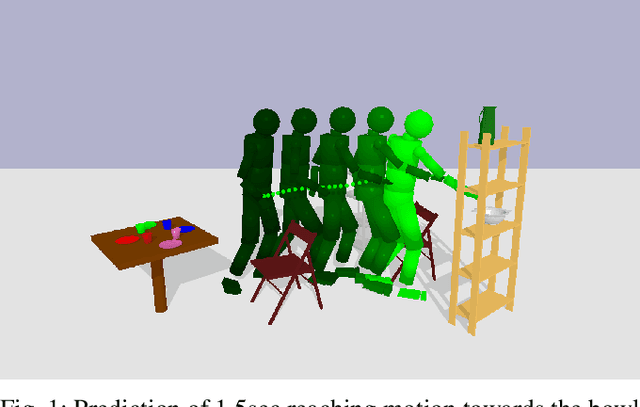

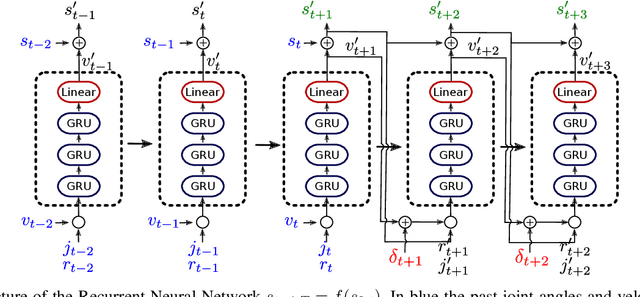

Prediction of Human Full-Body Movements with Motion Optimization and Recurrent Neural Networks

Oct 04, 2019

Abstract:Human movement prediction is difficult as humans naturally exhibit complex behaviors that can change drastically from one environment to the next. In order to alleviate this issue, we propose a prediction framework that decouples short-term prediction, linked to internal body dynamics, and long-term prediction, linked to the environment and task constraints. In this work, we investigate encoding short-term dynamics in a recurrent neural network, while we account for environmental constraints, such as obstacle avoidance, using gradient-based trajectory optimization. Experiments on real motion data demonstrate that our framework improves the prediction with respect to state-of-the-art motion prediction methods, as it accounts for previously unseen environmental structures. Moreover, we demonstrate with an example how this framework can be used to plan robot trajectories that are optimized to coordinate with a human partner.
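As a rough illustration of gradient-based trajectory optimization with an obstacle term, the sketch below deforms a straight 2D path around a circular obstacle using plain gradient descent. It is a toy example under stated assumptions, not the framework from the paper: the function name `optimize_trajectory`, the quadratic smoothness and penetration costs, and all parameter values are hypothetical.

```python
import numpy as np

def optimize_trajectory(start, goal, obstacle_center, obstacle_radius,
                        n_points=30, n_iters=500, step=0.05, obstacle_weight=5.0):
    """Gradient descent on smoothness plus obstacle penetration cost (toy 2D sketch)."""
    start = np.asarray(start, dtype=float)
    goal = np.asarray(goal, dtype=float)
    center = np.asarray(obstacle_center, dtype=float)
    traj = np.linspace(start, goal, n_points)  # straight-line initialization
    for _ in range(n_iters):
        grad = np.zeros_like(traj)
        # Smoothness cost: sum of squared differences between consecutive waypoints.
        grad[1:-1] += 2.0 * (2.0 * traj[1:-1] - traj[:-2] - traj[2:])
        # Obstacle cost: 0.5 * w * max(0, r - d)^2, with d the distance to the center.
        diff = traj - center
        dist = np.linalg.norm(diff, axis=1)
        penetration = np.maximum(0.0, obstacle_radius - dist)
        grad -= obstacle_weight * (penetration / np.maximum(dist, 1e-9))[:, None] * diff
        # Keep the endpoints fixed.
        grad[0] = 0.0
        grad[-1] = 0.0
        traj -= step * grad
    return traj

center = np.array([0.5, -0.05])
traj = optimize_trajectory([0.0, 0.0], [1.0, 0.0], center, obstacle_radius=0.3)
min_clearance = np.linalg.norm(traj - center, axis=1).min()
```

In the setting described in the abstract, a learned short-term dynamics term would presumably enter the objective alongside such constraint costs. Here only smoothness and a single obstacle are modeled.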
