Anticipating Human Intention for Full-Body Motion Prediction in Object Grasping and Placing Tasks

Jul 20, 2020

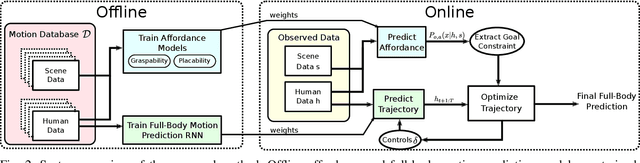

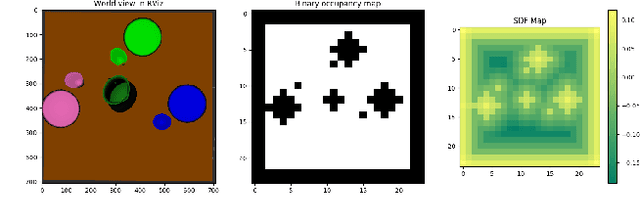

Motion prediction in unstructured environments is a difficult problem and is essential for safe and efficient human-robot space sharing and collaboration. In this work, we focus on manipulation movements in environments such as homes, workplaces, or restaurants, where the overall task and environment can be leveraged to produce accurate motion predictions. For these cases, we propose an algorithmic framework that explicitly accounts for the environment geometry, based on a model of affordances and a model of short-term human dynamics, both trained on motion capture data. We propose dedicated function networks for graspability and placeability affordances, and we use a dedicated RNN for short-term motion prediction. The predicted grasp and placement probability densities are used by a constraint-based trajectory optimizer to produce a full-body motion prediction over the entire horizon. By comparing against ground-truth data, we show that our full-body motion predictions achieve performance similar to predictions that use oracle grasp and place locations.
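
The hand-off described above, from affordance densities and a short-term prediction to a trajectory optimizer, can be illustrated with a deliberately simplified sketch. This is not the authors' implementation, and every name in it is hypothetical: a softmax over made-up per-candidate scores stands in for the graspability/placeability networks, a fixed prefix of frames stands in for the RNN's short-term output, and the "optimizer" only smooths a single point (for example a wrist position) by minimising squared discrete accelerations between that prefix and the most likely goal.

```python
import math


def affordance_density(scores):
    """Softmax over per-candidate scores (hypothetical stand-in for an affordance network)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def most_likely_goal(candidates, scores):
    """Return the candidate location with the highest probability, and that probability."""
    probs = affordance_density(scores)
    best = max(range(len(candidates)), key=lambda i: probs[i])
    return candidates[best], probs[best]


def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = s / m[r][r]
    return x


def min_accel_trajectory(prefix, goal, n_frames):
    """Fill the frames between a fixed prefix and a fixed goal frame so that the
    sum of squared second differences (discrete accelerations) is minimal.

    prefix: known frames (hypothetical stand-in for the RNN's short-term output)
    goal:   final frame, e.g. the most likely grasp location
    """
    k = len(prefix)
    assert k >= 1 and n_frames >= k + 1
    free = list(range(k, n_frames - 1))          # unknown frame indices
    idx = {t: i for i, t in enumerate(free)}     # frame index -> unknown index
    dims = len(goal)
    traj = [list(p) for p in prefix] + [[0.0] * dims for _ in free] + [list(goal)]
    stencil = (1.0, -2.0, 1.0)                   # x[t-1] - 2 x[t] + x[t+1]
    nf = len(free)
    for d in range(dims):
        # Normal equations A x = rhs of the least-squares problem over the free frames.
        A = [[0.0] * nf for _ in range(nf)]
        rhs = [0.0] * nf
        for t in range(1, n_frames - 1):
            row = (t - 1, t, t + 1)
            known = sum(c * traj[s][d] for c, s in zip(stencil, row) if s not in idx)
            unknown = [(idx[s], c) for c, s in zip(stencil, row) if s in idx]
            for i, ci in unknown:
                rhs[i] -= ci * known
                for j, cj in unknown:
                    A[i][j] += ci * cj
        for t, value in zip(free, solve_linear(A, rhs)):
            traj[t][d] = value
    return traj


if __name__ == "__main__":
    # Hypothetical candidate grasp locations (metres) and affordance scores.
    candidates = [(0.4, 0.1, 0.9), (0.6, -0.2, 0.8), (0.5, 0.0, 1.1)]
    scores = [0.2, 1.5, -0.3]
    goal, p = most_likely_goal(candidates, scores)
    # Two known frames of a wrist position, then frames to fill up to the goal.
    prefix = [(0.0, 0.0, 0.8), (0.05, 0.0, 0.8)]
    for frame in min_accel_trajectory(prefix, goal, n_frames=10):
        print([round(v, 3) for v in frame])
    print("goal", goal, "p =", round(p, 2))
```

Because the prefix fixes both position and velocity at the start, the filled-in frames continue the observed motion smoothly before bending toward the goal. Minimising squared accelerations with fixed frames is a linear least-squares problem, so the sketch solves its normal equations directly rather than iterating; a full-body, constraint-based optimizer would have to handle many more degrees of freedom and constraints, which this toy omits.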