Jennifer Williams

Disentangling Speaker Traits for Deepfake Source Verification via Chebyshev Polynomial and Riemannian Metric Learning

Mar 23, 2026

Abstract:Speech deepfake source verification systems aim to determine whether two synthetic speech utterances originate from the same source generator, often assuming that the resulting source embeddings are independent of speaker traits. However, this assumption remains unverified. In this paper, we first investigate the impact of speaker factors on source verification. We propose a speaker-disentangled metric learning (SDML) framework incorporating two novel loss functions. The first leverages Chebyshev polynomials to mitigate gradient instability during disentanglement optimization. The second projects source and speaker embeddings into hyperbolic space, leveraging Riemannian metric distances to reduce speaker information and learn more discriminative source features. Experimental results on the MLAAD benchmark, evaluated under four newly proposed protocols designed for source-speaker disentanglement scenarios, demonstrate the effectiveness of the SDML framework. The code, evaluation protocols and demo website are available at https://github.com/xxuan-acoustics/RiemannSD-Net.

A New Approach to Voice Authenticity

Feb 09, 2024

Abstract:Voice faking, driven primarily by recent advances in text-to-speech (TTS) synthesis technology, poses significant societal challenges. Currently, the prevailing assumption is that unaltered human speech can be considered genuine, while fake speech comes from TTS synthesis. We argue that this binary distinction is oversimplified. For instance, altered playback speeds can be used for malicious purposes, like in the 'Drunken Nancy Pelosi' incident. Similarly, editing of audio clips can be done ethically, e.g., for brevity or summarization in news reporting or podcasts, but editing can also create misleading narratives. In this paper, we propose a conceptual shift away from the binary paradigm of audio being either 'fake' or 'real'. Instead, our focus is on pinpointing 'voice edits', which encompass traditional modifications like filters and cuts, as well as TTS synthesis and VC systems. We delineate 6 categories and curate a new challenge dataset rooted in the M-AILABS corpus, for which we present baseline detection systems. And most importantly, we argue that merely categorizing audio as fake or real is a dangerous over-simplification that will fail to move the field of speech technology forward.

Exploratory Evaluation of Speech Content Masking

Jan 08, 2024

Abstract:Most recent speech privacy efforts have focused on anonymizing acoustic speaker attributes, but there has not been as much research into protecting information from speech content. We introduce a toy problem that explores an emerging type of privacy called "content masking", which conceals selected words and phrases in speech. In our efforts to define this problem space, we evaluate an introductory baseline masking technique based on modifying sequences of discrete phone representations (phone codes) produced from a pre-trained vector-quantized variational autoencoder (VQ-VAE) and re-synthesized using WaveRNN. We investigate three different masking locations and three types of masking strategies: noise substitution, word deletion, and phone sequence reversal. Our work attempts to characterize how masking affects two downstream tasks: automatic speech recognition (ASR) and automatic speaker verification (ASV). We observe how the different mask types and locations impact these downstream tasks and discuss how these issues may influence privacy goals.

Protecting Publicly Available Data With Machine Learning Shortcuts

Oct 30, 2023

Abstract:Machine-learning (ML) shortcuts or spurious correlations are artifacts in datasets that lead to very good training and test performance but severely limit the model's generalization capability. Such shortcuts are insidious because they go unnoticed due to good in-domain test performance. In this paper, we explore the influence of different shortcuts and show that even simple shortcuts are difficult to detect by explainable AI methods. We then exploit this fact and design an approach to defend online databases against crawlers: providers such as dating platforms, clothing manufacturers, or used car dealers have to deal with a professionalized crawling industry that grabs and resells data points on a large scale. We show that a deterrent can be created by deliberately adding ML shortcuts. Such augmented datasets are then unusable for ML use cases, which deters crawlers and the unauthorized use of data from the internet. Using real-world data from three use cases, we show that the proposed approach renders such collected data unusable, while the shortcut is at the same time difficult to notice in human perception. Thus, our proposed approach can serve as a proactive protection against illegitimate data crawling.

New Challenges for Content Privacy in Speech and Audio

Jan 21, 2023

Abstract:Privacy in speech and audio has many facets. A particularly under-developed area of privacy in this domain involves consideration for information related to content and context. Speech content can include words and their meaning or even stylistic markers, pathological speech, intonation patterns, or emotion. More generally, audio captured in-the-wild may contain background speech or reveal contextual information such as markers of location, room characteristics, paralinguistic sounds, or other audible events. Audio recording devices and speech technologies are becoming increasingly commonplace in everyday life. At the same time, commercialised speech and audio technologies do not provide consumers with a range of privacy choices. Even where privacy is regulated or protected by law, technical solutions to privacy assurance and enforcement fall short. This position paper introduces three important and timely research challenges for content privacy in speech and audio. We highlight current gaps and opportunities, and identify focus areas that could have significant implications for developing ethical and safer speech technologies.

Shortcut Removal for Improved OOD-Generalization

Nov 24, 2022

Abstract:Machine learning is a data-driven discipline, and learning success is largely dependent on the quality of the underlying data sets. However, it is becoming increasingly clear that even high performance on held-out test data does not necessarily mean that a model generalizes or learns anything meaningful at all. One reason for this is the presence of machine learning shortcuts, i.e., hints in the data that are predictive but accidental and semantically unconnected to the problem. We present a new approach to detect such shortcuts and a technique to automatically remove them from datasets. Using an adversarially trained lens, any small and highly predictive clues in images can be detected and removed. We show that this approach 1) does not cause degradation of model performance in the absence of these shortcuts, and 2) reliably identifies and neutralizes shortcuts from different image datasets. In our experiments, we are able to recover up to 93.8% of model performance in the presence of different shortcuts. Finally, we apply our model to a real-world dataset from the medical domain consisting of chest x-rays and identify and remove several types of shortcuts that are known to hinder real-world applicability. Thus, we hope that our proposed approach fosters real-world applicability of machine learning.

Analysis of Voice Conversion and Code-Switching Synthesis Using VQ-VAE

Mar 28, 2022

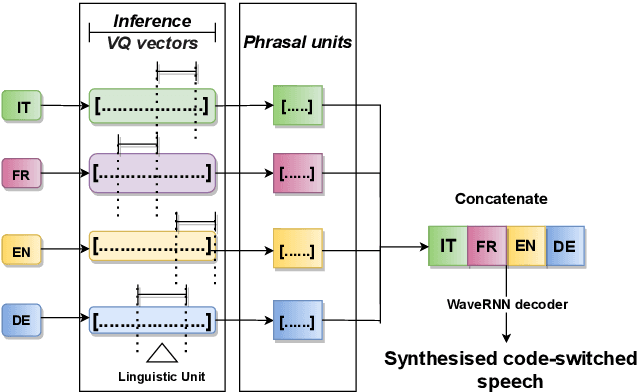

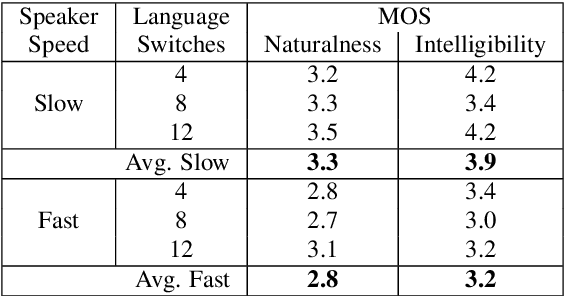

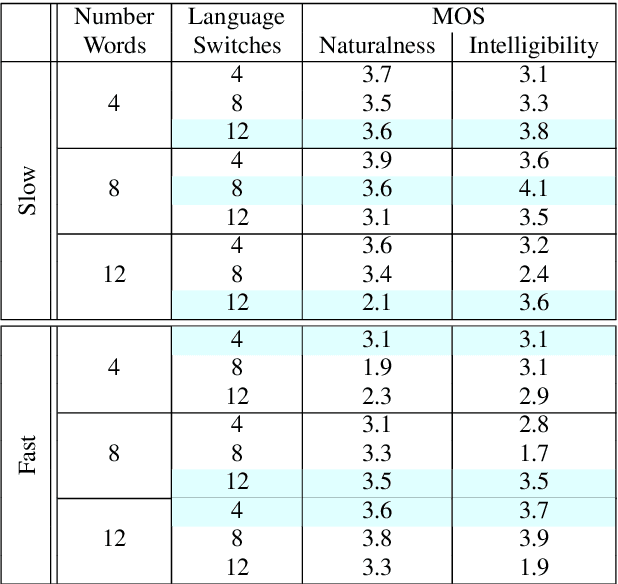

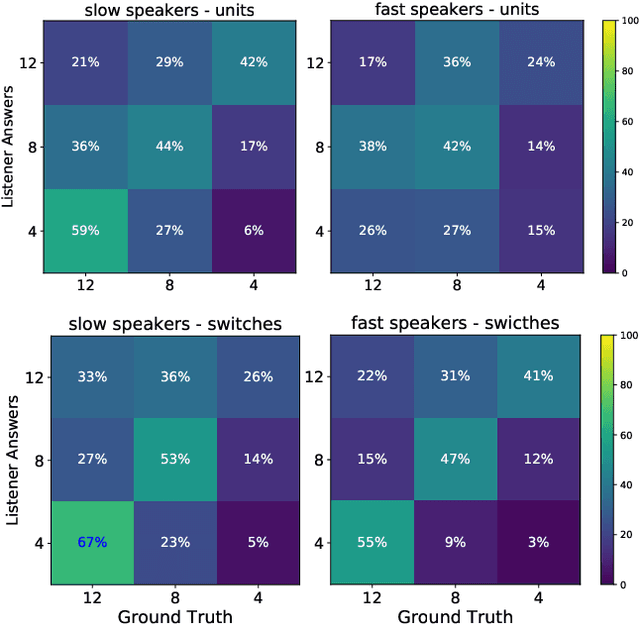

Abstract:This paper presents an analysis of speech synthesis quality achieved by simultaneously performing voice conversion and language code-switching using multilingual VQ-VAE speech synthesis in German, French, English and Italian. In this paper, we utilize VQ code indices representing phone information from VQ-VAE to perform code-switching and a VQ speaker code to perform voice conversion in a single system with a neural vocoder. Our analysis examines several aspects of code-switching including the number of language switches and the number of words involved in each switch. We found that speech synthesis quality degrades after increasing the number of language switches within an utterance and decreasing the number of words. We also found some evidence of accent transfer when performing voice conversion across languages as observed when a speaker's original language differs from the language of a synthetic target utterance. We present results from our listening tests and discuss the inherent difficulties of assessing accent transfer in speech synthesis. Our work highlights some of the limitations and strengths of using a semi-supervised end-to-end system like VQ-VAE for handling multilingual synthesis. Our work provides insight into why multilingual speech synthesis is challenging and we suggest some directions for expanding work in this area.

Attacker Attribution of Audio Deepfakes

Mar 28, 2022

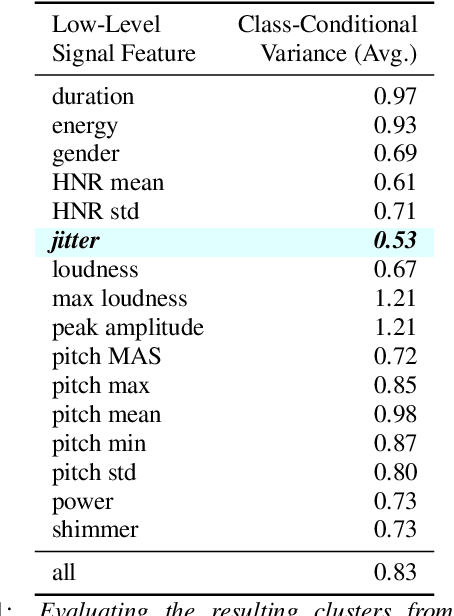

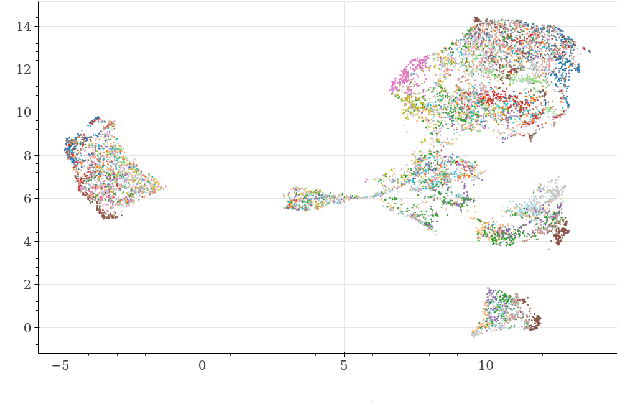

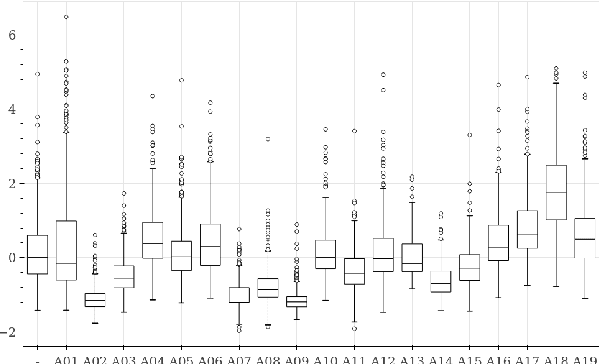

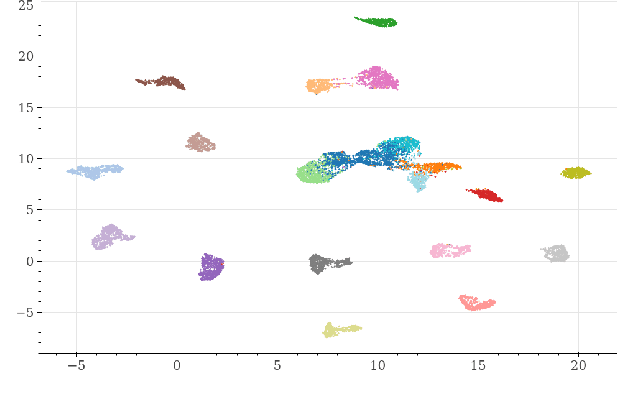

Abstract:Deepfakes are synthetically generated media often devised with malicious intent. They have become increasingly convincing with large training datasets and advanced neural networks. These fakes are readily being misused for slander, misinformation and fraud. For this reason, intensive research for developing countermeasures is also expanding. However, recent work is almost exclusively limited to deepfake detection, i.e., predicting if audio is real or fake. This is despite the fact that attribution (who created which fake?) is an essential building block of a larger defense strategy, as practiced in the field of cybersecurity for a long time. This paper considers the problem of deepfake attacker attribution in the domain of audio. We present several methods for creating attacker signatures using low-level acoustic descriptors and machine learning embeddings. We show that speech signal features are inadequate for characterizing attacker signatures. However, we also demonstrate that embeddings from a recurrent neural network can successfully characterize attacks from both known and unknown attackers. Our attack signature embeddings result in distinct clusters, both for seen and unseen audio deepfakes. We show that these embeddings can be used in downstream tasks to great effect, scoring 97.10% accuracy in attacker-id classification.

Same Cause; Different Effects in the Brain

Feb 21, 2022

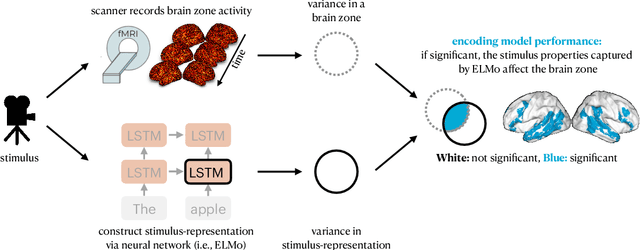

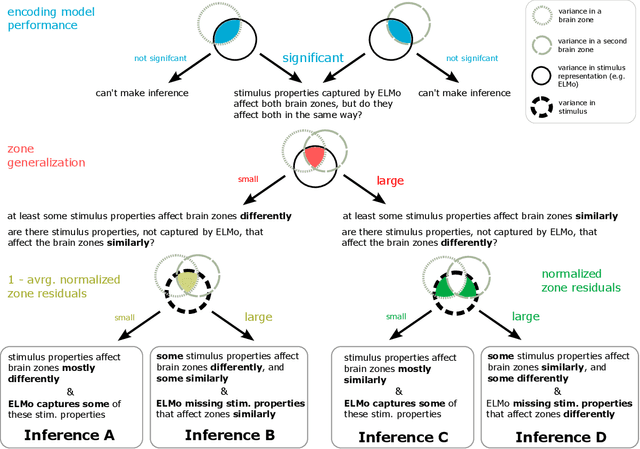

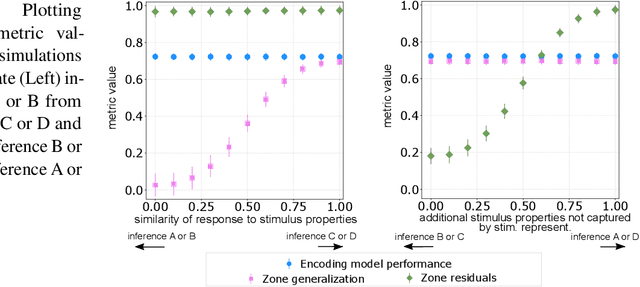

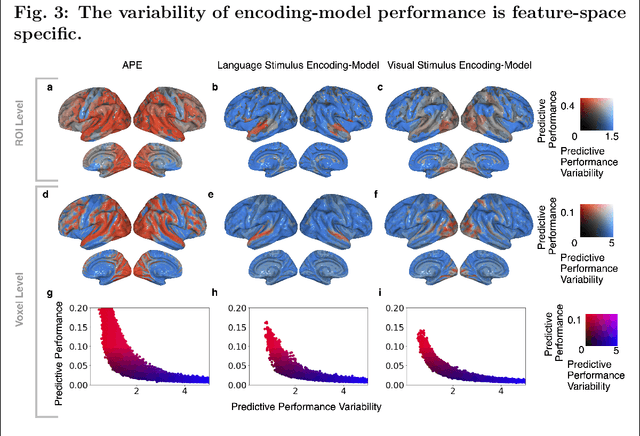

Abstract:To study information processing in the brain, neuroscientists manipulate experimental stimuli while recording participant brain activity. They can then use encoding models to find out which brain "zone" (e.g. which region of interest, volume pixel or electrophysiology sensor) is predicted from the stimulus properties. Given the assumptions underlying this setup, when stimulus properties are predictive of the activity in a zone, these properties are understood to cause activity in that zone. In recent years, researchers have used neural networks to construct representations that capture the diverse properties of complex stimuli, such as natural language or natural images. Encoding models built using these high-dimensional representations are often able to significantly predict the activity in large swathes of cortex, suggesting that the activity in all these brain zones is caused by stimulus properties captured in the representation. It is then natural to ask: "Is the activity in these different brain zones caused by the stimulus properties in the same way?" In neuroscientific terms, this corresponds to asking if these different zones process the stimulus properties in the same way. Here, we propose a new framework that enables researchers to ask if the properties of a stimulus affect two brain zones in the same way. We use simulated data and two real fMRI datasets with complex naturalistic stimuli to show that our framework enables us to make such inferences. Our inferences are strikingly consistent between the two datasets, indicating that the proposed framework is a promising new tool for neuroscientists to understand how information is processed in the brain.

Behavior measures are predicted by how information is encoded in an individual's brain

Dec 11, 2021

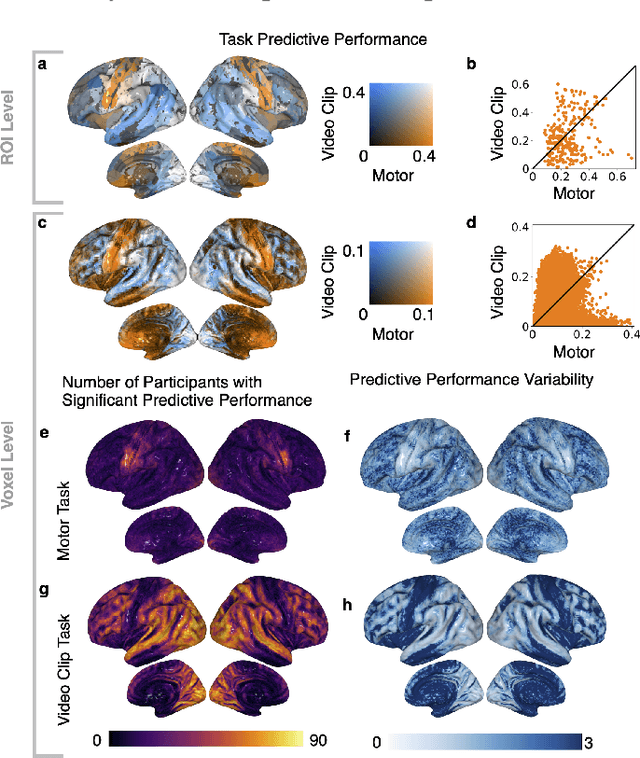

Abstract:Similar to how differences in the proficiency of the cardiovascular and musculoskeletal system predict an individual's athletic ability, differences in how the same brain region encodes information across individuals may explain their behavior. However, when studying how the brain encodes information, researchers choose different neuroimaging tasks (e.g., language or motor tasks), which can rely on processing different types of information and can modulate different brain regions. We hypothesize that individual differences in how information is encoded in the brain are task-specific and predict different behavior measures. We propose a framework using encoding-models to identify individual differences in brain encoding and test if these differences can predict behavior. We evaluate our framework using task functional magnetic resonance imaging data. Our results indicate that individual differences revealed by encoding-models are a powerful tool for predicting behavior, and that researchers should optimize their choice of task and encoding-model for their behavior of interest.
