James Stokes

Variational quantum and neural quantum states algorithms for the linear complementarity problem

Apr 10, 2025

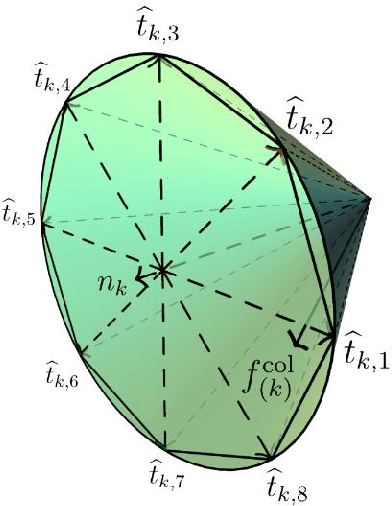

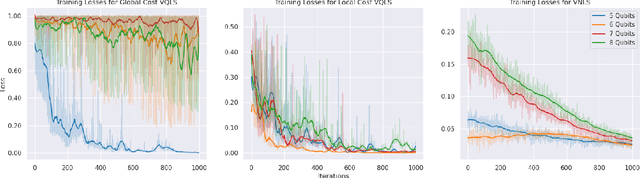

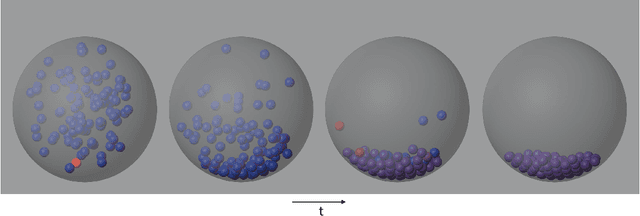

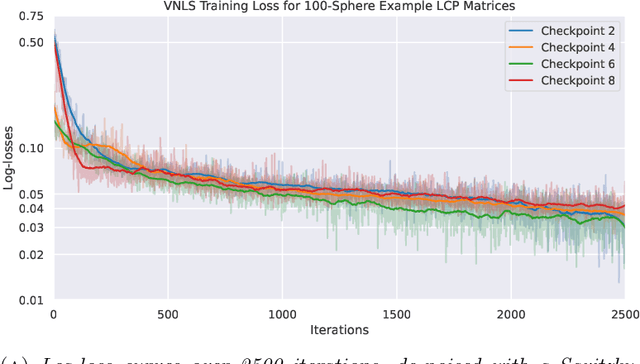

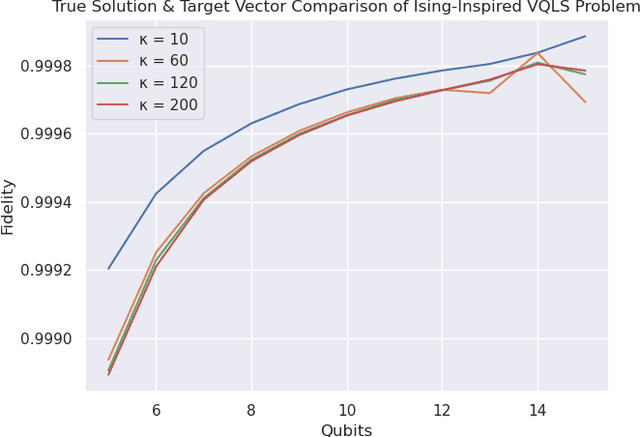

Abstract: Variational quantum algorithms (VQAs) are promising hybrid quantum-classical methods designed to leverage the computational advantages of quantum computing while mitigating the limitations of current noisy intermediate-scale quantum (NISQ) hardware. Although VQAs have been demonstrated as proofs of concept, their practical utility in solving real-world problems -- and whether quantum-inspired classical algorithms can match their performance -- remains an open question. We present a novel application of the variational quantum linear solver (VQLS) and its classical neural quantum states-based counterpart, the variational neural linear solver (VNLS), as key components within a minimum map Newton solver for a complementarity-based rigid body contact model. We demonstrate using the VNLS that our solver accurately simulates the dynamics of rigid spherical bodies during collision events. These results suggest that quantum and quantum-inspired linear algebra algorithms can serve as viable alternatives to standard linear algebra solvers for modeling certain physical systems.
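To make the role of the linear solver concrete, here is a minimal sketch of a minimum map Newton iteration for a linear complementarity problem (find x with x >= 0, Ax + b >= 0, and x · (Ax + b) = 0). It is not the paper's implementation: the function name `minimum_map_newton`, the `linear_solve` hook, and the 2x2 example are illustrative assumptions, the iteration has no line search, and `np.linalg.solve` stands in for the inner solve where a VQLS or VNLS routine would be substituted.

```python
import numpy as np

def minimum_map_newton(A, b, x0=None, tol=1e-10, max_iter=50,
                       linear_solve=np.linalg.solve):
    """Find x with H(x) = min(x, A x + b) = 0 (hypothetical helper, no line search)."""
    n = len(b)
    x = np.zeros(n) if x0 is None else np.asarray(x0, dtype=float).copy()
    for _ in range(max_iter):
        y = A @ x + b
        H = np.minimum(x, y)              # minimum map residual
        if np.linalg.norm(H) < tol:
            break
        # Generalized Jacobian of H: row i of A where y_i < x_i,
        # row i of the identity otherwise.
        active = y < x
        J = np.eye(n)
        J[active] = A[active]
        # Inner linear solve; a quantum or neural linear solver
        # would be plugged in here (assumption for illustration).
        dx = linear_solve(J, -H)
        x = x + dx
    return x

# Small illustrative problem (values chosen for this sketch only).
A = np.array([[2.0, 1.0], [1.0, 2.0]])
b = np.array([-1.0, 1.0])
x = minimum_map_newton(A, b)
print(x, A @ x + b)
```

Passing the solver as a `linear_solve` argument keeps the Newton loop unchanged when the inner solve is swapped out.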

Retentive Neural Quantum States: Efficient Ansätze for Ab Initio Quantum Chemistry

Nov 06, 2024

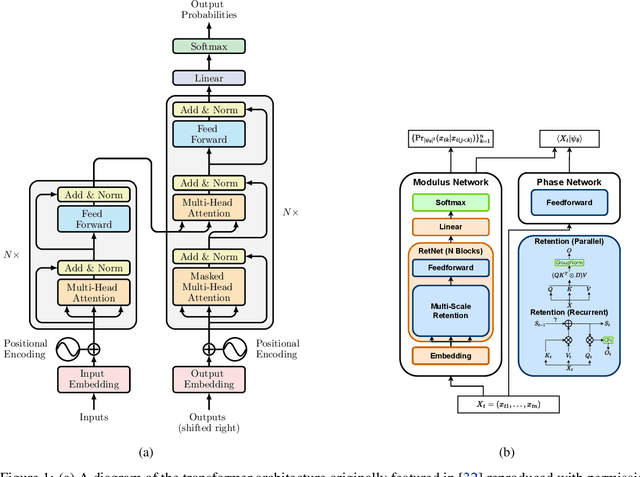

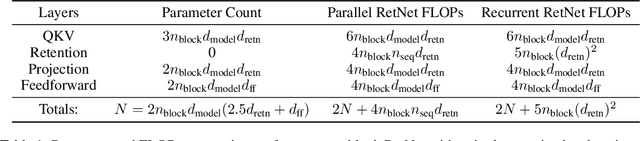

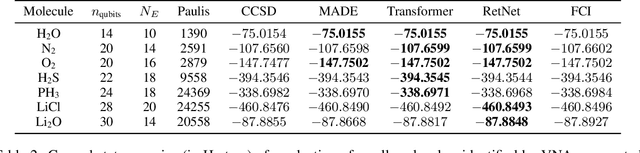

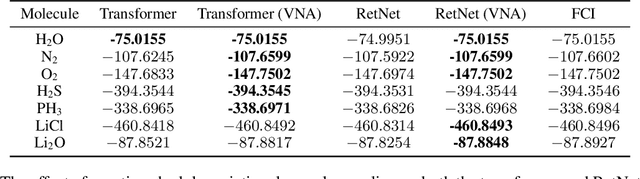

Abstract: Neural-network quantum states (NQS) have emerged as a powerful application of quantum-inspired deep learning for variational Monte Carlo methods, offering a competitive alternative to existing techniques for identifying ground states of quantum problems. A significant advancement toward improving the practical scalability of NQS has been the incorporation of autoregressive models, most recently transformers, as variational ansätze. Transformers learn sequence information with greater expressiveness than recurrent models, but at the cost of increased time complexity with respect to sequence length. We explore the use of the retentive network (RetNet), a recurrent alternative to transformers, as an ansatz for solving electronic ground state problems in $\textit{ab initio}$ quantum chemistry. Unlike transformers, RetNets overcome this time complexity bottleneck by processing data in parallel during training, and recurrently during inference. We give a simple computational cost estimate of the RetNet and directly compare it with similar estimates for transformers, establishing a clear threshold ratio of problem-to-model size past which the RetNet's time complexity outperforms that of the transformer. Though this efficiency can come at the expense of decreased expressiveness relative to the transformer, we overcome this gap through training strategies that leverage the autoregressive structure of the model -- namely, variational neural annealing. Our findings support the RetNet as a means of improving the time complexity of NQS without sacrificing accuracy. We provide further evidence that the ablative improvements of neural annealing extend beyond the RetNet architecture, suggesting it would serve as an effective general training strategy for autoregressive NQS.
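The parallel-versus-recurrent property mentioned in the abstract can be illustrated with a simplified single-head retention layer. The sketch below is an assumption-laden toy, not the paper's model: it omits the positional rotations, normalization, and gating of a full RetNet, and the function names and the decay value `gamma = 0.9` are chosen only for illustration. It checks that the parallel (training-style) and recurrent (inference-style) forms produce the same outputs.

```python
import numpy as np

def retention_parallel(Q, K, V, gamma):
    """Parallel form: (Q K^T * D) V with causal decay mask D[n, m] = gamma^(n-m), n >= m."""
    n = Q.shape[0]
    idx = np.arange(n)
    diff = idx[:, None] - idx[None, :]
    D = np.where(diff >= 0, gamma ** np.maximum(diff, 0), 0.0)
    return (Q @ K.T * D) @ V

def retention_recurrent(Q, K, V, gamma):
    """Recurrent form: S_n = gamma * S_{n-1} + k_n^T v_n, output o_n = q_n S_n."""
    S = np.zeros((K.shape[1], V.shape[1]))
    outputs = []
    for q, k, v in zip(Q, K, V):
        S = gamma * S + np.outer(k, v)   # constant-size state update per token
        outputs.append(q @ S)
    return np.stack(outputs)

rng = np.random.default_rng(0)
seq_len, d_k, d_v = 6, 4, 3
Q = rng.normal(size=(seq_len, d_k))
K = rng.normal(size=(seq_len, d_k))
V = rng.normal(size=(seq_len, d_v))

out_parallel = retention_parallel(Q, K, V, gamma=0.9)
out_recurrent = retention_recurrent(Q, K, V, gamma=0.9)
print(np.allclose(out_parallel, out_recurrent))
```

In the recurrent form each new token costs a fixed amount of work independent of sequence length, which is the property that matters for autoregressive sampling.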

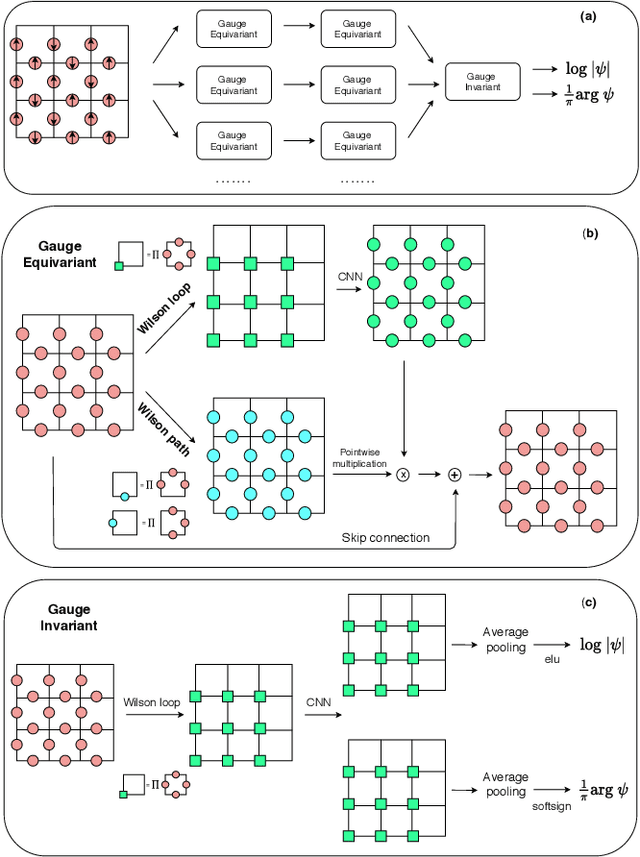

Gauge Equivariant Neural Networks for 2+1D U(1) Gauge Theory Simulations in Hamiltonian Formulation

Nov 06, 2022

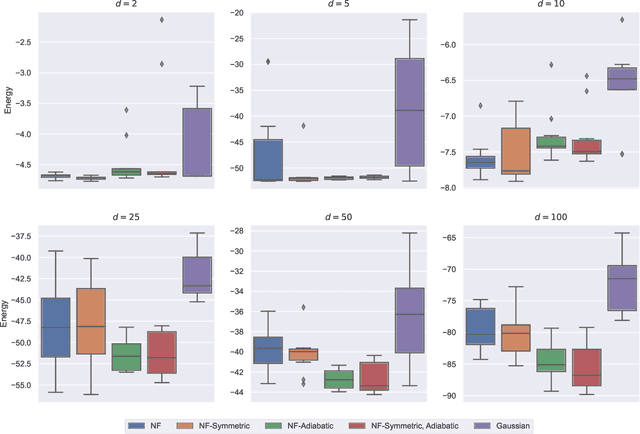

Abstract: Gauge theory plays a crucial role in many areas of science, including high-energy physics, condensed matter physics, and quantum information science. In quantum simulations of lattice gauge theory, an important step is to construct a wave function that obeys gauge symmetry. In this paper, we develop gauge equivariant neural network wave function techniques for simulating continuous-variable quantum lattice gauge theories in the Hamiltonian formulation. We apply the gauge equivariant neural network approach to find the ground state of 2+1-dimensional lattice gauge theory with U(1) gauge group using variational Monte Carlo. We benchmark our approach against state-of-the-art complex Gaussian wave functions, demonstrating improved performance in the strong coupling regime and comparable results in the weak coupling regime.

Toward Neural Network Simulation of Variational Quantum Algorithms

Nov 05, 2022

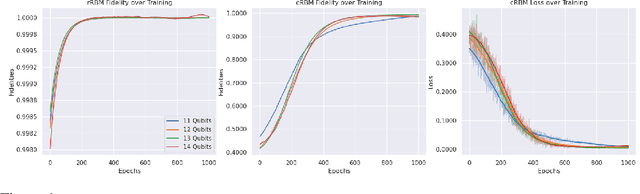

Abstract: Variational quantum algorithms (VQAs) utilize a hybrid quantum-classical architecture to recast problems of high-dimensional linear algebra as ones of stochastic optimization. Despite the promise of leveraging near- to intermediate-term quantum resources to accelerate this task, the computational advantage of VQAs over wholly classical algorithms has not been firmly established. For instance, while the variational quantum eigensolver (VQE) has been developed to approximate low-lying eigenmodes of high-dimensional sparse linear operators, analogous classical optimization algorithms exist in the variational Monte Carlo (VMC) literature, utilizing neural networks in place of quantum circuits to represent quantum states. In this paper we ask if classical stochastic optimization algorithms can be constructed paralleling other VQAs, focusing on the example of the variational quantum linear solver (VQLS). We find that such a construction can be applied to the VQLS, yielding a paradigm that could theoretically extend to other VQAs of similar form.
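As a rough illustration of how a linear system can be recast as an optimization problem, the sketch below evaluates a normalized overlap cost of the kind used in the VQLS literature, C = 1 - |<b|A|psi>|^2 / <psi|A^dagger A|psi>, which vanishes when A|psi> is proportional to |b>. It is an exact dense-vector toy, not the stochastic neural-network estimator studied in the paper; the function name `vqls_global_cost` and the 2x2 example are assumptions made for this sketch.

```python
import numpy as np

def vqls_global_cost(A, b, psi):
    """Return 1 - |<b|A|psi>|^2 / <psi|A^dag A|psi> for a trial vector psi (hypothetical helper)."""
    b = b / np.linalg.norm(b)            # normalize the target state |b>
    phi = A @ psi                        # unnormalized A|psi>
    overlap = np.vdot(b, phi)            # <b|A|psi> (vdot conjugates its first argument)
    return 1.0 - abs(overlap) ** 2 / np.vdot(phi, phi).real

# Illustrative 2x2 system (values chosen for this sketch only).
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([1.0, 0.0])

x_exact = np.linalg.solve(A, b)
cost_at_solution = vqls_global_cost(A, b, x_exact / np.linalg.norm(x_exact))
cost_elsewhere = vqls_global_cost(A, b, np.array([0.0, 1.0]))
print(cost_at_solution, cost_elsewhere)
```

A variational method would minimize this quantity over the parameters of a circuit or neural network that represents psi, rather than evaluating it on explicit vectors as done here.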

Scalable neural quantum states architecture for quantum chemistry

Aug 11, 2022

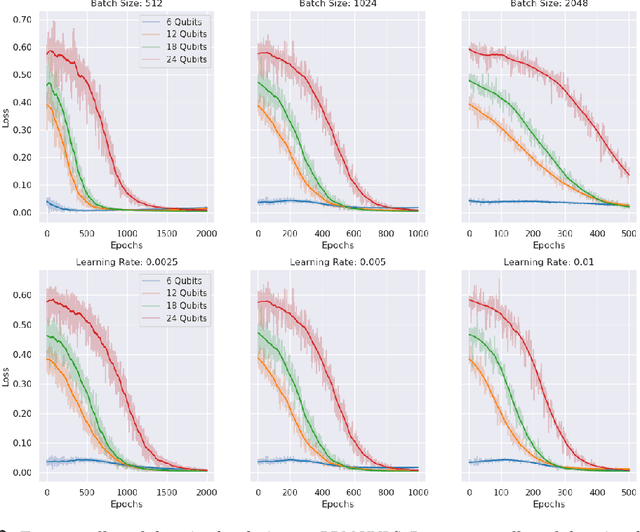

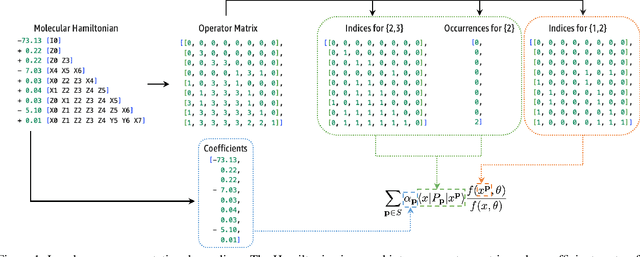

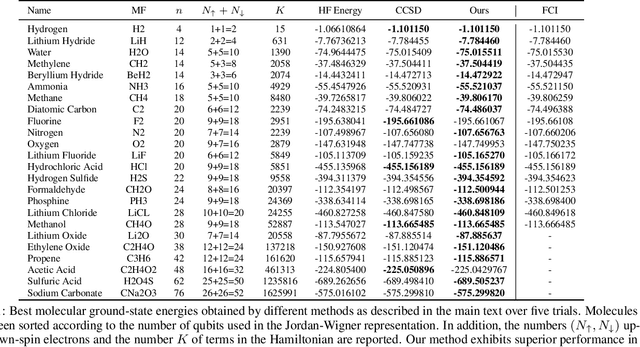

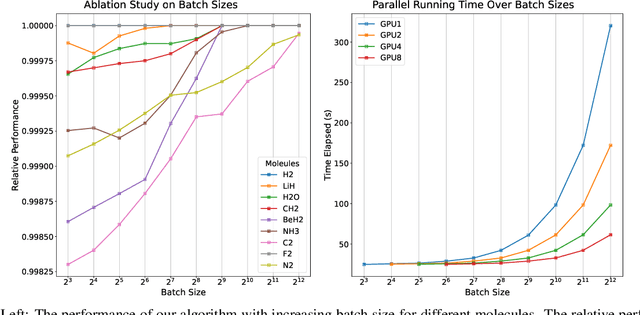

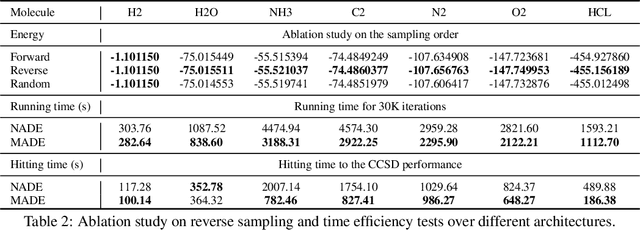

Abstract: Variational optimization of neural-network representations of quantum states has been successfully applied to solve interacting fermionic problems. Despite rapid developments, significant scalability challenges arise when considering large molecules, which correspond to non-locally interacting quantum spin Hamiltonians consisting of sums of thousands or even millions of Pauli operators. In this work, we introduce scalable parallelization strategies to improve neural-network-based variational quantum Monte Carlo calculations for ab-initio quantum chemistry applications. We establish GPU-supported local energy parallelism to compute the optimization objective for Hamiltonians of potentially complex molecules. Using autoregressive sampling techniques, we demonstrate systematic improvement in wall-clock timings required to achieve CCSD baseline target energies. The performance is further enhanced by accommodating the structure of resultant spin Hamiltonians into the autoregressive sampling ordering. The algorithm achieves promising performance in comparison with classical approximate methods and exhibits both running time and scalability advantages over existing neural-network-based methods.
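The "local energy" that serves as the optimization objective in variational Monte Carlo can be sketched on a tiny dense example. Everything below is an assumption for illustration: a random real symmetric matrix stands in for the molecular spin Hamiltonian, the wavefunction is an explicit positive vector rather than a neural network, and the average is taken exactly over all basis states instead of over autoregressive samples.

```python
import numpy as np

def local_energies(H, psi):
    """E_loc(s) = sum_{s'} H[s, s'] * psi(s') / psi(s), evaluated for every basis state s."""
    return (H @ psi) / psi

rng = np.random.default_rng(0)
dim = 8
M = rng.normal(size=(dim, dim))
H = (M + M.T) / 2                        # toy real symmetric "Hamiltonian"
psi = rng.uniform(0.5, 1.5, size=dim)    # toy positive, unnormalized amplitudes

# Averaging E_loc over the Born distribution |psi|^2 reproduces the energy expectation.
prob = psi ** 2 / np.sum(psi ** 2)
energy_from_local = np.sum(prob * local_energies(H, psi))
energy_direct = psi @ H @ psi / (psi @ psi)
print(energy_from_local, energy_direct)
```

Each entry of `local_energies` depends only on one row of H, so the entries can be computed independently, which is what makes this quantity a natural target for parallel evaluation.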

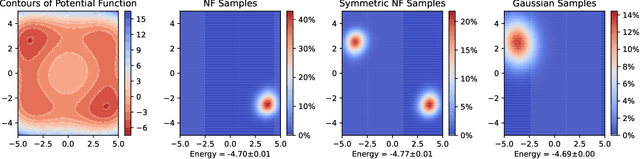

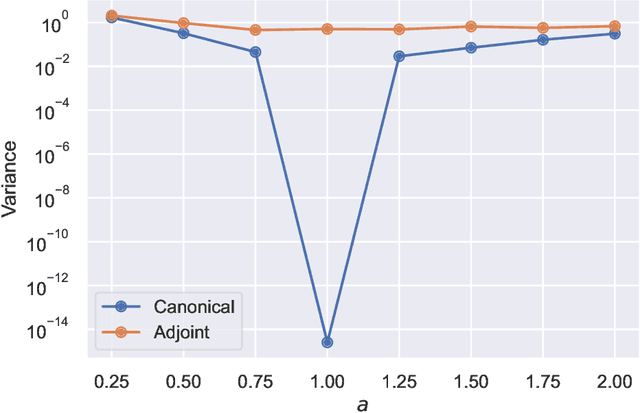

Numerical and geometrical aspects of flow-based variational quantum Monte Carlo

Mar 28, 2022

Abstract: This article aims to summarize recent and ongoing efforts to simulate continuous-variable quantum systems using flow-based variational quantum Monte Carlo techniques, focusing for pedagogical purposes on the example of bosons in the field amplitude (quadrature) basis. Particular emphasis is placed on the variational real- and imaginary-time evolution problems, carefully reviewing the stochastic estimation of the time-dependent variational principles and their relationship with information geometry. Some practical instructions are provided to guide the implementation of a PyTorch code. The review is intended to be accessible to researchers interested in machine learning and quantum information science.

Continuous-variable neural-network quantum states and the quantum rotor model

Jul 15, 2021

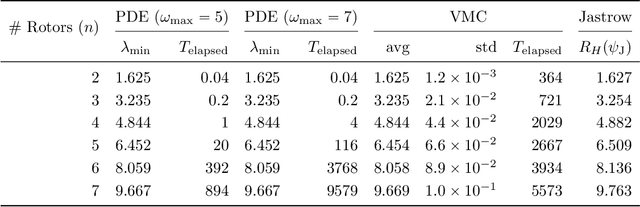

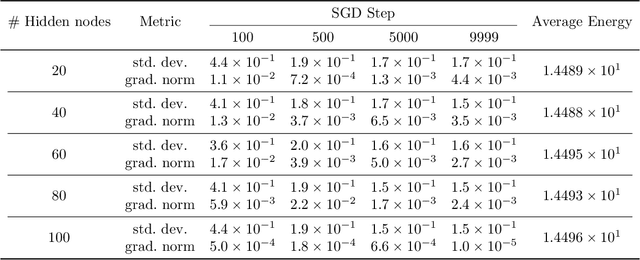

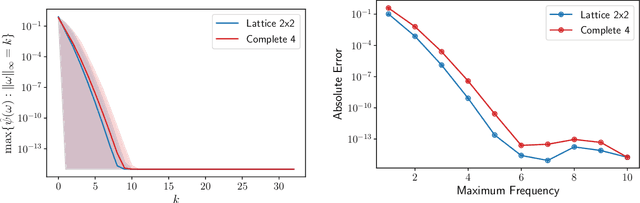

Abstract: We initiate the study of neural-network quantum state algorithms for analyzing continuous-variable lattice quantum systems in first quantization. A simple family of continuous-variable trial wavefunctions is introduced which naturally generalizes the restricted Boltzmann machine (RBM) wavefunction introduced for analyzing quantum spin systems. By virtue of its simplicity, the same variational Monte Carlo training algorithms that have been developed for ground state determination and time evolution of spin systems have natural analogues in the continuum. We offer a proof-of-principle demonstration in the context of ground state determination of a stoquastic quantum rotor Hamiltonian. Results are compared against those obtained from partial differential equation (PDE) based scalable eigensolvers. This study serves as a benchmark against which future investigation of continuous-variable neural quantum states can be compared, and points to the need to consider deep network architectures and more sophisticated training algorithms.

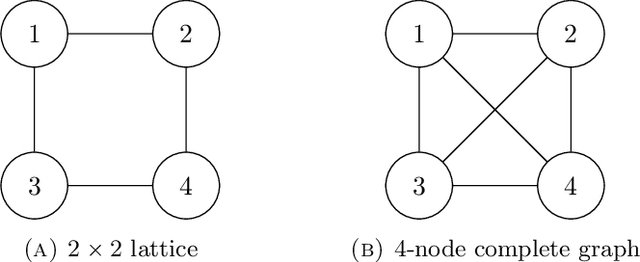

Gauge equivariant neural networks for quantum lattice gauge theories

Dec 09, 2020

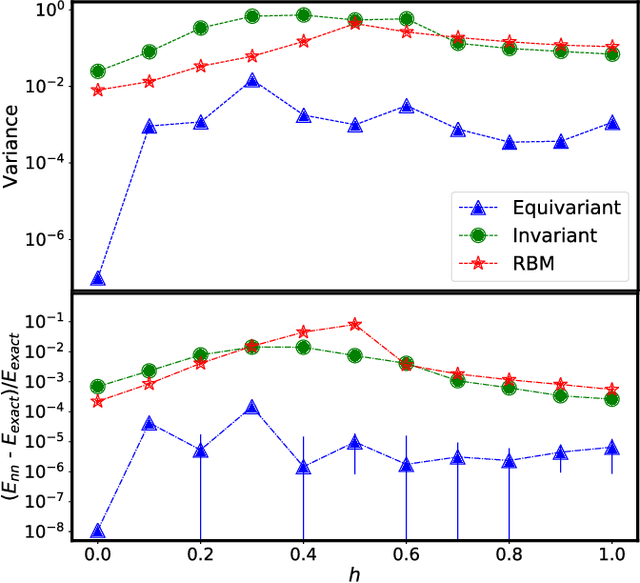

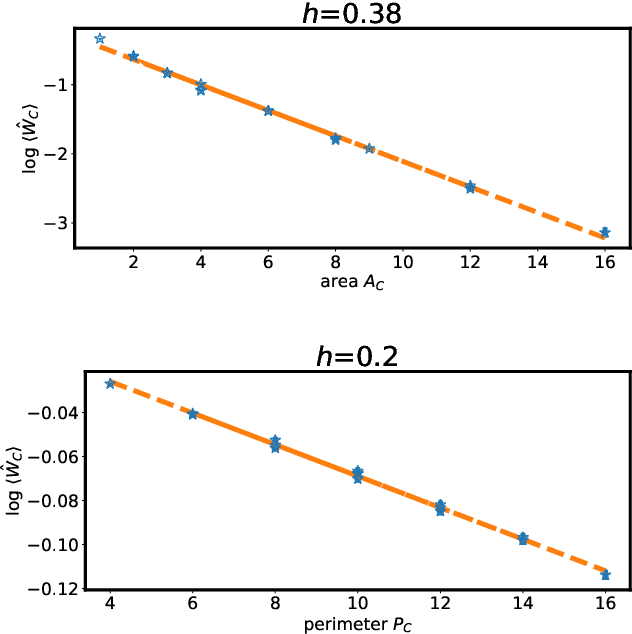

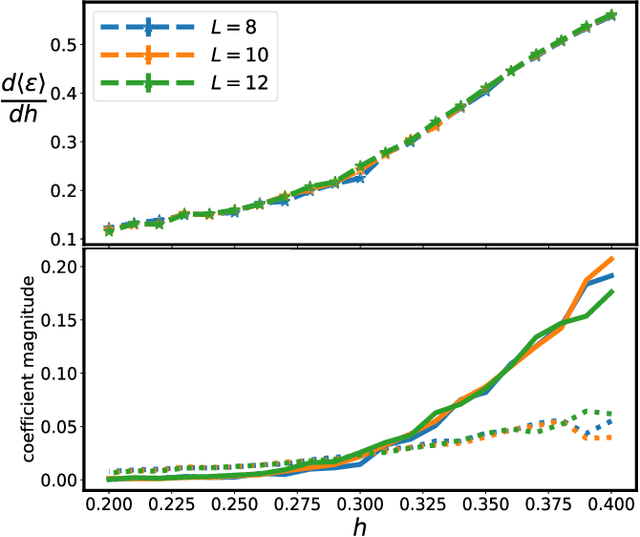

Abstract: Gauge symmetries play a key role in physics, appearing in areas such as quantum field theories of the fundamental particles and emergent degrees of freedom in quantum materials. Motivated by the desire to efficiently simulate many-body quantum systems with exact local gauge invariance, gauge equivariant neural-network quantum states are introduced, which exactly satisfy the local Hilbert space constraints necessary for the description of quantum lattice gauge theory with $\mathbb{Z}_d$ gauge group on different geometries. Focusing on the special case of $\mathbb{Z}_2$ gauge group on a periodically identified square lattice, the equivariant architecture is analytically shown to contain the loop-gas solution as a special case. Gauge equivariant neural-network quantum states are used in combination with variational quantum Monte Carlo to obtain compact descriptions of the ground state wavefunction for the $\mathbb{Z}_2$ theory away from the exactly solvable limit, and to demonstrate the confining/deconfining phase transition of the Wilson loop order parameter.

Meta Variational Monte Carlo

Nov 20, 2020

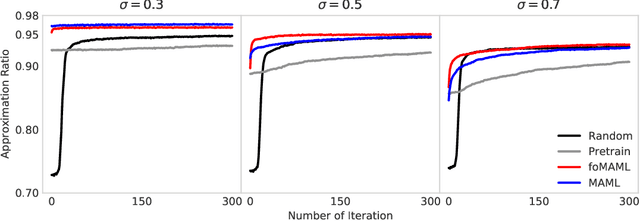

Abstract: An identification is found between meta-learning and the problem of determining the ground state of a randomly generated Hamiltonian drawn from a known ensemble. A model-agnostic meta-learning approach is proposed to solve the associated learning problem, and a preliminary experimental study of random Max-Cut problems indicates that the resulting Meta Variational Monte Carlo accelerates training and improves convergence.

Phases of two-dimensional spinless lattice fermions with first-quantized deep neural-network quantum states

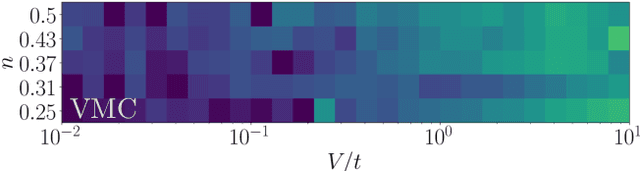

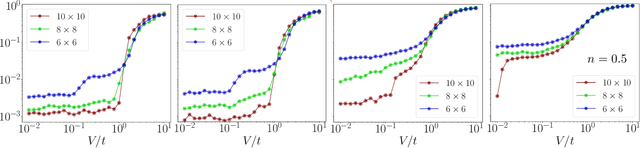

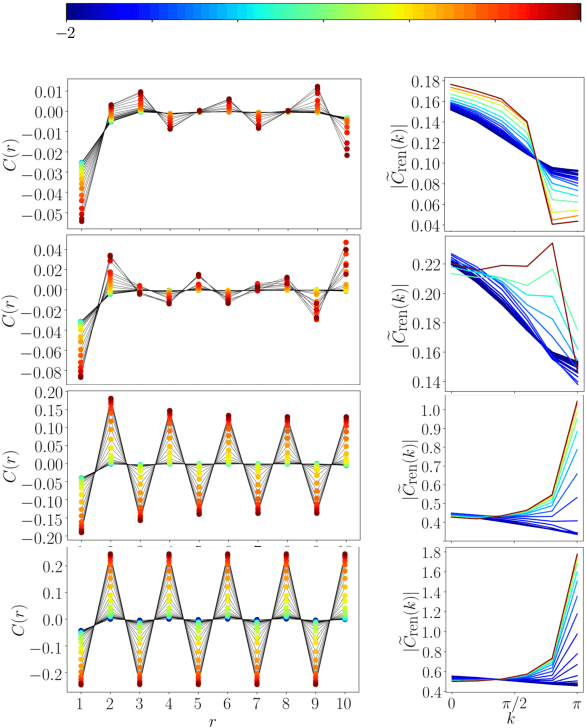

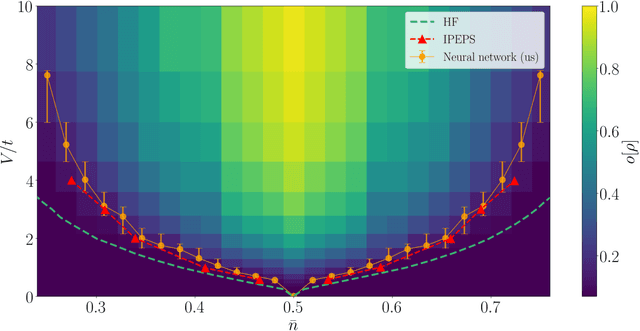

Jul 31, 2020

Abstract: First-quantized deep neural network techniques are developed for analyzing strongly coupled fermionic systems on the lattice. Using a Slater-Jastrow inspired ansatz which exploits deep residual networks with convolutional residual blocks, we approximately determine the ground state of spinless fermions on a square lattice with nearest-neighbor interactions. The flexibility of the neural-network ansatz results in a high level of accuracy when compared to exact diagonalization results on small systems, both for energy and correlation functions. On large systems, we obtain accurate estimates of the boundaries between metallic and charge ordered phases as a function of the interaction strength and the particle density.
