Hamid Sarmadi

Leveraging ChatGPT's Multimodal Vision Capabilities to Rank Satellite Images by Poverty Level: Advancing Tools for Social Science Research

Jan 24, 2025

Abstract: This paper investigates the novel application of Large Language Models (LLMs) with vision capabilities to analyze satellite imagery for village-level poverty prediction. Although LLMs were originally designed for natural language understanding, their adaptability to multimodal tasks, including geospatial analysis, has opened new frontiers in data-driven research. By leveraging advancements in vision-enabled LLMs, we assess their ability to provide interpretable, scalable, and reliable insights into human poverty from satellite images. Using a pairwise comparison approach, we demonstrate that ChatGPT can rank satellite images based on poverty levels with accuracy comparable to domain experts. These findings highlight both the promise and the limitations of LLMs in socioeconomic research, providing a foundation for their integration into poverty assessment workflows. This study contributes to the ongoing exploration of unconventional data sources for welfare analysis and opens pathways for cost-effective, large-scale poverty monitoring.
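
The abstract does not spell out how individual pairwise judgments become a ranking. The sketch below shows one common aggregation, counting "poorer" verdicts over all pairs (a Copeland-style win count). It is an illustrative assumption, not the paper's procedure. `poorer(a, b)` is a hypothetical stand-in for a query to the vision LLM that returns `True` when image `a` appears poorer than image `b`, and the wealth scores are made up.

```python
from itertools import combinations
from typing import Callable, Dict, List, Sequence


def rank_by_wins(items: Sequence[str], poorer: Callable[[str, str], bool]) -> List[str]:
    """Rank items from poorest to wealthiest using only pairwise judgments."""
    wins: Dict[str, int] = {item: 0 for item in items}
    for a, b in combinations(items, 2):
        # One judge query per unordered pair: n * (n - 1) / 2 queries in total.
        if poorer(a, b):
            wins[a] += 1
        else:
            wins[b] += 1
    # More "poorer" verdicts means an earlier (poorer) rank; ties keep input order.
    return sorted(items, key=lambda item: wins[item], reverse=True)


# Toy judge with hidden, made-up wealth scores standing in for the LLM.
wealth = {"img_a": 0.7, "img_b": 0.2, "img_c": 0.5, "img_d": 0.9}
print(rank_by_wins(list(wealth), lambda x, y: wealth[x] < wealth[y]))
# ['img_b', 'img_c', 'img_a', 'img_d']
```

Querying every pair costs quadratically many LLM calls, but a win count is less sensitive to an occasionally inconsistent judge than passing the same comparator to a sorting algorithm. A Bradley-Terry model is a more principled alternative for the same pairwise data.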

Towards Explaining Satellite Based Poverty Predictions with Convolutional Neural Networks

Dec 01, 2023

Abstract: Deep convolutional neural networks (CNNs) have been shown to predict poverty and development indicators from satellite images with surprising accuracy. This paper presents a first attempt at analyzing the CNN's responses in detail and explaining the basis for its predictions. Although it is trained on relatively low-resolution day- and night-time satellite images, the CNN model outperforms human subjects who view high-resolution images when ranking the Wealth Index categories. Multiple explainability experiments performed on the model indicate the importance of object sizes and pixel colors in the image, and provide a visualization of the importance of different structures in input images. A visualization is also provided of images that maximize the network's Wealth Index prediction, which offers clues about what the CNN's predictions are based on.
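
The abstract does not name the exact explainability methods used. As a hedged illustration of the general idea of locating important image structures, the sketch below implements occlusion sensitivity, a generic model-agnostic technique: blank out one patch at a time and record how much the predicted score drops. `predict` is a hypothetical stand-in for the trained CNN, and images are plain nested lists of pixel intensities so the example has no dependencies.

```python
from typing import Callable, List

Image = List[List[float]]


def occlusion_map(image: Image, predict: Callable[[Image], float],
                  patch: int = 2, fill: float = 0.0) -> Image:
    """Per-pixel drop in the predicted score when its patch is replaced by `fill`."""
    h, w = len(image), len(image[0])
    base = predict(image)
    heat = [[0.0] * w for _ in range(h)]
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            rows = range(top, min(top + patch, h))
            cols = range(left, min(left + patch, w))
            occluded = [row[:] for row in image]  # copy so the input is untouched
            for y in rows:
                for x in cols:
                    occluded[y][x] = fill
            drop = base - predict(occluded)  # large drop = important region
            for y in rows:
                for x in cols:
                    heat[y][x] = drop
    return heat


# Toy "wealth" score that only looks at the top-left 2x2 block.
def toy_predict(img: Image) -> float:
    return sum(img[y][x] for y in range(2) for x in range(2)) / 4.0


heat = occlusion_map([[1.0] * 4 for _ in range(4)], toy_predict)
print(heat[0])  # [1.0, 1.0, 0.0, 0.0]
```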

Explainable Predictive Maintenance

Jun 08, 2023

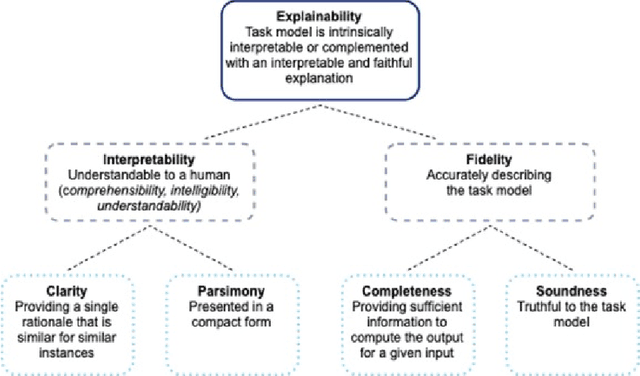

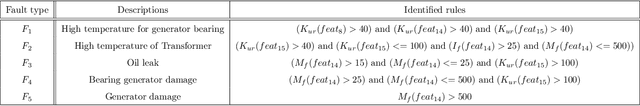

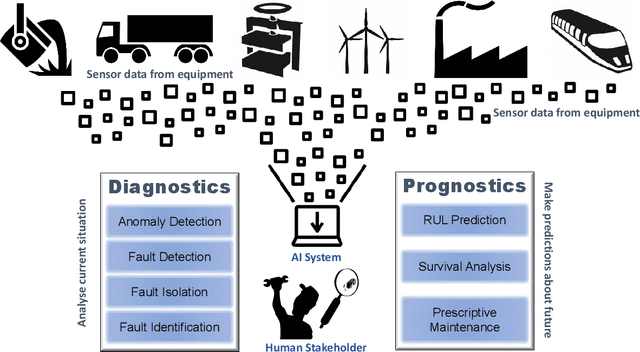

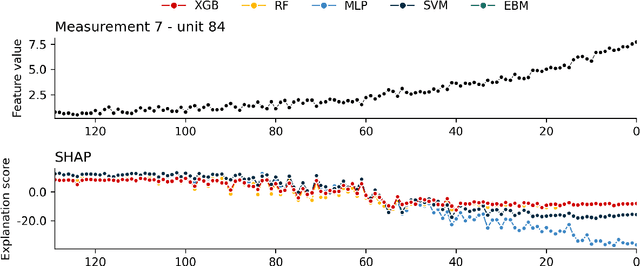

Abstract: Explainable Artificial Intelligence (XAI) serves as a critical interface fostering interactions between sophisticated intelligent systems and diverse individuals, including data scientists, domain experts, end-users, and more. It aids in deciphering the intricate internal mechanisms of "black box" Machine Learning (ML) models, making the reasons behind their decisions more understandable. However, current research in XAI primarily focuses on two aspects: ways to foster user trust, and ways to debug and refine the ML model. Most of it falls short of recognising the diverse types of explanations needed in broader contexts, as different users and application areas require solutions tailored to their specific needs. One such domain is Predictive Maintenance (PdM), a rapidly growing area of research under the Industry 4.0 & 5.0 umbrella. This position paper highlights the gap between existing XAI methodologies and the specific requirements for explanations within industrial applications, particularly in the Predictive Maintenance field. Despite explainability's crucial role, this subject remains relatively under-explored, making this paper a pioneering attempt to bring the relevant challenges to the research community's attention. We provide an overview of predictive maintenance tasks and highlight the need for, and varying purposes of, corresponding explanations. We then list and describe XAI techniques commonly employed in the literature, discussing their suitability for PdM tasks. Finally, to make the ideas and claims more concrete, we demonstrate XAI applied in four specific industrial use cases: commercial vehicles, metro trains, steel plants, and wind farms, spotlighting areas requiring further research.
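
To make the idea of a model-agnostic explanation concrete, the sketch below computes permutation feature importance, one widely used XAI technique. This abstract does not say whether the paper covers it. Shuffling one feature column breaks its link to the target, and the resulting increase in error measures how much the model relies on that feature. The sensor names, data, and remaining-useful-life model are all hypothetical.

```python
import random
from typing import Callable, List, Sequence

Row = List[float]


def mse(model: Callable[[Row], float], X: Sequence[Row], y: Sequence[float]) -> float:
    return sum((model(row) - target) ** 2 for row, target in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=5, seed=0) -> List[float]:
    """Mean increase in MSE when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mse(model, X, y)
    importances = []
    for j in range(len(X[0])):
        total = 0.0
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the feature/target relationship
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            total += mse(model, X_perm, y) - baseline
        importances.append(total / n_repeats)
    return importances


# Hypothetical PdM data: [vibration_rms, ambient_temp] -> remaining useful life.
X = [[float(i), float((i * 7) % 5)] for i in range(20)]
y = [100.0 - 2.0 * row[0] for row in X]


def rul_model(row: Row) -> float:
    return 100.0 - 2.0 * row[0]  # ignores ambient temperature entirely


importances = permutation_importance(rul_model, X, y)
print(importances)  # vibration gets a positive score, ambient_temp gets 0.0
```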

3D Reconstruction and Alignment by Consumer RGB-D Sensors and Fiducial Planar Markers for Patient Positioning in Radiation Therapy

Mar 22, 2021

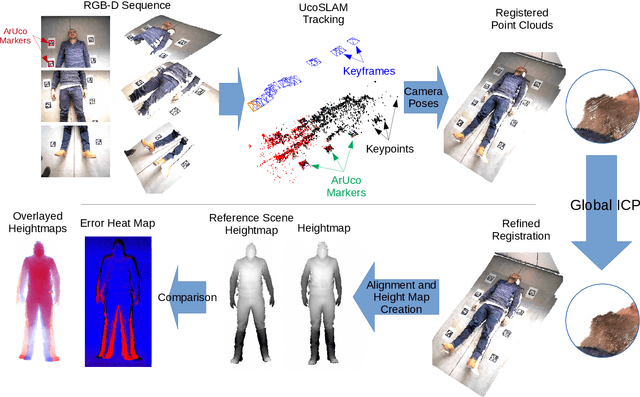

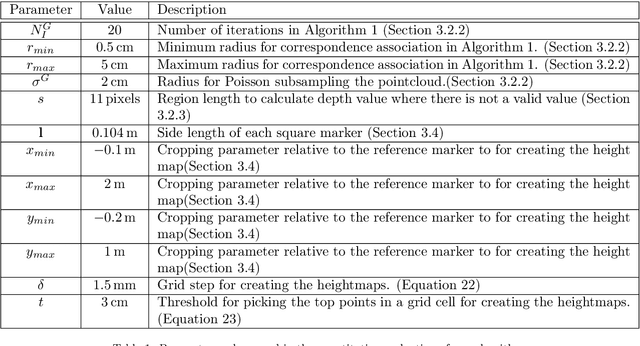

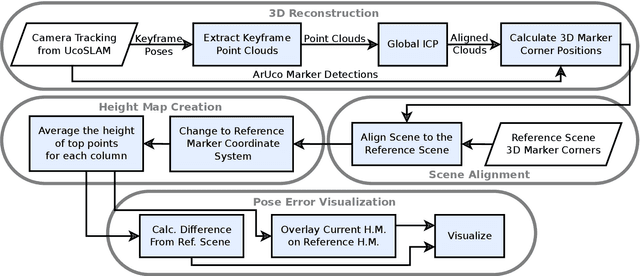

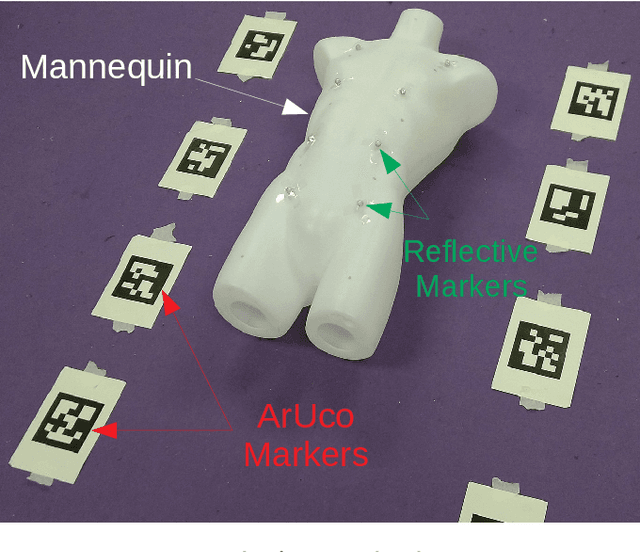

Abstract: BACKGROUND AND OBJECTIVE: Patient positioning is a crucial step in radiation therapy, for which non-invasive methods have been developed based on surface reconstruction using optical 3D imaging. However, most solutions need expensive specialized hardware and a careful calibration procedure that must be repeated over time. This paper proposes a fast and cheap patient positioning method based on inexpensive consumer-level RGB-D sensors. METHODS: The proposed method relies on a 3D reconstruction approach that fuses, in real time, artificial and natural visual landmarks recorded from a hand-held RGB-D sensor. The video sequence is transformed into a set of keyframes with known poses, which are later refined to obtain a realistic 3D reconstruction of the patient. The use of artificial landmarks allows our method to automatically align the reconstruction to a reference one, without the need to calibrate the system with respect to the linear accelerator coordinate system. RESULTS: The experiments conducted show that our method obtains a median translational error of 1 cm and a rotational error of 1 degree with respect to the reference pose. Additionally, the proposed method provides as visual output the overlaid poses (from the reference and the current scene) and an error map that can be used to correct the patient's current pose to match the reference pose. CONCLUSIONS: A novel approach to obtain 3D body reconstructions for patient positioning without requiring expensive hardware or dedicated graphics cards is proposed. The method can be used to align the patient's current pose to a preview pose in real time, which is a relevant step in radiation therapy.
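
The reported errors compare an estimated pose with the reference pose. The abstract does not define the metrics, so the sketch below assumes the usual ones. Translational error is the Euclidean distance between translations. Rotational error is the angle of the relative rotation `R_ref^T R_est`, computed as `acos((trace - 1) / 2)`. The median is then taken over frames. The poses (3x3 rotation matrices and translations in meters) and the frame values are made up for illustration.

```python
import math
import statistics
from typing import List, Sequence

Matrix3 = Sequence[Sequence[float]]


def rotation_error_deg(R_est: Matrix3, R_ref: Matrix3) -> float:
    """Angle (degrees) of the relative rotation R_ref^T @ R_est."""
    # trace(R_ref^T R_est) equals the sum of element-wise products.
    trace = sum(R_ref[i][j] * R_est[i][j] for i in range(3) for j in range(3))
    cos_angle = max(-1.0, min(1.0, (trace - 1.0) / 2.0))  # clamp rounding noise
    return math.degrees(math.acos(cos_angle))


def translation_error(t_est: Sequence[float], t_ref: Sequence[float]) -> float:
    return math.dist(t_est, t_ref)


def rot_z(deg: float) -> List[List[float]]:
    a = math.radians(deg)
    return [[math.cos(a), -math.sin(a), 0.0],
            [math.sin(a), math.cos(a), 0.0],
            [0.0, 0.0, 1.0]]


identity = rot_z(0.0)
frames = [(rot_z(0.5), [0.004, 0.0, 0.0]),
          (rot_z(1.0), [0.010, 0.0, 0.0]),
          (rot_z(2.0), [0.0, 0.020, 0.0])]
print(statistics.median(rotation_error_deg(R, identity) for R, _ in frames))  # about 1.0
print(statistics.median(translation_error(t, [0.0, 0.0, 0.0]) for _, t in frames))  # about 0.01
```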

Simultaneous Multi-View Camera Pose Estimation and Object Tracking with Square Planar Markers

Mar 16, 2021

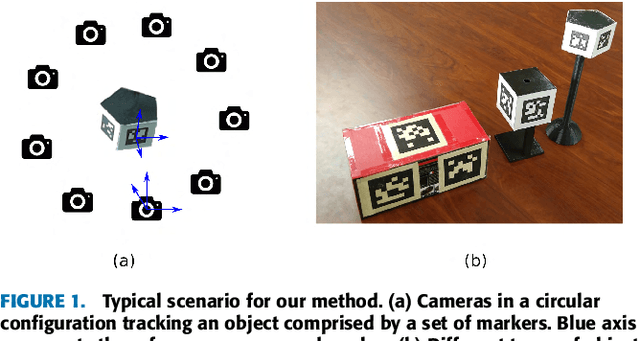

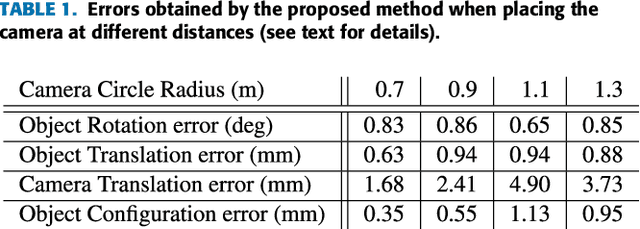

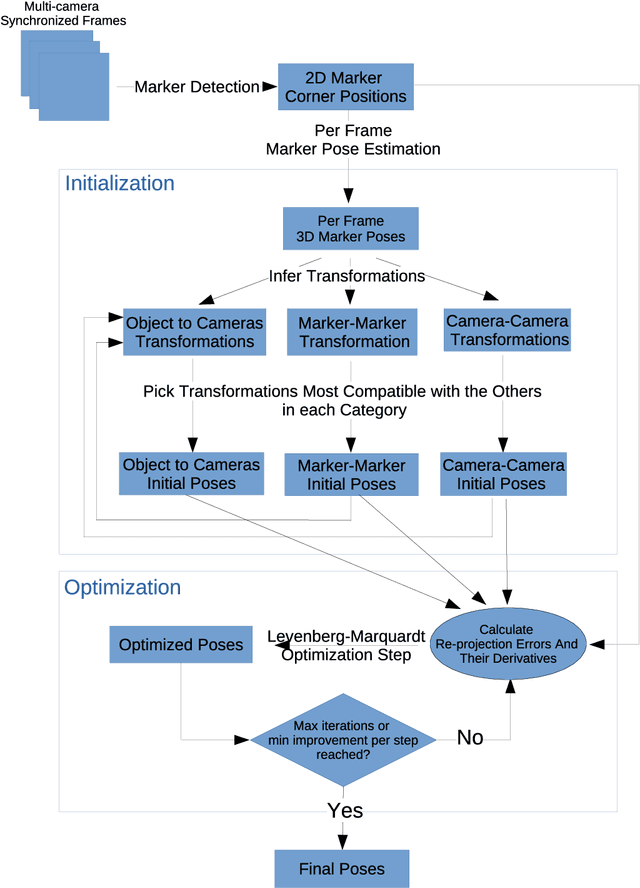

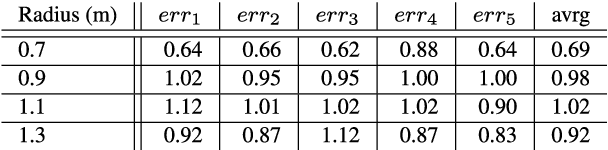

Abstract: Object tracking is a key aspect of many applications, such as augmented reality in medicine (e.g., tracking a surgical instrument) or robotics. Square planar markers have become popular tracking tools because their pose can be estimated from their four corners. While using a single marker and a single camera considerably limits the working area, high-accuracy tracking with multiple markers attached to an object requires estimating their relative positions, which is not trivial. Likewise, using multiple cameras requires estimating their extrinsic parameters, a tedious process that must be repeated whenever a camera is moved. This work proposes a novel method to solve these problems simultaneously. From a video sequence showing a rigid set of planar markers recorded from multiple cameras, the proposed method automatically obtains the three-dimensional configuration of the markers, the extrinsic parameters of the cameras, and the relative pose between the markers and the cameras at each frame. Our experiments show that our approach obtains highly accurate estimates of these parameters using low-resolution cameras. Once the parameters are obtained, the object can be tracked in real time at a low computational cost. The proposed method is a step forward in the development of cost-effective solutions for object tracking.
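
A square marker's pose can be recovered from its four corners because they define a plane-to-image homography. The sketch below computes that homography from four corner correspondences with a plain direct linear transform (DLT), fixing `H[2][2] = 1` and using a small Gaussian-elimination solver so the example has no dependencies. It is not the paper's method. Turning `H` into a rotation and translation additionally requires the camera intrinsics (for example, via a PnP solver), which is omitted here. The corner coordinates are made up.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def solve_linear(A: List[List[float]], b: List[float]) -> List[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [list(row) + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def homography_from_corners(src: Sequence[Point], dst: Sequence[Point]) -> List[List[float]]:
    """3x3 homography (H[2][2] = 1) mapping four src points onto four dst points."""
    A, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h6 x + h7 y + 1) = h0 x + h1 y + h2, and likewise for v.
        A.append([x, y, 1, 0, 0, 0, -u * x, -u * y]); b.append(u)
        A.append([0, 0, 0, x, y, 1, -v * x, -v * y]); b.append(v)
    h = solve_linear(A, b) + [1.0]
    return [h[0:3], h[3:6], h[6:9]]


def apply_homography(H: List[List[float]], p: Point) -> Point:
    x, y = p
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return ((H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w)


marker = [(0, 0), (1, 0), (1, 1), (0, 1)]            # corners in the marker frame
corners = [(10, 20), (110, 25), (105, 130), (5, 120)]  # hypothetical detected pixels
H = homography_from_corners(marker, corners)
print([apply_homography(H, p) for p in marker])  # reproduces the detected corners
```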

Detection of Binary Square Fiducial Markers Using an Event Camera

Jan 19, 2021

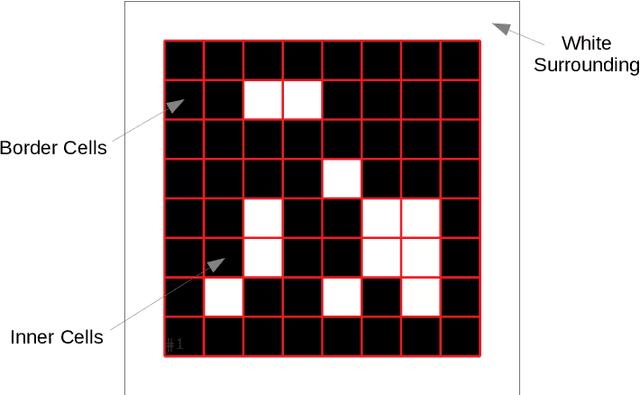

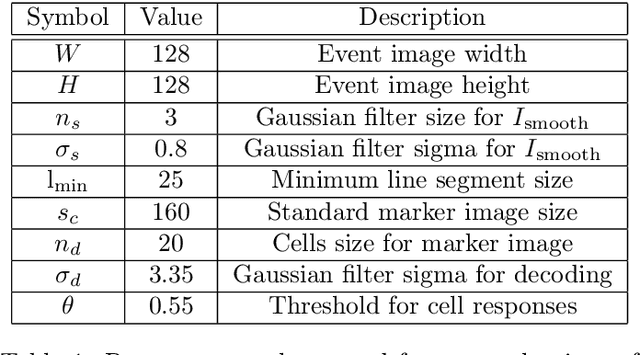

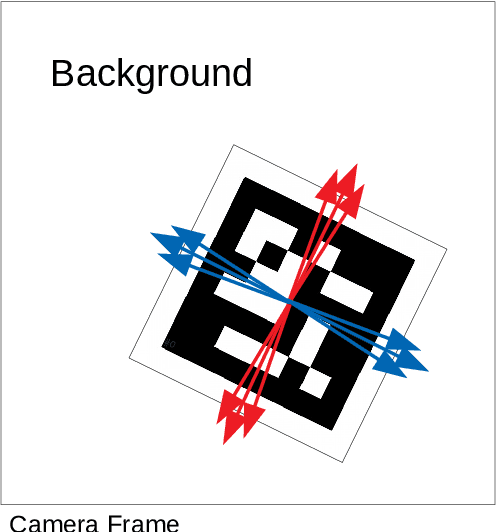

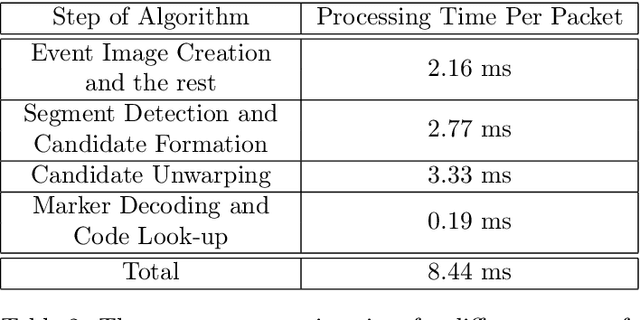

Abstract: Event cameras are a new type of image sensor that outputs changes in light intensity (events) instead of absolute intensity values. They have very high temporal resolution and high dynamic range. In this paper, we propose a method to detect and decode binary square markers using an event camera. We detect the edges of the markers by detecting line segments in an image created from the events in the current packet. The line segments are combined to form marker candidates. The bit values of the marker cells are decoded using the events on their borders. To the best of our knowledge, no other approach exists for detecting square binary markers directly from an event camera. Experimental results show that the performance of our proposal is much superior to that of the RGB ArUco marker detector. Additionally, the proposed method can run in real time on a single CPU thread.
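
The detector operates on an image created from the events in the current packet. The sketch below shows a minimal version of that accumulation step under stated assumptions. Each event is a hypothetical `(x, y, timestamp, polarity)` tuple, polarity is ignored, and each pixel counts its events. A line-segment detector would then run on the thresholded mask. The paper's exact image construction may differ.

```python
from typing import Iterable, List, Tuple

# Hypothetical event layout: (x, y, timestamp_seconds, polarity in {-1, +1}).
Event = Tuple[int, int, float, int]


def events_to_image(events: Iterable[Event], width: int, height: int) -> List[List[int]]:
    """Count how many events in the packet fired at each pixel (polarity ignored)."""
    image = [[0] * width for _ in range(height)]
    for x, y, _t, _polarity in events:
        image[y][x] += 1
    return image


def edge_mask(image: List[List[int]], min_events: int = 1) -> List[List[int]]:
    """Binary mask of pixels that received at least `min_events` events."""
    return [[1 if count >= min_events else 0 for count in row] for row in image]


# Synthetic packet: a vertical marker edge at x = 2 passing over the sensor.
packet = [(2, y, 0.001 * y, +1) for y in range(4)] + [(2, 1, 0.004, -1)]
counts = events_to_image(packet, width=5, height=4)
print(counts[1])             # [0, 0, 2, 0, 0]
print(edge_mask(counts)[0])  # [0, 0, 1, 0, 0]
```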

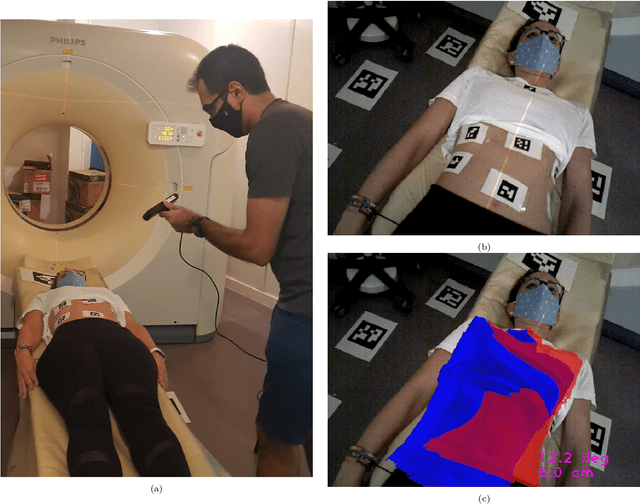

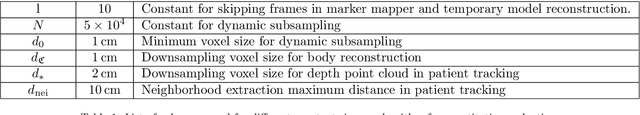

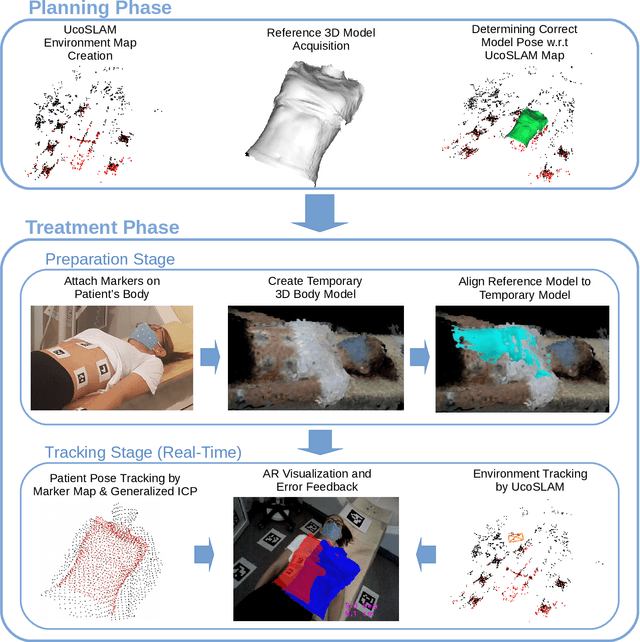

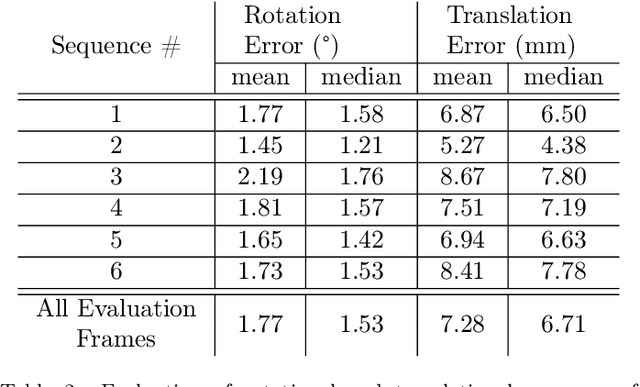

Joint Scene and Object Tracking for Cost-Effective Augmented Reality Assisted Patient Positioning in Radiation Therapy

Oct 05, 2020

Abstract: Background and Objective: Research in the field of Augmented Reality (AR) for patient positioning in radiation therapy is scarce. We propose an efficient and cost-effective algorithm for tracking the scene and the patient to interactively assist the patient positioning process by providing visual feedback to the operator. Methods: We use the marker mapper algorithm, combined with other steps including generalized ICP, to track the patient. We track the environment using the UcoSLAM algorithm. The alignment between the 3D reference model and the body marker map is calculated using our efficient body reconstruction algorithm. Results: Our quantitative evaluation shows that we achieved an average rotational error of 1.77 deg and a translational error of 7.28 mm. Our algorithm ran at an average frame rate of 19 fps. Furthermore, the qualitative results demonstrate the usefulness of our algorithm for patient positioning on different human subjects. Conclusion: Since our algorithm achieves a relatively high frame rate and accuracy on a regular laptop without a dedicated GPU, it is a very cost-effective AR-based patient positioning method.
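
Generalized ICP uses a covariance-weighted, plane-to-plane cost. The sketch below shows only the plain point-to-point ICP loop it builds on, in 2D for brevity. Each point is matched to its nearest target point, the closed-form least-squares rigid transform is solved, and the process repeats. This illustrates the technique, not the authors' pipeline, and the point sets are synthetic.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def best_rigid_2d(src: Sequence[Point], dst: Sequence[Point]) -> Tuple[float, Point]:
    """Least-squares rotation angle and translation mapping src onto dst."""
    n = len(src)
    csx, csy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    cdx, cdy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    s_dot = s_cross = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay, bx, by = px - csx, py - csy, qx - cdx, qy - cdy
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)  # optimal angle for centered points
    c, s = math.cos(theta), math.sin(theta)
    return theta, (cdx - (c * csx - s * csy), cdy - (s * csx + c * csy))


def transform(points: Sequence[Point], theta: float, t: Point) -> List[Point]:
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + t[0], s * x + c * y + t[1]) for x, y in points]


def icp_2d(source: Sequence[Point], target: Sequence[Point], iterations: int = 20) -> List[Point]:
    """Point-to-point ICP: returns the source points after alignment to target."""
    current = list(source)
    for _ in range(iterations):
        # Correspondences: nearest target point for every current source point.
        matched = [min(target, key=lambda q: math.dist(p, q)) for p in current]
        theta, t = best_rigid_2d(current, matched)
        current = transform(current, theta, t)
    return current


shape = [(-1, -1), (0, -1), (1, -1), (2, -1), (-1, 0), (-1, 1), (0, 1)]
target = transform(shape, 0.05, (0.1, 0.05))  # synthetic "moved patient"
aligned = icp_2d(shape, target)
print(max(math.dist(p, q) for p, q in zip(aligned, target)) < 1e-9)  # True
```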
