Haiying Wang

School of Automation, Harbin University of Science and Technology, Harbin, 150080, China

Camera-Invariant Meta-Learning Network for Single-Camera-Training Person Re-identification

Jun 21, 2024Abstract:Single-camera-training person re-identification (SCT re-ID) aims to train a re-ID model using SCT datasets where each person appears in only one camera. The main challenge of SCT re-ID is to learn camera-invariant feature representations without cross-camera same-person (CCSP) data as supervision. Previous methods address it by assuming that the most similar person should be found in another camera. However, this assumption is not guaranteed to be correct. In this paper, we propose a Camera-Invariant Meta-Learning Network (CIMN) for SCT re-ID. CIMN assumes that the camera-invariant feature representations should be robust to camera changes. To this end, we split the training data into meta-train set and meta-test set based on camera IDs and perform a cross-camera simulation via meta-learning strategy, aiming to enforce the representations learned from the meta-train set to be robust to the meta-test set. With the cross-camera simulation, CIMN can learn camera-invariant and identity-discriminative representations even there are no CCSP data. However, this simulation also causes the separation of the meta-train set and the meta-test set, which ignores some beneficial relations between them. Thus, we introduce three losses: meta triplet loss, meta classification loss, and meta camera alignment loss, to leverage the ignored relations. The experiment results demonstrate that our method achieves comparable performance with and without CCSP data, and outperforms the state-of-the-art methods on SCT re-ID benchmarks. In addition, it is also effective in improving the domain generalization ability of the model.

EAR-U-Net: EfficientNet and attention-based residual U-Net for automatic liver segmentation in CT

Oct 03, 2021

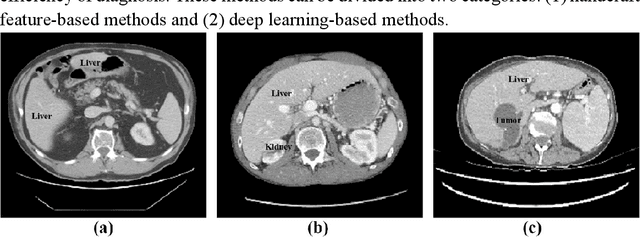

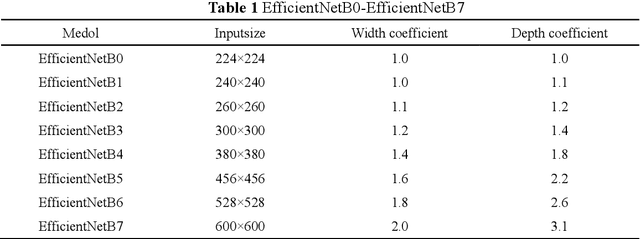

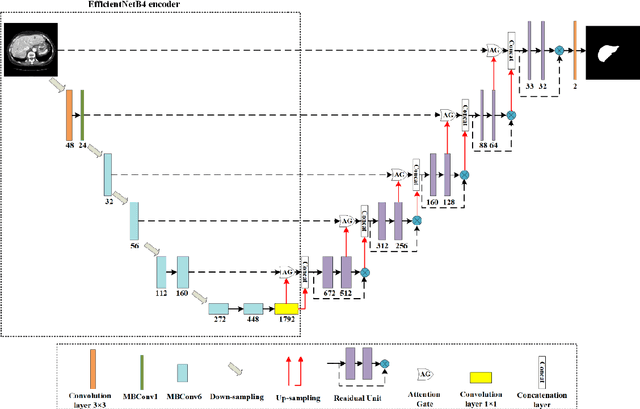

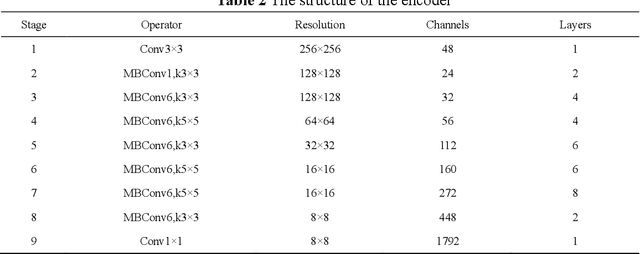

Abstract:Purpose: This paper proposes a new network framework called EAR-U-Net, which leverages EfficientNetB4, attention gate, and residual learning techniques to achieve automatic and accurate liver segmentation. Methods: The proposed method is based on the U-Net framework. First, we use EfficientNetB4 as the encoder to extract more feature information during the encoding stage. Then, an attention gate is introduced in the skip connection to eliminate irrelevant regions and highlight features of a specific segmentation task. Finally, to alleviate the problem of gradient vanishment, we replace the traditional convolution of the decoder with a residual block to improve the segmentation accuracy. Results: We verified the proposed method on the LiTS17 and SLiver07 datasets and compared it with classical networks such as FCN, U-Net, Attention U-Net, and Attention Res-U-Net. In the Sliver07 evaluation, the proposed method achieved the best segmentation performance on all five standard metrics. Meanwhile, in the LiTS17 assessment, the best performance is obtained except for a slight inferior on RVD. Moreover, we also participated in the MICCIA-LiTS17 challenge, and the Dice per case score was 0.952. Conclusion: The proposed method's qualitative and quantitative results demonstrated its applicability in liver segmentation and proved its good prospect in computer-assisted liver segmentation.

Rethinking the constraints of multimodal fusion: case study in Weakly-Supervised Audio-Visual Video Parsing

May 30, 2021

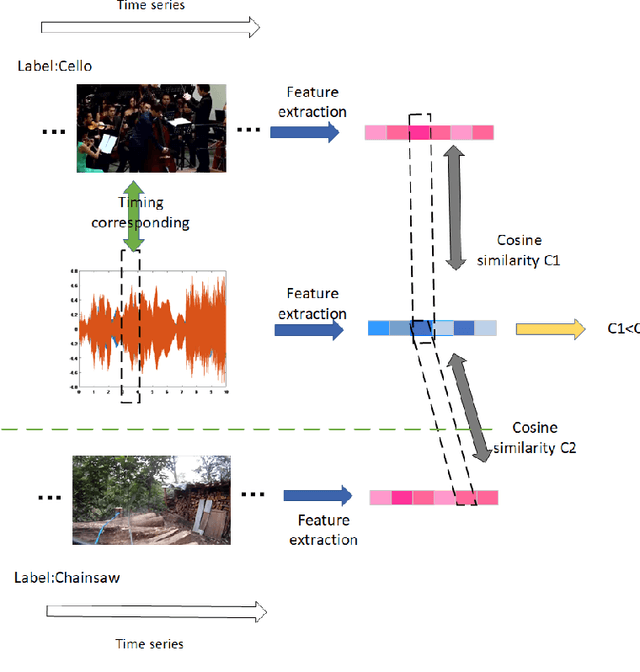

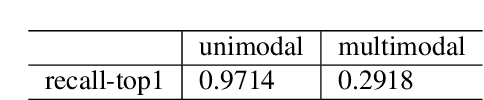

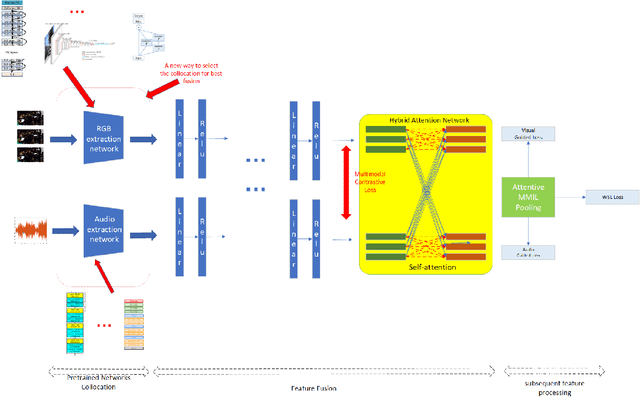

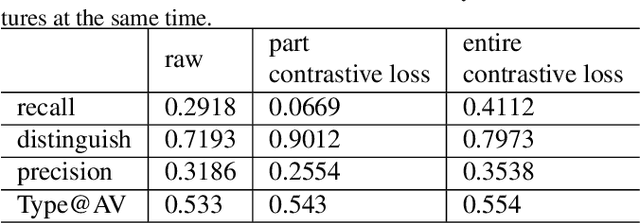

Abstract:For multimodal tasks, a good feature extraction network should extract information as much as possible and ensure that the extracted feature embedding and other modal feature embedding have an excellent mutual understanding. The latter is often more critical in feature fusion than the former. Therefore, selecting the optimal feature extraction network collocation is a very important subproblem in multimodal tasks. Most of the existing studies ignore this problem or adopt an ergodic approach. This problem is modeled as an optimization problem in this paper. A novel method is proposed to convert the optimization problem into an issue of comparative upper bounds by referring to the general practice of extreme value conversion in mathematics. Compared with the traditional method, it reduces the time cost. Meanwhile, aiming at the common problem that the feature similarity and the feature semantic similarity are not aligned in the multimodal time-series problem, we refer to the idea of contrast learning and propose a multimodal time-series contrastive loss(MTSC). Based on the above issues, We demonstrated the feasibility of our approach in the audio-visual video parsing task. Substantial analyses verify that our methods promote the fusion of different modal features.

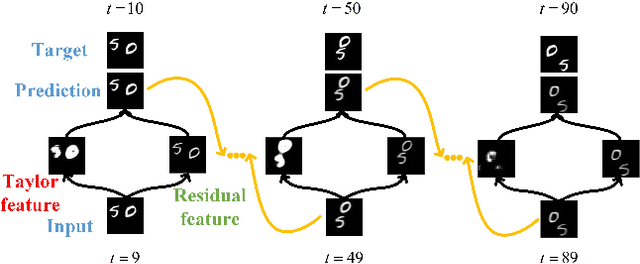

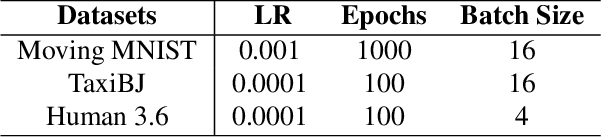

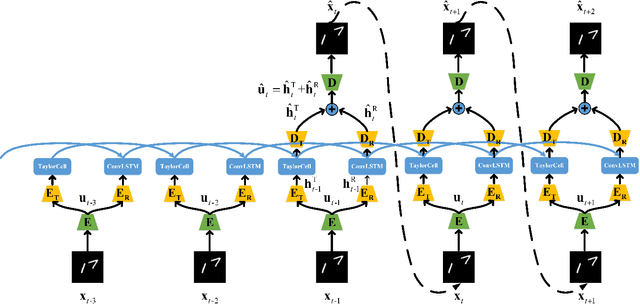

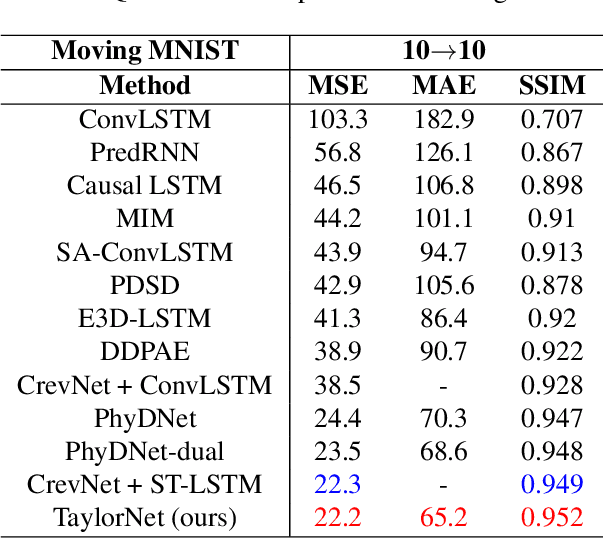

Taylor saves for later: disentanglement for video prediction using Taylor representation

May 24, 2021

Abstract:Video prediction is a challenging task with wide application prospects in meteorology and robot systems. Existing works fail to trade off short-term and long-term prediction performances and extract robust latent dynamics laws in video frames. We propose a two-branch seq-to-seq deep model to disentangle the Taylor feature and the residual feature in video frames by a novel recurrent prediction module (TaylorCell) and residual module. TaylorCell can expand the video frames' high-dimensional features into the finite Taylor series to describe the latent laws. In TaylorCell, we propose the Taylor prediction unit (TPU) and the memory correction unit (MCU). TPU employs the first input frame's derivative information to predict the future frames, avoiding error accumulation. MCU distills all past frames' information to correct the predicted Taylor feature from TPU. Correspondingly, the residual module extracts the residual feature complementary to the Taylor feature. On three generalist datasets (Moving MNIST, TaxiBJ, Human 3.6), our model outperforms or reaches state-of-the-art models, and ablation experiments demonstrate the effectiveness of our model in long-term prediction.

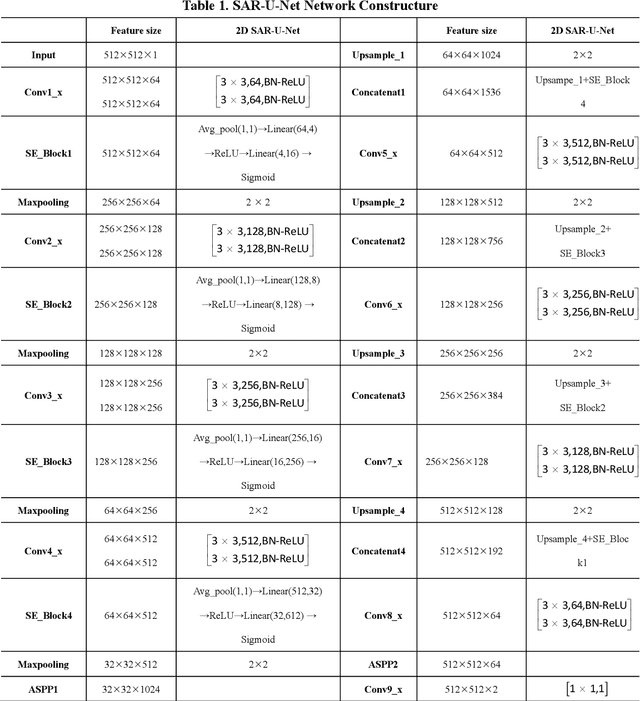

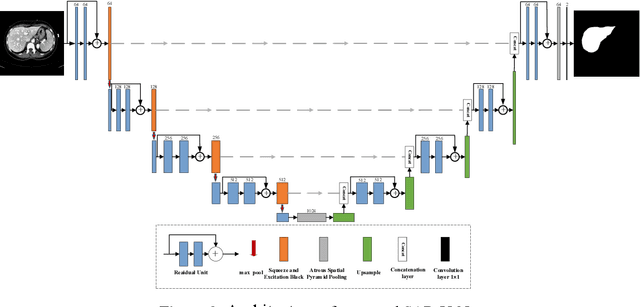

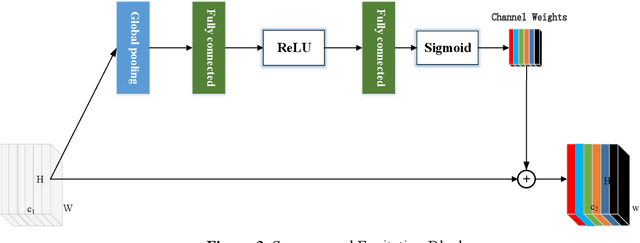

SAR-U-Net: squeeze-and-excitation block and atrous spatial pyramid pooling based residual U-Net for automatic liver CT segmentation

Mar 11, 2021

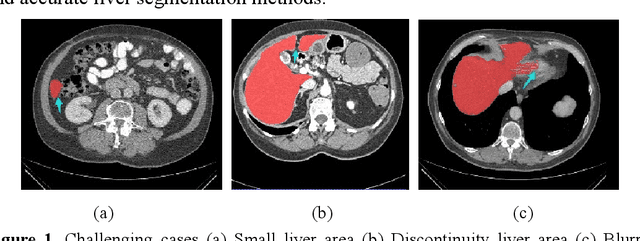

Abstract:Background and objective: In this paper, a modified U-Net based framework is presented, which leverages techniques from Squeeze-and-Excitation (SE) block, Atrous Spatial Pyramid Pooling (ASPP) and residual learning for accurate and robust liver CT segmentation, and the effectiveness of the proposed method was tested on two public datasets LiTS17 and SLiver07. Methods: A new network architecture called SAR-U-Net was designed. Firstly, the SE block is introduced to adaptively extract image features after each convolution in the U-Net encoder, while suppressing irrelevant regions, and highlighting features of specific segmentation task; Secondly, ASPP was employed to replace the transition layer and the output layer, and acquire multi-scale image information via different receptive fields. Thirdly, to alleviate the degradation problem, the traditional convolution block was replaced with the residual block and thus prompt the network to gain accuracy from considerably increased depth. Results: In the LiTS17 experiment, the mean values of Dice, VOE, RVD, ASD and MSD were 95.71, 9.52, -0.84, 1.54 and 29.14, respectively. Compared with other closely related 2D-based models, the proposed method achieved the highest accuracy. In the experiment of the SLiver07, the mean values of Dice, VOE, RVD, ASD and MSD were 97.31, 5.37, -1.08, 1.85 and 27.45, respectively. Compared with other closely related models, the proposed method achieved the highest segmentation accuracy except for the RVD. Conclusion: The proposed model enables a great improvement on the accuracy compared to 2D-based models, and its robustness in circumvent challenging problems, such as small liver regions, discontinuous liver regions, and fuzzy liver boundaries, is also well demonstrated and validated.

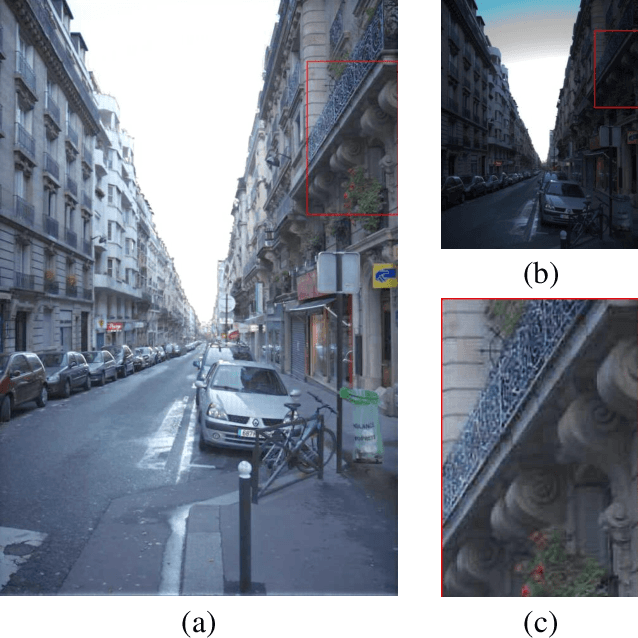

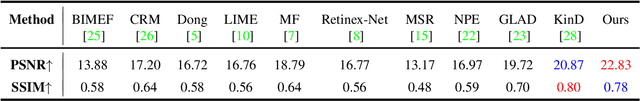

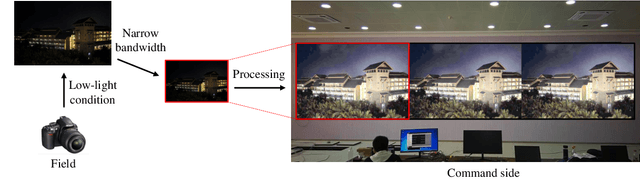

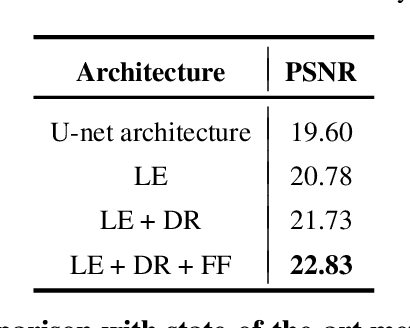

Bridge the Vision Gap from Field to Command: A Deep Learning Network Enhancing Illumination and Details

Jan 20, 2021

Abstract:With the goal of tuning up the brightness, low-light image enhancement enjoys numerous applications, such as surveillance, remote sensing and computational photography. Images captured under low-light conditions often suffer from poor visibility and blur. Solely brightening the dark regions will inevitably amplify the blur, thus may lead to detail loss. In this paper, we propose a simple yet effective two-stream framework named NEID to tune up the brightness and enhance the details simultaneously without introducing many computational costs. Precisely, the proposed method consists of three parts: Light Enhancement (LE), Detail Refinement (DR) and Feature Fusing (FF) module, which can aggregate composite features oriented to multiple tasks based on channel attention mechanism. Extensive experiments conducted on several benchmark datasets demonstrate the efficacy of our method and its superiority over state-of-the-art methods.

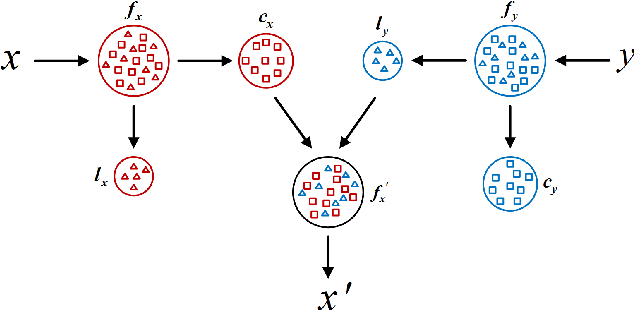

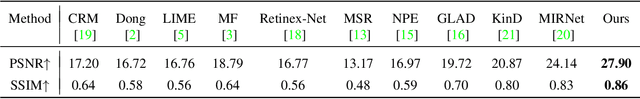

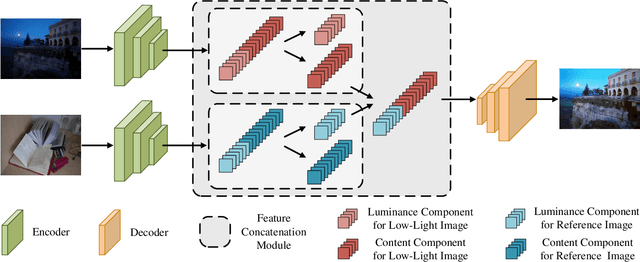

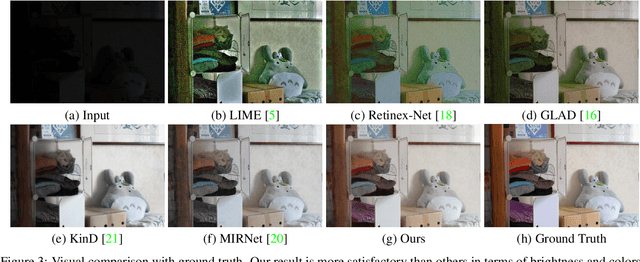

Shed Various Lights on a Low-Light Image: Multi-Level Enhancement Guided by Arbitrary References

Jan 04, 2021

Abstract:It is suggested that low-light image enhancement realizes one-to-many mapping since we have different definitions of NORMAL-light given application scenarios or users' aesthetic. However, most existing methods ignore subjectivity of the task, and simply produce one result with fixed brightness. This paper proposes a neural network for multi-level low-light image enhancement, which is user-friendly to meet various requirements by selecting different images as brightness reference. Inspired by style transfer, our method decomposes an image into two low-coupling feature components in the latent space, which allows the concatenation feasibility of the content components from low-light images and the luminance components from reference images. In such a way, the network learns to extract scene-invariant and brightness-specific information from a set of image pairs instead of learning brightness differences. Moreover, information except for the brightness is preserved to the greatest extent to alleviate color distortion. Extensive results show strong capacity and superiority of our network against existing methods.

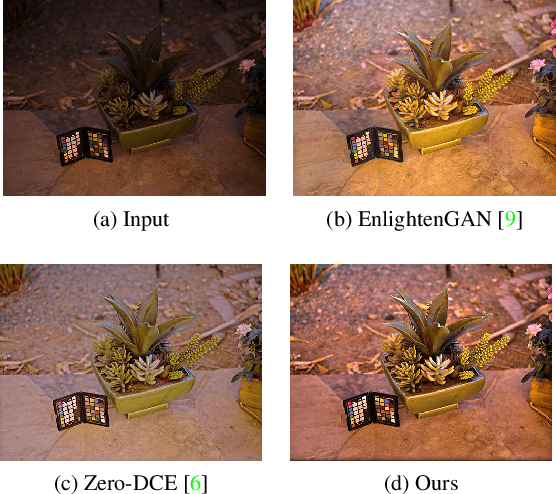

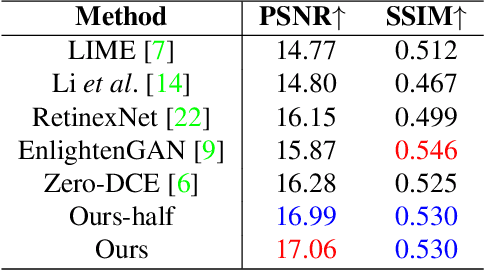

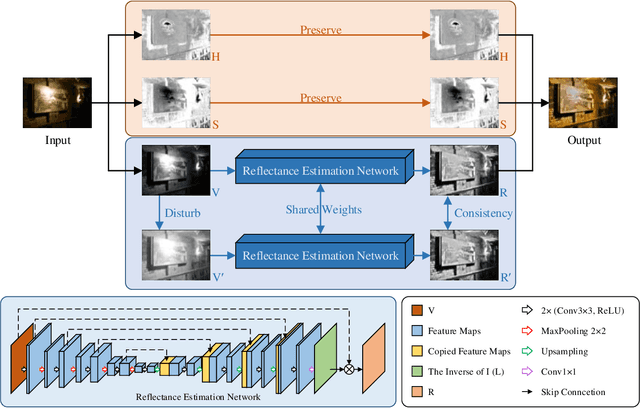

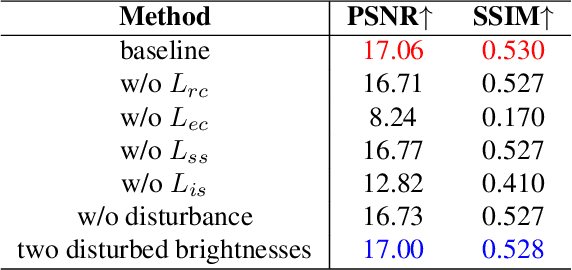

A Switched View of Retinex: Deep Self-Regularized Low-Light Image Enhancement

Jan 03, 2021

Abstract:Self-regularized low-light image enhancement does not require any normal-light image in training, thereby freeing from the chains on paired or unpaired low-/normal-images. However, existing methods suffer color deviation and fail to generalize to various lighting conditions. This paper presents a novel self-regularized method based on Retinex, which, inspired by HSV, preserves all colors (Hue, Saturation) and only integrates Retinex theory into brightness (Value). We build a reflectance estimation network by restricting the consistency of reflectances embedded in both the original and a novel random disturbed form of the brightness of the same scene. The generated reflectance, which is assumed to be irrelevant of illumination by Retinex, is treated as enhanced brightness. Our method is efficient as a low-light image is decoupled into two subspaces, color and brightness, for better preservation and enhancement. Extensive experiments demonstrate that our method outperforms multiple state-of-the-art algorithms qualitatively and quantitatively and adapts to more lighting conditions.

Incremental Transductive Learning Approaches to Schistosomiasis Vector Classification

Apr 06, 2017

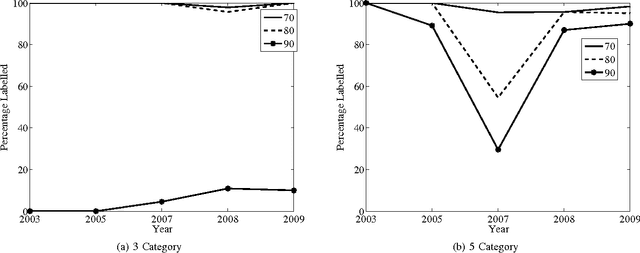

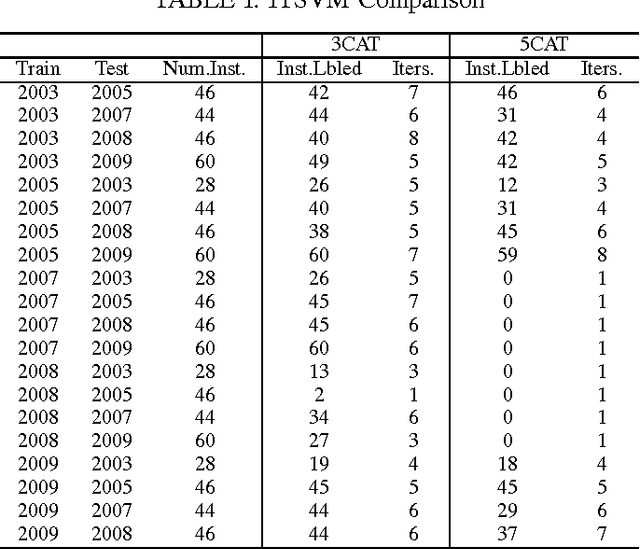

Abstract:The key issues pertaining to collection of epidemic disease data for our analysis purposes are that it is a labour intensive, time consuming and expensive process resulting in availability of sparse sample data which we use to develop prediction models. To address this sparse data issue, we present novel Incremental Transductive methods to circumvent the data collection process by applying previously acquired data to provide consistent, confidence-based labelling alternatives to field survey research. We investigated various reasoning approaches for semisupervised machine learning including Bayesian models for labelling data. The results show that using the proposed methods, we can label instances of data with a class of vector density at a high level of confidence. By applying the Liberal and Strict Training Approaches, we provide a labelling and classification alternative to standalone algorithms. The methods in this paper are components in the process of reducing the proliferation of the Schistosomiasis disease and its effects.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge