Guanghua Yang

Non-Orthogonal HARQ-CC over SDR: A GNU Radio-Based Implementation

Mar 04, 2026

Abstract:Hybrid Automatic Repeat Request (HARQ) schemes typically allocate all available resources to retransmit failed packets to ensure reliability. However, under stringent delay constraints, these schemes often exhibit low spectral efficiency and increased transmission latency. To address these challenges, this paper proposes an efficient Non-Orthogonal HARQ with Chase Combining (N-HARQ-CC) transmission strategy. Specifically, the proposed approach allocates a larger portion of retransmission resources to new data packets, reserving only a small fraction for retransmitting previously erroneous packets. This is based on the observation that only a small number of information bits are typically incorrect, enabling surplus communication resources to be utilized for transmitting new messages. The N-HARQ-CC scheme retransmits the same redundancy version of a failed packet and employs Maximum Ratio Combining (MRC) for decoding. To limit packet scheduling and decoding complexity, the proposed scheme restricts superposition to at most two messages per transmission round. At the receiver, Successive Interference Cancellation (SIC) is used to decouple the superimposed messages. The proposed N-HARQ-CC system was implemented using GNU Radio and USRP platforms for validation. Compared to conventional Type-I HARQ and HARQ-CC schemes, the proposed scheme achieves a significant improvement in spectral efficiency of approximately 0.5 bps/Hz, aligning with the low-latency requirements of 6G networks.
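The receiver chain described in the abstract (superposition of two messages, SIC, then MRC across rounds) can be illustrated with a toy baseband sketch. Everything here is an assumption made for illustration: uncoded BPSK, a real AWGN channel with unit gain, a hypothetical power fraction `alpha` given to the new packet, and hypothetical function names. It is not the GNU Radio/USRP implementation from the paper, and it makes no claim about the reported gains.

```python
import numpy as np

def hard_decision(y):
    """Map real-valued observations to BPSK symbols in {-1, +1}."""
    return np.where(y >= 0, 1.0, -1.0)

def superimpose(new_syms, retx_syms, alpha):
    """Power-domain superposition: fraction alpha goes to the new packet,
    the remaining 1 - alpha to the retransmitted packet (assumed split)."""
    return np.sqrt(alpha) * new_syms + np.sqrt(1.0 - alpha) * retx_syms

def sic_then_mrc(y_first, y_second, alpha):
    """Decode the stronger (new) packet, cancel it, then combine.

    Assumes alpha > 0.5 so the new packet dominates the second round.
    """
    # SIC step 1: detect the new packet, treating the retransmission as noise.
    new_hat = hard_decision(y_second)
    # SIC step 2: subtract the detected new packet from the second round.
    residual = y_second - np.sqrt(alpha) * new_hat
    # MRC: both rounds see the same noise variance, so each copy of the old
    # packet is weighted by its amplitude (1 and sqrt(1 - alpha)).
    combined = y_first + np.sqrt(1.0 - alpha) * residual
    return new_hat, hard_decision(combined)

def run_two_rounds(n_syms=1000, alpha=0.8, noise_std=0.3, seed=0):
    """Return (error rate of new packet, error rate of old packet)."""
    rng = np.random.default_rng(seed)
    old = rng.choice([-1.0, 1.0], size=n_syms)
    new = rng.choice([-1.0, 1.0], size=n_syms)
    # Round 1: the old packet is sent alone at full power.
    y_first = old + noise_std * rng.standard_normal(n_syms)
    # Round 2: new packet superimposed on the retransmission of the old one.
    y_second = superimpose(new, old, alpha) + noise_std * rng.standard_normal(n_syms)
    new_hat, old_hat = sic_then_mrc(y_first, y_second, alpha)
    return float(np.mean(new_hat != new)), float(np.mean(old_hat != old))
```

Calling `run_two_rounds(noise_std=0.0)` exercises the chain without noise. With uncoded hard-decision SIC, detection errors on the new packet propagate into the combined estimate, so this toy model should not be read as a performance predictor for a coded system.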

Out-of-Band Modality Synergy Based Multi-User Beam Prediction and Proactive BS Selection with Zero Pilot Overhead

Jun 18, 2025

Abstract:Multi-user millimeter-wave communication relies on narrow beams and dense cell deployments to ensure reliable connectivity. However, tracking optimal beams for multiple mobile users across multiple base stations (BSs) results in significant signaling overhead. Recent works have explored the capability of out-of-band (OOB) modalities in obtaining spatial characteristics of wireless channels and reducing pilot overhead in single-BS single-user/multi-user systems. However, applying OOB modalities for multi-BS selection in dense cell deployments leads to high coordination overhead, i.e., excessive computing overhead and high latency in data exchange. How to leverage OOB modalities to eliminate pilot overhead and achieve efficient multi-BS coordination in multi-BS systems remains largely unexplored. In this paper, we propose a novel OOB modality synergy (OMS) based mobility management scheme to realize multi-user beam prediction and proactive BS selection by synergizing two OOB modalities, i.e., vision and location. Specifically, mobile users are initially identified via spatial alignment of visual sensing and location feedback, and then tracked according to the temporal correlation in the image sequence. Subsequently, a binary encoding map based gain and beam prediction network (BEM-GBPN) is designed to predict beamforming gains and optimal beams for mobile users at each BS, such that a central unit can control the BSs to perform user handoff and beam switching. Simulation results indicate that the proposed OMS-based mobility management scheme enhances beam prediction and BS selection accuracy, enables users to achieve 91% of the optimal transmission rate with zero pilot overhead, and significantly improves multi-BS coordination efficiency compared to existing methods.
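The last stage of the pipeline, where a central unit turns per-BS gain predictions into handoff and beam-switching decisions, might look like the following sketch. The selection rule (pick the BS and beam with the largest predicted gain), the array layout, and the function names are assumptions for illustration. The abstract does not specify them, and the prediction network itself is not modeled.

```python
import numpy as np

def select_bs_and_beam(predicted_gain):
    """Pick, for each user, the (BS, beam) pair with the largest predicted gain.

    predicted_gain: array of shape (n_users, n_bs, n_beams), assumed to come
    from some gain/beam predictor (hypothetical input).
    """
    n_users, n_bs, n_beams = predicted_gain.shape
    # Flatten the (BS, beam) axes so one argmax covers both choices.
    flat_best = predicted_gain.reshape(n_users, -1).argmax(axis=1)
    bs_idx, beam_idx = np.unravel_index(flat_best, (n_bs, n_beams))
    return bs_idx, beam_idx

def handoff_needed(serving_bs, selected_bs):
    """Flag users whose selected BS differs from the current serving BS."""
    return np.asarray(serving_bs) != np.asarray(selected_bs)
```

For example, with two users, two BSs, and three beams, a gain tensor whose largest entries are at `[0, 1, 2]` and `[1, 0, 1]` selects BS 1 with beam 2 for the first user and BS 0 with beam 1 for the second.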

Power Allocation for Coordinated Multi-Point Aided ISAC Systems

Mar 10, 2025

Abstract:In this letter, we investigate a coordinated multi-point (CoMP)-aided integrated sensing and communication (ISAC) system that supports multiple users and targets. Multiple base stations (BSs) employ a coordinated power allocation strategy to serve their associated single-antenna communication users (CUs) while utilizing the echo signals for joint radar target (RT) detection. The probability of detection (PoD) of the CoMP-ISAC system is then proposed for assessing the sensing performance. To maximize the sum rate while ensuring the PoD for each RT and adhering to the total transmit power budget across all BSs, we introduce an efficient power allocation strategy. Finally, simulation results are provided to validate the analytical findings, demonstrating that the proposed power allocation scheme effectively enhances the sum rate while satisfying the sensing requirements.

AdaSin: Enhancing Hard Sample Metrics with Dual Adaptive Penalty for Face Recognition

Mar 05, 2025

Abstract:In recent years, the emergence of deep convolutional neural networks has positioned face recognition as a prominent research focus in computer vision. Traditional loss functions, such as margin-based, hard-sample mining-based, and hybrid approaches, have achieved notable performance improvements, with some leveraging curriculum learning to optimize training. However, these methods often fall short in effectively quantifying the difficulty of hard samples. To address this, we propose the Adaptive Sine (AdaSin) loss function, which introduces the sine of the angle between a sample's embedding feature and its ground-truth class center as a novel difficulty metric. This metric enables precise and effective penalization of hard samples. By incorporating curriculum learning, the model dynamically adjusts classification boundaries across different training stages. Unlike previous adaptive-margin loss functions, AdaSin introduces a dual adaptive penalty, applied to both the positive and negative cosine similarities of hard samples. This design imposes stronger constraints, enhancing intra-class compactness and inter-class separability. The combination of the dual adaptive penalty and curriculum learning is guided by a well-designed difficulty metric. It enables the model to focus more effectively on hard samples in later training stages, leading to the extraction of highly discriminative face features. Extensive experiments across eight benchmarks demonstrate that AdaSin achieves superior accuracy compared to other state-of-the-art methods.
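The difficulty metric named in the abstract, the sine of the angle between an embedding and its ground-truth class center, can be computed as in the sketch below. Only the metric is shown. How AdaSin turns it into the dual adaptive penalty is not stated in the abstract, so that part is left out. The function name and array layout are assumptions.

```python
import numpy as np

def sine_difficulty(embeddings, class_centers, labels):
    """Sine of the angle between each embedding and its ground-truth center.

    embeddings:    (n_samples, dim) feature vectors
    class_centers: (n_classes, dim) class-center vectors
    labels:        (n_samples,) integer ground-truth class indices
    """
    # Normalize both sides so the dot product equals cos(theta).
    e = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    w = class_centers / np.linalg.norm(class_centers, axis=1, keepdims=True)
    cos_gt = np.sum(e * w[labels], axis=1)
    # Clip to guard against rounding slightly outside [-1, 1].
    cos_gt = np.clip(cos_gt, -1.0, 1.0)
    # sin(theta) >= 0 for theta in [0, pi].
    return np.sqrt(1.0 - cos_gt ** 2)
```

An embedding aligned with its class center scores 0, one at 45 degrees scores about 0.707, and one at 90 degrees scores 1. Because the sine is symmetric about 90 degrees, the value alone does not distinguish angles above and below that point.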

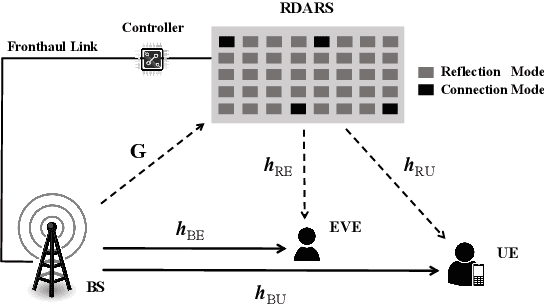

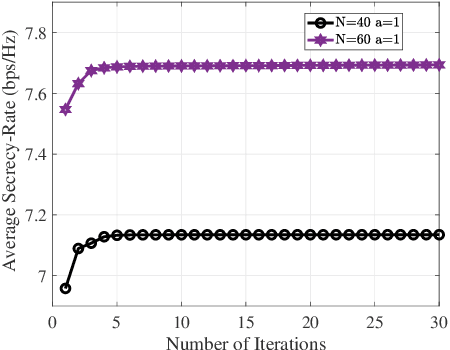

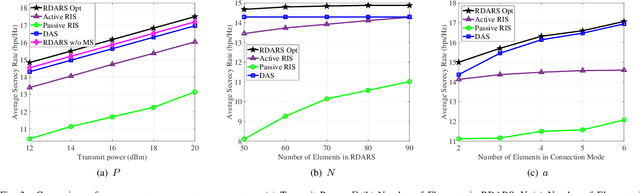

Secure Communication in Dynamic RDARS-Driven Systems

Jan 18, 2025

Abstract:In this letter, we investigate a dynamic reconfigurable distributed antenna and reflection surface (RDARS)-driven secure communication system, where the working mode of the RDARS can be flexibly configured. We aim to maximize the secrecy rate by jointly designing the active beamforming vectors, reflection coefficients, and the channel-aware mode selection matrix. To address the non-convex binary and cardinality constraints introduced by dynamic mode selection, we propose an efficient alternating optimization (AO) framework that employs penalty-based fractional programming (FP) and successive convex approximation (SCA) transformations. Simulation results demonstrate the potential of RDARS in enhancing the secrecy rate and show its superiority compared to existing reflection surface-based schemes.
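For readers unfamiliar with the objective, the secrecy rate is commonly defined as the non-negative part of the gap between the legitimate user's rate and the eavesdropper's rate. The sketch below uses that standard definition with scalar SNRs as inputs. The function name is hypothetical, and the RDARS-specific beamforming, reflection, and mode-selection variables that determine those SNRs are not modeled.

```python
import math

def secrecy_rate(snr_user, snr_eavesdropper):
    """Standard secrecy rate in bits/s/Hz: [log2(1+SNR_u) - log2(1+SNR_e)]^+.

    Both arguments are linear-scale SNRs (not dB).
    """
    rate_user = math.log2(1.0 + snr_user)
    rate_eve = math.log2(1.0 + snr_eavesdropper)
    # The rate is clipped at zero when the eavesdropper's link is better.
    return max(0.0, rate_user - rate_eve)
```

With a user SNR of 15 and an eavesdropper SNR of 3, the rates are 4 and 2 bits/s/Hz, giving a secrecy rate of 2 bits/s/Hz.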

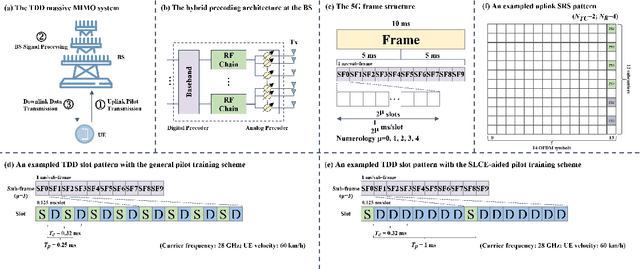

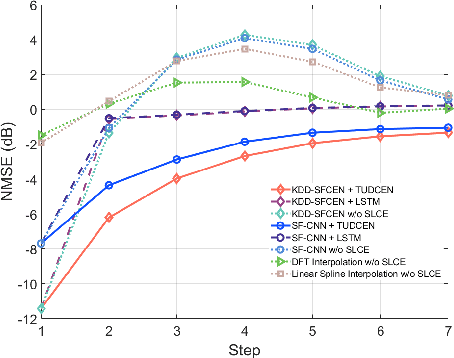

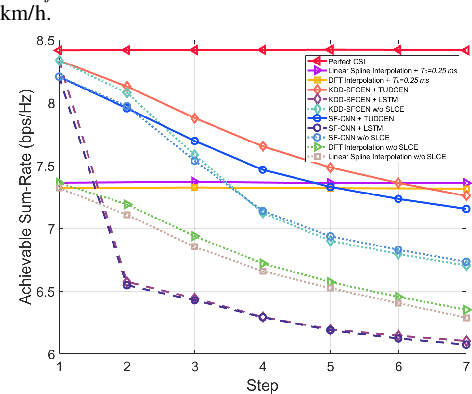

Low-Overhead Channel Estimation via 3D Extrapolation for TDD mmWave Massive MIMO Systems Under High-Mobility Scenarios

Jun 13, 2024

Abstract:In TDD mmWave massive MIMO systems, the downlink CSI can be attained through uplink channel estimation thanks to the uplink-downlink channel reciprocity. However, the channel aging issue is significant under high-mobility scenarios and thus necessitates frequent uplink channel estimation. In addition, the large numbers of antennas and subcarriers lead to high-dimensional CSI matrices, aggravating the pilot training overhead. To systematically reduce the pilot overhead, a spatial, frequency, and temporal domain (3D) channel extrapolation framework is proposed in this paper. Considering the marginal effects of pilots in the spatial and frequency domains and the effectiveness of traditional knowledge-driven channel estimation methods, we first propose a knowledge-and-data driven spatial-frequency channel extrapolation network (KDD-SFCEN) for uplink channel estimation by exploiting the least square estimator for coarse channel estimation and joint spatial-frequency channel extrapolation to reduce the spatial-frequency domain pilot overhead. Then, resorting to the uplink-downlink channel reciprocity and temporal domain dependencies of downlink channels, a temporal uplink-downlink channel extrapolation network (TUDCEN) is proposed for slot-level channel extrapolation, aiming to enlarge the pilot signal period and thus reduce the temporal domain pilot overhead under high-mobility scenarios. Specifically, we propose the spatial-frequency sampling embedding module to reduce the representation dimension and consequent computational complexity, and we propose to exploit the autoregressive generative Transformer for generating downlink channels autoregressively. Numerical results demonstrate the superiority of the proposed framework in significantly reducing the pilot training overhead by a factor of more than 16 and improving the system's spectral efficiency under high-mobility scenarios.
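The coarse estimation step mentioned in the abstract, a least-squares (LS) estimate at the pilot positions, can be sketched as follows. The second function fills in the remaining subcarriers by plain linear interpolation, which is only a naive stand-in used here to show where an extrapolation stage would sit. It is not the KDD-SFCEN network, and all names are hypothetical.

```python
import numpy as np

def ls_estimate(received_pilots, known_pilots):
    """Per-pilot least-squares channel estimate: H_ls = Y / X."""
    return received_pilots / known_pilots

def interpolate_subcarriers(pilot_idx, h_pilots, n_subcarriers):
    """Naive baseline: linear interpolation of the LS estimates in frequency.

    Stand-in for a learned extrapolation stage (assumption for illustration).
    pilot_idx must be increasing, as required by np.interp.
    """
    k = np.arange(n_subcarriers)
    # np.interp works on real data, so handle real and imaginary parts apart.
    real = np.interp(k, pilot_idx, h_pilots.real)
    imag = np.interp(k, pilot_idx, h_pilots.imag)
    return real + 1j * imag
```

In the noise-free case the LS step returns the true channel at the pilot positions exactly. With noise, the estimate at each pilot is perturbed by the noise divided by the pilot symbol.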

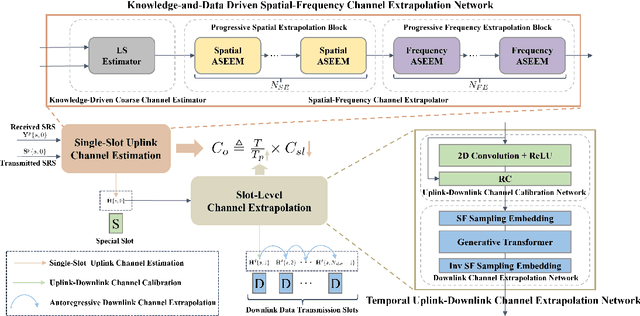

Robust Beamforming Design and Antenna Selection for Dynamic HRIS-aided Massive MIMO Systems

Mar 31, 2024

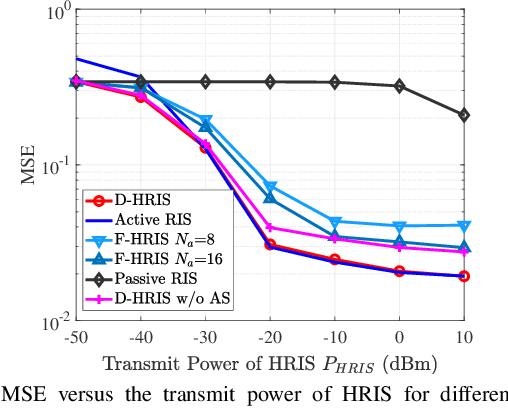

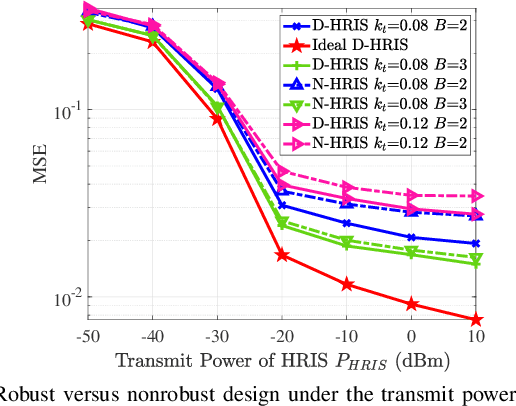

Abstract:In this paper, a dynamic hybrid active-passive reconfigurable intelligent surface (HRIS) is proposed to further enhance the massive multiple-input-multiple-output (MIMO) system, since it supports the dynamic placement of active and passive elements. Specifically, considering the impact of the hardware impairments (HWIs), we investigate the channel-aware configuration of the receive antennas at the base station (BS) and the active/passive elements at the HRIS to improve the reliability of the system. To this end, we investigate the average mean-square-error (MSE) minimization problem for the HRIS-aided massive MIMO system by jointly optimizing the BS receive antenna selection matrix, the reflection phase coefficients, the reflection amplitude matrix, and the mode selection matrix of the HRIS under the power budget of the HRIS. To tackle the non-convexity and intractability of this problem, we first transform the binary and discrete variables into continuous ones, and then propose a penalty-based exact block coordinate descent (BCD) algorithm to solve these subproblems alternately. Numerical simulations demonstrate the superiority of the proposed scheme over the conventional benchmark schemes.

Variational Bayesian Learning based Joint Localization and Channel Estimation with Distance-dependent Noise

Mar 07, 2024

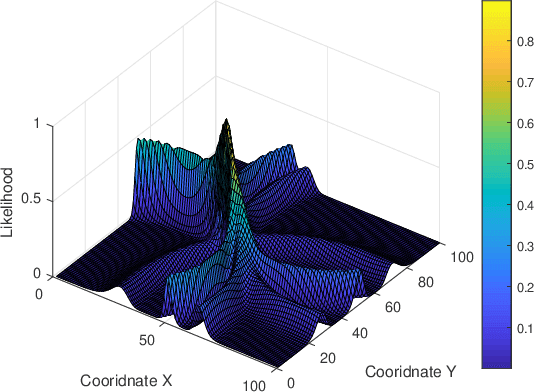

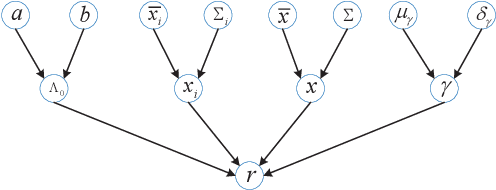

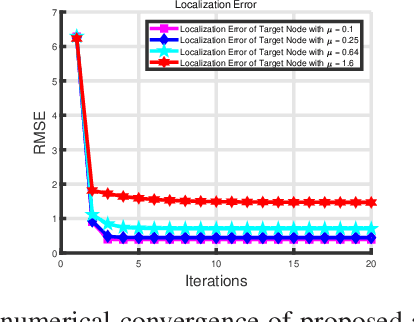

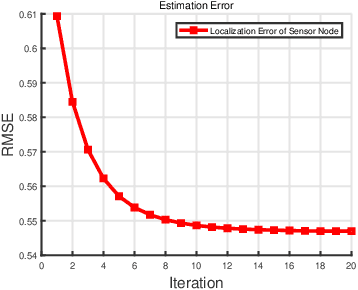

Abstract:In the Industrial Internet of Things (IIoT) and Ocean of Things (OoT), the advent of massive intelligent services has imposed stringent requirements on both communication and localization, particularly emphasizing precise localization and channel information. This paper focuses on the challenge of jointly optimizing localization and communication in IoT networks. Departing from the conventional independent noise model used in localization and channel estimation problems, we consider a more realistic model incorporating distance-dependent noise variance, as revealed in recent theoretical analyses and experimental results. The distance-dependent noise introduces unknown noise power and a complex noise model, resulting in an exceptionally challenging non-convex and nonlinear optimization problem. In this study, we address a joint localization and channel estimation problem encompassing distance-dependent noise, unknown channel parameters, and uncertainties in sensor node locations. To surmount the intractable nonlinear and non-convex objective function inherent in the problem, we introduce a variational Bayesian learning-based framework. This framework enables the joint optimization of localization and channel parameters by leveraging an effective approximation to the true posterior distribution. Furthermore, the proposed joint learning algorithm provides an iterative closed-form solution and exhibits superior performance in terms of computational complexity compared to existing algorithms. Computer simulation results demonstrate that the proposed algorithm approaches the performance of the Bayesian Cramér-Rao bound (BCRB), achieves localization performance comparable to the ML-GMP algorithm, and outperforms the other two comparison algorithms.

Variational Bayesian Learning Based Localization and Channel Reconstruction in RIS-aided Systems

Mar 02, 2024

Abstract:The emerging immersive and autonomous services have posed stringent requirements on both communications and localization. Considering the great potential of the reconfigurable intelligent surface (RIS), this paper focuses on joint channel estimation and localization for RIS-aided wireless systems. As opposed to existing works that treat channel estimation and localization independently, this paper exploits the intrinsic coupling and nonlinear relationships between the channel parameters and user location to enhance both localization and channel reconstruction. Noting the non-convex, nonlinear objective function and the sparser angle pattern, a variational Bayesian learning-based framework is developed to jointly estimate the channel parameters and user location by leveraging an effective approximation of the posterior distribution. The proposed framework is capable of unifying near-field and far-field scenarios by exploiting the sparsity of the angular domain. Since the joint channel and location estimation problem has a closed-form solution in each iteration, our proposed iterative algorithm performs better than the conventional particle swarm optimization (PSO) and maximum likelihood (ML) based ones in terms of computational complexity. Simulations demonstrate that the proposed algorithm almost reaches the Bayesian Cramér-Rao bound (BCRB) and achieves superior estimation accuracy compared with the PSO and ML algorithms.

HARQ-IR Aided Short Packet Communications: BLER Analysis and Throughput Maximization

Dec 07, 2023

Abstract:This paper introduces hybrid automatic repeat request with incremental redundancy (HARQ-IR) to boost the reliability of short packet communications. The finite blocklength information theory and correlated decoding events greatly complicate the analysis of the average block error rate (BLER). Fortunately, the recursive form of the average BLER motivates us to calculate its value through the trapezoidal approximation and Gauss-Laguerre quadrature. Moreover, an asymptotic analysis is performed to derive a simple expression for the average BLER at high signal-to-noise ratio (SNR). Then, we study the maximization of long term average throughput (LTAT) via power allocation while satisfying the power and BLER constraints. For tractability, the asymptotic BLER is employed to solve the problem through geometric programming (GP). However, the GP-based solution underestimates the LTAT at low SNR due to a large approximation error in this case. Alternatively, we also develop a deep reinforcement learning (DRL)-based framework to learn the power allocation policy. In particular, the optimization problem is transformed into a constrained Markov decision process, which is solved by integrating deep deterministic policy gradient (DDPG) with the subgradient method. The numerical results finally demonstrate that the DRL-based method outperforms the GP-based one at low SNR, albeit at the cost of increased computational burden.
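To give a feel for how Gauss-Laguerre quadrature enters a BLER calculation, the sketch below averages a finite-blocklength error probability over Rayleigh fading for a single transmission. It assumes the widely used normal approximation for the BLER and an exponentially distributed SNR. It does not reproduce the recursive HARQ-IR expression or the trapezoidal step from the paper, and the function names are hypothetical.

```python
import math
import numpy as np

def q_function(x):
    """Gaussian tail probability Q(x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def bler_normal_approx(snr, blocklength, rate):
    """Normal approximation of the BLER at a fixed linear SNR (assumed model).

    rate is in bits per channel use; capacity and dispersion use log base 2.
    """
    if snr <= 0.0:
        return 1.0
    capacity = math.log2(1.0 + snr)
    dispersion = (1.0 - (1.0 + snr) ** -2) * math.log2(math.e) ** 2
    return q_function((capacity - rate) / math.sqrt(dispersion / blocklength))

def average_bler_gauss_laguerre(mean_snr, blocklength, rate, order=64):
    """Average BLER over Rayleigh fading, SNR = mean_snr * x with x ~ Exp(1).

    E[eps] = int_0^inf eps(mean_snr * x) e^{-x} dx ~= sum_i w_i eps(mean_snr * x_i)
    """
    nodes, weights = np.polynomial.laguerre.laggauss(order)
    return float(sum(w * bler_normal_approx(mean_snr * x, blocklength, rate)
                     for x, w in zip(nodes, weights)))
```

The integrand changes sharply around the SNR at which capacity equals the rate, so the accuracy of the quadrature depends on the chosen `order` and on where that transition falls relative to the nodes. Treat the output as a rough estimate unless it is checked against a finer numerical integration.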
