Francesco Marchetti

Kriging via variably scaled kernels

Mar 16, 2026Abstract:Classical Gaussian processes and Kriging models are commonly based on stationary kernels, whereby correlations between observations depend exclusively on the relative distance between scattered data. While this assumption ensures analytical tractability, it limits the ability of Gaussian processes to represent heterogeneous correlation structures. In this work, we investigate variably scaled kernels as an effective tool for constructing non-stationary Gaussian processes by explicitly modifying the correlation structure of the data. Through a scaling function, variably scaled kernels alter the correlations between data and enable the modeling of targets exhibiting abrupt changes or discontinuities. We analyse the resulting predictive uncertainty via the variably scaled kernel power function and clarify the relationship between variably scaled kernels-based constructions and classical non-stationary kernels. Numerical experiments demonstrate that variably scaled kernels-based Gaussian processes yield improved reconstruction accuracy and provide uncertainty estimates that reflect the underlying structure of the data

An accurate flatness measure to estimate the generalization performance of CNN models

Mar 09, 2026Abstract:Flatness measures based on the spectrum or the trace of the Hessian of the loss are widely used as proxies for the generalization ability of deep networks. However, most existing definitions are either tailored to fully connected architectures, relying on stochastic estimators of the Hessian trace, or ignore the specific geometric structure of modern Convolutional Neural Networks (CNNs). In this work, we develop a flatness measure that is both exact and architecturally faithful for a broad and practically relevant class of CNNs. We first derive a closed-form expression for the trace of the Hessian of the cross-entropy loss with respect to convolutional kernels in networks that use global average pooling followed by a linear classifier. Building on this result, we then specialize the notion of relative flatness to convolutional layers and obtain a parameterization-aware flatness measure that properly accounts for the scaling symmetries and filter interactions induced by convolution and pooling. Finally, we empirically investigate the proposed measure on families of CNNs trained on standard image-classification benchmarks. The results obtained suggest that the proposed measure can serve as a robust tool to assess and compare the generalization performance of CNN models, and to guide the design of architecture and training choices in practice.

Feature Understanding and Sparsity Enhancement via 2-Layered kernel machines (2L-FUSE)

Sep 09, 2025Abstract:We propose a novel sparsity enhancement strategy for regression tasks, based on learning a data-adaptive kernel metric, i.e., a shape matrix, through 2-Layered kernel machines. The resulting shape matrix, which defines a Mahalanobis-type deformation of the input space, is then factorized via an eigen-decomposition, allowing us to identify the most informative directions in the space of features. This data-driven approach provides a flexible, interpretable and accurate feature reduction scheme. Numerical experiments on synthetic and applications to real datasets of geomagnetic storms demonstrate that our approach achieves minimal yet highly informative feature sets without losing predictive performance.

Multiclass threshold-based classification

May 16, 2025Abstract:In this paper, we introduce a threshold-based framework for multiclass classification that generalizes the standard argmax rule. This is done by replacing the probabilistic interpretation of softmax outputs with a geometric one on the multidimensional simplex, where the classification depends on a multidimensional threshold. This change of perspective enables for any trained classification network an a posteriori optimization of the classification score by means of threshold tuning, as usually carried out in the binary setting. This allows a further refinement of the prediction capability of any network. Moreover, this multidimensional threshold-based setting makes it possible to define score-oriented losses, which are based on the interpretation of the threshold as a random variable. Our experiments show that the multidimensional threshold tuning yields consistent performance improvements across various networks and datasets, and that the proposed multiclass score-oriented losses are competitive with standard loss functions, resembling the advantages observed in the binary case.

Depth-based Privileged Information for Boosting 3D Human Pose Estimation on RGB

Sep 17, 2024

Abstract:Despite the recent advances in computer vision research, estimating the 3D human pose from single RGB images remains a challenging task, as multiple 3D poses can correspond to the same 2D projection on the image. In this context, depth data could help to disambiguate the 2D information by providing additional constraints about the distance between objects in the scene and the camera. Unfortunately, the acquisition of accurate depth data is limited to indoor spaces and usually is tied to specific depth technologies and devices, thus limiting generalization capabilities. In this paper, we propose a method able to leverage the benefits of depth information without compromising its broader applicability and adaptability in a predominantly RGB-camera-centric landscape. Our approach consists of a heatmap-based 3D pose estimator that, leveraging the paradigm of Privileged Information, is able to hallucinate depth information from the RGB frames given at inference time. More precisely, depth information is used exclusively during training by enforcing our RGB-based hallucination network to learn similar features to a backbone pre-trained only on depth data. This approach proves to be effective even when dealing with limited and small datasets. Experimental results reveal that the paradigm of Privileged Information significantly enhances the model's performance, enabling efficient extraction of depth information by using only RGB images.

Greedy feature selection: Classifier-dependent feature selection via greedy methods

Mar 08, 2024

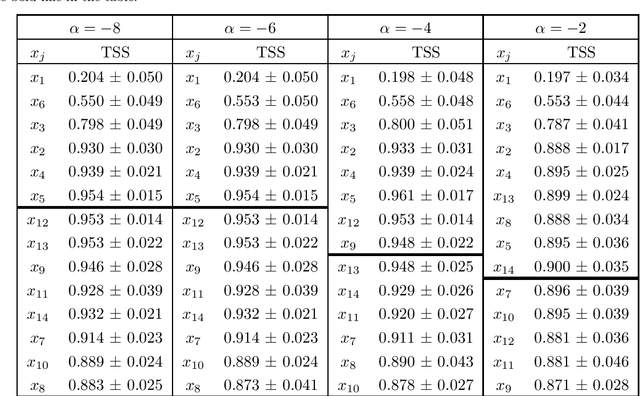

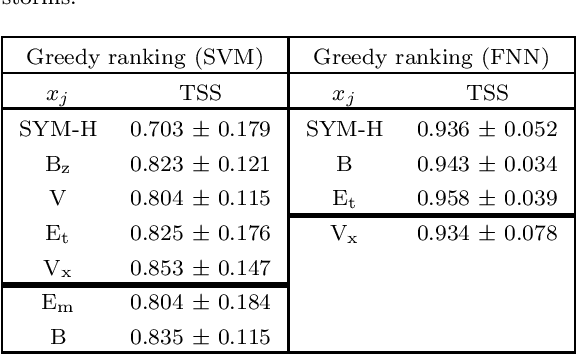

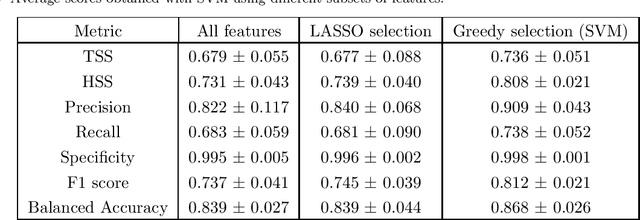

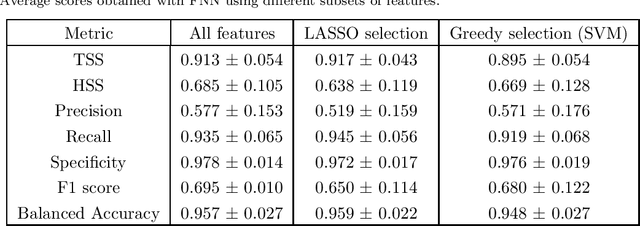

Abstract:The purpose of this study is to introduce a new approach to feature ranking for classification tasks, called in what follows greedy feature selection. In statistical learning, feature selection is usually realized by means of methods that are independent of the classifier applied to perform the prediction using that reduced number of features. Instead, greedy feature selection identifies the most important feature at each step and according to the selected classifier. In the paper, the benefits of such scheme are investigated theoretically in terms of model capacity indicators, such as the Vapnik-Chervonenkis (VC) dimension or the kernel alignment, and tested numerically by considering its application to the problem of predicting geo-effective manifestations of the active Sun.

AI-FLARES: Artificial Intelligence for the Analysis of Solar Flares Data

Jan 02, 2024Abstract:AI-FLARES (Artificial Intelligence for the Analysis of Solar Flares Data) is a research project funded by the Agenzia Spaziale Italiana and by the Istituto Nazionale di Astrofisica within the framework of the ``Attivit\`a di Studio per la Comunit\`a Scientifica Nazionale Sole, Sistema Solare ed Esopianeti'' program. The topic addressed by this project was the development and use of computational methods for the analysis of remote sensing space data associated to solar flare emission. This paper overviews the main results obtained by the project, with specific focus on solar flare forecasting, reconstruction of morphologies of the flaring sources, and interpretation of acceleration mechanisms triggered by solar flares.

Robot Pose Nowcasting: Forecast the Future to Improve the Present

Aug 24, 2023

Abstract:In recent years, the effective and safe collaboration between humans and machines has gained significant importance, particularly in the Industry 4.0 scenario. A critical prerequisite for realizing this collaborative paradigm is precisely understanding the robot's 3D pose within its environment. Therefore, in this paper, we introduce a novel vision-based system leveraging depth data to accurately establish the 3D locations of robotic joints. Specifically, we prove the ability of the proposed system to enhance its current pose estimation accuracy by jointly learning to forecast future poses. Indeed, we introduce the concept of Pose Nowcasting, denoting the capability of a system to exploit the learned knowledge of the future to improve the estimation of the present. The experimental evaluation is conducted on two different datasets, providing state-of-the-art and real-time performance and confirming the validity of the proposed method on both the robotic and human scenarios.

A comprehensive theoretical framework for the optimization of neural networks classification performance with respect to weighted metrics

May 22, 2023Abstract:In many contexts, customized and weighted classification scores are designed in order to evaluate the goodness of the predictions carried out by neural networks. However, there exists a discrepancy between the maximization of such scores and the minimization of the loss function in the training phase. In this paper, we provide a complete theoretical setting that formalizes weighted classification metrics and then allows the construction of losses that drive the model to optimize these metrics of interest. After a detailed theoretical analysis, we show that our framework includes as particular instances well-established approaches such as classical cost-sensitive learning, weighted cross entropy loss functions and value-weighted skill scores.

Physics-driven machine learning for the prediction of coronal mass ejections' travel times

May 17, 2023Abstract:Coronal Mass Ejections (CMEs) correspond to dramatic expulsions of plasma and magnetic field from the solar corona into the heliosphere. CMEs are scientifically relevant because they are involved in the physical mechanisms characterizing the active Sun. However, more recently CMEs have attracted attention for their impact on space weather, as they are correlated to geomagnetic storms and may induce the generation of Solar Energetic Particles streams. In this space weather framework, the present paper introduces a physics-driven artificial intelligence (AI) approach to the prediction of CMEs travel time, in which the deterministic drag-based model is exploited to improve the training phase of a cascade of two neural networks fed with both remote sensing and in-situ data. This study shows that the use of physical information in the AI architecture significantly improves both the accuracy and the robustness of the travel time prediction.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge