Erik Hellsten

Leveraging Axis-Aligned Subspaces for High-Dimensional Bayesian Optimization with Group Testing

Apr 08, 2025

Abstract: Bayesian optimization (BO) is an effective method for optimizing expensive-to-evaluate black-box functions. High-dimensional problems are particularly challenging because of the multitude of parameter choices and the potentially large number of data points required to fit the model, but this limitation can be addressed if the problem satisfies simplifying assumptions. The axis-aligned subspace assumption, under which only a few dimensions have a significant impact on the objective, has motivated several algorithms for high-dimensional BO. However, the validity of this assumption is rarely verified, and the assumption is rarely exploited to its full extent. We propose a group testing (GT) approach to identify active variables and thereby facilitate efficient optimization in these domains. The proposed algorithm, Group Testing Bayesian Optimization (GTBO), first runs a testing phase in which groups of variables are systematically selected and tested on whether they influence the objective; this phase terminates once the active dimensions are identified. To that end, we extend the well-established GT theory to functions over continuous domains. In the second phase, GTBO guides optimization by placing more importance on the active dimensions. By leveraging the axis-aligned subspace assumption, GTBO outperforms state-of-the-art methods on benchmarks that satisfy this assumption, while offering improved interpretability.
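To illustrate the basic idea behind a group-testing phase, the sketch below uses classic adaptive binary splitting: move an entire group of variables from a default point to a perturbed point, and if the objective changes, split the group and test each half. This is a simplified, noiseless illustration under stated assumptions, not GTBO's actual procedure (which extends GT theory to continuous domains). The names `find_active_dims`, `default`, `perturbed` and the threshold `tol` are hypothetical, and the sketch assumes that the effects of active variables within a group never cancel each other out.

```python
from typing import Callable, List, Sequence


def find_active_dims(
    f: Callable[[List[float]], float],
    default: Sequence[float],
    perturbed: Sequence[float],
    tol: float = 1e-9,
) -> List[int]:
    """Identify dimensions whose change affects f via adaptive binary splitting.

    Assumes a noiseless f and that effects within a group do not cancel.
    """
    base = f(list(default))

    def influences(group: List[int]) -> bool:
        # One test: move every variable in the group to its perturbed value at once.
        x = list(default)
        for i in group:
            x[i] = perturbed[i]
        return abs(f(x) - base) > tol

    active: List[int] = []
    stack = [list(range(len(default)))]
    while stack:
        group = stack.pop()
        if not influences(group):
            continue  # the whole group is ruled out with a single evaluation
        if len(group) == 1:
            active.append(group[0])
        else:
            mid = len(group) // 2
            stack.append(group[:mid])
            stack.append(group[mid:])
    return sorted(active)


# Usage: a 10-dimensional function in which only dimensions 2 and 7 matter.
objective = lambda x: (x[2] - 0.3) ** 2 + 2 * x[7]
print(find_active_dims(objective, [0.5] * 10, [0.9] * 10))  # [2, 7]
```

When only a few dimensions are active, most groups are ruled out with a single evaluation, so far fewer than one evaluation per dimension is needed. This is the property that makes group testing attractive when evaluations are expensive.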

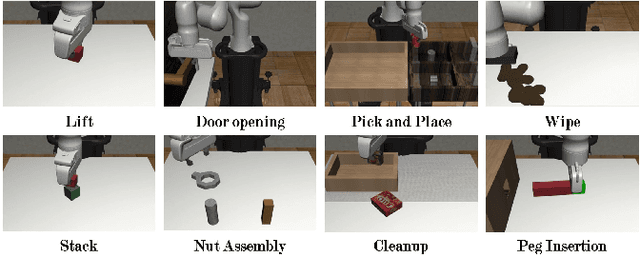

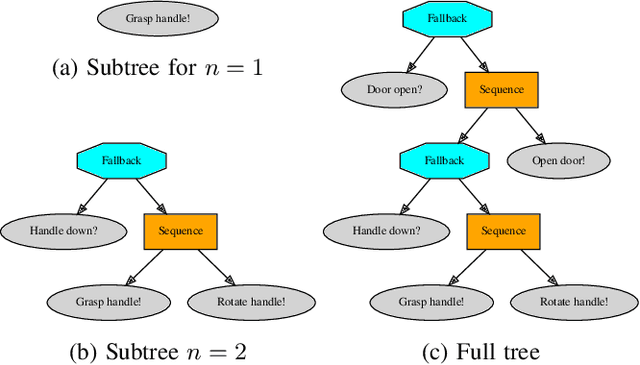

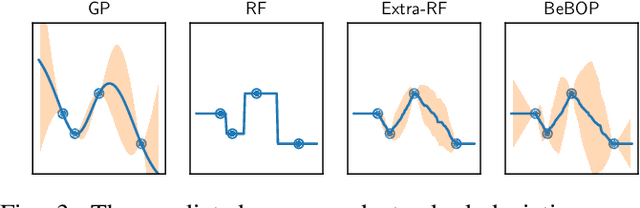

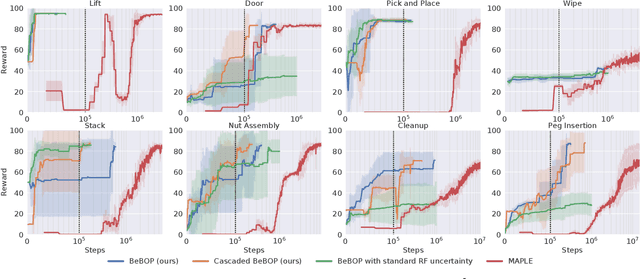

BeBOP -- Combining Reactive Planning and Bayesian Optimization to Solve Robotic Manipulation Tasks

Oct 02, 2023

Abstract: Robotic systems for manipulation tasks are increasingly expected to be easy to configure for new tasks. In the past, robot programs were often written statically and tuned manually, but today's faster transition times call for robust, modular and interpretable solutions that also allow a robotic system to learn how to perform a task. We propose Behavior-based Bayesian Optimization and Planning (BeBOP), a method that combines two approaches for generating behavior trees: we build the structure using a reactive planner and learn specific parameters with Bayesian optimization. The method is evaluated on a set of robotic manipulation benchmarks and is shown to outperform state-of-the-art reinforcement learning algorithms, being up to 46 times faster while also being less dependent on reward shaping. We also propose a modification to the uncertainty estimate for random forest surrogate models that drastically improves the results.

Self-Correcting Bayesian Optimization through Bayesian Active Learning

Apr 21, 2023

Abstract: Gaussian processes (GPs) are firmly established as the model of choice in Bayesian optimization and active learning. Yet they depend heavily on well-chosen hyperparameters to reach their full potential, and the literature devotes little effort to finding the right hyperparameters. We demonstrate the impact of selecting good hyperparameters for GPs and present two acquisition functions that explicitly prioritize this goal. Statistical distance-based Active Learning (SAL) considers the average disagreement among samples from the posterior, as measured by a statistical distance. It is shown to outperform the state of the art in Bayesian active learning on a number of test functions. We then introduce Self-Correcting Bayesian Optimization (SCoreBO), which extends SAL to perform Bayesian optimization and active hyperparameter learning simultaneously. SCoreBO learns the model hyperparameters at improved rates compared to vanilla BO, while outperforming the latest Bayesian optimization methods on traditional benchmarks. Moreover, the importance of self-correction is demonstrated on an array of exotic Bayesian optimization tasks.
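As a rough illustration of an acquisition value built from "disagreement among posterior samples, as measured by a statistical distance", the sketch below scores a candidate point by the average pairwise Hellinger distance between Gaussian predictive distributions. It assumes there is one predictive distribution per hyperparameter sample. The abstract specifies neither the distance nor how the disagreement is aggregated, so both the Hellinger distance and the pairwise averaging are assumptions, and the function names are hypothetical. This is not necessarily how SAL is defined in the paper.

```python
import math
from itertools import combinations
from typing import Sequence, Tuple


def hellinger_gauss(m1: float, s1: float, m2: float, s2: float) -> float:
    """Hellinger distance between N(m1, s1^2) and N(m2, s2^2); lies in [0, 1]."""
    var_sum = s1 ** 2 + s2 ** 2
    # Bhattacharyya coefficient for two univariate Gaussians.
    bc = math.sqrt(2 * s1 * s2 / var_sum) * math.exp(-((m1 - m2) ** 2) / (4 * var_sum))
    return math.sqrt(max(0.0, 1.0 - bc))  # clamp guards against tiny negative rounding


def disagreement(preds: Sequence[Tuple[float, float]]) -> float:
    """Average pairwise Hellinger distance among (mean, std) predictions at one input.

    Each tuple is assumed to come from a GP with one sampled hyperparameter set.
    """
    if len(preds) < 2:
        raise ValueError("need at least two posterior samples")
    pairs = list(combinations(preds, 2))
    return sum(hellinger_gauss(m1, s1, m2, s2) for (m1, s1), (m2, s2) in pairs) / len(pairs)


# Usage: hyperparameter samples agree at candidate A but disagree at candidate B,
# so an active learner maximizing disagreement would query B.
candidate_a = [(0.0, 1.0), (0.0, 1.0), (0.0, 1.0)]
candidate_b = [(0.0, 1.0), (2.0, 1.0), (-1.0, 0.5)]
print(disagreement(candidate_a))  # 0.0
print(disagreement(candidate_b) > disagreement(candidate_a))  # True
```

Querying where the hyperparameter samples disagree most yields data that best discriminates between hyperparameter settings, which is the intuition behind prioritizing hyperparameter learning.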

BaCO: A Fast and Portable Bayesian Compiler Optimization Framework

Dec 01, 2022

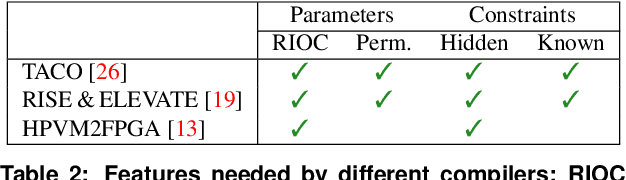

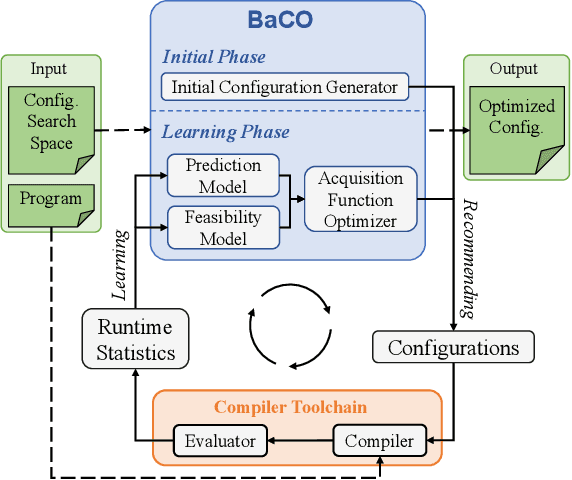

Abstract: We introduce the Bayesian Compiler Optimization framework (BaCO), a general-purpose autotuner for modern compilers targeting CPUs, GPUs, and FPGAs. BaCO provides the flexibility needed to handle the requirements of modern autotuning tasks. In particular, it handles permutation, ordered, and continuous parameter types, along with both known and unknown parameter constraints. To reason about these parameter types and efficiently deliver high-quality code, BaCO uses Bayesian optimization algorithms specialized for the autotuning domain. We demonstrate BaCO's effectiveness on three modern compiler systems: TACO, RISE & ELEVATE, and HPVM2FPGA, for CPUs, GPUs, and FPGAs respectively. In these domains, BaCO outperforms current state-of-the-art autotuners, delivering on average 1.39x-1.89x faster code with a tiny search budget, and it reaches expert-level performance 2.89x-8.77x faster.
