Eoin Brophy

Exploration of Algorithmic Trading Strategies for the Bitcoin Market

Oct 28, 2021

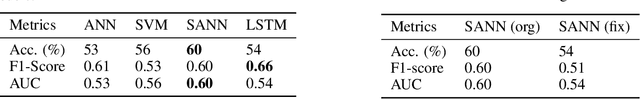

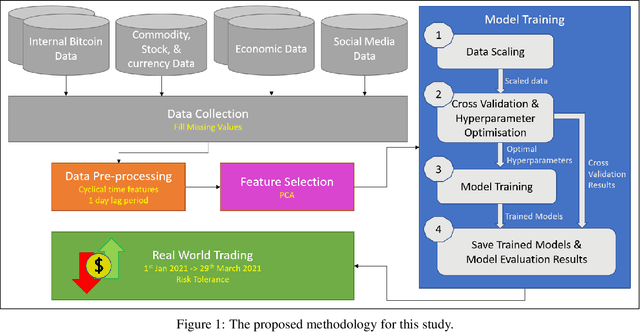

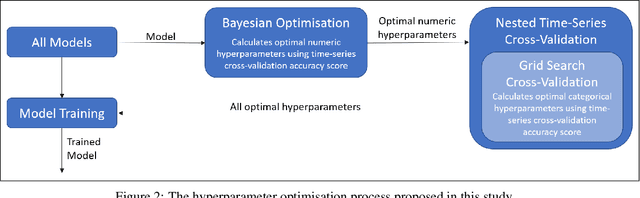

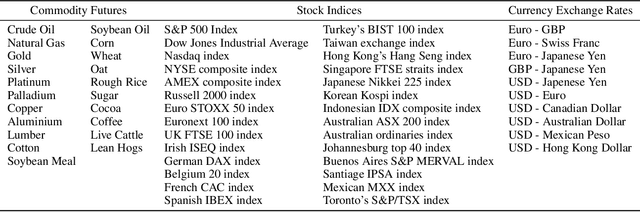

Abstract: Bitcoin is firmly becoming a mainstream asset in our global society. Its highly volatile nature has traders and speculators flooding into the market to take advantage of its significant price swings in the hope of making money. This work brings an algorithmic trading approach to the Bitcoin market, exploiting the day-to-day variability in its price by classifying the direction of each daily move. Building on previous work, in this paper we use both features internal to the Bitcoin network and external features to inform the predictions of various machine learning models. As an empirical test, we evaluate the models using a real-world trading strategy on completely unseen data collected throughout the first quarter of 2021. Using only a binary predictor, our models showed an average profit of 86% at the end of the three-month trading period, matching the results of the more traditional buy-and-hold strategy. However, after incorporating a risk tolerance score into our trading strategy by utilising the models' prediction confidence scores, our models were 12.5% more profitable than the simple buy-and-hold strategy. These results indicate the credible potential of machine learning models for extracting profit from the Bitcoin market and serve as a foundation for further research into real-world Bitcoin trading.
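
The comparison between a direction classifier and buy-and-hold can be illustrated with a small backtest. This is a minimal sketch under stated assumptions, not the paper's trading strategy: it assumes a long-only rule that holds Bitcoin for a day only when the model's predicted probability of an upward move exceeds a threshold (standing in for the "risk tolerance score"), it ignores fees and slippage, and the names `backtest` and `buy_and_hold` are hypothetical.

```python
import numpy as np


def backtest(prices, prob_up, threshold=0.5):
    """Profit (as a fraction) of a hypothetical long-only daily strategy.

    prices[t] is the closing price on day t (length T + 1).
    prob_up[t] is the model's confidence that the price rises from day t to
    day t + 1 (length T). A binary predictor can simply pass 0/1 values.
    Assumption: no fees or slippage; capital is either fully in BTC or in cash.
    """
    prices = np.asarray(prices, dtype=float)
    prob_up = np.asarray(prob_up, dtype=float)
    if prices.size != prob_up.size + 1:
        raise ValueError("need exactly one more price than predictions")
    daily_returns = prices[1:] / prices[:-1] - 1.0
    # Hold BTC only on days where the confidence clears the risk threshold.
    in_market = prob_up > threshold
    equity = np.prod(1.0 + np.where(in_market, daily_returns, 0.0))
    return float(equity - 1.0)


def buy_and_hold(prices):
    """Profit (as a fraction) of buying on the first day and selling on the last."""
    return float(prices[-1] / prices[0] - 1.0)


prices = [100.0, 110.0, 99.0, 120.0]
prob_up = [0.9, 0.2, 0.7]
print(round(backtest(prices, prob_up), 4))       # 0.3333
print(round(backtest(prices, prob_up, 0.8), 4))  # 0.1
print(round(buy_and_hold(prices), 4))            # 0.2
```

Raising `threshold` makes the strategy trade only on high-confidence days. Whether such a rule reproduces the reported 12.5% improvement depends on details the abstract does not give.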

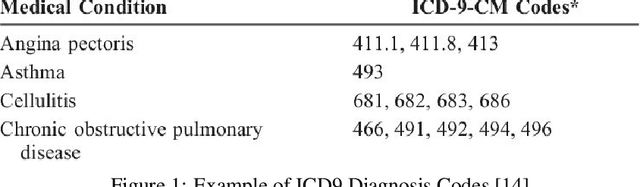

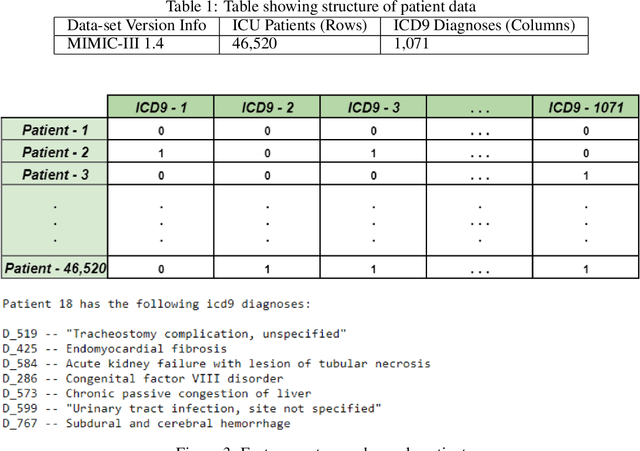

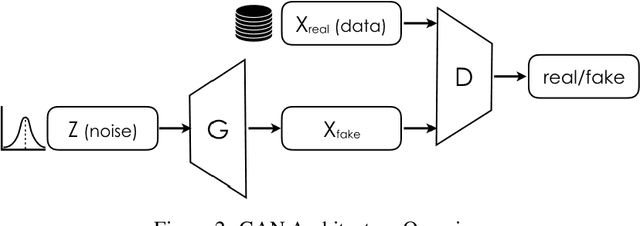

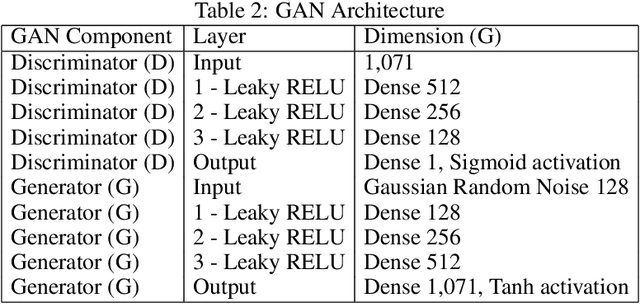

Generation of Synthetic Electronic Health Records Using a Federated GAN

Sep 06, 2021

Abstract: Sensitive medical data is often subject to strict usage constraints. In this paper, we trained a generative adversarial network (GAN) on real-world electronic health records (EHR). It was then used to create a data-set of "fake" patients through synthetic data generation (SDG) to circumvent usage constraints. This real-world data was tabular, binary, intensive care unit (ICU) patient diagnosis data. The entire data-set was split into separate data silos to mimic real-world scenarios where multiple ICUs across different hospitals may have similarly structured data-sets within their own organisations but do not have access to each other's data-sets. We implemented federated learning (FL) to train separate GANs locally at each organisation, each on its own data silo, and then combined the GANs into a single central GAN without any siloed data ever being exposed. This global, central GAN was then used to generate the synthetic patient data-set. We evaluated these synthetic patients using statistical measures and through a structured review by a group of medical professionals. There was no significant reduction in the quality of the synthetic EHR when we moved from training a single central model to training individual models on separate data silos and then combining them into a central model. This held for both the statistical evaluation (root mean square error (RMSE) of 0.0154 for single-source vs. 0.0169 for dual-source federated) and the medical professionals' evaluation (no quality difference between EHR generated from a single source and EHR generated from multiple sources).
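
The abstract does not say how the locally trained GANs are merged into the central GAN. A common choice in federated learning is federated averaging (FedAvg), where each site shares only its model parameters and the server takes a weighted average. The sketch below assumes FedAvg weighted by silo size; the function name, layer names and silo sizes are hypothetical. In a GAN it would be applied separately to the generator and discriminator parameters.

```python
import numpy as np


def federated_average(client_weights, client_sizes):
    """Combine per-site model parameters into one global set (FedAvg-style).

    client_weights: list of dicts mapping layer name -> np.ndarray, one per site.
    client_sizes: number of training records held by each site; larger silos
    get proportionally more influence. Only parameters leave a site, never records.
    """
    total = float(sum(client_sizes))
    names = client_weights[0].keys()
    return {
        name: sum(w[name] * (n / total) for w, n in zip(client_weights, client_sizes))
        for name in names
    }


# Hypothetical generator parameters from two hospital silos.
site_a = {"dense.weight": np.array([0.0, 0.0]), "dense.bias": np.array([1.0])}
site_b = {"dense.weight": np.array([4.0, 8.0]), "dense.bias": np.array([5.0])}
global_gen = federated_average([site_a, site_b], client_sizes=[100, 300])
print(global_gen["dense.weight"], global_gen["dense.bias"])  # [3. 6.] [4.]
```

In a typical FL loop the averaged parameters are sent back to every site and local training resumes, repeating for several rounds.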

Generative adversarial networks in time series: A survey and taxonomy

Jul 23, 2021

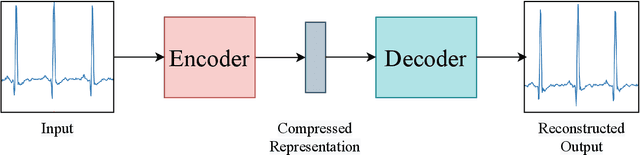

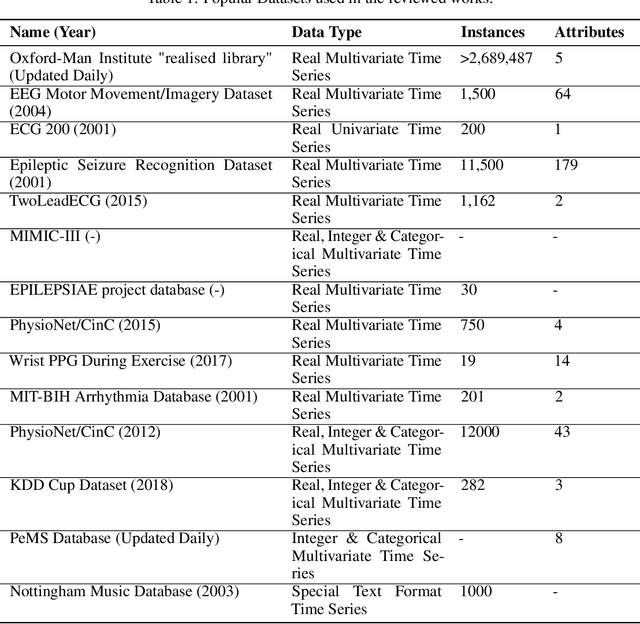

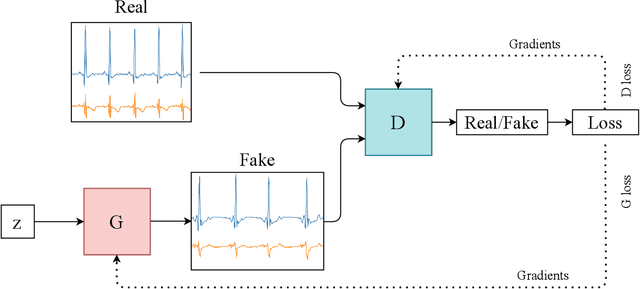

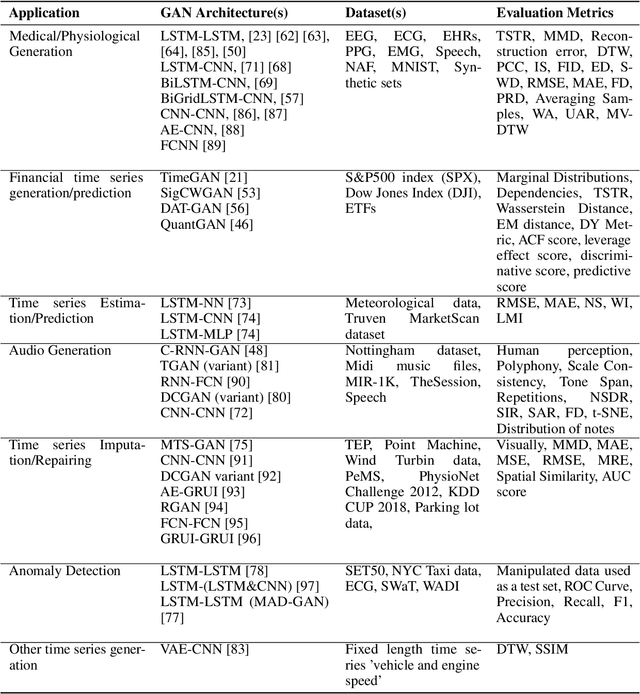

Abstract: Studies of generative adversarial networks (GANs) have grown exponentially in the past few years. Their impact has been seen mainly in the computer vision field, where realistic image and video manipulation, especially generation, has made significant advances. While these computer vision advances have garnered much attention, GAN applications have diversified into disciplines such as time series and sequence generation. As this is a relatively new niche for GANs, work is ongoing to produce high-quality, diverse and private time series data. In this paper, we review GAN variants designed for time series related applications. We propose a taxonomy of discrete-variant GANs and continuous-variant GANs, which deal with discrete and continuous time series data respectively. We showcase the latest and most popular literature in this field, covering architectures, results and applications. We also provide a list of the most popular evaluation metrics and their suitability across applications. Also presented is a discussion of privacy measures for these GANs, along with further protections and directions for dealing with sensitive data. We aim to frame clearly and concisely the latest state-of-the-art research in this area and its applications to real-world technologies.

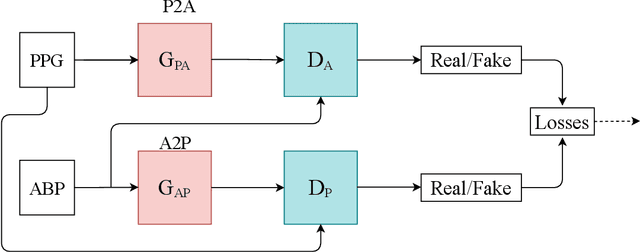

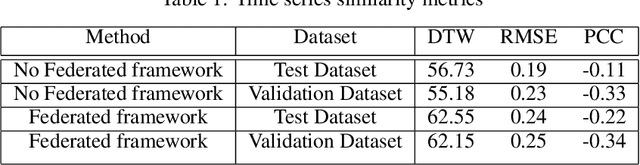

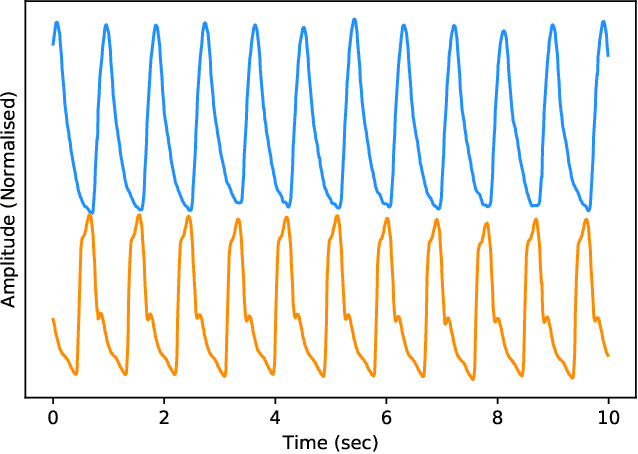

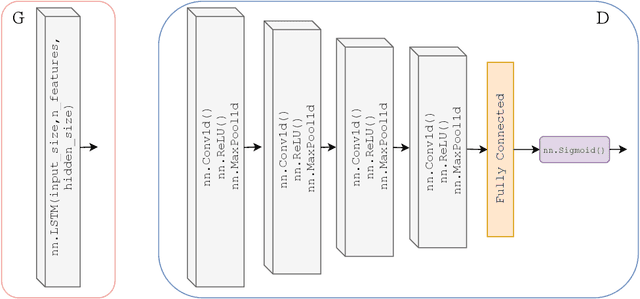

Estimation of Continuous Blood Pressure from PPG via a Federated Learning Approach

Feb 24, 2021

Abstract: Ischemic heart disease is the leading cause of mortality globally each year. This puts a massive strain not only on the lives of those affected but also on public healthcare systems. To understand the dynamics of healthy and unhealthy hearts, doctors commonly use electrocardiogram (ECG) and blood pressure (BP) readings. These methods are often quite invasive, particularly when continuous arterial blood pressure (ABP) readings are taken, not to mention very costly. Using machine learning methods, we seek to develop a framework capable of inferring ABP from a single optical photoplethysmogram (PPG) sensor alone. We train our framework across distributed models and data sources to mimic a large-scale distributed collaborative learning experiment that could be implemented across low-cost wearables. Our time series-to-time series generative adversarial network (T2TGAN) is capable of high-quality continuous ABP generation from a PPG signal, with a mean error of 2.54 mmHg and a standard deviation of 23.7 mmHg when estimating mean arterial pressure on a previously unseen, noisy, independent dataset. To our knowledge, this framework is the first example of a GAN capable of continuous ABP generation from an input PPG signal that also uses a federated learning methodology.
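
The reported figures are a mean error and a standard deviation of mean arterial pressure (MAP) estimates. One way such statistics can be computed from generated and reference ABP waveforms is sketched below, assuming MAP is the time average of each segment (segments spanning whole cardiac cycles), errors are taken as estimate minus reference, and the population standard deviation is used. The function names and toy values are hypothetical, not the paper's evaluation code or data.

```python
import numpy as np


def mean_arterial_pressure(abp_segment):
    """MAP of one ABP segment (mmHg), taken as the time average of the waveform.

    Assumption: the segment spans whole cardiac cycles, so the plain mean
    approximates the area-under-the-curve definition of MAP.
    """
    return float(np.mean(abp_segment))


def map_error_stats(reference_segments, estimated_segments):
    """Mean error and population standard deviation (mmHg) of estimated vs reference MAP."""
    errors = np.array([
        mean_arterial_pressure(est) - mean_arterial_pressure(ref)
        for ref, est in zip(reference_segments, estimated_segments)
    ])
    return float(errors.mean()), float(errors.std())


# Toy segments (mmHg), not real patient data.
reference = [[80.0, 120.0, 100.0], [90.0, 90.0, 90.0]]
estimated = [[82.0, 122.0, 102.0], [86.0, 86.0, 86.0]]
print(map_error_stats(reference, estimated))  # (-1.0, 3.0)
```

A small mean error with a large standard deviation means errors roughly cancel on average while individual estimates can still be far off, which is why both numbers are reported.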

IROS 2019 Lifelong Robotic Vision Challenge -- Lifelong Object Recognition Report

Apr 26, 2020

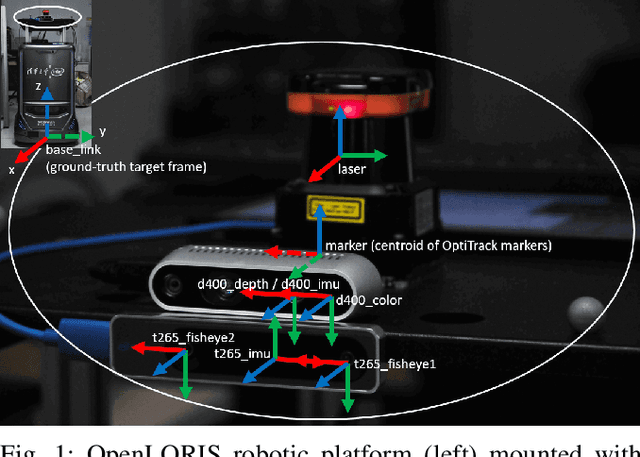

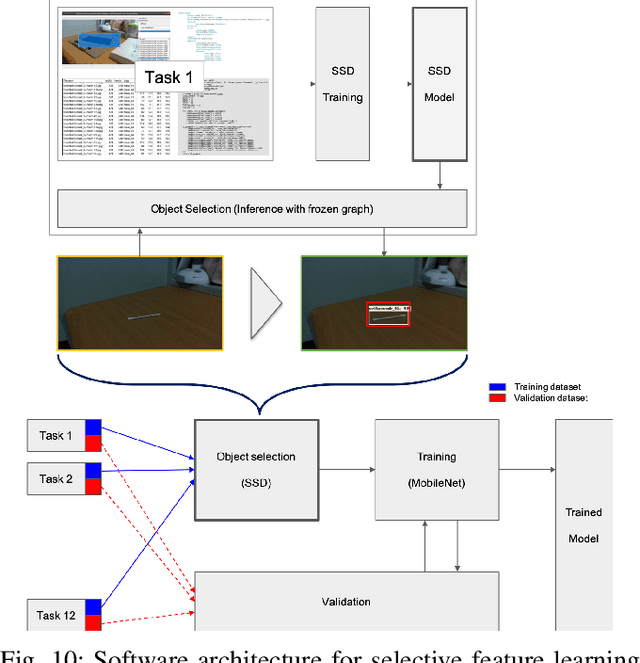

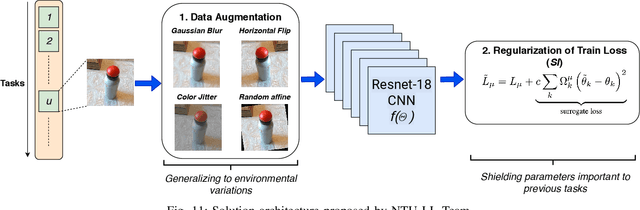

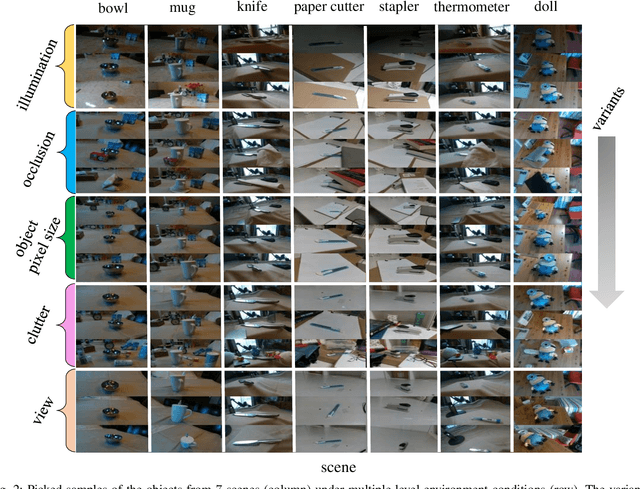

Abstract: This report summarizes the IROS 2019 Lifelong Robotic Vision Competition (Lifelong Object Recognition Challenge), with methods and results from the top 8 finalists (out of over 150 teams). The competition dataset, (L)ifel(O)ng (R)obotic V(IS)ion (OpenLORIS) - Object Recognition (OpenLORIS-object), is designed to drive lifelong/continual learning research and application in the robotic vision domain, with everyday objects in home, office, campus and mall scenarios. The dataset explicitly quantifies variation in illumination, object occlusion, object size, camera-object distance/angles, and clutter. The contest rules are designed to quantify the learning capability of a robotic vision system when faced with objects appearing in dynamic environments. Individual reports, dataset information, rules, and released source code can be found at the project homepage: "https://lifelong-robotic-vision.github.io/competition/".

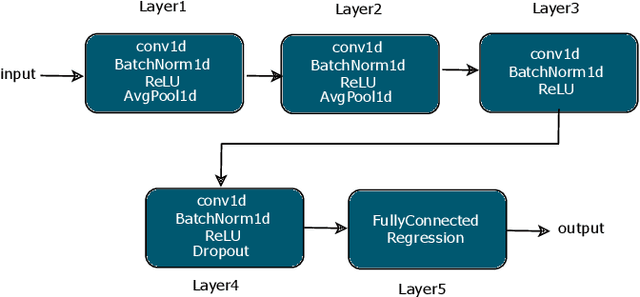

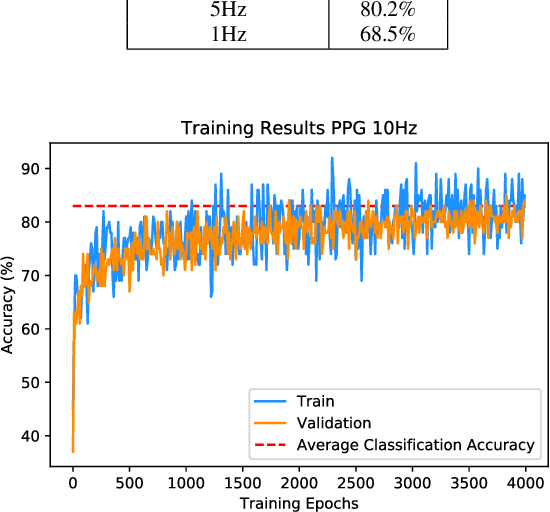

Optimised Convolutional Neural Networks for Heart Rate Estimation and Human Activity Recognition in Wrist Worn Sensing Applications

Mar 30, 2020

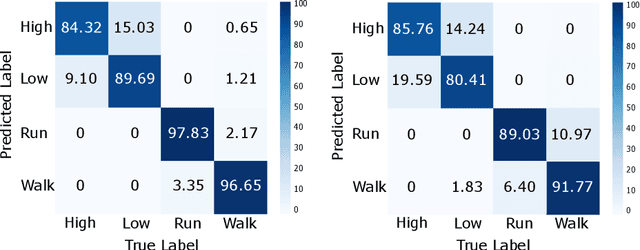

Abstract: Wrist-worn smart devices are providing increased insights into human health, behaviour and performance through sophisticated analytics. However, battery life, device cost and sensor performance in the face of movement-related artefacts present challenges that must be addressed to see effective applications and wider adoption through commoditisation of the technology. We address these challenges by demonstrating that a simple optical measurement, photoplethysmography (PPG), conventionally used for heart rate detection in wrist-worn sensors, can provide improved heart rate estimation and human activity recognition (HAR) simultaneously at low sample rates, without an inertial measurement unit. This simplifies hardware design and reduces costs and power budgets. We apply two deep learning pipelines, one for human activity recognition and one for heart rate estimation. HAR is achieved through a visual classification approach capable of robust performance at low sample rates. Here, transfer learning is leveraged to retrain a convolutional neural network (CNN) to distinguish characteristics of the PPG during different human activities. For heart rate estimation, we use a CNN adapted for regression, which maps noisy optical signals to heart rate estimates. In both cases, comparisons are made with leading conventional approaches. Our results demonstrate that a low sampling frequency can achieve good performance without significant degradation of accuracy: sampling at 5 Hz and 10 Hz gave HAR classification accuracies of 80.2% and 83.0%, respectively. These same sampling frequencies also yielded robust heart rate estimation, comparable with that achieved at the more energy-intensive rate of 256 Hz.
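
The low-sample-rate experiments start from a 256 Hz PPG signal reduced to 5 Hz or 10 Hz. The sketch below shows one band-limited way to do that downsampling (spectral truncation, the same idea as `scipy.signal.resample`) followed by a spectral-peak heart rate estimate. The spectral-peak estimator is a conventional baseline assumed for illustration; it is not the paper's CNN regressor, and the function names and toy 1.2 Hz signal are hypothetical.

```python
import numpy as np


def resample_fft(x, fs_in, fs_out):
    """Band-limited resampling by truncating the spectrum.

    Content above the new Nyquist frequency (fs_out / 2) is discarded rather
    than aliased. Assumes the window can be treated as periodic.
    """
    x = np.asarray(x, dtype=float)
    n_in = x.size
    n_out = int(round(n_in * fs_out / fs_in))
    spectrum = np.fft.rfft(x)[: n_out // 2 + 1]
    return np.fft.irfft(spectrum, n=n_out) * (n_out / n_in)


def spectral_heart_rate(ppg, fs, lo_hz=0.5, hi_hz=4.0):
    """Heart rate (bpm) from the dominant spectral peak in a plausible band."""
    ppg = np.asarray(ppg, dtype=float) - np.mean(ppg)
    freqs = np.fft.rfftfreq(ppg.size, d=1.0 / fs)
    power = np.abs(np.fft.rfft(ppg)) ** 2
    # The band is capped at the Nyquist frequency of the (possibly low) sample rate.
    band = (freqs >= lo_hz) & (freqs <= min(hi_hz, fs / 2))
    return float(60.0 * freqs[band][np.argmax(power[band])])


# Toy 10 s PPG-like tone at 1.2 Hz (72 bpm), sampled at 256 Hz.
fs_hi = 256
t = np.arange(10 * fs_hi) / fs_hi
ppg = np.sin(2 * np.pi * 1.2 * t)
for fs_low in (10, 5):
    y = resample_fft(ppg, fs_hi, fs_low)
    print(fs_low, y.size, round(spectral_heart_rate(y, fs_low), 1))
```

At 5 Hz the Nyquist limit is 2.5 Hz, so a purely spectral method cannot see heart rates above 150 bpm; this follows from the sampling arithmetic, not from anything the abstract reports about the CNN.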

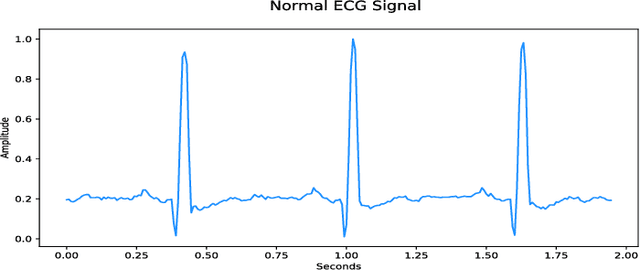

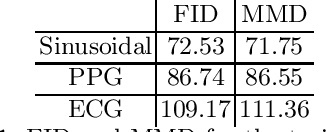

Synthesis of Realistic ECG using Generative Adversarial Networks

Sep 19, 2019

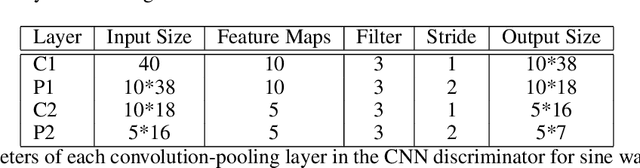

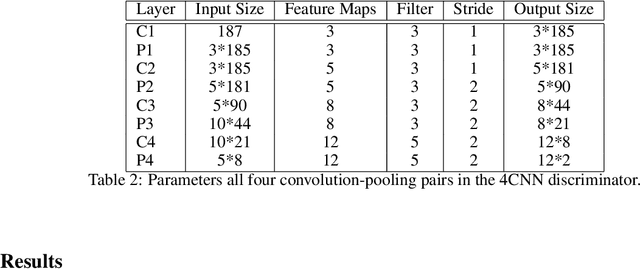

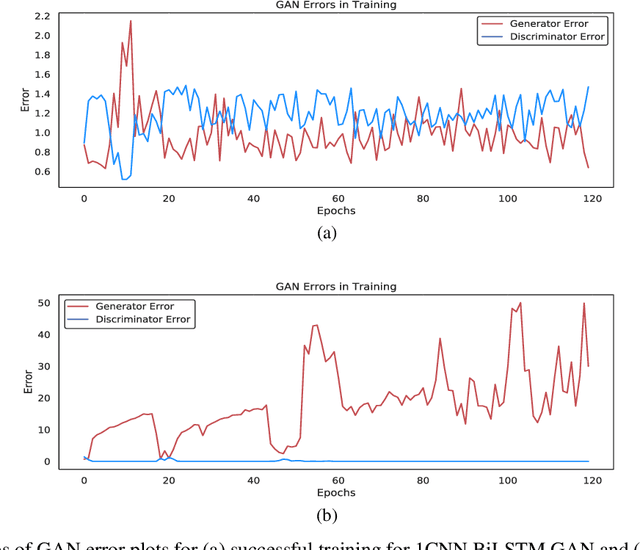

Abstract: Access to medical data is highly restricted due to its sensitive nature, preventing communities from using this data for research or clinical training. Common de-identification methods used to enable data sharing are sometimes inadequate to protect the individuals contained in the data. In this research, we investigate the ability of generative adversarial networks (GANs) to produce realistic medical time series data that can be used without privacy concerns. The aim is to generate synthetic ECG signals representative of normal ECG waveforms. GANs have been used successfully to generate good-quality synthetic time series and have been shown to prevent re-identification of individual records. In this work, a range of GAN architectures is developed to generate synthetic sine waves and synthetic ECG. Two evaluation metrics are then used to quantitatively assess how suitable the synthetic data is for real-world applications such as clinical training and data analysis. Finally, we discuss the privacy concerns associated with sharing synthetic data produced by GANs and test their ability to withstand a simple membership inference attack. For the first time, we demonstrate both quantitatively and qualitatively that GAN architectures can successfully generate time series signals that are not only structurally similar to the training sets but also diverse across generated samples. We also report on their ability to withstand a simple membership inference attack, protecting the privacy of the training set.
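
The abstract does not describe which membership inference attack was used. One simple variant, assumed here purely for illustration, is a distance-based attack: a real record is flagged as a likely training member if some synthetic sample lies unusually close to it, which is what a generator that memorised its training set would produce. The function name, threshold and toy vectors are hypothetical.

```python
import numpy as np


def distance_membership_attack(candidates, synthetic, threshold):
    """Flag candidates as likely training members if a synthetic sample is too close.

    candidates: (m, d) real records whose membership is being tested.
    synthetic: (k, d) GAN outputs. Returns a boolean array of length m.
    """
    candidates = np.asarray(candidates, dtype=float)
    synthetic = np.asarray(synthetic, dtype=float)
    # Pairwise Euclidean distances via broadcasting: shape (m, k).
    dists = np.linalg.norm(candidates[:, None, :] - synthetic[None, :, :], axis=-1)
    return dists.min(axis=1) < threshold


synthetic = [[0.0, 0.0], [1.0, 1.0]]
candidates = [[0.0, 0.1], [5.0, 5.0]]
print(distance_membership_attack(candidates, synthetic, threshold=0.5))  # [ True False]
```

To judge privacy, such an attack is typically run on records known to be in the training set and records known to be held out; accuracy close to chance suggests the synthetic data does not reveal membership.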

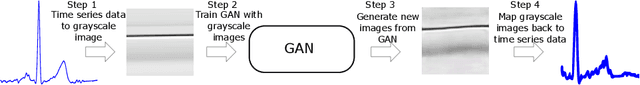

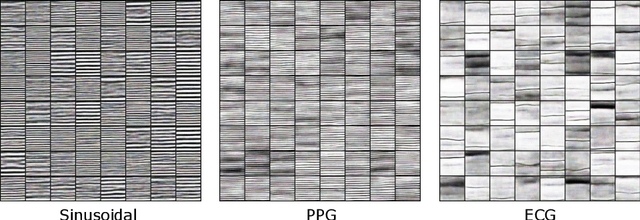

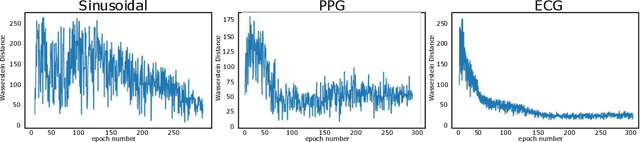

Quick and Easy Time Series Generation with Established Image-based GANs

Feb 18, 2019

Abstract: In recent years, Generative Adversarial Networks (GANs) have demonstrated significant progress in generating authentic-looking data. In this work, we introduce a simple method that exploits advances in well-established image-based GANs to synthesise single-channel time series data. We implement Wasserstein GANs (WGANs) with gradient penalty, chosen for their training stability, to synthesise three types of data: sinusoidal data, photoplethysmograph (PPG) data and electrocardiograph (ECG) data. The length of the returned time series is limited only by the image resolution; we use an image size of 64×64 pixels, which yields 4096 data points. We present both visual and quantitative evidence that our novel method can successfully generate time series data using image-based GANs.
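
The 64×64 = 4096 relationship suggests packing a time series into an image so an image GAN can train on it, then unpacking generated images back into series. The sketch below assumes row-major packing and scaling to [-1, 1] (the range of a tanh generator output); the abstract states only the image size and series length, so the layout, scaling and function names are hypothetical.

```python
import numpy as np

SIDE = 64  # 64 x 64 pixels -> 4096 time steps


def series_to_image(x, side=SIDE):
    """Pack a 1-D series of length side**2 into a side x side image in [-1, 1]."""
    x = np.asarray(x, dtype=float)
    if x.size != side * side:
        raise ValueError(f"expected {side * side} samples, got {x.size}")
    lo, hi = float(x.min()), float(x.max())
    span = hi - lo if hi > lo else 1.0  # avoid dividing by zero for flat signals
    scaled = 2.0 * (x - lo) / span - 1.0
    return scaled.reshape(side, side), (lo, hi)


def image_to_series(img, lo, hi):
    """Inverse: flatten row by row and undo the [-1, 1] scaling."""
    span = hi - lo if hi > lo else 1.0
    return (np.asarray(img, dtype=float).reshape(-1) + 1.0) / 2.0 * span + lo


t = np.linspace(0, 8 * np.pi, SIDE * SIDE)
img, (lo, hi) = series_to_image(np.sin(t))
print(img.shape)  # (64, 64)
print(np.allclose(image_to_series(img, lo, hi), np.sin(t)))  # True
```

Row-major packing places consecutive samples side by side within a row, so a convolution's local receptive field mostly covers neighbouring time steps; other layouts are possible.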

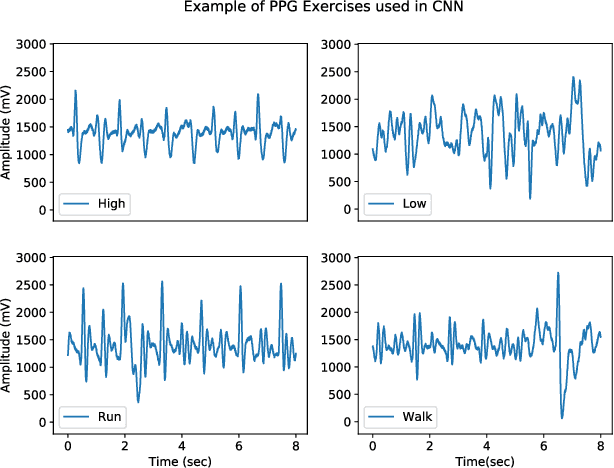

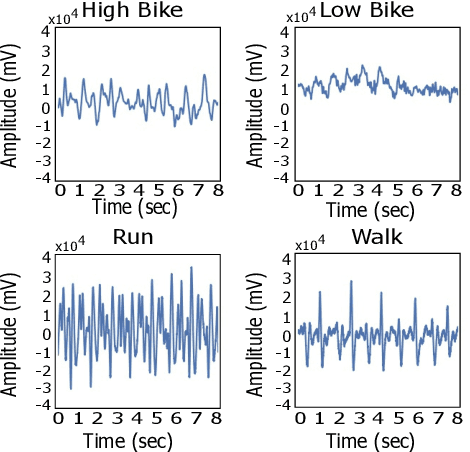

An Interpretable Machine Vision Approach to Human Activity Recognition using Photoplethysmograph Sensor Data

Dec 03, 2018

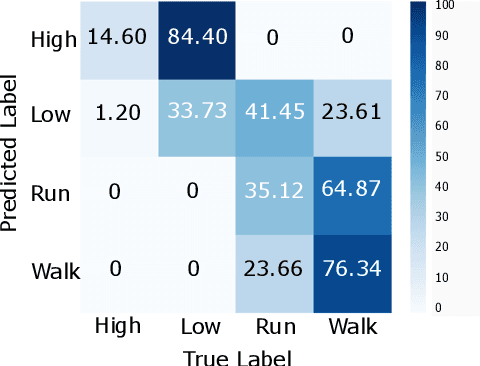

Abstract: The current gold standard for human activity recognition (HAR) is based on the use of cameras. However, the poor scalability of camera systems renders them impractical for wider adoption of HAR in mobile computing contexts. Consequently, researchers instead rely on wearable sensors, in particular inertial sensors. A particularly prevalent wearable is the smartwatch, which, due to its integrated inertial and optical sensing capabilities, holds great potential for realising better HAR in a non-obtrusive way. This paper seeks to simplify the wearable approach to HAR by determining whether the wrist-mounted optical sensor alone, typically found in a smartwatch or similar device, can serve as a useful source of data for activity recognition. The approach has the potential to eliminate the need for the inertial sensing element, which would in turn reduce the cost and complexity of smartwatches and fitness trackers. This could potentially commoditise the hardware requirements for HAR while retaining the functionality of both heart rate monitoring and activity capture, all from a single optical sensor. Our approach relies on the adoption of machine vision for activity recognition based on suitably scaled plots of the optical signals. We take this approach to produce classifications that are easily explainable and interpretable by non-technical users. More specifically, images of photoplethysmography signal time series are used to retrain the penultimate layer of a convolutional neural network that was initially trained on the ImageNet database. We then use the 2048-dimensional features from the penultimate layer as input to a support vector machine. The experiment yielded an average classification accuracy of 92.3%. This result outperforms that of an optical and inertial sensor combined (78%) and illustrates the capability of HAR systems using...
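
The first step of this pipeline turns a PPG window into an image that an ImageNet-pretrained CNN can consume. The abstract says only "suitably scaled plots", so the rasterisation below is an assumed stand-in: it min-max scales the signal to the image height and draws a one-pixel line plot with one column per sample. The function name and the 64 Hz toy window are hypothetical, and the later CNN feature extraction and SVM stages are not shown.

```python
import numpy as np


def rasterize_trace(signal, height=64):
    """Draw a 1-D signal as a binary line-plot image (one column per sample).

    Row 0 is the top of the image, so larger signal values appear higher up.
    Consecutive points are joined with a vertical run so the trace is unbroken.
    """
    s = np.asarray(signal, dtype=float)
    lo, hi = s.min(), s.max()
    norm = (s - lo) / (hi - lo) if hi > lo else np.zeros_like(s)
    rows = np.round((1.0 - norm) * (height - 1)).astype(int)
    img = np.zeros((height, s.size), dtype=np.uint8)
    prev = rows[0]
    for col, row in enumerate(rows):
        top, bottom = min(prev, row), max(prev, row)
        img[top : bottom + 1, col] = 1
        prev = row
    return img


t = np.arange(256) / 64.0  # hypothetical 4 s window sampled at 64 Hz
img = rasterize_trace(np.sin(2 * np.pi * 1.2 * t))
print(img.shape)  # (64, 256)
```

In a full pipeline this image would be resized to the CNN's input resolution, and the 2048-dimensional penultimate-layer features would then be passed to the support vector machine.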
