Christopher M. Poskitt

Runtime Anomaly Detection for Drones: An Integrated Rule-Mining and Unsupervised-Learning Approach

May 03, 2025Abstract:UAVs, commonly referred to as drones, have witnessed a remarkable surge in popularity due to their versatile applications. These cyber-physical systems depend on multiple sensor inputs, such as cameras, GPS receivers, accelerometers, and gyroscopes, with faults potentially leading to physical instability and serious safety concerns. To mitigate such risks, anomaly detection has emerged as a crucial safeguarding mechanism, capable of identifying the physical manifestations of emerging issues and allowing operators to take preemptive action at runtime. Recent anomaly detection methods based on LSTM neural networks have shown promising results, but three challenges persist: the need for models that can generalise across the diverse mission profiles of drones; the need for interpretability, enabling operators to understand the nature of detected problems; and the need for capturing domain knowledge that is difficult to infer solely from log data. Motivated by these challenges, this paper introduces RADD, an integrated approach to anomaly detection in drones that combines rule mining and unsupervised learning. In particular, we leverage rules (or invariants) to capture expected relationships between sensors and actuators during missions, and utilise unsupervised learning techniques to cover more subtle relationships that the rules may have missed. We implement this approach using the ArduPilot drone software in the Gazebo simulator, utilising 44 rules derived across the main phases of drone missions, in conjunction with an ensemble of five unsupervised learning models. We find that our integrated approach successfully detects 93.84% of anomalies over six types of faults with a low false positive rate (2.33%), and can be deployed effectively at runtime. Furthermore, RADD outperforms a state-of-the-art LSTM-based method in detecting the different types of faults evaluated in our study.

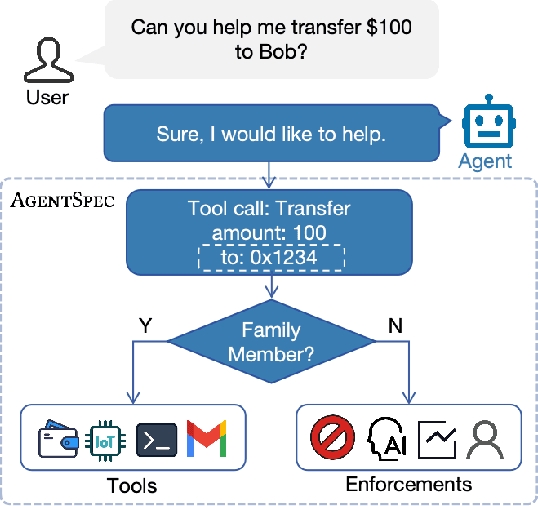

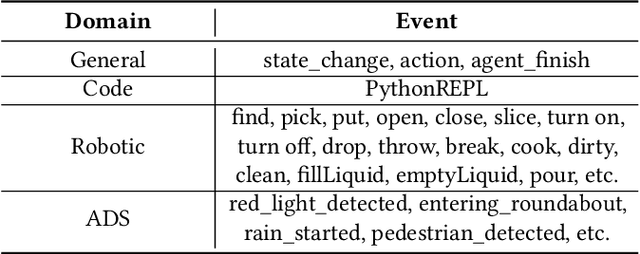

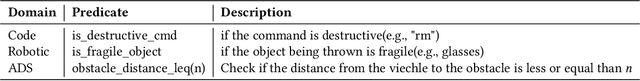

AgentSpec: Customizable Runtime Enforcement for Safe and Reliable LLM Agents

Mar 24, 2025

Abstract:Agents built on LLMs are increasingly deployed across diverse domains, automating complex decision-making and task execution. However, their autonomy introduces safety risks, including security vulnerabilities, legal violations, and unintended harmful actions. Existing mitigation methods, such as model-based safeguards and early enforcement strategies, fall short in robustness, interpretability, and adaptability. To address these challenges, we propose AgentSpec, a lightweight domain-specific language for specifying and enforcing runtime constraints on LLM agents. With AgentSpec, users define structured rules that incorporate triggers, predicates, and enforcement mechanisms, ensuring agents operate within predefined safety boundaries. We implement AgentSpec across multiple domains, including code execution, embodied agents, and autonomous driving, demonstrating its adaptability and effectiveness. Our evaluation shows that AgentSpec successfully prevents unsafe executions in over 90% of code agent cases, eliminates all hazardous actions in embodied agent tasks, and enforces 100% compliance by autonomous vehicles (AVs). Despite its strong safety guarantees, AgentSpec remains computationally lightweight, with overheads in milliseconds. By combining interpretability, modularity, and efficiency, AgentSpec provides a practical and scalable solution for enforcing LLM agent safety across diverse applications. We also automate the generation of rules using LLMs and assess their effectiveness. Our evaluation shows that the rules generated by OpenAI o1 achieve a precision of 95.56% and recall of 70.96% for embodied agents, successfully identifying 87.26% of the risky code, and prevent AVs from breaking laws in 5 out of 8 scenarios.

Are Existing Road Design Guidelines Suitable for Autonomous Vehicles?

Sep 13, 2024

Abstract:The emergence of Autonomous Vehicles (AVs) has spurred research into testing the resilience of their perception systems, i.e. to ensure they are not susceptible to making critical misjudgements. It is important that they are tested not only with respect to other vehicles on the road, but also those objects placed on the roadside. Trash bins, billboards, and greenery are all examples of such objects, typically placed according to guidelines that were developed for the human visual system, and which may not align perfectly with the needs of AVs. Existing tests, however, usually focus on adversarial objects with conspicuous shapes/patches, that are ultimately unrealistic given their unnatural appearances and the need for white box knowledge. In this work, we introduce a black box attack on the perception systems of AVs, in which the objective is to create realistic adversarial scenarios (i.e. satisfying road design guidelines) by manipulating the positions of common roadside objects, and without resorting to `unnatural' adversarial patches. In particular, we propose TrashFuzz , a fuzzing algorithm to find scenarios in which the placement of these objects leads to substantial misperceptions by the AV -- such as mistaking a traffic light's colour -- with overall the goal of causing it to violate traffic laws. To ensure the realism of these scenarios, they must satisfy several rules encoding regulatory guidelines about the placement of objects on public streets. We implemented and evaluated these attacks for the Apollo, finding that TrashFuzz induced it into violating 15 out of 24 different traffic laws.

How Generalizable are Deepfake Detectors? An Empirical Study

Aug 08, 2023

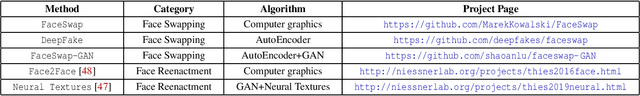

Abstract:Deepfake videos and images are becoming increasingly credible, posing a significant threat given their potential to facilitate fraud or bypass access control systems. This has motivated the development of deepfake detection methods, in which deep learning models are trained to distinguish between real and synthesized footage. Unfortunately, existing detection models struggle to generalize to deepfakes from datasets they were not trained on, but little work has been done to examine why or how this limitation can be addressed. In this paper, we present the first empirical study on the generalizability of deepfake detectors, an essential goal for detectors to stay one step ahead of attackers. Our study utilizes six deepfake datasets, five deepfake detection methods, and two model augmentation approaches, confirming that detectors do not generalize in zero-shot settings. Additionally, we find that detectors are learning unwanted properties specific to synthesis methods and struggling to extract discriminative features, limiting their ability to generalize. Finally, we find that there are neurons universally contributing to detection across seen and unseen datasets, illuminating a possible path forward to zero-shot generalizability.

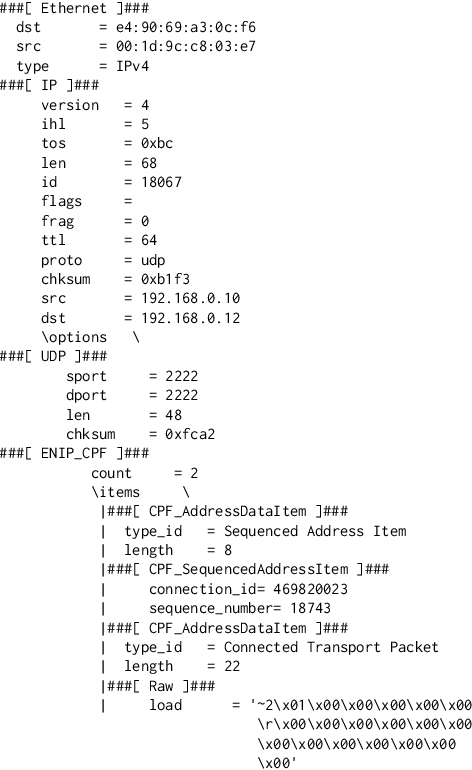

Code Integrity Attestation for PLCs using Black Box Neural Network Predictions

Jun 15, 2021

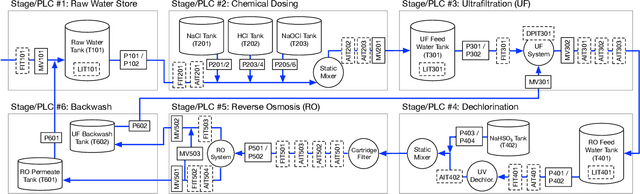

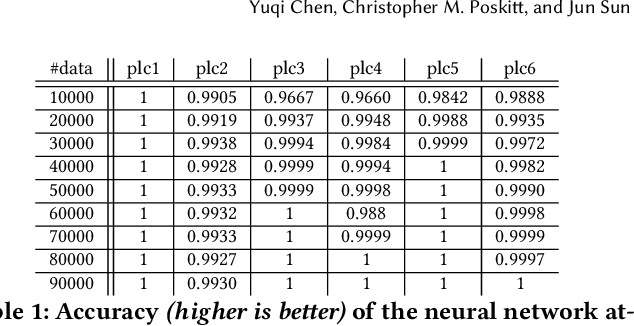

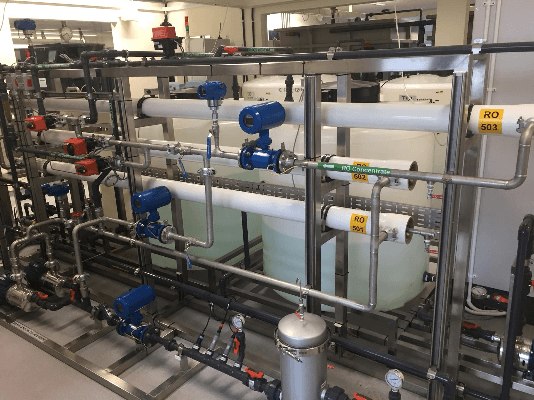

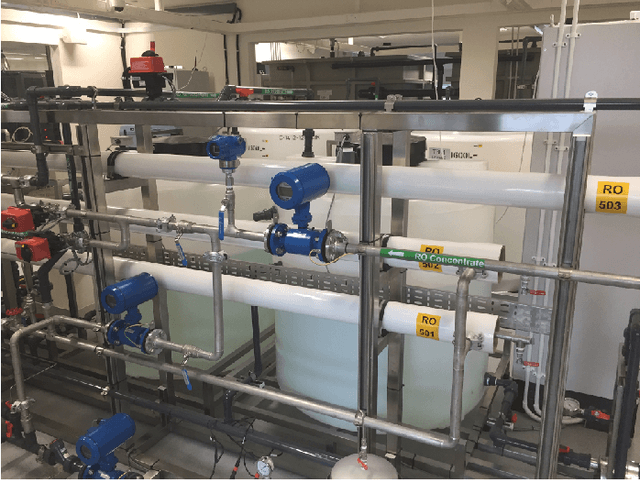

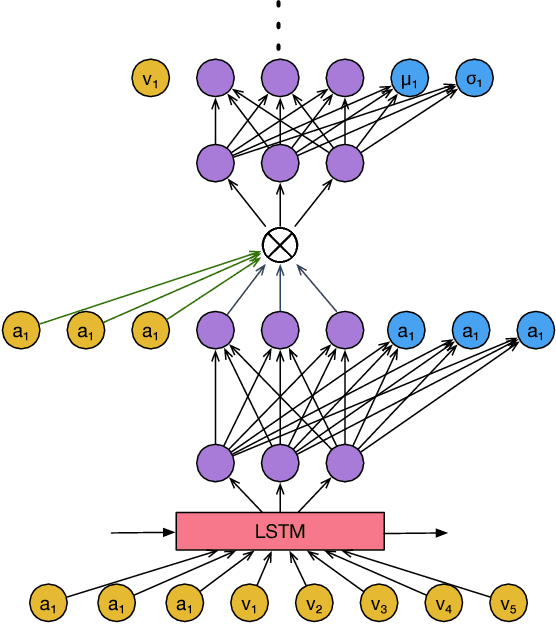

Abstract:Cyber-physical systems (CPSs) are widespread in critical domains, and significant damage can be caused if an attacker is able to modify the code of their programmable logic controllers (PLCs). Unfortunately, traditional techniques for attesting code integrity (i.e. verifying that it has not been modified) rely on firmware access or roots-of-trust, neither of which proprietary or legacy PLCs are likely to provide. In this paper, we propose a practical code integrity checking solution based on privacy-preserving black box models that instead attest the input/output behaviour of PLC programs. Using faithful offline copies of the PLC programs, we identify their most important inputs through an information flow analysis, execute them on multiple combinations to collect data, then train neural networks able to predict PLC outputs (i.e. actuator commands) from their inputs. By exploiting the black box nature of the model, our solution maintains the privacy of the original PLC code and does not assume that attackers are unaware of its presence. The trust instead comes from the fact that it is extremely hard to attack the PLC code and neural networks at the same time and with consistent outcomes. We evaluated our approach on a modern six-stage water treatment plant testbed, finding that it could predict actuator states from PLC inputs with near-100% accuracy, and thus could detect all 120 effective code mutations that we subjected the PLCs to. Finally, we found that it is not practically possible to simultaneously modify the PLC code and apply discreet adversarial noise to our attesters in a way that leads to consistent (mis-)predictions.

Adversarial Attacks and Mitigation for Anomaly Detectors of Cyber-Physical Systems

May 22, 2021

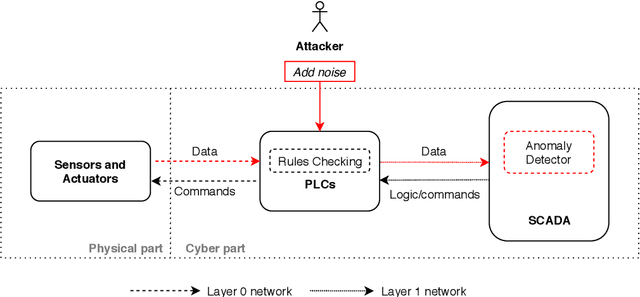

Abstract:The threats faced by cyber-physical systems (CPSs) in critical infrastructure have motivated research into a multitude of attack detection mechanisms, including anomaly detectors based on neural network models. The effectiveness of anomaly detectors can be assessed by subjecting them to test suites of attacks, but less consideration has been given to adversarial attackers that craft noise specifically designed to deceive them. While successfully applied in domains such as images and audio, adversarial attacks are much harder to implement in CPSs due to the presence of other built-in defence mechanisms such as rule checkers(or invariant checkers). In this work, we present an adversarial attack that simultaneously evades the anomaly detectors and rule checkers of a CPS. Inspired by existing gradient-based approaches, our adversarial attack crafts noise over the sensor and actuator values, then uses a genetic algorithm to optimise the latter, ensuring that the neural network and the rule checking system are both deceived.We implemented our approach for two real-world critical infrastructure testbeds, successfully reducing the classification accuracy of their detectors by over 50% on average, while simultaneously avoiding detection by rule checkers. Finally, we explore whether these attacks can be mitigated by training the detectors on adversarial samples.

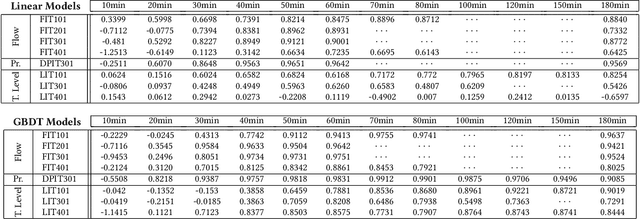

Active Fuzzing for Testing and Securing Cyber-Physical Systems

May 28, 2020

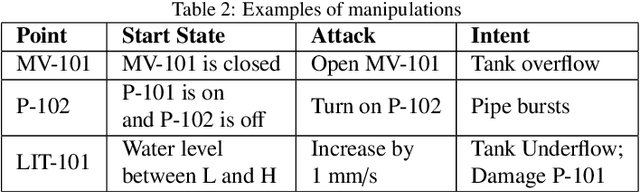

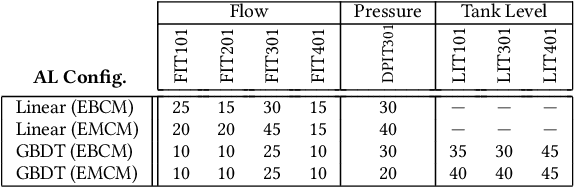

Abstract:Cyber-physical systems (CPSs) in critical infrastructure face a pervasive threat from attackers, motivating research into a variety of countermeasures for securing them. Assessing the effectiveness of these countermeasures is challenging, however, as realistic benchmarks of attacks are difficult to manually construct, blindly testing is ineffective due to the enormous search spaces and resource requirements, and intelligent fuzzing approaches require impractical amounts of data and network access. In this work, we propose active fuzzing, an automatic approach for finding test suites of packet-level CPS network attacks, targeting scenarios in which attackers can observe sensors and manipulate packets, but have no existing knowledge about the payload encodings. Our approach learns regression models for predicting sensor values that will result from sampled network packets, and uses these predictions to guide a search for payload manipulations (i.e. bit flips) most likely to drive the CPS into an unsafe state. Key to our solution is the use of online active learning, which iteratively updates the models by sampling payloads that are estimated to maximally improve them. We evaluate the efficacy of active fuzzing by implementing it for a water purification plant testbed, finding it can automatically discover a test suite of flow, pressure, and over/underflow attacks, all with substantially less time, data, and network access than the most comparable approach. Finally, we demonstrate that our prediction models can also be utilised as countermeasures themselves, implementing them as anomaly detectors and early warning systems.

Learning from Mutants: Using Code Mutation to Learn and Monitor Invariants of a Cyber-Physical System

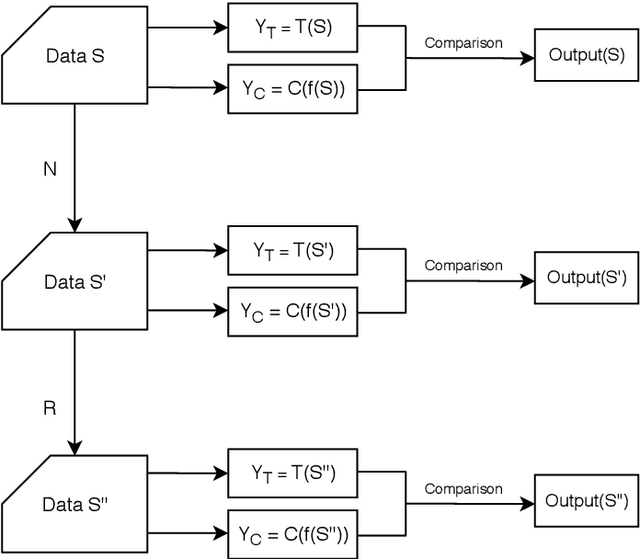

Jun 13, 2018

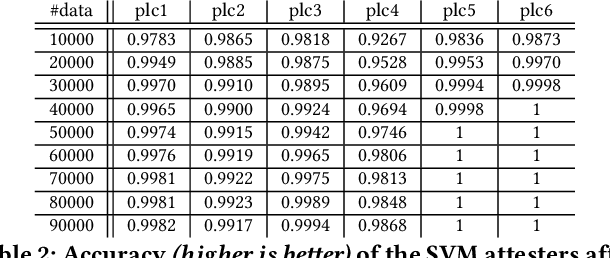

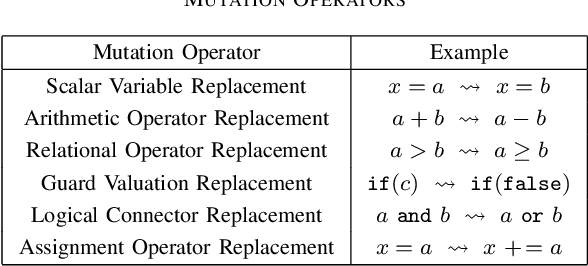

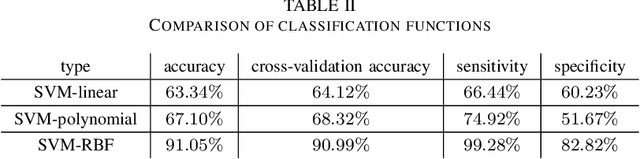

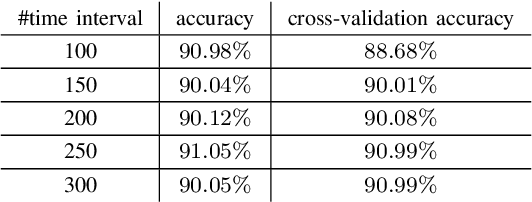

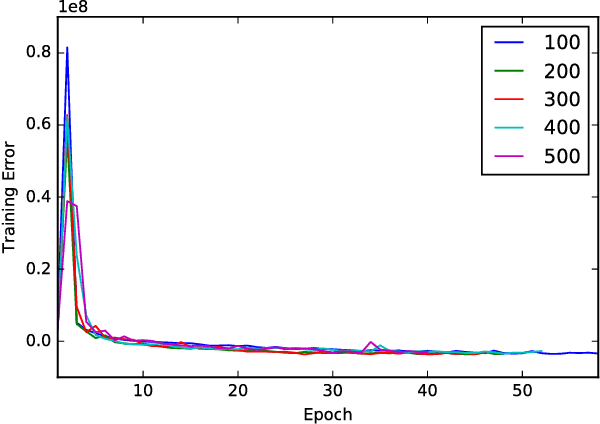

Abstract:Cyber-physical systems (CPS) consist of sensors, actuators, and controllers all communicating over a network; if any subset becomes compromised, an attacker could cause significant damage. With access to data logs and a model of the CPS, the physical effects of an attack could potentially be detected before any damage is done. Manually building a model that is accurate enough in practice, however, is extremely difficult. In this paper, we propose a novel approach for constructing models of CPS automatically, by applying supervised machine learning to data traces obtained after systematically seeding their software components with faults ("mutants"). We demonstrate the efficacy of this approach on the simulator of a real-world water purification plant, presenting a framework that automatically generates mutants, collects data traces, and learns an SVM-based model. Using cross-validation and statistical model checking, we show that the learnt model characterises an invariant physical property of the system. Furthermore, we demonstrate the usefulness of the invariant by subjecting the system to 55 network and code-modification attacks, and showing that it can detect 85% of them from the data logs generated at runtime.

* Accepted by IEEE S&P 2018

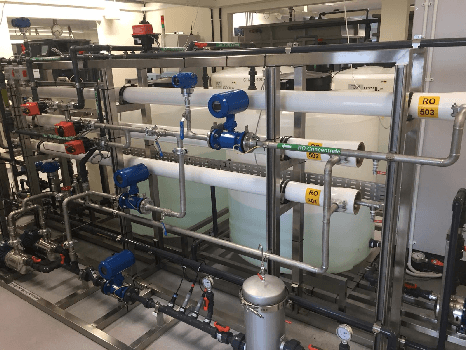

Anomaly Detection for a Water Treatment System Using Unsupervised Machine Learning

Sep 25, 2017

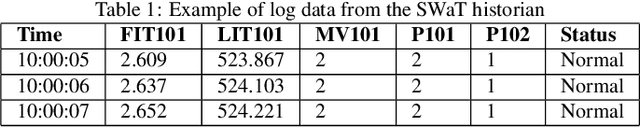

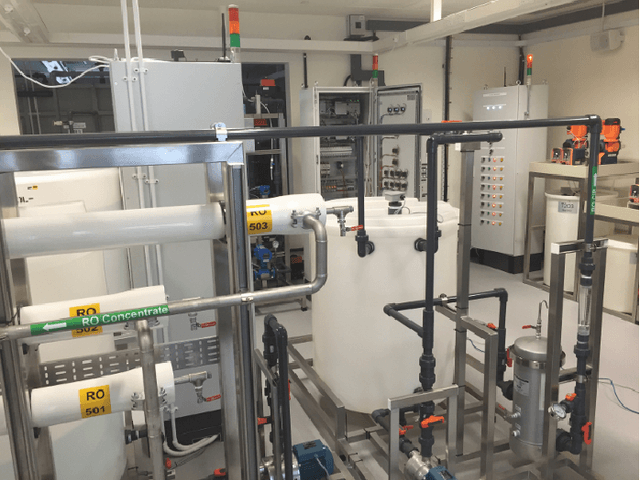

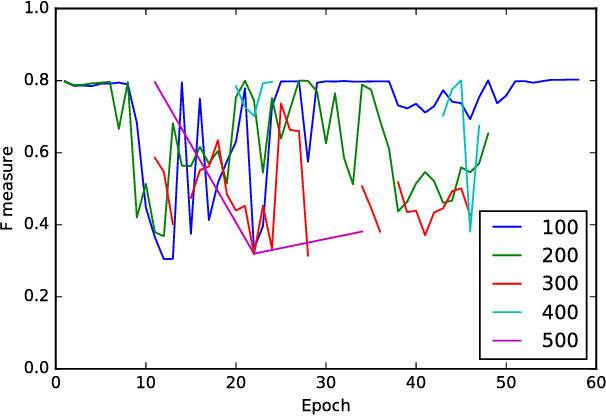

Abstract:In this paper, we propose and evaluate the application of unsupervised machine learning to anomaly detection for a Cyber-Physical System (CPS). We compare two methods: Deep Neural Networks (DNN) adapted to time series data generated by a CPS, and one-class Support Vector Machines (SVM). These methods are evaluated against data from the Secure Water Treatment (SWaT) testbed, a scaled-down but fully operational raw water purification plant. For both methods, we first train detectors using a log generated by SWaT operating under normal conditions. Then, we evaluate the performance of both methods using a log generated by SWaT operating under 36 different attack scenarios. We find that our DNN generates fewer false positives than our one-class SVM while our SVM detects slightly more anomalies. Overall, our DNN has a slightly better F measure than our SVM. We discuss the characteristics of the DNN and one-class SVM used in this experiment, and compare the advantages and disadvantages of the two methods.

Towards Learning and Verifying Invariants of Cyber-Physical Systems by Code Mutation

Sep 06, 2016Abstract:Cyber-physical systems (CPS), which integrate algorithmic control with physical processes, often consist of physically distributed components communicating over a network. A malfunctioning or compromised component in such a CPS can lead to costly consequences, especially in the context of public infrastructure. In this short paper, we argue for the importance of constructing invariants (or models) of the physical behaviour exhibited by CPS, motivated by their applications to the control, monitoring, and attestation of components. To achieve this despite the inherent complexity of CPS, we propose a new technique for learning invariants that combines machine learning with ideas from mutation testing. We present a preliminary study on a water treatment system that suggests the efficacy of this approach, propose strategies for establishing confidence in the correctness of invariants, then summarise some research questions and the steps we are taking to investigate them.

* Short paper accepted by the 21st International Symposium on Formal Methods (FM 2016)

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge