Chenxiang Ma

Neuromorphic Sequential Arena: A Benchmark for Neuromorphic Temporal Processing

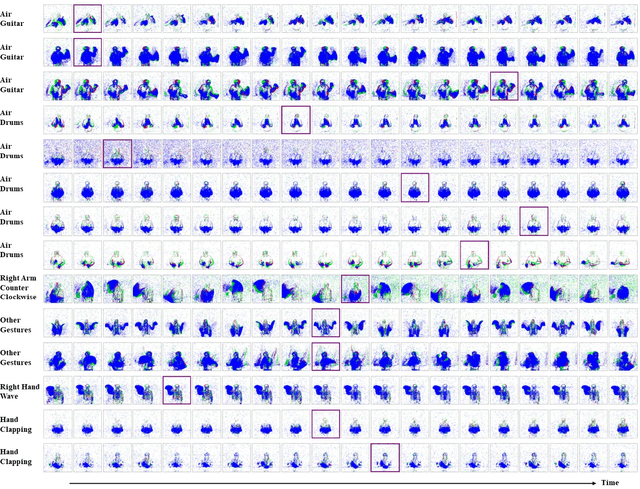

May 28, 2025Abstract:Temporal processing is vital for extracting meaningful information from time-varying signals. Recent advancements in Spiking Neural Networks (SNNs) have shown immense promise in efficiently processing these signals. However, progress in this field has been impeded by the lack of effective and standardized benchmarks, which complicates the consistent measurement of technological advancements and limits the practical applicability of SNNs. To bridge this gap, we introduce the Neuromorphic Sequential Arena (NSA), a comprehensive benchmark that offers an effective, versatile, and application-oriented evaluation framework for neuromorphic temporal processing. The NSA includes seven real-world temporal processing tasks from a diverse range of application scenarios, each capturing rich temporal dynamics across multiple timescales. Utilizing NSA, we conduct extensive comparisons of recently introduced spiking neuron models and neural architectures, presenting comprehensive baselines in terms of task performance, training speed, memory usage, and energy efficiency. Our findings emphasize an urgent need for efficient SNN designs that can consistently deliver high performance across tasks with varying temporal complexities while maintaining low computational costs. NSA enables systematic tracking of advancements in neuromorphic algorithm research and paves the way for developing effective and efficient neuromorphic temporal processing systems.

Spiking Neural Networks for Temporal Processing: Status Quo and Future Prospects

Feb 13, 2025

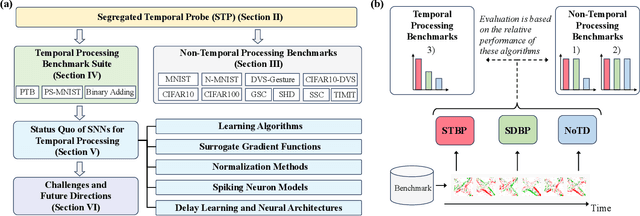

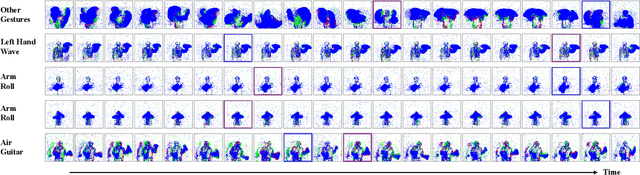

Abstract:Temporal processing is fundamental for both biological and artificial intelligence systems, as it enables the comprehension of dynamic environments and facilitates timely responses. Spiking Neural Networks (SNNs) excel in handling such data with high efficiency, owing to their rich neuronal dynamics and sparse activity patterns. Given the recent surge in the development of SNNs, there is an urgent need for a comprehensive evaluation of their temporal processing capabilities. In this paper, we first conduct an in-depth assessment of commonly used neuromorphic benchmarks, revealing critical limitations in their ability to evaluate the temporal processing capabilities of SNNs. To bridge this gap, we further introduce a benchmark suite consisting of three temporal processing tasks characterized by rich temporal dynamics across multiple timescales. Utilizing this benchmark suite, we perform a thorough evaluation of recently introduced SNN approaches to elucidate the current status of SNNs in temporal processing. Our findings indicate significant advancements in recently developed spiking neuron models and neural architectures regarding their temporal processing capabilities, while also highlighting a performance gap in handling long-range dependencies when compared to state-of-the-art non-spiking models. Finally, we discuss the key challenges and outline potential avenues for future research.

Towards Ultra-Low-Power Neuromorphic Speech Enhancement with Spiking-FullSubNet

Oct 07, 2024

Abstract:Speech enhancement is critical for improving speech intelligibility and quality in various audio devices. In recent years, deep learning-based methods have significantly improved speech enhancement performance, but they often come with a high computational cost, which is prohibitive for a large number of edge devices, such as headsets and hearing aids. This work proposes an ultra-low-power speech enhancement system based on the brain-inspired spiking neural network (SNN) called Spiking-FullSubNet. Spiking-FullSubNet follows a full-band and sub-band fusioned approach to effectively capture both global and local spectral information. To enhance the efficiency of computationally expensive sub-band modeling, we introduce a frequency partitioning method inspired by the sensitivity profile of the human peripheral auditory system. Furthermore, we introduce a novel spiking neuron model that can dynamically control the input information integration and forgetting, enhancing the multi-scale temporal processing capability of SNN, which is critical for speech denoising. Experiments conducted on the recent Intel Neuromorphic Deep Noise Suppression (N-DNS) Challenge dataset show that the Spiking-FullSubNet surpasses state-of-the-art methods by large margins in terms of both speech quality and energy efficiency metrics. Notably, our system won the championship of the Intel N-DNS Challenge (Algorithmic Track), opening up a myriad of opportunities for ultra-low-power speech enhancement at the edge. Our source code and model checkpoints are publicly available at https://github.com/haoxiangsnr/spiking-fullsubnet.

PMSN: A Parallel Multi-compartment Spiking Neuron for Multi-scale Temporal Processing

Aug 27, 2024

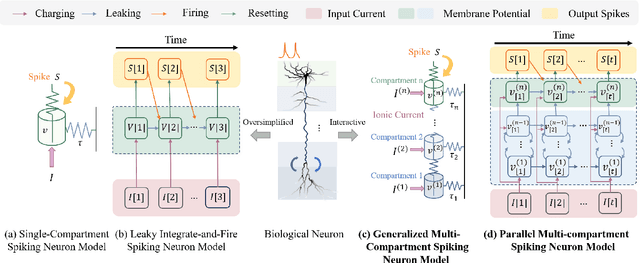

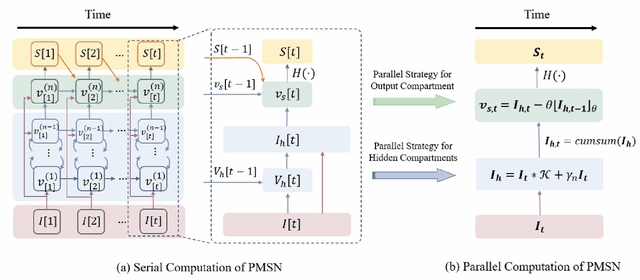

Abstract:Spiking Neural Networks (SNNs) hold great potential to realize brain-inspired, energy-efficient computational systems. However, current SNNs still fall short in terms of multi-scale temporal processing compared to their biological counterparts. This limitation has resulted in poor performance in many pattern recognition tasks with information that varies across different timescales. To address this issue, we put forward a novel spiking neuron model called Parallel Multi-compartment Spiking Neuron (PMSN). The PMSN emulates biological neurons by incorporating multiple interacting substructures and allows for flexible adjustment of the substructure counts to effectively represent temporal information across diverse timescales. Additionally, to address the computational burden associated with the increased complexity of the proposed model, we introduce two parallelization techniques that decouple the temporal dependencies of neuronal updates, enabling parallelized training across different time steps. Our experimental results on a wide range of pattern recognition tasks demonstrate the superiority of PMSN. It outperforms other state-of-the-art spiking neuron models in terms of its temporal processing capacity, training speed, and computation cost. Specifically, compared with the commonly used Leaky Integrate-and-Fire neuron, PMSN offers a simulation acceleration of over 10 $\times$ and a 30 % improvement in accuracy on Sequential CIFAR10 dataset, while maintaining comparable computational cost.

Scaling Supervised Local Learning with Augmented Auxiliary Networks

Feb 27, 2024Abstract:Deep neural networks are typically trained using global error signals that backpropagate (BP) end-to-end, which is not only biologically implausible but also suffers from the update locking problem and requires huge memory consumption. Local learning, which updates each layer independently with a gradient-isolated auxiliary network, offers a promising alternative to address the above problems. However, existing local learning methods are confronted with a large accuracy gap with the BP counterpart, particularly for large-scale networks. This is due to the weak coupling between local layers and their subsequent network layers, as there is no gradient communication across layers. To tackle this issue, we put forward an augmented local learning method, dubbed AugLocal. AugLocal constructs each hidden layer's auxiliary network by uniformly selecting a small subset of layers from its subsequent network layers to enhance their synergy. We also propose to linearly reduce the depth of auxiliary networks as the hidden layer goes deeper, ensuring sufficient network capacity while reducing the computational cost of auxiliary networks. Our extensive experiments on four image classification datasets (i.e., CIFAR-10, SVHN, STL-10, and ImageNet) demonstrate that AugLocal can effectively scale up to tens of local layers with a comparable accuracy to BP-trained networks while reducing GPU memory usage by around 40%. The proposed AugLocal method, therefore, opens up a myriad of opportunities for training high-performance deep neural networks on resource-constrained platforms.Code is available at https://github.com/ChenxiangMA/AugLocal.

Efficient Online Learning for Networks of Two-Compartment Spiking Neurons

Feb 25, 2024Abstract:The brain-inspired Spiking Neural Networks (SNNs) have garnered considerable research interest due to their superior performance and energy efficiency in processing temporal signals. Recently, a novel multi-compartment spiking neuron model, namely the Two-Compartment LIF (TC-LIF) model, has been proposed and exhibited a remarkable capacity for sequential modelling. However, training the TC-LIF model presents challenges stemming from the large memory consumption and the issue of gradient vanishing associated with the Backpropagation Through Time (BPTT) algorithm. To address these challenges, online learning methodologies emerge as a promising solution. Yet, to date, the application of online learning methods in SNNs has been predominantly confined to simplified Leaky Integrate-and-Fire (LIF) neuron models. In this paper, we present a novel online learning method specifically tailored for networks of TC-LIF neurons. Additionally, we propose a refined TC-LIF neuron model called Adaptive TC-LIF, which is carefully designed to enhance temporal information integration in online learning scenarios. Extensive experiments, conducted on various sequential benchmarks, demonstrate that our approach successfully preserves the superior sequential modeling capabilities of the TC-LIF neuron while incorporating the training efficiency and hardware friendliness of online learning. As a result, it offers a multitude of opportunities to leverage neuromorphic solutions for processing temporal signals.

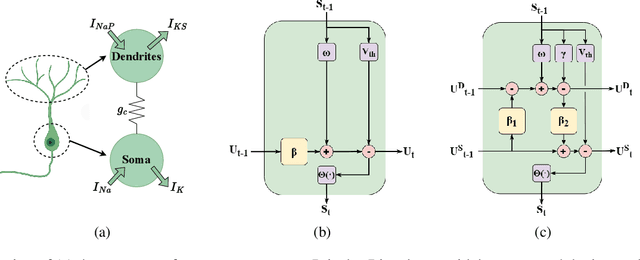

TC-LIF: A Two-Compartment Spiking Neuron Model for Long-term Sequential Modelling

Aug 25, 2023

Abstract:The identification of sensory cues associated with potential opportunities and dangers is frequently complicated by unrelated events that separate useful cues by long delays. As a result, it remains a challenging task for state-of-the-art spiking neural networks (SNNs) to establish long-term temporal dependency between distant cues. To address this challenge, we propose a novel biologically inspired Two-Compartment Leaky Integrate-and-Fire spiking neuron model, dubbed TC-LIF. The proposed model incorporates carefully designed somatic and dendritic compartments that are tailored to facilitate learning long-term temporal dependencies. Furthermore, a theoretical analysis is provided to validate the effectiveness of TC-LIF in propagating error gradients over an extended temporal duration. Our experimental results, on a diverse range of temporal classification tasks, demonstrate superior temporal classification capability, rapid training convergence, and high energy efficiency of the proposed TC-LIF model. Therefore, this work opens up a myriad of opportunities for solving challenging temporal processing tasks on emerging neuromorphic computing systems.

Long Short-term Memory with Two-Compartment Spiking Neuron

Jul 14, 2023Abstract:The identification of sensory cues associated with potential opportunities and dangers is frequently complicated by unrelated events that separate useful cues by long delays. As a result, it remains a challenging task for state-of-the-art spiking neural networks (SNNs) to identify long-term temporal dependencies since bridging the temporal gap necessitates an extended memory capacity. To address this challenge, we propose a novel biologically inspired Long Short-Term Memory Leaky Integrate-and-Fire spiking neuron model, dubbed LSTM-LIF. Our model incorporates carefully designed somatic and dendritic compartments that are tailored to retain short- and long-term memories. The theoretical analysis further confirms its effectiveness in addressing the notorious vanishing gradient problem. Our experimental results, on a diverse range of temporal classification tasks, demonstrate superior temporal classification capability, rapid training convergence, strong network generalizability, and high energy efficiency of the proposed LSTM-LIF model. This work, therefore, opens up a myriad of opportunities for resolving challenging temporal processing tasks on emerging neuromorphic computing machines.

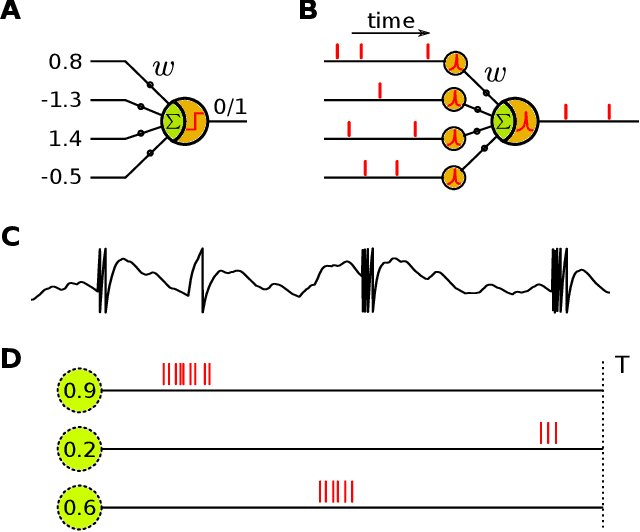

Synaptic Learning with Augmented Spikes

May 11, 2020

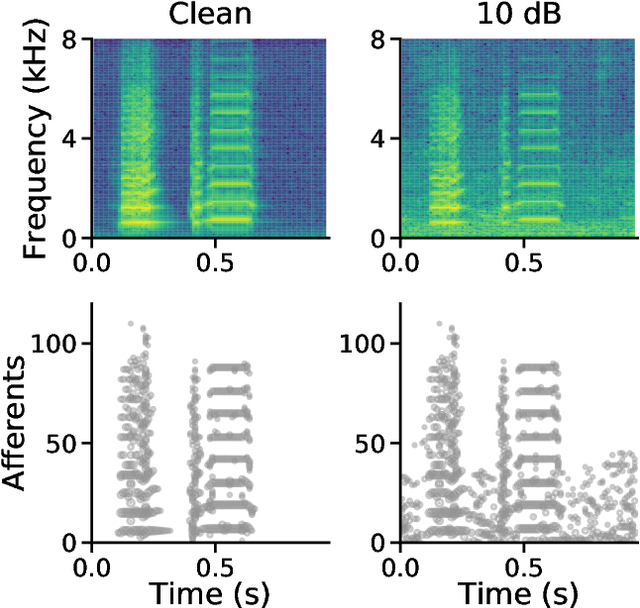

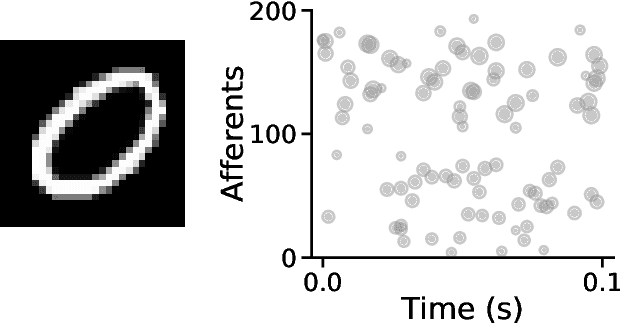

Abstract:Traditional neuron models use analog values for information representation and computation, while all-or-nothing spikes are employed in the spiking ones. With a more brain-like processing paradigm, spiking neurons are more promising for improvements on efficiency and computational capability. They extend the computation of traditional neurons with an additional dimension of time carried by all-or-nothing spikes. Could one benefit from both the accuracy of analog values and the time-processing capability of spikes? In this paper, we introduce a concept of augmented spikes to carry complementary information with spike coefficients in addition to spike latencies. New augmented spiking neuron model and synaptic learning rules are proposed to process and learn patterns of augmented spikes. We provide systematic insight into the properties and characteristics of our methods, including classification of augmented spike patterns, learning capacity, construction of causality, feature detection, robustness and applicability to practical tasks such as acoustic and visual pattern recognition. The remarkable results highlight the effectiveness and potential merits of our methods. Importantly, our augmented approaches are versatile and can be easily generalized to other spike-based systems, contributing to a potential development for them including neuromorphic computing.

Constructing Accurate and Efficient Deep Spiking Neural Networks with Double-threshold and Augmented Schemes

May 05, 2020

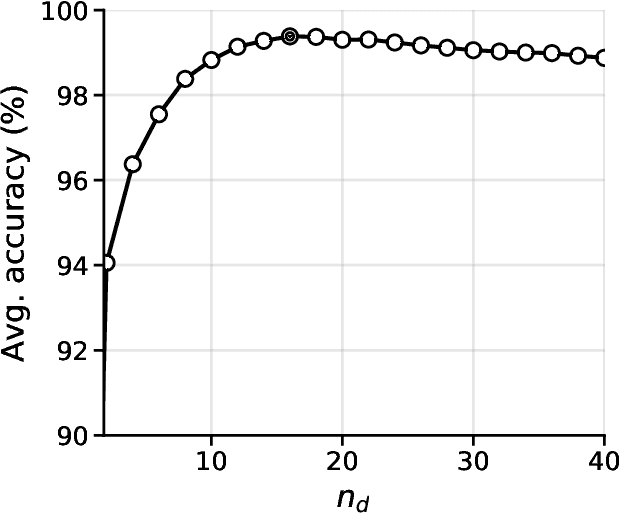

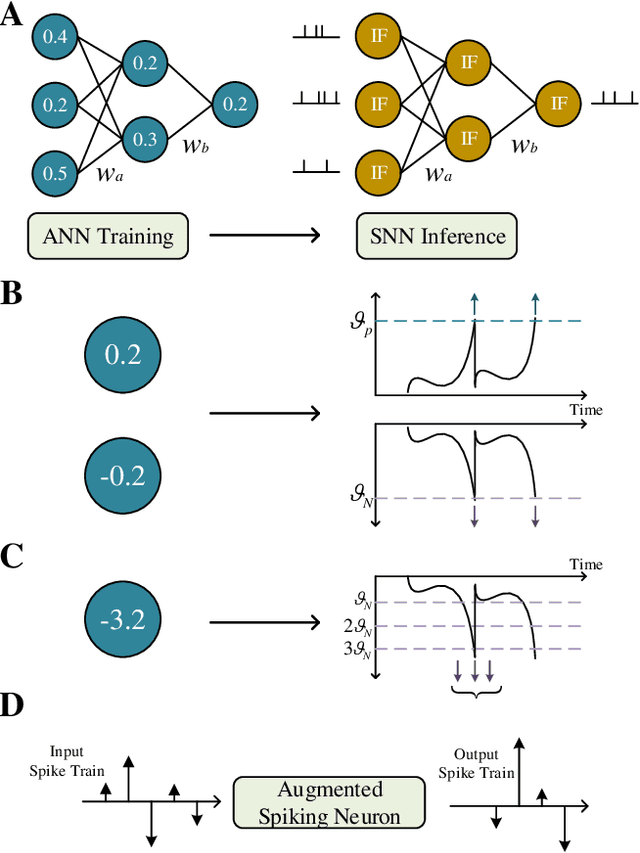

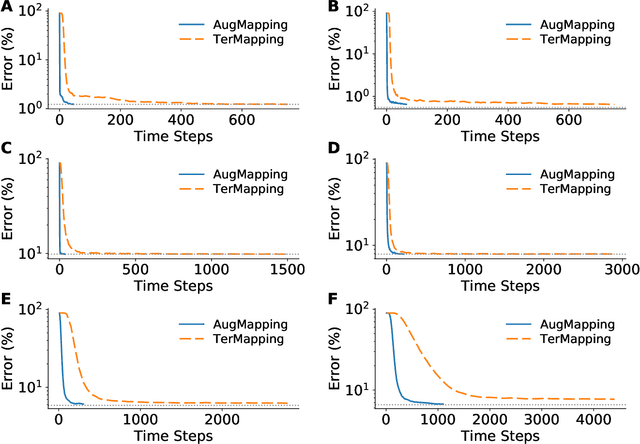

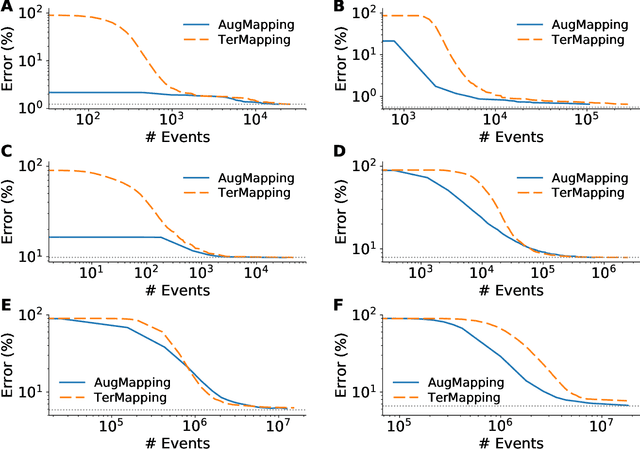

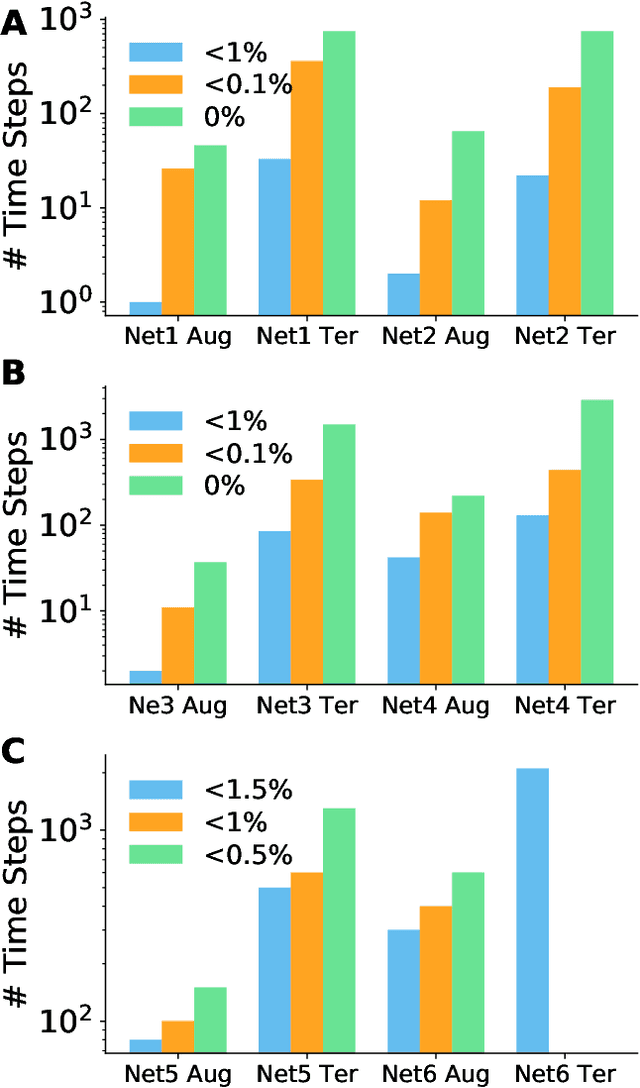

Abstract:Spiking neural networks (SNNs) are considered as a potential candidate to overcome current challenges such as the high-power consumption encountered by artificial neural networks (ANNs), however there is still a gap between them with respect to the recognition accuracy on practical tasks. A conversion strategy was thus introduced recently to bridge this gap by mapping a trained ANN to an SNN. However, it is still unclear that to what extent this obtained SNN can benefit both the accuracy advantage from ANN and high efficiency from the spike-based paradigm of computation. In this paper, we propose two new conversion methods, namely TerMapping and AugMapping. The TerMapping is a straightforward extension of a typical threshold-balancing method with a double-threshold scheme, while the AugMapping additionally incorporates a new scheme of augmented spike that employs a spike coefficient to carry the number of typical all-or-nothing spikes occurring at a time step. We examine the performance of our methods based on MNIST, Fashion-MNIST and CIFAR10 datasets. The results show that the proposed double-threshold scheme can effectively improve accuracies of the converted SNNs. More importantly, the proposed AugMapping is more advantageous for constructing accurate, fast and efficient deep SNNs as compared to other state-of-the-art approaches. Our study therefore provides new approaches for further integration of advanced techniques in ANNs to improve the performance of SNNs, which could be of great merit to applied developments with spike-based neuromorphic computing.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge