Chengxin Li

Smark: A Watermark for Text-to-Speech Diffusion Models via Discrete Wavelet Transform

Dec 21, 2025

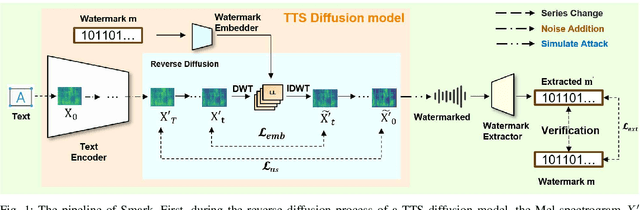

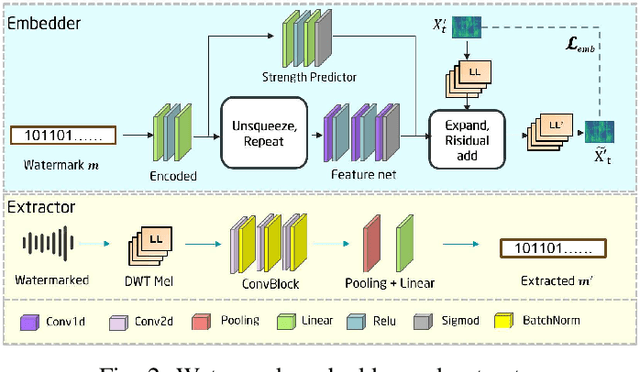

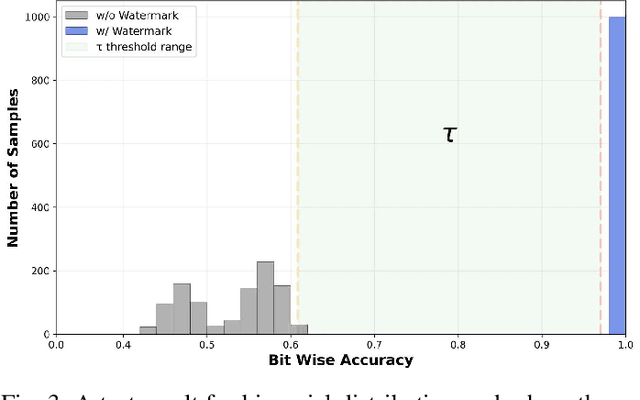

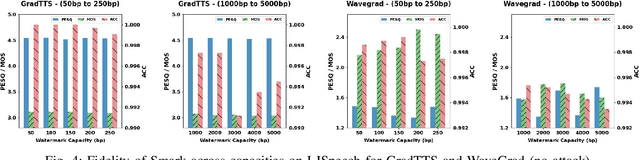

Abstract: Text-to-Speech (TTS) diffusion models generate high-quality speech, which raises challenges for protecting model intellectual property and tracing speech for legal use. Audio watermarking is a promising solution. However, because TTS diffusion models differ structurally, existing watermarking methods are often designed for a specific model and degrade audio quality, which limits their practical applicability. To address this dilemma, this paper proposes Smark, a universal watermarking scheme for TTS diffusion models. Smark is built on a lightweight watermark embedding framework that operates within the reverse diffusion paradigm shared by all TTS diffusion models. To mitigate the impact on audio quality, Smark uses the discrete wavelet transform (DWT) to embed watermarks into the relatively stable low-frequency regions of the audio, which ensures seamless watermark-audio integration and makes the watermark resistant to removal during the reverse diffusion process. Extensive experiments evaluate audio quality and watermark performance under various simulated real-world attack scenarios. The results show that Smark achieves superior performance in both audio quality and watermark extraction accuracy.
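To make the idea of embedding a watermark in the low-frequency DWT band concrete, here is a minimal, generic sketch. It is not Smark's method: Smark embeds during the reverse diffusion process, which this sketch does not model. The single-level Haar wavelet, the quantization index modulation (QIM) embedding rule, the step size `0.05`, and all function names are illustrative assumptions. The sketch decomposes a signal into approximation (low-frequency) and detail (high-frequency) coefficients, writes one bit into each of the first few approximation coefficients, and reconstructs the signal.

```python
import math

SQRT2 = math.sqrt(2.0)


def haar_dwt(x):
    """Single-level Haar DWT: returns (approximation, detail) coefficients."""
    assert len(x) % 2 == 0, "signal length must be even"
    approx = [(x[i] + x[i + 1]) / SQRT2 for i in range(0, len(x), 2)]
    detail = [(x[i] - x[i + 1]) / SQRT2 for i in range(0, len(x), 2)]
    return approx, detail


def haar_idwt(approx, detail):
    """Exact inverse of haar_dwt."""
    x = []
    for a, d in zip(approx, detail):
        x.append((a + d) / SQRT2)
        x.append((a - d) / SQRT2)
    return x


def qim_embed(c, bit, step):
    """Move c to the nearest point of the lattice for `bit` (QIM, assumed rule).

    Bit 0 uses the lattice {k * step}; bit 1 uses {k * step + step / 2}.
    """
    offset = step / 2 if bit else 0.0
    return step * round((c - offset) / step) + offset


def qim_extract(c, step):
    """Return the bit whose lattice lies closest to c."""
    d0 = abs(c - qim_embed(c, 0, step))
    d1 = abs(c - qim_embed(c, 1, step))
    return 0 if d0 <= d1 else 1


def embed_watermark(signal, bits, step=0.05):
    """Embed one bit per low-frequency (approximation) coefficient."""
    approx, detail = haar_dwt(signal)
    assert len(bits) <= len(approx), "not enough low-frequency coefficients"
    for i, b in enumerate(bits):
        approx[i] = qim_embed(approx[i], b, step)
    return haar_idwt(approx, detail)  # high-frequency band is left untouched


def extract_watermark(signal, n_bits, step=0.05):
    """Recover n_bits from the approximation band of a (possibly noisy) signal."""
    approx, _ = haar_dwt(signal)
    return [qim_extract(approx[i], step) for i in range(n_bits)]


if __name__ == "__main__":
    audio = [0.5 * math.sin(2 * math.pi * 5 * t / 64) for t in range(64)]
    payload = [1, 0, 1, 1, 0, 0, 1, 0]
    marked = embed_watermark(audio, payload)
    print(extract_watermark(marked, len(payload)))
```

In this toy setting each marked coefficient moves by at most `step / 2`, so the per-sample distortion is bounded by `step / (2 * sqrt(2))`, and any perturbation that shifts a coefficient by less than `step / 4` leaves the extracted bit unchanged. Real systems trade `step` off between imperceptibility and robustness.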

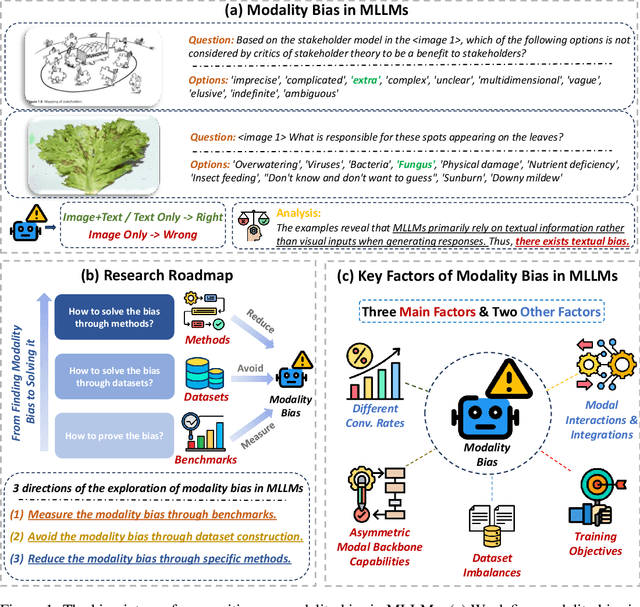

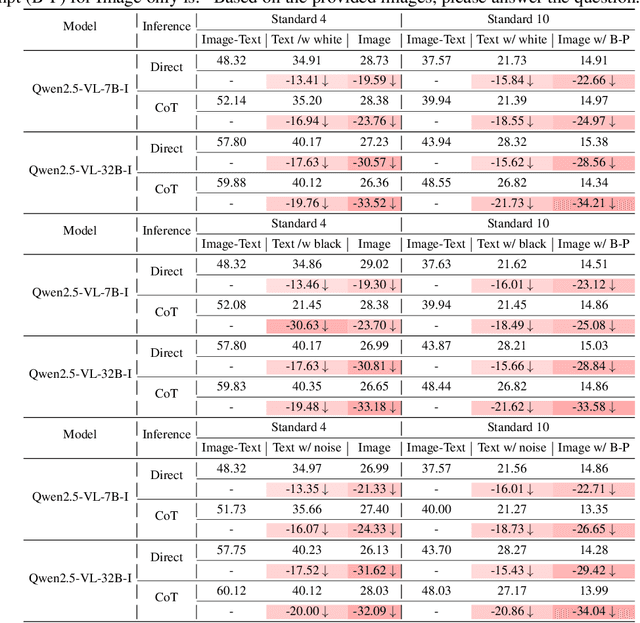

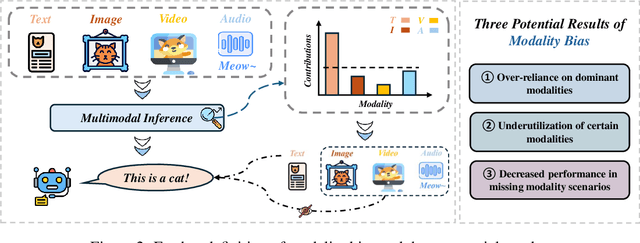

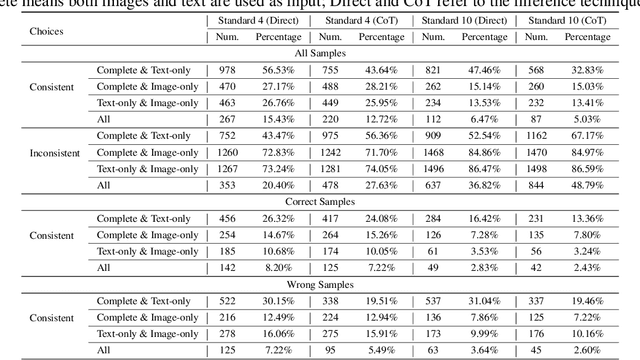

MLLMs are Deeply Affected by Modality Bias

May 24, 2025

Abstract: Recent advances in Multimodal Large Language Models (MLLMs) have shown promising results in integrating diverse modalities such as text and images. However, MLLMs are heavily influenced by modality bias: they often rely on language while under-utilizing other modalities such as visual inputs. This position paper argues that MLLMs are deeply affected by modality bias. First, we diagnose the current state of modality bias, highlighting its manifestations across various tasks. Second, we propose a systematic research roadmap related to modality bias in MLLMs. Third, we identify key factors of modality bias in MLLMs and offer actionable suggestions for future research to mitigate it. To substantiate these findings, we conduct experiments that demonstrate the influence of each factor: 1. Data Characteristics: language data is compact and abstract, while visual data is redundant and complex, creating an inherent imbalance in learning dynamics. 2. Imbalanced Backbone Capabilities: the dominance of pretrained language models in MLLMs leads to overreliance on language and neglect of visual information. 3. Training Objectives: current objectives often fail to promote balanced cross-modal alignment, resulting in shortcut learning biased toward language. These findings highlight the need for balanced training strategies and model architectures that better integrate multiple modalities in MLLMs. We call for interdisciplinary efforts to tackle these challenges and drive innovation in MLLM research. Our work provides a fresh perspective on modality bias in MLLMs and offers insights for developing more robust and generalizable multimodal systems, advancing progress toward Artificial General Intelligence.

Seamless Detection: Unifying Salient Object Detection and Camouflaged Object Detection

Dec 22, 2024

Abstract: Achieving joint learning of Salient Object Detection (SOD) and Camouflaged Object Detection (COD) is extremely challenging due to their distinct object characteristics, i.e., saliency and camouflage. The only existing preliminary work treats them as two contradictory tasks, training models on large-scale labeled data alternately for each task and assessing them independently. However, such task-specific mechanisms fail to meet the real-world demand of handling unknown tasks effectively. To address this issue, in this paper, we pioneer a task-agnostic framework to unify SOD and COD. To this end, inspired by the shared nature of SOD and COD as binary segmentation tasks, we propose a Contrastive Distillation Paradigm (CDP) that distils the foreground from the background, facilitating the identification of salient and camouflaged objects amid their surroundings. To examine the contribution of our CDP, we design a simple yet effective contextual decoder involving interval-layer and global context, which achieves an inference speed of 67 fps. Beyond the supervised setting, our CDP can be seamlessly integrated into unsupervised settings, eliminating the reliance on extensive human annotations. Experiments on public SOD and COD datasets demonstrate the superiority of our proposed framework in both supervised and unsupervised settings compared with existing state-of-the-art approaches. Code is available at https://github.com/liuyi1989/Seamless-Detection.

A Fuzzy Reinforcement LSTM-based Long-term Prediction Model for Fault Conditions in Nuclear Power Plants

Nov 13, 2024

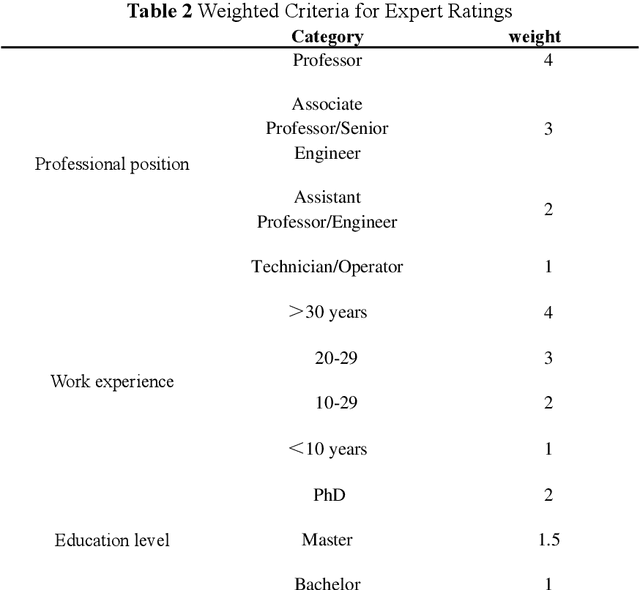

Abstract: Early fault detection and timely maintenance scheduling can significantly mitigate operational risks in nuclear power plants (NPPs) and enhance the reliability of operator decision-making. It is therefore necessary to develop an efficient multi-step Prognostics and Health Management (PHM) prediction model for predicting system health status and enabling prompt execution of maintenance operations. In this study, we propose a novel predictive model that integrates reinforcement learning with Long Short-Term Memory (LSTM) neural networks and the Expert Fuzzy Evaluation Method. The model is validated on parameter data for 20 different breach sizes under the Main Steam Line Break (MSLB) accident condition of the CPR1000 pressurized water reactor simulation model. It accurately forecasts NPP parameter changes up to 128 steps ahead (at a time interval of 10 seconds per step, i.e., 1280 seconds), thereby satisfying the lead-time requirement for fault prognostics in NPPs. Furthermore, this method provides an effective reference solution for PHM applications such as anomaly detection and remaining useful life prediction.
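The 128-step horizon (128 × 10 s = 1280 s) can be illustrated with a generic recursive multi-step forecasting loop, in which a one-step predictor is applied repeatedly and each prediction is fed back as input. This is a minimal sketch under stated assumptions, not the paper's model: the abstract does not say whether the forecasts are produced recursively, and `linear_trend_model` is a hypothetical stand-in for a trained LSTM. The window length and all function names are likewise illustrative.

```python
from collections import deque
from typing import Callable, List, Sequence

STEP_SECONDS = 10    # sampling interval stated in the abstract
HORIZON_STEPS = 128  # forecast horizon stated in the abstract


def recursive_forecast(history: Sequence[float],
                       one_step_model: Callable[[List[float]], float],
                       window: int,
                       steps: int = HORIZON_STEPS) -> List[float]:
    """Roll a one-step predictor forward `steps` times (assumed strategy)."""
    buf = deque(history[-window:], maxlen=window)  # sliding input window
    preds: List[float] = []
    for _ in range(steps):
        y = one_step_model(list(buf))
        preds.append(y)
        buf.append(y)  # feed the prediction back; oldest value drops out
    return preds


def linear_trend_model(window_values: List[float]) -> float:
    """Hypothetical stand-in for a trained LSTM: extrapolate the last difference."""
    return window_values[-1] + (window_values[-1] - window_values[-2])


if __name__ == "__main__":
    # Synthetic, steadily decreasing parameter trace (illustrative only).
    trace = [100.0 - 0.5 * t for t in range(20)]
    forecast = recursive_forecast(trace, linear_trend_model, window=8)
    print(len(forecast), "steps cover", HORIZON_STEPS * STEP_SECONDS, "seconds")
```

Because each step consumes earlier predictions, errors can accumulate over long horizons, which is one reason a 128-step lead time is a demanding target.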

Part-Whole Relational Fusion Towards Multi-Modal Scene Understanding

Oct 19, 2024

Abstract: Multi-modal fusion plays a vital role in multi-modal scene understanding. Most existing methods focus on cross-modal fusion involving two modalities, often overlooking more complex multi-modal fusion, which is essential for real-world applications such as autonomous driving, where visible, depth, event, LiDAR, and other modalities are used. Moreover, the few existing approaches to multi-modal fusion, e.g., simple concatenation, cross-modal attention, and token selection, cannot fully exploit the intrinsic shared and specific details of multiple modalities. To tackle this challenge, in this paper, we propose a Part-Whole Relational Fusion (PWRF) framework. For the first time, this framework treats multi-modal fusion as part-whole relational fusion. It routes multiple individual part-level modalities to a fused whole-level modality using the part-whole relational routing ability of Capsule Networks (CapsNets). Through this part-whole routing, PWRF generates modal-shared semantics from the whole-level modal capsules and modal-specific semantics from the routing coefficients. These modal-shared and modal-specific details can then be used for multi-modal scene understanding tasks, including, in this paper, synthetic multi-modal segmentation and visible-depth-thermal salient object detection. Experiments on several datasets demonstrate the superiority of the proposed PWRF framework for multi-modal scene understanding. The source code has been released at https://github.com/liuyi1989/PWRF.
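The part-whole routing that PWRF builds on can be illustrated with the standard routing-by-agreement procedure for capsule networks (dynamic routing, as introduced by Sabour et al., 2017). This is a generic sketch, not PWRF's exact routing: treating each modality as one part capsule, the number of whole capsules, and the three routing iterations are assumptions for illustration. The returned whole-level vectors `v` loosely correspond to what the abstract calls modal-shared semantics, and the coupling coefficients `c` to the routing coefficients from which modal-specific semantics are derived.

```python
import math


def squash(s):
    """Capsule non-linearity: keeps direction, maps the norm into [0, 1)."""
    norm_sq = sum(x * x for x in s)
    scale = norm_sq / (1.0 + norm_sq) / math.sqrt(norm_sq + 1e-9)
    return [scale * x for x in s]


def softmax(xs):
    m = max(xs)
    e = [math.exp(x - m) for x in xs]
    z = sum(e)
    return [v / z for v in e]


def dynamic_routing(u_hat, iterations=3):
    """Routing-by-agreement.

    u_hat[i][j] is part capsule i's prediction vector for whole capsule j
    (here, each part is assumed to be one modality). Returns the whole
    capsules v and the coupling coefficients c used in the last iteration.
    """
    n_parts, n_wholes = len(u_hat), len(u_hat[0])
    dim = len(u_hat[0][0])
    b = [[0.0] * n_wholes for _ in range(n_parts)]  # routing logits
    for _ in range(iterations):
        c = [softmax(row) for row in b]  # each part splits its vote over wholes
        v = []
        for j in range(n_wholes):
            s = [sum(c[i][j] * u_hat[i][j][k] for i in range(n_parts))
                 for k in range(dim)]
            v.append(squash(s))
        # Raise the logit where a part's prediction agrees with the whole.
        for i in range(n_parts):
            for j in range(n_wholes):
                b[i][j] += sum(u_hat[i][j][k] * v[j][k] for k in range(dim))
    return v, c


if __name__ == "__main__":
    # Three hypothetical modality capsules voting for two whole capsules.
    predictions = [
        [[1.0, 0.0], [0.0, 1.0]],
        [[1.0, 0.0], [0.0, -1.0]],
        [[1.0, 0.0], [1.0, 0.0]],
    ]
    wholes, coupling = dynamic_routing(predictions)
    print(wholes)
    print(coupling)
```

In this toy example all three parts agree on the first whole capsule, so routing shifts most of each part's coupling toward it; disagreement among parts, as for the second whole capsule, keeps its coupling low.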
