Bogdan Trasnea

ObserveNet Control: A Vision-Dynamics Learning Approach to Predictive Control in Autonomous Vehicles

Jul 19, 2021

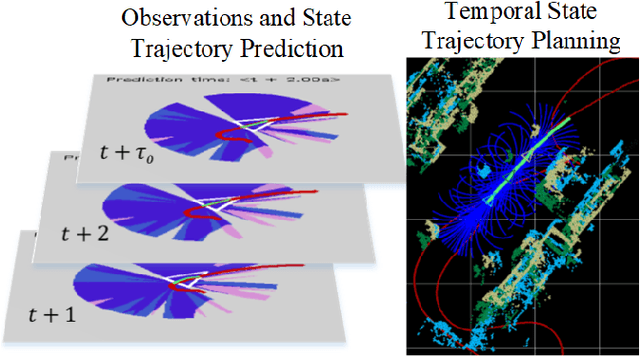

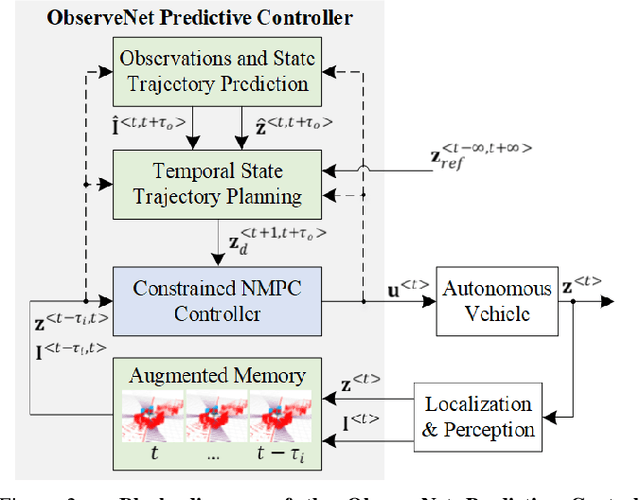

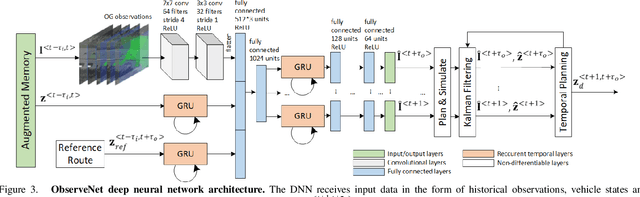

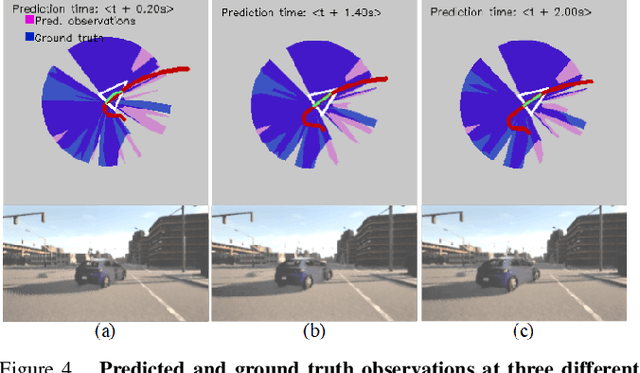

Abstract:A key component in autonomous driving is the ability of the self-driving car to understand, track and predict the dynamics of the surrounding environment. Although there is significant work in the area of object detection, tracking and observations prediction, there is no prior work demonstrating that raw observations prediction can be used for motion planning and control. In this paper, we propose ObserveNet Control, which is a vision-dynamics approach to the predictive control problem of autonomous vehicles. Our method is composed of a: i) deep neural network able to confidently predict future sensory data on a time horizon of up to 10s and ii) a temporal planner designed to compute a safe vehicle state trajectory based on the predicted sensory data. Given the vehicle's historical state and sensing data in the form of Lidar point clouds, the method aims to learn the dynamics of the observed driving environment in a self-supervised manner, without the need to manually specify training labels. The experiments are performed both in simulation and real-life, using CARLA and RovisLab's AMTU mobile platform as a 1:4 scaled model of a car. We evaluate the capabilities of ObserveNet Control in aggressive driving contexts, such as overtaking maneuvers or side cut-off situations, while comparing the results with a baseline Dynamic Window Approach (DWA) and two state-of-the-art imitation learning systems, that is, Learning by Cheating (LBC) and World on Rails (WOR).

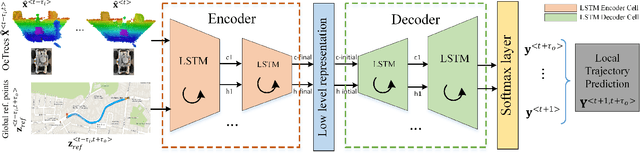

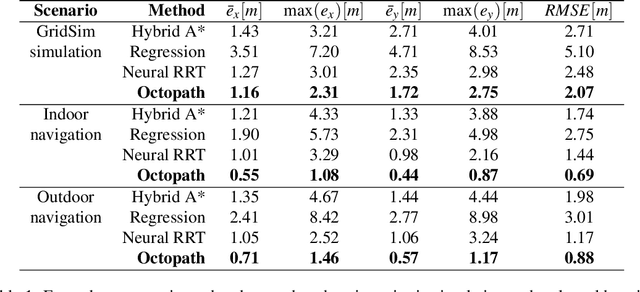

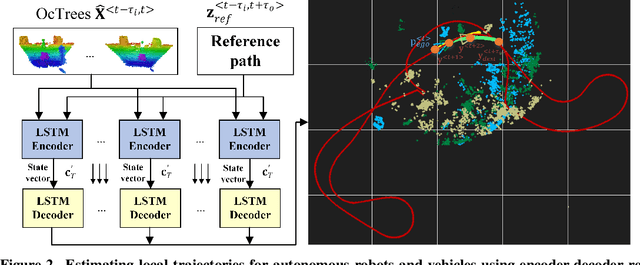

OctoPath: An OcTree Based Self-Supervised Learning Approach to Local Trajectory Planning for Mobile Robots

Jun 02, 2021

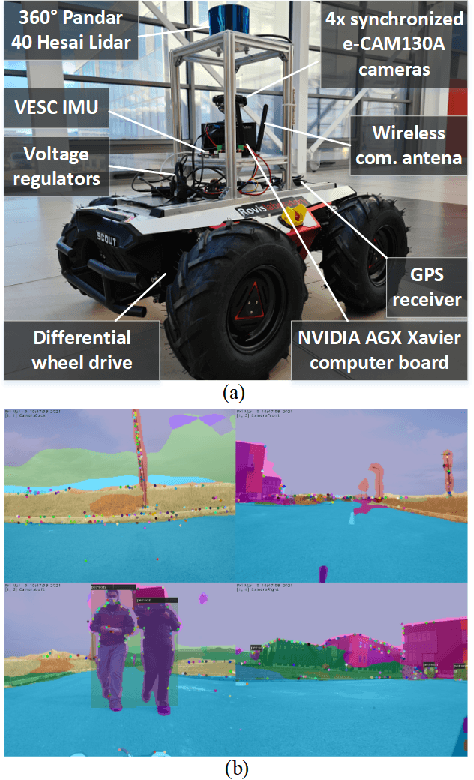

Abstract:Autonomous mobile robots are usually faced with challenging situations when driving in complex environments. Namely, they have to recognize the static and dynamic obstacles, plan the driving path and execute their motion. For addressing the issue of perception and path planning, in this paper, we introduce OctoPath , which is an encoder-decoder deep neural network, trained in a self-supervised manner to predict the local optimal trajectory for the ego-vehicle. Using the discretization provided by a 3D octree environment model, our approach reformulates trajectory prediction as a classification problem with a configurable resolution. During training, OctoPath minimizes the error between the predicted and the manually driven trajectories in a given training dataset. This allows us to avoid the pitfall of regression-based trajectory estimation, in which there is an infinite state space for the output trajectory points. Environment sensing is performed using a 40-channel mechanical LiDAR sensor, fused with an inertial measurement unit and wheels odometry for state estimation. The experiments are performed both in simulation and real-life, using our own developed GridSim simulator and RovisLab's Autonomous Mobile Test Unit platform. We evaluate the predictions of OctoPath in different driving scenarios, both indoor and outdoor, while benchmarking our system against a baseline hybrid A-Star algorithm and a regression-based supervised learning method, as well as against a CNN learning-based optimal path planning method.

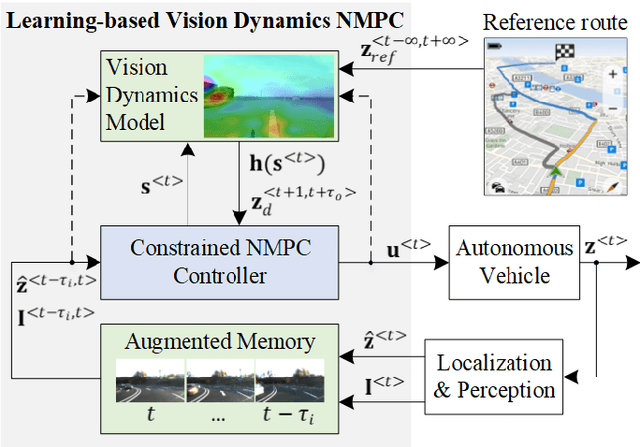

LVD-NMPC: A Learning-based Vision Dynamics Approach to Nonlinear Model Predictive Control for Autonomous Vehicles

May 27, 2021

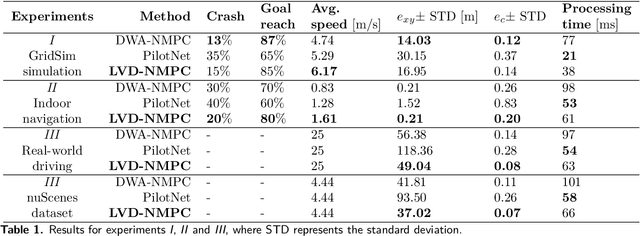

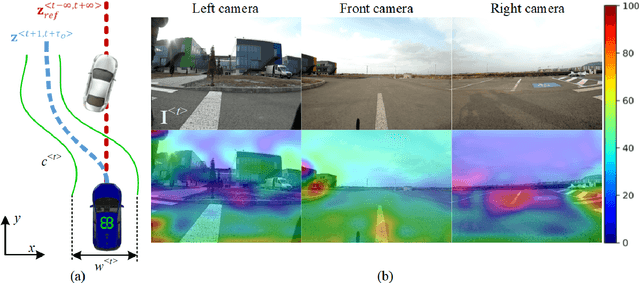

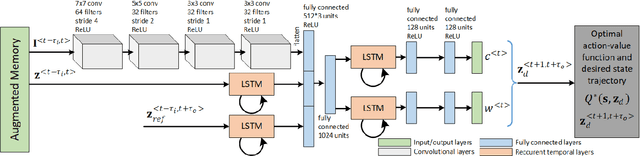

Abstract:In this paper, we introduce a learning-based vision dynamics approach to nonlinear model predictive control for autonomous vehicles, coined LVD-NMPC. LVD-NMPC uses an a-priori process model and a learned vision dynamics model used to calculate the dynamics of the driving scene, the controlled system's desired state trajectory and the weighting gains of the quadratic cost function optimized by a constrained predictive controller. The vision system is defined as a deep neural network designed to estimate the dynamics of the images scene. The input is based on historic sequences of sensory observations and vehicle states, integrated by an Augmented Memory component. Deep Q-Learning is used to train the deep network, which once trained can be used to also calculate the desired trajectory of the vehicle. We evaluate LVD-NMPC against a baseline Dynamic Window Approach (DWA) path planning executed using standard NMPC, as well as against the PilotNet neural network. Performance is measured in our simulation environment GridSim, on a real-world 1:8 scaled model car, as well as on a real size autonomous test vehicle and the nuScenes computer vision dataset.

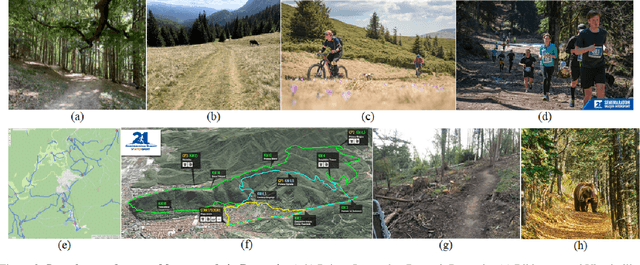

Embedded Vision for Self-Driving on Forest Roads

May 27, 2021

Abstract:Forest roads in Romania are unique natural wildlife sites used for recreation by countless tourists. In order to protect and maintain these roads, we propose RovisLab AMTU (Autonomous Mobile Test Unit), which is a robotic system designed to autonomously navigate off-road terrain and inspect if any deforestation or damage occurred along tracked route. AMTU's core component is its embedded vision module, optimized for real-time environment perception. For achieving a high computation speed, we use a learning system to train a multi-task Deep Neural Network (DNN) for scene and instance segmentation of objects, while the keypoints required for simultaneous localization and mapping are calculated using a handcrafted FAST feature detector and the Lucas-Kanade tracking algorithm. Both the DNN and the handcrafted backbone are run in parallel on the GPU of an NVIDIA AGX Xavier board. We show experimental results on the test track of our research facility.

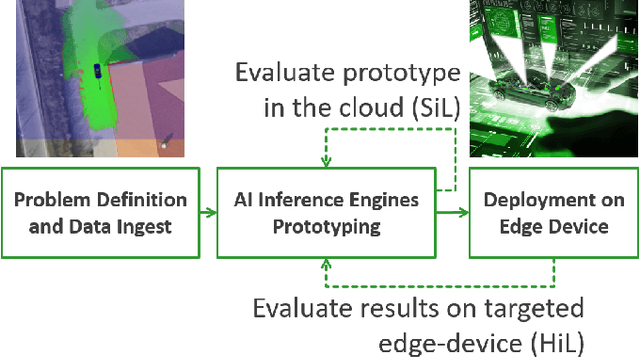

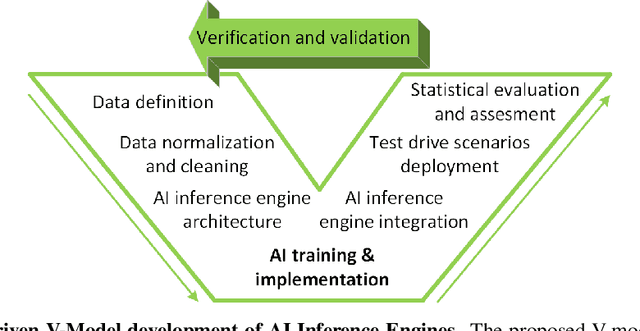

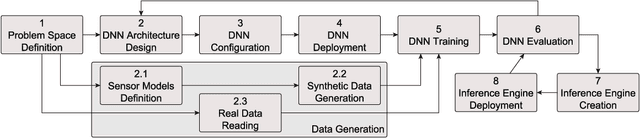

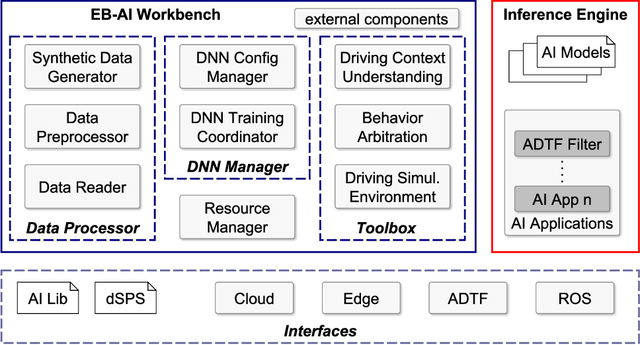

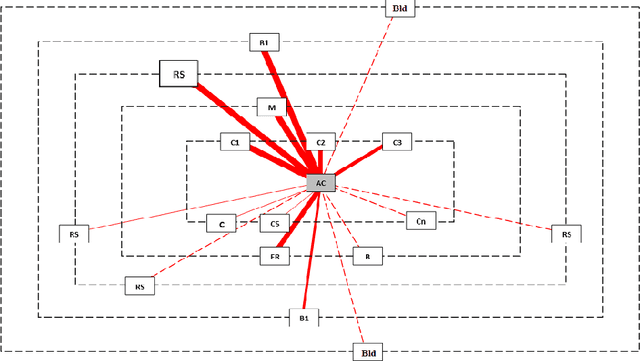

Cloud2Edge Elastic AI Framework for Prototyping and Deployment of AI Inference Engines in Autonomous Vehicles

Sep 23, 2020

Abstract:Self-driving cars and autonomous vehicles are revolutionizing the automotive sector, shaping the future of mobility altogether. Although the integration of novel technologies such as Artificial Intelligence (AI) and Cloud/Edge computing provides golden opportunities to improve autonomous driving applications, there is the need to modernize accordingly the whole prototyping and deployment cycle of AI components. This paper proposes a novel framework for developing so-called AI Inference Engines for autonomous driving applications based on deep learning modules, where training tasks are deployed elastically over both Cloud and Edge resources, with the purpose of reducing the required network bandwidth, as well as mitigating privacy issues. Based on our proposed data driven V-Model, we introduce a simple yet elegant solution for the AI components development cycle, where prototyping takes place in the cloud according to the Software-in-the-Loop (SiL) paradigm, while deployment and evaluation on the target ECUs (Electronic Control Units) is performed as Hardware-in-the-Loop (HiL) testing. The effectiveness of the proposed framework is demonstrated using two real-world use-cases of AI inference engines for autonomous vehicles, that is environment perception and most probable path prediction.

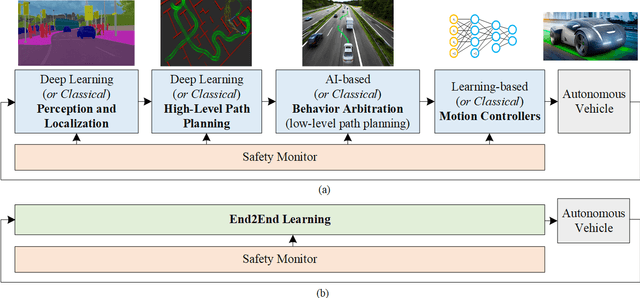

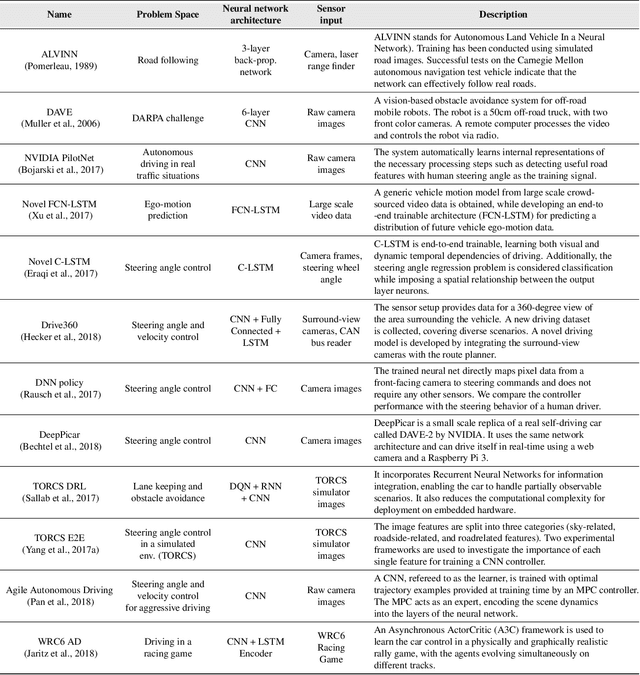

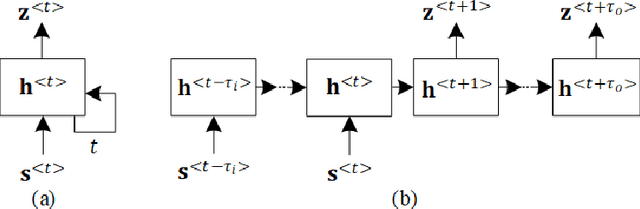

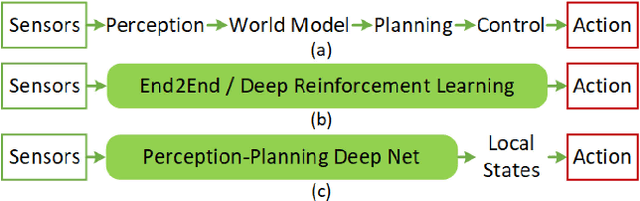

A Survey of Deep Learning Techniques for Autonomous Driving

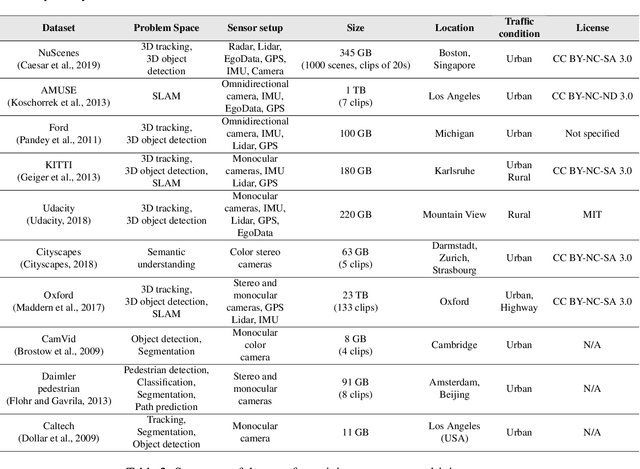

Oct 17, 2019

Abstract:The last decade witnessed increasingly rapid progress in self-driving vehicle technology, mainly backed up by advances in the area of deep learning and artificial intelligence. The objective of this paper is to survey the current state-of-the-art on deep learning technologies used in autonomous driving. We start by presenting AI-based self-driving architectures, convolutional and recurrent neural networks, as well as the deep reinforcement learning paradigm. These methodologies form a base for the surveyed driving scene perception, path planning, behavior arbitration and motion control algorithms. We investigate both the modular perception-planning-action pipeline, where each module is built using deep learning methods, as well as End2End systems, which directly map sensory information to steering commands. Additionally, we tackle current challenges encountered in designing AI architectures for autonomous driving, such as their safety, training data sources and computational hardware. The comparison presented in this survey helps to gain insight into the strengths and limitations of deep learning and AI approaches for autonomous driving and assist with design choices

* 38 pages, 7 figures

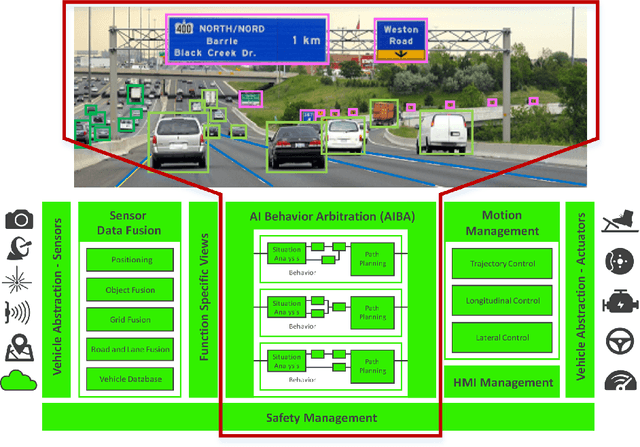

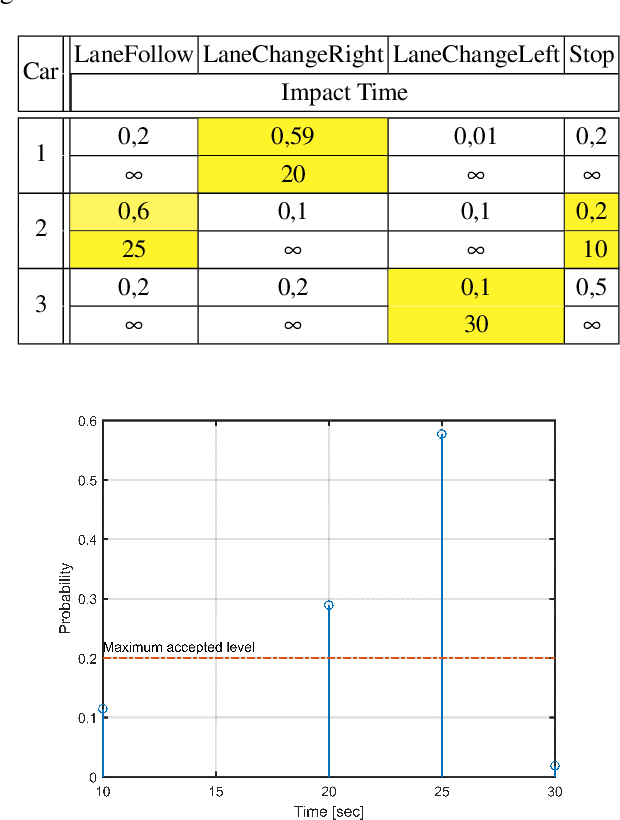

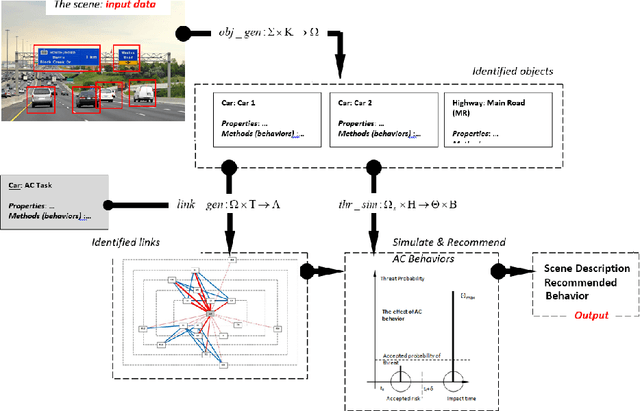

AIBA: An AI Model for Behavior Arbitration in Autonomous Driving

Sep 20, 2019

Abstract:Driving in dynamically changing traffic is a highly challenging task for autonomous vehicles, especially in crowded urban roadways. The Artificial Intelligence (AI) system of a driverless car must be able to arbitrate between different driving strategies in order to properly plan the car's path, based on an understandable traffic scene model. In this paper, an AI behavior arbitration algorithm for Autonomous Driving (AD) is proposed. The method, coined AIBA (AI Behavior Arbitration), has been developed in two stages: (i) human driving scene description and understanding and (ii) formal modelling. The description of the scene is achieved by mimicking a human cognition model, while the modelling part is based on a formal representation which approximates the human driver understanding process. The advantage of the formal representation is that the functional safety of the system can be analytically inferred. The performance of the algorithm has been evaluated in Virtual Test Drive (VTD), a comprehensive traffic simulator, and in GridSim, a vehicle kinematics engine for prototypes.

* 12 pages

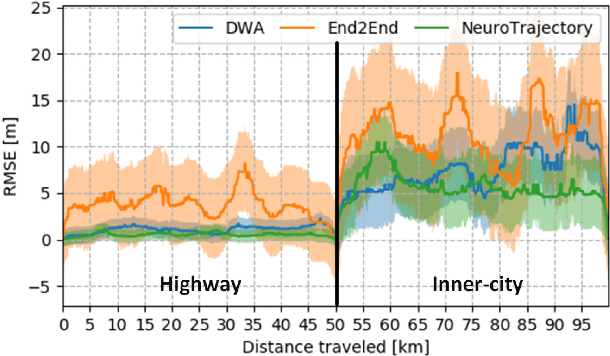

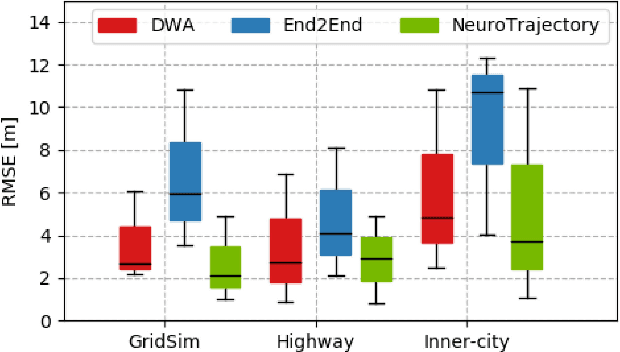

NeuroTrajectory: A Neuroevolutionary Approach to Local State Trajectory Learning for Autonomous Vehicles

Jun 26, 2019

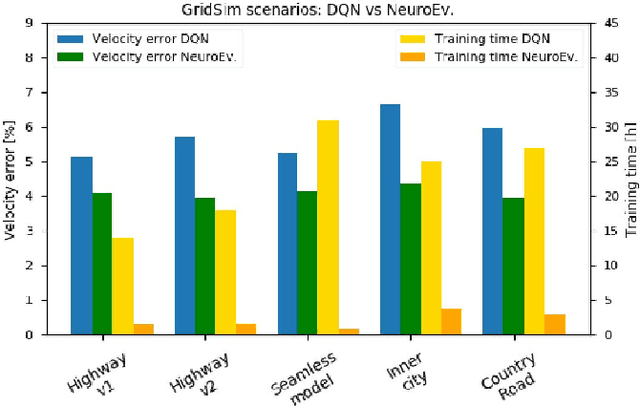

Abstract:Autonomous vehicles are controlled today either based on sequences of decoupled perception-planning-action operations, either based on End2End or Deep Reinforcement Learning (DRL) systems. Current deep learning solutions for autonomous driving are subject to several limitations (e.g. they estimate driving actions through a direct mapping of sensors to actuators, or require complex reward shaping methods). Although the cost function used for training can aggregate multiple weighted objectives, the gradient descent step is computed by the backpropagation algorithm using a single-objective loss. To address these issues, we introduce NeuroTrajectory, which is a multi-objective neuroevolutionary approach to local state trajectory learning for autonomous driving, where the desired state trajectory of the ego-vehicle is estimated over a finite prediction horizon by a perception-planning deep neural network. In comparison to DRL methods, which predict optimal actions for the upcoming sampling time, we estimate a sequence of optimal states that can be used for motion control. We propose an approach which uses genetic algorithms for training a population of deep neural networks, where each network individual is evaluated based on a multi-objective fitness vector, with the purpose of establishing a so-called Pareto front of optimal deep neural networks. The performance of an individual is given by a fitness vector composed of three elements. Each element describes the vehicle's travel path, lateral velocity and longitudinal speed, respectively. The same network structure can be trained on synthetic, as well as on real-world data sequences. We have benchmarked our system against a baseline Dynamic Window Approach (DWA), as well as against an End2End supervised learning method.

Deep Grid Net : A Deep Learning System for Real-Time Driving Context Understanding

Jan 16, 2019

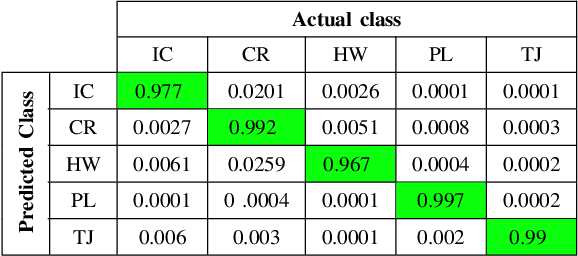

Abstract:Grid maps obtained from fused sensory information are nowadays among the most popular approaches for motion planning for autonomous driving cars. In this paper, we introduce Deep Grid Net (DGN), a deep learning (DL) system designed for understanding the context in which an autonomous car is driving. DGN incorporates a learned driving environment representation based on Occupancy Grids (OG) obtained from raw Lidar data and constructed on top of the Dempster-Shafer (DS) theory. The predicted driving context is further used for switching between different driving strategies implemented within EB robinos, Elektrobit's Autonomous Driving (AD) software platform. Based on genetic algorithms (GAs), we also propose a neuroevolutionary approach for learning the tuning hyperparameters of DGN. The performance of the proposed deep network has been evaluated against similar competing driving context estimation classifiers.

GridSim: A Vehicle Kinematics Engine for Deep Neuroevolutionary Control in Autonomous Driving

Jan 16, 2019

Abstract:Current state of the art solutions in the control of an autonomous vehicle mainly use supervised end-to-end learning, or decoupled perception, planning and action pipelines. Another possible solution is deep reinforcement learning, but such a method requires that the agent interacts with its surroundings in a simulated environment. In this paper we introduce GridSim, which is an autonomous driving simulator engine running a car-like robot architecture to generate occupancy grids from simulated sensors. We use GridSim to study the performance of two deep learning approaches, deep reinforcement learning and driving behavioral learning through genetic algorithms. The deep network encodes the desired behavior in a two elements fitness function describing a maximum travel distance and a maximum forward speed, bounded to a specific interval. The algorithms are evaluated on simulated highways, curved roads and inner-city scenarios, all including different driving limitations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge