Claudiu Pozna

OctoPath: An OcTree Based Self-Supervised Learning Approach to Local Trajectory Planning for Mobile Robots

Jun 02, 2021

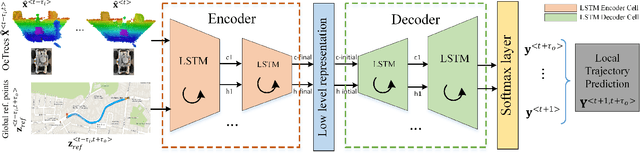

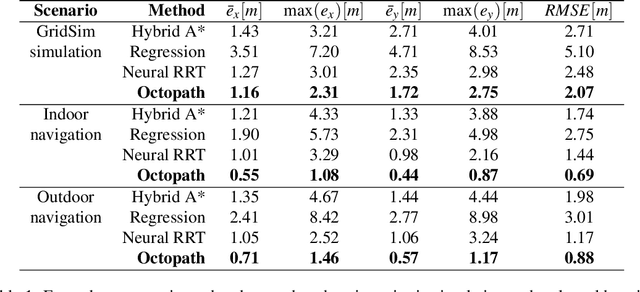

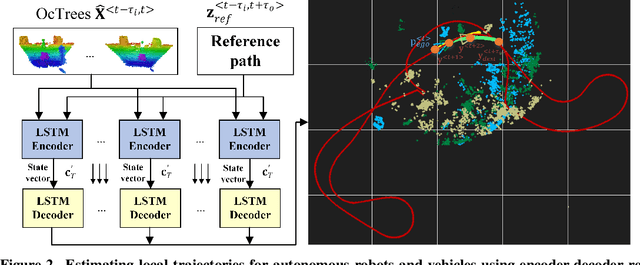

Abstract: Autonomous mobile robots are usually faced with challenging situations when driving in complex environments. Namely, they have to recognize static and dynamic obstacles, plan the driving path, and execute their motion. To address perception and path planning, in this paper we introduce OctoPath, an encoder-decoder deep neural network trained in a self-supervised manner to predict the locally optimal trajectory for the ego-vehicle. Using the discretization provided by a 3D octree environment model, our approach reformulates trajectory prediction as a classification problem with a configurable resolution. During training, OctoPath minimizes the error between the predicted and the manually driven trajectories in a given training dataset. This allows us to avoid the pitfall of regression-based trajectory estimation, in which there is an infinite state space for the output trajectory points. Environment sensing is performed using a 40-channel mechanical LiDAR sensor, fused with an inertial measurement unit and wheel odometry for state estimation. The experiments are performed both in simulation and in real life, using our own GridSim simulator and RovisLab's Autonomous Mobile Test Unit platform. We evaluate the predictions of OctoPath in different driving scenarios, both indoor and outdoor, benchmarking our system against a baseline hybrid A-Star algorithm, a regression-based supervised learning method, and a CNN learning-based optimal path planning method.
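The reformulation of trajectory prediction as classification can be illustrated with a small sketch. It is not the OctoPath implementation: it assumes a flat 2D grid instead of the 3D octree, and the names `point_to_class`, `class_to_point`, `resolution`, and `half_extent` are hypothetical. The idea shown is only that each continuous trajectory point maps to one of a finite number of cells, so a network can output a class index per point rather than regress coordinates.

```python
import math

# Illustrative 2D simplification (assumption): the paper uses a 3D octree,
# while this sketch uses a square grid centred on the ego-vehicle.

def point_to_class(x, y, resolution=0.5, half_extent=5.0):
    """Map a continuous (x, y) point in the ego frame to a cell class index."""
    cells_per_side = int(round(2 * half_extent / resolution))
    col = int(math.floor((x + half_extent) / resolution))
    row = int(math.floor((y + half_extent) / resolution))
    # Clamp points that fall outside the grid onto the border cells.
    col = min(max(col, 0), cells_per_side - 1)
    row = min(max(row, 0), cells_per_side - 1)
    return row * cells_per_side + col

def class_to_point(index, resolution=0.5, half_extent=5.0):
    """Recover the centre of the cell that a class index refers to."""
    cells_per_side = int(round(2 * half_extent / resolution))
    row, col = divmod(index, cells_per_side)
    x = (col + 0.5) * resolution - half_extent
    y = (row + 0.5) * resolution - half_extent
    return x, y

# A manually driven trajectory becomes a sequence of class labels.
driven = [(0.1, 0.1), (0.4, 0.9), (0.6, 1.7)]
labels = [point_to_class(x, y) for x, y in driven]
```

A smaller `resolution` gives more classes and a finer trajectory at the cost of a larger output layer, which is one way to read the "configurable resolution" mentioned in the abstract.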

GridSim: A Vehicle Kinematics Engine for Deep Neuroevolutionary Control in Autonomous Driving

Jan 16, 2019

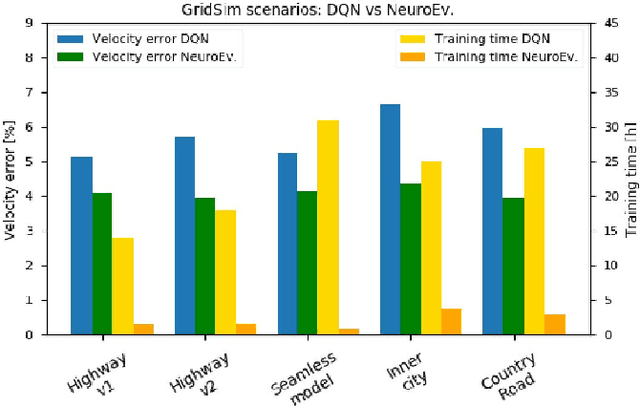

Abstract: Current state-of-the-art solutions for controlling an autonomous vehicle mainly use supervised end-to-end learning, or decoupled perception, planning and action pipelines. Another possible solution is deep reinforcement learning, but such a method requires that the agent interact with its surroundings in a simulated environment. In this paper we introduce GridSim, an autonomous driving simulator engine that runs a car-like robot architecture to generate occupancy grids from simulated sensors. We use GridSim to study the performance of two deep learning approaches: deep reinforcement learning and driving behavioral learning through genetic algorithms. The deep network encodes the desired behavior in a two-element fitness function describing a maximum travel distance and a maximum forward speed, bounded to a specific interval. The algorithms are evaluated on simulated highways, curved roads and inner-city scenarios, all including different driving limitations.
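A two-element fitness function of the kind described can be sketched as follows. The abstract does not give the formula, so the weighted sum, the clamping of the speed term, and every name and default value here (`fitness`, `speed_min`, `speed_max`, `w_distance`, `w_speed`) are assumptions made purely for illustration.

```python
def fitness(distance_travelled, mean_forward_speed,
            speed_min=0.0, speed_max=20.0,
            w_distance=1.0, w_speed=1.0):
    """Score one simulated episode from distance and bounded forward speed.

    Assumption: the two elements are combined as a weighted sum, and the
    speed term is clamped to [speed_min, speed_max].
    """
    bounded_speed = min(max(mean_forward_speed, speed_min), speed_max)
    return w_distance * distance_travelled + w_speed * bounded_speed

# Speeds above the upper bound earn no additional reward.
score_fast = fitness(100.0, 25.0)
score_limit = fitness(100.0, 20.0)
```

In a genetic algorithm, a score like this would rank candidate network weights after each simulated episode, with the bound discouraging individuals that gain fitness only by driving faster than allowed.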
