Bencheng Yan

From Scaling to Structured Expressivity: Rethinking Transformers for CTR Prediction

Nov 15, 2025

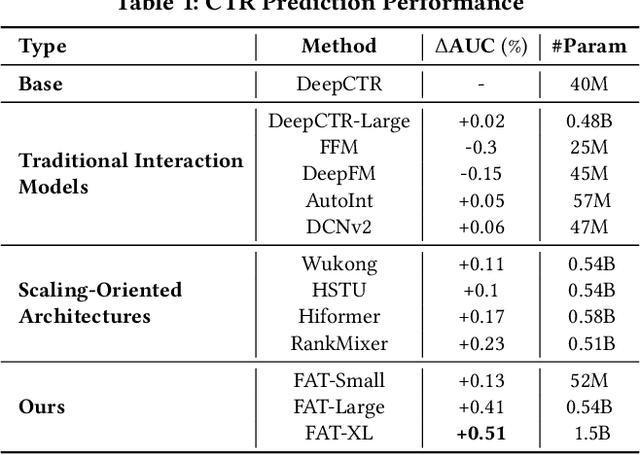

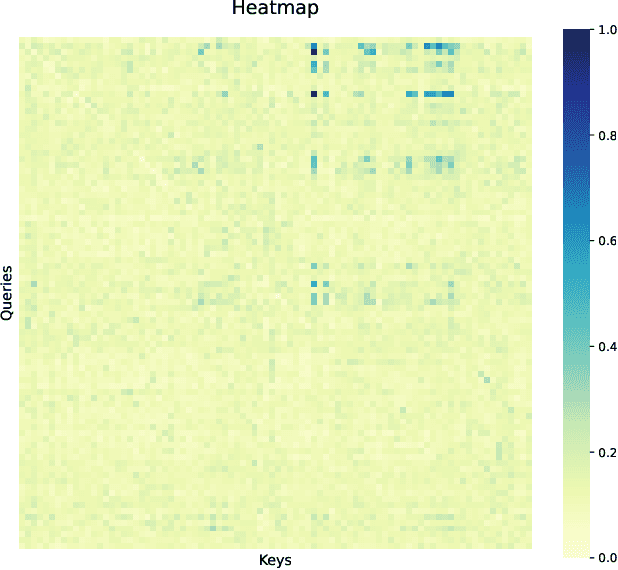

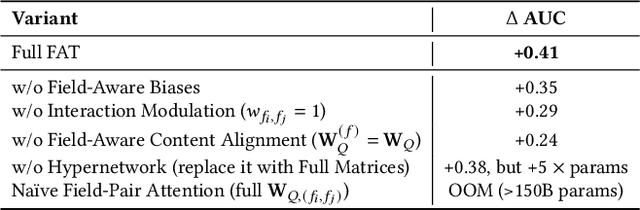

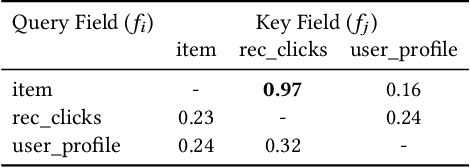

Abstract: Despite massive investments in scale, deep models for click-through rate (CTR) prediction often exhibit rapidly diminishing returns, in stark contrast to the smooth, predictable gains seen in large language models. We identify the root cause as a structural misalignment: Transformers assume sequential compositionality, while CTR data demand combinatorial reasoning over high-cardinality semantic fields. Unstructured attention spreads capacity indiscriminately, amplifying noise under extreme sparsity and breaking scalable learning. To restore alignment, we introduce the Field-Aware Transformer (FAT), which embeds field-based interaction priors into attention through decomposed content alignment and cross-field modulation. This design ensures model complexity scales with the number of fields F, not the total vocabulary size n >> F, leading to tighter generalization and, critically, observed power-law scaling in AUC as model width increases. We present the first formal scaling law for CTR models, grounded in Rademacher complexity, that explains and predicts this behavior. On large-scale benchmarks, FAT improves AUC by up to +0.51% over state-of-the-art methods. Deployed online, it delivers +2.33% CTR and +0.66% RPM. Our work establishes that effective scaling in recommendation arises not from size, but from structured expressivity: architectural coherence with data semantics.

UQABench: Evaluating User Embedding for Prompting LLMs in Personalized Question Answering

Feb 26, 2025

Abstract: Large language models (LLMs) achieve remarkable success in natural language processing (NLP). In practical scenarios like recommendations, as users increasingly seek personalized experiences, it becomes crucial to incorporate user interaction history into the context of LLMs to enhance personalization. However, from a practical utility perspective, the extensive length and noise of user interactions present challenges when they are used directly as text prompts. A promising solution is to compress and distill interactions into compact embeddings, which serve as soft prompts to assist LLMs in generating personalized responses. Although this approach brings efficiency, a critical concern emerges: can user embeddings adequately capture valuable information and prompt LLMs? To address this concern, we propose UQABench, a benchmark designed to evaluate the effectiveness of user embeddings in prompting LLMs for personalization. We establish a fair and standardized evaluation process, encompassing pre-training, fine-tuning, and evaluation stages. To thoroughly evaluate user embeddings, we design three dimensions of tasks: sequence understanding, action prediction, and interest perception. These evaluation tasks cover the industry's demands in traditional recommendation tasks, such as improving prediction accuracy, and its aspirations for LLM-based methods, such as accurately understanding user interests and enhancing the user experience. We conduct extensive experiments on various state-of-the-art methods for modeling user embeddings. Additionally, we reveal the scaling laws of leveraging user embeddings to prompt LLMs. The benchmark is available online.

Unlocking Scaling Law in Industrial Recommendation Systems with a Three-step Paradigm based Large User Model

Feb 12, 2025

Abstract: Recent advancements in autoregressive Large Language Models (LLMs) have achieved significant milestones, largely attributed to their scalability, often referred to as the "scaling law". Inspired by these achievements, there has been a growing interest in adapting LLMs for Recommendation Systems (RecSys) by reformulating RecSys tasks into generative problems. However, these End-to-End Generative Recommendation (E2E-GR) methods tend to prioritize idealized goals, often at the expense of the practical advantages offered by traditional Deep Learning based Recommendation Models (DLRMs) in terms of features, architecture, and practices. This disparity between idealized goals and practical needs introduces several challenges and limitations, locking the scaling law in industrial RecSys. In this paper, we introduce a large user model (LUM) that addresses these limitations through a three-step paradigm, designed to meet the stringent requirements of industrial settings while unlocking the potential for scalable recommendations. Our extensive experimental evaluations demonstrate that LUM outperforms both state-of-the-art DLRMs and E2E-GR approaches. Notably, LUM exhibits excellent scalability, with performance improvements observed as the model scales up to 7 billion parameters. Additionally, we have successfully deployed LUM in an industrial application, where it achieved significant gains in an A/B test, further validating its effectiveness and practicality.

APG: Adaptive Parameter Generation Network for Click-Through Rate Prediction

Mar 30, 2022

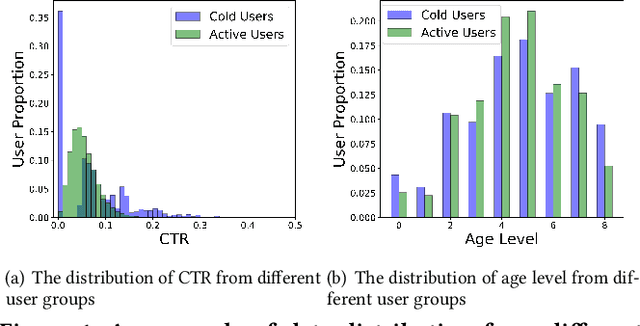

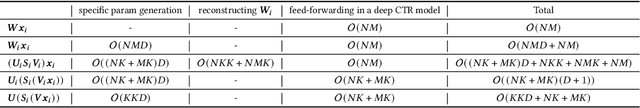

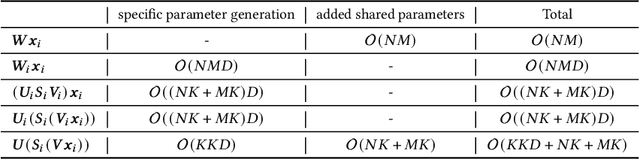

Abstract: In many web applications, deep learning-based CTR prediction models (deep CTR models for short) are widely adopted. Traditional deep CTR models learn patterns in a static manner, i.e., the network parameters are the same across all instances. However, such a static approach can hardly characterize individual instances, which may follow different underlying distributions. This limits the representation power of deep CTR models, leading to sub-optimal results. In this paper, we propose an efficient, effective, and universal module, the Adaptive Parameter Generation network (APG), in which the parameters of deep CTR models are dynamically generated on-the-fly based on different instances. Extensive experimental evaluation results show that APG can be applied to a variety of deep CTR models and significantly improve their performance. We have deployed APG in the Taobao sponsored search system and achieved a 3% CTR gain and a 1% RPM gain.
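To make the idea of instance-dependent parameters concrete, here is a minimal sketch of a linear layer whose weights are produced from a per-instance condition vector. It is only an illustration of the general concept, not APG's actual architecture: the linear generator, the names `generate_weights` and `adaptive_layer`, and all dimensions are assumptions introduced here.

```python
import random


def generate_weights(condition, gen_matrix, in_dim, out_dim):
    """Map a per-instance condition vector to an (out_dim x in_dim) weight matrix.

    Assumption: the generator is a single linear map; the abstract does not
    say how the parameters are actually generated.
    """
    flat = [sum(c * g for c, g in zip(condition, row)) for row in gen_matrix]
    return [flat[i * in_dim:(i + 1) * in_dim] for i in range(out_dim)]


def adaptive_layer(x, condition, gen_matrix, in_dim, out_dim):
    """Apply a linear layer whose weights depend on the instance's condition."""
    weights = generate_weights(condition, gen_matrix, in_dim, out_dim)
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


rng = random.Random(0)
in_dim, out_dim, cond_dim = 4, 3, 2
# Hypothetical generator parameters: one row per generated weight entry.
gen_matrix = [[rng.uniform(-1.0, 1.0) for _ in range(cond_dim)]
              for _ in range(in_dim * out_dim)]

x = [1.0, 0.5, -0.5, 2.0]
# The same input passes through different weights for different conditions.
out_a = adaptive_layer(x, [1.0, 0.0], gen_matrix, in_dim, out_dim)
out_b = adaptive_layer(x, [0.0, 1.0], gen_matrix, in_dim, out_dim)
```

A static layer would return the same output for `x` regardless of the condition; here `out_a` and `out_b` differ because each instance gets its own weights.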

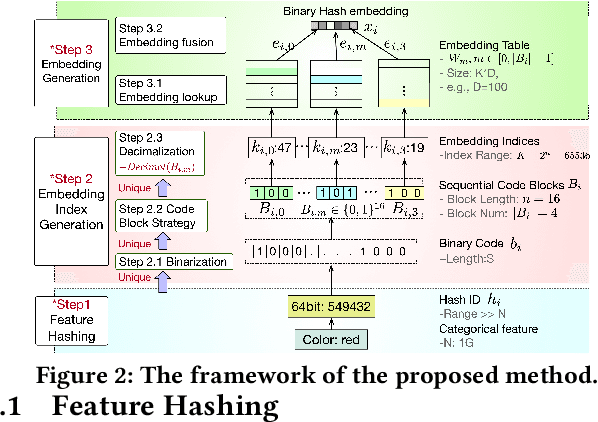

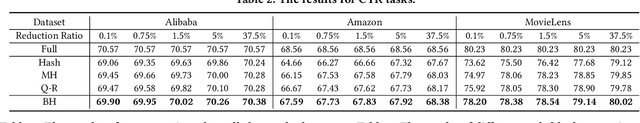

Binary Code based Hash Embedding for Web-scale Applications

Aug 24, 2021

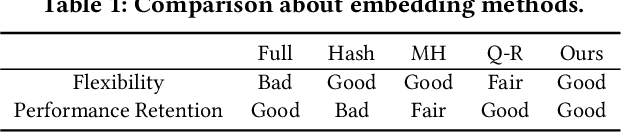

Abstract: Nowadays, deep learning models are widely adopted in web-scale applications such as recommender systems and online advertising. In these applications, embedding learning of categorical features is crucial to the success of deep learning models. In these models, a standard method is to assign each categorical feature value a unique embedding vector that can be learned and optimized. Although this method captures the characteristics of the categorical features well and promises good performance, it can incur a huge memory cost to store the embedding table, especially for web-scale applications. Such a huge memory cost significantly holds back the effectiveness and usability of EDRMs. In this paper, we propose a binary code based hash embedding method which allows the size of the embedding table to be reduced at an arbitrary scale without compromising too much performance. Experimental evaluation results show that, with our proposed method, one can still achieve 99% of the original performance even when the embedding table is 1000× smaller than the original one.
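The abstract does not spell out the mechanism, so the following is only a generic sketch of how a binary code can replace a full embedding table: hash each feature ID to a fixed-length bit string, split the bits into blocks, and let each block index a small table. The MD5 hash, the sum combiner, and every name and size below are assumptions made for illustration, not the paper's design.

```python
import hashlib
import random


def binary_code(feature_id, num_bits):
    """Hash a feature ID to a fixed-length binary string (MD5 is an assumption)."""
    digest = hashlib.md5(str(feature_id).encode("utf-8")).digest()
    value = int.from_bytes(digest, "big") % (1 << num_bits)
    return format(value, f"0{num_bits}b")


def block_indices(code, block_bits):
    """Split the binary code into blocks and read each block as an integer index."""
    return [int(code[i:i + block_bits], 2) for i in range(0, len(code), block_bits)]


def make_tables(num_blocks, block_bits, dim, seed=0):
    """One small table per block, each with 2**block_bits rows."""
    rng = random.Random(seed)
    return [[[rng.gauss(0.0, 0.1) for _ in range(dim)]
             for _ in range(1 << block_bits)]
            for _ in range(num_blocks)]


def embed(feature_id, tables, num_bits, block_bits):
    """Look up one row per block and sum them (the combiner is an assumption)."""
    idxs = block_indices(binary_code(feature_id, num_bits), block_bits)
    dim = len(tables[0][0])
    out = [0.0] * dim
    for table, idx in zip(tables, idxs):
        for d in range(dim):
            out[d] += table[idx][d]
    return out


NUM_BITS, BLOCK_BITS, DIM = 16, 4, 8  # NUM_BITS must be divisible by BLOCK_BITS
tables = make_tables(NUM_BITS // BLOCK_BITS, BLOCK_BITS, DIM)
vector = embed("item_12345", tables, NUM_BITS, BLOCK_BITS)

full_rows = 1 << NUM_BITS                      # rows needed for one row per code
compact_rows = sum(len(t) for t in tables)     # rows actually stored
```

With these illustrative settings the block tables hold 64 rows in total instead of 65,536, and changing `BLOCK_BITS` trades memory against the number of rows shared between feature values.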

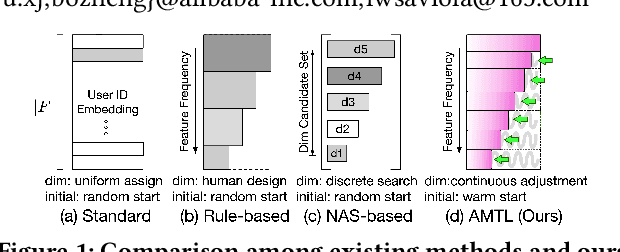

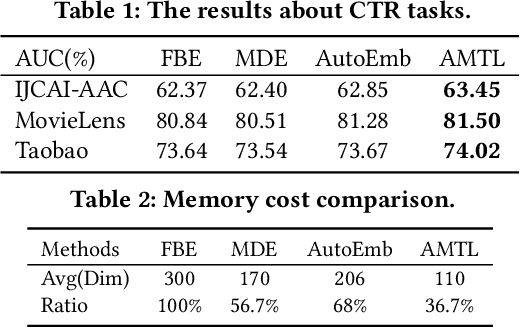

Learning Effective and Efficient Embedding via an Adaptively-Masked Twins-based Layer

Aug 24, 2021

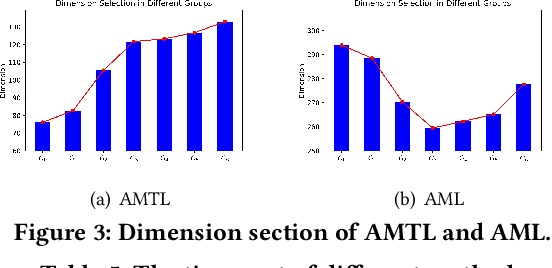

Abstract: Embedding learning for categorical features is crucial for deep learning-based recommendation models (DLRMs). Each feature value is mapped to an embedding vector via an embedding learning process. Conventional methods configure a fixed and uniform embedding size for all feature values from the same feature field. However, such a configuration is not only sub-optimal for embedding learning but also memory costly. Existing methods that attempt to resolve these problems, either rule-based or neural architecture search (NAS)-based, require extensive human design effort or network training. They are also not flexible in embedding size selection or in warm-start-based applications. In this paper, we propose a novel and effective embedding size selection scheme. Specifically, we design an Adaptively-Masked Twins-based Layer (AMTL) behind the standard embedding layer. AMTL generates a mask vector to mask the undesired dimensions for each embedding vector. The mask vector brings flexibility in selecting the dimensions, and the proposed layer can be easily added to either untrained or trained DLRMs. Extensive experimental evaluations show that the proposed scheme outperforms competitive baselines on all the benchmark tasks and is also memory-efficient, saving 60% memory usage without compromising any performance metrics.
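The masking step described in the abstract can be illustrated with a small sketch. It assumes the mask keeps a leading prefix of dimensions and that the chosen size is simply given as input; in the paper the size is produced by the learned AMTL layer, which is not reproduced here. The function names `size_mask` and `apply_mask` are hypothetical.

```python
def size_mask(selected_size, dim):
    """Build a 0/1 mask that keeps the first `selected_size` of `dim` dimensions.

    Assumption: the kept dimensions form a prefix of the embedding vector.
    """
    if not 0 <= selected_size <= dim:
        raise ValueError("selected_size must be between 0 and dim")
    return [1.0 if i < selected_size else 0.0 for i in range(dim)]


def apply_mask(embedding, mask):
    """Zero out the undesired dimensions of one embedding vector."""
    return [e * m for e, m in zip(embedding, mask)]


embedding = [0.5, -1.0, 2.0, 3.0]
mask = size_mask(2, len(embedding))      # keep only the first two dimensions
masked = apply_mask(embedding, mask)
```

Because the mask is applied after the embedding lookup, the embedding table itself is unchanged, which is consistent with the abstract's point that the layer can be added to either untrained or trained models.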

Explicit Semantic Cross Feature Learning via Pre-trained Graph Neural Networks for CTR Prediction

May 17, 2021

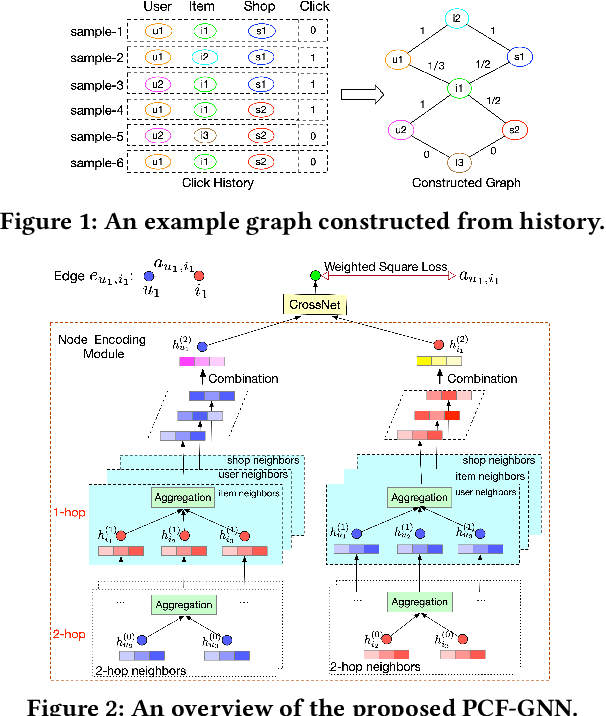

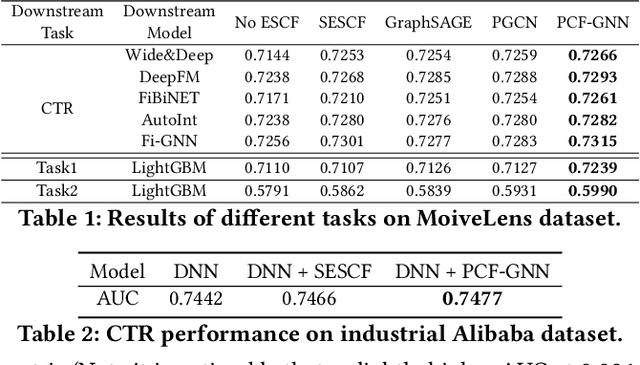

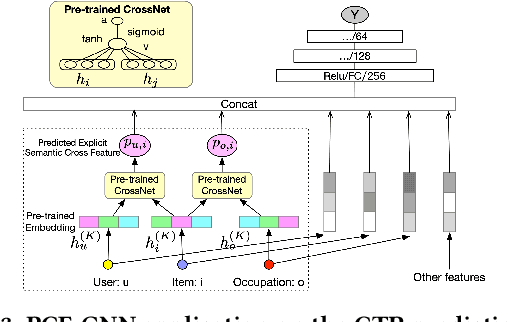

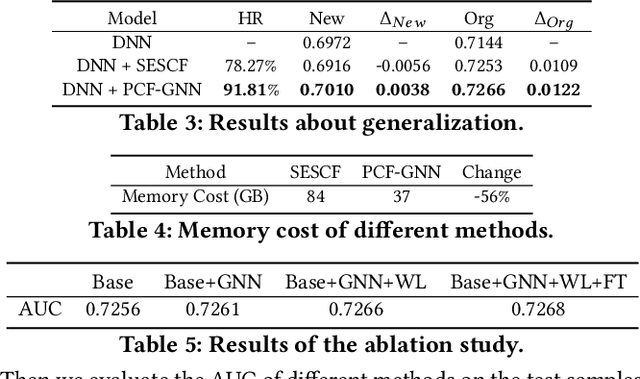

Abstract: Cross features play an important role in click-through rate (CTR) prediction. Most existing methods adopt a DNN-based model to capture cross features in an implicit manner. These implicit methods may lead to sub-optimal performance due to their limitations in explicit semantic modeling. Although traditional statistical explicit semantic cross features can address the problem in these implicit methods, they still suffer from some challenges, including a lack of generalization and expensive memory cost. Few works focus on tackling these challenges. In this paper, we take the first step in learning explicit semantic cross features and propose Pre-trained Cross Feature learning Graph Neural Networks (PCF-GNN), a GNN-based pre-trained model aimed at generating cross features in an explicit fashion. Extensive experiments are conducted on both public and industrial datasets, where PCF-GNN shows competence in both performance and memory efficiency in various tasks.
