Arin Chaudhuri

Visualizing the Finer Cluster Structure of Large-Scale and High-Dimensional Data

Jul 17, 2020

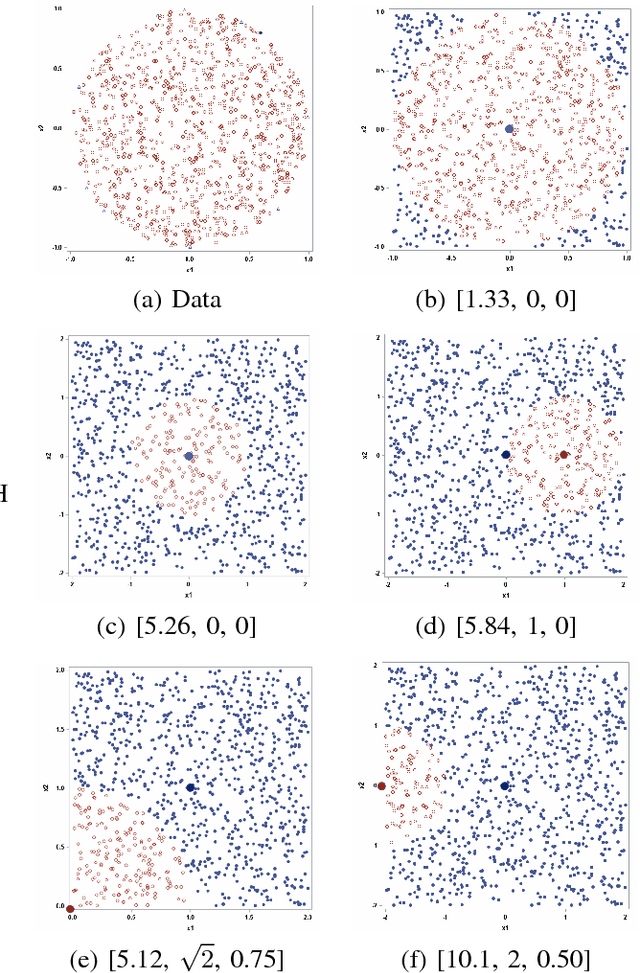

Abstract: Dimension reduction and visualization of high-dimensional data have become very important research topics because of the rapid growth of large databases in data science. In this paper, we propose using a generalized sigmoid function to model the distance similarity in both high- and low-dimensional spaces. In particular, the parameter b is introduced to the generalized sigmoid function in low-dimensional space, so that we can adjust the heaviness of the function tail by changing the value of b. Using both simulated and real-world data sets, we show that our proposed method can generate visualization results comparable to those of uniform manifold approximation and projection (UMAP), which is a newly developed manifold learning technique with fast running speed, better global structure, and scalability to massive data sets. In addition, according to the purpose of the study and the data structure, we can decrease or increase the value of b to either reveal the finer cluster structure of the data or maintain the neighborhood continuity of the embedding for better visualization. Finally, we use domain knowledge to demonstrate that the finer subclusters revealed with small values of b are meaningful.
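
The role of b can be illustrated with a small sketch. The abstract does not state the exact form of the generalized sigmoid, so the code below assumes the UMAP-style low-dimensional similarity 1 / (1 + a·d^(2b)) as a stand-in; the function name `low_dim_similarity` and the chosen values of a and b are hypothetical. With a = b = 1 this curve reduces to the Cauchy kernel used by t-SNE, and smaller b makes the tail decay more slowly.

```python
def low_dim_similarity(d, a=1.0, b=1.0):
    """Assumed UMAP-style similarity 1 / (1 + a * d**(2*b)) for a distance d >= 0."""
    return 1.0 / (1.0 + a * d ** (2.0 * b))


# Evaluate the curve at a few embedding distances for three tail settings.
distances = [0.5, 1.0, 2.0, 5.0, 10.0]
tails = {
    b: [low_dim_similarity(d, b=b) for d in distances]
    for b in (0.5, 1.0, 1.5)
}

for b, values in tails.items():
    # Smaller b leaves more similarity mass at large distances (heavier tail).
    print(f"b={b}: " + ", ".join(f"{v:.4f}" for v in values))
```

At the largest distance the similarity for b = 0.5 is roughly two orders of magnitude above that for b = 1.5, which is the sense in which lowering b makes the tail heavier.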

Fault Detection Using Nonlinear Low-Dimensional Representation of Sensor Data

Oct 02, 2019

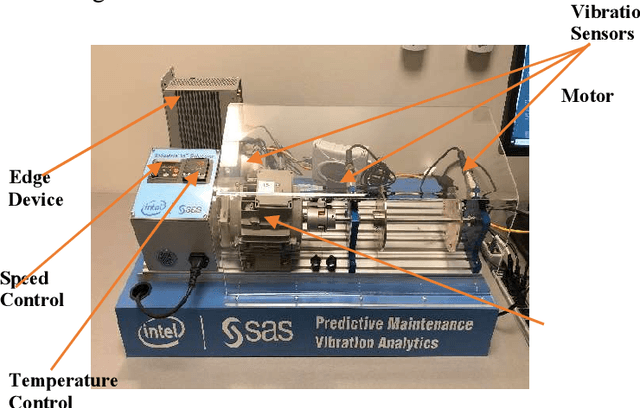

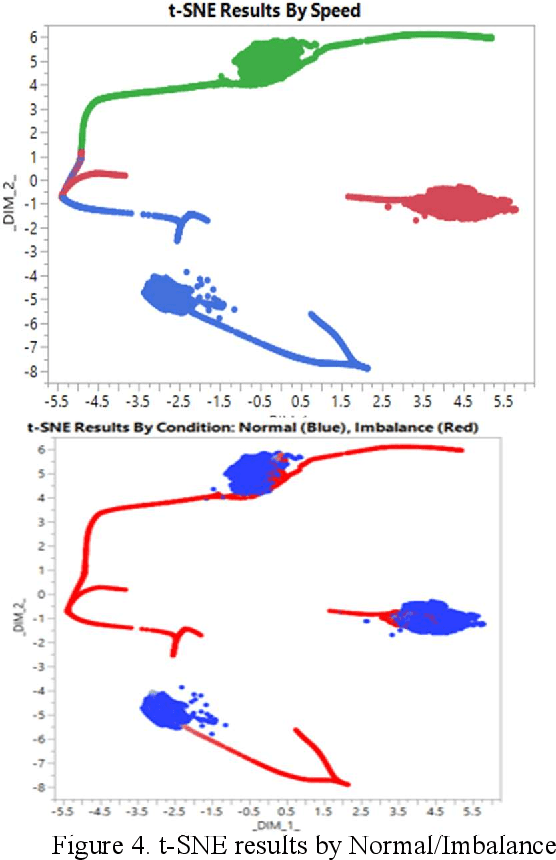

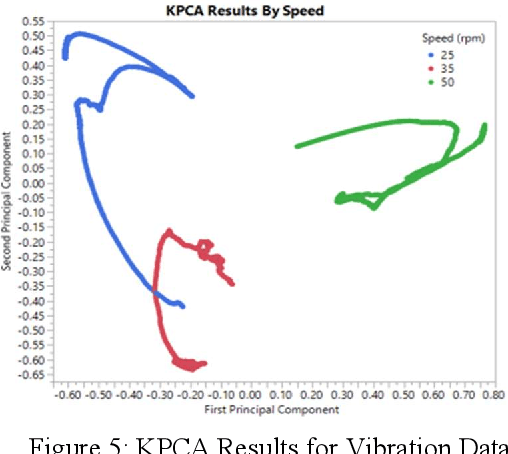

Abstract: Sensor data analysis plays a key role in health assessment of critical equipment. Such data are multivariate and exhibit nonlinear relationships. This paper describes how one can exploit nonlinear dimension reduction techniques, such as t-distributed stochastic neighbor embedding (t-SNE) and kernel principal component analysis (KPCA), for fault detection. We show that using anomaly detection with low-dimensional representations provides better interpretability and is conducive to edge processing in IoT applications.

Automatic Hyperparameter Tuning Method for Local Outlier Factor, with Applications to Anomaly Detection

Feb 01, 2019

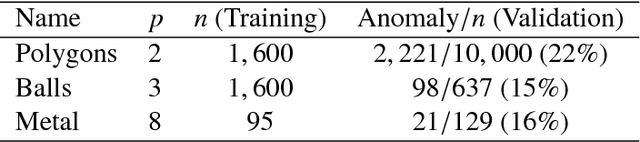

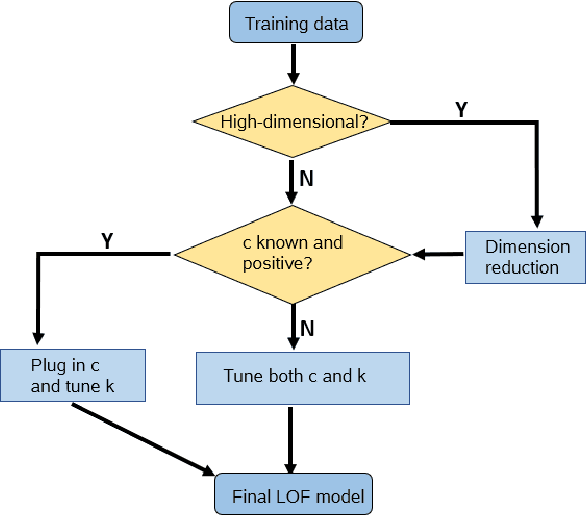

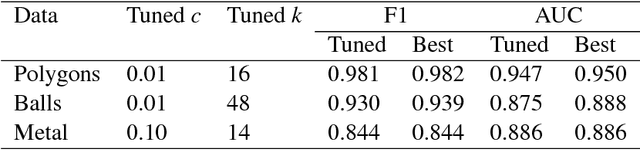

Abstract: In recent years, there have been many practical applications of anomaly detection such as in predictive maintenance, detection of credit fraud, network intrusion, and system failure. The goal of anomaly detection is to identify in the test data anomalous behaviors that are either rare or unseen in the training data. This is a common goal in predictive maintenance, which aims to forecast the imminent faults of an appliance given abundant samples of normal behaviors. Local outlier factor (LOF) is one of the state-of-the-art models used for anomaly detection, but the predictive performance of LOF depends greatly on the selection of hyperparameters. In this paper, we propose a novel, heuristic methodology to tune the hyperparameters in LOF. A tuned LOF model that uses the proposed method shows good predictive performance in both simulations and real data sets.
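
To make the dependence on hyperparameters concrete, here is a minimal pure-Python sketch of the standard LOF score, evaluated for several neighborhood sizes k. It is not the tuning method proposed in the paper. The function name `lof_scores`, the toy data, and the values of k are assumptions for illustration, and the sketch simplifies the definition by keeping exactly k neighbors when distances tie.

```python
import math


def lof_scores(points, k):
    """Local outlier factor of every point; needs k < len(points), no duplicates."""
    n = len(points)
    # k nearest neighbors of each point as (distance, index) pairs, nearest first.
    neighbors = []
    for i in range(n):
        dists = sorted(
            (math.dist(points[i], points[j]), j) for j in range(n) if j != i
        )
        neighbors.append(dists[:k])
    # k-distance: distance from each point to its k-th nearest neighbor.
    k_distance = [nbrs[-1][0] for nbrs in neighbors]
    # Local reachability density: inverse of the mean reachability distance.
    lrd = []
    for i in range(n):
        reach = [max(k_distance[j], d) for d, j in neighbors[i]]
        lrd.append(len(reach) / sum(reach))
    # LOF: mean density of the neighbors relative to the point's own density.
    return [
        sum(lrd[j] for _, j in neighbors[i]) / (k * lrd[i]) for i in range(n)
    ]


# Toy data: a 4x4 grid of "normal" points plus one distant point (last entry).
points = [(x, y) for x in range(4) for y in range(4)] + [(10, 10)]
scores_by_k = {k: lof_scores(points, k) for k in (3, 5, 8)}

for k, scores in scores_by_k.items():
    print(f"k={k}: outlier LOF={scores[-1]:.2f}, max inlier LOF={max(scores[:-1]):.2f}")
```

Scores near 1 indicate a point about as dense as its neighbors, while the distant point scores well above 1. The size of that gap changes with k, so a fixed decision threshold behaves differently across hyperparameter settings.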

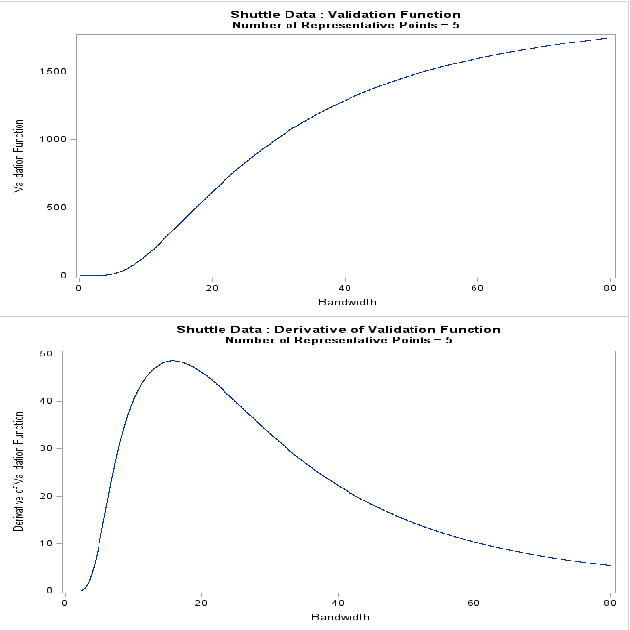

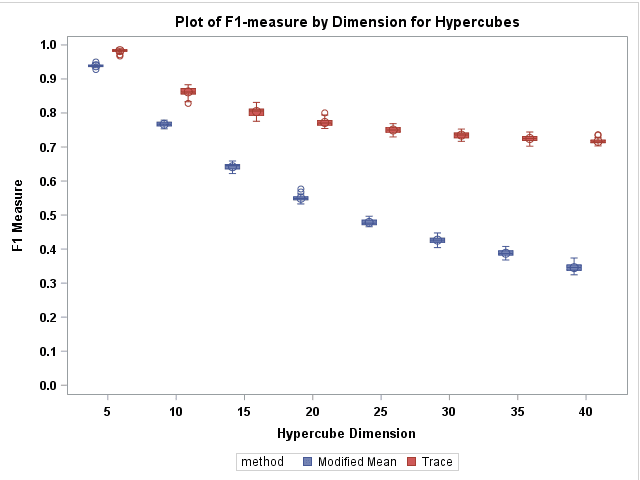

The Trace Criterion for Kernel Bandwidth Selection for Support Vector Data Description

Nov 15, 2018

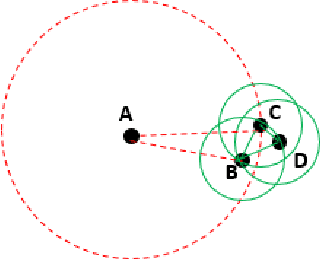

Abstract: Support vector data description (SVDD) is a popular anomaly detection technique. The SVDD classifier partitions the whole data space into an *inlier* region, which consists of the region *near* the training data, and an *outlier* region, which consists of points *away* from the training data. The computation of the SVDD classifier requires a kernel function, for which the Gaussian kernel is a common choice. The Gaussian kernel has a bandwidth parameter, and it is important to set the value of this parameter correctly for good results. A small bandwidth leads to overfitting such that the resulting SVDD classifier overestimates the number of anomalies, whereas a large bandwidth leads to underfitting and an inability to detect many anomalies. In this paper, we present a new unsupervised method for selecting the Gaussian kernel bandwidth. Our method, which exploits the low-rank representation of the kernel matrix to suggest a kernel bandwidth value, is competitive with existing bandwidth selection methods.
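
The effect of the bandwidth on the kernel matrix can be seen in a short sketch. It assumes the common parameterization exp(-||x - y||² / (2s²)) of the Gaussian kernel; the helper names, the toy points, and the bandwidth values are hypothetical. The code does not implement the trace criterion itself.

```python
import math


def gaussian_kernel_matrix(points, s):
    """Kernel matrix with entries exp(-||p - q||^2 / (2 s^2)) for bandwidth s > 0."""
    return [
        [math.exp(-math.dist(p, q) ** 2 / (2.0 * s * s)) for q in points]
        for p in points
    ]


def mean_off_diagonal(K):
    """Average similarity between distinct points."""
    n = len(K)
    total = sum(K[i][j] for i in range(n) for j in range(n) if i != j)
    return total / (n * (n - 1))


points = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (0.5, 0.5)]
summary = {
    s: mean_off_diagonal(gaussian_kernel_matrix(points, s))
    for s in (0.05, 0.5, 50.0)
}

for s, value in summary.items():
    print(f"s={s}: mean off-diagonal kernel value = {value:.6f}")
```

With a very small bandwidth the matrix is close to the identity: every point is similar only to itself, which corresponds to the overfitting regime. With a very large bandwidth the matrix is close to the all-ones matrix, which has rank one, and the points become indistinguishable, which corresponds to underfitting.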

A New SVDD-Based Multivariate Non-parametric Process Capability Index

Nov 13, 2018

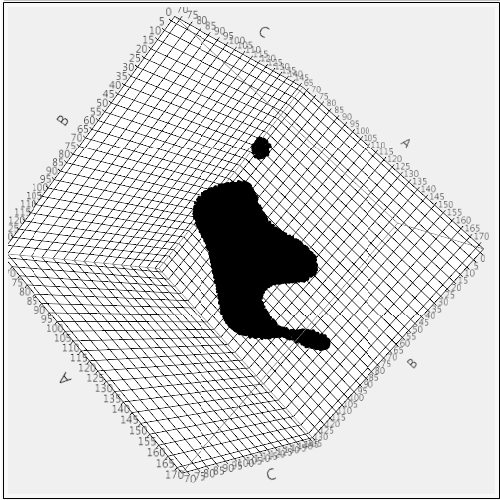

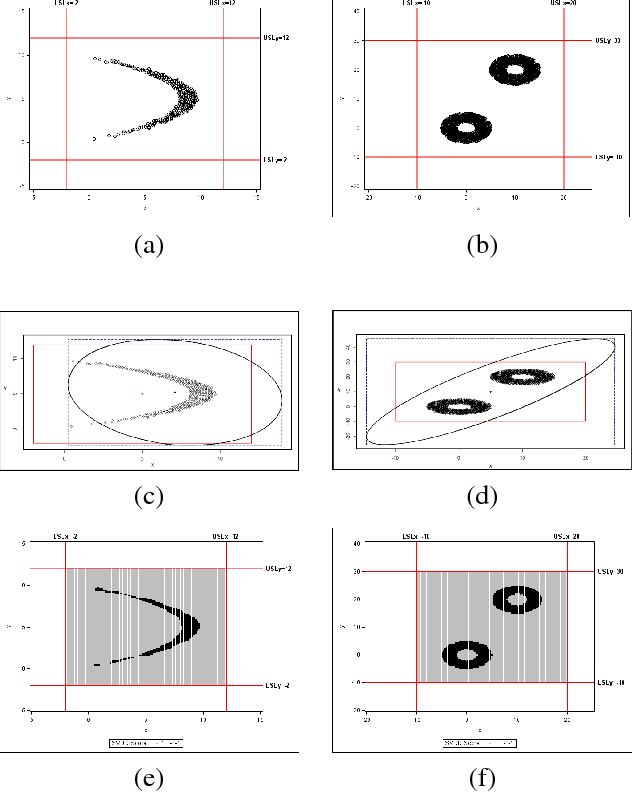

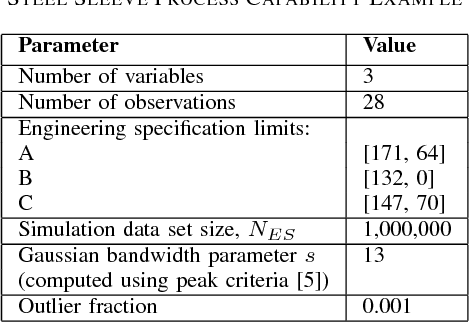

Abstract: Process capability index (PCI) is a commonly used statistic to measure the ability of a process to operate within the given specifications or to produce products that meet the required quality specifications. PCI can be univariate or multivariate depending upon the number of process specifications or quality characteristics of interest. Most PCIs make distributional assumptions that are often unrealistic in practice. This paper proposes a new multivariate non-parametric process capability index. This index can be used when the distribution of the process or quality parameters is either unknown or does not follow commonly used distributions such as the multivariate normal.
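
For context, the classical univariate indices Cp and Cpk show the kind of distributional assumption the abstract refers to: both scale the specification width by the standard deviation, which is meaningful mainly under approximate normality. The sketch below computes these textbook indices, not the SVDD-based index proposed in the paper; the function name, sample values, and specification limits are made up for illustration.

```python
import statistics


def cp_cpk(samples, lsl, usl):
    """Classical capability indices for lower/upper specification limits."""
    mu = statistics.mean(samples)
    sigma = statistics.stdev(samples)  # sample standard deviation
    cp = (usl - lsl) / (6.0 * sigma)                # potential capability
    cpk = min(usl - mu, mu - lsl) / (3.0 * sigma)   # penalizes an off-center mean
    return cp, cpk


measurements = [9.8, 10.0, 10.2, 10.1, 9.9]
cp, cpk = cp_cpk(measurements, lsl=9.4, usl=10.6)
print(f"centered process: Cp={cp:.3f}, Cpk={cpk:.3f}")

# Shifting the process mean leaves Cp unchanged but lowers Cpk.
shifted = [x + 0.3 for x in measurements]
cp_s, cpk_s = cp_cpk(shifted, lsl=9.4, usl=10.6)
print(f"shifted process:  Cp={cp_s:.3f}, Cpk={cpk_s:.3f}")
```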

The Mean and Median Criterion for Automatic Kernel Bandwidth Selection for Support Vector Data Description

Aug 21, 2017

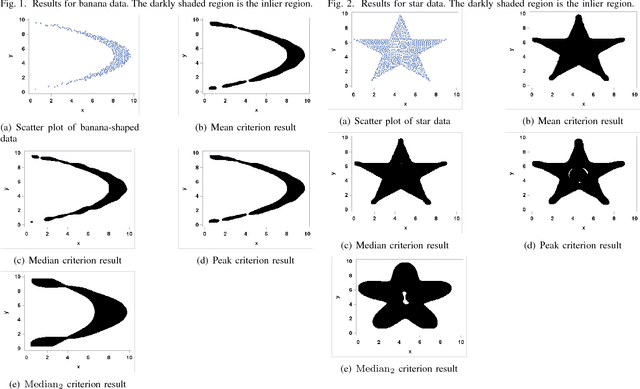

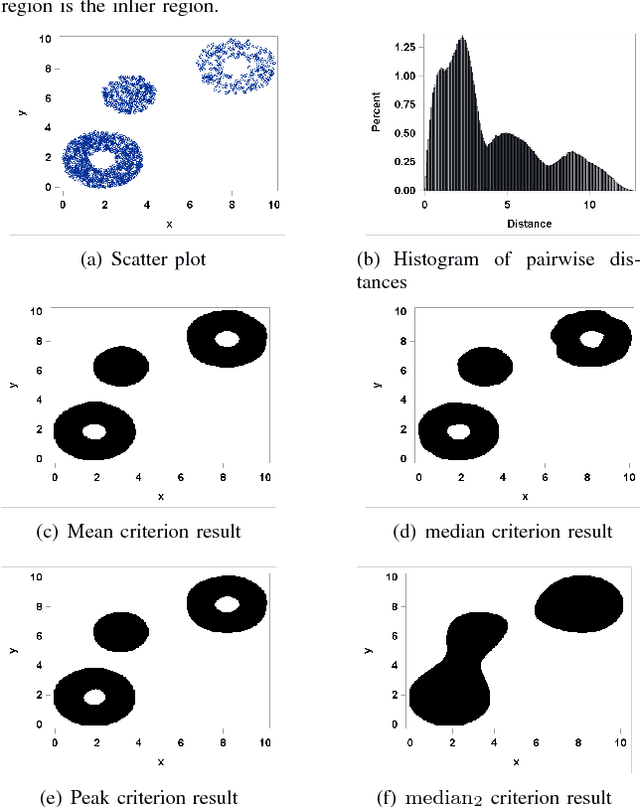

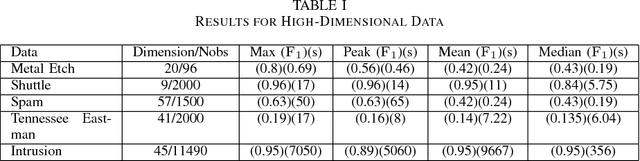

Abstract: Support vector data description (SVDD) is a popular technique for detecting anomalies. The SVDD classifier partitions the whole space into an inlier region, which consists of the region near the training data, and an outlier region, which consists of points away from the training data. The computation of the SVDD classifier requires a kernel function, and the Gaussian kernel is a common choice for the kernel function. The Gaussian kernel has a bandwidth parameter, whose value is important for good results. A small bandwidth leads to overfitting, and the resulting SVDD classifier overestimates the number of anomalies. A large bandwidth leads to underfitting, and the classifier fails to detect many anomalies. In this paper, we present a new automatic, unsupervised method for selecting the Gaussian kernel bandwidth. The selected value can be computed quickly, and it is competitive with existing bandwidth selection methods.

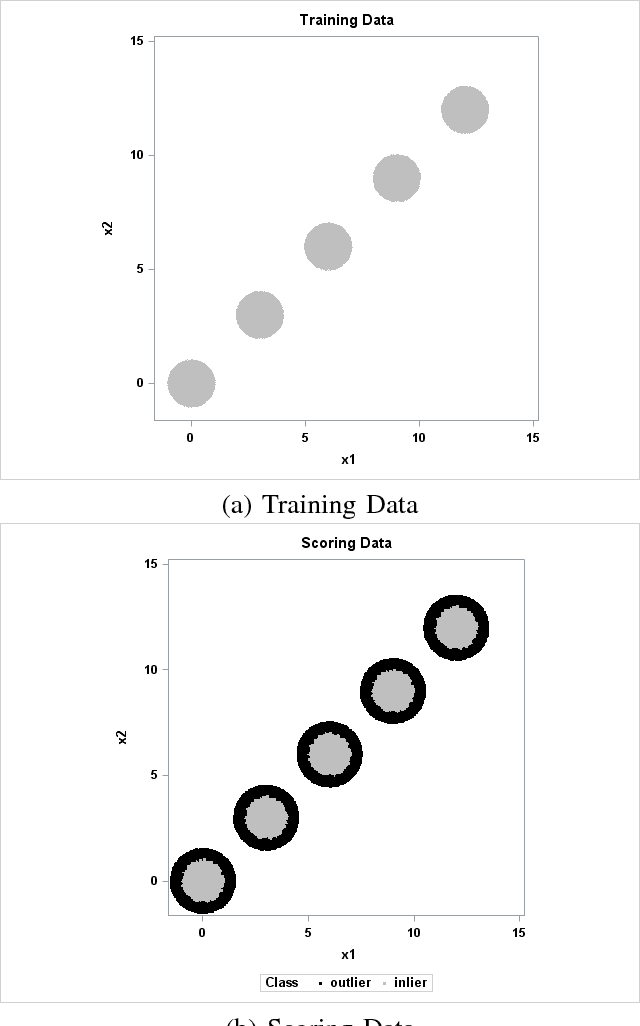

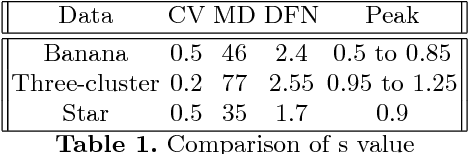

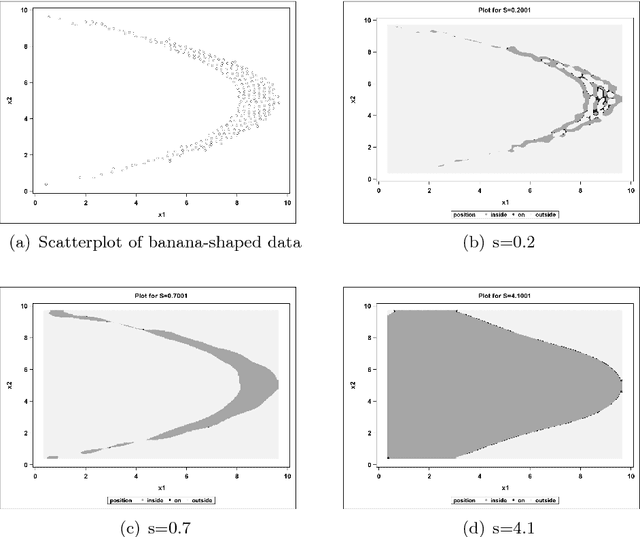

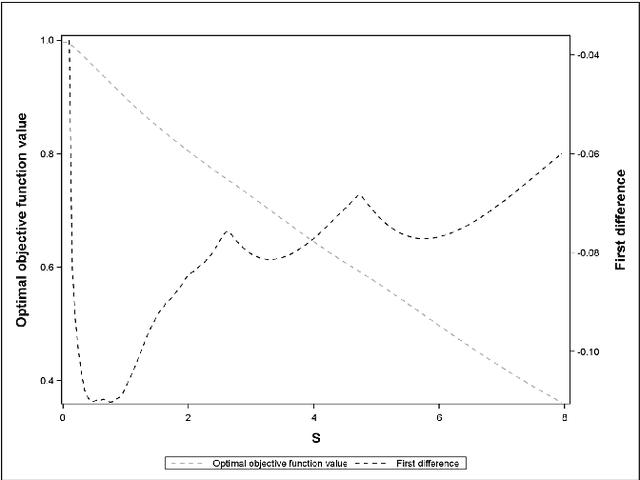

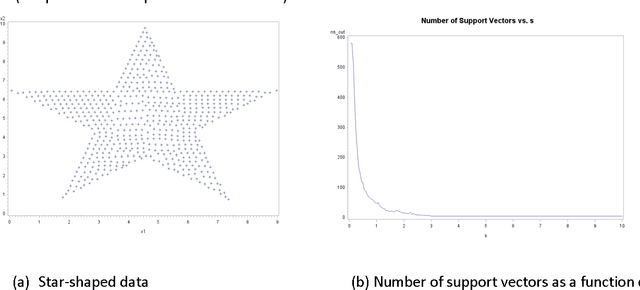

Peak Criterion for Choosing Gaussian Kernel Bandwidth in Support Vector Data Description

Aug 08, 2017

Abstract: Support Vector Data Description (SVDD) is a machine-learning technique used for single-class classification and outlier detection. The SVDD formulation with a kernel function provides a flexible boundary around the data. The values of the kernel function parameters affect the nature of the data boundary. For example, it is observed that with a Gaussian kernel, as the value of the kernel bandwidth is lowered, the data boundary changes from spherical to wiggly. The spherical data boundary leads to underfitting, and an extremely wiggly data boundary leads to overfitting. In this paper, we propose an empirical criterion to obtain good values of the Gaussian kernel bandwidth parameter. This criterion provides a smooth boundary that captures the essential geometric features of the data.

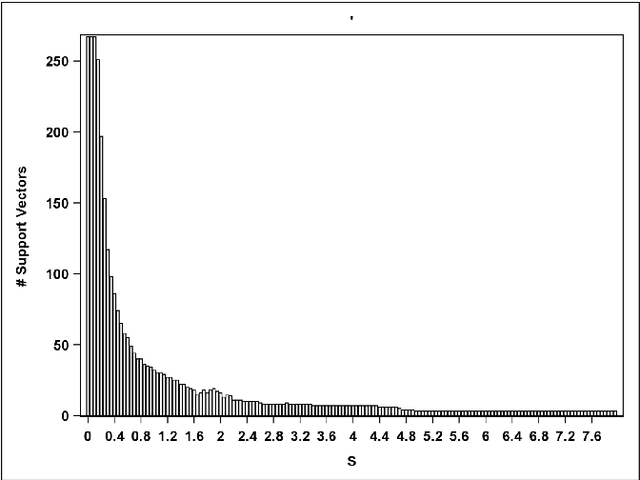

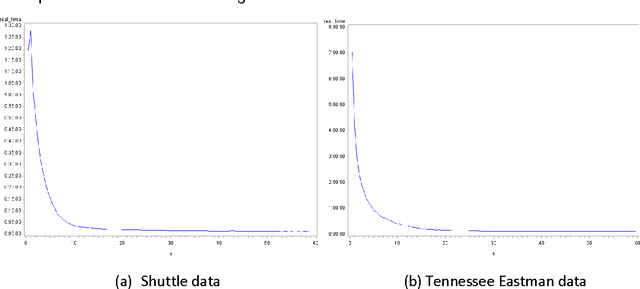

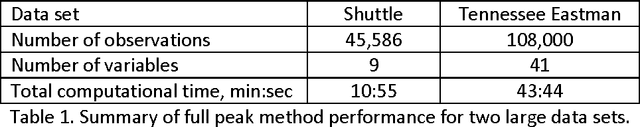

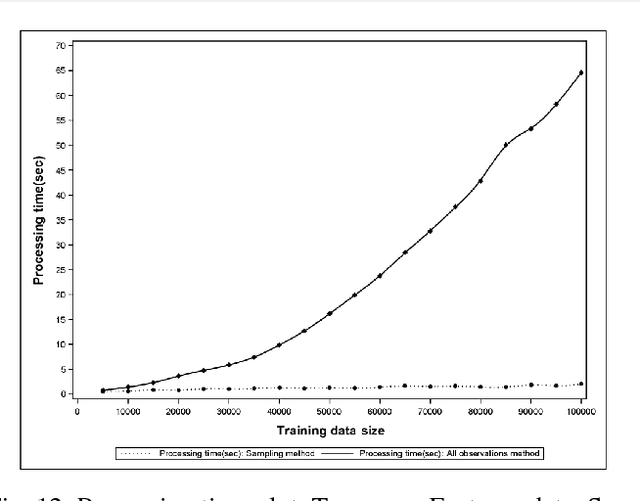

Kernel Bandwidth Selection for SVDD: Peak Criterion Approach for Large Data

May 19, 2017

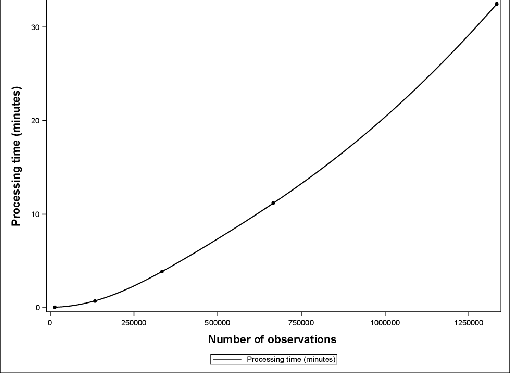

Abstract: Support Vector Data Description (SVDD) provides a useful approach to construct a description of multivariate data for single-class classification and outlier detection with various practical applications. The Gaussian kernel used in the SVDD formulation allows a flexible data description defined by observations designated as support vectors. The data boundary of such a description is non-spherical and conforms to the geometric features of the data. By varying the Gaussian kernel bandwidth parameter, the SVDD-generated boundary can be made either smoother (more spherical) or tighter and more jagged. The former case may lead to underfitting, whereas the latter may result in overfitting. The Peak criterion has been proposed to select an optimal value of the kernel bandwidth to strike a balance between the smoothness of the data boundary and its ability to capture the general geometric shape of the data. The Peak criterion involves training SVDD at various values of the kernel bandwidth parameter. When training datasets are large, the time required to obtain the optimal value of the Gaussian kernel bandwidth parameter according to the Peak method can become prohibitively large. This paper proposes an extension of the Peak method for the case of large data. The proposed method gives good results when applied to several datasets. Two existing alternative methods of computing the Gaussian kernel bandwidth parameter (Coefficient of Variation and Distance to the Farthest Neighbor) were modified to allow comparison with the proposed method on convergence. Empirical comparison demonstrates the advantage of the proposed method.

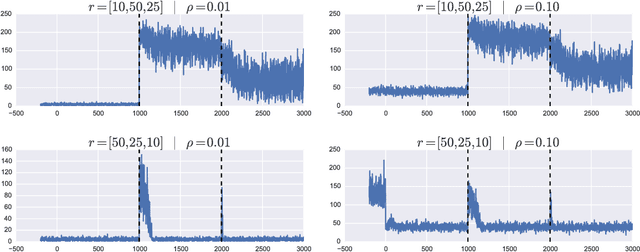

Online Robust Principal Component Analysis with Change Point Detection

Mar 20, 2017

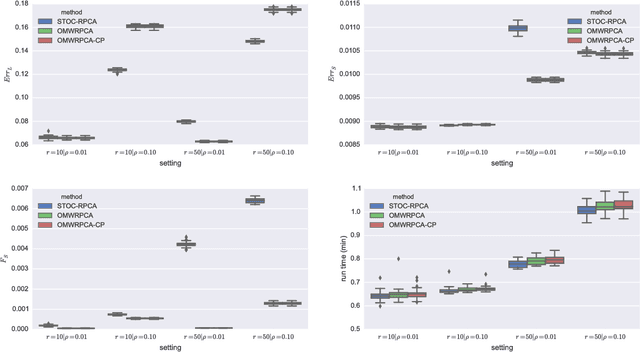

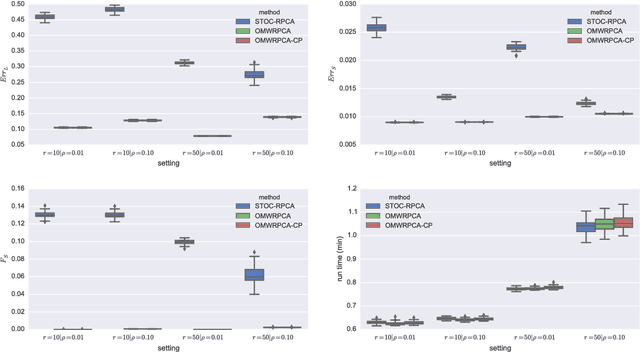

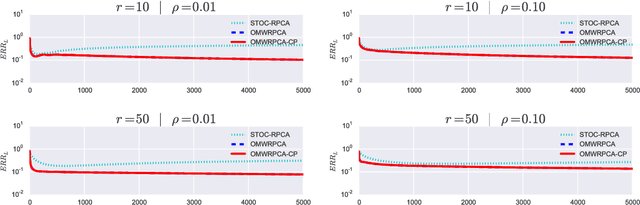

Abstract: Robust PCA methods are typically batch algorithms that require loading all observations into memory before processing. This makes them inefficient for processing big data. In this paper, we develop an efficient online robust principal component method, namely online moving window robust principal component analysis (OMWRPCA). Unlike existing algorithms, OMWRPCA can successfully track not only slowly changing subspaces but also abruptly changing subspaces. By embedding hypothesis testing into the algorithm, OMWRPCA can detect change points of the underlying subspaces. Extensive simulation studies demonstrate the superior performance of OMWRPCA compared with other state-of-the-art approaches. We also apply the algorithm to real-time background subtraction of surveillance video.

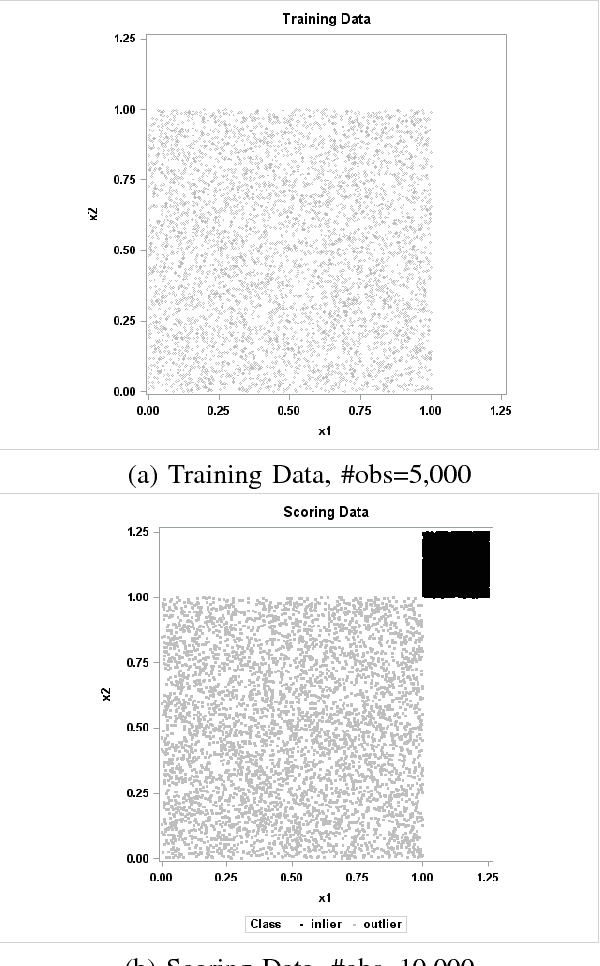

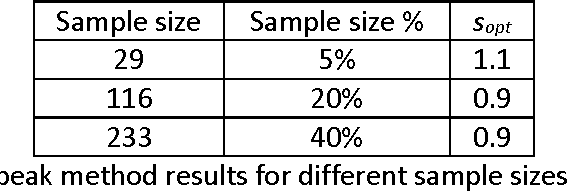

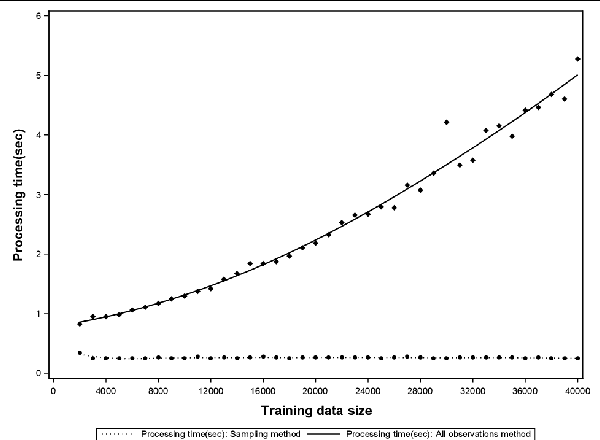

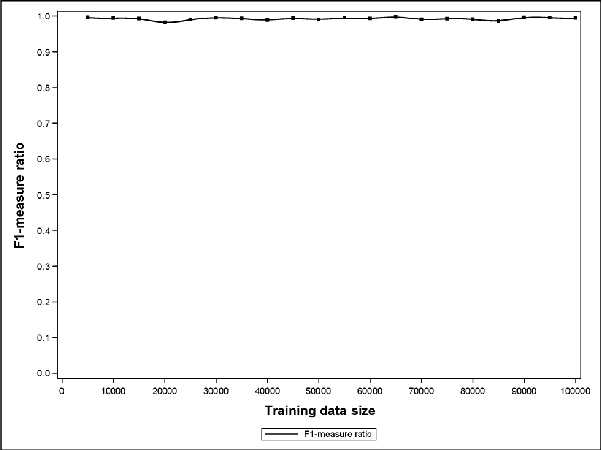

Sampling Method for Fast Training of Support Vector Data Description

Sep 25, 2016

Abstract: Support Vector Data Description (SVDD) is a popular outlier detection technique that constructs a flexible description of the input data. SVDD computation time is high for large training datasets, which limits its use in big-data process-monitoring applications. We propose a new iterative sampling-based method for SVDD training. The method incrementally learns the training data description at each iteration by computing SVDD on an independent random sample selected with replacement from the training data set. The experimental results indicate that the proposed method is extremely fast and provides a good data description.
